Python Web Scraping: The requests Module

#########Web Scraping#########

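## The requests module

- Understanding the requests module

requests wraps HTTP/1.1 requests and makes operations such as cookie handling, IP proxies, and login authentication straightforward.

Requests is built on urllib3 and so inherits its features. It supports HTTP keep-alive and connection pooling, cookie-based session persistence, file uploads, automatic detection of the response encoding, internationalized URLs, and automatic encoding of POST data. It aims to be modern, internationalized, and human-friendly.

- Using the requests module: the get method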

import requests

url = 'http://www.baidu.com'

# Use the get method to fetch the URL

response = requests.get(url)

print(response)

print(response.status_code)

print(response.cookies)

print(response.text)

print(type(response.text))

import requests

# Raw string: the quoted docstring below contains backslashes (\*\*kwargs)

r"""

def post(url, data=None, json=None, **kwargs):

Sends a POST request.

:param url: URL for the new :class:`Request` object.

:param data: (optional) Dictionary (will be form-encoded), bytes, or file-like object to send in the body of the :class:`Request`.

:param json: (optional) json data to send in the body of the :class:`Request`.

:param \*\*kwargs: Optional arguments that ``request`` takes.

:return: :class:`Response <Response>` object

:rtype: requests.Response

return request('post', url, data=data, json=json, **kwargs)

"""

response = requests.post('http://httpbin.org/post', data={'name': 'kobe', 'age': 40})

print(response.text)

# httpbin serves DELETE requests at /delete; /post only accepts POST

response1 = requests.delete('http://httpbin.org/delete', data={'name': 'kobe'})

print(response1.text)

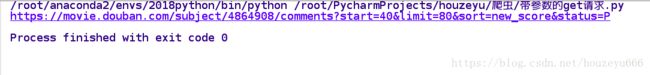
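The quoted signature distinguishes `data` (form-encoded) from `json`. The difference can be inspected without sending anything, as in this minimal sketch. It assumes `requests.Request(...).prepare()` is an acceptable way to build the request locally; the variable names are illustrative.

```python
import json

import requests

payload = {'name': 'kobe', 'age': 40}
url = 'http://httpbin.org/post'

# prepare() builds the request locally; nothing is sent over the network.
form_request = requests.Request('POST', url, data=payload).prepare()
json_request = requests.Request('POST', url, json=payload).prepare()

# data= form-encodes the dictionary.
print(form_request.headers['Content-Type'])
print(form_request.body)

# json= serializes the dictionary to a JSON document.
print(json_request.headers['Content-Type'])
print(json.loads(json_request.body))
```

Use `data=` when the server expects an HTML-form style body and `json=` when it expects a JSON document.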

## GET requests with parameters

import requests

# url1 = 'https://movie.douban.com/subject/4864908/comments?start=20&limit=20&sort=new_score&status=P'

data = {

'start': 40,

'limit': 80,

'sort': 'new_score',

'status': 'P',

}

url = 'https://movie.douban.com/subject/4864908/comments'

response = requests.get(url, params=data)

print(response.url)

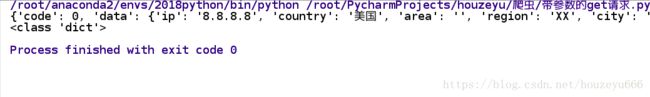
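To see how `params` is turned into a query string without contacting the server, the request can be prepared locally. This is a small sketch that reuses the URL and values from the example above; `prepared` is an illustrative name.

```python
import requests

params = {'start': 40, 'limit': 80, 'sort': 'new_score', 'status': 'P'}
url = 'https://movie.douban.com/subject/4864908/comments'

# Build the request without sending it, then inspect the final URL.
prepared = requests.Request('GET', url, params=params).prepare()
print(prepared.url)
```

The printed URL carries the dictionary entries as `key=value` pairs joined by `&`, in insertion order.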

## Parsing JSON responses

ip = '8.8.8.8'

url = "http://ip.taobao.com/service/getIpInfo.php?ip=%s" %(ip)

response = requests.get(url)

content = response.json()

print(content)

print(type(content))
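`response.json()` fails when the body is not valid JSON, for example when the server answers with an HTML error page. One possible defensive wrapper is sketched below. The helper name `get_json` and its return-`None` convention are assumptions made for illustration, not part of requests.

```python
import requests


def get_json(url, timeout=10):
    """Return the decoded JSON body of url, or None if the body is not JSON.

    Hypothetical helper for illustration.
    """
    response = requests.get(url, timeout=timeout)
    # Raise on 4xx/5xx responses instead of trying to parse an error page.
    response.raise_for_status()
    try:
        return response.json()
    except ValueError:
        # The decoding error raised by response.json() is a ValueError subclass.
        return None
```

Calling `get_json(url)` with the URL from the example above would then return either a dictionary or `None`.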

## Fetching binary data

Note the difference:

# response.text : the page content as a str

# response.content : the page content as bytes

# Fetch binary data

url = 'https://gss0.bdstatic.com/-4o3dSag_xI4khGkpoWK1HF6hhy/baike/w%3D268%3Bg%3D0/sign=4f7bf38ac3fc1e17fdbf8b3772ab913e/d4628535e5dde7119c3d076aabefce1b9c1661ba.jpg'

response = requests.get(url)

# print(response.text)

with open('picture.png', 'wb') as f:
    f.write(response.content)

## Downloading a video

# Download a video

url = "http://gslb.miaopai.com/stream/sJvqGN6gdTP-sWKjALzuItr7mWMiva-zduKwuw__.mp4"

response = requests.get(url)

with open('/tmp/learn.mp4', 'wb') as f:
    # response.text : the page content as a str
    # response.content : the page content as bytes
    f.write(response.content)
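Reading `response.content` loads the whole body into memory, which can be costly for large videos. A common alternative is to stream the body in chunks with `stream=True` and `iter_content`. The sketch below assumes a hypothetical helper name `download_file` and an arbitrary chunk size of 8192 bytes.

```python
import requests


def download_file(url, path, chunk_size=8192, timeout=10):
    """Stream url to path in chunks and return the number of bytes written.

    Hypothetical helper for illustration.
    """
    written = 0
    # stream=True defers downloading the body until it is iterated.
    with requests.get(url, stream=True, timeout=timeout) as response:
        response.raise_for_status()
        with open(path, 'wb') as f:
            for chunk in response.iter_content(chunk_size=chunk_size):
                f.write(chunk)
                written += len(chunk)
    return written
```

With this helper, the download above could be written as `download_file(url, '/tmp/learn.mp4')`.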

## Adding headers

# Add header information

url = 'http://www.cbrc.gov.cn/chinese/jrjg/index.html'

user_agent = "Mozilla/5.0 (X11; Linux x86_64; rv:45.0) Gecko/20100101 Firefox/45.0"

headers = {

'User-Agent': user_agent

}

response = requests.get(url,headers=headers)

print(response.status_code)

- Checking the status code

response = requests.get(url, headers=headers)

exit() if response.status_code != 200 else print("Request succeeded")
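Besides comparing `status_code` with 200 by hand, requests offers `response.ok`, the named constants in `requests.codes`, and `raise_for_status()`. The sketch below uses a hand-built `Response` object as a stand-in for a real server reply so that it runs offline; that stand-in is an assumption for illustration only.

```python
import requests

# A hand-built Response stands in for a real server reply (illustrative only).
response = requests.Response()
response.status_code = 404

# response.ok is False for 4xx and 5xx status codes.
print(response.ok)

# requests.codes provides named constants for status codes.
print(response.status_code == requests.codes.ok)

# raise_for_status() turns an error status into an exception.
failed = False
try:
    response.raise_for_status()
except requests.exceptions.HTTPError as error:
    failed = True
    print('Request failed:', error)
```

Raising an exception is often easier to handle in a crawler than calling `exit()`, because the caller can decide whether to retry or skip the URL.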

## Advanced usage: uploading a file

# Upload a file; the with block closes it after the request

with open('picture.png', 'rb') as f:
    response = requests.post('http://httpbin.org/post', files={'file': f})

print(response.text)

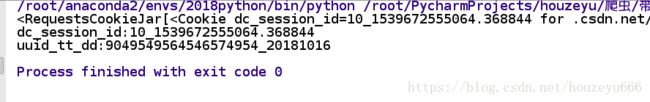
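The `files` argument makes requests encode the body as `multipart/form-data`. The sketch below prepares such a request locally to show the resulting header and body. It assumes an in-memory `io.BytesIO` object with made-up bytes in place of a real image, and uses the `(filename, fileobj, content_type)` tuple form.

```python
import io

import requests

# In-memory stand-in for a real image file (contents are made up).
fake_file = io.BytesIO(b'fake image bytes')

# The tuple form sets the filename and content type explicitly.
files = {'file': ('picture.png', fake_file, 'image/png')}

# prepare() builds the multipart body locally; nothing is sent.
prepared = requests.Request('POST', 'http://httpbin.org/post', files=files).prepare()

print(prepared.headers['Content-Type'])
print(len(prepared.body), 'bytes in the encoded body')
```

The `Content-Type` header includes a generated boundary string, which is why it should not be set by hand when using `files`.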

## Getting cookies

# Get cookie information

response = requests.get('http://www.csdn.net')

print(response.cookies)

for key, value in response.cookies.items():
    print(key + ':' + value)

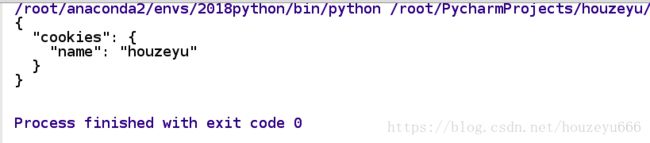
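`response.cookies` is a `RequestsCookieJar`, which can also be converted to a plain dictionary. The sketch below builds a jar by hand so it runs offline; the cookie names, values, and the `example.com` domain are invented for illustration.

```python
import requests

# Build a jar by hand; a real one would come from response.cookies.
jar = requests.cookies.RequestsCookieJar()
jar.set('uuid', 'abc123', domain='example.com', path='/')
jar.set('theme', 'dark', domain='example.com', path='/')

# Iterate over name/value pairs, as in the loop above.
for key, value in jar.items():
    print(key + ':' + value)

# Convert the jar to an ordinary dict for storage or logging.
cookie_dict = requests.utils.dict_from_cookiejar(jar)
print(cookie_dict)
```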

## Reusing existing cookies to access a site (session persistence)

# Set a cookie: name = houzeyu

s = requests.session()

response1 = s.get('http://httpbin.org/cookies/set/name/houzeyu')

response2 = s.get('http://httpbin.org/cookies')

print(response2.text)

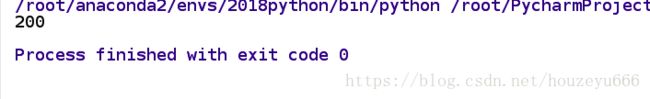
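A session attaches its stored cookies and default headers to every request it sends. The sketch below prepares a request through a session to make this visible without any network traffic. The `User-Agent` value is a made-up example, and the cookie is set directly on the session rather than by a server.

```python
import requests

session = requests.Session()

# Headers set on the session apply to every request it sends.
session.headers.update({'User-Agent': 'example-crawler/0.1'})  # hypothetical value

# Store a cookie in the session, as the server did in the example above.
session.cookies.set('name', 'houzeyu')

# prepare_request merges session state into the request without sending it.
request = requests.Request('GET', 'http://httpbin.org/cookies')
prepared = session.prepare_request(request)

print(prepared.headers['User-Agent'])
print(prepared.headers['Cookie'])
```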

## Skipping certificate verification

# Skip certificate verification

url = 'https://www.12306.cn'

response = requests.get(url, verify=False)

print(response.status_code)

print(response.text)

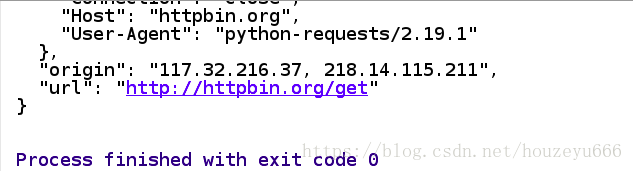
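With `verify=False`, urllib3 emits an `InsecureRequestWarning` for each request. One way to silence it only around the call is sketched below. The helper name `get_without_verification` is hypothetical, and skipping verification is only reasonable for hosts you already trust.

```python
import warnings

import requests
import urllib3


def get_without_verification(url, timeout=10):
    """GET url with certificate checks disabled and the warning suppressed.

    Hypothetical helper for illustration.
    """
    with warnings.catch_warnings():
        # Ignore only the warning caused by verify=False, and only in this block.
        warnings.simplefilter('ignore', urllib3.exceptions.InsecureRequestWarning)
        return requests.get(url, verify=False, timeout=timeout)
```

A safer option, when the site uses a private certificate authority, is to pass the path of a CA bundle as `verify` instead of `False`.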

## Proxy settings / setting a timeout

proxy = {

'https': '171.221.239.11:808',

'http': '218.14.115.211:3128'

}

response = requests.get('http://httpbin.org/get', proxies=proxy, timeout=10)

print(response.text)
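Free proxies fail often, so a request that goes through one is usually wrapped in error handling. The sketch below assumes a hypothetical helper named `fetch`, and a `(connect, read)` timeout pair chosen arbitrarily.

```python
import requests


def fetch(url, proxies=None, timeout=(3, 10)):
    """Return the response body, or None if the request fails.

    timeout is a (connect, read) pair in seconds.
    Hypothetical helper for illustration.
    """
    try:
        response = requests.get(url, proxies=proxies, timeout=timeout)
        response.raise_for_status()
    except requests.exceptions.RequestException as error:
        # Base class of ProxyError, ConnectTimeout, ReadTimeout, HTTPError, etc.
        print('Request failed:', error)
        return None
    return response.text
```

With the `proxy` dictionary from above, `fetch('http://httpbin.org/get', proxies=proxy)` returns the body text, or `None` when the proxy is unreachable.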

########################################