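Scraping the Zhihu Explore page with requests + pyquery

This is my first web scraper. It saves the scraped answers to a txt file.

Reference: 《Python 3 网络爬虫开发实战》 (Python 3 Web Crawler Development in Practice)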

import requests
from requests import RequestException
from pyquery import PyQuery as pq

url = 'https://www.zhihu.com/explore'

# Browser-like User-Agent; the two adjacent string literals are concatenated
headers = {
    'User-Agent':
        'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit'
        '/537.36 (KHTML, like Gecko) Chrome/74.0.3729.169 Safari/537.36'
}

def get_one_page(url):
    # Return the page HTML, or None if the request fails or is not a 200
    try:
        response = requests.get(url, headers=headers)
        if response.status_code == 200:
            return response.text
        return None
    except RequestException:
        return None

def write_result(content):
    doc = pq(content)
    items = doc('.explore-tab .feed-item').items()
    # Append each answer to explore.txt, followed by a line of '=' characters
    with open('explore.txt', 'a', encoding='utf-8') as file:
        for item in items:
            question = item.find('h2').text()
            author = item.find('.author-link-line').text()
            # Re-parse the inner HTML of .content to get its plain text
            answer = pq(item.find('.content').html()).text()
            file.write('\n'.join([question, author, answer]))
            file.write('\n' + '=' * 50 + '\n')

def main():

write_result(get_one_page(url))

if __name__ == '__main__':

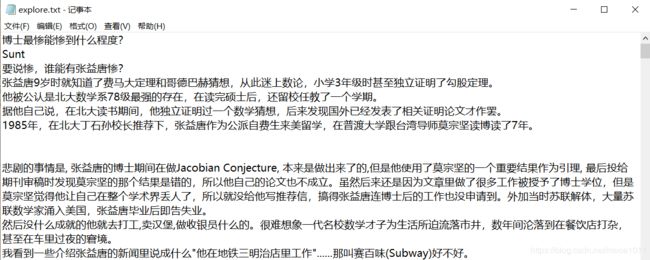

main()结果如下:
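The layout written to explore.txt (question, author and answer joined by newlines, then a line of 50 `=` characters) can be read back into records. The following is only a sketch: `SEPARATOR`, `format_record` and `parse_records` are hypothetical helper names that do not appear in the scraper above, and the sample data is made up. It also assumes that no answer itself contains a line of exactly 50 `=` characters.

```python
# Hypothetical helpers mirroring the explore.txt layout used by write_result.
SEPARATOR = '\n' + '=' * 50 + '\n'


def format_record(question, author, answer):
    # Same layout as the two file.write() calls in write_result
    return '\n'.join([question, author, answer]) + SEPARATOR


def parse_records(text):
    records = []
    for chunk in text.split(SEPARATOR):
        if not chunk.strip():
            continue  # skip the empty piece after the last separator
        # The first two lines are question and author; the rest is the answer
        question, author, answer = chunk.split('\n', 2)
        records.append({'question': question, 'author': author, 'answer': answer})
    return records


# Made-up sample data, not real scraped output
sample = (format_record('Q1', 'A1', 'line one\nline two')
          + format_record('Q2', 'A2', 'short'))
records = parse_records(sample)
print(records)
```

Splitting with `split('\n', 2)` keeps multi-line answers intact, because only the first two newlines are treated as field boundaries.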

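To write the output to CSV instead, open the file with newline=''. Otherwise an extra blank line can appear after every row (this happens on Windows, where '\n' is translated to '\r\n' on write).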

# Rewritten write_result
import csv

def write_result(content):
    doc = pq(content)
    items = doc('.explore-tab .feed-item').items()
    # newline='' lets csv.writer control the line endings itself
    with open('explore.csv', 'w', encoding='utf-8', newline='') as file:
        writer = csv.writer(file)
        writer.writerow(['question', 'author', 'answer'])
        for item in items:
            question = item.find('h2').text()
            author = item.find('.author-link-line').text()
            answer = pq(item.find('.content').html()).text()
            writer.writerow([question, author, answer])
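The effect of newline='' can be checked without scraping anything. The sketch below is not part of the original script: `rows` and `write_rows` are hypothetical names, and the data is made up. Passing newline='\r\n' is used here to imitate the newline translation that Windows applies by default, so the extra '\r' shows up on any platform.

```python
import csv
import os
import tempfile

# Made-up rows, standing in for scraped data
rows = [['question', 'author'], ['q1', 'a1']]


def write_rows(path, newline):
    # csv.writer ends every row with '\r\n' by default;
    # `newline` decides whether open() translates the '\n' again
    with open(path, 'w', encoding='utf-8', newline=newline) as f:
        csv.writer(f).writerows(rows)
    with open(path, 'rb') as f:
        return f.read()


with tempfile.TemporaryDirectory() as tmp:
    # newline='' : bytes are written exactly as csv.writer produced them
    good = write_rows(os.path.join(tmp, 'good.csv'), '')
    # newline='\r\n' : each '\n' becomes '\r\n', giving '\r\r\n' per row
    bad = write_rows(os.path.join(tmp, 'bad.csv'), '\r\n')

print(good)
print(bad)
```

The doubled '\r' in the second file is what spreadsheet programs display as an empty line between rows.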