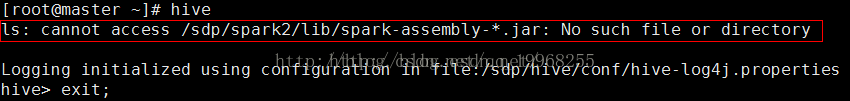

Spark errors: installation series, part 8

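1. Since the Spark 2.0.0 release there is no assembly jar anymore; the jars directory holds many small jars instead.

Change the directory used to look up jars accordingly.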
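As a rough sketch of that lookup change, a script that used to reference the single assembly jar can pick the directory by layout instead. The function name `spark_jar_dir` is hypothetical, and the `lib` fallback assumes the Spark 1.x binary layout, where the assembly jar sat under `lib/`.

```shell
# Print the directory that holds Spark's jars under the given install root.
# Assumption: Spark 2.x keeps many small jars in jars/, while 1.x kept a
# single spark-assembly-*.jar in lib/.
spark_jar_dir() {
  if [ -d "$1/jars" ]; then
    echo "$1/jars"
  elif [ -d "$1/lib" ]; then
    echo "$1/lib"
  else
    echo "no jar directory under $1" >&2
    return 1
  fi
}
```

For example, `ls "$(spark_jar_dir "$SPARK_HOME")"/*.jar` would list whichever jars the installation actually ships.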

2. Exception: HiveConf of name hive.enable.spark.execution.engine does not exist

In hive-site.xml:

hive.enable.spark.execution.engine is obsolete; simply delete that setting.
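Before editing, it can help to locate the stale entry. The following is only a sketch; the helper name `find_obsolete_key` is hypothetical, and the path in the usage note assumes the config lives under `$HIVE_HOME/conf`.

```shell
# Print the matching lines (prefixed with line numbers) of a Hive config
# file that mention the obsolete key.
# Exit status is non-zero when the key is absent (grep's convention).
find_obsolete_key() {
  grep -n 'hive\.enable\.spark\.execution\.engine' "$1"
}
```

Running `find_obsolete_key "$HIVE_HOME/conf/hive-site.xml"` shows where the enclosing property block sits, so it can be removed by hand.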

3. Exception

Failed to execute spark task, with exception 'org.apache.hadoop.hive.ql.metadata.HiveException(Failed to create spark client.)' FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.spark.SparkTask

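The Spark and Hive versions do not match, so Spark has to be rebuilt. Here I am using the stable Hive release 2.0.1, whose pom.xml requires Spark 1.5.0. The Hive and Spark versions must correspond.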
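One way to read the required version out of the Hive source tree is sketched below. It assumes the version is declared as a `<spark.version>` property in pom.xml; the function name is hypothetical.

```shell
# Print the first <spark.version> value found in a Maven pom.xml.
hive_required_spark_version() {
  sed -n 's:.*<spark\.version>\(.*\)</spark\.version>.*:\1:p' "$1" | head -n 1
}
```

Calling it on the pom.xml at the root of the Hive source tree gives the Spark version to build against.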

After rebuilding, the following error is reported:

Exception in thread "main" java.lang.NoClassDefFoundError: org/slf4j/impl/StaticLoggerBinder

Add the following to spark-env.sh:

export SPARK_DIST_CLASSPATH=$(hadoop classpath)

The Spark master now starts, but the slaves still fail with the error above.

Rebuild with Scala 2.11:

./dev/change-scala-version.sh 2.11

mvn -Pyarn -Phadoop-2.4 -Dscala-2.11 -DskipTests clean package

4. Exception

4] shutting down ActorSystem [sparkMaster]

java.lang.NoClassDefFoundError: com/fasterxml/jackson/databind/Module

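Delete the following jars from hive/lib, then copy in the 2.2.3 versions that ship with Hadoop (run the cp commands from inside hive/lib):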

jackson-annotations-2.4.0.jar

jackson-core-2.4.2.jar

jackson-databind-2.4.2.jar

cp $HADOOP_HOME/share/hadoop/tools/lib/jackson-annotations-2.2.3.jar ./

cp $HADOOP_HOME/share/hadoop/tools/lib/jackson-core-2.2.3.jar ./

cp $HADOOP_HOME/share/hadoop/tools/lib/jackson-databind-2.2.3.jar ./

Check the Spark runtime log to see where jars are loaded from, and add the jars above there as well.
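To see which jackson versions a given directory currently holds, a small helper along these lines can be used. This is a sketch; the function name is hypothetical, and `$HIVE_HOME` in the usage note is an assumed variable.

```shell
# List jackson jars (file names only) found directly in a directory.
# The glob expands in sorted order; an unmatched glob prints nothing.
list_jackson_jars() {
  for f in "$1"/jackson-*.jar; do
    if [ -e "$f" ]; then basename "$f"; fi
  done
}
```

Comparing `list_jackson_jars "$HIVE_HOME/lib"` with `list_jackson_jars "$HADOOP_HOME/share/hadoop/tools/lib"` makes a version mismatch easy to spot.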

5. Exception

java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: root is not allowed to impersonate root

In Hadoop, add the corresponding properties to core-site.xml so that root is allowed to impersonate.
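The property listing itself is not included here. For this error the usual candidates are Hadoop's `hadoop.proxyuser.<user>.hosts` and `hadoop.proxyuser.<user>.groups` settings. The sketch below prints such entries for a given user; the helper name is hypothetical, and the wildcard value `*` is an assumption that should be tightened on a real cluster.

```shell
# Print core-site.xml <property> entries that let the given user impersonate.
# Assumption: "*" (any host, any group) is acceptable for a test setup.
proxyuser_properties() {
  cat <<EOF
<property>
  <name>hadoop.proxyuser.$1.hosts</name>
  <value>*</value>
</property>
<property>
  <name>hadoop.proxyuser.$1.groups</name>
  <value>*</value>
</property>
EOF
}
```

The output of `proxyuser_properties root` would go inside the `<configuration>` element of core-site.xml, followed by a restart of the Hadoop services so the change takes effect.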

6. Exception

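MetaException(message:Hive Schema version 2.1.0 does not match metastore's schema version 2.0.0 Metastore is not upgraded or corrupt)

The Hadoop or Hive version is too high: here the Hive binaries expect schema version 2.1.0 while the metastore database is still at 2.0.0.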
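To make the mismatch explicit, the two versions can be pulled out of the exception text. This is a minimal sketch with a hypothetical function name; it only parses the message and does not touch the metastore.

```shell
# Extract "<hive schema version> <metastore schema version>" from the
# MetaException text. Prints nothing if the message has a different shape.
schema_versions() {
  printf '%s\n' "$1" | sed -n "s/.*Hive Schema version \([0-9.]*\) does not match metastore's schema version \([0-9.]*\).*/\1 \2/p"
}
```

If the first number is the higher one, the metastore schema is behind the Hive binaries. Hive ships `schematool` for inspecting and upgrading the schema (for example `schematool -dbType mysql -info`, or `-upgradeSchema`), though whether to upgrade the schema or use an older Hive depends on the setup.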

show VARIABLES like '%char%';

7. Fix 1: FAILED: Error in metadata: javax.jdo.JDODataStoreException: Error(s) were found while auto-creating/validating the datastore for classes. The errors are printed in the log, and are attached to this exception.

NestedThrowables:

com.mysql.jdbc.exceptions.jdbc4.MySQLSyntaxErrorException: Specified key was too long; max key length is 767 bytes

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.DDLTask

Run alter database hive character set latin1; in the hive database in MySQL to change the character set of the Hive metastore database. This resolves the problem.
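The character set matters because the error reports a 767-byte limit on index keys, and MySQL's utf8 needs up to 3 bytes per character while latin1 needs 1. The arithmetic can be sketched as follows; the helper name is hypothetical and the column lengths in the usage note are illustrative, not taken from the Hive schema.

```shell
# Succeed if an indexed column of $1 characters fits in the 767-byte key
# limit reported by the error, given $2 maximum bytes per character.
fits_in_key_limit() {
  [ $(( $1 * $2 )) -le 767 ]
}
```

With utf8, `fits_in_key_limit 256 3` fails (768 bytes), while the same column under latin1, `fits_in_key_limit 256 1`, succeeds.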

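Fix 2: the Hive metadata is stored in MySQL with the utf8 character set; change it.

Note: when creating the database manually in MySQL, specify the latin1 character set. The few columns that need utf8 must then be changed by hand.

To keep UTF-8 Chinese text, change only the MySQL columns that store comments to utf8.

Change the character set of column comments: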

alter table COLUMNS modify column COMMENT varchar(256) character set utf8;

Change the character set of table comments:

alter table TABLE_PARAMS modify column PARAM_VALUE varchar(4000) character set utf8;

show create table C1_DIM_ACCOUNT;