Industrial Part Defect Detection with Deep Learning

Introduction

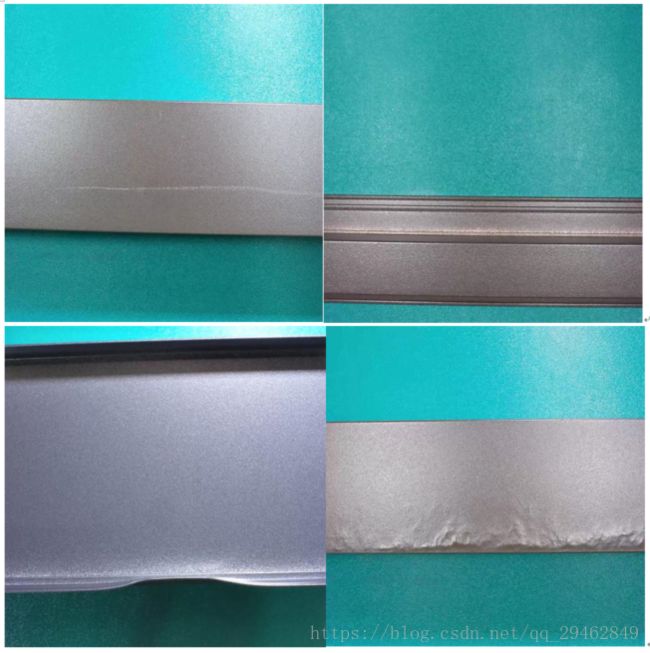

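When industrial parts come off the production line, they usually have to be inspected for integrity and functionality. The figure above shows four defect types, from top left to bottom right: 擦花 (scratches), 漏底 (exposed substrate), 碰凹 (dents), and 凸粉 (raised powder coating). This post explains how to classify images of these four defect types, using keras-2.1.6 + tensorflow-1.7.0 on a GTX1060.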

Dataset

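Only a small dataset is available: 250 images in total, of which 29 are 擦花 (scratches), 140 are 漏底 (exposed substrate), 20 are 碰凹 (dents), and 61 are 凸粉 (raised powder). That is far too little data for a deep convolutional network, and the class distribution is highly imbalanced. Moreover, scratches have the least conspicuous features of the four classes, so they deserve extra attention during training.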
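One common way to quantify this imbalance, and to counteract it during training, is inverse-frequency class weighting. The sketch below is an illustration, not the author's method: it assumes the "balanced" formula weight = n_samples / (n_classes × count), the same one scikit-learn uses for `class_weight='balanced'`, applied to the raw counts above.

```python
# Inverse-frequency ("balanced") class weights for the raw 250-image dataset.
# Assumed formula (same as scikit-learn's class_weight='balanced'):
#   weight_c = n_samples / (n_classes * count_c)
counts = {'擦花': 29, '漏底': 140, '碰凹': 20, '凸粉': 61}


def balanced_class_weights(class_counts):
    n_samples = sum(class_counts.values())
    n_classes = len(class_counts)
    return {name: n_samples / (n_classes * c) for name, c in class_counts.items()}


weights = balanced_class_weights(counts)
for name, w in weights.items():
    print(f"{name}: {counts[name]:3d} images -> weight {w:.3f}")
```

The rarest class (碰凹) ends up with 7 times the weight of the most common one (漏底). In Keras, `model.fit(class_weight=...)` expects such a dict keyed by class index (0 to 3) rather than by class name.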

Image augmentation

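Each class is augmented to roughly 300 images with Keras's ImageDataGenerator, mainly through rotation, shifting, and shear (the script also applies zoom and horizontal flips). The script below augments one class per run (碰凹 here, as the save prefix shows) and writes the generated images to disk.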

from keras.preprocessing.image import ImageDataGenerator
import numpy as np
import keras
from PIL import Image
from image_handle import *  # provides get_image_name()
import os

data = np.empty((250, 800, 800, 3), dtype="float32")
image_label = []
image_label_list = ['擦花', '漏底', '碰凹', '凸粉']  # 漏底 makes up too large a share, so predictions end up biased toward it
image_dir = 'F:/Data/guangdong_round1_train1_20180903/'
j = 0
k = 0

for fn in os.listdir(image_dir):
    image_name = get_image_name(fn)
    for i in range(0, 4):
        if image_label_list[i] == image_name:
            image_name = i  # replace the class name with its index
    image = Image.open(image_dir + fn, 'r')  # the original images are too large, so downscale them
    image_down = image.resize((800, 800), Image.ANTIALIAS)
    arr = np.asarray(image_down, dtype="float32")
    data[j, :, :, :] = arr
    j = j + 1
    image_label.append(image_name)

# Select the class to augment in this run by its label instead of relying on
# the order in which os.listdir() returns the files
target = image_label_list.index('碰凹')
mask = np.array(image_label) == target
x_train = data[:j][mask]
y_train = keras.utils.to_categorical(image_label, 4)[mask]  # convert to one-hot vectors
print(len(x_train))
print(len(y_train))
print(image_label)

generator = ImageDataGenerator(
    rotation_range=20,
    width_shift_range=0.2,
    height_shift_range=0.2,
    shear_range=0.1,
    zoom_range=0.1,
    horizontal_flip=True,
)

for batch in generator.flow(x_train,
                            batch_size=100,
                            save_to_dir='C:/Users/18301/Desktop/image_data',  # where generated images are saved
                            save_prefix='碰凹2018',  # keep '2018' so get_image_name() can recover the label
                            save_format='jpg'):
    k += 1
    # Each batch holds min(batch_size, len(x_train)) images, so stopping after 10
    # batches yields about len(x_train) * 10 new images; change 10 as needed
    if k >= 10:
        break  # otherwise the generator would loop indefinitely
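Since each batch that `flow()` yields holds at most `batch_size` images and never crosses the end of the dataset, the number of batches to request per class follows from simple arithmetic. A small sketch, assuming the goal is "at least 300 generated images per class", the raw class counts from the Dataset section, and `batch_size=100` as in the script; `batches_needed` is a hypothetical helper, and its batch-size rule mirrors (to the best of my understanding) how Keras 2.x slices batches.

```python
TARGET = 300      # desired number of generated images per class (assumption)
BATCH_SIZE = 100  # batch_size passed to generator.flow() in the script above
counts = {'擦花': 29, '漏底': 140, '碰凹': 20, '凸粉': 61}


def batches_needed(n_images, target=TARGET, batch_size=BATCH_SIZE):
    # Simulate the batch sizes flow() produces: full batches until the end of
    # the dataset, then a shorter remainder batch, then the next pass starts over
    generated, batches, pos = 0, 0, 0
    while generated < target:
        size = min(batch_size, n_images - pos)
        generated += size
        batches += 1
        pos = (pos + size) % n_images
    return batches


for name, n in counts.items():
    print(f"{name}: {n} originals -> {batches_needed(n)} batches")
```

For 碰凹 (20 originals) this gives 15 batches; for 漏底 (140 originals) the batches alternate between 100 and 40 images, so 5 batches already exceed 300.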

Augmented images:

Training

Main program, main.py:

import os
import numpy as np
import keras
import tensorflow as tf
import keras.backend.tensorflow_backend as KTF
from sklearn.model_selection import train_test_split
from image_handle import *  # provides get_image_data()
from model import BuildModel

# Let this process use at most 60% of GPU memory, to protect the GPU
config = tf.ConfigProto()
config.gpu_options.per_process_gpu_memory_fraction = 0.6
session = tf.Session(config=config)
# Register the session with Keras
KTF.set_session(session)

batch_size = 5
epoch_size = 50
resize_width = 300
resize_height = 300
image_label = []
image_label_list = ['擦花', '漏底', '碰凹', '凸粉']  # 漏底 makes up too large a share, so predictions end up biased toward it
image_dir = 'F:/Data/guangdong_round1_train1_20180903/image_data/'
# Size the array from the directory contents instead of hard-coding the image count
image_number = len(os.listdir(image_dir))
data = np.empty((image_number, resize_height, resize_width, 3), dtype="float32")
data, image_label, image_vec = get_image_data(image_dir, resize_width, resize_height, data, image_label, image_label_list)

# Prepare images and labels for the network
X_train = data
Y_train = keras.utils.to_categorical(image_label, 4)  # convert to one-hot vectors

# Split into training and test sets
x_train, x_test, y_train, y_test = train_test_split(X_train, Y_train, test_size=0.1, random_state=None)

# Build and train the model (functional API, see model.py)
model = BuildModel(x_train, y_train, x_test, y_test, batch_size, epoch_size)
model.run_model()

model.py

from keras.layers import Dense, Dropout, Flatten, Input
from keras.layers import Conv2D, MaxPooling2D, add, concatenate
from keras.layers import BatchNormalization, Activation
from keras import regularizers
from keras.models import Model


class BuildModel():
    def __init__(self, x_train, y_train, x_test, y_test, batch_size, epoch_size):
        self.x_train = x_train
        self.y_train = y_train
        self.x_test = x_test
        self.y_test = y_test
        self.batch_size = batch_size
        self.epoch_size = epoch_size

    def add_layer(self, X, channel_num):
        # Residual block borrowed from ResNet: two 3x3 convolutions plus an identity
        # shortcut (X must already have channel_num channels for the sum to work)
        conv_1 = Conv2D(channel_num, (3, 3), padding='same')(X)
        conv_1 = BatchNormalization()(conv_1)
        conv_1 = Activation('relu')(conv_1)
        # conv_1 = MaxPooling2D(pool_size=(3, 3), strides=(1, 1), padding='same')(conv_1)
        conv_1 = Conv2D(channel_num, (3, 3), padding='same')(conv_1)
        conv_1 = BatchNormalization()(conv_1)
        merge_data = add([conv_1, X])  # element-wise sum; replaces the deprecated merge(..., mode='sum')
        out = Activation('relu')(merge_data)
        return out

    def google_layer(self, X):
        # Single-level Inception-style block borrowed from GoogLeNet: parallel 1x1, 3x3
        # and 5x5 convolutions plus a max-pooling branch, concatenated along channels
        conv_1 = Conv2D(32, (1, 1), padding='same')(X)
        conv_1 = BatchNormalization()(conv_1)
        conv_1 = Activation('relu')(conv_1)
        conv_2 = Conv2D(32, (3, 3), padding='same')(X)
        conv_2 = BatchNormalization()(conv_2)
        conv_2 = Activation('relu')(conv_2)
        conv_3 = Conv2D(32, (5, 5), padding='same')(X)
        conv_3 = BatchNormalization()(conv_3)
        conv_3 = Activation('relu')(conv_3)
        pooling_1 = MaxPooling2D(pool_size=(2, 2), strides=(1, 1), padding='same')(X)
        out = concatenate([conv_1, conv_2, conv_3, pooling_1], axis=-1)  # replaces merge(..., mode='concat')
        return out

    def run_model(self):
        # Input: 300x300 images with 3 channels -> (300, 300, 3) tensors.
        # The functional API is used here instead of the Sequential model.
        # Plain residual blocks worked better than residual + GoogLeNet blocks and converged faster.
        inputs = Input(shape=(300, 300, 3))
        X = Conv2D(32, (3, 3))(inputs)  # 32 filters of size 3x3, 'valid' padding
        X = BatchNormalization()(X)
        X = Activation('relu')(X)
        X = self.add_layer(X, 32)
        # X = self.google_layer(X)
        X = MaxPooling2D(pool_size=(2, 2), strides=(2, 2))(X)
        X = Conv2D(64, (3, 3), kernel_regularizer=regularizers.l2(0.01))(X)
        X = BatchNormalization()(X)
        X = Activation('relu')(X)
        X = self.add_layer(X, 64)
        # X = self.google_layer(X)
        X = MaxPooling2D(pool_size=(2, 2), strides=(2, 2))(X)
        X = Conv2D(64, (3, 3))(X)
        X = BatchNormalization()(X)
        X = Activation('relu')(X)
        X = MaxPooling2D(pool_size=(2, 2), strides=(2, 2))(X)
        X = self.add_layer(X, 64)
        # X = self.google_layer(X)
        X = Conv2D(32, (3, 3), kernel_regularizer=regularizers.l2(0.01))(X)
        X = BatchNormalization()(X)
        X = Activation('relu')(X)
        X = self.add_layer(X, 32)
        # X = self.google_layer(X)
        X = MaxPooling2D(pool_size=(2, 2), strides=(2, 2))(X)
        X = Conv2D(64, (3, 3))(X)
        X = BatchNormalization()(X)
        X = Activation('relu')(X)
        X = self.add_layer(X, 64)
        # X = self.google_layer(X)
        X = Flatten()(X)
        X = Dense(256, activation='relu')(X)
        X = Dropout(0.5)(X)
        predictions = Dense(4, activation='softmax')(X)
        model = Model(inputs=inputs, outputs=predictions)
        # sgd = SGD(lr=0.01, decay=1e-6, momentum=0.9, nesterov=True)
        model.compile(loss='categorical_crossentropy', optimizer='rmsprop', metrics=['accuracy'])
        # Keras 2 only honours a dict for class_weight (the string 'auto' has no effect),
        # so pass explicit inverse-frequency weights computed from the one-hot labels
        counts = self.y_train.sum(axis=0)
        class_weight = {i: len(self.y_train) / (len(counts) * max(c, 1)) for i, c in enumerate(counts)}
        model.fit(self.x_train, self.y_train, batch_size=self.batch_size, epochs=self.epoch_size,
                  class_weight=class_weight, validation_split=0.1)
        model.save('model_google_layer.h5')
        score = model.evaluate(self.x_test, self.y_test, batch_size=self.batch_size)
        print(score)
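To see how large the input to the first Dense layer is, the spatial size can be traced through `run_model()` by hand. A plain-Python sketch, assuming Keras's output-size rules: a 'valid' 3×3 convolution with stride 1 maps n to n − 2, a 2×2, stride-2 'valid' max-pool maps n to ⌊(n − 2)/2⌋ + 1, and the `add_layer()` residual blocks use `padding='same'`, so they leave the size unchanged and are omitted.

```python
def conv_valid(n, k=3):
    # 'valid' convolution, stride 1
    return n - k + 1


def pool_valid(n, pool=2, stride=2):
    # 'valid' max pooling
    return (n - pool) // stride + 1


# Size-changing layers of run_model(), in order
steps = [conv_valid, pool_valid,   # Conv2D(32) -> MaxPooling2D
         conv_valid, pool_valid,   # Conv2D(64) -> MaxPooling2D
         conv_valid, pool_valid,   # Conv2D(64) -> MaxPooling2D
         conv_valid, pool_valid,   # Conv2D(32) -> MaxPooling2D
         conv_valid]               # Conv2D(64)

size = 300
trace = [size]
for step in steps:
    size = step(size)
    trace.append(size)

final_channels = 64
flat = size * size * final_channels   # length of the Flatten() output
dense_params = flat * 256 + 256       # weights + biases of Dense(256)
print(trace)
print(flat, dense_params)
```

The final feature map is 14×14×64, so `Flatten()` produces 12,544 values and `Dense(256)` alone holds about 3.2 million parameters, the large majority of the network's weights.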

image_handle.py

import numpy as np
from PIL import Image
import random
import re
import os


def get_image_name(image_name):
    # File names start with '<class name>2018...'; strip the '.jpg' extension,
    # then keep everything before the first '2018' as the class name
    image_name = re.split(r'\.jpg', image_name)
    name = re.split('2018', image_name[0])
    return name[0]


def one_hot_vector(image_label, image_label_list):
    image_label = np.asarray(image_label, dtype=int)
    classes = len(image_label_list)
    one_hot_label = np.zeros(shape=(image_label.shape[0], classes))  # all-zero matrix, one column per class
    one_hot_label[np.arange(0, image_label.shape[0]), image_label] = 1
    return one_hot_label


def batch_generate(x_train, y_train, batch_size):
    # Draw a random mini-batch (with replacement) from the whole training set
    batch_x = []
    batch_y = []
    for i in range(batch_size):
        t = random.randint(0, len(x_train) - 1)
        batch_x.append(x_train[t])
        batch_y.append(y_train[t])
    return batch_x, batch_y


def get_image_data(image_dir, resize_width, resize_height, data, image_label, image_label_list):
    j = 0
    image_vec = []
    for fn in os.listdir(image_dir):
        image_name = get_image_name(fn)
        for i in range(0, 4):
            if image_label_list[i] == image_name:
                image_name = i  # replace the class name with its index
        # The original images are too large, so downscale them; force 3 RGB channels
        image = Image.open(image_dir + fn).convert('RGB')
        image_down = image.resize((resize_width, resize_height), Image.ANTIALIAS)
        image_vec.append(image_down)
        arr = np.asarray(image_down, dtype="float32")
        data[j, :, :, :] = arr
        j = j + 1
        image_label.append(image_name)
    return data, image_label, image_vec
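`get_image_name()` relies on every file name starting with the class name immediately followed by '2018'. That is also why the augmentation script uses `save_prefix='碰凹2018'`: as far as I know, Keras 2.x `ImageDataGenerator.flow()` names saved files `'{prefix}_{index}_{hash}.{format}'`. A quick check of the same parsing logic (with the dot in '.jpg' escaped), using two made-up file names that are hypothetical, not taken from the dataset:

```python
import re

image_label_list = ['擦花', '漏底', '碰凹', '凸粉']


def get_image_name(image_name):
    # Same logic as image_handle.get_image_name(): drop the '.jpg' extension,
    # then keep the part before the first '2018'
    stem = re.split(r'\.jpg', image_name)[0]
    return re.split('2018', stem)[0]


# Hypothetical names: an original-style file and an augmented-style file
for fn in ['擦花20180830172530对照样本.jpg', '碰凹2018_0_1234.jpg']:
    name = get_image_name(fn)
    print(fn, '->', name, '->', image_label_list.index(name))
```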

Training in progress:

Predicting the class of new images

model_test.py

import numpy as np

import keras

from keras.models import Sequential,load_model

from keras.layers import Dense, Dropout, Flatten,Input

from keras.layers import Conv2D, MaxPooling2D,merge

from keras.layers import BatchNormalization,Activation

from sklearn.model_selection import train_test_split

from keras.optimizers import SGD

from keras.models import Model

import tensorflow as tf

from PIL import Image,ImageDraw,ImageFont

from image_handle import *

import os

import cv2

from keras import backend as K

K.clear_session()

start=10

end=11

image_number=1451

resize_width=300

resize_height=300

image_resize=[]

image_vector=[]

image_label=[]

image_label_list=['擦花','漏底','碰凹','凸粉'] #漏底占的比例太大了,导致后面的预测全部都偏向该物体

data = np.empty((22,300, 300,3), dtype="float32")

image_dir='C:/Users/18301/Desktop/test_image/'

data,image_label,image_vec=get_image_data(image_dir,resize_width,resize_height,data,image_label,image_label_list)

#准备好数据和标签送进VGG网络中

X_train=data

Y_train = keras.utils.to_categorical(image_label,4) #转换成one-hot向量

# print(X_train)

print(Y_train)

x_train=X_train[start:end]

y_train=Y_train[start:end]

model = load_model('model_google_layer.h5')

preds = model.predict(x_train)

print(preds)

list_pre= preds[0].tolist()

object_index=list_pre.index(max(list_pre))

y_=Y_train[start:end][0]

y_=y_.tolist()

print(y_)

true_object_index=y_.index(max(y_))

print(object_index)

print(true_object_index)

s_pre=image_label_list[object_index]

s_true=image_label_list[true_object_index]

print(s_pre)

print(s_true)

image=image_vec[start]

draw = ImageDraw.Draw(image)

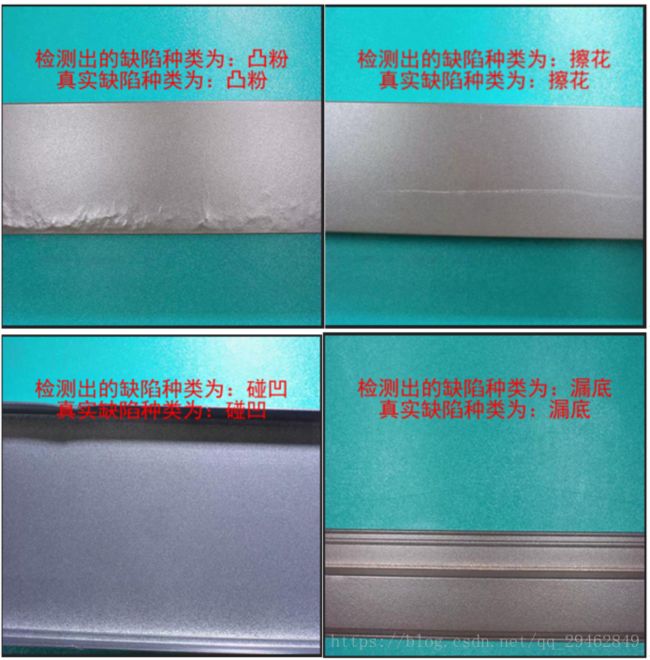

############注意:这里检测出来的物体种类,擦花由于特征不够明显,基本上被判断成凸粉,这一点需要改善#################

s_pre_out='检测出的缺陷种类为:'+s_pre

s_true_out='真实缺陷种类为:'+s_true

print(s_pre_out)

print(s_true_out)

ttfront = ImageFont.truetype('simhei.ttf', 20)#字体大小

draw.text((30,40),str(s_pre_out), fill = (255, 0 ,0), font=ttfront)

draw.text((50,60),str(s_true_out), fill = (255, 0 ,0), font=ttfront)

image.show()

from keras import backend as K

K.clear_session()

最终识别结果:

[[1.4585784e-34 0.0000000e+00 1.0000000e+00 0.0000000e+00]]

[0.0, 0.0, 1.0, 0.0]

检测出的缺陷种类为:碰凹

真实缺陷种类为:碰凹

[[1.3628840e-03 2.3904990e-18 5.2082274e-08 9.9863702e-01]]

[0.0, 0.0, 0.0, 1.0]

检测出的缺陷种类为:凸粉

真实缺陷种类为:凸粉

[[9.3814194e-01 1.4996887e-09 1.5372947e-04 6.1704315e-02]]

[1.0, 0.0, 0.0, 0.0]

检测出的缺陷种类为:擦花

真实缺陷种类为:擦花

[[0. 1. 0. 0.]]

[0.0, 1.0, 0.0, 0.0]

检测出的缺陷种类为:漏底

真实缺陷种类为:漏底

Source code

Source code: see "Source code for industrial part defect detection with deep learning".

Training images: see "Industrial part defect images".

Details and tips

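- Defects on industrial parts are often subtle and confined to a small patch, so the chosen network should fuse shallow and deep features; that way the main features are not lost. Suitable networks include ResNet, DenseNet, and InceptionResNetV2. In my own tests DenseNet worked somewhat better, at a depth of about 22 layers.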

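- You can train several models on different data (preferably not identical subsets), for example three models based on ResNet, DenseNet, and InceptionResNetV2. After training, remove the fully connected layers of the three models and use only their convolutional layers as feature extractors. Then fuse the extracted features, either by concatenating them along the channel axis (which requires the feature maps to have the same width and height) or by adding them element-wise at corresponding positions (which requires identical shapes, channels included). To combine them, build three new networks, one per trained model, copy the trained weights into them, and add fully connected layers on top, so that there is now a single fully connected head. During training the new networks serve only as feature extractors: their convolutional weights are frozen and only the fully connected layers are updated.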

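- Another way to do the feature fusion from the previous tip: use the three trained models as feature extractors and build a multilayer perceptron (MLP) on top. Strip the fully connected layers from the trained models, keep only the convolutional layers for feature extraction, concatenate the resulting features, and update only the fully connected layers during training.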

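- If the scene image is very large and the defect is highly localized, the defect features may all but disappear after the convolution layers. In that case, consider cutting the scene image into tiles, for example four, split horizontally or vertically. You then have to build and label the tile dataset yourself; this takes some time, but the results are quite good. At prediction time, a scene image is classified as defective if any one of its four tiles is classified as defective. This partly mitigates the problem of overly localized features.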

- Focal Loss: use it to address class imbalance.
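The two fusion options in the second tip have different shape requirements. A plain-Python sketch of the rule, assuming channels-last (height, width, channels) feature maps as in the Keras code above; `fused_shape` and the example shapes are hypothetical:

```python
def fused_shape(shapes, mode):
    """Output shape of fusing channels-last feature maps.

    mode='concat': heights and widths must match; channel counts add up.
    mode='add':    shapes must be identical (element-wise sum).
    """
    h, w, _ = shapes[0]
    if mode == 'concat':
        if any((s[0], s[1]) != (h, w) for s in shapes):
            raise ValueError('concat needs equal height and width')
        return (h, w, sum(s[2] for s in shapes))
    if mode == 'add':
        if any(tuple(s) != tuple(shapes[0]) for s in shapes):
            raise ValueError('add needs identical shapes')
        return tuple(shapes[0])
    raise ValueError('mode must be "concat" or "add"')


print(fused_shape([(7, 7, 512), (7, 7, 1024)], 'concat'))
print(fused_shape([(7, 7, 256), (7, 7, 256)], 'add'))
```

In Keras these two cases correspond to the `concatenate` and `add` merge functions. When the branches' maps differ in height and width, as with shallow and deep features in the first tip, a `GlobalAveragePooling2D` per branch turns each map into a vector so they can still be concatenated.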
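The third tip (frozen feature extractors whose outputs are concatenated and fed to a trainable classifier) can be illustrated without a deep-learning framework. In this toy sketch, `extractor_a` and `extractor_b` are hypothetical fixed functions standing in for the convolutional parts of trained models, and only a softmax-regression head (a one-layer stand-in for the MLP) is trained with SGD on the cross-entropy loss:

```python
import math


# Hypothetical frozen extractors: fixed functions whose parameters are never updated
def extractor_a(x):
    return [x[0], x[0] * x[0]]


def extractor_b(x):
    return [x[1]]


def fused_features(x):
    # Concatenate the outputs of all frozen extractors
    return extractor_a(x) + extractor_b(x)


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def scores(W, b, f):
    return [sum(wi * fi for wi, fi in zip(W[c], f)) + b[c] for c in range(len(b))]


def train_head(samples, labels, n_classes, lr=0.5, epochs=200):
    # Only the head (W, b) is learned; the extractors stay fixed
    feats = [fused_features(x) for x in samples]
    dim = len(feats[0])
    W = [[0.0] * dim for _ in range(n_classes)]
    b = [0.0] * n_classes
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            p = softmax(scores(W, b, f))
            for c in range(n_classes):
                g = p[c] - (1.0 if c == y else 0.0)  # d(cross-entropy)/d(logit_c)
                for d in range(dim):
                    W[c][d] -= lr * g * f[d]
                b[c] -= lr * g
    return W, b


def predict(W, b, x):
    s = scores(W, b, fused_features(x))
    return s.index(max(s))


# Toy two-class data
samples = [(0.0, 0.1), (0.1, 0.0), (1.0, 0.9), (0.9, 1.0)]
labels = [0, 0, 1, 1]
W, b = train_head(samples, labels, n_classes=2)
print([predict(W, b, x) for x in samples])
```

In Keras the equivalent step is setting `layer.trainable = False` on the convolutional layers before calling `compile()`, so that `fit()` updates only the Dense layers.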
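The split-and-vote scheme in the fourth tip fits in a few lines. This sketch assumes images stored as nested lists of rows (height × width) and splits them into a 2×2 grid; strips work the same way via `rows` and `cols`. It flags the whole image as defective if any tile is, and `has_defect` is a hypothetical stand-in for the trained tile classifier:

```python
def split_into_tiles(image, rows=2, cols=2):
    # Split a 2-D image (list of rows) into rows x cols tiles, in row-major order
    h, w = len(image), len(image[0])
    th, tw = h // rows, w // cols
    tiles = []
    for r in range(rows):
        for c in range(cols):
            # The last row/column of tiles absorbs any leftover pixels
            r_end = h if r == rows - 1 else (r + 1) * th
            c_end = w if c == cols - 1 else (c + 1) * tw
            tiles.append([row[c * tw:c_end] for row in image[r * th:r_end]])
    return tiles


def image_is_defective(image, tile_is_defective, rows=2, cols=2):
    # The whole image counts as defective if at least one tile is defective
    return any(tile_is_defective(t) for t in split_into_tiles(image, rows, cols))


def has_defect(tile):
    # Hypothetical classifier: a tile is defective if it contains a 9
    return any(9 in row for row in tile)


# Toy 4x4 "image": 0 = background, 9 = a small defect in the bottom-right corner
img = [[0, 0, 0, 0],
       [0, 0, 0, 0],
       [0, 0, 0, 0],
       [0, 0, 0, 9]]
print(len(split_into_tiles(img)), image_is_defective(img, has_defect))
```

Because the decision is an OR over tiles, a defect that occupies a tiny fraction of the full image occupies four times that fraction of its tile, which is what keeps it from vanishing after convolution and pooling.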
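For the last tip: the multi-class focal loss for one sample is FL = −Σ_c α_c · y_c · (1 − p_c)^γ · log(p_c). With a one-hot label only the true class t remains, giving −α_t (1 − p_t)^γ log(p_t). The factor (1 − p_t)^γ shrinks the loss of well-classified examples, so training concentrates on hard or rare ones. A pure-Python sketch; γ = 2 is a common default, and using α = 1 for every class unless per-class weights are given is an assumption here:

```python
import math


def focal_loss(probs, true_class, gamma=2.0, alpha=None):
    """Multi-class focal loss for one sample.

    probs: predicted class probabilities (e.g. softmax output)
    true_class: index of the correct class
    alpha: optional per-class weights; None means 1.0 for every class
    """
    eps = 1e-7
    p_t = min(max(probs[true_class], eps), 1.0 - eps)  # clip to avoid log(0)
    a_t = 1.0 if alpha is None else alpha[true_class]
    return -a_t * (1.0 - p_t) ** gamma * math.log(p_t)


def cross_entropy(probs, true_class):
    return -math.log(probs[true_class])


easy = [0.9, 0.05, 0.03, 0.02]  # confident and correct
hard = [0.3, 0.4, 0.2, 0.1]     # correct class 0 gets only 0.3
for name, p in [('easy', easy), ('hard', hard)]:
    print(name, round(cross_entropy(p, 0), 4), round(focal_loss(p, 0), 4))
```

With γ = 2 the easy example's loss shrinks by a factor of 100 ((1 − 0.9)² = 0.01), while the hard one's shrinks only to 0.49 of its cross-entropy. With γ = 0 and no α the function reduces to ordinary cross-entropy.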