Classifying CIFAR-10 with ResNet18 (34, 101, 152) and VGG16 in PyTorch

1. Introduction to PyTorch

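PyTorch is an open-source deep learning framework from Facebook. Its design emphasizes dynamic computation graphs, in contrast to the static graph concept of Google's TensorFlow; each has its strengths. TensorFlow has put more effort into distributed systems, which suits enterprise distributed-training setups. PyTorch came later and has caught up. Its API is still being improved, and it makes full use of class construction and inheritance, so programmers and researchers can easily build training models and dynamic graphs. By default, TensorFlow's GPU training reserves the remaining memory on the GPU up front even when it is not being used, which wastes resources, whereas PyTorch occupies only the memory it actually uses. That is friendlier to deep learning enthusiasts and other learners who share hardware, and it may be one reason PyTorch is popular in university and company labs.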

2. Data and preprocessing

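CIFAR-10 consists of 10 classes of color images, drawn from the 80 Million Tiny Images dataset and downsized to 32*32 pixels. It is commonly used to test how efficient an algorithm is. Here I train AlexNet, VGG, ResNet18, ResNet34, ResNet101, and ResNet152 on it, so the data needs some preprocessing first: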

from torchvision import transforms

transform = transforms.Compose([
    transforms.Resize((240, 240)),      # 1. resize the image
    transforms.RandomCrop((224, 224)),  # 2. random crop, used as data augmentation
    transforms.ToTensor(),              # 3. convert the PIL image / numpy array to a PyTorch tensor
    transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),  # 4. normalize each channel
])

For data loading, I wrapped the logic in a class of my own:

from torch.utils.data import DataLoader, Subset
from torchvision import datasets, transforms


class GetTestData():
    def __init__(self, loadfile, batch_size, num_work, trainform, mode='carf10'):
        self.load_files = loadfile
        self.batch_size = batch_size
        self.num_work = num_work    # number of worker processes used to read the dataset
        self.mode = mode            # tag that selects the dataset
        self.transform = trainform

    def _datasets(self, transform):
        # Pick the dataset class from the mode tag; anything else falls back to FashionMNIST.
        if self.mode == 'carf10':
            dataset_cls = datasets.CIFAR10
        elif self.mode == 'minist':
            dataset_cls = datasets.MNIST
        else:
            dataset_cls = datasets.FashionMNIST
        train_data = dataset_cls(self.load_files, train=True, transform=transform)
        test_data = dataset_cls(self.load_files, train=False, transform=transform)
        return train_data, test_data

    def _loaders(self, train_data, test_data, index, train_index, valid_index):
        def loader(data, shuffle):
            return DataLoader(data, batch_size=self.batch_size, shuffle=shuffle,
                              num_workers=self.num_work)

        test_loader = loader(test_data, False)
        if index == 1:
            # The original code read train_index / valid_index from outside the class;
            # here the caller passes them in as lists of sample indices.
            if train_index is None or valid_index is None:
                raise ValueError('index=1 needs train_index and valid_index')
            train_dataset = Subset(train_data, train_index)
            valid_dataset = Subset(train_data, valid_index)
            return loader(train_dataset, True), loader(valid_dataset, False), test_loader
        return loader(train_data, True), test_loader

    def GetRawData(self, index=1, train_index=None, valid_index=None):
        # Raw images: only converted to tensors, no resize or normalization.
        train_data, test_data = self._datasets(transforms.ToTensor())
        return self._loaders(train_data, test_data, index, train_index, valid_index)

    def GetResize(self, index=1, train_index=None, valid_index=None):
        # Images passed through the full transform pipeline given to the constructor.
        train_data, test_data = self._datasets(self.transform)
        return self._loaders(train_data, test_data, index, train_index, valid_index)
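The class uses `train_index` and `valid_index`, but the post does not show how they are built. One possible way, as a sketch only: shuffle the indices of the 50,000 CIFAR-10 training images and hold some out for validation. The helper name `split_indices`, the hold-out size of 5,000, and the fixed seed are all assumptions made for this illustration.

```python
import random


def split_indices(num_samples, valid_size=5000, seed=0):
    """Shuffle 0..num_samples-1 and split it into (train_index, valid_index)."""
    indices = list(range(num_samples))
    random.Random(seed).shuffle(indices)  # local RNG, so the split is reproducible
    valid_index = indices[:valid_size]    # assumed hold-out size, not from the post
    train_index = indices[valid_size:]
    return train_index, valid_index


# CIFAR-10 has 50,000 training images
train_index, valid_index = split_indices(50000)
print(len(train_index), len(valid_index))
```

The two lists can then be handed to `Subset` so that the training and validation loaders never see the same image.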

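While preprocessing the data I ran into a common error:

TypeError: tensor is not a torch image.

I had placed ToTensor after Normalize, which raises this error. Moving ToTensor in front of Normalize fixes it: the image has to be converted to a tensor first, and only then can the per-channel RGB mean be subtracted.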
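To make the ordering concrete: `ToTensor` scales 8-bit pixel values to the range [0, 1], and `Normalize` then computes (x - mean) / std for each channel of that tensor. The plain-Python sketch below reproduces the arithmetic for a single RGB pixel, using the mean and standard deviation from the transform in this section. The helper name `normalize_pixel` is made up for the illustration and is not part of torchvision.

```python
MEAN = [0.485, 0.456, 0.406]  # per-channel mean used in the transform
STD = [0.229, 0.224, 0.225]   # per-channel standard deviation


def normalize_pixel(rgb_uint8):
    """Mimic ToTensor followed by Normalize for one (R, G, B) pixel."""
    scaled = [v / 255.0 for v in rgb_uint8]  # what ToTensor does to 8-bit values
    return [(x - m) / s for x, m, s in zip(scaled, MEAN, STD)]  # what Normalize does


print(normalize_pixel((0, 0, 0)))        # a black pixel maps to negative values
print(normalize_pixel((255, 255, 255)))  # a white pixel maps to values above 2
```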

3. Network implementation

The structural differences between ResNet18, 34, 50, 101, and 152 are easy to see, so I designed two parts that can be called to build them:
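The standard ResNet variants differ only in the block type and in how many blocks each of the four stages contains. As a sketch (the names `RESNET_CONFIGS` and `depth` are made up here), the nominal depth is the number of convolutions inside the residual blocks plus the stem convolution and the final fully connected layer:

```python
# Block type and per-stage block counts of the standard ResNet variants
RESNET_CONFIGS = {
    "resnet18": ("basic", [2, 2, 2, 2]),
    "resnet34": ("basic", [3, 4, 6, 3]),
    "resnet50": ("bottleneck", [3, 4, 6, 3]),
    "resnet101": ("bottleneck", [3, 4, 23, 3]),
    "resnet152": ("bottleneck", [3, 8, 36, 3]),
}

# A basic block has two 3x3 convolutions; a bottleneck has 1x1, 3x3, 1x1
CONVS_PER_BLOCK = {"basic": 2, "bottleneck": 3}


def depth(name):
    """Count weighted layers: block convolutions + stem conv + final fc."""
    block, layers = RESNET_CONFIGS[name]
    return sum(layers) * CONVS_PER_BLOCK[block] + 2


for name in RESNET_CONFIGS:
    print(name, depth(name))
```

With the classes in this section, ResNet18 would be built as `ResNet(BasicBlock, [2, 2, 2, 2], num_classes=10)` and ResNet101 as `ResNet101(Bottleneck, [3, 4, 23, 3], num_classes=10, grayscale=False)`.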

import torch.nn as nn
import torch.nn.functional as F


def conv3x3(inplane, outplane, stride=1):
    # 3x3 convolution with padding 1, so only the stride changes the spatial size
    return nn.Conv2d(inplane, outplane, kernel_size=3, stride=stride, padding=1)


##### ResNet18 / ResNet34
# layers = [2, 2, 2, 2] gives ResNet18
class BasicBlock(nn.Module):
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out)
        # Project the shortcut when the shape changes
        if self.downsample is not None:
            residual = self.downsample(x)
        out += residual
        out = self.relu(out)
        return out


class ResNet(nn.Module):
    def __init__(self, block, layers, num_classes, grayscale=False):
        super(ResNet, self).__init__()
        self.inplanes = 64
        in_dim = 1 if grayscale else 3
        self.conv1 = nn.Conv2d(in_dim, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        self.avgpool = nn.AvgPool2d(7, stride=1)  # expects a 7x7 map, i.e. 224x224 input
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        # He-style initialization for convolutions, identity for batch norm
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, (2. / n) ** .5)
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )
        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))
        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        logits = self.fc(x)
        probas = F.softmax(logits, dim=1)
        return logits, probas
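The fixed `nn.AvgPool2d(7)` at the end of the network only works because a 224*224 input has shrunk to 7*7 by the last stage, which is why the 32*32 CIFAR-10 images are resized first. A small sketch of that arithmetic, using the usual output-size formula floor((n + 2p - k) / s) + 1 for convolution and pooling layers (the helper names `out_size` and `resnet_final_size` are made up for this illustration):

```python
def out_size(n, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    return (n + 2 * padding - kernel) // stride + 1


def resnet_final_size(n):
    """Trace the feature-map side length through the ResNet above."""
    n = out_size(n, kernel=7, stride=2, padding=3)      # conv1
    n = out_size(n, kernel=3, stride=2, padding=1)      # maxpool
    # layer1 uses stride 1 with 3x3 / padding 1 convolutions, so the size is unchanged
    for _ in range(3):                                  # layer2, layer3, layer4
        n = out_size(n, kernel=3, stride=2, padding=1)  # first block has stride 2
    return n


print(resnet_final_size(224))  # side length seen by the average pool
print(resnet_final_size(32))   # raw CIFAR-10 size, too small for a 7x7 pool
```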

## ZhiwenXiao

import torch.nn as nn
import torch.nn.functional as F


class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        # 1x1 reduce -> 3x3 (carries the stride) -> 1x1 expand
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x
        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)
        out = self.conv3(out)
        out = self.bn3(out)
        # Project the shortcut when the shape changes
        if self.downsample is not None:
            residual = self.downsample(x)
        out += residual
        out = self.relu(out)
        return out


class ResNet101(nn.Module):
    def __init__(self, block, layers, num_classes, grayscale):
        super(ResNet101, self).__init__()
        self.inplanes = 64
        in_dim = 1 if grayscale else 3
        self.conv1 = nn.Conv2d(in_dim, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        self.avgpool = nn.AvgPool2d(7, stride=1, padding=0)
        self.fc = nn.Linear(512 * block.expansion, num_classes)  # 2048 inputs for Bottleneck

        # He-style initialization for convolutions, identity for batch norm
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, (2. / n) ** .5)
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )
        layers = []
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))
        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        logits = self.fc(x)
        probas = F.softmax(logits, dim=1)
        return logits, probas

The VGG16 network is:

class VGG16(nn.Module):

def __init__(self,num_classes,grayscale=False):

dim = None

if grayscale==True:

dim = 1

else :

dim = 3

super(VGG16,self).__init__()

self.vgg_bone = nn.Sequential(

nn.Conv2d(dim,64,kernel_size=3,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(64,64,kernel_size=3,padding=1),

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=2,stride=2,padding=0),

nn.Conv2d(64,128,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(128,128,kernel_size=3, padding=1 ),

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=2,stride=2,padding=0),

nn.Conv2d(128,256,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(256,256,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(256,256,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=2,stride=2,padding=0),

nn.Conv2d(256,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(512,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(512,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=2,stride=2,padding=0),

nn.Conv2d(512,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(512,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.Conv2d(512,512,kernel_size=3,stride=1,padding=1),

nn.ReLU(inplace=True),

nn.MaxPool2d(kernel_size=2,stride=2,padding=0),

)

self.vgg_logit = nn.Sequential(

nn.Linear(7*7*512,4096),

nn.ReLU(inplace=True),

nn.Dropout(p=0.5),

nn.Linear(4096,4096),

nn.ReLU(inplace=True),

nn.Dropout(p=0.5),

nn.Linear(4096,num_classes),

)

for m in self.modules():

if isinstance(m ,nn.Conv2d ):

m.weight.detach().normal_(0,0.05)

if m.bias is not None :

m.bias.data.detach().zero_()

elif isinstance(m,nn.Linear):

m.weight.detach().normal_(0,0.05)

m.bias.detach().detach().zero_()

def forward(self,x):

x =self.vgg_bone(x)

x =x.view(x.size(0),-1)

logit = self.vgg_logit(x)

prob = F.softmax(logit)

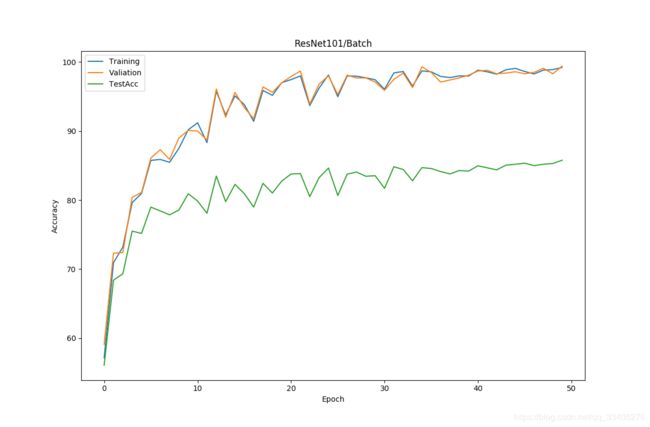

return logit,prob4、训练与对比

These models have a large number of parameters to train, so I did not set the number of epochs very high.
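To give a sense of scale, here is a rough count of the trainable parameters in the VGG16 from the previous section, assuming 10 output classes and RGB input. It is a plain-Python sketch that mirrors the layer list rather than inspecting a real model, and the names `VGG16_CONV_CHANNELS` and `vgg16_param_count` are made up for the illustration.

```python
# Output channels of the 13 convolutions in VGG16, in order
VGG16_CONV_CHANNELS = [64, 64, 128, 128, 256, 256, 256,
                       512, 512, 512, 512, 512, 512]


def vgg16_param_count(num_classes=10, in_channels=3):
    """Count weights and biases of the conv and fully connected layers."""
    total = 0
    prev = in_channels
    for out in VGG16_CONV_CHANNELS:
        total += prev * out * 3 * 3 + out  # 3x3 kernel weights + biases
        prev = out
    fc_sizes = [7 * 7 * 512, 4096, 4096, num_classes]
    for fan_in, fan_out in zip(fc_sizes, fc_sizes[1:]):
        total += fan_in * fan_out + fan_out  # weight matrix + biases
    return total


print(f"{vgg16_param_count():,} parameters")
```

Most of the total sits in the first fully connected layer (7*7*512 inputs by 4096 outputs), which is one reason each epoch is expensive.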

The plot title was wrong at the time; this is ResNet18.

This is ResNet50.

ResNet101 with a batch size of 128, trained for only 10 epochs.