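Kubernetes cluster deployment:

① Install all Kubernetes components directly on every node (the master and the worker nodes), running them as daemons on the host and starting them at boot

② Deploy with kubeadm, the cluster deployment tool provided by the Kubernetes project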

# cat /etc/hosts

10.40.6.165 master01

10.40.6.166 node01

10.40.6.167 node02
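
The same three entries are needed on every machine. A minimal sketch for adding them idempotently follows; `add_host_entry` and `HOSTS_FILE` are hypothetical names introduced here, and the file defaults to a scratch path so the sketch can be tried without root.

```shell
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is not already listed.
# HOSTS_FILE defaults to a scratch file; set HOSTS_FILE=/etc/hosts (as root) to apply it for real.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host_entry() {
    # $1 = IP address, $2 = hostname
    touch "$HOSTS_FILE"
    # Look for the hostname as a whole field on any non-comment line.
    if ! awk -v name="$2" '
        $0 !~ /^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit !found }' "$HOSTS_FILE"; then
        printf '%s %s\n' "$1" "$2" >> "$HOSTS_FILE"
    fi
}

add_host_entry 10.40.6.165 master01
add_host_entry 10.40.6.166 node01
add_host_entry 10.40.6.167 node02
cat "$HOSTS_FILE"
```

Running the helper a second time with the same hostname leaves the file unchanged, which makes it safe to repeat on a node that was already prepared.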

kubeadm

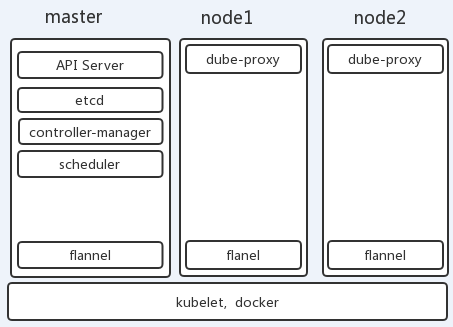

① master, nodes: install kubelet, kubeadm, docker

② master: kubeadm init

③ nodes: kubeadm join

Create the yum repositories on every node:

# cd /etc/yum.repos.d/

# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# cat kubernetes.repo

[kubernetes]

name=Kubernetes Repo

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

enabled=1
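
The repo file can be created non-interactively with a here-document, which is convenient when the same step has to be repeated on each node. This is a sketch only: `REPO_DIR` is a hypothetical variable that defaults to a scratch directory, and the file content is copied from the listing above.

```shell
# Sketch: write the repo definition shown above with a here-document.
# REPO_DIR is a hypothetical variable; set REPO_DIR=/etc/yum.repos.d (as root) to create the real file.
REPO_DIR="${REPO_DIR:-$(mktemp -d)}"

# The quoted 'EOF' keeps the shell from expanding anything inside the block.
cat > "$REPO_DIR/kubernetes.repo" <<'EOF'
[kubernetes]
name=Kubernetes Repo
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
enabled=1
EOF

echo "wrote $REPO_DIR/kubernetes.repo"
```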

# yum repolist

...

docker-ce-stable/x86_64 Docker CE Stable - x86_64 43

kubernetes Kubernetes Repo 351

....

# cd && wget https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

# rpm --import rpm-package-key.gpg

# yum install docker-ce kubelet kubeadm kubectl

# systemctl daemon-reload

# systemctl start docker

Set the contents of the following two files to 1:

# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

# echo 1 > /proc/sys/net/bridge/bridge-nf-call-ip6tables
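
Values written under /proc last only until the next reboot. One way to make them persistent is a sysctl configuration file, sketched below. `SYSCTL_CONF` is a hypothetical variable that defaults to a scratch file, and the file name `/etc/sysctl.d/k8s.conf` mentioned in the comments is an assumption, not something these notes used.

```shell
# Sketch: persist the two bridge settings so they survive a reboot.
# SYSCTL_CONF is a hypothetical variable; a real host would use a file
# under /etc/sysctl.d/, for example /etc/sysctl.d/k8s.conf (assumed name).
SYSCTL_CONF="${SYSCTL_CONF:-$(mktemp)}"

cat > "$SYSCTL_CONF" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF

# On a real host, as root, after writing the file under /etc/sysctl.d/:
#   modprobe br_netfilter   # the /proc/sys/net/bridge entries exist only while this module is loaded
#   sysctl --system         # re-read all sysctl configuration files
cat "$SYSCTL_CONF"
```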

# rpm -ql kubelet

/etc/kubernetes/manifests ## manifest directory

/etc/sysconfig/kubelet ## configuration file

/usr/bin/kubelet ## main binary

/usr/lib/systemd/system/kubelet.service ## systemd unit

# systemctl start kubelet

# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; disabled; vendor preset: disabled)

Drop-In: /usr/lib/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: activating (auto-restart) (Result: exit-code) since Thu 2019-05-23 20:35:19 CST; 9s ago

Docs: https://kubernetes.io/docs/

Process: 8085 ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS (code=exited, status=255)

Main PID: 8085 (code=exited, status=255)

May 23 20:35:19 master01 systemd[1]: Unit kubelet.service entered failed state.

May 23 20:35:19 master01 systemd[1]: kubelet.service failed.

# tail /var/log/messages

May 23 20:37:11 master01 kubelet: F0523 20:37:11.716012 8239 server.go:193] failed to load Kubelet config file /var/lib/kubelet/config.yaml, error failed to read kubelet config file "/var/lib/kubelet/config.yaml", error: open /var/lib/kubelet/config.yaml: no such file or directory

May 23 20:37:11 master01 systemd: kubelet.service: main process exited, code=exited, status=255/n/a

May 23 20:37:11 master01 systemd: Unit kubelet.service entered failed state.

May 23 20:37:11 master01 systemd: kubelet.service failed.

# systemctl stop kubelet ## Stop it: the node has not been initialized yet, so enabling it at boot is enough

# systemctl enable kubelet

kubeadm init --help

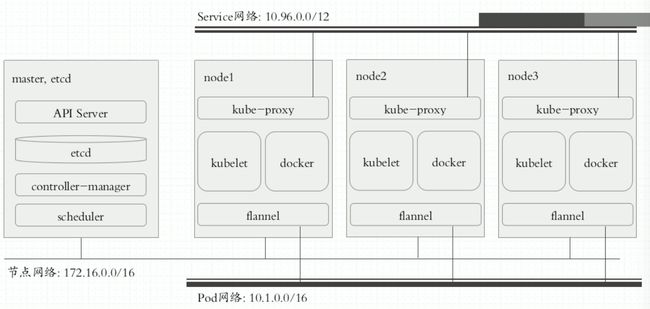

# kubeadm init --kubernetes-version=v1.14.2 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12

[init] Using Kubernetes version: v1.14.2

[preflight] Running pre-flight checks

[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR Swap]: running with swap on is not supported. Please disable swap
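
These notes work around the swap check by passing `--ignore-preflight-errors=Swap`. The alternative the message asks for is to turn swap off. The sketch below shows one way to prepare that change, assuming swap is configured through an fstab entry; `comment_swap_entries` is a hypothetical helper and it only prints the edited file, it modifies nothing in place.

```shell
# Sketch: print an fstab with every active swap entry commented out.
# $1 is the file to read; the result goes to stdout.
comment_swap_entries() {
    # A swap entry has "swap" as its third whitespace-separated field (the filesystem type).
    awk '$0 !~ /^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$1"
}

# Demonstration on a scratch file; on a real host the input would be /etc/fstab.
sample=$(mktemp)
printf '%s\n' \
    '/dev/mapper/centos-root /    xfs  defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$sample"
comment_swap_entries "$sample"

# On a real host, as root, swap is switched off for the running system with:
#   swapoff -a
```

Reviewing the printed result before replacing /etc/fstab keeps a typo from leaving the machine unbootable.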

# systemctl enable docker.service

## Add "exec-opts": ["native.cgroupdriver=systemd"] to daemon.json

# cat /etc/docker/daemon.json

{

"registry-mirrors": ["http://f1361db2.m.daocloud.io"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

## Restart docker

# systemctl restart docker
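
After the restart it is worth confirming that Docker actually picked up the new driver. A small sketch follows; `check_cgroup_driver` is a hypothetical helper built on `docker info --format '{{.CgroupDriver}}'`, which prints only the driver name.

```shell
# Sketch: confirm that Docker reports the systemd cgroup driver.
check_cgroup_driver() {
    # Ask the daemon for the driver name only; fail clearly if the daemon is unreachable.
    driver=$(docker info --format '{{.CgroupDriver}}' 2>/dev/null) || {
        echo "docker is not reachable" >&2
        return 2
    }
    if [ "$driver" = "systemd" ]; then
        echo "cgroup driver: systemd"
    else
        echo "cgroup driver is '$driver', expected 'systemd'" >&2
        return 1
    fi
}
```

Calling `check_cgroup_driver` on the host returns 0 only when the driver is `systemd`, so it can gate the `kubeadm init` step in a script.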

# kubeadm init --kubernetes-version=v1.14.2 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

[init] Using Kubernetes version: v1.14.2

[preflight] Running pre-flight checks

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-apiserver:v1.14.2: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-controller-manager:v1.14.2: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-scheduler:v1.14.2: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-proxy:v1.14.2: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/pause:3.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/etcd:3.3.10: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/coredns:1.3.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

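### The images cannot be pulled because the Google image registry (k8s.gcr.io) is unreachable; pull them manually from a mirror and re-tag them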

# docker pull mirrorgooglecontainers/kube-apiserver:v1.14.2

# docker pull mirrorgooglecontainers/kube-controller-manager:v1.14.2

# docker pull mirrorgooglecontainers/kube-scheduler:v1.14.2

# docker pull mirrorgooglecontainers/kube-proxy:v1.14.2

# docker pull mirrorgooglecontainers/pause:3.1

# docker pull mirrorgooglecontainers/etcd:3.3.10

# docker pull coredns/coredns:1.3.1

# docker tag mirrorgooglecontainers/kube-proxy:v1.14.2 k8s.gcr.io/kube-proxy:v1.14.2

# docker tag mirrorgooglecontainers/kube-apiserver:v1.14.2 k8s.gcr.io/kube-apiserver:v1.14.2

# docker tag mirrorgooglecontainers/kube-controller-manager:v1.14.2 k8s.gcr.io/kube-controller-manager:v1.14.2

# docker tag mirrorgooglecontainers/kube-scheduler:v1.14.2 k8s.gcr.io/kube-scheduler:v1.14.2

# docker tag coredns/coredns:1.3.1 k8s.gcr.io/coredns:1.3.1

# docker tag mirrorgooglecontainers/etcd:3.3.10 k8s.gcr.io/etcd:3.3.10

# docker tag mirrorgooglecontainers/pause:3.1 k8s.gcr.io/pause:3.1
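
The pull and tag pairs above can be generated from a single list, which avoids typing mistakes when the steps are repeated on the worker nodes. This is a sketch: the image names and versions are the ones used in these notes, while `mirror_images` and the `RUN` switch are hypothetical names. By default it only prints the commands.

```shell
# Sketch: produce the pull/tag pairs from one list.
# RUN is a hypothetical switch: left unset it is "echo" (dry run, commands are printed);
# export RUN="" to execute the docker commands for real.
K8S_VERSION="v1.14.2"
RUN="${RUN-echo}"

mirror_images() {
    for image in \
        "kube-apiserver:$K8S_VERSION" \
        "kube-controller-manager:$K8S_VERSION" \
        "kube-scheduler:$K8S_VERSION" \
        "kube-proxy:$K8S_VERSION" \
        "pause:3.1" \
        "etcd:3.3.10"
    do
        $RUN docker pull "mirrorgooglecontainers/$image"
        $RUN docker tag "mirrorgooglecontainers/$image" "k8s.gcr.io/$image"
    done
    # CoreDNS is pulled from its own repository rather than from mirrorgooglecontainers.
    $RUN docker pull "coredns/coredns:1.3.1"
    $RUN docker tag "coredns/coredns:1.3.1" "k8s.gcr.io/coredns:1.3.1"
}

mirror_images
```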

# kubeadm init --kubernetes-version=v1.14.2 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

[init] Using Kubernetes version: v1.14.2

[preflight] Running pre-flight checks

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master01 localhost] and IPs [10.40.6.165 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master01 localhost] and IPs [10.40.6.165 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master01 kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 10.40.6.165]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 16.003464 seconds

[upload-config] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.14" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --experimental-upload-certs

[mark-control-plane] Marking the node master01 as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master01 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: ym1869.1nmmpxgvo0dlcyps

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.40.6.165:6443 --token ym1869.1nmmpxgvo0dlcyps \

--discovery-token-ca-cert-hash sha256:4574b06205aef226254b461235fa3a953eed2a6ed1aded4dff0db3d662b70273

# useradd k8s

## Add the following line to /etc/sudoers

k8s ALL=(ALL) NOPASSWD: ALL

# sudo su - k8s

$ mkdir -p $HOME/.kube

$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

$ sudo chown $(id -u):$(id -g) $HOME/.kube/config

# kubectl get componentstatus

The connection to the server localhost:8080 was refused - did you specify the right host or port?

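kube-apiserver does not listen on the default HTTP port 8080. Without a kubeconfig, kubectl falls back to localhost:8080, so commands such as kubectl get nodes fail with the error above.

Checking the ports kube-apiserver listens on shows that it only listens on HTTPS port 6443.

To let kubectl reach the apiserver, append the following environment variable to ~/.bash_profile: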

export KUBECONFIG=/etc/kubernetes/admin.conf

# source ~/.bash_profile

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master01 NotReady master 13h v1.14.2

# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health":"true"}

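## Deploy the flannel network plugin (note: when the command returns, the image has not necessarily been pulled and the pods are not started immediately)

### Repository: https://github.com/coreos/flannel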

# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master01 Ready master 13h v1.14.2
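
Because the node does not become Ready the moment the manifest is applied, a script has to wait for it. A minimal polling sketch is below; `wait_nodes_ready` is a hypothetical helper that relies only on `kubectl get nodes --no-headers`, and the default attempt count and delay are arbitrary assumptions.

```shell
# Sketch: poll until every node reports Ready, or give up after a number of attempts.
wait_nodes_ready() {
    # $1 = maximum attempts (default 30), $2 = seconds between attempts (default 10)
    attempts="${1:-30}"
    delay="${2:-10}"
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # Only evaluate the list when kubectl succeeded and returned at least one node.
        if nodes=$(kubectl get nodes --no-headers 2>/dev/null) && [ -n "$nodes" ]; then
            # Column 2 is STATUS; anything other than exactly "Ready"
            # (NotReady, or Ready,SchedulingDisabled) counts as not ready.
            not_ready=$(printf '%s\n' "$nodes" | awk '$2 != "Ready" { n++ } END { print n + 0 }')
            if [ "$not_ready" -eq 0 ]; then
                echo "all nodes Ready"
                return 0
            fi
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    echo "timed out waiting for nodes" >&2
    return 1
}
```

For example, `wait_nodes_ready 60 5` checks every 5 seconds for up to 5 minutes.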

# kubectl get pods -n kube-system ### specify a namespace

NAME READY STATUS RESTARTS AGE

coredns-fb8b8dccf-5gkgk 1/1 Running 2 13h

coredns-fb8b8dccf-7mwjs 1/1 Running 1 13h

etcd-master01 1/1 Running 2 13h

kube-apiserver-master01 1/1 Running 2 13h

kube-controller-manager-master01 1/1 Running 2 13h

kube-flannel-ds-amd64-h64s9 1/1 Running 1 16m

kube-proxy-x8gnk 1/1 Running 2 13h

kube-scheduler-master01 1/1 Running 2 13h

# kubectl get ns ## namespaces

NAME STATUS AGE

default Active 13h

kube-node-lease Active 13h

kube-public Active 13h

kube-system Active 13h

# scp rpm-package-key.gpg node01:/root/

# scp rpm-package-key.gpg node02:/root/

## Installation on the worker nodes:

# rpm --import rpm-package-key.gpg

# yum install docker-ce kubelet kubeadm -y

# systemctl enable docker kubelet

# cat /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

# systemctl start docker

# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

# echo 1 > /proc/sys/net/bridge/bridge-nf-call-ip6tables

# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://dockerhub.azk8s.cn"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

# systemctl restart docker

# kubeadm join 10.40.6.165:6443 --token ym1869.1nmmpxgvo0dlcyps --discovery-token-ca-cert-hash sha256:4574b06205aef226254b461235fa3a953eed2a6ed1aded4dff0db3d662b70273 --ignore-preflight-errors=Swap
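
The join command is long and easy to mistype. The sketch below assembles it from its three parts and rejects malformed values before anything is sent to the API server. `build_join_command` is a hypothetical helper, not part of kubeadm; it only prints the command.

```shell
# Sketch: build the join command and validate the token and hash formats first.
build_join_command() {
    # $1 = API server endpoint (host:port), $2 = bootstrap token, $3 = CA certificate hash
    # Bootstrap tokens have the form [a-z0-9]{6}.[a-z0-9]{16}.
    if ! printf '%s\n' "$2" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
        echo "invalid bootstrap token format" >&2
        return 1
    fi
    # The discovery hash is "sha256:" followed by 64 hexadecimal characters.
    if ! printf '%s\n' "$3" | grep -Eq '^sha256:[0-9a-f]{64}$'; then
        echo "invalid discovery hash format" >&2
        return 1
    fi
    printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s\n' "$1" "$2" "$3"
}

build_join_command 10.40.6.165:6443 ym1869.1nmmpxgvo0dlcyps \
    sha256:4574b06205aef226254b461235fa3a953eed2a6ed1aded4dff0db3d662b70273
```

Bootstrap tokens expire after 24 hours by default. If the token from `kubeadm init` is no longer valid, running `kubeadm token create --print-join-command` on the master prints a fresh join command.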

# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master01 Ready master 16h v1.14.2

node01 Ready <none> 150m v1.14.2

node02 Ready <none> 6m39s v1.14.2

The master node is now initialized. In a Kubernetes cluster initialized with kubeadm, the core components on the master node (kube-apiserver, kube-scheduler, kube-controller-manager) run as static Pods.
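
Static Pods are defined by manifest files that the kubelet reads from the directory reported in the init output (`/etc/kubernetes/manifests`). A small sketch for listing them follows; `list_static_pods` is a hypothetical helper.

```shell
# Sketch: list the static Pod manifests in the kubelet's manifest directory.
list_static_pods() {
    # $1 = manifest directory (defaults to the path shown in the kubeadm init output)
    dir="${1:-/etc/kubernetes/manifests}"
    for manifest in "$dir"/*.yaml; do
        # If nothing matches, the glob stays literal; skip it.
        [ -e "$manifest" ] || continue
        basename "$manifest" .yaml
    done
}

list_static_pods
```

On the master this prints one name per manifest; on a machine without that directory it prints nothing.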