Experiment Screenshots

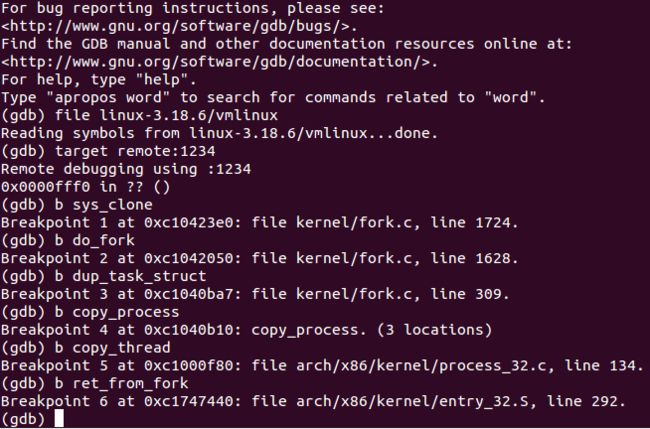

Set the breakpoints.

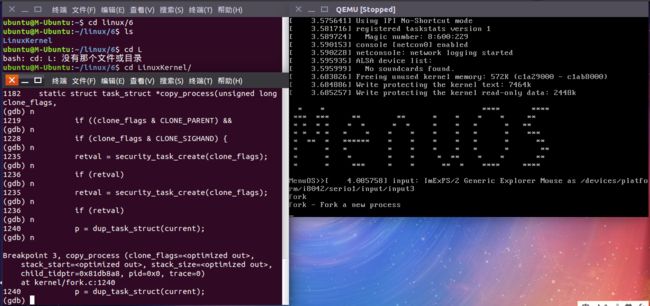

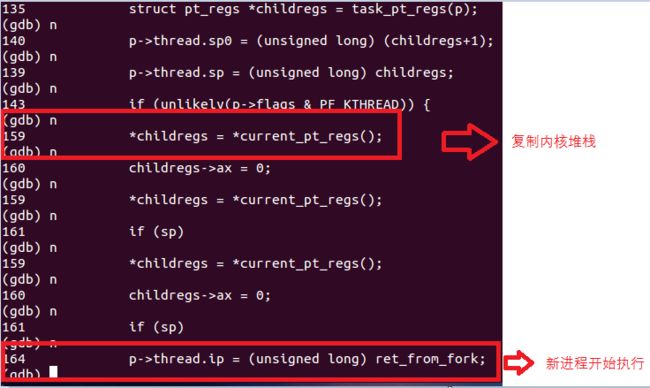

Trace into the copy_process function.

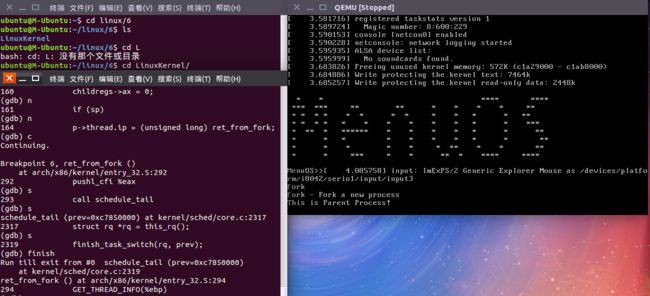

Trace into ret_from_fork().

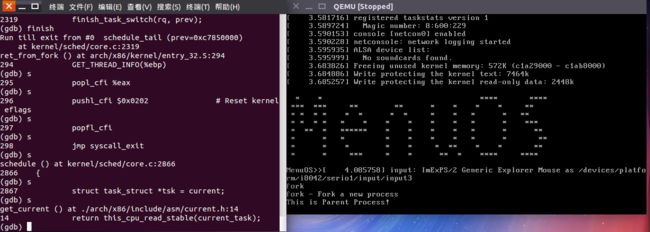

Step through the assembly code of ret_from_fork().

Analysis of the task_struct Data Structure

struct task_struct {

volatile long state; // process run state: -1 unrunnable, 0 runnable, >0 stopped

void *stack; // the process's kernel stack

atomic_t usage;

unsigned int flags; // per-process flags (PF_* bits)

unsigned int ptrace; // ptrace (process tracing) flags

#ifdef CONFIG_SMP // conditional compilation: fields used only on multiprocessor (SMP) builds

struct llist_node wake_entry;

int on_cpu;

struct task_struct *last_wakee;

unsigned long wakee_flips;

unsigned long wakee_flip_decay_ts;

int wake_cpu;

#endif

/* run queue and scheduling fields */

int on_rq;

int prio, static_prio, normal_prio;

unsigned int rt_priority;

const struct sched_class *sched_class;

struct sched_entity se;

struct sched_rt_entity rt;

#ifdef CONFIG_CGROUP_SCHED

struct task_group *sched_task_group;

#endif

struct sched_dl_entity dl;

#ifdef CONFIG_PREEMPT_NOTIFIERS

/* list of struct preempt_notifier: */

struct hlist_head preempt_notifiers;

#endif

#ifdef CONFIG_BLK_DEV_IO_TRACE

unsigned int btrace_seq;

#endif

unsigned int policy;

int nr_cpus_allowed;

cpumask_t cpus_allowed;

#ifdef CONFIG_PREEMPT_RCU

int rcu_read_lock_nesting;

union rcu_special rcu_read_unlock_special;

struct list_head rcu_node_entry;

#endif /* #ifdef CONFIG_PREEMPT_RCU */

#ifdef CONFIG_TREE_PREEMPT_RCU

struct rcu_node *rcu_blocked_node;

#endif /* #ifdef CONFIG_TREE_PREEMPT_RCU */

#ifdef CONFIG_TASKS_RCU

unsigned long rcu_tasks_nvcsw;

bool rcu_tasks_holdout;

struct list_head rcu_tasks_holdout_list;

int rcu_tasks_idle_cpu;

#endif /* #ifdef CONFIG_TASKS_RCU */

#if defined(CONFIG_SCHEDSTATS) || defined(CONFIG_TASK_DELAY_ACCT)

struct sched_info sched_info;

#endif

struct list_head tasks; // process list: links all processes into one circular doubly linked list

#ifdef CONFIG_SMP

struct plist_node pushable_tasks;

struct rb_node pushable_dl_tasks;

#endif

struct mm_struct *mm, *active_mm; // address-space descriptors for the process

#ifdef CONFIG_COMPAT_BRK

unsigned brk_randomized:1;

#endif

/* per-thread vma caching */

u32 vmacache_seqnum;

struct vm_area_struct *vmacache[VMACACHE_SIZE];

#if defined(SPLIT_RSS_COUNTING)

struct task_rss_stat rss_stat;

#endif

/* task state */

int exit_state;

int exit_code, exit_signal;

int pdeath_signal; /* The signal sent when the parent dies */

unsigned int jobctl; /* JOBCTL_*, siglock protected */

/* Used for emulating ABI behavior of previous Linux versions */

unsigned int personality;

unsigned in_execve:1; /* Tell the LSMs that the process is doing an

* execve */

unsigned in_iowait:1;

/* Revert to default priority/policy when forking */

unsigned sched_reset_on_fork:1;

unsigned sched_contributes_to_load:1;

unsigned long atomic_flags; /* Flags needing atomic access. */

pid_t pid; // process identifier

pid_t tgid; // thread group identifier

#ifdef CONFIG_CC_STACKPROTECTOR

/* Canary value for the -fstack-protector gcc feature */

unsigned long stack_canary;

#endif

/*

* pointers to (original) parent process, youngest child, younger sibling,

* older sibling, respectively. (p->father can be replaced with

* p->real_parent->pid)

*/

/* parent/child relationship fields */

struct task_struct __rcu *real_parent; /* real parent process */

struct task_struct __rcu *parent; /* recipient of SIGCHLD, wait4() reports */

/*

* children/sibling forms the list of my natural children

*/

struct list_head children; /* list of my children */

struct list_head sibling; /* linkage in my parent's children list */

struct task_struct *group_leader; /* threadgroup leader */

/*

* ptraced is the list of tasks this task is using ptrace on.

* This includes both natural children and PTRACE_ATTACH targets.

* p->ptrace_entry is p's link on the p->parent->ptraced list.

*/

struct list_head ptraced;

struct list_head ptrace_entry;

/* PID/PID hash table linkage. */

struct pid_link pids[PIDTYPE_MAX];

struct list_head thread_group;

struct list_head thread_node;

struct completion *vfork_done; /* for vfork() */

int __user *set_child_tid; /* CLONE_CHILD_SETTID */

int __user *clear_child_tid; /* CLONE_CHILD_CLEARTID */

/* time-related fields */

cputime_t utime, stime, utimescaled, stimescaled;

cputime_t gtime;

#ifndef CONFIG_VIRT_CPU_ACCOUNTING_NATIVE

struct cputime prev_cputime;

#endif

#ifdef CONFIG_VIRT_CPU_ACCOUNTING_GEN

seqlock_t vtime_seqlock;

unsigned long long vtime_snap;

enum {

VTIME_SLEEPING = 0,

VTIME_USER,

VTIME_SYS,

} vtime_snap_whence;

#endif

unsigned long nvcsw, nivcsw; /* context switch counts */

u64 start_time; /* monotonic time in nsec */

u64 real_start_time; /* boot based time in nsec */

/* mm fault and swap info: this can arguably be seen as either mm-specific or thread-specific */

unsigned long min_flt, maj_flt;

struct task_cputime cputime_expires;

struct list_head cpu_timers[3];

/* process credentials */

const struct cred __rcu *real_cred; /* objective and real subjective task

* credentials (COW) */

const struct cred __rcu *cred; /* effective (overridable) subjective task

* credentials (COW) */

char comm[TASK_COMM_LEN]; /* executable name excluding path

- access with [gs]et_task_comm (which lock

it with task_lock())

- initialized normally by setup_new_exec */

/* file system info */

int link_count, total_link_count;

#ifdef CONFIG_SYSVIPC

/* ipc stuff */

struct sysv_sem sysvsem;

struct sysv_shm sysvshm;

#endif

#ifdef CONFIG_DETECT_HUNG_TASK

/* hung task detection */

unsigned long last_switch_count;

#endif

/* CPU-specific state */

struct thread_struct thread;

/* filesystem information */

struct fs_struct *fs; // filesystem-related data

/* open file information */

struct files_struct *files; // open file descriptors

/* namespaces */

struct nsproxy *nsproxy;

/* signal handling fields */

struct signal_struct *signal;

struct sighand_struct *sighand;

sigset_t blocked, real_blocked;

sigset_t saved_sigmask; /* restored if set_restore_sigmask() was used */

struct sigpending pending;

unsigned long sas_ss_sp;

size_t sas_ss_size;

int (*notifier)(void *priv);

void *notifier_data;

sigset_t *notifier_mask;

struct callback_head *task_works;

struct audit_context *audit_context;

#ifdef CONFIG_AUDITSYSCALL

kuid_t loginuid;

unsigned int sessionid;

#endif

struct seccomp seccomp;

/* Thread group tracking */

u32 parent_exec_id;

u32 self_exec_id;

/* Protection of (de-)allocation: mm, files, fs, tty, keyrings, mems_allowed,

* mempolicy */

spinlock_t alloc_lock;

/* Protection of the PI data structures: */

raw_spinlock_t pi_lock;

#ifdef CONFIG_RT_MUTEXES // RT mutex support

/* PI waiters blocked on a rt_mutex held by this task */

struct rb_root pi_waiters;

struct rb_node *pi_waiters_leftmost;

/* Deadlock detection and priority inheritance handling */

struct rt_mutex_waiter *pi_blocked_on;

#endif

#ifdef CONFIG_DEBUG_MUTEXES // mutex debugging

/* mutex deadlock detection */

struct mutex_waiter *blocked_on;

#endif

#ifdef CONFIG_TRACE_IRQFLAGS // debugging-related fields

unsigned int irq_events;

unsigned long hardirq_enable_ip;

unsigned long hardirq_disable_ip;

unsigned int hardirq_enable_event;

unsigned int hardirq_disable_event;

int hardirqs_enabled;

int hardirq_context;

unsigned long softirq_disable_ip;

unsigned long softirq_enable_ip;

unsigned int softirq_disable_event;

unsigned int softirq_enable_event;

int softirqs_enabled;

int softirq_context;

#endif

#ifdef CONFIG_LOCKDEP

# define MAX_LOCK_DEPTH 48UL

u64 curr_chain_key;

int lockdep_depth;

unsigned int lockdep_recursion;

struct held_lock held_locks[MAX_LOCK_DEPTH];

gfp_t lockdep_reclaim_gfp;

#endif

/* journalling filesystem info */

void *journal_info;

/* stacked block device info */

struct bio_list *bio_list;

#ifdef CONFIG_BLOCK

/* stack plugging */

struct blk_plug *plug;

#endif

/* VM state */

struct reclaim_state *reclaim_state;

struct backing_dev_info *backing_dev_info;

struct io_context *io_context;

unsigned long ptrace_message;

siginfo_t *last_siginfo; /* For ptrace use. */

struct task_io_accounting ioac;

#if defined(CONFIG_TASK_XACCT)

u64 acct_rss_mem1; /* accumulated rss usage */

u64 acct_vm_mem1; /* accumulated virtual memory usage */

cputime_t acct_timexpd; /* stime + utime since last update */

#endif

#ifdef CONFIG_CPUSETS

nodemask_t mems_allowed; /* Protected by alloc_lock */

seqcount_t mems_allowed_seq; /* Sequence no to catch updates */

int cpuset_mem_spread_rotor;

int cpuset_slab_spread_rotor;

#endif

#ifdef CONFIG_CGROUPS

/* Control Group info protected by css_set_lock */

struct css_set __rcu *cgroups;

/* cg_list protected by css_set_lock and tsk->alloc_lock */

struct list_head cg_list;

#endif

#ifdef CONFIG_FUTEX

struct robust_list_head __user *robust_list;

#ifdef CONFIG_COMPAT

struct compat_robust_list_head __user *compat_robust_list;

#endif

struct list_head pi_state_list;

struct futex_pi_state *pi_state_cache;

#endif

#ifdef CONFIG_PERF_EVENTS

struct perf_event_context *perf_event_ctxp[perf_nr_task_contexts];

struct mutex perf_event_mutex;

struct list_head perf_event_list;

#endif

#ifdef CONFIG_DEBUG_PREEMPT

unsigned long preempt_disable_ip;

#endif

#ifdef CONFIG_NUMA

struct mempolicy *mempolicy; /* Protected by alloc_lock */

short il_next;

short pref_node_fork;

#endif

#ifdef CONFIG_NUMA_BALANCING

int numa_scan_seq;

unsigned int numa_scan_period;

unsigned int numa_scan_period_max;

int numa_preferred_nid;

unsigned long numa_migrate_retry;

u64 node_stamp; /* migration stamp */

u64 last_task_numa_placement;

u64 last_sum_exec_runtime;

struct callback_head numa_work;

struct list_head numa_entry;

struct numa_group *numa_group;

/*

* Exponential decaying average of faults on a per-node basis.

* Scheduling placement decisions are made based on these counts.

* The values remain static for the duration of a PTE scan

*/

unsigned long *numa_faults_memory;

unsigned long total_numa_faults;

/*

* numa_faults_buffer records faults per node during the current

* scan window. When the scan completes, the counts in

* numa_faults_memory decay and these values are copied.

*/

unsigned long *numa_faults_buffer_memory;

/*

* Track the nodes the process was running on when a NUMA hinting

* fault was incurred.

*/

unsigned long *numa_faults_cpu;

unsigned long *numa_faults_buffer_cpu;

/*

* numa_faults_locality tracks if faults recorded during the last

* scan window were remote/local. The task scan period is adapted

* based on the locality of the faults with different weights

* depending on whether they were shared or private faults

*/

unsigned long numa_faults_locality[2];

unsigned long numa_pages_migrated;

#endif /* CONFIG_NUMA_BALANCING */

struct rcu_head rcu;

/*

* pipe-related data

*/

struct pipe_inode_info *splice_pipe;

struct page_frag task_frag;

#ifdef CONFIG_TASK_DELAY_ACCT

struct task_delay_info *delays;

#endif

#ifdef CONFIG_FAULT_INJECTION

int make_it_fail;

#endif

/*

* when (nr_dirtied >= nr_dirtied_pause), it's time to call

* balance_dirty_pages() for some dirty throttling pause

*/

int nr_dirtied;

int nr_dirtied_pause;

unsigned long dirty_paused_when; /* start of a write-and-pause period */

#ifdef CONFIG_LATENCYTOP

int latency_record_count;

struct latency_record latency_record[LT_SAVECOUNT];

#endif

/*

* time slack values; these are used to round up poll() and

* select() etc timeout values. These are in nanoseconds.

*/

unsigned long timer_slack_ns;

unsigned long default_timer_slack_ns;

#ifdef CONFIG_FUNCTION_GRAPH_TRACER

/* Index of current stored address in ret_stack */

int curr_ret_stack;

/* Stack of return addresses for return function tracing */

struct ftrace_ret_stack *ret_stack;

/* time stamp for last schedule */

unsigned long long ftrace_timestamp;

/*

* Number of functions that haven't been traced

* because of depth overrun.

*/

atomic_t trace_overrun;

/* Pause for the tracing */

atomic_t tracing_graph_pause;

#endif

#ifdef CONFIG_TRACING

/* state flags for use by tracers */

unsigned long trace;

/* bitmask and counter of trace recursion */

unsigned long trace_recursion;

#endif /* CONFIG_TRACING */

#ifdef CONFIG_MEMCG /* memcg uses this to do batch job */

unsigned int memcg_kmem_skip_account;

struct memcg_oom_info {

struct mem_cgroup *memcg;

gfp_t gfp_mask;

int order;

unsigned int may_oom:1;

} memcg_oom;

#endif

#ifdef CONFIG_UPROBES

struct uprobe_task *utask;

#endif

#if defined(CONFIG_BCACHE) || defined(CONFIG_BCACHE_MODULE)

unsigned int sequential_io;

unsigned int sequential_io_avg;

#endif

};

How fork Creates a New Process

In Linux, fork() is implemented through the clone() system call, and clone() in turn calls do_fork().

do_fork() is defined in kernel/fork.c. It calls copy_process() to begin creating the new process, which works as follows:

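1. Call dup_task_struct() to create a kernel stack, a thread_info structure, and a task_struct (the PCB) for the new process, with the same values as the current process. At this point the child and parent process descriptors are identical.

2. Check that creating this child will not push the number of processes owned by the current user past that user's resource limit.

3. The child begins to differentiate itself from its parent. Many members of the process descriptor are cleared or set to initial values. The members that are not inherited are mainly statistics; most of the data in task_struct remains unchanged.

4. The child's state is set to TASK_UNINTERRUPTIBLE to ensure that it does not run yet. (Note: a task in TASK_UNINTERRUPTIBLE is waiting and can only be woken by wake_up(); a signal does not end the wait.)

5. copy_process() calls copy_flags() to update the flags member of the task_struct. The PF_SUPERPRIV flag, which denotes whether the process has used superuser privileges, is cleared. The PF_FORKNOEXEC flag, which denotes a process that has not yet called exec(), is set.

6. Call alloc_pid() to assign a valid PID to the new process.

7. Depending on the flags passed to clone(), copy_process() either duplicates or shares open files, filesystem information, signal handlers, the process address space, and the namespace. These resources are typically shared among the threads of a given process; otherwise they are unique to each process and are therefore copied here.

8. Finally, copy_process() cleans up and returns a pointer to the new child.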

Back in do_fork(), if copy_process() returns successfully, the newly created child is woken up and allowed to run.

Where does the child process start executing?

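The starting point is set by this statement:

p->thread.ip = (unsigned long) ret_from_fork; // address of the first instruction executed when the child is scheduled

When the child first gets the CPU, it begins executing at this address.

The statement

*childregs = *current_pt_regs(); // copy the register context saved on the kernel stack

keeps the new process's starting point consistent with its kernel stack.

The gdb trace in the screenshots shows this.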

Stack State at the New Process's Starting Point

gdb Debugging Analysis

Summary

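How Linux creates a new process: the system creates it through any one of three system calls, sys_fork, sys_clone, or sys_vfork. All three call do_fork(), which calls other functions to copy the parent's PCB and create the new process's kernel stack, then modifies the new PCB according to the creation flags to distinguish the child from its parent, assigns the child a new PID, and finally returns to user mode.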

References

《Linux内核设计与实现》 (Linux Kernel Development), 3rd edition

Original work by Sawoom. Please credit the source when reposting.

《Linux内核分析》 (Linux Kernel Analysis) MOOC course: http://mooc.study.163.com/course/USTC-1000029000