Five virtual machines are used in total.

| Hostname | IP | Role | Deployed software |
|---|---|---|---|
| hdss7-11.host.com | 10.211.55.22 | master | apiserver, scheduler, controller-manager, etcd, flanneld |
| hdss7-12.host.com | 10.211.55.23 | node | kubelet, kube-proxy, etcd, flanneld |
| hdss7-11.host.com | 10.211.55.24 | node | kubelet, kube-proxy, etcd, flanneld |

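Hostname naming convention: do not tie a hostname to the service it runs (for example mysql-01 or redis-02), because a host may be brought online or taken offline independently of that service.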

Deploy the BIND service

Edit the main configuration file:

options {

listen-on port 53 { 10.4.7.11; };

directory "/var/named";

dump-file "/var/named/data/cache_dump.db";

statistics-file "/var/named/data/named_stats.txt";

memstatistics-file "/var/named/data/named_mem_stats.txt";

recursing-file "/var/named/data/named.recursing";

secroots-file "/var/named/data/named.secroots";

allow-query { any; };

forwarders { 10.4.7.1; };

[root@hdss7-11 ~]# named-checkconf

/etc/named.conf:21: missing ';' before '}'

[root@hdss7-11 ~]# named-checkconf

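If the syntax check prints nothing, the configuration is correct.

Edit the zone configuration file. Two zones are added: one for the host domain and one for the business domain.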

[root@hdss7-11 ~]# cat /etc/named.rfc1912.zones

zone "localhost.localdomain" IN {

type master;

file "named.localhost";

allow-update { none; };

};

zone "localhost" IN {

type master;

file "named.localhost";

allow-update { none; };

};

zone "1.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.ip6.arpa" IN {

type master;

file "named.loopback";

allow-update { none; };

};

zone "1.0.0.127.in-addr.arpa" IN {

type master;

file "named.loopback";

allow-update { none; };

};

zone "0.in-addr.arpa" IN {

type master;

file "named.empty";

allow-update { none; };

};

zone "host.com" IN {

type master;

file "host.com.zone";

allow-update { 10.4.7.11; };

};

zone "od.com" IN {

type master;

file "od.com.zone";

allow-update { 10.4.7.11; };

};

Configure the zone data files:

[root@hdss7-11 named]# cat host.com.zone

$ORIGIN host.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.host.com. dnsadmin.host.com. (

2019111001 ; serial

10800; refresh

900 ; retry

604800; expire

86400 ; minimum

)

NS dns.host.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

HDSS7-11 A 10.4.7.11

HDSS7-12 A 10.4.7.12

HDSS7-21 A 10.4.7.21

HDSS7-22 A 10.4.7.22

HDSS7-200 A 10.4.7.200

[root@hdss7-11 named]# cat od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019111001 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

NS dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

Then start the DNS service:

systemctl restart named

Verify name resolution:

[root@hdss7-11 named]# dig -t A hdss7-11.host.com @10.4.7.11 +short

10.4.7.11

[root@hdss7-11 named]# dig -t A hdss7-200.host.com @10.4.7.11 +short

10.4.7.200
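The two `dig` checks above can be repeated for every record in the zone. The following is a minimal sketch, not part of the original notes: `resolve_a` and `check_records` are hypothetical helper names, and the DNS server address defaults to the 10.4.7.11 used above.

```shell
# Address of the BIND server built above; override through the environment if needed.
DNS_SERVER=${DNS_SERVER:-10.4.7.11}

# Resolve one A record against DNS_SERVER (same dig invocation as in the notes).
resolve_a() {
  dig -t A "$1" @"$DNS_SERVER" +short
}

# Read "name expected-ip" pairs on stdin and compare each with the resolver's answer.
# Returns non-zero if any record does not match.
check_records() {
  failed=0
  while read -r name expected; do
    [ -n "$name" ] || continue
    actual=$(resolve_a "$name")
    if [ "$actual" = "$expected" ]; then
      echo "ok   $name -> $actual"
    else
      echo "FAIL $name: expected $expected, got '$actual'"
      failed=1
    fi
  done
  return "$failed"
}

# Example call:
#   printf '%s\n' 'hdss7-11.host.com 10.4.7.11' 'hdss7-200.host.com 10.4.7.200' | check_records
```

Keeping the resolver in its own function makes it easy to point the same check at another server.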

On each client, change the upstream DNS server address so that it points at the DNS service just built:

[root@hdss7-11 named]# grep DNS1 /etc/sysconfig/network-scripts/ifcfg-eth0

DNS1=10.4.7.11

[root@hdss7-11 named]# systemctl restart network

[root@hdss7-11 named]# cat /etc/resolv.conf

# Generated by NetworkManager

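# The search entry allows short names to be resolved; short names are usually enabled for the host domain, while business domains are queried by their full names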

search host.com

nameserver 10.4.7.11

[root@hdss7-11 named]# ping hdss7-22

PING HDSS7-22.host.com (10.4.7.22) 56(84) bytes of data.

64 bytes from 10.4.7.22: icmp_seq=1 ttl=64 time=0.637 ms

64 bytes from 10.4.7.22: icmp_seq=2 ttl=64 time=0.419 ms

[root@hdss7-11 named]# ping baidu.com

PING baidu.com (39.156.69.79) 56(84) bytes of data.

64 bytes from 39.156.69.79: icmp_seq=1 ttl=128 time=30.4 ms

64 bytes from 39.156.69.79: icmp_seq=2 ttl=128 time=28.0 ms

Prepare the certificate signing environment:

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/bin/cfssl-json

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/bin/cfssl-certinfo

chmod +x /usr/bin/cfssl*

Create the JSON configuration file used to generate the CA certificate signing request (CSR):

[root@hdss7-200 certs]# cat ca-csr.json

{

"CN": "DayaZZ",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

],

"ca": {

"expiry": "175200h"

}

}

cfssl gencert -initca ca-csr.json |cfssl-json -bare ca
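The `expiry` value in ca-csr.json is given in hours. As a quick sanity check, the sketch below converts it to years, assuming 365-day years; `hours_to_years` is a hypothetical helper name.

```shell
# Convert a cfssl expiry given in hours to whole years (365-day years assumed).
hours_to_years() {
  echo $(( $1 / 24 / 365 ))
}

# Example: the CA above uses "175200h".
#   hours_to_years 175200
# The generated certificate can then be inspected with:
#   cfssl-certinfo -cert ca.pem
```

So 175200h corresponds to a 20-year validity period.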

Deploy the Docker environment:

Run on hdss7-200, hdss7-21 and hdss7-22:

mkdir -p /etc/docker /data/docker

vim /etc/docker/daemon.json

Change the bip subnet on each host. This example is for hdss7-200, so the subnet is 172.7.200. JSON does not allow comments, so keep this note out of the file itself.

{

"graph": "/data/docker",

"storage-driver": "overlay2",

"insecure-registries": ["registry.access.redhat.com","quay.io","harbor.od.com"],

"registry-mirrors": ["https://q2gr04ke.mirror.aliyuncs.com"],

"bip": "172.7.200.1/24",

"exec-opts": ["native.cgroupdriver=systemd"],

"live-restore": true

}
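The `bip` value follows the host address: on hdss7-200 (10.4.7.200) the Docker bridge uses 172.7.200.1/24. A minimal sketch of that mapping, assuming the same rule applies on hdss7-21 and hdss7-22; `bip_for_host_ip` is a hypothetical helper name.

```shell
# Derive the Docker bridge address from the last octet of the host IP.
# Assumption: every host follows the 172.7.<last octet>.1/24 pattern shown for hdss7-200.
bip_for_host_ip() {
  last_octet=${1##*.}
  echo "172.7.${last_octet}.1/24"
}

# Example:
#   bip_for_host_ip 10.4.7.200
#   bip_for_host_ip 10.4.7.21
```

Giving each host its own /24 keeps container addresses unique across the cluster and makes it easy to tell from an address which host a container runs on.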

Deploy the Harbor private image registry:

On host hdss7-200:

https://github.com/goharbor/harbor

tar xf harbor-offline-installer-v1.8.3.tgz -C /opt/

Add the version to the directory name to make later upgrades easier:

mv harbor harbor-v1.8.3

ln -s /opt/harbor-v1.8.3 harbor

Edit the configuration file /opt/harbor/harbor.yml:

hostname: harbor.od.com

http:

port: 180

data_volume: /data/harbor

location: /data/harbor/logs

mkdir -p /data/harbor/logs

yum install docker-compose -y

sh /opt/harbor/install.sh

Check the status:

[root@hdss7-200 harbor]# docker-compose ps

Name Command State Ports

--------------------------------------------------------------------------------------

harbor-core /harbor/start.sh Up

harbor-db /entrypoint.sh postgres Up 5432/tcp

harbor-jobservice /harbor/start.sh Up

harbor-log /bin/sh -c /usr/local/bin/ ... Up 127.0.0.1:1514->10514/tcp

harbor-portal nginx -g daemon off; Up 80/tcp

nginx nginx -g daemon off; Up 0.0.0.0:180->80/tcp

redis docker-entrypoint.sh redis ... Up 6379/tcp

registry /entrypoint.sh /etc/regist ... Up 5000/tcp

registryctl /harbor/start.sh Up

Install nginx as a reverse proxy in front of Harbor's port 180:

yum install nginx -y

[root@hdss7-200 conf.d]# cat /etc/nginx/conf.d/harbor.od.com.conf

server {

listen 80;

server_name harbor.od.com;

client_max_body_size 1000m;

location / {

proxy_pass http://127.0.0.1:180;

}

}

systemctl start nginx

systemctl enable nginx

Configure an internal domain name for Harbor:

On hdss7-11:

[root@hdss7-11 ~]# cat /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019111002 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

NS dns.od.com.

$TTL 60; 1 minute

dns A 10.4.7.11

harbor A 10.4.7.200

Increment the serial number by 1, then restart the named service:

systemctl restart named

[root@hdss7-200 ~]# dig harbor.od.com +short

10.4.7.200
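Forgetting to increment the serial is an easy mistake when a zone file is edited by hand. The sketch below automates the increment, assuming the serial sits on its own line followed by a `; serial` comment as in the zone files above; `bump_serial` is a hypothetical helper name.

```shell
# Increment the SOA serial in a zone file in place.
# Assumption: the serial is the first number on the line carrying a "; serial" comment.
bump_serial() {
  zone_file=$1
  tmp=$(mktemp) || return 1
  awk '/; *serial/ && !bumped { sub(/[0-9]+/, $1 + 1); bumped = 1 } { print }' \
    "$zone_file" > "$tmp" &&
    cat "$tmp" > "$zone_file"   # overwrite the contents, keeping owner and permissions
  rm -f "$tmp"
}

# Example:
#   bump_serial /var/named/od.com.zone
#   named-checkzone od.com /var/named/od.com.zone
#   systemctl restart named
```

Running `named-checkzone` before the restart catches syntax errors in the edited zone file.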

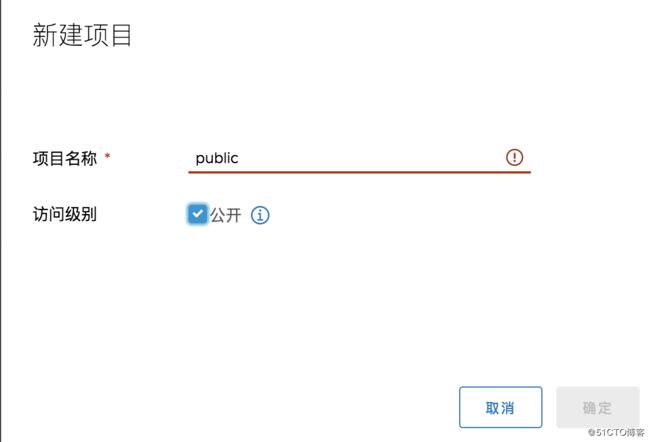

Log in to Harbor from a browser and create a new repository:

Pull an image:

[root@hdss7-200 certs]# docker pull nginx:1.7.9

docker tag 84581e99d807 harbor.od.com/public/nginx:v1.7.9

[root@hdss7-200 certs]# docker login harbor.od.com

Username: admin

Password:

WARNING! Your password will be stored unencrypted in /root/.docker/config.json.

Configure a credential helper to remove this warning. See

https://docs.docker.com/engine/reference/commandline/login/#credentials-store

Login Succeeded

[root@hdss7-200 certs]# docker push harbor.od.com/public/nginx:v1.7.9

Start deploying the services on the master node:

Deploy the etcd cluster:

Cluster plan:

| Hostname | Role | IP |
|---|---|---|
| hdss7-12.host.com | leader | 10.4.7.12 |
| hdss7-21.host.com | follower | 10.4.7.21 |
| hdss7-22.host.com | follower | 10.4.7.22 |

[root@hdss7-200 certs]# cat /opt/certs/ca-config.json

{

"signing": {

"default": {

"expiry": "175200h"

},

"profiles": {

"server": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth"

]

},

"client": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"client auth"

]

},

"peer": {

"expiry": "175200h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

[root@hdss7-200 certs]# cat /opt/certs/etcd-peer-csr.json

{

"CN": "k8s-etcd",

"hosts": [

"10.4.7.11",

"10.4.7.12",

"10.4.7.21",

"10.4.7.22"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json |cfssl-json -bare etcd-peer

2020/03/25 21:41:00 [INFO] generate received request

2020/03/25 21:41:00 [INFO] received CSR

2020/03/25 21:41:00 [INFO] generating key: rsa-2048

2020/03/25 21:41:00 [INFO] encoded CSR

2020/03/25 21:41:00 [INFO] signed certificate with serial number 724689607557459896267199932166764372562376760465

2020/03/25 21:41:00 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@hdss7-12 ~]# useradd -s /sbin/nologin -M etcd

[root@hdss7-12 tmp]# mkdir -p /data/etcd /data/logs/etcd-server

[root@hdss7-12 tmp]# tar -xf etcd-v3.1.20-linux-amd64.tar.gz -C /opt/

[root@hdss7-12 opt]# mv etcd-v3.1.20-linux-amd64 etcd-v3.1.20

[root@hdss7-12 opt]# ln -s /opt/etcd-v3.1.20 etcd

Copy the two certificates and the private key to the machine:

[root@hdss7-12 certs]# ll

total 12

-rw-r--r-- 1 root root 1338 Mar 25 22:00 ca.pem

-rw------- 1 root root 1679 Mar 25 22:03 etcd-peer-key.pem

-rw-r--r-- 1 root root 1424 Mar 25 21:58 etcd-peer.pem

Startup script:

cat /opt/etcd/etcd-server-startup.sh

#!/bin/sh

./etcd --name etcd-server-7-12 \

--data-dir /data/etcd/etcd-server \

--listen-peer-urls https://10.4.7.12:2380 \

--listen-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--quota-backend-bytes 8000000000 \

--initial-advertise-peer-urls https://10.4.7.12:2380 \

--advertise-client-urls https://10.4.7.12:2379,http://127.0.0.1:2379 \

--initial-cluster etcd-server-7-12=https://10.4.7.12:2380,etcd-server-7-21=https://10.4.7.21:2380,etcd-server-7-22=https://10.4.7.22:2380 \

--ca-file ./certs/ca.pem \

--cert-file ./certs/etcd-peer.pem \

--key-file ./certs/etcd-peer-key.pem \

--client-cert-auth \

--trusted-ca-file ./certs/ca.pem \

--peer-ca-file ./certs/ca.pem \

--peer-cert-file ./certs/etcd-peer.pem \

--peer-key-file ./certs/etcd-peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file ./certs/ca.pem \

--log-output stdout

chmod +x /opt/etcd/etcd-server-startup.sh

chown -R etcd:etcd /opt/etcd-v3.1.20/

[root@hdss7-12 etcd]# chown -R etcd:etcd /data/etcd/

[root@hdss7-12 etcd]# chown -R etcd:etcd /data/logs/etcd-server/

[root@hdss7-12 etcd]# yum install supervisor -y

[root@hdss7-12 etcd]# systemctl start supervisord

[root@hdss7-12 etcd]# systemctl enable supervisord

[root@hdss7-12 etcd]# supervisorctl status

etcd-server-7-12 RUNNING pid 20034, uptime 0:01:05

[root@hdss7-12 etcd]# netstat -nap|grep etcd

tcp 0 0 10.4.7.12:2379 0.0.0.0:* LISTEN 20035/./etcd

tcp 0 0 127.0.0.1:2379 0.0.0.0:* LISTEN 20035/./etcd

tcp 0 0 10.4.7.12:2380 0.0.0.0:* LISTEN 20035/./etcd

unix 2 [ ] DGRAM 416989 20035/./etcd

Check the health of the etcd cluster:

[root@hdss7-22 etcd]# ./etcdctl cluster-health

member 988139385f78284 is healthy: got healthy result from http://127.0.0.1:2379

member 5a0ef2a004fc4349 is healthy: got healthy result from http://127.0.0.1:2379

member f4a0cb0a765574a8 is healthy: got healthy result from http://127.0.0.1:2379

cluster is healthy

[root@hdss7-22 etcd]# ./etcdctl member list

988139385f78284: name=etcd-server-7-22 peerURLs=https://10.4.7.22:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.22:2379 isLeader=false

5a0ef2a004fc4349: name=etcd-server-7-21 peerURLs=https://10.4.7.21:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.21:2379 isLeader=false

f4a0cb0a765574a8: name=etcd-server-7-12 peerURLs=https://10.4.7.12:2380 clientURLs=http://127.0.0.1:2379,https://10.4.7.12:2379 isLeader=true
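The leader is elected by the cluster and can change after a restart, so the member list is the place to confirm which member currently holds that role. A minimal sketch that pulls the leader's name out of the `member list` output format shown above; `etcd_leader` is a hypothetical helper name.

```shell
# Print the name of the member whose line carries isLeader=true.
# Input: output of "./etcdctl member list" (etcdctl v2-style output, as above) on stdin.
etcd_leader() {
  awk '/isLeader=true/ {
    for (i = 1; i <= NF; i++) {
      if ($i ~ /^name=/) { sub(/^name=/, "", $i); print $i }
    }
  }'
}

# Example:
#   ./etcdctl member list | etcd_leader
```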

Deploy the apiserver on the master node

Issue the client certificate (this is the certificate the apiserver uses when it communicates with etcd):

[root@hdss7-12 nginx]# yum install keepalived -y

keepalived master node:

[root@hdss7-11 keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.11

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 251

priority 100

advert_int 1

mcast_src_ip 10.4.7.11

nopreempt

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10

}

}

keepalived backup node:

[root@hdss7-12 keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id 10.4.7.12

}

vrrp_script chk_nginx {

script "/etc/keepalived/check_port.sh 7443"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 251

mcast_src_ip 10.4.7.12

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 11111111

}

track_script {

chk_nginx

}

virtual_ipaddress {

10.4.7.10

}

}
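Both configurations call /etc/keepalived/check_port.sh, which is not reproduced in these notes. The following is a hedged sketch of what such a script could look like, assuming `ss` from iproute2 is available; the function name `port_is_listening` and the script body are illustrative, not the author's original.

```shell
#!/bin/sh
# Hypothetical /etc/keepalived/check_port.sh (the original script is not shown in the notes).
# Usage: check_port.sh PORT   exits 0 if a socket listens on PORT, 1 otherwise.

# Read "ss -lnt" style output on stdin and succeed if any LISTEN socket uses the port.
port_is_listening() {
  awk -v port="$1" '
    $1 == "LISTEN" && $4 ~ (":" port "$") { found = 1 }
    END { exit !found }
  '
}

# Run the check only when a port argument is given, as keepalived does with "7443".
if [ "$#" -gt 0 ]; then
  ss -lnt | port_is_listening "$1" || exit 1
fi
```

With `weight -20`, a failing check should lower the master's priority from 100 to 80, which is below the backup's 90, so the VIP can move to the backup node.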

Start keepalived on both machines, then check on the master node that the VIP has been bound:

systemctl start keepalived

systemctl enable keepalived

[root@hdss7-11 keepalived]# ip a

1: lo:

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: eth0:

link/ether 00:50:56:ad:fb:d7 brd ff:ff:ff:ff:ff:ff

inet 10.4.7.11/16 brd 10.4.255.255 scope global eth0

valid_lft forever preferred_lft forever

inet 10.4.7.10/32 scope global eth0

valid_lft forever preferred_lft forever

[root@hdss7-21 supervisord.d]# kubectl get cs

NAME STATUS MESSAGE ERROR

etcd-0 Healthy {"health": "true"}

etcd-2 Healthy {"health": "true"}

etcd-1 Healthy {"health": "true"}

scheduler Healthy ok

controller-manager Healthy ok

[root@hdss7-21 conf]# kubectl config set-cluster myk8s \

> --certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \

> --embed-certs=true \

> --server=https://10.4.7.11:7443 \

> --kubeconfig=kubelet.kubeconfig

Cluster "myk8s" set.

[root@hdss7-21 conf]# kubectl config set-credentials k8s-node \

> --client-certificate=/opt/kubernetes/server/bin/cert/client.pem \

> --client-key=/opt/kubernetes/server/bin/cert/client-key.pem \

> --embed-certs=true \

> --kubeconfig=kubelet.kubeconfig

[root@hdss7-21 conf]# kubectl config set-context myk8s-context \

> --cluster=myk8s \

> --user=k8s-node \

> --kubeconfig=kubelet.kubeconfig

Context "myk8s-context" created.

[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig

Switched to context "myk8s-context".

Create a k8s-node resource (a ClusterRoleBinding) so that the k8s-node user has the permissions of a compute node in the cluster:

[root@hdss7-21 conf]# kubectl apply -f k8s-node.yaml

clusterrolebinding.rbac.authorization.k8s.io/k8s-node created

[root@hdss7-21 conf]# kubectl get clusterrolebinding k8s-node -o yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

{"apiVersion":"rbac.authorization.k8s.io/v1","kind":"ClusterRoleBinding","metadata":{"annotations":{},"name":"k8s-node"},"roleRef":{"apiGroup":"rbac.authorization.k8s.io","kind":"ClusterRole","name":"system:node"},"subjects":[{"apiGroup":"rbac.authorization.k8s.io","kind":"User","name":"k8s-node"}]}

creationTimestamp: "2020-03-26T14:00:26Z"

name: k8s-node

resourceVersion: "19187"

selfLink: /apis/rbac.authorization.k8s.io/v1/clusterrolebindings/k8s-node

uid: 8b7856de-2ee7-4c3b-be8f-992f2eb19d69

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:node

subjects:

- apiGroup: rbac.authorization.k8s.io

kind: User

name: k8s-node

[root@hdss7-21 conf]# kubectl get clusterrolebinding k8s-node

NAME AGE

k8s-node 2m7s
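The k8s-node.yaml file applied above is not reproduced in these notes. Judging by the `last-applied-configuration` annotation in the output, it should be equivalent to the manifest below; the exact formatting of the original file is an assumption.

```shell
# Recreate k8s-node.yaml from the fields recorded in the last-applied-configuration annotation.
cat > k8s-node.yaml <<'EOF'
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: k8s-node
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:node
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: k8s-node
EOF
```

The binding ties the user k8s-node (the identity used in kubelet.kubeconfig) to the built-in system:node ClusterRole.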

Copy kubelet.kubeconfig to hdss7-22:

Prepare the pause base image:

It is responsible for initializing the environment for the business containers.

[root@hdss7-200 ~]# docker pull kubernetes/pause

Using default tag: latest

latest: Pulling from kubernetes/pause

4f4fb700ef54: Pull complete

b9c8ec465f6b: Pull complete

Digest: sha256:b31bfb4d0213f254d361e0079deaaebefa4f82ba7aa76ef82e90b4935ad5b105

Status: Downloaded newer image for kubernetes/pause:latest

docker.io/kubernetes/pause:latest

[root@hdss7-200 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

goharbor/chartmuseum-photon v0.9.0-v1.8.3 ec654bcf3624 6 months ago 131MB

goharbor/harbor-migrator v1.8.3 6f945bb96ea3 6 months ago 362MB

goharbor/redis-photon v1.8.3 cda8fa1932ec 6 months ago 109MB

goharbor/clair-photon v2.0.8-v1.8.3 5630fa937f6d 6 months ago 165MB

goharbor/notary-server-photon v0.6.1-v1.8.3 e0a54affd0c8 6 months ago 136MB

goharbor/notary-signer-photon v0.6.1-v1.8.3 72708cdfb905 6 months ago 133MB

goharbor/harbor-registryctl v1.8.3 9dc783842a19 6 months ago 97.2MB

goharbor/registry-photon v2.7.1-patch-2819-v1.8.3 a05e085842f5 6 months ago 82.3MB

goharbor/nginx-photon v1.8.3 3a016e0dc7de 6 months ago 37MB

goharbor/harbor-log v1.8.3 b92621c47043 6 months ago 82.6MB

goharbor/harbor-jobservice v1.8.3 53bc2359083f 6 months ago 120MB

goharbor/harbor-core v1.8.3 a3ccc3897bc0 6 months ago 136MB

goharbor/harbor-portal v1.8.3 514f2fb70e90 6 months ago 43.9MB

goharbor/harbor-db v1.8.3 d1b8adbed58f 6 months ago 147MB

goharbor/prepare v1.8.3 a37e777b7fe7 6 months ago 147MB

nginx 1.7.9 84581e99d807 5 years ago 91.7MB

harbor.od.com/public/nginx v1.7.9 84581e99d807 5 years ago 91.7MB

kubernetes/pause latest f9d5de079539 5 years ago 240kB

[root@hdss7-200 ~]# docker tag f9d5de079539 harbor.od.com/public/pause:latest

[root@hdss7-200 ~]# docker push harbor.od.com/public/pause:latest

The push refers to repository [harbor.od.com/public/pause]

5f70bf18a086: Mounted from public/nginx

e16a89738269: Pushed

latest: digest: sha256:b31bfb4d0213f254d361e0079deaaebefa4f82ba7aa76ef82e90b4935ad5b105 size: 938

Create the kubelet startup script on hdss7-21 and hdss7-22:

[root@hdss7-21 bin]# cat kubelet.sh

#!/bin/sh

./kubelet \

--anonymous-auth=false \

--cgroup-driver systemd \

--cluster-dns 192.168.0.2 \

--cluster-domain cluster.local \

--runtime-cgroups=/systemd/system.slice \

--kubelet-cgroups=/systemd/system.slice \

--fail-swap-on="false" \

--client-ca-file ./cert/ca.pem \

--tls-cert-file ./cert/kubelet.pem \

--tls-private-key-file ./cert/kubelet-key.pem \

--hostname-override hdss7-21.host.com \

--image-gc-high-threshold 20 \

--image-gc-low-threshold 10 \

--kubeconfig ./conf/kubelet.kubeconfig \

--log-dir /data/logs/kubernetes/kube-kubelet \

--pod-infra-container-image harbor.od.com/public/pause:latest \

--root-dir /data/kubelet

chmod +x kubelet.sh

mkdir -p /data/logs/kubernetes/kube-kubelet /data/kubelet

[root@hdss7-22 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready

hdss7-22.host.com Ready

[root@hdss7-21 conf]# kubectl config set-cluster myk8s \

> --certificate-authority=/opt/kubernetes/server/bin/cert/ca.pem \

> --embed-certs=true \

> --server=https://10.4.7.11:7443 \

> --kubeconfig=kube-proxy.kubeconfig

Cluster "myk8s" set.

[root@hdss7-21 conf]# kubectl config set-credentials kube-proxy \

> --client-certificate=/opt/kubernetes/server/bin/cert/kube-proxy-client.pem \

> --client-key=/opt/kubernetes/server/bin/cert/kube-proxy-client-key.pem \

> --embed-certs=true \

> --kubeconfig=kube-proxy.kubeconfig

User "kube-proxy" set.

[root@hdss7-21 conf]# ll

total 24

-rw-r--r-- 1 root root 2223 Mar 25 23:36 audit.yaml

-rw-r--r-- 1 root root 258 Mar 26 21:58 k8s-node.yaml

-rw------- 1 root root 6187 Mar 26 21:57 kubelet.kubeconfig

-rw------- 1 root root 6126 Mar 26 23:14 kube-proxy.kubeconfig

[root@hdss7-21 conf]# kubectl config set-context myk8s-context \

> --cluster=myk8s \

> --user=kube-proxy \

> --kubeconfig=kube-proxy.kubeconfig

Context "myk8s-context" created.

[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kube-proxy.kubeconfig

Switched to context "myk8s-context".
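The kubelet and kube-proxy kubeconfig files are built with the same four `kubectl config` steps and differ only in the user name, the client certificate and the output file. A minimal sketch that wraps those steps in one function; `build_kubeconfig`, `KUBECTL`, `CERT_DIR` and `APISERVER` are hypothetical names, with defaults taken from the commands above.

```shell
KUBECTL=${KUBECTL:-kubectl}
CERT_DIR=${CERT_DIR:-/opt/kubernetes/server/bin/cert}
APISERVER=${APISERVER:-https://10.4.7.11:7443}

# build_kubeconfig USER CERT_BASENAME OUTPUT_FILE
# Runs set-cluster, set-credentials, set-context and use-context against OUTPUT_FILE.
build_kubeconfig() {
  user=$1
  cert=$2
  out=$3
  $KUBECTL config set-cluster myk8s \
    --certificate-authority="$CERT_DIR/ca.pem" \
    --embed-certs=true \
    --server="$APISERVER" \
    --kubeconfig="$out" &&
  $KUBECTL config set-credentials "$user" \
    --client-certificate="$CERT_DIR/${cert}.pem" \
    --client-key="$CERT_DIR/${cert}-key.pem" \
    --embed-certs=true \
    --kubeconfig="$out" &&
  $KUBECTL config set-context myk8s-context \
    --cluster=myk8s --user="$user" --kubeconfig="$out" &&
  $KUBECTL config use-context myk8s-context --kubeconfig="$out"
}

# Examples:
#   build_kubeconfig k8s-node client kubelet.kubeconfig
#   build_kubeconfig kube-proxy kube-proxy-client kube-proxy.kubeconfig
```

Setting `KUBECTL="echo kubectl"` prints the commands instead of running them, which is a cheap way to review them first.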

Load the IPVS kernel modules:

[root@hdss7-22 ~]# cat ipvs.sh

#!/bin/bash

ipvs_mods_dir="/usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs"

for i in $(ls $ipvs_mods_dir|grep -o "^[^.]*")

do

/sbin/modinfo -F filename $i &>/dev/null

if [ $? -eq 0 ];then

/sbin/modprobe $i

fi

done

[root@hdss7-21 ~]# lsmod |grep ip_vs

[root@hdss7-21 ~]# chmod +x ipvs.sh

[root@hdss7-21 ~]# ./ipvs.sh

[root@hdss7-21 ~]# lsmod |grep ip_vs

ip_vs_wrr 12697 0

ip_vs_wlc 12519 0

ip_vs_sh 12688 0

ip_vs_sed 12519 0

ip_vs_rr 12600 0

ip_vs_pe_sip 12740 0

nf_conntrack_sip 33860 1 ip_vs_pe_sip

ip_vs_nq 12516 0

ip_vs_lc 12516 0

ip_vs_lblcr 12922 0

ip_vs_lblc 12819 0

ip_vs_ftp 13079 0

ip_vs_dh 12688 0

ip_vs 145497 24 ip_vs_dh,ip_vs_lc,ip_vs_nq,ip_vs_rr,ip_vs_sh,ip_vs_ftp,ip_vs_sed,ip_vs_wlc,ip_vs_wrr,ip_vs_pe_sip,ip_vs_lblcr,ip_vs_lblc

nf_nat 26583 3 ip_vs_ftp,nf_nat_ipv4,nf_nat_masquerade_ipv4

nf_conntrack 137239 8 ip_vs,nf_nat,nf_nat_ipv4,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_sip,nf_conntrack_ipv4

libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack

[root@hdss7-22 bin]# cat kube-proxy.sh

#!/bin/sh

./kube-proxy \

--cluster-cidr 172.7.0.0/16 \

--hostname-override hdss7-22.host.com \

--proxy-mode=ipvs \

--ipvs-scheduler=nq \

--kubeconfig ./conf/kube-proxy.kubeconfig

chmod +x kube-proxy.sh

mkdir -p /data/logs/kubernetes/kube-proxy

[root@hdss7-22 bin]# cat /etc/supervisord.d/kube-proxy.ini

[program:kube-proxy-7-22]

command=/opt/kubernetes/server/bin/kube-proxy.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/kubernetes/server/bin ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; restart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/kubernetes/kube-proxy/proxy.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

[root@hdss7-22 supervisord.d]# supervisorctl update

kube-proxy-7-22: added process group

[root@hdss7-21 kube-proxy]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1

[root@hdss7-21 ~]# kubectl label node hdss7-22.host.com node-role.kubernetes.io/node=

node/hdss7-22.host.com labeled

[root@hdss7-21 ~]# kubectl label node hdss7-22.host.com node-role.kubernetes.io/master=

node/hdss7-22.host.com labeled

[root@hdss7-21 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master,node 21h v1.15.2

hdss7-22.host.com Ready master,node 21h v1.15.2