In-Stream Big Data Processing

http://highlyscalable.wordpress.com/2013/08/20/in-stream-big-data-processing/

Overview

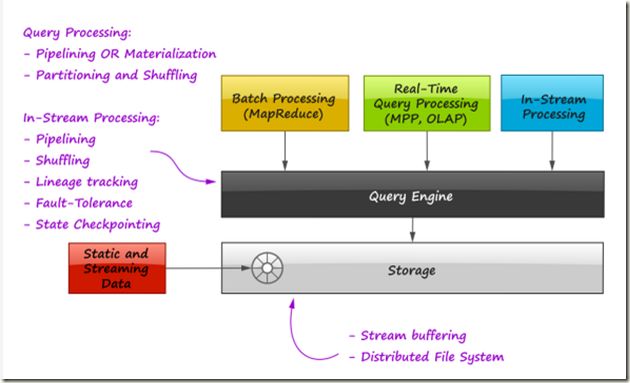

In recent years, this idea got a lot of traction and a whole bunch of solutions like Twitter’s Storm, Yahoo’s S4, Cloudera’s Impala, Apache Spark, and Apache Tez appeared and joined the army of Big Data and NoSQL systems. This article is an effort to explore techniques used by developers of in-stream data processing systems, trace the connections of these techniques to massive batch processing and OLTP/OLAP databases, and discuss how one unified query engine can support in-stream, batch, and OLAP processing at the same time.

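Purpose of the article: describe the techniques used across in-stream processing systems, analyze how they relate to traditional batch and OLTP/OLAP techniques, and finally discuss whether a unified query engine covering in-stream, batch, and OLAP processing can be built.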

At Grid Dynamics, we recently faced the need to build an in-stream data processing system that aimed to crunch about 8 billion events daily while providing fault-tolerance and strict transactionality, i.e. none of these events can be lost or duplicated.

We need an in-stream data processing system with the following properties:

Fast: 8 billion events daily

Fault-tolerant

Data integrity: no event is lost and none is duplicated

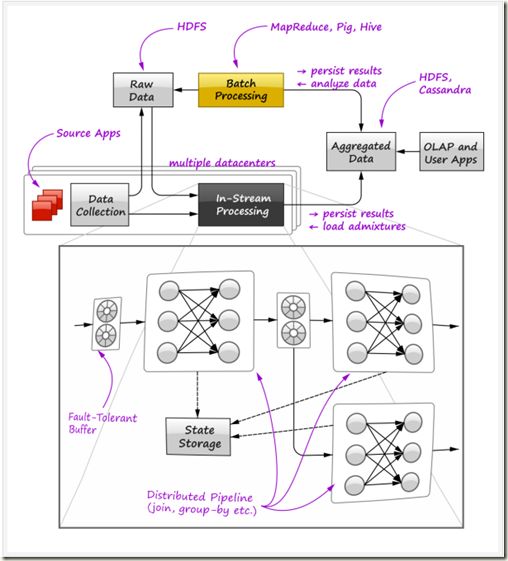

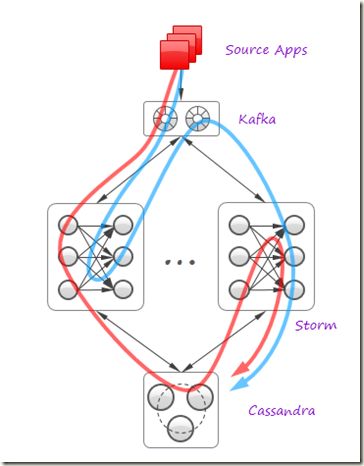

A high-level overview of the environment we worked with is shown in the figure below:

One can see that this environment is a typical Big Data installation: there is a set of applications that produce the raw data in multiple datacenters, the data is shipped by means of Data Collection subsystem to HDFS located in the central facility, then the raw data is aggregated and analyzed using the standard Hadoop stack (MapReduce, Pig, Hive) and the aggregated results are stored in HDFS and NoSQL, imported to the OLAP database and accessed by custom user applications. Our goal was to equip all facilities with a new in-stream engine (shown in the bottom of the figure) that processes most intensive data flows and ships the pre-aggregated data to the central facility, thus decreasing the amount of raw data and heavy batch jobs in Hadoop.

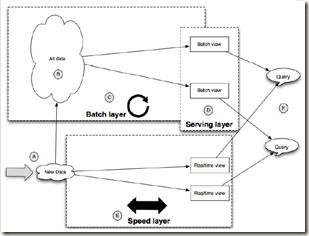

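First, this is a typical Lambda Architecture, which is presumably the author's overall vision of the unified query engine he describes.

The key point of the Lambda Architecture is to add an in-stream engine to the existing batch flow in order to reduce the latency of the system.

It is an architecture proposed by Nathan Marz that combines real-time and batch processing of Big Data; he expresses similar ideas in several of his articles.

If a latency of a few hours can be tolerated, the batch layer plus the serving layer is already a perfect solution: simple, fault-tolerant, easy to scale...

Of course, many scenarios cannot tolerate those few hours of latency, so a speed layer has to be added that computes statistics over recent data in real time using incremental updates.

As one can imagine, this layer is relatively complex to implement and error-prone, because real-time solutions are usually memory-based. The good thing about this architecture is that if anything goes wrong in the speed layer, its output can simply be discarded, because the batch layer will produce the correct data a few hours later; the only cost is temporarily missing and delayed data.

The specific requirements for the in-stream engine: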

The design of the in-stream processing engine itself was driven by the following requirements:

- SQL-like functionality. The engine has to evaluate SQL-like queries continuously, including joins over time windows and different aggregation functions that implement quite complex custom business logic. The engine can also involve relatively static data (admixtures) loaded from the stores of Aggregated Data. Complex multi-pass data mining algorithms are beyond the immediate goals.

- Modularity and flexibility. It is not to say that one can simply issue a SQL-like query and the corresponding pipeline will be created and deployed automatically, but it should be relatively easy to assemble quite complex data processing chains by linking one block to another.

- Fault-tolerance. Strict fault-tolerance is a principal requirement for the engine. As sketched in the bottom part of the figure, one possible design of the engine is to use distributed data processing pipelines that implement operations like joins and aggregations or chains of such operations, and connect these pipelines by means of fault-tolerant persistent buffers. These buffers also improve modularity of the system by enabling a publish/subscribe communication style and easy addition/removal of the pipelines. The pipelines can be stateful and the engine’s middleware should provide persistent storage to enable state checkpointing. All these topics will be discussed in the later sections of the article.

- Interoperability with Hadoop. The engine should be able to ingest both streaming data and data from Hadoop, i.e. serve as a custom query engine atop HDFS.

- High performance and mobility. The system should deliver performance of tens of thousands of messages per second even on clusters of minimal size. The engine should be compact and efficient, so one can deploy it in multiple datacenters on small clusters.

Basics of Distributed Query Processing

It is clear that distributed in-stream data processing has something to do with query processing in distributed relational databases. Many standard query processing techniques can be employed by an in-stream processing engine, so it is extremely useful to understand the classical algorithms of distributed query processing and see how they relate to in-stream processing and other popular paradigms like MapReduce. Distributed query processing is a very large area of knowledge that has been under development for decades, so we start with a brief overview of the main techniques just to provide a context for further discussion.

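In-stream data processing is closely related to query processing.

So it helps to understand the classical algorithms of distributed query processing and how they relate to in-stream processing and other popular paradigms like MapReduce.

This section therefore covers techniques that are common to all of these systems.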

Partitioning and Shuffling

Distributed and parallel query processing heavily relies on data partitioning to break down a large data set into multiple pieces that can be processed by independent processors. Query processing could consist of multiple steps and each step could require its own partitioning strategy, so data shuffling is an operation frequently performed by distributed databases.

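A distributed query involves two processes:

First, the data is broken down across the nodes, which is called data partitioning.

During a query, however, parts of the data often have to be brought together again, which is called data shuffling.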

Distributed Joins

Distributed joins are a typical example that illustrates the partitioning and shuffling techniques.

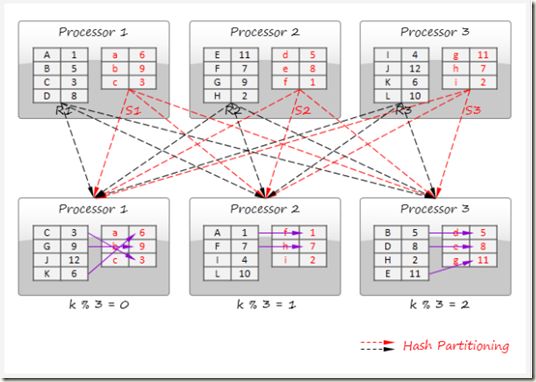

There are two main data partitioning techniques that can be employed by distributed join processing:

- Disjoint data partitioning

Both data sets are reshuffled by the join key, so data from both data sets has to be moved.

The disjoint data partitioning technique shuffles the data into several partitions in such a way that join keys in different partitions do not overlap. Each processor performs the join operation on its own partition and the final result is obtained as a simple concatenation of the results obtained from different processors. Consider an example where relation R is joined with relation S on a numerical key k and a simple modulo-based hash function is used to produce the partitions (it is assumed that the data is initially distributed among the processors based on some other policy):

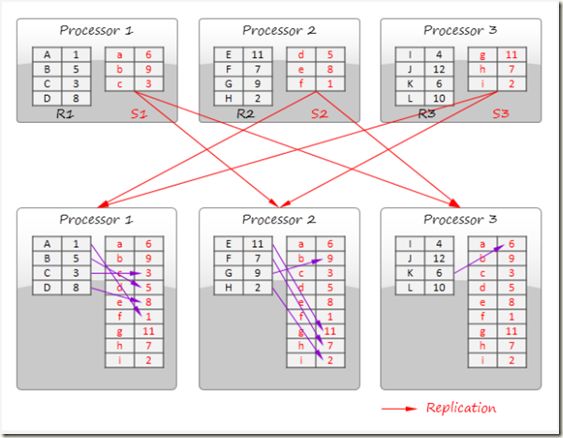
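The following is a minimal single-process sketch of disjoint partitioning, assuming rows are `(key, value)` tuples and that each pair of partitions stands for one processor. The names `partition`, `local_join`, and `partitioned_join` are illustrative and do not come from the article.

```python
from collections import defaultdict

def partition(rows, n):
    """Shuffle (key, value) rows into n disjoint partitions using key modulo n."""
    parts = [[] for _ in range(n)]
    for key, value in rows:
        parts[key % n].append((key, value))
    return parts

def local_join(r_part, s_part):
    """Hash join of two co-located partitions on the key."""
    table = defaultdict(list)
    for key, s_val in s_part:
        table[key].append(s_val)
    return [(key, r_val, s_val)
            for key, r_val in r_part
            for s_val in table.get(key, [])]

def partitioned_join(r, s, n=3):
    """Join R and S; each (R_i, S_i) pair would run on its own processor."""
    result = []
    for r_part, s_part in zip(partition(r, n), partition(s, n)):
        result.extend(local_join(r_part, s_part))   # simple concatenation
    return result

R = [(1, "r1"), (2, "r2"), (4, "r4"), (5, "r5")]
S = [(1, "s1"), (4, "s4"), (4, "s4b"), (6, "s6")]
joined = partitioned_join(R, S)
```

Because keys in different partitions never overlap, no processor needs to see another processor's data once the shuffle is done.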

- Divide and broadcast join

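The smaller data set is broadcast so that every processor holds a full copy of it; this requires the data set to be genuinely small enough.

In stream processing, this is applied to joining static data (an admixture) to a data stream.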

The divide and broadcast join algorithm is illustrated in the figure below. This method divides the first data set into multiple disjoint partitions (R1, R2, and R3 in the figure) and replicates the second data set to all processors. In a distributed database, division typically is not a part of the query processing itself because data sets are initially distributed among multiple nodes.

This strategy is applicable for joining a large relation with a small relation, or two small relations. In-stream data processing systems can employ this technique for stream enrichment, i.e. joining static data (an admixture) to a data stream.

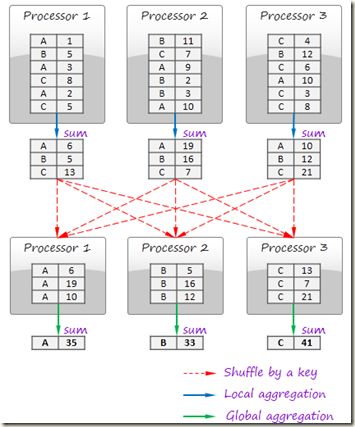

GroupBy

Similar to the map/reduce process.

Processing of GroupBy queries also relies on shuffling and is fundamentally similar to the MapReduce paradigm in its pure form.

Consider an example where the data is grouped by a string key and sum of the numerical values is computed in each group:

In this example, computation consists of two steps: local aggregation and global aggregation. These steps basically correspond to Map and Reduce operations. Local aggregation is optional and raw records can be emitted, shuffled, and aggregated on a global aggregation phase.
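A rough sketch of the two steps, assuming three nodes that each hold some `(key, value)` records and two reducers. The function names are made up for illustration, and `zlib.crc32` is used only because it gives a hash that is stable across processes.

```python
from collections import Counter
import zlib

def local_aggregate(records):
    """Map side: pre-sum values per key on one node (optional step)."""
    partial = Counter()
    for key, value in records:
        partial[key] += value
    return partial

def shuffle(partials, n_reducers):
    """Route every partial sum to the reducer that owns its key."""
    buckets = [[] for _ in range(n_reducers)]
    for partial in partials:
        for key, value in partial.items():
            buckets[zlib.crc32(key.encode()) % n_reducers].append((key, value))
    return buckets

def global_aggregate(bucket):
    """Reduce side: combine the partial sums for the keys of one reducer."""
    total = Counter()
    for key, value in bucket:
        total[key] += value
    return dict(total)

node_inputs = [
    [("a", 1), ("b", 2), ("a", 3)],
    [("b", 4), ("c", 5)],
    [("a", 6), ("c", 7)],
]
partials = [local_aggregate(records) for records in node_inputs]
sums = {}
for bucket in shuffle(partials, n_reducers=2):
    sums.update(global_aggregate(bucket))
```

Skipping `local_aggregate` and shuffling raw records gives the same result, at the cost of moving more data between nodes.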

Pipelining

In the previous section, we noted that many distributed query processing algorithms resemble message passing networks.

However, this is not enough to organize efficient in-stream processing: all operators in a query should be chained in such a way that the data flows smoothly through the entire pipeline, i.e. no operation should block processing by waiting for a large piece of input data without producing any output or by writing intermediate results to disk. Some operations like sorting are inherently incompatible with this concept (obviously, a sorting block cannot produce any output until the entire input is ingested), but in many cases pipelining algorithms are applicable.

A typical example of pipelining is shown below:

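Below are some processing algorithms that are purely pipelined, i.e. stream-oriented.

a. Join of a stream with static data: Simple Hash Join

In the example below, stream R1 is joined with static tables S1, S2, and S3.

This is fairly simple: load all static tables into hash tables and then let stream R1 flow through them in a pipelined fashion.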

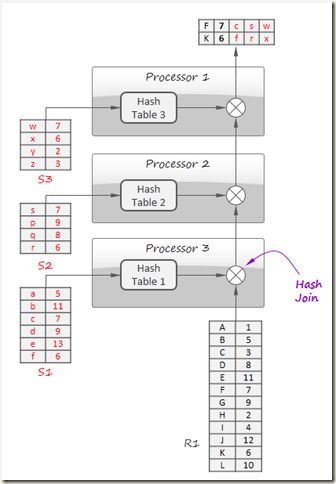

In this example, the hash join algorithm is employed to join four relations: R1, S1, S2, and S3 using 3 processors.

The idea is to build hash tables for S1, S2 and S3 in parallel and then stream R1 tuples one by one through the pipeline that joins them with S1, S2 and S3 by looking up matches in the hash tables. In-stream processing naturally employs this technique to join a data stream with the static data (admixtures).
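The pipeline can be sketched with Python generators. This is a simplified illustration that assumes all three static relations are joined on the same field `k` of the streamed event; `make_hash_join` and `pipeline` are hypothetical names.

```python
def make_hash_join(static_rows, name):
    """Build a hash table for a static relation and return a streaming join stage."""
    table = {}
    for key, value in static_rows:                  # build phase, done once
        table.setdefault(key, []).append(value)

    def stage(stream):
        for event in stream:                        # probe phase, tuple by tuple
            for value in table.get(event["k"], ()):
                yield {**event, name: value}
    return stage

S1 = [(1, "a"), (2, "b")]
S2 = [(1, "x")]
S3 = [(1, "p"), (1, "q")]
stages = [make_hash_join(S1, "s1"), make_hash_join(S2, "s2"), make_hash_join(S3, "s3")]

def pipeline(stream):
    """Chain the stages so each tuple flows through all joins without buffering."""
    for stage in stages:
        stream = stage(stream)
    return stream

R1 = iter([{"k": 1, "r": "e1"}, {"k": 2, "r": "e2"}])
joined = list(pipeline(R1))
```

No stage waits for the whole input: an output tuple is produced as soon as one R1 tuple has passed through all three lookups.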

b. Join between streams: Symmetric Hash Join

This is really a generalization of the simple hash join, as the figure below makes clear.

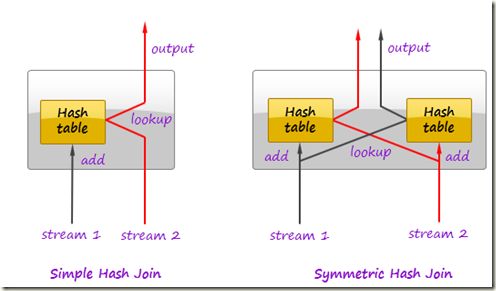

In relational databases, join operation can take advantage of pipelining by using the symmetric hash join algorithm or some of its advanced variants [1,2]. Symmetric hash join is a generalization of hash join. Whereas a normal hash join requires at least one of its inputs to be completely available to produce first results (the input is needed to build a hash table), symmetric hash join is able to produce first results immediately. In contrast to the normal hash join, it maintains hash tables for both inputs and populates these tables as tuples arrive:

As a tuple comes in, the joiner first looks it up in the hash table of the other stream. If a match is found, an output tuple is produced. Then the tuple is inserted into its own hash table.
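A minimal sketch of this probe-then-insert step, assuming two streams labelled `"R"` and `"S"` and unbounded in-memory tables. The class and method names are illustrative.

```python
from collections import defaultdict

class SymmetricHashJoin:
    """Keeps one hash table per input stream and joins tuples as they arrive."""

    def __init__(self):
        self.tables = {"R": defaultdict(list), "S": defaultdict(list)}

    def on_tuple(self, side, key, value):
        other = "S" if side == "R" else "R"
        # 1. Probe the hash table of the other stream.
        matches = self.tables[other].get(key, [])
        if side == "R":
            output = [(key, value, s_val) for s_val in matches]
        else:
            output = [(key, r_val, value) for r_val in matches]
        # 2. Insert the tuple into the hash table of its own stream.
        self.tables[side][key].append(value)
        return output

join = SymmetricHashJoin()
arrivals = [("R", 1, "r1"), ("S", 1, "s1"), ("S", 2, "s2"), ("R", 2, "r2"), ("R", 1, "r1b")]
results = []
for side, key, value in arrivals:
    results.extend(join.on_tuple(side, key, value))
```

The first result appears as soon as the second tuple arrives, without either input being complete.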

c. Join of infinite streams: window-based

Streams are usually infinite, so stream algorithms are usually window-based.

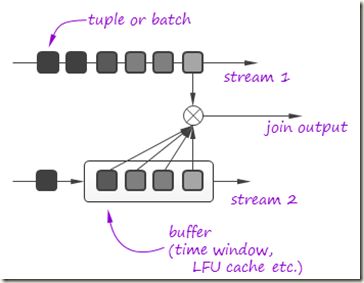

However, it does not make a lot of sense to perform a complete join of infinite streams. In many cases the join is performed on a finite time window or another type of buffer, e.g. an LFU cache that contains the most frequent tuples in the stream. Symmetric hash join can be employed if the buffer is large compared to the stream rate, if the buffer is flushed frequently according to some application logic, or if the buffer eviction strategy is not predictable. In other cases, simple hash join is often sufficient since the buffer is constantly full and does not block the processing:

In-Stream Processing Patterns

In the previous section, we discussed a number of standard query processing techniques that can be used in massively parallel stream processing.

Thus, on a conceptual level, an efficient query engine in a distributed database can act as a stream processing system and vice versa, a stream processing system can act as a distributed database query engine. Shuffling and pipelining are the key techniques of distributed query processing and message passing networks can naturally implement them. However, things are not so simple. In contrast to database query engines, where reliability is not critical because a read-only query can always be restarted, streaming systems should pay a lot of attention to reliable event processing. In this section, we discuss a number of techniques that are used by streaming systems to provide message delivery guarantees and some other patterns that are not typical for standard query processing.

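The previous section described techniques of distributed query processing.

The author argues that, conceptually, the query engine of a distributed database and a stream processing system are equivalent.

But things are not that simple: a query engine does not focus on fault tolerance, because a failed read-only query can simply be run again.

A stream processing system, however, must deal with reliable event processing, so this section focuses on techniques for message delivery guarantees.

In other words, this section covers techniques that are specific to in-stream processing.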

Stream Replay

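Stream replay is very important for fault tolerance, debugging, and optimization. Kafka provides this capability, which is its biggest difference from other message queues.

First, for fault tolerance: when a failure occurs, stream processing systems commonly replay the data. Currently, however, systems such as Storm and Spark replay from the source, although Spark improves on this with checkpoints.

In the Kafka-based Samza system, the intermediate data of every step is stored in Kafka, which makes replay more efficient.

Also, Storm itself has no replay capability and has to rely on a system like Kafka for it.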

The ability to rewind a data stream back in time and replay the data is very important for in-stream processing systems for the following reasons:

- This is the only way to guarantee correct data processing. Even if the data processing pipeline is fault-tolerant, it is very problematic to guarantee that the deployed processing logic is defect-free. One can always face the need to fix and redeploy the system and replay the data on a new version of the pipeline.

- Issue investigation could require ad hoc queries. If something goes wrong, one may need to rerun the system on the problematic data with better logging or with code alterations.

- Although it is not always the case, the in-stream processing system can be designed in such a way that it re-reads individual messages from the source in case of processing errors and local failures, even if the system in general is fault-tolerant.

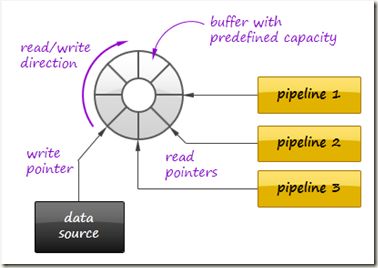

As a result, the input data typically goes from the data source to the in-stream pipeline via a persistent buffer that allows clients to move their reading pointers back and forth.

The Kafka messaging queue is a well-known implementation of such a buffer that also supports scalable distributed deployments and fault-tolerance, and provides high performance.

As a bottom line, the Stream Replay technique imposes the following requirements on the system design:

- The system is able to store the raw input data for a preconfigured period of time.

- The system is able to revoke a part of the produced results, replay the corresponding input data and produce a new version of the results.

- The system should work fast enough to rewind the data back in time, replay them, and then catch up with the constantly arriving data stream.
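These requirements can be illustrated with a toy in-memory model. `ReplayableLog` is only a stand-in for a persistent buffer such as a Kafka partition and `Consumer` for a pipeline reading from it; neither reflects the actual Kafka client API.

```python
class ReplayableLog:
    """Append-only buffer that lets readers fetch events from any offset."""

    def __init__(self):
        self._events = []

    def append(self, event):
        self._events.append(event)
        return len(self._events) - 1          # offset of the stored event

    def read_from(self, offset):
        return self._events[offset:]

class Consumer:
    """Processes events in order and keeps one result per input offset."""

    def __init__(self, log, process):
        self.log, self.process = log, process
        self.offset = 0
        self.results = {}                     # offset -> produced result

    def poll(self):
        for event in self.log.read_from(self.offset):
            self.results[self.offset] = self.process(event)
            self.offset += 1

    def rewind(self, offset, process=None):
        """Revoke results from `offset` onwards and move the read pointer back."""
        for stale in [o for o in self.results if o >= offset]:
            del self.results[stale]
        self.offset = offset
        if process is not None:
            self.process = process            # e.g. a fixed version of the logic

log = ReplayableLog()
for value in [1, 2, 3]:
    log.append(value)
consumer = Consumer(log, process=lambda x: x * 10)   # pretend this logic is buggy
consumer.poll()
consumer.rewind(1, process=lambda x: x * 100)        # redeploy and replay from offset 1
log.append(4)                                        # new data keeps arriving
consumer.poll()                                      # replay, then catch up
```

The sketch keeps every event forever; a real buffer would apply the preconfigured retention period instead.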

Lineage Tracking

In a streaming system, events flow through a chain of processors until the result reaches the final destination (like an external database). Each input event produces a directed graph of descendant events (lineage) that ends by the final results. To guarantee reliable data processing, it is necessary to ensure that the entire graph was processed successfully and to restart processing in case of failures.

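An important problem in stream processing is how to guarantee that every tuple is processed successfully. A tuple can give rise to many other tuples during processing, ultimately forming a DAG, so the problem is fairly complex.

Storm

Storm solves this problem rather elegantly.

The core idea of the process is as follows:

XORing two equal values gives 0, so the tuple ID is recorded when a tuple is emitted and XORed in again when the tuple is acked. As long as every tuple ID appears in a pair, the final result is 0.

Advantages:

Simple logic and elegant design.

Much better space efficiency: tracking a whole DAG is reduced to tracking a single field.

Disadvantage:

Much more network traffic: the number of tuples that have to be sent doubles.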

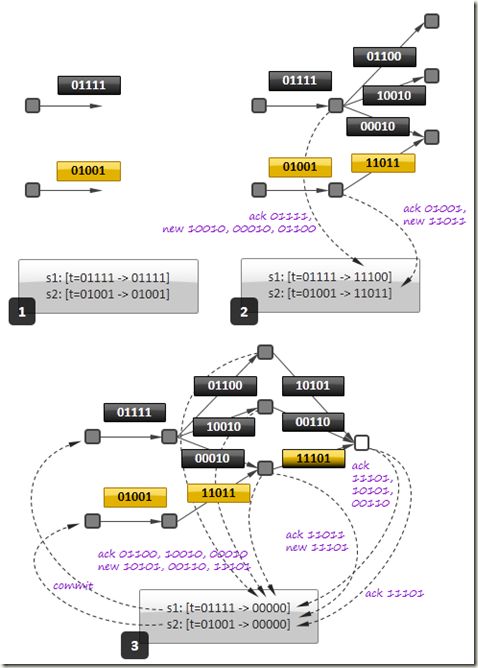

Let us first consider how Twitter’s Storm tracks the messages to guarantee at-least-once delivery semantics.

- All events that are emitted by the sources (first nodes in the data processing graph) are marked with a random ID. For each source, the framework maintains a set of pairs [event ID -> signature], one for each initial event. The signature is initialized with the event ID.

- Downstream nodes can generate zero or more events based on the received initial event. Each event carries its own random ID and the ID of the initial event.

- If the event is successfully received and processed by the next node in the graph, this node updates the signature of the corresponding initial event by XORing the signature with (a) ID of the incoming event and (b) IDs of all events produced based on the incoming event. In the part 2 of diagram below, event 01111 produces events 01100, 10010, and 00010, so the signature for event 01111 becomes 11100 (= 01111 (initial value) xor 01111 xor 01100 xor 10010 xor 00010).

- An event can be produced based on more than one incoming event. In this case, it is attached to several initial events and carries more than one initial ID downstream (yellow-black event in the part 3 of the figure below).

- The event is considered to be successfully processed as soon as its signature turns into zero, i.e. the final node acknowledged that the last event in the graph was processed successfully and no events were emitted downstream. The framework sends a commit message to the source node (see part 3 in the diagram below).

- The framework traverses a table of the initial events periodically looking for old uncommitted events (events with non-zero signature). Such events are considered as failed and the framework asks the source nodes to replay them.

- It is important to note that the order of signature updates is not important due to commutative nature of the XOR operation. In the figure below, acknowledgements depicted in the part 2 can arrive after acknowledgements depicted in the part 3. This enables fully asynchronous processing.

- One can note that the algorithm above is not strictly reliable: the signature could turn into zero accidentally due to an unfortunate combination of IDs. However, 64-bit IDs are sufficient to guarantee a very low probability of error, about 2^(-64), that is acceptable in almost all practical applications. As a result, the table of signatures could have a small memory footprint.

The described approach is elegant due to its decentralized nature: nodes act independently sending acknowledgement messages, and there is no central entity that tracks all lineages explicitly.
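The signature update can be sketched in a few lines. This is a simplified model of the bookkeeping described above, not Storm's actual implementation; `AckTracker` is a hypothetical name, and the 5-bit IDs are taken from the example in the text (real IDs would be random 64-bit values).

```python
class AckTracker:
    """Tracks one XOR signature per initial event."""

    def __init__(self):
        self.signatures = {}                  # initial event ID -> signature

    def register(self, root_id):
        self.signatures[root_id] = root_id    # signature starts as the event ID

    def ack(self, root_id, incoming_id, produced_ids=()):
        """XOR in the processed event and every event it produced."""
        signature = self.signatures[root_id] ^ incoming_id
        for event_id in produced_ids:
            signature ^= event_id
        if signature == 0:
            del self.signatures[root_id]      # whole graph processed: commit
            return True
        self.signatures[root_id] = signature
        return False

tracker = AckTracker()
root = 0b01111
tracker.register(root)
tracker.ack(root, root, [0b01100, 0b10010, 0b00010])
signature_after_first_ack = tracker.signatures[root]     # 0b11100, as in the text
# Leaf events produce nothing; their acks may arrive in any order.
committed = [tracker.ack(root, leaf) for leaf in (0b00010, 0b01100, 0b10010)]
```

Only one integer is stored per initial event, no matter how large its graph of descendant events grows.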

However, it could be difficult to manage transactional processing in this way for flows that maintain sliding windows or other buffers. For example, processing on a sliding window can involve hundreds of thousands of events at each moment of time, so it becomes difficult to manage acknowledgements because either many events stay uncommitted or the computational state has to be persisted frequently.

Spark

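Spark is built on the RDD data structure, which supports only coarse-grained operations and no operations on individual tuples, so both tracking and replay are much simpler.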

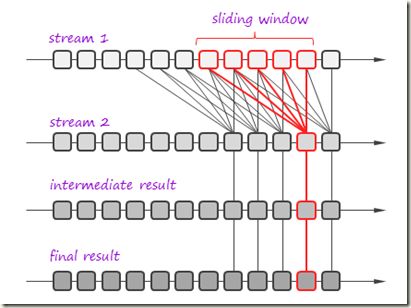

An alternative approach is used in Apache Spark [3]. The idea is to consider the final result as a function of the incoming data.

To simplify lineage tracking, the framework processes events in batches, so the result is a sequence of batches where each batch is a function of the input batches.

Resulting batches can be computed in parallel and if some computation fails, the framework simply reruns it. Consider an example:

In this example, the framework joins two streams on a sliding window and then the result passes through one more processing stage.

The framework considers the incoming streams not as streams, but as sets of batches. Each batch has an ID and the framework can fetch it by the ID at any moment of time.

So, stream processing can be represented as a bunch of transactions where each transaction takes a group of input batches, transforms them using a processing function, and persists a result.

In the figure above, one such transaction is highlighted in red. If the transaction fails, the framework simply reruns it. It is important that transactions can be executed in parallel.

This simple but powerful paradigm enables centralized transaction management and inherently provides exactly-once message processing semantics. It is worth noting that this technique can be used both for batch processing and for stream processing because it treats the input data as a set of batches regardless of their streaming or static nature.

State Checkpointing

In the previous section we considered the lineage tracking algorithm that uses signatures (checksums) to provide at-least-once message delivery semantics.

This technique improves reliability of the system, but it leaves at least two major open questions:

- In many cases, exactly-once processing semantics is required. For example, a pipeline that counts events can produce incorrect results if some messages are delivered twice.

- Nodes in the pipeline can have a computational state that is updated as the messages are processed. This state can be lost in case of node failure, so it is necessary to persist or replicate it.

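Storm's lineage tracking technique described above guarantees at-least-once semantics, but it leaves two problems unsolved:

a. Exactly-once semantics, e.g. for counts

b. Management of transient state, e.g. a cached count value

Storm provides transactional topologies to guarantee exactly-once semantics; see Storm - Transactional-topologies.

The core ideas of a transactional topology:

Tuples are tracked and replayed in batches (for efficiency; the overhead of doing this per tuple is too high).

Each transaction is identified by a transaction ID (monotonically increasing).

A transaction is split into a processing phase and a commit phase. Processing phases of different transactions can run in parallel, but commit phases must complete strictly in transaction ID order.

How does Storm manage state?

It takes a fairly simple approach and relies on external storage: on every transaction commit, the transient state is committed to the external store.

Along with the state, the transaction ID has to be stored as well, to prevent a transaction from being applied more than once.

For other state management techniques, see the State Management part of Apache Samza - Reliable Stream Processing atop Apache Kafka and Hadoop YARN.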

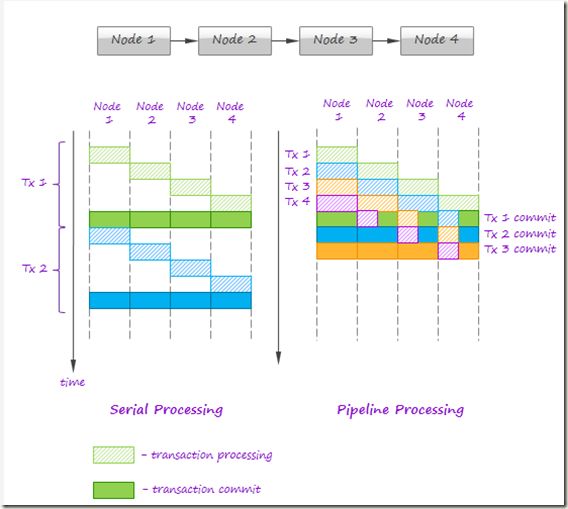

Twitter’s Storm addresses these issues by using the following protocol:

- Events are grouped into batches and each batch is associated with a transaction ID. A transaction ID is a monotonically growing numerical value (e.g. the first batch has ID 1, the second ID 2, and so on). If the pipeline fails to process a batch, this batch is re-emitted with the same transaction ID.

- First, the framework announces to the nodes in the pipeline that a new transaction attempt is started. Second, the framework sends the batch through the pipeline. Finally, the framework announces that the transaction attempt is completed and all nodes can commit their state, e.g. update it in the external database.

- The framework guarantees that commit phases are globally ordered across all transactions, i.e. transaction 2 can never be committed before transaction 1. This guarantee enables processing nodes to use the following logic of persistent state updates:

  - The latest transaction ID is persisted along with the state.

  - If the framework requests to commit the current transaction with an ID that differs from the ID value persisted in the database, the state can be updated, e.g. a counter in the database can be incremented. Assuming a strong ordering of transactions, such an update will happen exactly once for each batch.

  - If the current transaction ID equals the value persisted in the storage, the node skips the commit because this is a batch replay. The node must have processed the batch earlier and updated the state accordingly, but the transaction failed due to an error somewhere else in the pipeline.

- Strong order of commits is important to achieve exactly-once processing semantics. However, strictly sequential processing of transactions is not feasible because the first nodes in the pipeline will often be idle waiting until processing on the downstream nodes is completed. This issue can be alleviated by allowing parallel processing of transactions but serializing the commit steps only, as shown in the figure below:

This technique allows one to achieve exactly-once processing semantics assuming that data sources are fault-tolerant and can be replayed.
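The commit logic of a single node can be sketched as follows. `CounterStore` is a hypothetical in-memory stand-in for a database row that holds the state together with the last transaction ID; the sketch assumes the framework delivers commits in strict transaction order, as described above.

```python
class CounterStore:
    """Persistent counter that remembers the last committed transaction ID."""

    def __init__(self):
        self.count = 0
        self.last_txid = None

    def commit(self, txid, batch_count):
        if txid == self.last_txid:
            return False              # replayed batch, state already updated
        # In a real store these two writes would have to be atomic.
        self.count += batch_count
        self.last_txid = txid
        return True

store = CounterStore()
applied = [
    store.commit(1, 5),
    store.commit(2, 3),
    store.commit(2, 3),   # batch 2 re-emitted after a failure elsewhere in the pipeline
    store.commit(3, 4),
]
```

Comparing against only the latest ID is enough precisely because commits are globally ordered: a replayed batch can only ever be the most recent one the node has committed.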

However, persistent state updates can cause serious performance degradation even if large batches are used. For this reason, the intermediate computational state should be minimized or avoided whenever possible.

As a footnote, it is worth mentioning that state writing can be implemented in different ways. The most straightforward approach is to dump in-memory state to the persistent store as part of the transaction commit process. This does not work well for large states (sliding windows and so on). An alternative is to write a kind of transaction log, i.e. a sequence of operations that transform the old state into the new one (for a sliding window it can be a set of added and evicted events). This approach complicates crash recovery because the state has to be reconstructed from the log, but can provide performance benefits in a variety of cases.

Additive State and Sketches

Additivity of intermediate and final computational results is an important property that drastically simplifies design, implementation, maintenance, and recovery of in-stream data processing systems. Additivity means that the computational result for a larger time range or a larger data partition can be calculated as a combination of results for smaller time ranges or smaller partitions.

For example, a daily number of page views can be calculated as a sum of hourly numbers of page views. Additive state allows one to split processing of a stream into processing of batches that can be computed and re-computed independently and, as we discussed in the previous sections, this helps to simplify lineage tracking and reduce complexity of state maintenance.

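What is additivity? Put simply, it means the data lends itself to divide and conquer: partial results can be computed independently and then combined, as with counts.

For example, to compute page views for a month, one can count the page views of each day independently and then add them up.

If the requirement changes to page views for a week, no problem; for half a year, still fine...

Such data is very convenient for stream processing over time windows.

Of course, not all data is additive.

Some data becomes additive once a little auxiliary information is recorded; for an average, for example, the number of items has to be recorded.

Some data cannot be made additive at all, such as unique visitor counts.

For such data, sketching techniques can be used to turn it into additive data. For example, Linear Counting can record the UV (unique visitors) of each hour. Linear Counting structures can be merged, so for an arbitrary time range it is enough to OR together the hourly Linear Counting structures and then estimate the overall UV from the merged one, which solves the additivity problem. See the post on probabilistic data structures in Big Data processing.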

It is not always trivial to achieve additivity:

- In many cases, additivity is indeed trivial. For example, simple counters are additive.

- In some cases, it is possible to achieve additivity by storing a small amount of additional information. For example, consider a system that calculates average purchase value in the internet shop for each hour. Daily average cannot be obtained from 24 hourly average values. However, the system can easily store a number of transactions along with each hourly average and it is enough to calculate the daily average value.

- In many cases, it is very difficult or impossible to achieve additivity. For example, consider a system that counts unique visitors on some internet site. If 100 unique users visited the site yesterday and 100 unique users visited the site today, the total number of unique users for two days can be anywhere from 100 to 200, depending on how many users visited the site both yesterday and today. One has to maintain lists of user IDs to achieve additivity through intersection/union of the ID lists. Size and processing complexity for these lists can be comparable to the size and processing complexity of the raw data.
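The average example from the list above can be made concrete with a small sketch. It stores a running sum and a count per hour, which carries the same information as an hourly average plus a transaction count; `AvgState` is an illustrative name.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AvgState:
    """Additive representation of an average: (sum, count) instead of the mean."""
    total: float = 0.0
    count: int = 0

    def add(self, value):
        return AvgState(self.total + value, self.count + 1)

    def merge(self, other):
        return AvgState(self.total + other.total, self.count + other.count)

    @property
    def mean(self):
        return self.total / self.count if self.count else float("nan")

hour1 = AvgState().add(10.0).add(20.0)      # two purchases, hourly mean 15.0
hour2 = AvgState().add(60.0)                # one purchase, hourly mean 60.0
day = hour1.merge(hour2)
naive = (hour1.mean + hour2.mean) / 2       # averaging the averages is wrong
```

Here `day.mean` is 30.0, while averaging the two hourly means gives 37.5, which shows why the count has to be stored alongside each hourly value.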

Sketches are a very efficient way to transform non-additive values into additive ones. In the previous example, lists of IDs can be replaced by compact additive statistical counters. These counters provide approximations instead of precise results, but this is acceptable for many practical applications. Sketches are very popular in certain areas like internet advertising and can be considered as an independent pattern of in-stream processing. A thorough overview of the sketching techniques can be found in [5].
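As one illustration of such a counter, here is a rough sketch of a Linear Counting bitmap whose merge operation is a bitwise OR. The bitmap size, the use of SHA-1 as the hash function, and the class name are choices made for this example only.

```python
import hashlib
import math

class LinearCounter:
    """Approximate distinct counter based on a fixed-size bitmap."""

    def __init__(self, m=1 << 14, bits=0):
        self.m = m              # number of bits in the bitmap
        self.bits = bits        # bitmap stored as a Python integer

    def add(self, item):
        digest = hashlib.sha1(str(item).encode()).digest()
        index = int.from_bytes(digest[:8], "big") % self.m
        self.bits |= 1 << index

    def merge(self, other):
        """Union of two counters: OR the bitmaps (sizes must match)."""
        if self.m != other.m:
            raise ValueError("bitmap sizes differ")
        return LinearCounter(self.m, self.bits | other.bits)

    def estimate(self):
        zeros = self.m - bin(self.bits).count("1")
        if zeros == 0:
            return float("inf")             # bitmap saturated, m was too small
        return -self.m * math.log(zeros / self.m)

yesterday, today = LinearCounter(), LinearCounter()
for user in range(0, 100):
    yesterday.add(f"user-{user}")
for user in range(50, 150):
    today.add(f"user-{user}")
two_days = yesterday.merge(today).estimate()   # true value is 150
```

The merged bitmap is identical to the one obtained by feeding both days into a single counter, which is what makes the structure additive; the price is that `estimate` returns an approximation.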

Logical Time Tracking

It is very common for in-stream computations to depend on time: aggregations and joins are often performed on sliding time windows; processing logic often depends on a time interval between events and so on. Obviously, the in-stream processing system should rely on the application’s view of time rather than on the CPU wall clock.

However, proper time tracking is not trivial because data streams and particular events can be replayed in case of failures. It is often a good idea to have a notion of global logical time that can be implemented as follows:

- All events should be marked with a timestamp generated by the original application.

- Each processor in a pipeline tracks the maximal timestamp it has seen in a stream and updates a global persistent clock by this timestamp if the global clock is behind. All other processors synchronize their time with the global clock.

- Global clock can be reset in case of data replay.
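A toy model of this scheme is sketched below. `GlobalClock` stands in for a persistent shared clock and `Processor` for a pipeline node; both are assumptions made for illustration, and a real system would need durable storage and coordination behind them.

```python
class GlobalClock:
    """Shared logical clock driven by event timestamps, not by wall-clock time."""

    def __init__(self):
        self.time = 0

    def advance(self, timestamp):
        if timestamp > self.time:         # the clock only moves forward
            self.time = timestamp
        return self.time

    def reset(self, timestamp):
        self.time = timestamp             # used when the stream is replayed

class Processor:
    def __init__(self, clock):
        self.clock = clock
        self.max_seen = 0                 # maximal timestamp seen by this node

    def on_event(self, event_timestamp):
        self.max_seen = max(self.max_seen, event_timestamp)
        return self.clock.advance(self.max_seen)   # synchronized logical "now"

    def reset(self, timestamp):
        self.max_seen = timestamp

clock = GlobalClock()
p1, p2 = Processor(clock), Processor(clock)
p1.on_event(100)
p2.on_event(90)                           # late event: logical time stays at 100
time_before_replay = clock.time
clock.reset(50)                           # replay the stream from timestamp 50
for processor in (p1, p2):
    processor.reset(50)
time_after_replay = p2.on_event(60)
```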

Aggregation in a Persistent Store

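How can Cassandra be used for a streaming join?

Store the data of all streams in Cassandra using the join key as the row key; Cassandra sorts by row key.

Then start a separate process that periodically reads the data sharing the same join key and performs the join.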

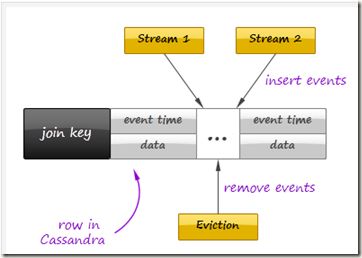

We have already discussed that a persistent store can be used for state checkpointing. However, it is not the only way to employ an external store for in-stream processing. Let us consider an example that employs Cassandra to join multiple data streams over a time window. Instead of maintaining in-memory event buffers, one can simply save all incoming events from all data streams to Cassandra using a join key as the row key, as shown in the figure below:

On the other side, a second process traverses the records periodically, assembles and emits joined events, and evicts the events that fell out of the time window. Cassandra can even facilitate this activity by sorting events according to their timestamps.

It is important to understand that such techniques can defeat the whole purpose of in-stream data processing if implemented incorrectly – writing individual events to the data store can introduce a serious performance bottleneck even for fast stores like Cassandra or Redis. On the other hand, this approach provides perfect persistence of the computational state and different performance optimizations – say, batch writes – can help to achieve acceptable performance in many use cases.

Aggregation on a Sliding Window

This section describes incremental algorithms over sliding windows.

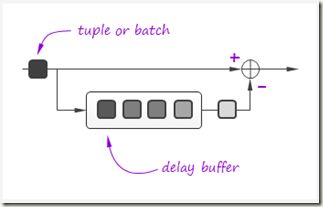

In-stream data processing frequently deals with queries like “What is the sum of the values in the stream over last 10 minutes?” i.e. with continuous queries on a sliding time window.

A straightforward approach to processing of such queries is to compute the aggregation function like sum for each instance of the time window independently. It is clear that this approach is not optimal because of the high similarity between two sequential instances of the time window. If the window at the time T contains samples {s(0), s(1), s(2), …, s(T-1), s(T)}, then the window at the time T+1 contains samples {s(1), s(2), s(3), …, s(T), s(T+1)}. This observation suggests that incremental processing might be used.

Incremental computations over sliding windows are a group of techniques that are widely used in digital signal processing, in both software and hardware. A typical example is the computation of the sum function. If the sum over the current time window is known, then the sum over the next time window can be computed by adding a new sample and subtracting the oldest sample in the window:
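In other words, sum(T+1) = sum(T) + s(T+1) - s(0) for the windows written out earlier. A minimal sketch of this update, assuming a window of a fixed number of samples rather than a time interval (`SlidingSum` is an illustrative name):

```python
from collections import deque

class SlidingSum:
    """Maintains the sum of the last `size` samples in O(1) per new sample."""

    def __init__(self, size):
        self.size = size
        self.window = deque()
        self.total = 0

    def push(self, sample):
        self.window.append(sample)
        self.total += sample                       # add the new sample
        if len(self.window) > self.size:
            self.total -= self.window.popleft()    # subtract the oldest sample
        return self.total

sliding = SlidingSum(size=3)
sums = [sliding.push(sample) for sample in [1, 2, 3, 4, 5]]
```

Each update touches two samples regardless of the window size, whereas recomputing the sum per window would touch all of them.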

Similar techniques exist not only for simple aggregations like sums or products, but also for more complex transformations. For example, the SDFT (Sliding Discrete Fourier Transform) algorithm [4] is a computationally efficient alternative to per-window calculation of the FFT (Fast Fourier Transform) algorithm.

Query Processing Pipeline: Storm, Cassandra, Kafka

Now let us return to the practical problem that was stated in the beginning of this article.

We have designed and implemented our in-stream data processing system on top of Storm, Kafka, and Cassandra adopting the techniques described earlier in this article. Here we provide just a very brief overview of the solution – a detailed description of all implementation pitfalls and tricks is too large and probably requires a separate article.

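Recall the abstract architecture diagram from the beginning of the article; after all the analysis above, this is the concrete in-stream processing architecture built with Storm, Kafka, and Cassandra.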

Kafka 0.8 serves as a partitioned fault-tolerant event buffer to enable stream replay and improve system extensibility by easy addition of new event producers and consumers. Kafka’s ability to rewind read pointers also enables random access to the incoming batches and, consequently, Spark-style lineage tracking. It is also possible to point the system input to HDFS to process the historical data.

Cassandra is employed for state checkpointing and in-store aggregation, as described earlier. In many use cases, it also stores the final results.

Twitter’s Storm is the backbone of the system. All active query processing is performed in Storm’s topologies, which interact with Kafka and Cassandra. Some data flows are simple and straightforward: the data arrives in Kafka, and Storm reads it, processes it, and persists the results to Cassandra or another destination. Other flows are more sophisticated: one Storm topology can pass the data to another topology via Kafka or Cassandra. Two examples of such flows are shown in the figure above (red and blue curved arrows).
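The interplay between a replayable buffer and checkpointed state can be illustrated with a toy model. The sketch below does not use the Kafka, Storm, or Cassandra APIs. `ReplayableLog`, `CheckpointStore`, and `run_counter` are hypothetical in-memory stand-ins, and the crash is simulated with an exception. The assumption being illustrated is that the state and the input offset are committed together, so a restarted worker can rewind to the last committed offset without losing or double-counting events.

```python
class ReplayableLog:
    """Toy stand-in for a replayable event buffer: append-only, read by offset."""

    def __init__(self):
        self._events = []

    def append(self, event):
        self._events.append(event)

    def read(self, offset, max_events):
        return self._events[offset: offset + max_events]


class CheckpointStore:
    """Toy stand-in for a state store: keeps counters and input offset together."""

    def __init__(self):
        self.offset = 0
        self.counts = {}

    def commit(self, offset, counts):
        self.offset = offset
        self.counts = dict(counts)


def run_counter(log, store, batch_size, fail_after_batches=None):
    """Count events per key, committing state and offset after each batch."""
    offset = store.offset          # resume from the last committed position
    counts = dict(store.counts)    # resume from the last committed state
    batches = 0
    while True:
        batch = log.read(offset, batch_size)
        if not batch:
            return store.counts
        for key in batch:
            counts[key] = counts.get(key, 0) + 1
        batches += 1
        if fail_after_batches is not None and batches == fail_after_batches:
            # Simulated crash: the batch was processed but never committed.
            raise RuntimeError("simulated worker crash before checkpoint")
        offset += len(batch)
        store.commit(offset, counts)


def demo():
    log = ReplayableLog()
    for key in ["a", "b", "a", "c", "a", "b"]:
        log.append(key)
    store = CheckpointStore()
    try:
        run_counter(log, store, batch_size=2, fail_after_batches=2)
    except RuntimeError:
        pass  # a new worker takes over and replays from the committed offset
    return run_counter(log, store, batch_size=2)
```

In `demo()`, the second batch is processed twice: once before the simulated crash and once after the replay. The final counts still reflect each event exactly once, because the uncommitted in-memory counters are discarded together with the failed worker.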

Towards Unified Big Data Processing

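Summary:

First, many systems now exist for batch processing, real-time query processing, and in-stream processing (note that real-time and streaming are not the same concept).

The Lambda Architecture can bring all these systems together to build a full-stack analytics system...

Second, this section examines techniques shared by these three kinds of processing systems, such as shuffling and pipelining.

The differences are that in-stream processing places more emphasis on strict data delivery guarantees and on management of intermediate results, and that it depends more heavily on pipelining.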

It is great that existing technologies like Hive, Storm, and Impala enable us to crunch Big Data using batch processing for complex analytics and machine learning, real-time query processing for online analytics, and in-stream processing for continuous querying.

Moreover, techniques like Lambda Architecture [6, 7] were developed and adopted to combine these solutions efficiently. This brings us to the question of how all these technologies and approaches could converge to a solid solution in the future.

In this section, we discuss the striking similarity between distributed relational query processing, batch processing, and in-stream query processing to figure out the technologies that could cover all these use cases and, consequently, have the highest potential in this area.

The key observation is that relational query processing, MapReduce, and in-stream processing could be implemented using exactly the same concepts and techniques, such as shuffling and pipelining.

At the same time:

- In-stream processing could require strict data delivery guarantees and persistence of the intermediate state. These properties are not crucial for batch processing where computations can be easily restarted.

- In-stream processing is inseparable from pipelining. For batch processing, pipelining is not so crucial and even inapplicable in certain cases. Systems like Apache Hive are based on staged MapReduce with materialization of the intermediate state and do not take full advantage of pipelining.
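The contrast between staged materialization and pipelining in the points above can be sketched with plain Python generators. This is a single-process illustration only. The stage functions and the `user,amount` record format are made up for the example, and in-memory lists stand in for the on-disk materialization that a staged MapReduce job would perform.

```python
def parse(lines):
    """Stage 1: turn 'user,amount' text lines into (user, amount) records."""
    for line in lines:
        user, amount = line.split(",")
        yield user, int(amount)


def only_large(records, threshold):
    """Stage 2: keep records whose amount reaches the threshold."""
    for user, amount in records:
        if amount >= threshold:
            yield user, amount


def total_by_user(records):
    """Stage 3: aggregate amounts per user."""
    totals = {}
    for user, amount in records:
        totals[user] = totals.get(user, 0) + amount
    return totals


def run_staged(lines, threshold):
    """Staged style: every stage materializes its full output before the next starts."""
    parsed = list(parse(lines))
    filtered = list(only_large(parsed, threshold))
    return total_by_user(filtered)


def run_pipelined(lines, threshold):
    """Pipelined style: each record flows through all stages, no intermediate lists."""
    return total_by_user(only_large(parse(lines), threshold))


lines = ["ann,5", "bob,20", "ann,30", "bob,1"]
staged = run_staged(lines, threshold=10)
pipelined = run_pipelined(lines, threshold=10)
```

Both functions reuse the same three stages and return the same totals. The staged version can restart from any materialized intermediate result, while the pipelined version keeps nothing between stages and therefore needs another mechanism, such as replay or checkpointing, to recover from failures.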

The two points above imply that tunable persistence (in-memory message passing versus on-disk materialization) and reliability are the distinctive features of the imaginary query engine that provides a set of processing primitives and interfaces to the high-level frameworks.

Among the emerging technologies, the following two are especially notable in the context of this discussion:

- Apache Tez [8], a part of the Stinger Initiative [9]. Apache Tez is designed to succeed the MapReduce framework by introducing a set of fine-grained query processing primitives. The goal is to enable frameworks like Apache Pig and Apache Hive to decompose their queries and scripts into efficient query processing pipelines instead of sequences of MapReduce jobs, which are generally slow because intermediate results are materialized.

- Apache Spark [10]. This project is probably the most advanced and promising technology for unified Big Data processing that already includes a batch processing framework, SQL query engine, and a stream processing framework.

References

[1] A. Wilschut and P. Apers, “Dataflow Query Execution in a Parallel Main-Memory Environment”

[2] T. Urhan and M. Franklin, “XJoin: A Reactively-Scheduled Pipelined Join Operator”

[3] M. Zaharia, T. Das, H. Li, S. Shenker, and I. Stoica, “Discretized Streams: An Efficient and Fault-Tolerant Model for Stream Processing on Large Clusters”

[4] E. Jacobsen and R. Lyons, “The Sliding DFT”

[5] A. Elmagarmid, Data Streams Models and Algorithms

[6] N. Marz, “Big Data Lambda Architecture”

[7] J. Kinley, “The Lambda architecture: principles for architecting realtime Big Data systems”

[8] http://hortonworks.com/hadoop/tez/

[9] http://hortonworks.com/stinger/

[10] http://spark-project.org/