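Simple Linear Regression (Gradient Descent)

Hands-on: Simple Linear Regression with Gradient Descent

- Implementing simple linear regression by hand

- Implementing simple linear regression with the scikit-learn library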

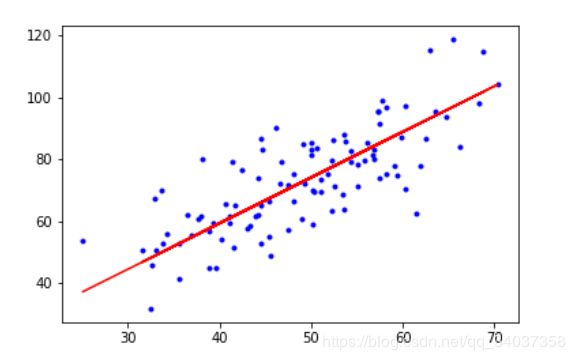

- Output plot

Implementing simple linear regression by hand
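
The hand-written code in this section minimizes half the mean squared error of the line y = kx + b. As a sketch of the underlying math, with m the number of samples and lr the learning rate (the same names the code uses), the cost, its two partial derivatives, and the update rule can be written as:

```latex
J(k, b) = \frac{1}{2m} \sum_{i=1}^{m} \bigl( y_i - (k x_i + b) \bigr)^2

\frac{\partial J}{\partial b} = \frac{1}{m} \sum_{i=1}^{m} \bigl( (k x_i + b) - y_i \bigr),
\qquad
\frac{\partial J}{\partial k} = \frac{1}{m} \sum_{i=1}^{m} x_i \bigl( (k x_i + b) - y_i \bigr)

b \leftarrow b - \mathrm{lr} \cdot \frac{\partial J}{\partial b},
\qquad
k \leftarrow k - \mathrm{lr} \cdot \frac{\partial J}{\partial k}
```

The first line corresponds to `compute_error`, and the remaining two correspond to the inner loop and the update step of `gradient_descent_runner`.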


import numpy as np
import matplotlib.pyplot as plt

# Load the data: column 0 is x, column 1 is y
data = np.genfromtxt("../data.csv", delimiter=",")
x_data = data[:, 0]
y_data = data[:, 1]

# Scatter plot of the raw data
plt.scatter(x_data, y_data)
plt.show()

# Learning rate
lr = 0.0001
# Intercept
b = 0
# Slope
k = 0
# Maximum number of iterations
epochs = 50

# Least-squares cost function: half the mean squared error
def compute_error(b, k, x_data, y_data):
    totalError = 0
    for i in range(0, len(x_data)):
        totalError += (y_data[i] - (k * x_data[i] + b)) ** 2
    return totalError / float(len(x_data)) / 2.0

def gradient_descent_runner(x_data, y_data, b, k, lr, epochs):
    # Total number of samples
    m = float(len(x_data))
    # Repeat for `epochs` iterations
    for i in range(epochs):
        b_grad = 0
        k_grad = 0
        # Accumulate the gradient over all samples, averaged by 1/m
        for j in range(0, len(x_data)):
            b_grad += (1/m) * (((k * x_data[j]) + b) - y_data[j])
            k_grad += (1/m) * x_data[j] * (((k * x_data[j]) + b) - y_data[j])
        # Update b and k
        b = b - (lr * b_grad)
        k = k - (lr * k_grad)
        # Plot the current fit every 5 iterations
        # if i % 5 == 0:
        #     print("epochs:", i)
        #     plt.plot(x_data, y_data, 'b.')
        #     plt.plot(x_data, k*x_data + b, 'r')
        #     plt.show()
    return b, k

print("Starting b = {0}, k = {1}, error = {2}".format(b, k, compute_error(b, k, x_data, y_data)))
print("Running...")
b, k = gradient_descent_runner(x_data, y_data, b, k, lr, epochs)
print(k, b)
print("After {0} iterations b = {1}, k = {2}, error = {3}".format(epochs, b, k, compute_error(b, k, x_data, y_data)))

# Plot the result
# 'b.' means blue ('b') point markers ('.')
plt.plot(x_data, y_data, 'b.')
plt.plot(x_data, k*x_data + b, 'r')
plt.show()
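
The inner Python loop over samples can also be expressed with NumPy array operations. The following is a minimal sketch of that idea, not the article's own code: the function name `gradient_descent_vectorized`, the synthetic arrays `x_demo` and `y_demo`, and the chosen `lr` and `epochs` values are all assumptions made for illustration. It compares the result against `np.polyfit`, which computes the closed-form least-squares line.

```python
import numpy as np

def gradient_descent_vectorized(x, y, b=0.0, k=0.0, lr=0.0001, epochs=50):
    """Batch gradient descent for y ~ k*x + b using whole-array operations."""
    for _ in range(epochs):
        residual = (k * x + b) - y       # prediction error for every sample
        b_grad = residual.mean()         # average gradient w.r.t. b
        k_grad = (x * residual).mean()   # average gradient w.r.t. k
        b -= lr * b_grad
        k -= lr * k_grad
    return b, k

# Synthetic data (an assumption for illustration, not the article's data.csv)
rng = np.random.default_rng(0)
x_demo = rng.uniform(0.0, 10.0, size=200)
y_demo = 2.0 * x_demo + 1.0 + rng.normal(0.0, 0.5, size=200)

# Larger lr and more epochs than the article's settings, chosen for this data
b_fit, k_fit = gradient_descent_vectorized(x_demo, y_demo, lr=0.01, epochs=5000)

# Closed-form least-squares fit for comparison (returns slope first)
k_ref, b_ref = np.polyfit(x_demo, y_demo, 1)

print("gradient descent:", k_fit, b_fit)
print("np.polyfit:      ", k_ref, b_ref)
```

Each iteration computes the same averaged gradients as the explicit loop, so both versions should follow the same trajectory for identical inputs. The vectorized form is usually much faster on large arrays.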

Implementing simple linear regression with the scikit-learn library


from sklearn.linear_model import LinearRegression
import numpy as np
import matplotlib.pyplot as plt

# Load the data: column 0 is x, column 1 is y
data = np.genfromtxt("../data.csv", delimiter=",")
x_data = data[:, 0]
y_data = data[:, 1]

# Scatter plot of the raw data
plt.scatter(x_data, y_data)
plt.show()

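print(x_data.shape)
# The output is (100,), i.e. a 1-D array. scikit-learn expects the feature
# matrix X to be 2-D (n_samples, n_features), so the two lines below
# turn the 1-D arrays into 2-D column vectors.
x_data = data[:, 0, np.newaxis]
y_data = data[:, 1, np.newaxis]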

# Create and fit the model
model = LinearRegression()
model.fit(x_data, y_data)

# Plot the result
plt.plot(x_data, y_data, 'b.')
plt.plot(x_data, model.predict(x_data), 'r')
plt.show()

With scikit-learn, simple linear regression takes only a few lines: create the model, call fit, and call predict.
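
To compare a scikit-learn fit with the slope `k` and intercept `b` from gradient descent, the fitted parameters can be read from the model's `coef_` and `intercept_` attributes. The sketch below uses synthetic data (`x_demo`, `y_demo`, and the true slope 3 and intercept -2 are assumptions for illustration, not the article's data.csv) and keeps `y` one-dimensional, which `LinearRegression` also accepts.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

# Synthetic data (an assumption for illustration): y = 3x - 2 plus small noise
rng = np.random.default_rng(0)
x_demo = rng.uniform(0.0, 10.0, size=100)
y_demo = 3.0 * x_demo - 2.0 + rng.normal(0.0, 0.1, size=100)

model = LinearRegression()
# X must be 2-D (n_samples, n_features); y may stay 1-D
model.fit(x_demo[:, np.newaxis], y_demo)

k_sk = model.coef_[0]      # fitted slope
b_sk = model.intercept_    # fitted intercept
print("slope:", k_sk, "intercept:", b_sk)
```

When `y` is passed as a 2-D column, as in the article's code, `coef_` and `intercept_` come back with an extra dimension, so the indexing would need to change accordingly.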