GStreamer Basic tutorial 8: Short-cutting the pipeline (how to flexibly read data out of a pipeline or write data into it)

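Pipelines built with GStreamer do not need to be completely closed. Data can be injected into a pipeline and extracted from it at any point, in a variety of ways. This tutorial shows:

- How to inject external data into a general GStreamer pipeline.

- How to extract data from a general GStreamer pipeline.

- How to access and manipulate this data.

Playback tutorial 3: Short-cutting the pipeline explains how to achieve the same goals in a playbin-based pipeline.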

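1. Introduction

Applications can interact with the data flowing through a GStreamer pipeline in several ways. This tutorial describes the easiest one, because it uses elements that were created for this sole purpose.

The element used to inject application data into a GStreamer pipeline is appsrc, and its counterpart, used to extract GStreamer data back to the application, is appsink. To avoid confusing the names, think of them from GStreamer's point of view: appsrc is just a regular source that provides data magically fallen from the sky (provided by the application, actually). appsink is a regular sink, where the data flowing through a GStreamer pipeline goes to die (it is recovered by the application, actually).

appsrc and appsink are so versatile that they offer their own API (see their documentation), which can be accessed by linking against the gstreamer-app library. In this tutorial, however, we use a simpler approach and control them through signals.

appsrc can work in several modes. In pull mode, it requests data from the application every time it needs some. In push mode, the application pushes data at its own pace. Furthermore, in push mode the application can either choose to be blocked in the push function once enough data has been provided, or listen to the enough-data and need-data signals to control the flow. This example implements the latter approach. Information about the other methods can be found in the appsrc documentation.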

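(1) Buffers

Data travels through a GStreamer pipeline in chunks called buffers. Since this example produces and consumes data, we need to know about GstBuffers.

Source pads produce buffers, which are consumed by sink pads; GStreamer takes these buffers and passes them from element to element.

A buffer simply represents a unit of data. Do not assume that all buffers have the same size or represent the same amount of time. Neither should you assume that if a single buffer enters an element, a single buffer will come out. Elements are free to do with the received buffers as they please. The actual memory is abstracted away using GstMemory objects, and a GstBuffer can contain multiple GstMemory objects.

Every buffer has a timestamp and a duration attached, which describe the moment at which the content of the buffer should be decoded, rendered or displayed. Timestamping is a very complex and delicate subject, but this simplified view should suffice for now.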

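For example, a filesrc (a GStreamer element that reads files) produces buffers with the "ANY" caps and no timestamp information. After demuxing (see Basic tutorial 3: Dynamic pipelines), buffers can have specific caps, for example "video/x-h264". After decoding, each buffer contains a single video frame with raw caps (for example, "video/x-raw") and a very precise timestamp indicating when that frame should be displayed.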

(2) This tutorial

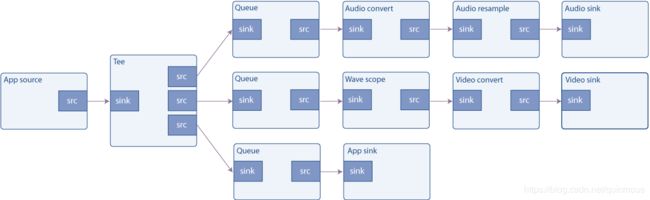

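This tutorial expands Basic tutorial 7: Multithreading and Pad Availability in two ways. First, the audiotestsrc is replaced by an appsrc that generates the audio data. Second, a new branch is added to the tee, so the data going into the audio sink and the wave display is also replicated into an appsink. The appsink uploads the information back into the application, which then just notifies the user that data has been received, although it could obviously perform more complex tasks.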

2. A crude waveform generator

Copy this code into a text file named basic-tutorial-8.c (or find it in your GStreamer installation).

Compile it with the following command:

gcc basic-tutorial-8.c -o basic-tutorial-8 `pkg-config --cflags --libs gstreamer-1.0 gstreamer-audio-1.0`

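3. Walkthrough

The code that creates the pipeline (lines 131 to 205) is an enlarged version of Basic tutorial 7: Multithreading and Pad Availability. It instantiates all the elements, links the elements that have Always Pads, and manually links the Request Pads of the tee element.

Regarding the configuration of the appsrc and appsink elements: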

/* Configure appsrc */

gst_audio_info_set_format (&info, GST_AUDIO_FORMAT_S16, SAMPLE_RATE, 1, NULL);

audio_caps = gst_audio_info_to_caps (&info);

g_object_set (data.app_source, "caps", audio_caps, NULL);

g_signal_connect (data.app_source, "need-data", G_CALLBACK (start_feed), &data);

g_signal_connect (data.app_source, "enough-data", G_CALLBACK (stop_feed), &data);

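The first property that needs to be set on the appsrc is caps. It specifies the kind of data the element is going to produce, so GStreamer can check whether linking with downstream elements is possible (that is, whether the downstream elements understand this kind of data). This property must be a GstCaps object, which is easily built from a string with gst_caps_from_string(). In the code above, the caps are instead derived from a GstAudioInfo with gst_audio_info_to_caps().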
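As an illustration of the string route mentioned above, the sketch below only formats a caps description of the kind that could be handed to gst_caps_from_string(). It does not call GStreamer. The helper name build_audio_caps_string is hypothetical, the 44100 Hz rate in the usage note is an assumed example value, and S16LE assumes a little-endian machine (GST_AUDIO_FORMAT_S16 is native-endian).

```c
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper (not part of GStreamer): writes a raw-audio caps
 * description into `out`. Assumes signed 16-bit little-endian,
 * interleaved samples. Returns the snprintf() result, i.e. the number
 * of characters the full string needs, excluding the terminator. */
static int build_audio_caps_string(char *out, size_t out_size,
                                   int rate, int channels) {
  return snprintf(out, out_size,
                  "audio/x-raw,format=S16LE,layout=interleaved,"
                  "rate=%d,channels=%d",
                  rate, channels);
}
```

Calling build_audio_caps_string (buf, sizeof buf, 44100, 1) fills buf with a mono description; a return value greater than or equal to the buffer size means the string was truncated.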

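We then connect to the need-data and enough-data signals. These are fired by appsrc when its internal queue of data is running low or almost full, respectively. We use these signals to start and stop (respectively) our signal generation process.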

/* Configure appsink */

g_object_set (data.app_sink, "emit-signals", TRUE, "caps", audio_caps, NULL);

g_signal_connect (data.app_sink, "new-sample", G_CALLBACK (new_sample), &data);

gst_caps_unref (audio_caps);

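Regarding the appsink configuration, we connect to the new-sample signal, which is emitted every time the sink receives a buffer. Signal emission also needs to be enabled through the emit-signals property, because it is disabled by default.

Starting the pipeline, waiting for messages and the final cleanup are done as usual. Let's review the callbacks we have just registered: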

/* This signal callback triggers when appsrc needs data. Here, we add an idle handler

* to the mainloop to start pushing data into the appsrc */

static void start_feed (GstElement *source, guint size, CustomData *data) {

if (data->sourceid == 0) {

g_print ("Start feeding\n");

data->sourceid = g_idle_add ((GSourceFunc) push_data, data);

}

}

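This function is called when the internal queue of appsrc is about to starve (run out of data). The only thing we do here is register a GLib idle function with g_idle_add() that feeds data to appsrc until it is full again. A GLib idle function is a function that GLib calls from its main loop whenever it is "idle", that is, when it has no higher-priority tasks to perform. Obviously, this requires a GLib GMainLoop to be instantiated and running.

This is only one of the multiple approaches that appsrc allows. In particular, buffers do not need to be fed into appsrc from the main thread using GLib, and you do not need to use the need-data and enough-data signals to synchronize with appsrc (although this is allegedly the most convenient way).

We take note of the sourceid that g_idle_add() returns, so we can disable it later.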

/* This callback triggers when appsrc has enough data and we can stop sending.

* We remove the idle handler from the mainloop */

static void stop_feed (GstElement *source, CustomData *data) {

if (data->sourceid != 0) {

g_print ("Stop feeding\n");

g_source_remove (data->sourceid);

data->sourceid = 0;

}

}

This function is called when the internal queue of appsrc is full enough so we stop pushing data. Here we simply remove the idle function by using g_source_remove() (The idle function is implemented as a GSource).
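The start/stop handshake implemented by start_feed and stop_feed can be pictured with a toy model that does not use GStreamer at all. This is only a sketch of the idea, not how appsrc is implemented: the ToyQueue type, its max_bytes limit and both functions are hypothetical, and the feeding flag plays the role of "sourceid != 0" in the tutorial.

```c
#include <stdbool.h>
#include <stddef.h>

/* Toy stand-in for the internal queue of appsrc (hypothetical type). */
typedef struct {
  size_t level;     /* bytes currently queued */
  size_t max_bytes; /* assumed limit at which "enough-data" would fire */
  bool feeding;     /* true while the idle handler would be installed */
} ToyQueue;

/* Producer side: push one chunk, but only while feeding is enabled.
 * Reaching the limit models the "enough-data" signal. */
static void toy_produce(ToyQueue *q, size_t chunk) {
  if (!q->feeding)
    return;
  q->level += chunk;
  if (q->level >= q->max_bytes)
    q->feeding = false; /* what stop_feed does by removing the idle source */
}

/* Consumer side: downstream drains n bytes. An empty queue models the
 * "need-data" signal. */
static void toy_consume(ToyQueue *q, size_t n) {
  q->level = (n > q->level) ? 0 : q->level - n;
  if (q->level == 0)
    q->feeding = true; /* what start_feed does by adding the idle source */
}
```

With a 2048-byte limit and 1024-byte chunks, two calls to toy_produce fill the queue and switch feeding off; further calls are ignored until toy_consume empties the queue again.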

/* This method is called by the idle GSource in the mainloop, to feed CHUNK_SIZE bytes into appsrc.

* The idle handler is added to the mainloop when appsrc requests us to start sending data (need-data signal)

* and is removed when appsrc has enough data (enough-data signal).

*/

static gboolean push_data (CustomData *data) {

GstBuffer *buffer;

GstFlowReturn ret;

int i;

GstMapInfo map;

gint16 *raw;

gint num_samples = CHUNK_SIZE / 2; /* Because each sample is 16 bits */

gfloat freq;

/* Create a new empty buffer */

buffer = gst_buffer_new_and_alloc (CHUNK_SIZE);

/* Set its timestamp and duration */

GST_BUFFER_TIMESTAMP (buffer) = gst_util_uint64_scale (data->num_samples, GST_SECOND, SAMPLE_RATE);

GST_BUFFER_DURATION (buffer) = gst_util_uint64_scale (num_samples, GST_SECOND, SAMPLE_RATE);

/* Generate some psychedelic waveforms; in GStreamer 1.0 the buffer must be mapped before writing */

gst_buffer_map (buffer, &map, GST_MAP_WRITE);

raw = (gint16 *)map.data;

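This is the function that feeds appsrc. GLib calls it at times and rates that are out of our control, but we know that we will disable it when its job is done (when the queue in appsrc is full).

Its first task is to create a new buffer of a given size (in this example, arbitrarily set to 1024 bytes) with gst_buffer_new_and_alloc().

We count the number of samples generated so far in the CustomData.num_samples variable, so we can timestamp this buffer using the GST_BUFFER_TIMESTAMP macro in GstBuffer.

Since we are producing buffers of the same size, their duration is the same, and it is set using the GST_BUFFER_DURATION macro in GstBuffer.

gst_util_uint64_scale() is a utility function that scales (multiplies and divides) numbers that can be large, without fear of overflow.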
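To make the timestamp arithmetic concrete, here is a minimal sketch in plain C of converting a sample count into nanoseconds without overflowing 64 bits. It is a simplified stand-in written for this explanation, not GStreamer's implementation of gst_util_uint64_scale(); the name samples_to_ns and the NS_PER_SECOND constant are hypothetical, and the result is truncated toward zero.

```c
#include <stdint.h>

#define NS_PER_SECOND 1000000000ULL /* same value as GST_SECOND */

/* Hypothetical helper: computes samples * 1e9 / rate, rounded down.
 * Splitting the count into whole seconds and a remainder keeps every
 * intermediate product small, so a plain 64-bit multiplication of
 * samples by 1e9 (which overflows for long streams) is avoided.
 * Assumes rate > 0 and rate below about 1.8e10, so rest * 1e9 fits. */
static uint64_t samples_to_ns(uint64_t samples, uint64_t rate) {
  uint64_t whole = samples / rate; /* complete seconds */
  uint64_t rest = samples % rate;  /* samples in the last partial second */
  return whole * NS_PER_SECOND + (rest * NS_PER_SECOND) / rate;
}
```

In the tutorial's terms, the timestamp of a buffer corresponds to samples_to_ns applied to the running sample count, and its duration to samples_to_ns applied to the number of samples in one buffer (CHUNK_SIZE / 2). Deriving each timestamp from the running count, rather than summing durations, prevents the truncation error from accumulating.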

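In GStreamer 1.0, the bytes of the buffer are accessed by mapping it with gst_buffer_map(), which fills a GstMapInfo whose data and size fields describe the memory (the GST_BUFFER_DATA macro belongs to the older 0.10 API). Be careful not to write past the end of the buffer: you allocated it, so you know its size. Unmap the buffer with gst_buffer_unmap() once you have finished writing to it.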

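We will skip over the waveform generation, since it is outside the scope of this tutorial (it is simply a funny way of generating a pretty psychedelic wave).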

/* Push the buffer into the appsrc */

g_signal_emit_by_name (data->app_source, "push-buffer", buffer, &ret);

/* Free the buffer now that we are done with it */

gst_buffer_unref (buffer);

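Once the buffer is ready, we pass it to appsrc with the push-buffer action signal (see the information box at the end of Playback tutorial 1: Playbin usage), and then gst_buffer_unref() it, since we no longer need it.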

/* The appsink has received a buffer */

static GstFlowReturn new_sample (GstElement *sink, CustomData *data) {

GstSample *sample;

/* Retrieve the buffer */

g_signal_emit_by_name (sink, "pull-sample", &sample);

if (sample) {

/* The only thing we do in this example is print a * to indicate a received buffer */

g_print ("*");

gst_sample_unref (sample);

return GST_FLOW_OK;

}

return GST_FLOW_ERROR;

}

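Finally, this is the function that gets called when the appsink receives a buffer. We use the pull-sample action signal to retrieve the sample and then just print an indicator on the screen. In GStreamer 1.0, the buffer can be obtained from the sample with gst_sample_get_buffer(), and its data pointer and size become available after mapping it with gst_buffer_map(). Remember that this buffer does not have to match the buffer that we produced in the push_data function: any element in the path can alter buffers in any way (not in this example: there is only a tee in the path between appsrc and appsink, and it does not change the content of the buffers).

We then gst_sample_unref() the sample, and this tutorial is done.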
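The callback above only prints an asterisk, but once the sample data has been mapped the application sees ordinary memory and can process it however it likes. As one possible illustration, the sketch below computes the peak amplitude of a block of 16-bit samples. It is plain C with no GStreamer calls; the function name peak_s16 is hypothetical, and the data is assumed to be native-endian signed 16-bit audio, as configured for the appsrc in this tutorial.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: returns the largest absolute sample value in a
 * block of signed 16-bit samples. The result is an int because the
 * magnitude of INT16_MIN (32768) does not fit in an int16_t. */
static int peak_s16(const int16_t *samples, size_t count) {
  int peak = 0;
  for (size_t i = 0; i < count; i++) {
    int value = samples[i];
    if (value < 0)
      value = -value;
    if (value > peak)
      peak = value;
  }
  return peak;
}
```

With mapped data, count would be the mapped size in bytes divided by 2, mirroring the CHUNK_SIZE / 2 calculation in push_data.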

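4. Conclusion

This tutorial has shown how applications can:

- Inject data into a pipeline using the appsrc element.

- Retrieve data from a pipeline using the appsink element.

- Manipulate this data by accessing the GstBuffer.

In a playbin-based pipeline, the same goals are achieved in a slightly different way. Playback tutorial 3: Short-cutting the pipeline shows how to do it.

It has been a pleasure having you here, and see you soon!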