ffmpeg Study Notes 12: Filters (Video Scaling, Cropping, Watermarking, and More)

This article gives a brief introduction to using filters to scale, crop, and watermark video.

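1. Filters

Filters can overlay multiple video streams, add watermarks, scale, crop, and more. ffmpeg ships with a rich set of filters, which you can list with ffmpeg -filters: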

Filters:

T.. = Timeline support

.S. = Slice threading

..C = Command support

A = Audio input/output

V = Video input/output

N = Dynamic number and/or type of input/output

| = Source or sink filter

T.. adelay A->A Delay one or more audio channels.

... aecho A->A Add echoing to the audio.

... aeval A->A Filter audio signal according to a specified expression.

T.. afade A->A Fade in/out input audio.

... aformat A->A Convert the input audio to one of the specified formats.

... ainterleave N->A Temporally interleave audio inputs.

... allpass A->A Apply a two-pole all-pass filter.

... amerge N->A Merge two or more audio streams into a single multi-channel stream.

... amix N->A Audio mixing.

... anull A->A Pass the source unchanged to the output.

T.. apad A->A Pad audio with silence.

... aperms A->A Set permissions for the output audio frame.

... aphaser A->A Add a phasing effect to the audio.

... aresample A->A Resample audio data.

... aselect A->N Select audio frames to pass in output.

... asendcmd A->A Send commands to filters.

... asetnsamples A->A Set the number of samples for each output audio frames.

... asetpts A->A Set PTS for the output audio frame.

... asetrate A->A Change the sample rate without altering the data.

... asettb A->A Set timebase for the audio output link.

... ashowinfo A->A Show textual information for each audio frame.

... asplit A->N Pass on the audio input to N audio outputs.

…..

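This is only a small part of the list, which shows how many filters ffmpeg provides.

Basic filter concepts

Filter: a single filter.

FilterPad: an input or output port of a filter. A filter can have several inputs and several outputs. A filter with only output pads is called a source, and a filter with only input pads is called a sink.

FilterLink: if an output pad of one filter and an input pad of another filter have the same name, a link is considered to exist between the two filters.

FilterChain: a sequence of filters connected to one another. Apart from the source and the sink, every input and output pad of each filter must be matched with a corresponding output or input pad.

FilterGraph: a collection of FilterChains.

A classic example:

In the figure, each node is a Filter and each pair of square brackets denotes a FilterPad. split has an output pad named tmp, and crop has an input pad also named tmp, so a link is established between them. input and output are the source and the sink. The figure contains three FilterChains: the first consists of input and split, the second of crop and vflip, and the third of overlay and output. The whole figure is a FilterGraph made of three FilterChains.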
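To make the chain and graph terms concrete, here is a minimal sketch in C that counts the chains and filters in a textual graph description. The string is the one the FFmpeg filter documentation uses for a split/crop/vflip/overlay graph; I am assuming it is the graph this figure shows. The helper names count_chains and count_filters are hypothetical, and the counting is deliberately naive: it treats every ';' as a chain separator and every ',' as a filter separator, and ignores the quoting and escaping rules of the real parser.

```c
#include <stddef.h>

/* Assumed to match the figure: taken from the FFmpeg filter documentation. */
static const char CLASSIC_GRAPH[] =
    "split [main][tmp]; [tmp] crop=iw:ih/2:0:0, vflip [flip]; "
    "[main][flip] overlay=0:H/2";

/* Counts how many times c occurs in s. */
static int count_char(const char *s, char c)
{
    int n = 0;
    for (; *s != '\0'; ++s) {
        if (*s == c)
            ++n;
    }
    return n;
}

/* Hypothetical helper: chains are separated by ';' in a graph description. */
int count_chains(const char *descr)
{
    return *descr ? count_char(descr, ';') + 1 : 0;
}

/* Hypothetical helper: filters inside a chain are separated by ','.
 * Naive: a ',' or ';' inside a quoted or escaped argument would be miscounted. */
int count_filters(const char *descr)
{
    return *descr ? count_char(descr, ';') + count_char(descr, ',') + 1 : 0;
}
```

For CLASSIC_GRAPH this gives three chains and four filters (split, crop, vflip, overlay). The source and sink do not appear in the string because the caller supplies them, and the labels [main], [tmp], and [flip] are the pad names that create the links.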

2. Adding filters to a video with libavfilter

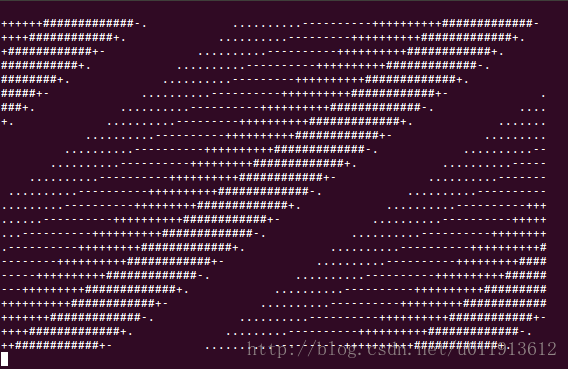

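The filtering_video.c example on the official ffmpeg site shows how filters are used. It decodes a video file into raw frames, uses a filter to scale each frame down to 78x24, converts the pixels into characters, and prints them to the terminal. The result looks like this:

The program lays out the use of filters very clearly, but turning pixels into characters on a terminal does not give a very intuitive feel for what a filter does. I therefore made a small change so that the filtered video is saved to a file instead of being shown in the terminal. Below is the complete modified program, a single .c file: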

#define _XOPEN_SOURCE 600 /* for usleep */

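#include

To build the file, see the earlier articles in this series. When the program runs, it may report the error: No such filter: 'drawtext'. This happens because that filter has not been enabled.

Solution: when the program cannot find the drawtext filter, it is usually because the default build options do not compile that filter into the library. Rebuild ffmpeg and add --enable-libfreetype when running ./configure ...; that fixes it.

The complete configuration is shown below. We had already enabled the aac and h.264 codecs earlier.

./configure --enable-libx264 --enable-gpl --enable-decoder=h264 --enable-encoder=libx264 --enable-shared --enable-static --disable-yasm --enable-nonfree --enable-libfdk-aac --enable-libfreetype --prefix=tmp

Once configuration finishes, run make && make install to install.

After building, run the program like this: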

./out.bin rain.mp4

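A video file named hello.yuv is then generated in the current directory. It contains raw video data and can be played with ffplay:

ffplay -s 1280x640 hello.yuv

The program prints the width and height of the video; replace 1280x640 here with the values it prints.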
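A raw file carries no header, so the player cannot discover the frame size by itself, which is why the size has to be passed in. As a rough sanity check, the sketch below computes how many bytes one frame should occupy and how many whole frames a file of a given size holds. It assumes the frames are written as planar yuv420p, which the text above does not state, and the function names are hypothetical.

```c
#include <stddef.h>

/* Bytes in one frame, ASSUMING planar yuv420p: a full-size Y plane plus
 * U and V planes subsampled by 2 in each direction (rounded up). */
size_t yuv420p_frame_size(int width, int height)
{
    size_t luma = (size_t)width * (size_t)height;
    size_t chroma = (size_t)((width + 1) / 2) * (size_t)((height + 1) / 2);
    return luma + 2 * chroma;
}

/* Number of whole frames in a raw file of file_size bytes, or -1 if the
 * size is not an exact multiple of the frame size (wrong dimensions or a
 * different pixel format are the usual causes). */
long yuv420p_frame_count(long file_size, int width, int height)
{
    size_t frame = yuv420p_frame_size(width, height);
    if (file_size < 0 || frame == 0)
        return -1;
    if ((size_t)file_size % frame != 0)
        return -1;
    return (long)((size_t)file_size / frame);
}
```

For 1280x640 this gives 1228800 bytes per frame. If the size of hello.yuv is not a multiple of that number, the dimensions passed to ffplay are probably wrong, or the pixel format assumption does not hold.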

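3. Scaling, cropping, and adding a text watermark

Using a filter basically follows the steps in the program above; different filter effects are obtained by passing different description strings. A string that scales the video to 200x100 is already given in a comment in the program.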
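One of those steps is telling the graph's source what the decoded frames look like. In the versions of the official filtering_video.c example that I am aware of, this is an option string built with snprintf and passed to avfilter_graph_create_filter for the "buffer" source. The sketch below isolates only that formatting step so it can be tried without linking against ffmpeg. The helper name format_buffer_args is hypothetical, and the pixel format is passed as its numeric enum value, as the example does.

```c
#include <stdio.h>

/* Hypothetical helper: builds the option string for the "buffer" source.
 * Returns the string length, or -1 if dst is too small or formatting failed. */
int format_buffer_args(char *dst, size_t dst_size,
                       int width, int height, int pix_fmt,
                       int tb_num, int tb_den,
                       int sar_num, int sar_den)
{
    int n = snprintf(dst, dst_size,
                     "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                     width, height, pix_fmt,
                     tb_num, tb_den, sar_num, sar_den);
    if (n < 0 || (size_t)n >= dst_size)
        return -1;
    return n;
}
```

For a 1280x640 stream with pix_fmt 0, a 1/25 time base, and square pixels, the result is video_size=1280x640:pix_fmt=0:time_base=1/25:pixel_aspect=1/1. The filter description string, such as the ones in this section, is parsed separately and sits between this source and the sink.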

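Original video:

Scaled to half the width and height:

const char *filter_descr = "scale=iw/2:ih/2";

The change is not very visible after shrinking, so no screenshot is included.

The following string crops out the central region of the frame, half the width and half the height, which is one quarter of the area.

const char *filter_descr = "crop=iw/2:ih/2:iw/4:ih/4";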
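The four fields of the crop string are the output width, output height, and the x and y of the top-left corner, with iw and ih standing for the input width and height. The sketch below evaluates those four expressions for a given input size to show why the result is centered. It is an approximation using integer division; the real filter evaluates the expressions itself and may adjust the values to suit chroma subsampling. The type and function names are hypothetical.

```c
/* Hypothetical result type: the rectangle selected by the crop expressions. */
typedef struct {
    int w; /* output width  (iw/2) */
    int h; /* output height (ih/2) */
    int x; /* left edge     (iw/4) */
    int y; /* top edge      (ih/4) */
} CropRect;

/* Approximates crop=iw/2:ih/2:iw/4:ih/4 with integer arithmetic. */
CropRect center_quarter_crop(int iw, int ih)
{
    CropRect r;
    r.w = iw / 2;
    r.h = ih / 2;
    r.x = iw / 4;
    r.y = ih / 4;
    return r;
}
```

For a 1280x640 input this selects a 640x320 rectangle whose top-left corner is at (320, 160). The margins are equal on both sides (320 left and right, 160 top and bottom), so the rectangle is centered and covers one quarter of the original area.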

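The result looks like this:

The following string adds the text watermark Hello to the video:

const char *filter_descr = "drawtext=fontfile=FreeSans.ttf:fontcolor=green:fontsize=30:text='Hello'";

The result looks like this:

I look forward to exploring more complex filters together with everyone.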