ZooKeeper Distributed Installation

Introduction to ZooKeeper

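ZooKeeper is an open-source distributed framework that provides basic services for coordinating distributed applications. It exposes a set of common services to external applications, including distributed synchronization, naming service, and group maintenance, which makes distributed applications easier to coordinate and manage while delivering high-performance distributed services. ZooKeeper can run in standalone mode, but its strength lies in running as a distributed cluster (one Leader and multiple Followers), which applies a defined set of policies to keep the cluster stable and available and thereby makes distributed applications more reliable.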

Distributed Installation

Mapping Hostnames to IP Addresses

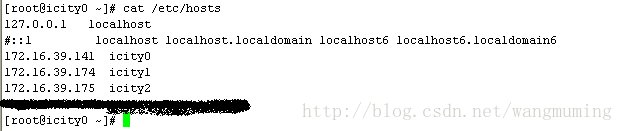

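A ZooKeeper cluster has two key roles: Leader and Follower. All nodes in the cluster serve distributed applications as a single unit, and every node connects to every other node. When configuring the cluster, each node's host-to-IP mapping must therefore include entries for all the other nodes in the cluster.

For example, in my ZooKeeper cluster, taking icity0 as the example, the contents of /etc/hosts are as follows:

Configure icity1 and icity2 in the same way.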
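The original /etc/hosts listing is not reproduced here, so the following is only a sketch of what such a mapping might look like. The hostnames come from the text; the 192.168.1.x addresses are placeholders chosen for illustration and must be replaced with the real addresses of your machines.

```shell
#!/bin/sh
# Sketch: generate the host-to-IP mappings that every node needs in /etc/hosts.
# The 192.168.1.x addresses are placeholders (assumptions), not the author's values.
hosts_file=$(mktemp)   # scratch file; review it before appending to /etc/hosts

cat > "$hosts_file" <<'EOF'
192.168.1.10 icity0
192.168.1.11 icity1
192.168.1.12 icity2
EOF

# Every node must be able to resolve all three names, itself included.
for h in icity0 icity1 icity2; do
    grep -qw "$h" "$hosts_file" || echo "missing mapping for $h" >&2
done

cat "$hosts_file"
```

The same three lines would go into /etc/hosts on each of the three servers.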

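ZooKeeper uses an algorithm known as leader election. While the cluster is running there is exactly one Leader and all other nodes are Followers. If the Leader fails, the system runs the algorithm again to elect a new Leader. The nodes must therefore be able to reach one another, which is why the mappings above are required.

When a ZooKeeper cluster starts, it first elects a Leader. During leader election, a node that satisfies the criteria of the election algorithm becomes the Leader.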
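The startup log later in this article reports "Defaulting to majority quorums", which means a Leader can only be elected once more than half of the configured servers agree. As a rough illustration of that arithmetic (the helper names are made up for this sketch):

```shell
#!/bin/sh
# Sketch: majority-quorum arithmetic for a cluster of n voting servers.
# quorum_size and tolerated_failures are illustrative helper names.

quorum_size() {
    # Smallest number of servers that forms a strict majority of $1.
    echo $(( $1 / 2 + 1 ))
}

tolerated_failures() {
    # Servers that can be down while a majority is still reachable.
    echo $(( ($1 - 1) / 2 ))
}

for n in 3 4 5; do
    echo "servers=$n quorum=$(quorum_size "$n") tolerated_failures=$(tolerated_failures "$n")"
done
```

For the three-node cluster used here, two running nodes are enough to elect a Leader, and a fourth node would not let the cluster survive any additional failure.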

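Editing the ZooKeeper Configuration File

1. Upload the ZooKeeper installation package to /home/hadoop/ on the icity0 server, extract it, and rename the extracted directory to zk: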

tar -zxvf zookeeper-3.4.5.tar.gz

mv zookeeper-3.4.5 zk

2. Enter the zk/conf directory, copy the sample configuration, and edit it:

cd zk/conf

cp zoo_sample.cfg zoo.cfg

vi zoo.cfg

dataDir is the directory where data is stored;

the server.* entries define the cluster members.
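The edited zoo.cfg itself is not shown, so here is a hedged sketch of a three-node configuration consistent with the rest of this article. tickTime, initLimit, the client port 2181 and the election port 3888 match values that appear in the startup log; the quorum port 2888 and syncLimit=5 are assumptions taken from common defaults, and the server ids 0, 1, 2 follow the ids seen in the log.

```shell
#!/bin/sh
# Sketch: write a three-node zoo.cfg into a scratch directory.
conf_dir=$(mktemp -d)

cat > "$conf_dir/zoo.cfg" <<'EOF'
# Basic time unit in milliseconds (the log shows tickTime set to 2000).
tickTime=2000
# Ticks a follower may take to connect and sync with the leader (log: initLimit 10).
initLimit=10
# Ticks a follower may lag behind the leader; 5 is an assumed value.
syncLimit=5
# Data directory; it must contain the myid file.
dataDir=/home/hadoop/zk/data
# Port clients connect to (the log shows binding to 2181).
clientPort=2181
# server.X=host:quorumPort:electionPort; 2888 is assumed, 3888 appears in the log.
server.0=icity0:2888:3888
server.1=icity1:2888:3888
server.2=icity2:2888:3888
EOF

grep '^server\.' "$conf_dir/zoo.cfg"
```

The same file is used unchanged on every node; only the myid file differs between servers.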

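3. Create the data directory and set myid

In the directory specified by dataDir, create a file named myid that contains a single number identifying the current host. The number must match the X in this host's server.X entry in conf/zoo.cfg.

For example, on the icity0 server: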

cd zk

mkdir data

cd data

vi myid

Configure icity1 and icity2 in the same way.
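To avoid typing the wrong number, the id can be derived from zoo.cfg instead of entered by hand. The sketch below assumes server lines of the form server.X=host:port:port with ids 0, 1, 2 for icity0, icity1, icity2; myid_for_host is a hypothetical helper, not something ZooKeeper ships.

```shell
#!/bin/sh
# Sketch: look up the X in "server.X=<host>:..." and write it to dataDir/myid.
# myid_for_host is a hypothetical helper, not part of ZooKeeper.

myid_for_host() {
    # $1 = path to zoo.cfg, $2 = hostname; prints the matching server id.
    sed -n "s/^server\.\([0-9][0-9]*\)=$2:.*/\1/p" "$1"
}

# Demo against a scratch config so nothing under /home/hadoop is touched.
work=$(mktemp -d)
printf 'server.0=icity0:2888:3888\nserver.1=icity1:2888:3888\nserver.2=icity2:2888:3888\n' > "$work/zoo.cfg"

mkdir -p "$work/data"
myid_for_host "$work/zoo.cfg" icity0 > "$work/data/myid"
cat "$work/data/myid"
```

On a real node the two paths would be conf/zoo.cfg and the myid file under the configured dataDir.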

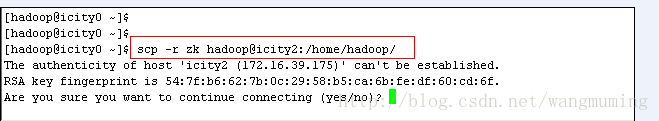

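Distributing the Installation to the Other Nodes

scp -r zk hadoop@icity1:/home/hadoop/

scp -r zk hadoop@icity2:/home/hadoop/

Because passwordless SSH login has not been configured yet, you need to type yes to accept the host key and then enter the password.

As shown in the figure: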

Setting myid

Change the myid values on icity1 and icity2 to 1 and 2, respectively.
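The copy and the myid change can be summarized as a small plan. The sketch below only prints the commands rather than running them; setting myid over ssh is an assumption made for brevity (the article edits the file on each server), and plan_distribution is an illustrative name.

```shell
#!/bin/sh
# Sketch: print (not run) the copy and myid commands for the remaining nodes.
# plan_distribution is an illustrative helper; pipe its output to sh to execute it.

plan_distribution() {
    for id in 1 2; do
        host="icity$id"
        echo "scp -r zk hadoop@$host:/home/hadoop/"
        echo "ssh hadoop@$host 'echo $id > /home/hadoop/zk/data/myid'"
    done
}

plan_distribution
```

Printing first makes it easy to check that each host receives the id that matches its server.X entry before anything is changed.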

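Setting the ZooKeeper Environment Variables

This step is optional but recommended. Add the ZooKeeper bin directory to PATH in .bash_profile, then reload the file: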

source .bash_profile

Configure icity1 and icity2 in the same way; you can scp this file to /home/hadoop/ on the other two servers.
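The exact lines added to .bash_profile are not shown in this article. A minimal sketch, assuming the installation lives in /home/hadoop/zk and using the conventional (but here assumed) variable name ZOOKEEPER_HOME, could look like this:

```shell
#!/bin/sh
# Sketch: the lines one might append to ~/.bash_profile.
# ZOOKEEPER_HOME is a conventional but assumed variable name.
profile=$(mktemp)   # scratch stand-in for ~/.bash_profile

cat > "$profile" <<'EOF'
export ZOOKEEPER_HOME=/home/hadoop/zk
export PATH=$PATH:$ZOOKEEPER_HOME/bin
EOF

# Load it into the current shell, as "source .bash_profile" does.
. "$profile"
echo "$ZOOKEEPER_HOME"
```

With the bin directory on PATH, zkServer.sh and zkCli.sh can be run from any directory.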

Starting the ZooKeeper Cluster

Run zkServer.sh start on each node.

Log output on icity2:

2014-04-11 19:42:03,835 [myid:] - INFO [main:QuorumPeerConfig@101] - Reading configuration from: /home/hadoop/zk/bin/../conf/zoo.cfg

2014-04-11 19:42:03,863 [myid:] - INFO [main:QuorumPeerConfig@334] - Defaulting to majority quorums

2014-04-11 19:42:03,872 [myid:2] - INFO [main:DatadirCleanupManager@78] - autopurge.snapRetainCount set to 3

2014-04-11 19:42:03,873 [myid:2] - INFO [main:DatadirCleanupManager@79] - autopurge.purgeInterval set to 0

2014-04-11 19:42:03,874 [myid:2] - INFO [main:DatadirCleanupManager@101] - Purge task is not scheduled.

2014-04-11 19:42:03,913 [myid:2] - INFO [main:QuorumPeerMain@127] - Starting quorum peer

2014-04-11 19:42:04,035 [myid:2] - INFO [main:NIOServerCnxnFactory@94] - binding to port 0.0.0.0/0.0.0.0:2181

2014-04-11 19:42:04,138 [myid:2] - INFO [main:QuorumPeer@913] - tickTime set to 2000

2014-04-11 19:42:04,139 [myid:2] - INFO [main:QuorumPeer@933] - minSessionTimeout set to -1

2014-04-11 19:42:04,140 [myid:2] - INFO [main:QuorumPeer@944] - maxSessionTimeout set to -1

2014-04-11 19:42:04,141 [myid:2] - INFO [main:QuorumPeer@959] - initLimit set to 10

2014-04-11 19:42:04,179 [myid:2] - INFO [main:QuorumPeer@429] - currentEpoch not found! Creating with a reasonable default of 0. This should only happen when you are upgrading your installation

2014-04-11 19:42:04,187 [myid:2] - INFO [main:QuorumPeer@444] - acceptedEpoch not found! Creating with a reasonable default of 0. This should only happen when you are upgrading your installation

2014-04-11 19:42:04,198 [myid:2] - INFO [Thread-1:QuorumCnxManager$Listener@486] - My election bind port: 0.0.0.0/0.0.0.0:3888

2014-04-11 19:42:04,221 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:QuorumPeer@670] - LOOKING

2014-04-11 19:42:04,225 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:FastLeaderElection@740] - New election. My id = 2, proposed zxid=0x0

2014-04-11 19:42:04,246 [myid:2] - INFO [WorkerReceiver[myid=2]:FastLeaderElection@542] - Notification: 2 (n.leader), 0x0 (n.zxid), 0x1 (n.round), LOOKING (n.state), 2 (n.sid), 0x0 (n.peerEPoch), LOOKING (my state)

2014-04-11 19:42:04,248 [myid:2] - INFO [WorkerReceiver[myid=2]:FastLeaderElection@542] - Notification: 1 (n.leader), 0x0 (n.zxid), 0x1 (n.round), LOOKING (n.state), 0 (n.sid), 0x0 (n.peerEPoch), LOOKING (my state)

2014-04-11 19:42:04,249 [myid:2] - INFO [WorkerReceiver[myid=2]:FastLeaderElection@542] - Notification: 1 (n.leader), 0x0 (n.zxid), 0x1 (n.round), FOLLOWING (n.state), 0 (n.sid), 0x0 (n.peerEPoch), LOOKING (my state)

2014-04-11 19:42:04,251 [myid:2] - INFO [WorkerReceiver[myid=2]:FastLeaderElection@542] - Notification: 1 (n.leader), 0x0 (n.zxid), 0x1 (n.round), LEADING (n.state), 1 (n.sid), 0x0 (n.peerEPoch), LOOKING (my state)

2014-04-11 19:42:04,258 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:QuorumPeer@738] - FOLLOWING

2014-04-11 19:42:04,280 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:Learner@85] - TCP NoDelay set to: true

2014-04-11 19:42:04,309 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:Environment@100] - Server environment:zookeeper.version=3.4.5-1392090, built on 09/30/2012 17:52 GMT

2014-04-11 19:42:04,310 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:Environment@100] - Server environment:host.name=manage.smartsh-test.net

2014-04-11 19:42:04,438 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:Environment@100] - Server environment:java.version=1.7.0_09

2014-04-11 19:42:04,439 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:Environment@100] - Server environment:java.vendor=Oracle Corporation

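I started the nodes in the order icity0 > icity1 > icity2. When a ZooKeeper cluster starts, each node tries to connect to every other node, so the nodes started first cannot reach those that are not yet running. The exceptions near the top of some servers' logs are therefore normal and can be ignored. The logs show that the cluster stabilizes once a Leader has been elected.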
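One way to confirm from a log that a node has settled is to look at the last election state it recorded. The sketch below relies only on the "- LOOKING" and "- FOLLOWING" style lines visible in the icity2 log above (LEADING is assumed to be logged the same way on the Leader); last_state is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: report the last election state a node logged.
# last_state is a hypothetical helper; it relies on lines that end in "- STATE".

last_state() {
    # $1 = path to a ZooKeeper log file
    grep -oE -- '- (LOOKING|FOLLOWING|LEADING)$' "$1" | tail -n 1 | cut -d' ' -f2
}

# Demo with two lines in the same shape as the icity2 log.
log=$(mktemp)
cat > "$log" <<'EOF'
2014-04-11 19:42:04,221 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:QuorumPeer@670] - LOOKING
2014-04-11 19:42:04,258 [myid:2] - INFO [QuorumPeer[myid=2]/0:0:0:0:0:0:0:0:2181:QuorumPeer@738] - FOLLOWING
EOF

last_state "$log"
```

A node whose last state is still LOOKING has not yet joined a quorum, which is expected on the first node until a second one starts.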

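To stop the ZooKeeper process: zkServer.sh stop

Verifying the Installation

ZooKeeper's script can report the startup status, including the role of each node (Leader or Follower). The following are the results of querying each node in the cluster: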

[hadoop@icity0 bin]$ zkServer.sh status

JMX enabled by default

Using config: /home/hadoop/zk/bin/../conf/zoo.cfg

Mode: follower

[hadoop@icity1 bin]$ zkServer.sh status

JMX enabled by default

Using config: /home/hadoop/zk/bin/../conf/zoo.cfg

Mode: leader

[hadoop@icity2 bin]$ zkServer.sh status

JMX enabled by default

Using config: /home/hadoop/zk/bin/../conf/zoo.cfg

Mode: follower

The status output above shows that icity1 is the Leader of the cluster and the other two nodes, icity0 and icity2, are Followers.
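When checking several nodes, it helps to extract just the role and confirm that exactly one Leader exists. The following sketch works on the "Mode:" line from the status output shown above; mode_of is an illustrative helper name, and the three sample strings stand in for output collected from the three servers.

```shell
#!/bin/sh
# Sketch: pull the role out of "zkServer.sh status" output and check for exactly one leader.
# mode_of is an illustrative helper name.

mode_of() {
    # Reads status output on stdin and prints the value after "Mode:".
    sed -n 's/^Mode: *//p'
}

# Captured "Mode:" lines from icity0, icity1 and icity2, as shown above.
roles=""
for out in "Mode: follower" "Mode: leader" "Mode: follower"; do
    roles="$roles $(printf '%s\n' "$out" | mode_of)"
done

leaders=$(printf '%s\n' $roles | grep -c '^leader$')
echo "roles:$roles leaders=$leaders"
```

On a live node the helper would be fed directly, for example with zkServer.sh status piped into mode_of.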

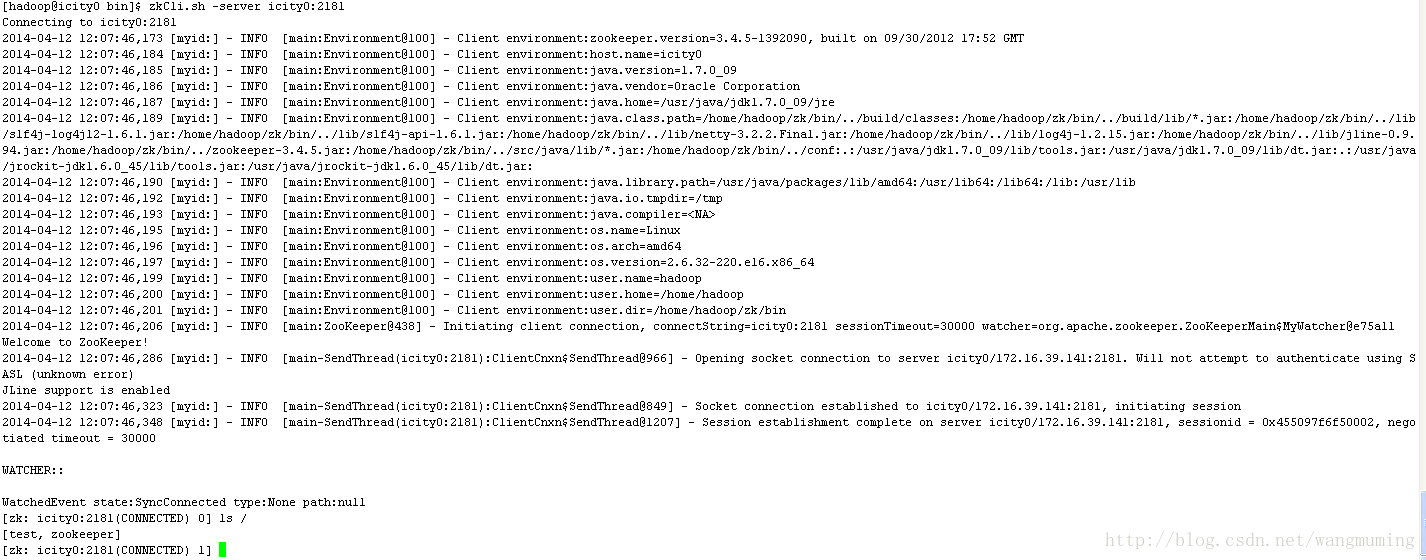

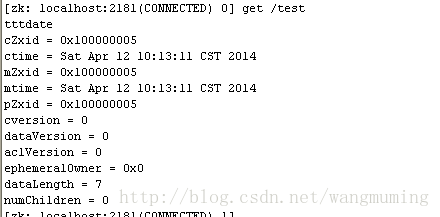

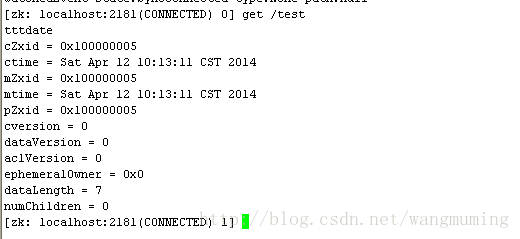

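You can also connect to the ZooKeeper cluster with the client script. To a client, ZooKeeper is a single whole (an ensemble), and a connection to it feels like exclusive use of the entire cluster's service, so you can connect through any node, for example:

Note: because I set the environment variables earlier, zkCli.sh can be found and run from any directory.

zkCli.sh -server icity0:2181

Running zkCli.sh with no arguments connects to the local node and has the same effect.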
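The -server option also accepts a comma-separated list of host:port pairs, so a client does not depend on one particular node being up. A small sketch that builds such a list for the three nodes (connect_string is an illustrative helper name; 2181 is the client port seen in the log):

```shell
#!/bin/sh
# Sketch: build a comma-separated connect string listing every node.
# connect_string is an illustrative helper; 2181 is the client port from the log.

connect_string() {
    # $1 = client port, remaining args = hostnames
    port=$1; shift
    out=""
    for h in "$@"; do
        out="${out:+$out,}$h:$port"
    done
    echo "$out"
}

servers=$(connect_string 2181 icity0 icity1 icity2)
echo "zkCli.sh -server $servers"
```

The printed command can then be run as is on any machine that resolves the three hostnames.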

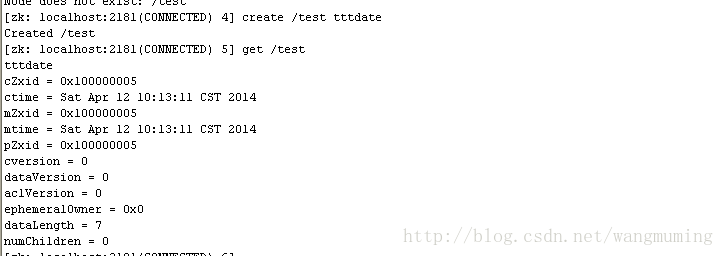

Cluster Test

Create a znode on icity0:

icity1:

icity2:
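The test above amounts to writing a znode through one node and reading it back through the other two. A hedged sketch of that sequence follows; the path /icity_test and the value hello are made up for illustration, and passing the command after -server on the command line is assumed to behave like typing it at the zkCli prompt. By default the sketch only prints the commands.

```shell
#!/bin/sh
# Sketch: write a znode through one node and read it back through the others.
# /icity_test and "hello" are made-up values. ZKCLI defaults to a dry run that
# prints each command; set ZKCLI=zkCli.sh to run against a real cluster.
ZKCLI=${ZKCLI:-echo zkCli.sh}

zk_create() {
    # $1 = host, $2 = znode path, $3 = data
    $ZKCLI -server "$1:2181" create "$2" "$3"
}

zk_get() {
    # $1 = host, $2 = znode path
    $ZKCLI -server "$1:2181" get "$2"
}

zk_create icity0 /icity_test hello
zk_get icity1 /icity_test
zk_get icity2 /icity_test
```

If the cluster is healthy, both reads should return the data written through icity0.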