Keras in Practice: Getting Started with Convolutional Autoencoders

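- Convolutional autoencoder

Convolutional autoencoder

For images, a convolutional autoencoder usually works better than a fully connected one, because convolutions exploit the spatial relationships between neighboring pixels.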

import numpy as np

np.random.seed(11)

from keras.datasets import mnist

import matplotlib.pyplot as plt

Using TensorFlow backend.

(x_train_image,y_train_label),(x_test_image,y_test_label)=mnist.load_data()

plt.imshow(x_test_image[0],cmap='binary')

print('x_train_image',len(x_train_image))

print('x_test_image',len(x_test_image))

x_train_image 60000

x_test_image 10000

print('x_train_image',x_train_image.shape)

print('y_train_label',y_train_label.shape)

x_train_image (60000, 28, 28)

y_train_label (60000,)

x_train4D=x_train_image.reshape(x_train_image.shape[0],28,28,1).astype('float32')

x_test4D=x_test_image.reshape(x_test_image.shape[0],28,28,1).astype('float32')

print(x_train4D.shape)

print(x_test4D.shape)

(60000, 28, 28, 1)

(10000, 28, 28, 1)

x_train_normalize=x_train4D/255

x_test_normalize=x_test4D/255

from keras import Model

from keras.layers import Dense,Input,MaxPooling2D,Conv2D,UpSampling2D

from keras.callbacks import TensorBoard

# The functional API requires an explicit Input layer

input_image=Input((28,28,1))

# Encoder: two simple convolutions and two max-pooling layers; each spatial dimension shrinks to 1/4 of the original (28 -> 7)

encoder=Conv2D(16,(3,3),padding='same',activation='relu')(input_image)

encoder=MaxPooling2D((2,2))(encoder)

encoder=Conv2D(8,(3,3),padding='same',activation='relu')(encoder)

encoder_out=MaxPooling2D((2,2))(encoder)

# Build the encoder model; it outputs the feature maps, which flatten into a feature vector

encoder_model = Model(inputs=input_image, outputs=encoder_out)
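The shape bookkeeping behind the encoder can be traced by hand. This is a minimal sketch in plain Python, not Keras code: `trace_encoder_shapes` is a hypothetical helper, and it assumes 'same' padding with stride 1 for the convolutions and non-overlapping pooling, matching the layers defined above.

```python
def trace_encoder_shapes(height, width, channels, layers):
    """Return the (H, W, C) shape after each layer.

    `layers` is a list of ("conv", filters) or ("pool", size) tuples.
    Assumption: conv uses 'same' padding with stride 1, pooling does not overlap.
    """
    shapes = [(height, width, channels)]
    for kind, value in layers:
        if kind == "conv":
            channels = value      # 'same' padding keeps height and width
        elif kind == "pool":
            height //= value      # pooling divides each spatial dimension
            width //= value
        else:
            raise ValueError("unknown layer kind: " + kind)
        shapes.append((height, width, channels))
    return shapes


encoder_layers = [("conv", 16), ("pool", 2), ("conv", 8), ("pool", 2)]
shapes = trace_encoder_shapes(28, 28, 1, encoder_layers)
for shape in shapes:
    print(shape)

input_values = 28 * 28 * 1
latent_values = shapes[-1][0] * shapes[-1][1] * shapes[-1][2]
print(latent_values, input_values)  # 392 784
```

The bottleneck holds 392 values against 784 input pixels, so this particular encoder halves the number of values rather than compressing aggressively.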

# Decoder: the encoder in reverse

decoder=UpSampling2D((2,2))(encoder_out)

decoder=Conv2D(8,(3,3),padding='same',activation='relu')(decoder)

decoder=UpSampling2D((2,2))(decoder)

decoder=Conv2D(16,(3,3),padding='same',activation='relu')(decoder)

# Convert back to the original image shape (28, 28, 1)

decoder_out=Conv2D(1, (3, 3), padding='same',activation='sigmoid')(decoder)

autoencoder=Model(input_image,decoder_out)
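`UpSampling2D` with its default nearest-neighbor interpolation has no trainable weights; it only repeats rows and columns, and the following `Conv2D` layers do the learning. As an illustration, here is a NumPy sketch of that repetition for a single feature map; `upsample_nearest` is a hypothetical helper, not part of Keras.

```python
import numpy as np


def upsample_nearest(feature_map, factor=2):
    """Upsample an (H, W, C) array to (H*factor, W*factor, C) by repetition."""
    repeated_rows = np.repeat(feature_map, factor, axis=0)  # repeat each row
    return np.repeat(repeated_rows, factor, axis=1)         # repeat each column


small = np.arange(4, dtype="float32").reshape(2, 2, 1)
large = upsample_nearest(small)
print(large.shape)     # (4, 4, 1)
print(large[:, :, 0])  # each input value becomes a 2x2 block
```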

autoencoder.summary()

_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
input_4 (InputLayer)         (None, 28, 28, 1)         0
_________________________________________________________________
conv2d_16 (Conv2D)           (None, 28, 28, 16)        160
_________________________________________________________________
max_pooling2d_7 (MaxPooling2 (None, 14, 14, 16)        0
_________________________________________________________________
conv2d_17 (Conv2D)           (None, 14, 14, 8)         1160
_________________________________________________________________
max_pooling2d_8 (MaxPooling2 (None, 7, 7, 8)           0
_________________________________________________________________
up_sampling2d_7 (UpSampling2 (None, 14, 14, 8)         0
_________________________________________________________________
conv2d_18 (Conv2D)           (None, 14, 14, 8)         584
_________________________________________________________________
up_sampling2d_8 (UpSampling2 (None, 28, 28, 8)         0
_________________________________________________________________
conv2d_19 (Conv2D)           (None, 28, 28, 16)        1168
_________________________________________________________________
conv2d_20 (Conv2D)           (None, 28, 28, 1)         145
=================================================================
Total params: 3,217
Trainable params: 3,217
Non-trainable params: 0
_________________________________________________________________
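The parameter counts in the summary can be checked by hand: a `Conv2D` layer with a bias has (kernel height x kernel width x input channels + 1) x filters weights. The sketch below recomputes them in plain Python; `conv2d_params` is a hypothetical helper name, and the channel pairs are read off the model defined above.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, filters):
    """Weights of a Conv2D layer with bias: one kernel plus one bias per filter."""
    return (kernel_h * kernel_w * in_channels + 1) * filters


# (input channels, filters) for the five 3x3 convolutions, in order
conv_layers = [(1, 16), (16, 8), (8, 8), (8, 16), (16, 1)]
counts = [conv2d_params(3, 3, cin, cout) for cin, cout in conv_layers]
print(counts)       # [160, 1160, 584, 1168, 145]
print(sum(counts))  # 3217
```

The pooling and upsampling layers contribute nothing, which is why the total matches the sum over the convolutions alone.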

autoencoder.compile(optimizer='adam', loss='binary_crossentropy')
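With `binary_crossentropy` on pixel intensities in [0, 1], the loss does not reach zero even for a perfect reconstruction unless every pixel is exactly 0 or 1, which is one reason a training loss that levels off well above zero need not mean poor reconstructions. The following is a minimal single-pixel sketch in plain Python, not the Keras implementation; the `eps` clipping value is an assumption chosen for illustration.

```python
import math


def binary_crossentropy(target, prediction, eps=1e-7):
    """Binary cross-entropy for one pixel; prediction is clipped to avoid log(0)."""
    prediction = min(max(prediction, eps), 1 - eps)
    return -(target * math.log(prediction)
             + (1 - target) * math.log(1 - prediction))


gray_perfect = binary_crossentropy(0.5, 0.5)   # gray pixel, reconstructed exactly
gray_off = binary_crossentropy(0.5, 0.4)       # gray pixel, slightly wrong
white_perfect = binary_crossentropy(1.0, 1.0)  # white pixel, reconstructed exactly

print(gray_perfect)   # equals ln 2, about 0.693
print(gray_off)       # larger than the perfect case
print(white_perfect)  # close to 0
```

The loss is still minimized when the prediction equals the target, so it remains a usable reconstruction objective; only its floor is shifted.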

autoencoder.fit(x_train_normalize, x_train_normalize, epochs=20, batch_size=256, shuffle=True,verbose=1,

# validation_data=(x_test_normalize, x_test_normalize),

callbacks=[TensorBoard(log_dir='autoencoder_cnn_log')])

Epoch 1/20

60000/60000 [==============================] - 4s 65us/step - loss: 0.1951

Epoch 2/20

60000/60000 [==============================] - 3s 58us/step - loss: 0.0898

Epoch 3/20

60000/60000 [==============================] - 3s 58us/step - loss: 0.0840

Epoch 4/20

60000/60000 [==============================] - 4s 61us/step - loss: 0.0814

Epoch 5/20

60000/60000 [==============================] - 4s 62us/step - loss: 0.0798

Epoch 6/20

60000/60000 [==============================] - 4s 60us/step - loss: 0.0788

Epoch 7/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0780

Epoch 8/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0773

Epoch 9/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0767

Epoch 10/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0762

Epoch 11/20

60000/60000 [==============================] - 4s 72us/step - loss: 0.0757

Epoch 12/20

60000/60000 [==============================] - 4s 65us/step - loss: 0.0753

Epoch 13/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0749

Epoch 14/20

60000/60000 [==============================] - 4s 60us/step - loss: 0.0746

Epoch 15/20

60000/60000 [==============================] - 4s 60us/step - loss: 0.0744

Epoch 16/20

60000/60000 [==============================] - 4s 60us/step - loss: 0.0741

Epoch 17/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0739

Epoch 18/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0737

Epoch 19/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0736

Epoch 20/20

60000/60000 [==============================] - 4s 59us/step - loss: 0.0734

decoded_imgs = autoencoder.predict(x_test_normalize)

print(decoded_imgs.shape)

(10000, 28, 28, 1)

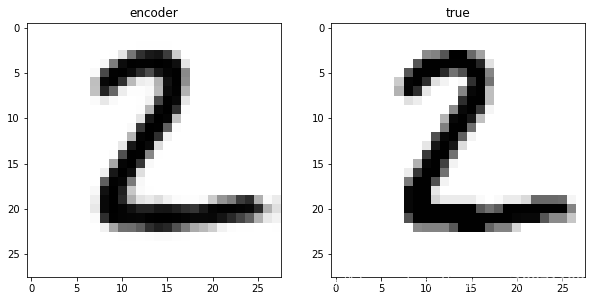

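# Show the autoencoder's reconstruction next to the original image

def show_images(index):
    # Reconstruction for this index, reshaped from (28, 28, 1) to (28, 28)
    image=decoded_imgs[index,:]
    image=image.reshape(28,28)
    plt.figure(figsize=(10,10))
    # Left: reconstructed image
    plt.subplot(1,2,1)
    plt.title('encoder')
    plt.imshow(image,cmap='binary')
    # Right: original test image
    plt.subplot(1,2,2)
    plt.title('true')
    plt.imshow(x_test_image[index],cmap='binary')
    plt.show()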

show_images(0)

show_images(1)

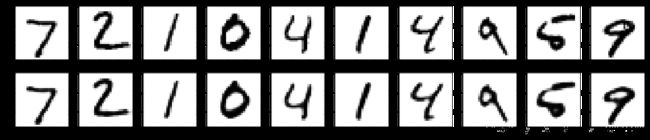

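def show_num_images(start=0,end=5):
    # Top row: original test images; bottom row: reconstructions
    n = end - start  # number of columns, so start > 0 leaves no empty slots
    plt.figure(figsize=(20, 4))
    for i in range(start,end):
        col = i - start + 1
        ax = plt.subplot(2, n, col)
        plt.imshow(x_test_normalize[i].reshape(28, 28),cmap='binary')
        ax = plt.subplot(2, n, col + n)
        plt.imshow(decoded_imgs[i].reshape(28, 28),cmap='binary')
    plt.show()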

show_num_images(0,10)

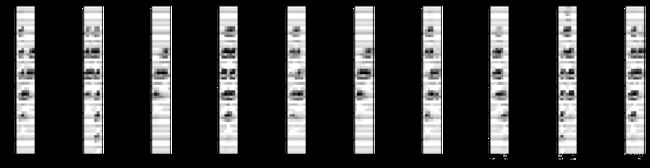

# Feature maps after compression

latent=encoder_model.predict(x_test_normalize)

print(latent.shape)

(10000, 7, 7, 8)

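def show_latent_images(start=0,end=5):
    n = end - start  # number of columns, so start > 0 leaves no empty slots
    plt.figure(figsize=(20, 10))
    for i in range(start,end):
        ax = plt.subplot(2, n, i - start + 1)
        # Flatten the (7, 7, 8) feature maps into a single 56x7 image
        plt.imshow(latent[i].reshape(7, -1).T,cmap='binary')
    plt.show()

# Feature maps of the same digit look very similar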

show_latent_images(0,10)
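Note that `latent[i].reshape(7, -1).T` interleaves the width and channel axes, so the plotted image is not eight separate 7x7 maps. If one picture per channel is wanted, the channels can be tiled into a grid first. This is a NumPy sketch under that assumption; `tile_channels` is a hypothetical helper, and a random array stands in for `latent[i]`.

```python
import numpy as np


def tile_channels(feature_maps, columns=4):
    """Arrange the C channels of an (H, W, C) array as tiles in a 2D grid."""
    height, width, channels = feature_maps.shape
    rows = -(-channels // columns)  # ceiling division
    grid = np.zeros((rows * height, columns * width), dtype=feature_maps.dtype)
    for c in range(channels):
        r, col = divmod(c, columns)
        grid[r * height:(r + 1) * height,
             col * width:(col + 1) * width] = feature_maps[:, :, c]
    return grid


fake_latent = np.random.rand(7, 7, 8).astype("float32")  # stand-in for latent[i]
grid = tile_channels(fake_latent)
print(grid.shape)  # (14, 28): 2 rows of 4 tiles, each 7x7
```

The resulting `grid` can be passed to `plt.imshow(grid, cmap='binary')` in place of the reshaped array.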

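That's all for today. I hope this helps with learning and understanding. These are only my own study notes and my ability is limited, so experts, please go easy on me. If anything here infringes on your rights, let me know and I will remove it.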