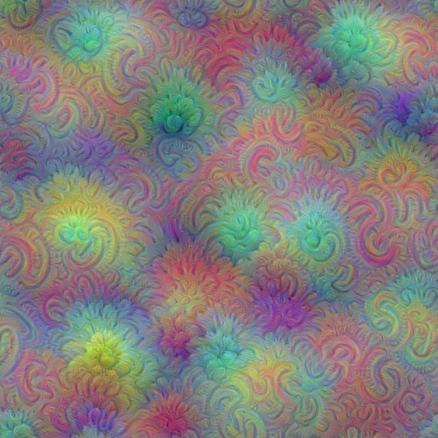

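As we have seen, TensorFlow and a pretrained GoogLeNet can be used to generate multiscale patterns, and these look better than the single-channel patterns. There is one problem, though: the multiscale patterns contain too many high-frequency components, so the images look noisy. One way to address this is to introduce a smoothness prior that blurs the image at every iteration and removes the high frequencies, but this generally requires more iterations. Another way is to boost the low-frequency components of the gradient at every iteration, using a Laplacian pyramid decomposition. That is the technique used here; we call it Laplacian Pyramid Gradient Normalization (LPGN). With LPGN, the generated multiscale patterns come out smoother.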

Laplacian Pyramid Gradient Normalization

    # boilerplate code
    from __future__ import print_function
    import os
    from io import BytesIO
    import numpy as np
    from functools import partial
    import PIL.Image
    from IPython.display import clear_output, Image, display, HTML

    import tensorflow as tf

    # !wget https://storage.googleapis.com/download.tensorflow.org/models/inception5h.zip && unzip inception5h.zip
    model_fn = 'tensorflow_inception_graph.pb'

    # creating TensorFlow session and loading the model
    graph = tf.Graph()
    sess = tf.InteractiveSession(graph=graph)
    with tf.gfile.FastGFile(model_fn, 'rb') as f:
        graph_def = tf.GraphDef()
        graph_def.ParseFromString(f.read())
    t_input = tf.placeholder(np.float32, name='input') # define the input tensor
    imagenet_mean = 117.0
    t_preprocessed = tf.expand_dims(t_input-imagenet_mean, 0)
    tf.import_graph_def(graph_def, {'input':t_preprocessed})

    layers = [op.name for op in graph.get_operations() if op.type=='Conv2D' and 'import/' in op.name]
    feature_nums = [int(graph.get_tensor_by_name(name+':0').get_shape()[-1]) for name in layers]

    print('Number of layers', len(layers))
    print('Total number of feature channels:', sum(feature_nums))

    # Picking some internal layer. Note that we use outputs before applying the ReLU nonlinearity
    # to have non-zero gradients for features with negative initial activations.
    layer = 'mixed4b_3x3_bottleneck_pre_relu'
    channel = 24 # picking some feature channel to visualize

    # start with a gray image with a little noise
    img_noise = np.random.uniform(size=(224,224,3)) + 100.0

    def showarray(a, fmt='jpeg'):
        a = np.uint8(np.clip(a, 0, 1)*255)
        f = BytesIO()
        PIL.Image.fromarray(a).save(f, fmt)
        display(Image(data=f.getvalue()))

    def visstd(a, s=0.1):
        # Normalize the image range for visualization
        return (a-a.mean())/max(a.std(), 1e-4)*s + 0.5
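
To make the role of `visstd` concrete, here is a minimal pure-Python sketch of the same normalization applied to a flat list of numbers. The name `visstd_list` and the sample pixel values are made up for illustration; the real helper works on NumPy arrays.

```python
import math

def visstd_list(values, s=0.1):
    """Pure-Python analogue of visstd (hypothetical helper, for illustration only)."""
    mean = sum(values) / len(values)
    # Population standard deviation, matching ndarray.std() with its default ddof=0.
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    # Center on 0.5 and rescale so the spread equals s.
    return [(v - mean) / max(std, 1e-4) * s + 0.5 for v in values]

# Pixel values around 100, like the noisy gray start image (sample data, assumed).
pixels = [100.0, 100.2, 100.9, 99.5, 100.4]
shown = visstd_list(pixels)
print(shown)
```

After this rescaling the values have mean 0.5 and standard deviation `s`, so most of them fall inside the [0, 1] range that `showarray` clips to.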

    def T(layer):
        # Helper for getting layer output tensor
        return graph.get_tensor_by_name("import/%s:0"%layer)

    def tffunc(*argtypes):
        # Helper that transforms TF-graph generating function into a regular one.
        # See "resize" function below.
        placeholders = list(map(tf.placeholder, argtypes))
        def wrap(f):
            out = f(*placeholders)
            def wrapper(*args, **kw):
                return out.eval(dict(zip(placeholders, args)), session=kw.get('session'))
            return wrapper
        return wrap

    # Helper function that uses TF to resize an image
    def resize(img, size):
        img = tf.expand_dims(img, 0)
        return tf.image.resize_bilinear(img, size)[0,:,:,:]
    resize = tffunc(np.float32, np.int32)(resize)
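
The `tffunc` helper can be hard to read at first. The sketch below mimics the same pattern without TensorFlow: `Node`, `placeholder`, and `pyfunc` are hypothetical stand-ins written for this illustration, not TensorFlow APIs. It is meant to show that the decorated function body runs only once, to build a deferred expression, and that later calls just feed concrete values into it.

```python
class Node:
    """Hypothetical deferred value; stands in for a TF tensor."""
    def __init__(self, compute):
        self._compute = compute            # callable: feed dict -> concrete value

    def eval(self, feed):
        return self._compute(feed)

    def __add__(self, other):
        return Node(lambda feed: self.eval(feed) + _concrete(other, feed))

    def __mul__(self, other):
        return Node(lambda feed: self.eval(feed) * _concrete(other, feed))


def _concrete(x, feed):
    # Constants pass through unchanged; nodes are evaluated against the feed.
    return x.eval(feed) if isinstance(x, Node) else x


def placeholder(dtype):
    # A node whose value is looked up in the feed dict at evaluation time.
    node = Node(None)
    node._compute = lambda feed: dtype(feed[node])
    return node


def pyfunc(*argtypes):
    # Same shape as tffunc: create placeholders, build once, evaluate many times.
    placeholders = [placeholder(t) for t in argtypes]
    def wrap(f):
        out = f(*placeholders)             # the expression is built here, once
        def wrapper(*args):
            return out.eval(dict(zip(placeholders, args)))
        return wrapper
    return wrap


build_calls = []

@pyfunc(float, float)
def scaled_sum(a, b):
    build_calls.append(1)                  # records how often the body runs
    return (a + b) * 2.0

first = scaled_sum(1.0, 2.0)
second = scaled_sum(3, 4)
print(first, second, len(build_calls))
```

Calling `scaled_sum` twice evaluates the stored expression twice, but `build_calls` still has length 1, which is the point of wrapping `resize` this way: the graph ops are created once instead of on every call.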

    def calc_grad_tiled(img, t_grad, tile_size=512):
        # Compute the value of tensor t_grad over the image in a tiled way.
        # Random shifts are applied to the image to blur tile boundaries over
        # multiple iterations.
        sz = tile_size
        h, w = img.shape[:2]
        sx, sy = np.random.randint(sz, size=2)
        img_shift = np.roll(np.roll(img, sx, 1), sy, 0)
        grad = np.zeros_like(img)
        for y in range(0, max(h-sz//2, sz),sz):
            for x in range(0, max(w-sz//2, sz),sz):
                sub = img_shift[y:y+sz,x:x+sz]
                g = sess.run(t_grad, {t_input:sub})
                grad[y:y+sz,x:x+sz] = g
        return np.roll(np.roll(grad, -sx, 1), -sy, 0)
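
The loop bounds in `calc_grad_tiled` are easy to misread, so here is a small sketch that lists which spans the tiles cover along one axis. `tile_starts` and `tile_spans` are hypothetical helpers that copy the `range(...)` expression from the listing; they do not touch TensorFlow.

```python
def tile_starts(length, tile_size=512):
    """Start offsets produced by the tiling loop bounds in calc_grad_tiled."""
    return list(range(0, max(length - tile_size // 2, tile_size), tile_size))

def tile_spans(length, tile_size=512):
    """(start, stop) pairs after the slice is clipped at the image edge."""
    return [(s, min(s + tile_size, length)) for s in tile_starts(length, tile_size)]

for side in (224, 700, 800):
    print(side, tile_spans(side))
```

Reading the bounds this way, an 800-pixel axis gets two tiles, while a 700-pixel axis gets only one, so its last 188 pixels receive a zero gradient in that iteration. This appears to be why the random shift matters: it moves both the uncovered strip and the tile seams to a different place on every call.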

    # Laplacian Pyramid Gradient Normalization
    k = np.float32([1,4,6,4,1])
    k = np.outer(k, k)
    k5x5 = k[:,:,None,None]/k.sum()*np.eye(3, dtype=np.float32)
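
Two details of this kernel are worth checking by hand: the 5x5 weights sum to 256 before normalization, and the factor 4 in `k5x5*4`, used by the transposed convolutions in the listing, compensates for the zeros inserted when upsampling by 2 along each axis. The sketch below checks the one-dimensional version of this with plain Python lists. `blur_same` is a hypothetical helper, and its zero padding at the borders is an assumption, not necessarily what TensorFlow does.

```python
TAPS = [1, 4, 6, 4, 1]

# The 2-D kernel is the outer product of the binomial taps with themselves.
kernel_2d = [[a * b for b in TAPS] for a in TAPS]
total = sum(sum(row) for row in kernel_2d)

def blur_same(signal, kernel):
    """1-D filtering with zero padding; output has the same length as the input."""
    radius = len(kernel) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for j, weight in enumerate(kernel):
            idx = i + j - radius
            if 0 <= idx < len(signal):
                acc += weight * signal[idx]
        out.append(acc)
    return out

# Upsample a constant signal by 2: insert a zero after every sample...
flat = [1.0] * 8
stuffed = []
for value in flat:
    stuffed += [value, 0.0]

# ...then blur with the normalized taps scaled by 2 (one factor of 2 per axis).
kernel_1d_x2 = [2.0 * t / 16.0 for t in TAPS]
restored = blur_same(stuffed, kernel_1d_x2)
print(total, restored)
```

Away from the borders every sample comes back as 1.0, so the brightness of the constant signal is preserved. In 2-D the same argument applies once per axis, which gives the factor 2 x 2 = 4.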

    def lap_split(img):
        # Split the image into lo and hi frequency components
        with tf.name_scope('split'):
            lo = tf.nn.conv2d(img, k5x5, [1,2,2,1], 'SAME')
            lo2 = tf.nn.conv2d_transpose(lo, k5x5*4, tf.shape(img), [1,2,2,1])
            hi = img-lo2
        return lo, hi

    def lap_split_n(img, n):
        # Build Laplacian pyramid with n splits
        levels = []
        for i in range(n):
            img, hi = lap_split(img)
            levels.append(hi)
        levels.append(img)
        return levels[::-1]

    def lap_merge(levels):
        # Merge Laplacian pyramid
        img = levels[0]
        for hi in levels[1:]:
            with tf.name_scope('merge'):
                img = tf.nn.conv2d_transpose(img, k5x5*4, tf.shape(hi), [1,2,2,1]) + hi
        return img

    def normalize_std(img, eps=1e-10):
        # Normalize image by making its standard deviation = 1.0
        with tf.name_scope('normalize'):
            std = tf.sqrt(tf.reduce_mean(tf.square(img)))
            return img/tf.maximum(std, eps)

    def lap_normalize(img, scale_n=4):
        # Perform the Laplacian pyramid normalization
        img = tf.expand_dims(img,0)
        tlevels = lap_split_n(img, scale_n)
        tlevels = list(map(normalize_std, tlevels))
        out = lap_merge(tlevels)
        return out[0,:,:,:]
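
The split, normalize, and merge steps above can be tried out on a one-dimensional signal without TensorFlow. The following is a simplified sketch under stated assumptions: all function names ending in `_1d`, the zero-padded `blur`, and the test signal are invented for this illustration, and the border handling will not match `tf.nn.conv2d` exactly. Like `normalize_std` in the listing, it divides each level by its root mean square.

```python
import math

KERNEL = [t / 16.0 for t in (1, 4, 6, 4, 1)]

def blur(signal, gain=1.0):
    """Zero-padded 1-D filtering with the binomial kernel (assumed border rule)."""
    radius = len(KERNEL) // 2
    out = []
    for i in range(len(signal)):
        acc = 0.0
        for j, weight in enumerate(KERNEL):
            idx = i + j - radius
            if 0 <= idx < len(signal):
                acc += weight * signal[idx]
        out.append(gain * acc)
    return out

def upsample(signal, length):
    """Insert zeros between samples, then blur with gain 2 to keep the scale."""
    stuffed = [0.0] * length
    for i, value in enumerate(signal):
        if 2 * i < length:
            stuffed[2 * i] = value
    return blur(stuffed, gain=2.0)

def lap_split_1d(signal):
    lo = blur(signal)[::2]                          # low-pass, keep every 2nd sample
    hi = [a - b for a, b in zip(signal, upsample(lo, len(signal)))]
    return lo, hi

def lap_split_n_1d(signal, n):
    levels = []
    for _ in range(n):
        signal, hi = lap_split_1d(signal)
        levels.append(hi)
    levels.append(signal)
    return levels[::-1]                             # coarsest level first

def lap_merge_1d(levels):
    signal = levels[0]
    for hi in levels[1:]:
        signal = [a + b for a, b in zip(upsample(signal, len(hi)), hi)]
    return signal

def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))

def normalize_rms(values, eps=1e-10):
    scale = max(rms(values), eps)
    return [v / scale for v in values]

# A slow sine wave plus a small alternating component plays the role of a gradient.
gradient = [math.sin(2 * math.pi * i / 16) + 0.05 * (-1) ** i for i in range(16)]
levels = lap_split_n_1d(gradient, 2)
rebuilt = lap_merge_1d(levels)                      # merge without normalization
normalized_levels = [normalize_rms(level) for level in levels]
smoothed_gradient = lap_merge_1d(normalized_levels)
print([round(rms(level), 4) for level in levels])
```

Merging the untouched levels reproduces the input up to rounding, so the pyramid itself loses nothing. The normalization is what changes the result: every frequency band is rescaled to the same RMS before merging, so no single band dominates the update.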

    def render_lapnorm(t_obj, img0=img_noise, visfunc=visstd,
                       iter_n=10, step=1.0, octave_n=3, octave_scale=1.4, lap_n=4):
        t_score = tf.reduce_mean(t_obj) # defining the optimization objective
        t_grad = tf.gradients(t_score, t_input)[0] # behold the power of automatic differentiation!
        # build the laplacian normalization graph
        lap_norm_func = tffunc(np.float32)(partial(lap_normalize, scale_n=lap_n))

        img = img0.copy()
        for octave in range(octave_n):
            if octave>0:
                hw = np.float32(img.shape[:2])*octave_scale
                img = resize(img, np.int32(hw))
            for i in range(iter_n):
                g = calc_grad_tiled(img, t_grad)
                g = lap_norm_func(g)
                img += g*step
                print('.', end = ' ')
            clear_output()
            showarray(visfunc(img))

    render_lapnorm(T(layer)[:,:,:,channel])

    # render_lapnorm(T(layer)[:,:,:,65])
    # render_lapnorm(T('mixed3b_1x1_pre_relu')[:,:,:,101])
    # render_lapnorm(T(layer)[:,:,:,65]+T(layer)[:,:,:,139], octave_n=4)
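
With its default arguments, `render_lapnorm` enlarges the image between octaves. The sketch below reproduces only that size arithmetic in plain Python. `lapnorm_octave_sizes` is a hypothetical helper, and `int()` stands in for the `np.int32` truncation, which could in principle differ by a pixel in float32 edge cases.

```python
def lapnorm_octave_sizes(side=224, octave_n=3, octave_scale=1.4):
    """Side length of the (square) image at each octave of render_lapnorm."""
    sizes = [side]
    for _ in range(1, octave_n):
        side = int(side * octave_scale)   # truncates toward zero, like np.int32(...)
        sizes.append(side)
    return sizes

sizes = lapnorm_octave_sizes()
total_steps = 3 * 10                      # octave_n * iter_n with the defaults
print(sizes, total_steps)
```

Under these assumptions the default run starts at 224x224, ends at 438x438, and performs 30 gradient steps in total, 10 at each resolution.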

The generated image is shown below:

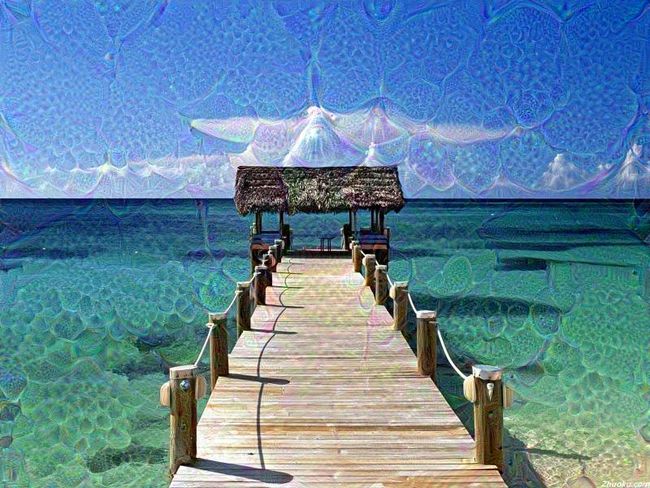

DeepDream

Finally, let's look at how DeepDream images are generated:

    def render_deepdream(t_obj, img0=img_noise,
                         iter_n=10, step=1.5, octave_n=4, octave_scale=1.4):
        t_score = tf.reduce_mean(t_obj) # defining the optimization objective
        t_grad = tf.gradients(t_score, t_input)[0] # behold the power of automatic differentiation!

        # split the image into a number of octaves
        img = img0
        octaves = []
        for i in range(octave_n-1):
            hw = img.shape[:2]
            lo = resize(img, np.int32(np.float32(hw)/octave_scale))
            hi = img-resize(lo, hw)
            img = lo
            octaves.append(hi)

        # generate details octave by octave
        for octave in range(octave_n):
            if octave>0:
                hi = octaves[-octave]
                img = resize(img, hi.shape[:2])+hi
            for i in range(iter_n):
                g = calc_grad_tiled(img, t_grad)
                img += g*(step / (np.abs(g).mean()+1e-7))
                print('.',end = ' ')
            clear_output()
            showarray(img/255.0)
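
The update rule in `render_deepdream` divides the gradient by its mean absolute value, so the size of each step does not depend on how large the raw gradient happens to be. Here is a minimal pure-Python sketch of just that line; `normalized_update`, `mean_abs`, and the two sample gradients are made up for illustration.

```python
def mean_abs(values):
    return sum(abs(v) for v in values) / len(values)

def normalized_update(grad, step=1.5, eps=1e-7):
    """Scale grad so that its mean absolute value is approximately `step`."""
    scale = step / (mean_abs(grad) + eps)   # eps guards against an all-zero gradient
    return [g * scale for g in grad]

# Two gradients with the same direction but very different magnitudes.
small = normalized_update([0.002, -0.004, 0.006])
large = normalized_update([200.0, -400.0, 600.0])
print(mean_abs(small), mean_abs(large))
```

Both updates end up with a mean absolute change close to `step`, that is, about 1.5 intensity levels per pixel on the 0 to 255 scale, which keeps the ascent stable across layers and octaves with very different gradient scales.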

    img0 = PIL.Image.open('1.jpg')
    img0 = np.float32(img0)
    showarray(img0/255.0)

    # render_deepdream(T(layer)[:,:,:,139], img0)
    # render_deepdream(tf.square(T('mixed4c')), img0)

The generated image is shown below:

Reference

https://nbviewer.jupyter.org/github/tensorflow/tensorflow/blob/master/tensorflow/examples/tutorials/deepdream/deepdream.ipynb#multiscale