How to allocate GPUs to tf.estimator in TensorFlow

Note: the steps in this article apply to Linux.

1. Specify which GPU to use:

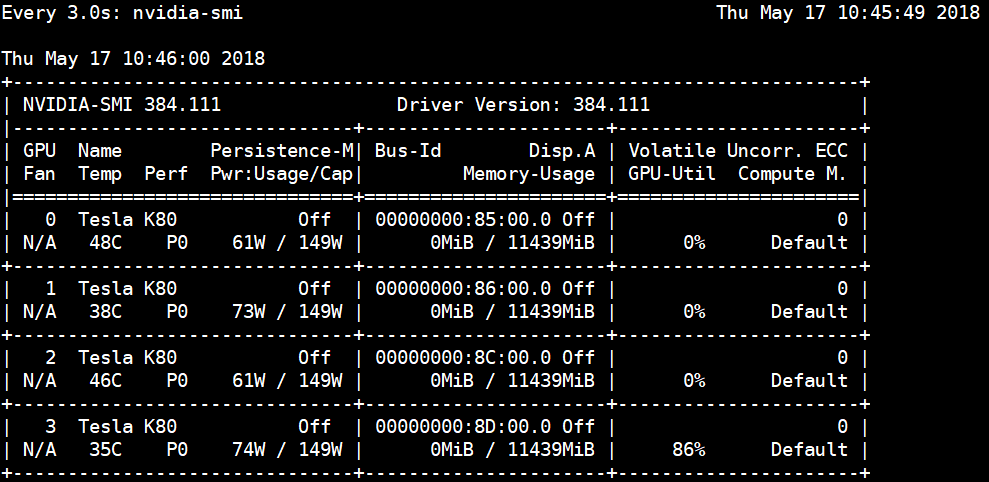

First, check how many GPUs the machine has and get their device IDs:

```bash
$ nvidia-smi
```

Then choose which GPU the program runs on when you launch it:

```bash
CUDA_VISIBLE_DEVICES=0 ./myapp
```
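Both steps can also be driven from Python. The sketch below is a minimal illustration, assuming Python 3.7+ and, for `list_gpus`, that `nvidia-smi` is on the PATH. `parse_gpu_list`, `list_gpus` and `launch_on_gpus` are hypothetical helper names; the parser assumes the CSV layout of `nvidia-smi --query-gpu=index,name,memory.total --format=csv,noheader,nounits` and GPU names without commas. The launcher only sets `CUDA_VISIBLE_DEVICES` in the child's environment, just like the shell prefix above.

```python
import os
import subprocess
import sys

# Hypothetical helpers (not part of TensorFlow or nvidia-smi).
# Query that prints one line per GPU: "index, name, memory.total" (MiB, no units).
NVIDIA_SMI_QUERY = [
    "nvidia-smi",
    "--query-gpu=index,name,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_list(text):
    """Parse the CSV printed by NVIDIA_SMI_QUERY into (index, name, total_mib) tuples."""
    gpus = []
    for line in text.strip().splitlines():
        index, name, total_mib = [field.strip() for field in line.split(",")]
        gpus.append((int(index), name, int(total_mib)))
    return gpus


def list_gpus():
    """Run nvidia-smi (must be installed) and return the parsed GPU list."""
    out = subprocess.run(NVIDIA_SMI_QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_list(out.stdout)


def launch_on_gpus(cmd, gpu_ids):
    """Run `cmd` with only the given physical GPUs visible (like CUDA_VISIBLE_DEVICES=0 ./myapp)."""
    env = dict(os.environ)
    env["CUDA_VISIBLE_DEVICES"] = ",".join(str(i) for i in gpu_ids)
    return subprocess.run(cmd, env=env, capture_output=True, text=True, check=True)
```

For example, `launch_on_gpus(["./myapp"], [0])` corresponds to the shell line above.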

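Alternatively, set the variable from inside Python to use GPU 0, or GPUs 0 and 1. Set it before TensorFlow initializes CUDA, ideally before `import tensorflow`:

```python
import os

# Use only GPU 0
os.environ['CUDA_VISIBLE_DEVICES'] = '0'

# Or use GPUs 0 and 1 (keep only one of the two assignments; the later one wins)
# os.environ['CUDA_VISIBLE_DEVICES'] = '0,1'
```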
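The visible GPUs are renumbered from 0 in the order listed, so with `CUDA_VISIBLE_DEVICES='2,3'` TensorFlow refers to physical GPU 2 as `/gpu:0` and GPU 3 as `/gpu:1`. A small sketch of that mapping, assuming the variable holds plain comma-separated integer indices (CUDA also accepts GPU UUIDs, which this hypothetical `visible_gpu_map` helper does not handle):

```python
import os


def visible_gpu_map(value=None):
    """Hypothetical helper: map TensorFlow's logical GPU names to physical GPU indices.

    `value` defaults to the current CUDA_VISIBLE_DEVICES. Returns None when the
    variable is unset (all GPUs visible, numbering unchanged).
    """
    if value is None:
        value = os.environ.get("CUDA_VISIBLE_DEVICES")
    if value is None:
        return None
    physical = [int(v) for v in value.split(",") if v.strip()]
    # Visible GPUs are renumbered 0, 1, ... in the order they are listed.
    return {"/gpu:%d" % logical: phys for logical, phys in enumerate(physical)}
```

An empty string yields `{}`: no GPU is visible, and TensorFlow runs on the CPU.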

2. Control GPU memory usage in tf.estimator:

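```python
import tensorflow as tf  # TensorFlow 1.x API

# Log the device each op is placed on
session_config = tf.ConfigProto(log_device_placement=True)
# Let this process allocate at most about 50% of each visible GPU's memory
session_config.gpu_options.per_process_gpu_memory_fraction = 0.5
# Hand the session config to the Estimator through RunConfig
run_config = tf.estimator.RunConfig().replace(session_config=session_config)

# Instantiate Estimator (model_fn and model_params are defined in the reference code below)
nn = tf.estimator.Estimator(model_fn=model_fn, config=run_config, params=model_params)
```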
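`per_process_gpu_memory_fraction` is a fraction of each visible GPU's total memory. As a worked example on a hypothetical GPU with 11441 MiB: 0.5 × 11441 ≈ 5720 MiB for this process; conversely, to stay under roughly 4096 MiB you would set the fraction to 4096 / 11441 ≈ 0.358. The hypothetical helpers below only do this arithmetic, assuming 0 < fraction ≤ 1; the amount TensorFlow actually reserves may differ slightly.

```python
# Hypothetical helpers: plain arithmetic around per_process_gpu_memory_fraction.


def gpu_memory_cap_mib(total_mib, fraction):
    """Approximate per-GPU memory cap (MiB) for a given fraction."""
    if not 0 < fraction <= 1:
        raise ValueError("this sketch assumes 0 < fraction <= 1")
    return int(total_mib * fraction)


def fraction_for_budget(budget_mib, total_mib):
    """Fraction to set so the process stays under about `budget_mib` per GPU."""
    if not 0 < budget_mib <= total_mib:
        raise ValueError("budget must be positive and at most the GPU's total memory")
    return budget_mib / total_mib
```

For example, `session_config.gpu_options.per_process_gpu_memory_fraction = fraction_for_budget(4096, 11441)`.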

3. tf.ConfigProto can also be configured like this:

```python
session_config = tf.ConfigProto(
    log_device_placement=True,
    inter_op_parallelism_threads=0,
    intra_op_parallelism_threads=0,
    allow_soft_placement=True)

session_config.gpu_options.allow_growth = True
session_config.gpu_options.allocator_type = 'BFC'
```

What the options mean:

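log_device_placement=True

- When True, TensorFlow logs which device (CPU or GPU) each operation is placed on.

inter_op_parallelism_threads=0

- Number of threads used to run independent operations in parallel; for example, `c = a + b` and `d = e + f` can run at the same time. 0 lets TensorFlow choose an appropriate number.

intra_op_parallelism_threads=0

- Number of threads used inside a single operation that can be parallelized internally, such as a matrix multiplication. 0 lets TensorFlow choose an appropriate number.

allow_soft_placement=True

- Machines differ in which CPUs and GPUs they have. When True, if an operation cannot run on the device it was assigned to (for example, that GPU does not exist or the op has no GPU kernel), TensorFlow automatically places it on an available device such as the CPU instead of failing.

gpu_options.allow_growth=True

- Start with a small GPU memory allocation and grow it as needed, instead of reserving most of the GPU memory up front.

gpu_options.allocator_type='BFC'

- Use the BFC ("best-fit with coalescing") GPU memory allocator, which is also what TensorFlow uses by default.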
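The difference between the two thread-pool settings can be illustrated with a plain-Python analogy. This is not TensorFlow's scheduler, only a sketch of the idea: inter-op parallelism runs independent ops such as `c = a + b` and `d = e + f` concurrently, while intra-op parallelism splits one op into chunks handled by several threads. `add_op`, `intra_op_add` and `inter_op_run` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor


def add_op(x, y):
    """A toy element-wise 'op'."""
    return [a + b for a, b in zip(x, y)]


def intra_op_add(x, y, num_threads):
    """Intra-op parallelism: one add split into chunks, each chunk on a pool thread."""
    chunk = max(1, -(-len(x) // num_threads))  # ceiling division
    starts = range(0, len(x), chunk)
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        parts = pool.map(lambda s: add_op(x[s:s + chunk], y[s:s + chunk]), starts)
        # pool.map keeps chunk order, so concatenating restores the full result
        return [v for part in parts for v in part]


def inter_op_run(a, b, e, f, num_threads=2):
    """Inter-op parallelism: the independent ops c = a + b and d = e + f run concurrently."""
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        c_future = pool.submit(add_op, a, b)
        d_future = pool.submit(add_op, e, f)
        return c_future.result(), d_future.result()
```

In CPython the GIL means this shows the structure of the two kinds of parallelism, not a speedup; TensorFlow's own kernels run in native threads.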

Reference code:

```python
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import os
import argparse
import sys
import tempfile

# Import urllib
from six.moves import urllib

import numpy as np
import tensorflow as tf  # TensorFlow 1.x (uses tf.contrib and tf.layers)

FLAGS = None

# Make only GPU 0 visible to this process
os.environ['CUDA_VISIBLE_DEVICES'] = '0'

# Enable logging.
tf.logging.set_verbosity(tf.logging.INFO)


# Download the datasets unless local paths are given.
def maybe_download(train_data, test_data, predict_data):
    """Maybe downloads training data and returns train and test file names."""
    if train_data:
        train_file_name = train_data
    else:
        train_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://download.tensorflow.org/data/abalone_train.csv",
            train_file.name)
        train_file_name = train_file.name
        train_file.close()
        print("Training data is downloaded to %s" % train_file_name)

    if test_data:
        test_file_name = test_data
    else:
        test_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://download.tensorflow.org/data/abalone_test.csv", test_file.name)
        test_file_name = test_file.name
        test_file.close()
        print("Test data is downloaded to %s" % test_file_name)

    if predict_data:
        predict_file_name = predict_data
    else:
        predict_file = tempfile.NamedTemporaryFile(delete=False)
        urllib.request.urlretrieve(
            "http://download.tensorflow.org/data/abalone_predict.csv",
            predict_file.name)
        predict_file_name = predict_file.name
        predict_file.close()
        print("Prediction data is downloaded to %s" % predict_file_name)

    return train_file_name, test_file_name, predict_file_name


def model_fn(features, labels, mode, params):
    """Model function for Estimator."""
    # Connect the first hidden layer to input layer
    # (features["x"]) with relu activation
    first_hidden_layer = tf.layers.dense(features["x"], 10, activation=tf.nn.relu)

    # Connect the second hidden layer to first hidden layer with relu
    second_hidden_layer = tf.layers.dense(
        first_hidden_layer, 10, activation=tf.nn.relu)

    # Connect the output layer to second hidden layer (no activation fn)
    output_layer = tf.layers.dense(second_hidden_layer, 1)

    # Reshape output layer to 1-dim Tensor to return predictions
    predictions = tf.reshape(output_layer, [-1])

    # Provide an estimator spec for `ModeKeys.PREDICT`.
    if mode == tf.estimator.ModeKeys.PREDICT:
        return tf.estimator.EstimatorSpec(
            mode=mode,
            predictions={"ages": predictions})

    # Calculate loss using mean squared error
    loss = tf.losses.mean_squared_error(labels, predictions)

    # Calculate root mean squared error as additional eval metric
    eval_metric_ops = {
        "rmse": tf.metrics.root_mean_squared_error(
            tf.cast(labels, tf.float64), predictions)
    }

    optimizer = tf.train.GradientDescentOptimizer(
        learning_rate=params["learning_rate"])
    train_op = optimizer.minimize(
        loss=loss, global_step=tf.train.get_global_step())

    # Provide an estimator spec for `ModeKeys.EVAL` and `ModeKeys.TRAIN` modes.
    return tf.estimator.EstimatorSpec(
        mode=mode,
        loss=loss,
        train_op=train_op,
        eval_metric_ops=eval_metric_ops)


# main(): load the train/test/predict datasets, then train and evaluate.
def main(unused_argv):
    # Load datasets
    abalone_train, abalone_test, abalone_predict = maybe_download(
        FLAGS.train_data, FLAGS.test_data, FLAGS.predict_data)

    # Training examples (builtin `int` replaces the removed np.int alias)
    training_set = tf.contrib.learn.datasets.base.load_csv_without_header(
        filename=abalone_train, target_dtype=int, features_dtype=np.float64)

    # Test examples
    test_set = tf.contrib.learn.datasets.base.load_csv_without_header(
        filename=abalone_test, target_dtype=int, features_dtype=np.float64)

    # Set of 7 examples for which to predict abalone ages
    prediction_set = tf.contrib.learn.datasets.base.load_csv_without_header(
        filename=abalone_predict, target_dtype=int, features_dtype=np.float64)

    train_input_fn = tf.estimator.inputs.numpy_input_fn(
        x={"x": np.array(training_set.data)},
        y=np.array(training_set.target),
        num_epochs=None,
        shuffle=True)

    LEARNING_RATE = 0.1
    model_params = {"learning_rate": LEARNING_RATE}

    # GPU settings: log device placement and cap GPU memory at about 50%
    session_config = tf.ConfigProto(log_device_placement=True)
    session_config.gpu_options.per_process_gpu_memory_fraction = 0.5
    run_config = tf.estimator.RunConfig().replace(session_config=session_config)

    # Instantiate Estimator
    nn = tf.estimator.Estimator(model_fn=model_fn, config=run_config, params=model_params)

    print("training---")
    nn.train(input_fn=train_input_fn, steps=5000)

    # Score accuracy
    test_input_fn = tf.estimator.inputs.numpy_input_fn(
        x={"x": np.array(test_set.data)},
        y=np.array(test_set.target),
        num_epochs=1,
        shuffle=False)

    ev = nn.evaluate(input_fn=test_input_fn)
    print("Loss: %s" % ev["loss"])
    print("Root Mean Squared Error: %s" % ev["rmse"])


if __name__ == "__main__":
    parser = argparse.ArgumentParser()
    parser.register("type", "bool", lambda v: v.lower() == "true")
    parser.add_argument(
        "--train_data", type=str, default="", help="Path to the training data.")
    parser.add_argument(
        "--test_data", type=str, default="", help="Path to the test data.")
    parser.add_argument(
        "--predict_data",
        type=str,
        default="",
        help="Path to the prediction data.")
    FLAGS, unparsed = parser.parse_known_args()
    tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
```

Reference: Creating Estimators in tf.estimator