A Detailed Look at the ijkplayer Android Framework

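This article focuses on the core code, which is implemented in C. Wherever platform-specific wrapper interfaces or handling are involved, the Android platform is used as the example.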

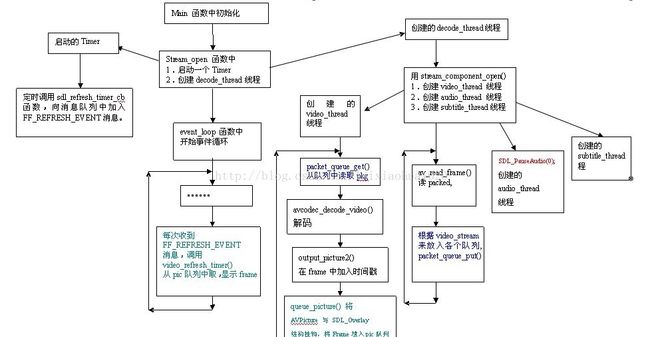

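1. FFplay source code flowcharts

Because ijkplayer is built on top of ffplay, the first step is to understand how the ffplay code works. FFplay is the sample player that ships with the FFmpeg project.

1). The flowchart of the FFplay source code is shown in the figure.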

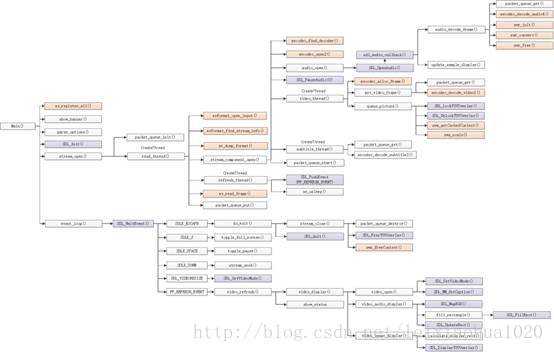

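2). The overall function call structure of FFplay is shown in the figure.

For a function-by-function walkthrough, see Lei Xiaohua's blog: http://blog.csdn.net/leixiaohua1020/article/details/39762143

3). ijkplayer rewrites ffplay.c at the lower level. It mainly removes the parts of ffplay that play audio and video through the SDL library, and adds implementations of hardware decoding, video rendering, and audio playback for mobile platforms. These parts are implemented differently on Android and iOS:

| Platform | Hardware decoding | Video rendering | Audio playback |
| --- | --- | --- | --- |
| iOS | VideoToolbox | OpenGL ES | AudioQueue |
| Android | MediaCodec | OpenGL ES, ANativeWindow | OpenSL ES, AudioTrack |

The table shows that ijkplayer does not currently support hardware audio decoding.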

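2. ijkplayer source directory layout

Opening the ijkplayer source tree shows the following main directories:

- android - upper-layer interface wrappers and platform-specific methods for Android
- config - configuration files used when building FFmpeg
- extra - dependency sources needed to build ijkplayer, such as FFmpeg and OpenSSL
- ijkmedia - core code
  - ijkj4a - used on Android so that C code can call into the Java layer; this directory is generated automatically by jni4android, another open-source project from bilibili
  - ijkplayer - player code for data download and decoding
  - ijksdl - audio/video rendering code
- ios - upper-layer interface wrappers and platform-specific methods for iOS
- tools - scripts for initializing the project

3. Initialization flow

The main job of initialization is to create the player object. In the IjkMediaPlayer class, the initPlayer method calls native_setup to do this.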

private void initPlayer(IjkLibLoader libLoader) {

loadLibrariesOnce(libLoader);

initNativeOnce();

Looper looper;

if ((looper = Looper.myLooper()) != null) {

mEventHandler = new EventHandler(this, looper);

} else if ((looper = Looper.getMainLooper()) != null) {

mEventHandler = new EventHandler(this, looper);

} else {

mEventHandler = null;

}

/*

* Native setup requires a weak reference to our object. It's easier to

* create it here than in C++.

*/

native_setup(new WeakReference(this));

}

native_setup is a native method. It ends up calling IjkMediaPlayer_native_setup in ijkplayer_jni.c.

For a walkthrough of how JNI methods are invoked in ijkplayer, see: https://www.jianshu.com/p/75c31d05c6d9

static void

IjkMediaPlayer_native_setup(JNIEnv *env, jobject thiz, jobject weak_this)

{

MPTRACE("%s\n", __func__);

IjkMediaPlayer *mp = ijkmp_android_create(message_loop);

JNI_CHECK_GOTO(mp, env, "java/lang/OutOfMemoryError", "mpjni: native_setup: ijkmp_create() failed", LABEL_RETURN);

jni_set_media_player(env, thiz, mp);

ijkmp_set_weak_thiz(mp, (*env)->NewGlobalRef(env, weak_this));

ijkmp_set_inject_opaque(mp, ijkmp_get_weak_thiz(mp));

ijkmp_android_set_mediacodec_select_callback(mp, mediacodec_select_callback, (*env)->NewGlobalRef(env, weak_this));

LABEL_RETURN:

ijkmp_dec_ref_p(&mp);

}

IjkMediaPlayer_native_setup creates the player through ijkmp_android_create:

IjkMediaPlayer *ijkmp_android_create(int(*msg_loop)(void*))

{

IjkMediaPlayer *mp = ijkmp_create(msg_loop);

if (!mp)

goto fail;

mp->ffplayer->vout = SDL_VoutAndroid_CreateForAndroidSurface();

if (!mp->ffplayer->vout)

goto fail;

mp->ffplayer->pipeline = ffpipeline_create_from_android(mp->ffplayer);

if (!mp->ffplayer->pipeline)

goto fail;

ffpipeline_set_vout(mp->ffplayer->pipeline, mp->ffplayer->vout);

return mp;

fail:

ijkmp_dec_ref_p(&mp);

return NULL;

}

This function does three main things:

1). Create the IjkMediaPlayer object. The FFPlayer object is created through ffp_create, and the message-handling function is set.

IjkMediaPlayer *ijkmp_create(int (*msg_loop)(void*))

{

IjkMediaPlayer *mp = (IjkMediaPlayer *) mallocz(sizeof(IjkMediaPlayer));

......

mp->ffplayer = ffp_create();

......

mp->msg_loop = msg_loop;

......

return mp;

}

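2). Create the video rendering object SDL_Vout. In the ijkplayer source, SDL_VoutAndroid_CreateForAndroidSurface (called above) forwards to SDL_VoutAndroid_CreateForANativeWindow: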

SDL_Vout *SDL_VoutAndroid_CreateForANativeWindow()

{

SDL_Vout *vout = SDL_Vout_CreateInternal(sizeof(SDL_Vout_Opaque));

if (!vout)

return NULL;

SDL_Vout_Opaque *opaque = vout->opaque;

opaque->native_window = NULL;

if (ISDL_Array__init(&opaque->overlay_manager, 32))

goto fail;

if (ISDL_Array__init(&opaque->overlay_pool, 32))

goto fail;

opaque->egl = IJK_EGL_create();

if (!opaque->egl)

goto fail;

vout->opaque_class = &g_nativewindow_class;

vout->create_overlay = func_create_overlay;

vout->free_l = func_free_l;

vout->display_overlay = func_display_overlay;

return vout;

fail:

func_free_l(vout);

return NULL;

}

3). Create the platform-specific IJKFF_Pipeline object, which covers video decoding and audio output:

IJKFF_Pipeline *ffpipeline_create_from_android(FFPlayer *ffp)

{

ALOGD("ffpipeline_create_from_android()\n");

IJKFF_Pipeline *pipeline = ffpipeline_alloc(&g_pipeline_class, sizeof(IJKFF_Pipeline_Opaque));

if (!pipeline)

return pipeline;

IJKFF_Pipeline_Opaque *opaque = pipeline->opaque;

opaque->ffp = ffp;

opaque->surface_mutex = SDL_CreateMutex();

opaque->left_volume = 1.0f;

opaque->right_volume = 1.0f;

if (!opaque->surface_mutex) {

ALOGE("ffpipeline-android:create SDL_CreateMutex failed\n");

goto fail;

}

pipeline->func_destroy = func_destroy;

pipeline->func_open_video_decoder = func_open_video_decoder;

pipeline->func_open_audio_output = func_open_audio_output;

return pipeline;

fail:

ffpipeline_free_p(&pipeline);

return NULL;

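}

For a more detailed walkthrough of ijkplayer initialization, see: https://www.jianshu.com/p/75c31d05c6d9

4. Core code analysis

ijkplayer is built on ffplay.c. This chapter follows that file and covers three areas: data reading, audio/video decoding, and audio/video rendering and synchronization. Readers are expected to have basic FFmpeg knowledge.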

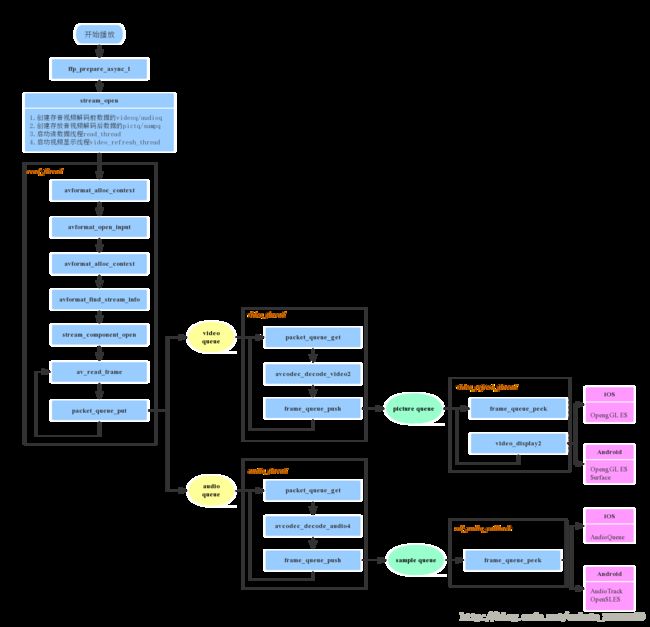

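The main call flow in ffplay.c is shown in the figure:

When the caller invokes prepareToPlay to start playback, ijkplayer eventually calls the following function in ffplay.c:

int ffp_prepare_async_l(FFPlayer *ffp, const char *file_name)

This function is the entry point for starting the player. It applies the player options, opens the audio output, and, most importantly, calls stream_open.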

static VideoState *stream_open(FFPlayer *ffp, const char *filename, AVInputFormat *iformat)

{

......

/* start video display */

if (frame_queue_init(&is->pictq, &is->videoq, ffp->pictq_size, 1) < 0)

goto fail;

if (frame_queue_init(&is->sampq, &is->audioq, SAMPLE_QUEUE_SIZE, 1) < 0)

goto fail;

if (packet_queue_init(&is->videoq) < 0 ||

packet_queue_init(&is->audioq) < 0 )

goto fail;

......

is->video_refresh_tid = SDL_CreateThreadEx(&is->_video_refresh_tid, video_refresh_thread, ffp, "ff_vout");

......

is->read_tid = SDL_CreateThreadEx(&is->_read_tid, read_thread, ffp, "ff_read");

......

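}

As the code shows, stream_open:

- creates videoq/audioq, which hold the video/audio data before decoding
- creates pictq/sampq, which hold the video/audio data after decoding
- creates the data reading thread read_thread
- creates the video rendering thread video_refresh_thread

Note: subtitle is a stream parallel to video and audio, and ffplay supports it as well. It creates two queues for the pre-decode and post-decode data, and starts a subtitle decoding thread when the file contains subtitles.

4.1 Data reading

Data reading is handled entirely inside FFmpeg. After data arrives from the network, FFmpeg demuxes it according to the container format, so what we get is pre-decode data with audio and video already separated. The steps are:

a). Create the context structure. This is the top-level structure and represents the input context.

ic = avformat_alloc_context();

b). Set the interrupt callback so that blocking operations can return immediately on error or when the player quits.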

ic->interrupt_callback.callback = decode_interrupt_cb;

ic->interrupt_callback.opaque = is;

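c). Open the input. This mainly probes the protocol type and, for a network source, sets up the network connection.

err = avformat_open_input(&ic, is->filename, is->iformat, &ffp->format_opts);

d). Probe the stream information, which yields the container format, the audio/video codec parameters, and so on.

err = avformat_find_stream_info(ic, opts);

e). Open the audio and video decoders. This opens the matching decoder and starts the corresponding decoding thread.

stream_component_open(ffp, st_index[AVMEDIA_TYPE_AUDIO]);

f). Read the media data. What comes back is pre-decode data with audio and video already separated.

ret = av_read_frame(ic, pkt);

g). Put the audio and video packets into their respective queues.

if (pkt->stream_index == is->audio_stream && pkt_in_play_range) {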

packet_queue_put(&is->audioq, pkt);

} else if (pkt->stream_index == is->video_stream && pkt_in_play_range && !(is->video_st && (is->video_st->disposition & AV_DISPOSITION_ATTACHED_PIC))) {

packet_queue_put(&is->videoq, pkt);

......

} else {

av_packet_unref(pkt);

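}

4.2 Audio and video decoding

ijkplayer supports both software and hardware video decoding. The preferred mode can be configured before playback starts and cannot be switched during playback. Hardware decoding uses VideoToolbox on iOS and MediaCodec on Android. Audio decoding in ijkplayer is software-only; hardware audio decoding is not supported yet.

4.2.1 Choosing the video decoding mode

In the function that opens the decoder: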

static int stream_component_open(FFPlayer *ffp, int stream_index)

{

......

codec = avcodec_find_decoder(avctx->codec_id);

......

if ((ret = avcodec_open2(avctx, codec, &opts)) < 0) {

goto fail;

}

......

case AVMEDIA_TYPE_VIDEO:

......

decoder_init(&is->viddec, avctx, &is->videoq, is->continue_read_thread);

ffp->node_vdec = ffpipeline_open_video_decoder(ffp->pipeline, ffp);

if (!ffp->node_vdec)

goto fail;

if ((ret = decoder_start(&is->viddec, video_thread, ffp, "ff_video_dec")) < 0)

goto out;

......

}

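The FFmpeg decoder is opened first, and then ffpipeline_open_video_decoder creates the IJKFF_Pipenode.

As described in Chapter 3, the pipeline was created by ffpipeline_create_from_android when the IjkMediaPlayer object was created. ffpipeline_open_video_decoder is implemented as follows: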

IJKFF_Pipenode* ffpipeline_open_video_decoder(IJKFF_Pipeline *pipeline, FFPlayer *ffp)

{

return pipeline->func_open_video_decoder(pipeline, ffp);

}

The func_open_video_decoder function pointer ends up pointing to func_open_video_decoder in ffpipeline_android.c, which is defined as follows:

static IJKFF_Pipenode *func_open_video_decoder(IJKFF_Pipeline *pipeline, FFPlayer *ffp)

{

IJKFF_Pipeline_Opaque *opaque = pipeline->opaque;

IJKFF_Pipenode *node = NULL;

if (ffp->mediacodec_all_videos || ffp->mediacodec_avc || ffp->mediacodec_hevc || ffp->mediacodec_mpeg2)

node = ffpipenode_create_video_decoder_from_android_mediacodec(ffp, pipeline, opaque->weak_vout);

if (!node) {

node = ffpipenode_create_video_decoder_from_ffplay(ffp);

}

return node;

}

The values of ffp->mediacodec_all_videos, ffp->mediacodec_avc, ffp->mediacodec_hevc, and ffp->mediacodec_mpeg2 must be configured before playback starts, as follows:

ijkmp_set_option_int(_mediaPlayer, IJKMP_OPT_CATEGORY_PLAYER, "xxxxx", 1);
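
The "try hardware first, fall back to software" shape of func_open_video_decoder can be sketched in isolation. This is a minimal illustration, not ijkplayer code: the Decoder struct and the functions try_hw_decoder, sw_decoder, and open_video_decoder are hypothetical names, and hardware availability is simulated with a flag.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for IJKFF_Pipenode: only a name tag. */
typedef struct Decoder {
    const char *name;
} Decoder;

static Decoder g_hw = { "mediacodec" };
static Decoder g_sw = { "ffplay" };

/* Simulated hardware creation: returns NULL when the device cannot do it. */
static Decoder *try_hw_decoder(int hw_available)
{
    return hw_available ? &g_hw : NULL;
}

/* Software decoding is assumed to be always available in this sketch. */
static Decoder *sw_decoder(void)
{
    return &g_sw;
}

/* Same shape as func_open_video_decoder: hardware only if requested,
 * software whenever no hardware node was obtained. */
static Decoder *open_video_decoder(int hw_requested, int hw_available)
{
    Decoder *node = NULL;
    if (hw_requested)
        node = try_hw_decoder(hw_available);
    if (!node)
        node = sw_decoder();
    return node;
}

static void example_usage(void)
{
    assert(open_video_decoder(1, 1) == &g_hw); /* requested and available */
    assert(open_video_decoder(1, 0) == &g_sw); /* requested, creation failed */
    assert(open_video_decoder(0, 1) == &g_sw); /* not requested */
}
```

The point of the pattern is that a failed hardware attempt is not an error: the caller always gets a usable node as long as the software path works.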

4.2.2 Audio and video decoding

The video decoding thread is video_thread, and the audio decoding thread is audio_thread.

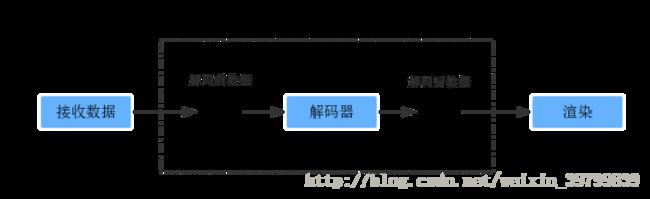

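Video and audio decoding follow the same basic flow: take one packet from the pre-decode buffer, decode it, and put the result into the matching post-decode buffer, as shown in the figure:

This article walks through the hardware video decoding path; the audio path can be studied by analogy.

The video decoding thread: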

static int video_thread(void *arg)

{

FFPlayer *ffp = (FFPlayer *)arg;

int ret = 0;

if (ffp->node_vdec) {

ret = ffpipenode_run_sync(ffp->node_vdec);

}

return ret;

}

ffpipenode_run_sync calls func_run_sync on the IJKFF_Pipenode object:

int ffpipenode_run_sync(IJKFF_Pipenode *node)

{

return node->func_run_sync(node);

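}

Which func_run_sync is used depends on the software/hardware decoding mode configured before playback. Assuming hardware decoding, the function pointer ends up pointing to func_run_sync in ffpipenode_android_mediacodec_vdec.c, which is defined as follows: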

static int func_run_sync(IJKFF_Pipenode *node)

{

.......

opaque->enqueue_thread = SDL_CreateThreadEx(&opaque->_enqueue_thread, enqueue_thread_func, node, "amediacodec_input_thread");

if (!opaque->enqueue_thread) {

ALOGE("%s: SDL_CreateThreadEx failed\n", __func__);

ret = -1;

goto fail;

}

while (!q->abort_request) {

int64_t timeUs = opaque->acodec_first_dequeue_output_request ? 0 : AMC_OUTPUT_TIMEOUT_US;

got_frame = 0;

ret = drain_output_buffer(env, node, timeUs, &dequeue_count, frame, &got_frame);

.......

if (got_frame) {

duration = (frame_rate.num && frame_rate.den ? av_q2d((AVRational){frame_rate.den, frame_rate.num}) : 0);

pts = (frame->pts == AV_NOPTS_VALUE) ? NAN : frame->pts * av_q2d(tb);

ret = ffp_queue_picture(ffp, frame, pts, duration, av_frame_get_pkt_pos(frame), is->viddec.pkt_serial);

......

}

}

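}

a). The function first starts an input thread whose entry function is enqueue_thread_func, defined as follows:

static int enqueue_thread_func(void *arg)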

{

......

while (!q->abort_request) {

ret = feed_input_buffer(env, node, AMC_INPUT_TIMEOUT_US, &dequeue_count);

if (ret != 0) {

goto fail;

}

}

......

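}

The input thread repeatedly calls feed_input_buffer to feed packets to the hardware decoder.

b). Once the input thread has been created, func_run_sync enters its while loop, which calls drain_output_buffer to fetch the data decoded by the hardware. The last argument of that function reports whether a complete frame was received.

c). When got_frame is true, the received frame is pushed into the pictq queue through ffp_queue_picture.

4.3 Audio/video rendering and synchronization

4.3.1 Audio output

ijkplayer outputs audio through OpenSL ES or AudioTrack on Android, and through AudioQueue on iOS.

The audio output node is created in ffp_prepare_async_l: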

ffp->aout = ffpipeline_open_audio_output(ffp->pipeline, ffp);

ffpipeline_open_audio_output calls the pipeline's func_open_audio_output function pointer. That pointer was assigned in ffpipeline_create_from_android during initialization and points to the following function:

static SDL_Aout *func_open_audio_output(IJKFF_Pipeline *pipeline, FFPlayer *ffp)

{

SDL_Aout *aout = NULL;

if (ffp->opensles) {

aout = SDL_AoutAndroid_CreateForOpenSLES();

} else {

aout = SDL_AoutAndroid_CreateForAudioTrack();

}

if (aout)

SDL_AoutSetStereoVolume(aout, pipeline->opaque->left_volume, pipeline->opaque->right_volume);

return aout;

}

SDL_AoutAndroid_CreateForOpenSLES is defined as follows. Its main job is to create the SDL_Aout object:

SDL_Aout *SDL_AoutAndroid_CreateForOpenSLES()

{

SDLTRACE("%s\n", __func__);

SDL_Aout *aout = SDL_Aout_CreateInternal(sizeof(SDL_Aout_Opaque));

if (!aout)

return NULL;

SDL_Aout_Opaque *opaque = aout->opaque;

opaque->wakeup_cond = SDL_CreateCond();

opaque->wakeup_mutex = SDL_CreateMutex();

int ret = 0;

SLObjectItf slObject = NULL;

ret = slCreateEngine(&slObject, 0, NULL, 0, NULL, NULL);

CHECK_OPENSL_ERROR(ret, "%s: slCreateEngine() failed", __func__);

opaque->slObject = slObject;

ret = (*slObject)->Realize(slObject, SL_BOOLEAN_FALSE);

CHECK_OPENSL_ERROR(ret, "%s: slObject->Realize() failed", __func__);

SLEngineItf slEngine = NULL;

ret = (*slObject)->GetInterface(slObject, SL_IID_ENGINE, &slEngine);

CHECK_OPENSL_ERROR(ret, "%s: slObject->GetInterface() failed", __func__);

opaque->slEngine = slEngine;

SLObjectItf slOutputMixObject = NULL;

const SLInterfaceID ids1[] = {SL_IID_VOLUME};

const SLboolean req1[] = {SL_BOOLEAN_FALSE};

ret = (*slEngine)->CreateOutputMix(slEngine, &slOutputMixObject, 1, ids1, req1);

CHECK_OPENSL_ERROR(ret, "%s: slEngine->CreateOutputMix() failed", __func__);

opaque->slOutputMixObject = slOutputMixObject;

ret = (*slOutputMixObject)->Realize(slOutputMixObject, SL_BOOLEAN_FALSE);

CHECK_OPENSL_ERROR(ret, "%s: slOutputMixObject->Realize() failed", __func__);

aout->free_l = aout_free_l;

aout->opaque_class = &g_opensles_class;

aout->open_audio = aout_open_audio;

aout->pause_audio = aout_pause_audio;

aout->flush_audio = aout_flush_audio;

aout->close_audio = aout_close_audio;

aout->set_volume = aout_set_volume;

aout->func_get_latency_seconds = aout_get_latency_seconds;

return aout;

fail:

aout_free_l(aout);

return NULL;

}

Back in stream_component_open: when it opens the decoder, it also calls audio_open to open the audio output device.

static int audio_open(FFPlayer *opaque, int64_t wanted_channel_layout, int wanted_nb_channels, int wanted_sample_rate, struct AudioParams *audio_hw_params)

{

FFPlayer *ffp = opaque;

VideoState *is = ffp->is;

SDL_AudioSpec wanted_spec, spec;

......

wanted_nb_channels = av_get_channel_layout_nb_channels(wanted_channel_layout);

wanted_spec.channels = wanted_nb_channels;

wanted_spec.freq = wanted_sample_rate;

wanted_spec.format = AUDIO_S16SYS;

wanted_spec.silence = 0;

wanted_spec.samples = FFMAX(SDL_AUDIO_MIN_BUFFER_SIZE, 2 << av_log2(wanted_spec.freq / SDL_AoutGetAudioPerSecondCallBacks(ffp->aout)));

wanted_spec.callback = sdl_audio_callback;

wanted_spec.userdata = opaque;

while (SDL_AoutOpenAudio(ffp->aout, &wanted_spec, &spec) < 0) {

.....

}

......

return spec.size;

}

audio_open fills an SDL_AudioSpec with the audio output parameters and passes it to the audio output through SDL_AoutOpenAudio:

int SDL_AoutOpenAudio(SDL_Aout *aout, const SDL_AudioSpec *desired, SDL_AudioSpec *obtained)

{

if (aout && desired && aout->open_audio)

return aout->open_audio(aout, desired, obtained);

return -1;

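}

On Android, the audio output that receives this configuration is OpenSL ES when ffp->opensles is set, and AudioTrack otherwise.

While running, the OpenSL ES module keeps invoking a callback to fetch PCM data for playback.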
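
The buffer size expression in audio_open, FFMAX(SDL_AUDIO_MIN_BUFFER_SIZE, 2 << av_log2(freq / callbacks_per_second)), picks the smallest power of two strictly greater than the number of samples per callback, with a lower bound. The sketch below reproduces that arithmetic without FFmpeg. The names floor_log2 and audio_buffer_samples are hypothetical, and the minimum of 512 samples is an assumption taken from upstream ffplay; the callback rate is passed in because the value returned by SDL_AoutGetAudioPerSecondCallBacks is not shown in this article.

```c
#include <assert.h>

/* Assumed lower bound, following upstream ffplay's SDL_AUDIO_MIN_BUFFER_SIZE. */
#define MIN_BUFFER_SIZE 512

/* floor(log2(v)) for v > 0, and 0 for v == 0 (same contract as av_log2). */
static int floor_log2(unsigned v)
{
    int n = 0;
    while (v >>= 1)
        n++;
    return n;
}

/* Buffer size in samples for a given sample rate and callback rate. */
static int audio_buffer_samples(int freq, int callbacks_per_sec)
{
    int wanted = 2 << floor_log2((unsigned)(freq / callbacks_per_sec));
    return wanted > MIN_BUFFER_SIZE ? wanted : MIN_BUFFER_SIZE;
}

static void example_usage(void)
{
    /* 44100 / 30 = 1470 samples per callback, so the buffer is 2048. */
    assert(audio_buffer_samples(44100, 30) == 2048);
    /* 8000 / 30 = 266 samples per callback, so the buffer is 512. */
    assert(audio_buffer_samples(8000, 30) == 512);
}
```

A power-of-two buffer that is larger than one callback's worth of samples keeps the callback rate at or below the requested rate.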

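4.3.2 Video rendering

Only the Android path that renders decoded YUV images with OpenGL is covered here. The rendering thread is video_refresh_thread, and the function that finally draws the image is video_image_display2, defined as follows: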

static void video_image_display2(FFPlayer *ffp)

{

VideoState *is = ffp->is;

Frame *vp;

Frame *sp = NULL;

vp = frame_queue_peek_last(&is->pictq);

......

SDL_VoutDisplayYUVOverlay(ffp->vout, vp->bmp);

......

}

The code shows that this function does two main things:

- call frame_queue_peek_last to read the video frame to display from pictq
- call SDL_VoutDisplayYUVOverlay to draw it

int SDL_VoutDisplayYUVOverlay(SDL_Vout *vout, SDL_VoutOverlay *overlay)

{

if (vout && overlay && vout->display_overlay)

return vout->display_overlay(vout, overlay);

return -1;

}

The display_overlay function pointer was covered in the initialization flow: SDL_VoutAndroid_CreateForANativeWindow() sets it to func_display_overlay, which draws the image with OpenGL.

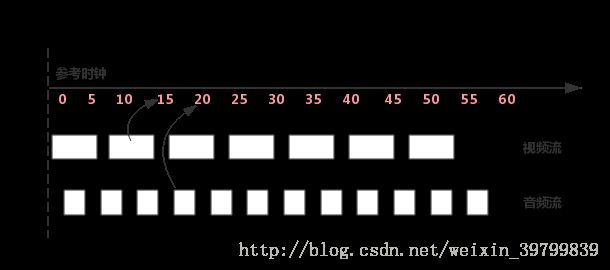

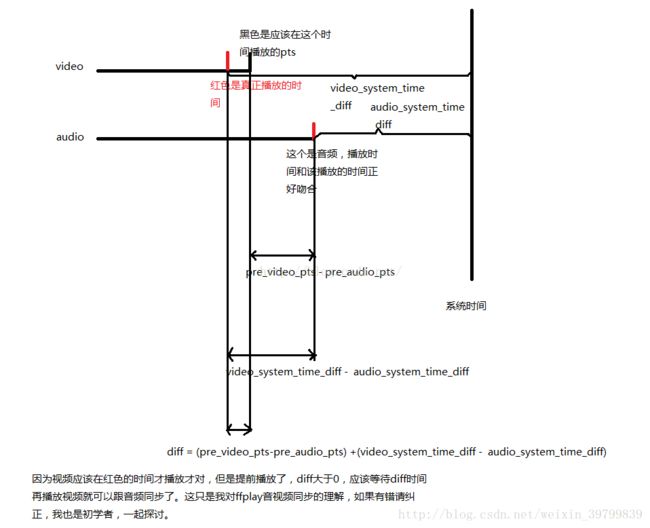

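4.3.3 Audio/video synchronization

For a player, audio/video synchronization is both a key point and a hard one; how well it works directly determines the quality of the player. The usual approach is to pick a reference clock, read the timestamp on each audio/video frame during playback, and schedule playback against the current time on the reference clock, as shown in the figure:

If a frame's timestamp is later than the current reference clock time, the player does not rush to play it and waits until the reference clock reaches the frame's timestamp. If the frame's timestamp is earlier than the reference clock time, the frame must be played "as soon as possible" or dropped, so that playback catches up with the reference clock.

There are several ways to choose the reference clock:

- use the video timestamps as the reference clock source
- use the audio timestamps as the reference clock source
- use an external time as the reference clock source

Because people are more sensitive to audio glitches than to video glitches, audio is preferred as the master clock whenever an audio stream is present.

ijkplayer also uses audio as the reference clock by default. Synchronization is handled mainly in the video rendering thread, video_refresh_thread: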

static int video_refresh_thread(void *arg)

{

FFPlayer *ffp = arg;

VideoState *is = ffp->is;

double remaining_time = 0.0;

while (!is->abort_request) {

if (remaining_time > 0.0)

av_usleep((int)(int64_t)(remaining_time * 1000000.0));

remaining_time = REFRESH_RATE;

if (is->show_mode != SHOW_MODE_NONE && (!is->paused || is->force_refresh))

video_refresh(ffp, &remaining_time);

}

return 0;

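}

The implementation shows that the loop does two things:

- sleep; remaining_time is computed in video_refresh
- call video_refresh to refresh the video frame

So the heart of synchronization is in video_refresh, which is analyzed below: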

lastvp = frame_queue_peek_last(&is->pictq);

vp = frame_queue_peek(&is->pictq);

......

/* compute nominal last_duration */

last_duration = vp_duration(is, lastvp, vp);

delay = compute_target_delay(ffp, last_duration, is);

The compute_target_delay function calculates how long to wait before displaying the current frame.

static double compute_target_delay(FFPlayer *ffp, double delay, VideoState *is)

{

double sync_threshold, diff = 0;

/* update delay to follow master synchronisation source */

if (get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER) {

/* if video is slave, we try to correct big delays by

duplicating or deleting a frame */

diff = get_clock(&is->vidclk) - get_master_clock(is);

/* skip or repeat frame. We take into account the

delay to compute the threshold. I still don't know

if it is the best guess */

sync_threshold = FFMAX(AV_SYNC_THRESHOLD_MIN, FFMIN(AV_SYNC_THRESHOLD_MAX, delay));

/* -- by bbcallen: replace is->max_frame_duration with AV_NOSYNC_THRESHOLD */

if (!isnan(diff) && fabs(diff) < AV_NOSYNC_THRESHOLD) {

if (diff <= -sync_threshold)

delay = FFMAX(0, delay + diff);

else if (diff >= sync_threshold && delay > AV_SYNC_FRAMEDUP_THRESHOLD)

delay = delay + diff;

else if (diff >= sync_threshold)

delay = 2 * delay;

}

}

.....

return delay;

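}

A note on how diff = get_clock(&is->vidclk) - get_master_clock(is) is computed (quoted from another source):

The final video/audio diff can be expressed as:

diff = (pre_video_pts - pre_audio_pts) + (video_system_time_diff - audio_system_time_diff)

Here pre_video_pts and pre_audio_pts are the most recently recorded video and audio timestamps, and each system_time_diff term is the system time elapsed since the corresponding clock was last updated.

The three branches in compute_target_delay mean:

- If the current video frame lags behind the master clock (diff <= -sync_threshold), the wait before the next picture is shortened.
- If the video frame is ahead and the previous frame's display duration is greater than the frame-duplication threshold (AV_SYNC_FRAMEDUP_THRESHOLD), the wait becomes the previous frame's duration plus the amount by which video is ahead.
- If the video frame is ahead and the previous frame's display duration is not greater than that threshold, the delay is doubled.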
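
The three rules can be checked with a stand-alone version of the delay adjustment. This is a sketch, not the ijkplayer function: target_delay takes diff as a parameter instead of reading the clocks, and the four threshold values are assumptions taken from upstream ffplay (ijkplayer may define them differently). The helpers dmax, dmin, and close_to are hypothetical.

```c
#include <assert.h>

/* Assumed threshold values in seconds, following upstream ffplay. */
#define SYNC_THRESHOLD_MIN      0.04
#define SYNC_THRESHOLD_MAX      0.1
#define SYNC_FRAMEDUP_THRESHOLD 0.1
#define NOSYNC_THRESHOLD        10.0

static double dmax(double a, double b) { return a > b ? a : b; }
static double dmin(double a, double b) { return a < b ? a : b; }

/* delay: nominal duration of the previous frame.
 * diff:  video clock minus master clock (negative means video is late). */
static double target_delay(double delay, double diff)
{
    double sync_threshold = dmax(SYNC_THRESHOLD_MIN, dmin(SYNC_THRESHOLD_MAX, delay));
    double abs_diff = diff < 0 ? -diff : diff;

    /* diff == diff is false only for NaN; huge differences are left alone. */
    if (diff == diff && abs_diff < NOSYNC_THRESHOLD) {
        if (diff <= -sync_threshold)
            delay = dmax(0.0, delay + diff);          /* video late: wait less */
        else if (diff >= sync_threshold && delay > SYNC_FRAMEDUP_THRESHOLD)
            delay = delay + diff;                     /* video early, long frame */
        else if (diff >= sync_threshold)
            delay = 2 * delay;                        /* video early, short frame */
    }
    return delay;
}

static int close_to(double a, double b)
{
    double d = a - b;
    return (d < 0 ? -d : d) < 1e-9;
}

static void example_usage(void)
{
    /* 40 ms frame, video 100 ms late: show the next frame immediately. */
    assert(target_delay(0.04, -0.1) == 0.0);
    /* 40 ms frame, video 100 ms early: double the wait to 80 ms. */
    assert(close_to(target_delay(0.04, 0.1), 0.08));
}
```

Small differences inside the threshold leave the delay untouched, which avoids constant jitter when audio and video are already close.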

Back in video_refresh:

time= av_gettime_relative()/1000000.0;

if (isnan(is->frame_timer) || time < is->frame_timer)

is->frame_timer = time;

if (time < is->frame_timer + delay) {

*remaining_time = FFMIN(is->frame_timer + delay - time, *remaining_time);

goto display;

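}

frame_timer is the time at which the previous frame was shown, so frame_timer + delay is when the current frame is due. If the system time has not reached that point yet, the code jumps to display; unless force_refresh is set, nothing is drawn, and video_refresh_thread sleeps for remaining_time before its next iteration.

is->frame_timer += delay;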

if (delay > 0 && time - is->frame_timer > AV_SYNC_THRESHOLD_MAX)

is->frame_timer = time;

SDL_LockMutex(is->pictq.mutex);

if (!isnan(vp->pts))

update_video_pts(is, vp->pts, vp->pos, vp->serial);

SDL_UnlockMutex(is->pictq.mutex);

if (frame_queue_nb_remaining(&is->pictq) > 1) {

Frame *nextvp = frame_queue_peek_next(&is->pictq);

duration = vp_duration(is, vp, nextvp);

if(!is->step && (ffp->framedrop > 0 || (ffp->framedrop && get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER)) && time > is->frame_timer + duration) {

frame_queue_next(&is->pictq);

goto retry;

}

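}

If the current frame is overdue by more than AV_SYNC_THRESHOLD_MAX, frame_timer is reset to the system time. Then, if more frames are queued and the current time is already past the end of the current frame's display window, the current frame is dropped and the next one is tried.

{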

frame_queue_next(&is->pictq);

is->force_refresh = 1;

SDL_LockMutex(ffp->is->play_mutex);

......

display:

/* display picture */

if (!ffp->display_disable && is->force_refresh && is->show_mode == SHOW_MODE_VIDEO && is->pictq.rindex_shown)

video_display2(ffp);

Otherwise the frame goes through the normal display path, and video_display2 starts rendering.
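
The wait/show/drop decision in video_refresh can be condensed into one function. This is a simplified sketch under stated assumptions: frame dropping is taken to be enabled, stepping and pausing are ignored, and the names FrameAction, decide_frame, and RESYNC_THRESHOLD are hypothetical (the threshold value follows upstream ffplay's AV_SYNC_THRESHOLD_MAX).

```c
#include <assert.h>

/* Assumed value in seconds, following upstream ffplay's AV_SYNC_THRESHOLD_MAX. */
#define RESYNC_THRESHOLD 0.1

typedef enum { FRAME_WAIT, FRAME_SHOW, FRAME_DROP } FrameAction;

/* now:           current system time in seconds
 * frame_timer:   when the previous frame was shown (updated in place)
 * delay:         result of the target-delay computation
 * has_next:      nonzero if another frame is queued after the current one
 * next_duration: display duration of the current frame
 * remaining:     sleep time for the refresh loop (only ever lowered) */
static FrameAction decide_frame(double now, double *frame_timer, double delay,
                                int has_next, double next_duration,
                                double *remaining)
{
    if (now < *frame_timer + delay) {
        double wait = *frame_timer + delay - now;
        if (wait < *remaining)
            *remaining = wait;
        return FRAME_WAIT;              /* not due yet */
    }
    *frame_timer += delay;
    if (delay > 0 && now - *frame_timer > RESYNC_THRESHOLD)
        *frame_timer = now;             /* too far behind: resynchronize */
    if (has_next && now > *frame_timer + next_duration)
        return FRAME_DROP;              /* already past this frame's window */
    return FRAME_SHOW;
}

static void example_usage(void)
{
    double timer = 10.0, remaining = 0.01;
    /* The frame is due at 10.04, so at 10.01 the loop keeps waiting. */
    assert(decide_frame(10.01, &timer, 0.04, 1, 0.04, &remaining) == FRAME_WAIT);
    /* At 10.05 the frame is due and not late, so it is shown. */
    assert(decide_frame(10.05, &timer, 0.04, 1, 0.04, &remaining) == FRAME_SHOW);
}
```

In the real function a dropped frame leads back to the retry label, so the same decision is made again for the next queued frame.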

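5. Event handling

During playback, the completion of or a change in certain actions, such as prepare finishing or rendering starting, must be reported to the outside as events so that the upper layer can apply its own business logic.

ijkplayer supports quite a few events. They are defined in ijkplayer/ijkmedia/ijkplayer/ff_ffmsg.h: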

#define FFP_MSG_FLUSH 0

#define FFP_MSG_ERROR 100 /* arg1 = error */

#define FFP_MSG_PREPARED 200

#define FFP_MSG_COMPLETED 300

#define FFP_MSG_VIDEO_SIZE_CHANGED 400 /* arg1 = width, arg2 = height */

#define FFP_MSG_SAR_CHANGED 401 /* arg1 = sar.num, arg2 = sar.den */

#define FFP_MSG_VIDEO_RENDERING_START 402

#define FFP_MSG_AUDIO_RENDERING_START 403

#define FFP_MSG_VIDEO_ROTATION_CHANGED 404 /* arg1 = degree */

#define FFP_MSG_BUFFERING_START 500

#define FFP_MSG_BUFFERING_END 501

#define FFP_MSG_BUFFERING_UPDATE 502 /* arg1 = buffering head position in time, arg2 = minimum percent in time or bytes */

#define FFP_MSG_BUFFERING_BYTES_UPDATE 503 /* arg1 = cached data in bytes, arg2 = high water mark */

#define FFP_MSG_BUFFERING_TIME_UPDATE 504 /* arg1 = cached duration in milliseconds, arg2 = high water mark */

#define FFP_MSG_SEEK_COMPLETE 600 /* arg1 = seek position, arg2 = error */

#define FFP_MSG_PLAYBACK_STATE_CHANGED 700

#define FFP_MSG_TIMED_TEXT 800

#define FFP_MSG_VIDEO_DECODER_OPEN 10001

5.1 Event reporting setup

In the IjkMediaPlayer initialization function:

static void

IjkMediaPlayer_native_setup(JNIEnv *env, jobject thiz, jobject weak_this)

{

MPTRACE("%s\n", __func__);

IjkMediaPlayer *mp = ijkmp_android_create(message_loop);

......

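}

the address of message_loop is passed as an argument to ijkmp_android_create. Following the code further shows that this address is eventually assigned to the msg_loop function pointer in IjkMediaPlayer: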

IjkMediaPlayer *ijkmp_create(int (*msg_loop)(void*))

{

......

mp->msg_loop = msg_loop;

......

}

When playback is prepared, a message thread is started:

static int ijkmp_prepare_async_l(IjkMediaPlayer *mp)

{

......

mp->msg_thread = SDL_CreateThreadEx(&mp->_msg_thread, ijkmp_msg_loop, mp, "ff_msg_loop");

......

}

ijkmp_msg_loop simply calls mp->msg_loop.

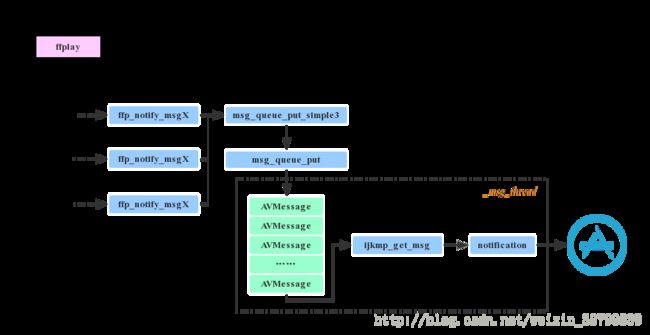

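5.2 Event reporting and handling

When the player core reports an event, it actually puts the message into a message queue. A separate thread keeps taking messages off the queue and reporting them to the outside. The flow is roughly as shown in the figure:

Taking the prepare-complete event as an example, here is the concrete reporting path in the code.

ffplay.c reports PREPARED by calling:

ffp_notify_msg1(ffp, FFP_MSG_PREPARED);

ffp_notify_msg1 is implemented as follows: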

inline static void ffp_notify_msg1(FFPlayer *ffp, int what) {

msg_queue_put_simple3(&ffp->msg_queue, what, 0, 0);

}

msg_queue_put_simple3 wraps the event and its arguments in an AVMessage object:

inline static void msg_queue_put_simple3(MessageQueue *q, int what, int arg1, int arg2)

{

AVMessage msg;

msg_init_msg(&msg);

msg.what = what;

msg.arg1 = arg1;

msg.arg2 = arg2;

msg_queue_put(q, &msg);

}

Finally, msg_queue_put_private is called to append the message to the queue.
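
The queue behind these calls is a plain FIFO of small message records. The sketch below shows that data structure only. It is a hypothetical, single-threaded simplification: Msg, MsgQueue, queue_put, and queue_get are illustrative names, and the locking and blocking that a queue shared between threads needs are left out.

```c
#include <assert.h>
#include <stdlib.h>

/* One queued event: an id plus two integer arguments. */
typedef struct Msg {
    int what, arg1, arg2;
    struct Msg *next;
} Msg;

/* FIFO implemented as a singly linked list with head and tail pointers. */
typedef struct MsgQueue {
    Msg *first, *last;
    int count;
} MsgQueue;

/* Append a message; returns 0 on success, -1 if allocation fails. */
static int queue_put(MsgQueue *q, int what, int arg1, int arg2)
{
    Msg *m = malloc(sizeof(*m));
    if (!m)
        return -1;
    m->what = what;
    m->arg1 = arg1;
    m->arg2 = arg2;
    m->next = NULL;
    if (q->last)
        q->last->next = m;
    else
        q->first = m;
    q->last = m;
    q->count++;
    return 0;
}

/* Pop the oldest message into *out; returns 1 if one was available, 0 if empty. */
static int queue_get(MsgQueue *q, Msg *out)
{
    Msg *m = q->first;
    if (!m)
        return 0;
    q->first = m->next;
    if (!q->first)
        q->last = NULL;
    q->count--;
    *out = *m;
    out->next = NULL;
    free(m);
    return 1;
}

static void example_usage(void)
{
    MsgQueue q = { NULL, NULL, 0 };
    Msg m;
    assert(queue_put(&q, 200, 0, 0) == 0);        /* e.g. a "prepared" event */
    assert(queue_put(&q, 400, 1280, 720) == 0);   /* e.g. a size change */
    assert(queue_get(&q, &m) == 1 && m.what == 200);
    assert(queue_get(&q, &m) == 1 && m.what == 400 && m.arg1 == 1280);
    assert(queue_get(&q, &m) == 0);               /* queue is empty again */
}
```

Messages come out in the order they were put in, which is what lets the upper layer see events in the order the player core raised them.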

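As mentioned in Section 5.1, the address of message_loop is passed in when the player is created, and it ends up running on its own thread. Here is the implementation; message_loop does its actual work in message_loop_n: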

static void message_loop_n(JNIEnv *env, IjkMediaPlayer *mp)

{

jobject weak_thiz = (jobject) ijkmp_get_weak_thiz(mp);

JNI_CHECK_GOTO(weak_thiz, env, NULL, "mpjni: message_loop_n: null weak_thiz", LABEL_RETURN);

while (1) {

AVMessage msg;

int retval = ijkmp_get_msg(mp, &msg, 1);

if (retval < 0)

break;

// block-get should never return 0

assert(retval > 0);

switch (msg.what) {

case FFP_MSG_FLUSH:

MPTRACE("FFP_MSG_FLUSH:\n");

post_event(env, weak_thiz, MEDIA_NOP, 0, 0);

break;

case FFP_MSG_ERROR:

MPTRACE("FFP_MSG_ERROR: %d\n", msg.arg1);

post_event(env, weak_thiz, MEDIA_ERROR, MEDIA_ERROR_IJK_PLAYER, msg.arg1);

break;

case FFP_MSG_PREPARED:

MPTRACE("FFP_MSG_PREPARED:\n");

post_event(env, weak_thiz, MEDIA_PREPARED, 0, 0);

break;

......

}

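}

message_loop_n takes messages off the queue with ijkmp_get_msg and forwards each one through post_event:

inline static void post_event(JNIEnv *env, jobject weak_this, int what, int arg1, int arg2)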

{

// MPTRACE("post_event(%p, %p, %d, %d, %d)", (void*)env, (void*) weak_this, what, arg1, arg2);

J4AC_IjkMediaPlayer__postEventFromNative(env, weak_this, what, arg1, arg2, NULL);

// MPTRACE("post_event()=void");

}

post_event calls J4AC_IjkMediaPlayer__postEventFromNative (the j4a directory implements calls from C into Java). Calling this function amounts to calling the postEventFromNative method of the IjkMediaPlayer.java class.

@CalledByNative

private static void postEventFromNative(Object weakThiz, int what,

int arg1, int arg2, Object obj) {

if (weakThiz == null)

return;

@SuppressWarnings("rawtypes")

IjkMediaPlayer mp = (IjkMediaPlayer) ((WeakReference) weakThiz).get();

if (mp == null) {

return;

}

if (what == MEDIA_INFO && arg1 == MEDIA_INFO_STARTED_AS_NEXT) {

// this acquires the wakelock if needed, and sets the client side

// state

mp.start();

}

if (mp.mEventHandler != null) {

Message m = mp.mEventHandler.obtainMessage(what, arg1, arg2, obj);

mp.mEventHandler.sendMessage(m);

}

}

The message sent to mEventHandler is then processed in the handler's handleMessage method:

public void handleMessage(Message msg) {

IjkMediaPlayer player = mWeakPlayer.get();

if (player == null || player.mNativeMediaPlayer == 0) {

DebugLog.w(TAG,

"IjkMediaPlayer went away with unhandled events");

return;

}

switch (msg.what) {

case MEDIA_PREPARED:

player.notifyOnPrepared();

return;

......

}

For the MEDIA_PREPARED message, handleMessage calls IjkMediaPlayer's notifyOnPrepared() method, which is defined in the base class AbstractMediaPlayer.java:

protected final void notifyOnPrepared() {

if (mOnPreparedListener != null)

mOnPreparedListener.onPrepared(this);

}

The member variable mOnPreparedListener is assigned through its setter:

public final void setOnPreparedListener(OnPreparedListener listener) {

mOnPreparedListener = listener;

}

The setter is called from openVideo (in IjkVideoView in the ijkplayer sample code):

private void openVideo() {

if (mUri == null || mSurfaceHolder == null) {

// not ready for playback just yet, will try again later

return;

}

// we shouldn't clear the target state, because somebody might have

// called start() previously

release(false);

AudioManager am = (AudioManager) mAppContext.getSystemService(Context.AUDIO_SERVICE);

am.requestAudioFocus(null, AudioManager.STREAM_MUSIC, AudioManager.AUDIOFOCUS_GAIN);

try {

mMediaPlayer = createPlayer(mSettings.getPlayer());

// TODO: create SubtitleController in MediaPlayer, but we need

// a context for the subtitle renderers

final Context context = getContext();

// REMOVED: SubtitleController

// REMOVED: mAudioSession

mMediaPlayer.setOnPreparedListener(mPreparedListener);

mMediaPlayer.setOnVideoSizeChangedListener(mSizeChangedListener);

......

}

Chapters 3, 4, and 5 of this article are based mainly on the following article:

https://www.jianshu.com/p/daf0a61cc1e0