Creating a Spark Project in IDEA and Running It with spark-submit

Creating a Spark Project in IDEA on Windows and Submitting It for Execution

1. When creating the project, select Scala and then choose IDEA as the project type.

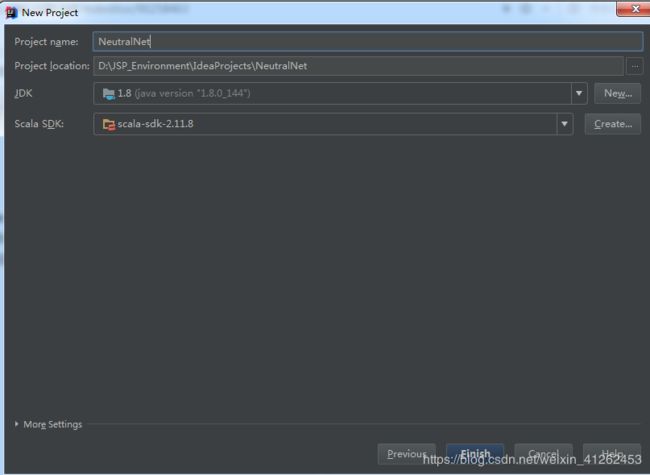

2. Set the Java JDK and the Scala SDK. Set Project SDK to your JDK path via "New…", set Scala SDK to your Scala path via "Create…", and give the project a name.

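3. In the window that opens, click "Modules". In the right-hand pane, right-click the "src" folder and choose "New Folder…" to create a "main" folder, as shown below. In the same way, select "main" and create the "java", "resources", and "scala" folders inside it, then set each folder's type with "Mark as". The resulting directory structure is shown below.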

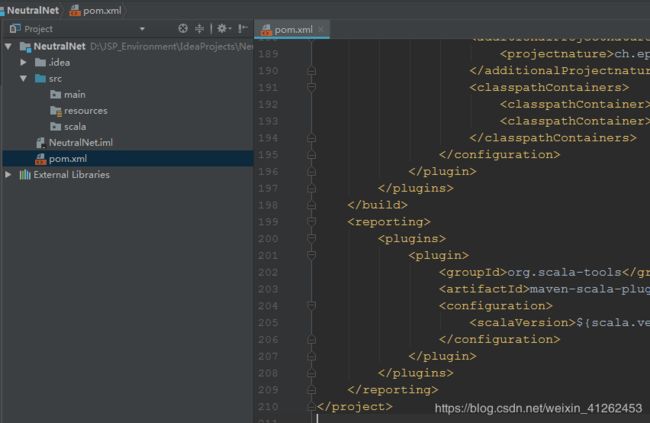
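For readers who prefer a terminal, the folder layout from step 3 can be sketched in a Unix-like shell (for example Git Bash on Windows). This is only an illustration: `make_layout` is a hypothetical helper name, and marking the folders as source or resource roots still has to be done in IDEA with "Mark as".

```shell
# Create the src/main/java, src/main/resources and src/main/scala folders.
# make_layout is a hypothetical helper name, not part of IDEA or Maven.
make_layout() {
  root="${1:-.}"   # project root; defaults to the current directory
  for dir in java resources scala; do
    mkdir -p "$root/src/main/$dir"
  done
}
```

Calling `make_layout .` from the project root would create the three folders if they do not exist yet.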

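4. Add a pom.xml file to the project directory.

The contents of pom.xml follow. Many dependencies that are not used yet are included as well; adjust the versions and dependencies to match your own environment and needs.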

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>zjut</groupId>
  <artifactId>NeutralNet</artifactId>
  <version>1.0</version>
  <inceptionYear>2008</inceptionYear>

  <properties>
    <scala.version>2.11.8</scala.version>
    <kafka.version>0.9.0.0</kafka.version>
    <spark.version>2.2.0</spark.version>
    <hadoop.version>2.6.0-cdh5.7.0</hadoop.version>
    <hbase.version>1.2.0-cdh5.7.0</hbase.version>
  </properties>

  <!-- Add the Cloudera repository -->
  <repositories>
    <repository>
      <id>cloudera</id>
      <url>https://repository.cloudera.com/artifactory/cloudera-repos</url>
    </repository>
  </repositories>

  <dependencies>
    <dependency>
      <groupId>org.scala-lang</groupId>
      <artifactId>scala-library</artifactId>
      <version>${scala.version}</version>
    </dependency>

    <!-- Kafka dependency -->
    <!--
    <dependency>
      <groupId>org.apache.kafka</groupId>
      <artifactId>kafka_2.11</artifactId>
      <version>${kafka.version}</version>
    </dependency>
    -->

    <!-- Hadoop dependency -->
    <dependency>
      <groupId>org.apache.hadoop</groupId>
      <artifactId>hadoop-client</artifactId>
      <version>${hadoop.version}</version>
    </dependency>

    <!-- HBase dependencies
    <dependency>
      <groupId>org.apache.hbase</groupId>
      <artifactId>hbase-client</artifactId>
      <version>${hbase.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.hbase</groupId>
      <artifactId>hbase-server</artifactId>
      <version>${hbase.version}</version>
    </dependency>
    -->

    <!-- Spark Streaming dependency
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>
    -->

    <!-- Spark Streaming and Flume integration dependencies -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming-flume_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming-flume-sink_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-streaming-kafka-0-8_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.commons</groupId>
      <artifactId>commons-lang3</artifactId>
      <version>3.5</version>
    </dependency>

    <!-- Spark SQL dependency -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <artifactId>spark-sql_2.11</artifactId>
      <version>${spark.version}</version>
    </dependency>
    <dependency>
      <groupId>com.fasterxml.jackson.module</groupId>
      <artifactId>jackson-module-scala_2.11</artifactId>
      <version>2.6.5</version>
    </dependency>
    <dependency>
      <groupId>net.jpountz.lz4</groupId>
      <artifactId>lz4</artifactId>
      <version>1.3.0</version>
    </dependency>
    <dependency>
      <groupId>mysql</groupId>
      <artifactId>mysql-connector-java</artifactId>
      <version>5.1.38</version>
    </dependency>
    <dependency>
      <groupId>org.apache.flume.flume-ng-clients</groupId>
      <artifactId>flume-ng-log4jappender</artifactId>
      <version>1.6.0</version>
    </dependency>
    <!-- https://mvnrepository.com/artifact/org.mortbay.jetty/jetty-util -->
  </dependencies>

  <build>
    <!--
    <sourceDirectory>src/main/scala</sourceDirectory>
    <testSourceDirectory>src/test/scala</testSourceDirectory>
    -->
    <plugins>
      <plugin>
        <groupId>org.scala-tools</groupId>
        <artifactId>maven-scala-plugin</artifactId>
        <executions>
          <execution>
            <goals>
              <goal>compile</goal>
              <goal>testCompile</goal>
            </goals>
          </execution>
        </executions>
        <configuration>
          <scalaVersion>${scala.version}</scalaVersion>
          <args>
            <arg>-target:jvm-1.5</arg>
          </args>
        </configuration>
      </plugin>
      <plugin>
        <artifactId>maven-assembly-plugin</artifactId>
        <version>3.1.0</version>
        <configuration>
          <descriptorRefs>
            <descriptorRef>jar-with-dependencies</descriptorRef>
          </descriptorRefs>
          <archive>
            <manifest>
              <mainClass>com.immoc.spark.project.spark.ImmocStatStreamingApp</mainClass>
            </manifest>
          </archive>
        </configuration>
        <executions>
          <execution>
            <id>make-assembly</id> <!-- this is used for inheritance merges -->
            <phase>package</phase> <!-- bind to the packaging phase -->
            <goals>
              <goal>single</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-eclipse-plugin</artifactId>
        <configuration>
          <downloadSources>true</downloadSources>
          <buildcommands>
            <buildcommand>ch.epfl.lamp.sdt.core.scalabuilder</buildcommand>
          </buildcommands>
          <additionalProjectnatures>
            <projectnature>ch.epfl.lamp.sdt.core.scalanature</projectnature>
          </additionalProjectnatures>
          <classpathContainers>
            <classpathContainer>org.eclipse.jdt.launching.JRE_CONTAINER</classpathContainer>
            <classpathContainer>ch.epfl.lamp.sdt.launching.SCALA_CONTAINER</classpathContainer>
          </classpathContainers>
        </configuration>
      </plugin>
    </plugins>
  </build>

  <reporting>
    <plugins>
      <plugin>
        <groupId>org.scala-tools</groupId>
        <artifactId>maven-scala-plugin</artifactId>
        <configuration>
          <scalaVersion>${scala.version}</scalaVersion>
        </configuration>
      </plugin>
    </plugins>
  </reporting>
</project>

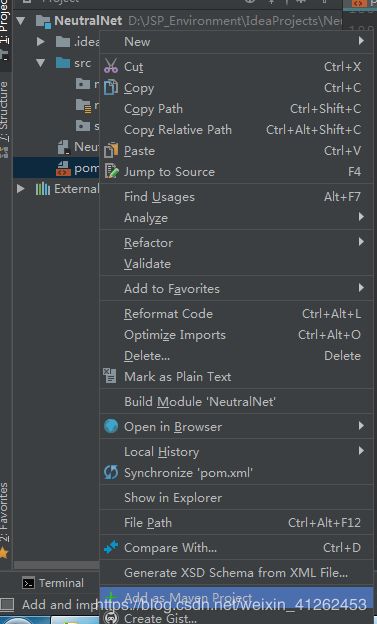

5. Right-click the pom file and choose "Add as Maven Project".

6. If pom.xml reports dependency errors, point IDEA at your own Maven repository location and settings file, and enable automatic import of changes.

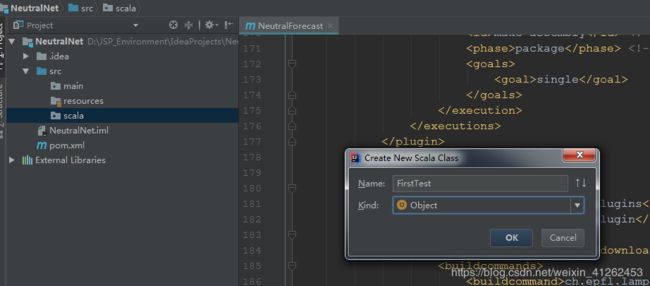

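7. In the scala package, create a new FirstTest class.

Contents of FirstTest: /etc/profile is the environment variable configuration file on my CentOS machine, and /software/wordcount is the output location I chose, so the word count is written to a wordcount directory under /software.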

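package scala

import org.apache.spark.{SparkConf, SparkContext}

object FirstTest {
  def main(args: Array[String]): Unit = {
    // No master is set here; it is expected to come from spark-submit or its configuration.
    val conf = new SparkConf().setAppName("wordcount")
    val sc = new SparkContext(conf)

    // Read the input file and split every line into words.
    val input = sc.textFile("/etc/profile")
    val words = input.flatMap(line => line.split(" "))

    // Count the occurrences of each word.
    val counts = words.map(word => (word, 1)).reduceByKey(_ + _)

    // saveAsTextFile returns Unit, so there is nothing to assign.
    counts.saveAsTextFile("/software/wordcount")

    sc.stop()
  }
}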

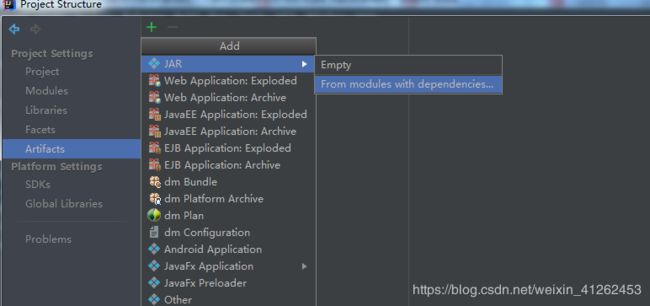

8. Specify the version of the generated artifact, and make sure to set the entry (main) class used for packaging.

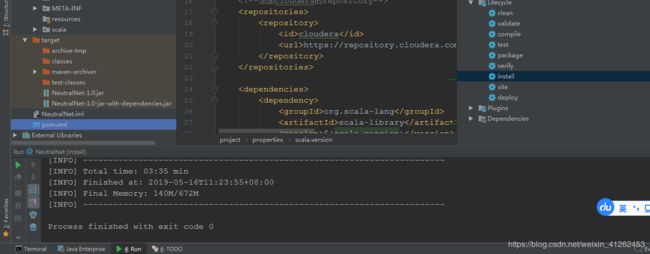

9. In the Maven Projects panel on the right, double-click install under Lifecycle.

When it finishes, upload NeutralNet-1.0-jar-with-dependencies.jar to the Spark environment:

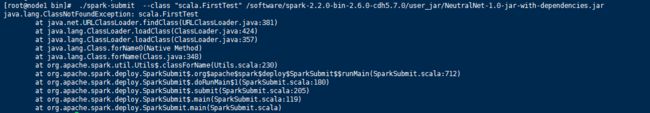
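The build and upload in step 9 can also be sketched from a terminal. The helper names `build_jar` and `upload_jar` and the remote target are assumptions for illustration; the article only shows the IDEA workflow, and `scp` is just one possible way to copy the file. The jar name follows from the artifactId and version in pom.xml plus the `jar-with-dependencies` descriptor.

```shell
# build_jar and upload_jar are hypothetical helper names.
build_jar() {
  # Runs the Maven lifecycle up to the install phase,
  # the same phase that is double-clicked in IDEA.
  mvn install
}

upload_jar() {
  # $1 is a placeholder remote target such as user@host:/some/dir (not from the article).
  remote="$1"
  scp target/NeutralNet-1.0-jar-with-dependencies.jar "$remote"
}
```

Both functions are meant to be run from the project root, where pom.xml and the target folder live.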

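10. Copy the packaged jar to the Spark environment, start the Spark cluster, and submit the job. The most common problem is an error saying that the class cannot be found.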

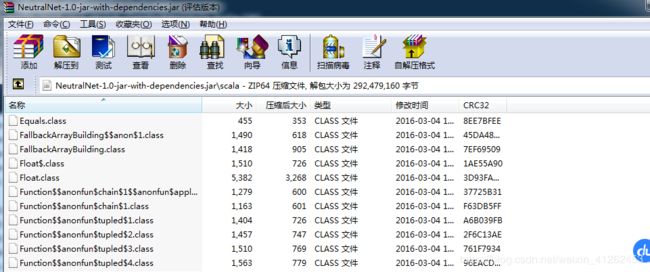

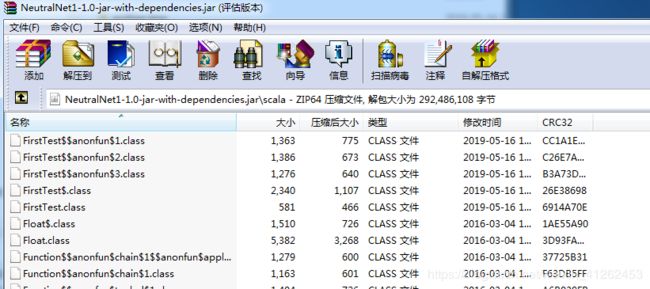

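If you open the jar you built, you will see that it really does not contain the scala.FirstTest class.

Fix: in the Build menu, choose Build Artifacts and then Rebuild, instead of running install directly.

You can now see that NeutralNet1-1.0.jar contains the FirstTest.class file.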

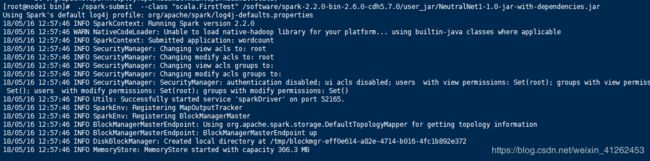
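Whether a class made it into the jar can also be checked from a terminal before submitting. The sketch below assumes the JDK's `jar` tool is on the PATH; `class_to_entry` and `jar_has_class` are hypothetical helper names, not part of Spark or Maven.

```shell
# Convert a fully qualified class name into its path inside a jar,
# e.g. scala.FirstTest becomes scala/FirstTest.class.
class_to_entry() {
  printf '%s.class\n' "$(printf '%s' "$1" | tr '.' '/')"
}

# Succeeds (exit status 0) if the jar given as $1 contains the class given as $2.
jar_has_class() {
  # "jar tf" lists the entries of an archive, one per line;
  # grep -x -F matches the whole line as a fixed string.
  jar tf "$1" | grep -qxF "$(class_to_entry "$2")"
}
```

For example, `jar_has_class NeutralNet1-1.0-jar-with-dependencies.jar scala.FirstTest && echo found` would print `found` only when the class file is present.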

Resubmit with: ./spark-submit --class "scala.FirstTest" /software/spark-2.2.0-bin-2.6.0-cdh5.7.0/user_jar/NeutralNet1-1.0-jar-with-dependencies.jar where /software/spark-2.2.0-bin-2.6.0-cdh5.7.0/user_jar/ is the directory that holds my jar. The job starts running, as shown below:
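The submit command above does not name a master, so it presumably relies on the cluster's default configuration. As a hedged sketch, the same call can be wrapped with an explicit `--master` option. The variables `SPARK_SUBMIT` and `MASTER_URL`, the function name `submit_wordcount`, and the default value `local[2]` are assumptions for illustration; replace the master URL with that of your own cluster.

```shell
# Illustrative variables, not from the article.
SPARK_SUBMIT="${SPARK_SUBMIT:-./spark-submit}"
MASTER_URL="${MASTER_URL:-local[2]}"   # assumed default: run locally with 2 threads
JAR="/software/spark-2.2.0-bin-2.6.0-cdh5.7.0/user_jar/NeutralNet1-1.0-jar-with-dependencies.jar"

# submit_wordcount is a hypothetical wrapper around the command from the article.
submit_wordcount() {
  "$SPARK_SUBMIT" \
    --class scala.FirstTest \
    --master "$MASTER_URL" \
    "$JAR"
}
```

Keeping the class name, master, and jar path in one place makes it easier to resubmit after each rebuild.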

11. In my wordcount directory, you can see the output:
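A minimal way to look at the result from a terminal, assuming the job wrote to the local filesystem path /software/wordcount: `saveAsTextFile` writes a directory of part files, so the sketch below concatenates them. `show_wordcount` is a hypothetical helper name. If the output went to HDFS instead, `hdfs dfs -cat` on the same path would be the analogous command.

```shell
# Print the word counts written by saveAsTextFile.
# show_wordcount is a hypothetical helper name.
show_wordcount() {
  out_dir="${1:-/software/wordcount}"   # output directory used in FirstTest
  # saveAsTextFile produces files named part-00000, part-00001, ...
  cat "$out_dir"/part-*
}
```

Each line of the output is a (word,count) pair as produced by the job.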