Scraping Dianping (大众点评) data (font-based anti-scraping)


Project description

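I came across this project on the 码市 platform. The existing crawler can already fetch the required data, but its crawl efficiency and its handling of anti-scraping measures still need to be improved.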

Project analysis

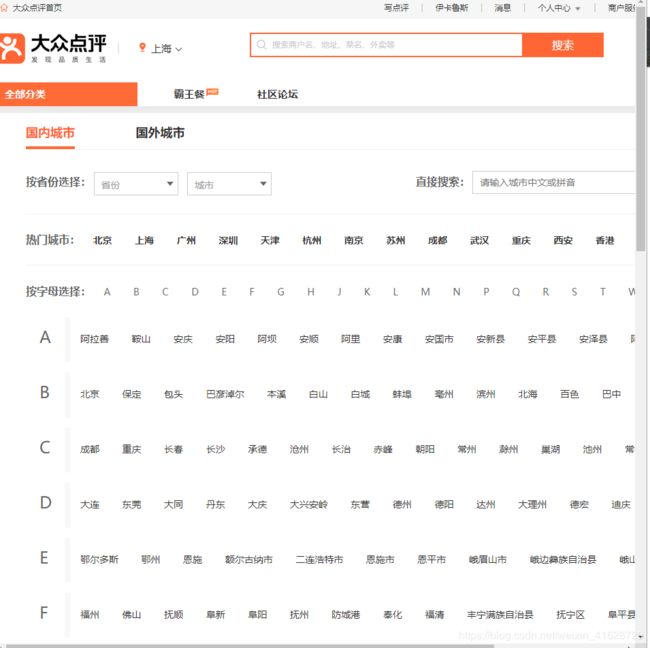

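1. Open the Dianping home page, http://www.dianping.com/. The site normally prompts you to choose a city. The project, however, requires data for every city rather than a single one, so the first step is to collect the list of URLs for all cities.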


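2. Taking Shanghai as an example, look at what the city page offers. The content is grouped into several top-level categories, and each category links through to the pages we want. These categories are therefore a natural entry point, so we also need the list of category URLs for each city.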

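3. Taking the Food (美食) category as an example: what the food page shows directly is mostly popular recommendations and other time-sensitive content, so I chose to go in through the business-district filter instead. That means we need a list of URLs for all business districts.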

After some digging, I found a dl tag tucked away in a corner of the page that contains the business-district URLs.
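As a rough illustration of this step, the sketch below pulls every link out of a dl element using only the standard library. The HTML snippet, its URLs, the id `region-nav`, and the names `DlLinkCollector` and `extract_district_urls` are all made up for the example; the real page markup should be inspected before relying on any of them.

```python
from html.parser import HTMLParser


class DlLinkCollector(HTMLParser):
    """Collect the href of every <a> found inside any <dl> element."""

    def __init__(self):
        super().__init__()
        self.dl_depth = 0  # greater than 0 while the parser is inside a <dl>
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "dl":
            self.dl_depth += 1
        elif tag == "a" and self.dl_depth > 0:
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "dl" and self.dl_depth > 0:
            self.dl_depth -= 1


# Hypothetical markup standing in for the district filter on a listing page.
SAMPLE_HTML = """
<a href="http://www.dianping.com/shanghai/ch10/hot">hot</a>
<dl id="region-nav">
  <dd><a href="http://www.dianping.com/shanghai/ch10/r2">Xuhui</a></dd>
  <dd><a href="http://www.dianping.com/shanghai/ch10/r5">Pudong</a></dd>
</dl>
"""


def extract_district_urls(html):
    """Return the links that sit inside a <dl>, in document order."""
    collector = DlLinkCollector()
    collector.feed(html)
    return collector.links


if __name__ == "__main__":
    print(extract_district_urls(SAMPLE_HTML))
```

Inside a Scrapy callback the same extraction would more likely be a selector query such as `response.xpath('//dl//a/@href').getall()`, narrowed to whatever id or class the real dl carries.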

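4. Clicking into Xuhui District (徐汇区) brings up the merchant list, which is exactly what we need. The detailed merchant information, however, still has to be fetched from each merchant's own page.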

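5. Open any merchant page and you will find that Dianping protects the key merchant details with font-based anti-scraping: the text is rendered through custom glyphs, and those glyphs are spread across several font files.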

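After downloading the font files, we match each character code that appears in the page to the glyph it renders, building a dictionary that is then used to substitute the real text back in.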
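The idea can be sketched without a real font file. Below, a short hard-coded list stands in for the glyph order that fontTools reports for a font, and a three-character string stands in for the full character list. Both are invented for illustration, as are the function names `build_entity_map` and `decode_entities`.

```python
import re

# Hypothetical glyph order; a real list would come from TTFont(path).getGlyphOrder().
GLYPH_ORDER = [".notdef", "x", "unie001", "unie002", "unie003"]
# Hypothetical characters, in the same order as the glyphs after the first two.
CHARACTERS = "店中美"


def build_entity_map(glyph_order, characters, skip=2):
    """Map entity prefixes such as '&#xe001' to the character each glyph draws."""
    names = glyph_order[skip:]
    if len(names) > len(characters):
        raise ValueError("more glyphs than characters")
    return {name.replace("uni", "&#x"): ch for name, ch in zip(names, characters)}


def decode_entities(text, entity_map):
    """Replace every known '&#x....;' entity in text; leave unknown ones untouched."""

    def substitute(match):
        return entity_map.get(match.group(1), match.group(0))

    return re.sub(r"(&#x[0-9a-fA-F]+);", substitute, text)


if __name__ == "__main__":
    mapping = build_entity_map(GLYPH_ORDER, CHARACTERS)
    print(decode_entities("&#xe003;&#xe001;&#xe0ff;", mapping))
```

Leaving unknown entities in place, rather than raising, makes it easy to spot codes that are missing from the table, for example after the site rotates its font files.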

Final crawl results

dianping spider

items

import scrapy


class DianpingItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    f_c_classification = scrapy.Field()    # city and category, e.g. 上海美食 (Shanghai Food)
    food_classification = scrapy.Field()   # cuisine, e.g. 西餐 (Western food)
    f_c_r_classification = scrapy.Field()  # district, e.g. 徐汇区 (Xuhui District)
    food_shop_name = scrapy.Field()        # shop name
    food_shop_addr = scrapy.Field()        # shop address
    food_shop_phone = scrapy.Field()       # shop phone number

pipeline

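One point to note here: Scrapy itself is already asynchronous, so in principle storing items in the database should not hold up the crawl. (Scrapy is an open-source web crawling framework written in Python on top of Twisted; at the time of writing it was maintained by Scrapinghub Ltd.) Be aware, though, that pymysql calls are blocking: in the pipeline code that follows, each insert and commit runs synchronously, and the imported twisted.enterprise.adbapi is never used.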

from . import items
import pymysql
from scrapy.exceptions import DropItem
from twisted.enterprise import adbapi


class DianpingPipeline(object):
    def __init__(self):
        # Phone numbers seen so far, used to detect duplicate shops
        self.dianping_set = set()

    def process_item(self, item, spider):
        if isinstance(item, items.DianpingItem):
            print('Cleaning data +++++++++++++++++++++++++++++++++++++++++++++++++++')
            # Clean the data: drop any item whose phone number has already been seen
            if item['food_shop_phone'] in self.dianping_set:
                raise DropItem("Duplicate shop found: %s" % item)
            else:
                self.dianping_set.add(item['food_shop_phone'])
        return item

# ==========================================================================================================================
# Purpose of asynchronous storage: the spider yields items faster than they can be written,
# so the writes are meant to be spread out to keep the database from backing up.
class MysqlTwistedPipline(object):
    def __init__(self):
        self.conn = pymysql.connect(host="127.0.0.1", user="admin", password="Root110qwe", db="dianping", charset='utf8mb4', port=3306)
        self.cursor = self.conn.cursor()
        print('Connected to the database')

    def process_item(self, item, spider):
        insert_sql = 'insert into da_zhongdianping(f_c_classification,food_classification,f_c_r_classification,food_shop_name,food_shop_addr,food_shop_phone)values(%s,%s,%s,%s,%s,%s)'
        self.cursor.execute(insert_sql, (
            item['f_c_classification'], item['food_classification'], item['f_c_r_classification'], item['food_shop_name'], item['food_shop_addr'], item['food_shop_phone']
        ))
        print('Row written to the database')
        self.conn.commit()
        # Hand the item back so that any later pipeline still receives it
        return item

    # The spider argument is required; without it Scrapy raises
    # TypeError: close_spider() takes 1 positional argument but 2 were given
    def close_spider(self, spider):
        self.cursor.close()
        self.conn.close()
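Because the pipeline above commits after every single item, one inexpensive way to reduce database round trips is to buffer rows and write them in batches. The sketch below shows that idea with the standard library's sqlite3 standing in for MySQL so that it runs anywhere. The class name `BatchWriter`, the table `shops`, and the batch size are all assumptions; this is not the author's implementation.

```python
import sqlite3


class BatchWriter:
    """Buffer rows and insert them in batches with executemany()."""

    def __init__(self, conn, batch_size=100):
        self.conn = conn
        self.batch_size = batch_size
        self.buffer = []
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS shops (name TEXT, addr TEXT, phone TEXT)"
        )

    def add(self, name, addr, phone):
        """Queue one row; write the batch once it is full."""
        self.buffer.append((name, addr, phone))
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        """Write all queued rows with a single commit."""
        if not self.buffer:
            return
        self.conn.executemany("INSERT INTO shops VALUES (?, ?, ?)", self.buffer)
        self.conn.commit()  # one commit per batch instead of one per row
        self.buffer = []


def demo():
    """Insert five rows in batches of two and return the final row count."""
    conn = sqlite3.connect(":memory:")
    writer = BatchWriter(conn, batch_size=2)
    for i in range(5):
        writer.add("shop%d" % i, "addr%d" % i, "phone%d" % i)
    writer.flush()  # write the leftover row, as close_spider() would
    count = conn.execute("SELECT COUNT(*) FROM shops").fetchone()[0]
    conn.close()
    return count


if __name__ == "__main__":
    print(demo())
```

In a Scrapy pipeline, `add()` would be called from `process_item` and `flush()` from `close_spider`. With pymysql the equivalent call is `cursor.executemany` with `%s` placeholders. Batching still blocks while a batch is written; a truly non-blocking version would go through something like `twisted.enterprise.adbapi.ConnectionPool`.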

Generating the font mapping tables

import re
import json

from fontTools.ttLib import TTFont

from .base_address_map import Base_adderss_map
from .base_phone_map import Base_phone_map

# Characters in the same order as the glyphs in the font files.
# Both functions below used an identical copy of this list, so it is defined once here.
CHARACTERS = list('1234567890店中美家馆小车大市公酒行国品发电金心业商司超生装园场食有新限天面工服海华水房饰城乐汽香部利子老艺花专东肉菜学福饭人百餐茶务通味所山区门药银农龙停尚安广鑫一容动南具源兴鲜记时机烤文康信果阳理锅宝达地儿衣特产西批坊州牛佳化五米修爱北养卖建材三会鸡室红站德王光名丽油院堂烧江社合星货型村自科快便日民营和活童明器烟育宾精屋经居庄石顺林尔县手厅销用好客火雅盛体旅之鞋辣作粉包楼校鱼平彩上吧保永万物教吃设医正造丰健点汤网庆技斯洗料配汇木缘加麻联卫川泰色世方寓风幼羊烫来高厂兰阿贝皮全女拉成云维贸道术运都口博河瑞宏京际路祥青镇厨培力惠连马鸿钢训影甲助窗布富牌头四多妆吉苑沙恒隆春干饼氏里二管诚制售嘉长轩杂副清计黄讯太鸭号街交与叉附近层旁对巷栋环省桥湖段乡厦府铺内侧元购前幢滨处向座下県凤港开关景泉塘放昌线湾政步宁解白田町溪十八古双胜本单同九迎第台玉锦底后七斜期武岭松角纪朝峰六振珠局岗洲横边济井办汉代临弄团外塔杨铁浦字年岛陵原梅进荣友虹央桂沿事津凯莲丁秀柳集紫旗张谷的是不了很还个也这我就在以可到错没去过感次要比觉看得说常真们但最喜哈么别位能较境非为欢然他挺着价那意种想出员两推做排实分间甜度起满给热完格荐喝等其再几只现朋候样直而买于般豆量选奶打每评少算又因情找些份置适什蛋师气你姐棒试总定啊足级整带虾如态且尝主话强当更板知己无酸让入啦式笑赞片酱差像提队走嫩才刚午接重串回晚微周值费性桌拍跟块调糕')


def creat_addr_base_map(font):
    '''Take a downloaded font file (a TTFont) and write its glyph-to-character mapping as a dictionary.'''
    data = {}
    # Get the glyph names (character codes) in font order
    keys = font.getGlyphOrder()
    # keys[0] and keys[1] are skipped: glyph i + 2 pairs with CHARACTERS[i]
    for i in range(len(keys[2:])):
        key = keys[i + 2].replace('uni', '&#x')
        data[key] = CHARACTERS[i]
    with open('base_address_map.py', 'w', encoding='utf-8') as f:
        dict_str = json.dumps(data, ensure_ascii=False, indent=4)
        f.write('Base_adderss_map = ')
        f.write(dict_str)


def creat_phone_base_map(font):
    '''Take a downloaded font file (a TTFont) and write its glyph-to-character mapping as a dictionary.'''
    data = {}
    # Get the glyph names (character codes) in font order
    keys = font.getGlyphOrder()
    # keys[0] and keys[1] are skipped: glyph i + 2 pairs with CHARACTERS[i]
    for i in range(len(keys[2:])):
        key = keys[i + 2].replace('uni', '&#x')
        data[key] = CHARACTERS[i]
    with open('base_phone_map.py', 'w', encoding='utf-8') as f:
        dict_str = json.dumps(data, ensure_ascii=False, indent=4)
        f.write('Base_phone_map = ')
        f.write(dict_str)

#

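def extract_information(addr):
    '''Decode an obfuscated address or phone HTML fragment into readable text.'''
    # Raw segments with markup intact; each one still carries the class name of its glyph
    addre = addr.split(';')
    # NOTE: the search strings in these replace() calls appear to have been lost when the
    # post was published (probably markup to strip); the calls are kept exactly as they appear.
    addresses = addr.replace('', '').replace(' ', '').replace('', '').replace(' ', '')
    # Entity codes such as '#xe123', captured without the leading '&' and trailing ';'
    aaa = re.findall(r'&(.*?);', addresses)
    aa = addresses.split(';')
    real_address = ''
    for i in range(len(aaa)):
        if 'address' in addre[i]:
            # Glyph rendered with the address font
            address = aa[i].replace('&' + aaa[i], Base_adderss_map['&' + aaa[i]])
            real_address = real_address + address
        elif 'num' in addre[i]:
            # Glyph rendered with the digit (phone) font
            address = aa[i].replace('&' + aaa[i], Base_phone_map['&' + aaa[i]])
            real_address = real_address + address
    return real_address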
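A regex-based variant of the same decoding is sketched below. It picks the lookup table from the class attribute of each wrapped glyph and, unlike the loop above, also keeps any plain text that follows the last glyph. The tag shape, the two tiny tables, and the name `decode_fragment` are assumptions made for the example, not something taken from the site or from the author's code.

```python
import re

# Tiny stand-ins for Base_adderss_map and Base_phone_map (values invented for the example).
ADDRESS_MAP = {"&#xe001": "路", "&#xe002": "号"}
PHONE_MAP = {"&#xf001": "1", "&#xf002": "8"}

# One obfuscated glyph: <tag class="address|num">&#x....;</tag> (assumed tag shape).
GLYPH_RE = re.compile(r'<(\w+) class="(address|num)">(&#x[0-9a-fA-F]+);</\1>')


def decode_fragment(fragment):
    """Swap each wrapped glyph for its character, keeping plain text in between."""

    def substitute(match):
        table = ADDRESS_MAP if match.group(2) == "address" else PHONE_MAP
        # Fall back to the raw entity if the code is missing from the table.
        return table.get(match.group(3), match.group(3) + ";")

    return GLYPH_RE.sub(substitute, fragment)


if __name__ == "__main__":
    sample = (
        '中山<e class="address">&#xe001;</e>'
        '<d class="num">&#xf001;</d><d class="num">&#xf002;</d>'
        '<e class="address">&#xe002;</e>'
    )
    print(decode_fragment(sample))
```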

Configuration in settings

USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.142 Safari/537.36'

# Obey robots.txt rules
ROBOTSTXT_OBEY = False

DEFAULT_REQUEST_HEADERS = {
    'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8,application/signed-exchange;v=b3',
    'Accept-Encoding': 'gzip, deflate',
    'Accept-Language': 'zh-CN,zh;q=0.9',
    'Cache-Control': 'no-cache',
    'Connection': 'keep-alive',
    'Cookie': '_lxsdk_cuid=16c19704861c8-05e2ad89692f0f-c343162-1fa400-16c19704861c8; _lxsdk=16c19704861c8-05e2ad89692f0f-c343162-1fa400-16c19704861c8; _hc.v=11ff6ffb-2a80-d450-6f3b-81beb5474db7.1563794885; s_ViewType=10; aburl=1; Hm_lvt_dbeeb675516927da776beeb1d9802bd4=1563796204; Hm_lvt_4c4fc10949f0d691f3a2cc4ca5065397=1563978129; cityInfo=%7B%22cityId%22%3A7%2C%22cityName%22%3A%22%E6%B7%B1%E5%9C%B3%22%2C%22provinceId%22%3A0%2C%22parentCityId%22%3A0%2C%22cityOrderId%22%3A0%2C%22isActiveCity%22%3Afalse%2C%22cityEnName%22%3A%22shenzhen%22%2C%22cityPyName%22%3Anull%2C%22cityAreaCode%22%3Anull%2C%22cityAbbrCode%22%3Anull%2C%22isOverseasCity%22%3Afalse%2C%22isScenery%22%3Afalse%2C%22TuanGouFlag%22%3A0%2C%22cityLevel%22%3A0%2C%22appHotLevel%22%3A0%2C%22gLat%22%3A0%2C%22gLng%22%3A0%2C%22directURL%22%3Anull%2C%22standardEnName%22%3Anull%7D; ctu=2b7c08185b82e8ae4df8f87aec20348bf116a030d673eff3a9b54efd230bd068; ua=%E4%BC%8A%E5%8D%A1%E9%B2%81%E6%96%AF; cy=2; cye=beijing; _lx_utm=utm_source%3DBaidu%26utm_medium%3Dorganic; uamo=13622051920; _lxsdk_s=16c40465ce1-90c-b2-244%7C%7C59; lgtoken=078fec8b0-6a63-4c88-a368-41f57b0b2e1f; dper=46ff226a44351c57f61b4d66b0808f67bbf2e7d28de0fc3c302206817496e522b7e4a640ee66b8b1d0cb5f6210dcaa60975820fe362bca5418135d1b64c9db57b6bbc5e1088dda31ba9076facc2e4fb8fd218c36cc8294645208e58936b97033; ll=7fd06e815b796be3df069dec7836c3df',
    'Host': 'www.dianping.com',
    'Pragma': 'no-cache',
    'Upgrade-Insecure-Requests': '1',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/75.0.3770.142 Safari/537.36',
}
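Since the project description says the handling of anti-scraping measures still needs strengthening, a few of Scrapy's built-in throttling settings are a natural place to start. The fragment below is only a sketch: the setting names are standard Scrapy settings, but every value is a guess that would have to be tuned against how the site actually responds.

```python
# Hypothetical throttling values; tune them against what the site tolerates.
DOWNLOAD_DELAY = 2                  # base delay in seconds between requests to the same site
RANDOMIZE_DOWNLOAD_DELAY = True     # wait 0.5x to 1.5x DOWNLOAD_DELAY instead of a fixed interval
CONCURRENT_REQUESTS_PER_DOMAIN = 4  # cap on parallel requests per domain
AUTOTHROTTLE_ENABLED = True         # adapt the delay to observed response latency
AUTOTHROTTLE_START_DELAY = 2        # initial delay used by AutoThrottle, in seconds
AUTOTHROTTLE_MAX_DELAY = 30         # upper bound on the delay AutoThrottle may apply
RETRY_TIMES = 3                     # how many times a failed request is retried
```

Slower, randomized requests trade crawl speed for a lower chance of being blocked, so these values pull against the efficiency goal and need to be balanced with it.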

ITEM_PIPELINES = {
    'dianping.pipelines.DianpingPipeline': 300,
    'dianping.pipelines.MysqlTwistedPipline': 301,