Used Car Price Prediction | Task 2: EDA (Exploratory Data Analysis)

Core content:

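EDA (Exploratory Data Analysis):

A data analysis approach that explores existing data (especially raw data from surveys or observations) under as few prior assumptions as possible, uncovering the structure and patterns of the data through plots, tables, equation fitting, and computed summary statistics.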

Flowchart:

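This article uses the Datawhale "Zero-Basics Introduction to Data Mining" competition on Tianchi, Used Car Price Prediction, as its running example.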

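1. Common data science and visualization libraries

pandas, numpy, scipy, matplotlib, seaborn, etc. (all installed with the pip package manager; using a mirror located in China can speed up downloading and installation)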

import warnings

warnings.filterwarnings('ignore')

# Import the warnings module and use a filter to suppress warning messages.

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import missingno as msno

2. Load the data

path = './datalab/231784/'

Train_data = pd.read_csv(path+'used_car_train_20200313.csv', sep=' ')

Test_data = pd.read_csv(path+'used_car_testA_20200313.csv', sep=' ')

# sep=' ': fields in these files are separated by spaces rather than commas, so the delimiter must be given explicitly
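The effect of sep=' ' can be checked with a minimal sketch that parses a tiny, made-up space-delimited sample. io.StringIO stands in for the real file. The column names mimic the competition files, but the values are invented.

```python
import io

import pandas as pd

# Made-up, space-delimited sample standing in for used_car_train_20200313.csv
raw = io.StringIO(
    "SaleID name power price\n"
    "0 736 60 1850\n"
    "1 2262 0 3600\n"
)

# With sep=' ' every space-separated field becomes its own column
df_space = pd.read_csv(raw, sep=" ")

# With the default sep=',' each whole line would land in a single column
raw.seek(0)
df_comma = pd.read_csv(raw)

print(df_space.shape, df_comma.shape)
```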

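Then take a rough first look at Train_data and Test_data using head(), tail(), and the shape attribute:

# Look at the first and last rows of the table with head() and tail().
# DataFrame.append was removed in pandas 2.0, so pd.concat is used to stack them.
pd.concat([Train_data.head(), Train_data.tail()])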

Train_data.shape

3. Overview of the data

## 1) Use describe() to get familiar with the summary statistics

Train_data.describe()

## 2) Use info() to get familiar with the data types

Train_data.info()
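The information from describe() and info() can also be condensed into a single table with one row per column. The sketch below assumes a small made-up DataFrame in place of Train_data. The helper name column_overview is hypothetical.

```python
import numpy as np
import pandas as pd


def column_overview(df: pd.DataFrame) -> pd.DataFrame:
    """Hypothetical helper: one row per column with dtype, missing count and cardinality."""
    return pd.DataFrame({
        "dtype": df.dtypes.astype(str),
        "n_missing": df.isnull().sum(),
        "missing_ratio": df.isnull().mean(),
        "n_unique": df.nunique(),  # nunique() does not count NaN
    })


# Made-up data standing in for Train_data
toy = pd.DataFrame({
    "power": [60, 0, 163, 193],
    "bodyType": [1.0, np.nan, 2.0, 1.0],
    "notRepairedDamage": ["0.0", "-", "0.0", "1.0"],
})

overview = column_overview(toy)
print(overview)
```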

4. Check for missing values and anomalies

## 1) Check how many NaN values each column contains and visualize them (do the same for Test_data)

Train_data.isnull().sum()

# Visualize NaN counts

missing = Train_data.isnull().sum()

missing = missing[missing > 0]

missing.sort_values(inplace=True)

missing.plot.bar()

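Visualizing the missing data shows at a glance how many NaN values each column has. If a column has only a few missing values, fill them in and keep using the column. Alternatively, leave the gaps for a tree model such as LightGBM (lgb), which can handle missing values itself. If a column is missing too many values, drop it altogether.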
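The fill-or-drop rule described above can be sketched as follows. Several details are assumptions for illustration and do not come from the original analysis: the 50% threshold, the median/mode fill choices, and the helper name handle_missing. When the data goes to a tree model such as LightGBM, skipping the fill step is also an option.

```python
import numpy as np
import pandas as pd


def handle_missing(df: pd.DataFrame, drop_threshold: float = 0.5) -> pd.DataFrame:
    """Hypothetical helper: drop mostly-empty columns, fill the remaining gaps."""
    out = df.copy()
    missing_ratio = out.isnull().mean()
    # Columns missing more than drop_threshold of their values are removed
    out = out.drop(columns=missing_ratio[missing_ratio > drop_threshold].index)
    for col in [c for c in out.columns if out[c].isnull().any()]:
        if pd.api.types.is_numeric_dtype(out[col]):
            out[col] = out[col].fillna(out[col].median())  # numeric: median
        else:
            out[col] = out[col].fillna(out[col].mode()[0])  # categorical: most frequent value
    return out


toy = pd.DataFrame({
    "bodyType": [1.0, np.nan, 2.0, 1.0],
    "fuelType": ["a", "b", None, "a"],
    "mostly_empty": [np.nan, np.nan, np.nan, 5.0],
})
clean = handle_missing(toy)
print(clean)
```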

Randomly sample 250 and 1,000 rows to inspect the missing values:

# Visualize missing values in Train_data

msno.matrix(Train_data.sample(250))

msno.bar(Train_data.sample(1000))

# Visualize missing values in Test_data

msno.matrix(Test_data.sample(250))

msno.bar(Test_data.sample(1000))

Check for anomalous values:

Train_data.info()

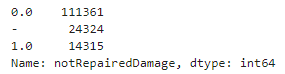

Only notRepairedDamage has an unusual type, object; all other columns are numeric.

Display its values:

Train_data['notRepairedDamage'].value_counts()

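The value '-' also marks a missing entry. Many models handle NaN directly, so we do not impute it for now and simply replace it with NaN (do the same for Test_data).

Key point: for each feature, decide whether it should be filled (and how: mean, zero, mode, etc.), dropped, or whether the samples should first be split into groups that are predicted with different feature models.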

## Replace '-' with NaN

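# Assign the result back: inplace=True on a selected column is chained assignment and may not modify Train_data under pandas Copy-on-Write
Train_data['notRepairedDamage'] = Train_data['notRepairedDamage'].replace('-', np.nan)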

## Display notRepairedDamage again

Train_data['notRepairedDamage'].value_counts()

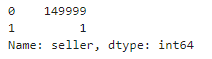
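Another option is to turn the '-' placeholder into NaN while the file is being read, using read_csv's na_values parameter. A side effect is that the column can then be parsed as float instead of as strings. Below is a minimal sketch on a made-up in-memory sample, with io.StringIO standing in for the real file.

```python
import io

import numpy as np
import pandas as pd

sample = "SaleID notRepairedDamage\n0 0.0\n1 -\n2 1.0\n3 -\n"

# Option 1: read as-is, then replace '-' afterwards (the column stays string-valued)
after = pd.read_csv(io.StringIO(sample), sep=" ")
after["notRepairedDamage"] = after["notRepairedDamage"].replace("-", np.nan)

# Option 2: treat '-' as missing at read time (the column is parsed as float)
at_read = pd.read_csv(io.StringIO(sample), sep=" ", na_values=["-"])

# dropna=False keeps the NaN count visible in value_counts()
print(at_read["notRepairedDamage"].value_counts(dropna=False))
```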

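Categorical features that are extremely skewed (almost every row has the same value) can be deleted, since they most likely carry no information. seller and offerType are two examples: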

Train_data["seller"].value_counts()

Train_data["offerType"].value_counts()
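Rather than reading value_counts() column by column, you can compute the share of each column's most frequent value directly and use it to flag near-constant features. This is a minimal sketch on made-up data. The 0.99 threshold and the helper name dominant_share are assumptions.

```python
import pandas as pd


def dominant_share(s: pd.Series) -> float:
    """Hypothetical helper: fraction of rows taken by the most frequent value (NaN counted as a value)."""
    return s.value_counts(normalize=True, dropna=False).iloc[0]


toy = pd.DataFrame({
    "seller": [0] * 199 + [1],   # almost every row has the same value
    "offerType": [0] * 200,      # constant
    "gearbox": [0, 1] * 100,     # balanced
})

shares = toy.apply(dominant_share)
near_constant = shares[shares > 0.99].index.tolist()
print(shares)
print("candidates to drop:", near_constant)
```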


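Outliers deserve a dedicated analysis. For anomalous feature values (values far from the mean, or special symbols), check whether the corresponding labels are also anomalous. Then decide whether such outliers should be removed or replaced with normal values, and whether they come from recording errors or reflect a genuine anomaly of the car itself.

Delete seller and offerType: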

del Train_data["seller"]

del Train_data["offerType"]

del Test_data["seller"]

del Test_data["offerType"]
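For the outlier analysis described above, one common starting point is Tukey's IQR rule, which the original text does not use. The rule flags any value lying more than 1.5 × IQR below the first quartile or above the third quartile. The sketch below runs on made-up prices. The helper name iqr_outlier_mask is hypothetical, and the factor 1.5 is the conventional choice, not one taken from the source.

```python
import pandas as pd


def iqr_outlier_mask(s: pd.Series, k: float = 1.5) -> pd.Series:
    """Hypothetical helper: True where a value lies outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, q3 = s.quantile(0.25), s.quantile(0.75)
    iqr = q3 - q1
    return (s < q1 - k * iqr) | (s > q3 + k * iqr)


prices = pd.Series([1200, 1500, 1800, 2000, 2500, 3000, 3200, 95000])
mask = iqr_outlier_mask(prices)
print(prices[mask])  # only the extreme price is flagged
```

The mask only identifies candidates. Whether flagged rows are removed, capped, or kept remains the judgment call described above.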

5. Understand the distribution of the target (price)

Train_data['price']

Train_data['price'].value_counts()

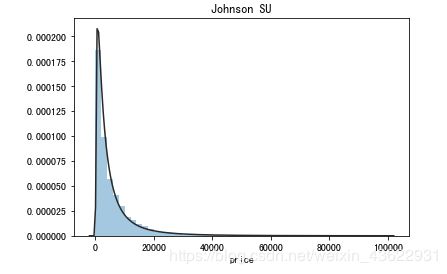

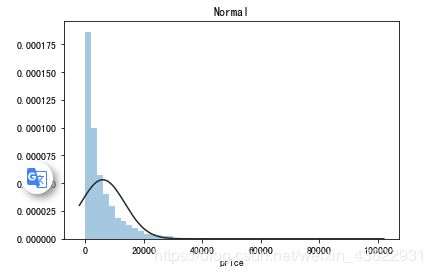

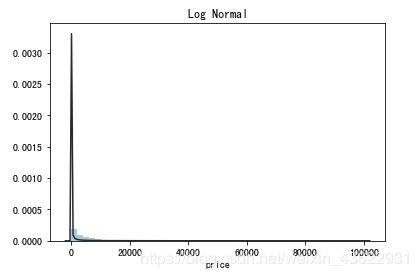

1) Overall distribution of price: fit normal, log-normal, and unbounded Johnson (Johnson SU) distributions and compare them.

## 1) Overall distribution (unbounded Johnson distribution, etc.)

import scipy.stats as st

y = Train_data['price']

plt.figure(1); plt.title('Johnson SU')

sns.distplot(y, kde=False, fit=st.johnsonsu)

plt.figure(2); plt.title('Normal')

sns.distplot(y, kde=False, fit=st.norm)

plt.figure(3); plt.title('Log Normal')

sns.distplot(y, kde=False, fit=st.lognorm)
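The three plots compare the fits visually. You can make the same comparison numerically: fit each candidate with scipy.stats and compare the total log-likelihood, where higher is better. This is a minimal sketch on synthetic log-normal data standing in for price. The random seed, the sample parameters, and pinning the log-normal location at 0 are all assumptions.

```python
import numpy as np
import scipy.stats as st

rng = np.random.default_rng(0)
# Synthetic right-skewed "prices" (log-normal), standing in for Train_data['price']
y = rng.lognormal(mean=8.0, sigma=1.0, size=2000)

candidates = {
    "norm": (st.norm, {}),
    "lognorm": (st.lognorm, {"floc": 0}),  # prices are positive, so fix the location at 0
    "johnsonsu": (st.johnsonsu, {}),
}

log_likelihood = {}
for name, (dist, fit_kwargs) in candidates.items():
    params = dist.fit(y, **fit_kwargs)  # maximum-likelihood parameter estimates
    log_likelihood[name] = np.sum(dist.logpdf(y, *params))

for name, ll in sorted(log_likelihood.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:10s} log-likelihood = {ll:.1f}")
```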

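2) Check the skewness and kurtosis of price

Skewness measures the asymmetry of a random variable's probability distribution.

When skewness < 0, the distribution is left-skewed (long tail on the left).

When skewness = 0, the data are spread relatively evenly on both sides of the mean, although the distribution is not necessarily perfectly symmetric.

When skewness > 0, the distribution is right-skewed (long tail on the right).

Kurtosis measures how peaked a random variable's probability distribution is.

Kurtosis (in Pearson's definition) takes values in [1, +∞), and data that follow a normal distribution exactly have a kurtosis of 3. The larger the value, the taller and sharper the peak; the smaller the value, the flatter the distribution. Note that pandas' kurt() reports excess kurtosis (Pearson kurtosis minus 3), so a normal distribution gives 0 there rather than 3.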
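To make these definitions concrete, here is a small sketch on a made-up right-skewed sample. It also shows how pandas' numbers relate to scipy.stats. pandas' skew() and kurt() are the bias-corrected estimators, and kurt() is the excess kurtosis, so adding 3 recovers the convention in which a normal distribution has kurtosis 3.

```python
import numpy as np
import pandas as pd
from scipy import stats

# Made-up right-skewed sample: mostly small values, a few large ones (like price)
x = pd.Series([1, 2, 2, 3, 3, 3, 4, 10, 25], dtype=float)

skewness = x.skew()      # > 0: long tail on the right
excess_kurt = x.kurt()   # excess kurtosis: 0 for a normal distribution

# scipy reproduces pandas when asked for the bias-corrected estimators
scipy_skew = stats.skew(x, bias=False)
scipy_excess = stats.kurtosis(x, fisher=True, bias=False)
pearson_kurt = stats.kurtosis(x, fisher=False, bias=False)  # excess + 3

print(f"skewness={skewness:.3f}  excess kurtosis={excess_kurt:.3f}  Pearson kurtosis={pearson_kurt:.3f}")
```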

## 2) Check skewness and kurtosis

sns.distplot(Train_data['price']);

print("Skewness: %f" % Train_data['price'].skew())

print("Kurtosis: %f" % Train_data['price'].kurt())

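3) Compute and plot the distribution of skewness and kurtosis across the numeric columns of Train_data (Test_data can be checked the same way)

# numeric_only=True restricts the statistics to numeric columns, skipping the object column notRepairedDamage
Train_data.skew(numeric_only=True), Train_data.kurt(numeric_only=True)

plt.figure()
sns.distplot(Train_data.skew(numeric_only=True), color='blue', axlabel='Skewness')

plt.figure()
sns.distplot(Train_data.kurt(numeric_only=True), color='orange', axlabel='Kurtosis')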

4) Check the frequency distribution of the target

## 3) Check the frequency counts of the target

plt.hist(Train_data['price'], orientation = 'vertical',histtype = 'bar', color ='red')

plt.show()

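The histogram shows that prices above 20,000 are very rare. These could also be treated as special values (outliers) and filled or dropped.

After a log transform the distribution becomes much more even, which is a common trick in prediction problems.

# Log-transform price; the result is much less skewed, so the model can be trained on log(price) instead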

plt.hist(np.log(Train_data['price']), orientation = 'vertical',histtype = 'bar', color ='red')

plt.show()
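Here is a quick numeric check of the log-transform trick on synthetic log-normal data standing in for price. The sketch swaps in np.log1p/np.expm1 for np.log, which also tolerates zero prices. It also shows that predictions made on the log scale must be mapped back with the inverse transform before they are compared with real prices.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(42)
# Synthetic right-skewed prices standing in for Train_data['price']
price = pd.Series(rng.lognormal(mean=8.0, sigma=1.0, size=5000))

log_price = np.log1p(price)  # log(1 + price): train the model on this target
print(f"skew before: {price.skew():.2f}, after log1p: {log_price.skew():.2f}")

# Predictions on the log scale are mapped back with the inverse transform
restored = np.expm1(log_price)
```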

6. Split features into categorical and numeric features, and check the unique-value distribution of the categorical ones

1) Separate the label (the target value)

Y_train = Train_data['price']

2) Split the features by hand

numeric_features = ['power', 'kilometer', 'v_0', 'v_1', 'v_2', 'v_3', 'v_4', 'v_5', 'v_6', 'v_7', 'v_8', 'v_9', 'v_10', 'v_11', 'v_12', 'v_13','v_14' ]

categorical_features = ['name', 'model', 'brand', 'bodyType', 'fuelType', 'gearbox', 'notRepairedDamage', 'regionCode',]

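3) Check the unique-value distribution

# Number of unique values (nunique) and value counts of each categorical feature
for cat_fea in categorical_features:
    print(cat_fea + " distribution:")
    print("{} has {} unique values".format(cat_fea, Train_data[cat_fea].nunique()))
    print(Train_data[cat_fea].value_counts())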

7. Numeric feature analysis

numeric_features.append('price')

numeric_features

Train_data.head()

1) Correlation analysis

price_numeric = Train_data[numeric_features]

correlation = price_numeric.corr()

print(correlation['price'].sort_values(ascending = False),'\n')

# Visualize as a heatmap

f , ax = plt.subplots(figsize = (7, 7))

plt.title('Correlation of Numeric Features with Price',y=1,size=16)

sns.heatmap(correlation,square = True, vmax=0.8)

# Finally, drop price from the table

del price_numeric['price']
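corr() computes the Pearson correlation by default. Pearson correlation captures only linear relationships and is sensitive to outliers. For that reason, it can help to rank features by the absolute value of their correlation with price and to look at Spearman's rank correlation alongside it. This is a minimal sketch on synthetic data. The feature names v_0, v_3 and power are borrowed for readability, but the values are generated.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
n = 1000
# Synthetic stand-ins for a few numeric features and the target
v_0 = rng.normal(size=n)
v_3 = rng.normal(size=n)
power = rng.normal(size=n)
price = 3.0 * v_0 - 2.0 * v_3 + rng.normal(scale=0.5, size=n)  # power is unrelated here
df = pd.DataFrame({"v_0": v_0, "v_3": v_3, "power": power, "price": price})

# Rank features by the strength of their (linear) correlation, ignoring the sign
corr_with_price = df.corr()["price"].drop("price")
ranking = corr_with_price.abs().sort_values(ascending=False)
print(ranking)

# Spearman (rank) correlation also captures monotone non-linear relationships
print(df.corr(method="spearman")["price"].drop("price"))
```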

2) Check the skewness and kurtosis of each feature

for col in numeric_features:
    print('{:15}'.format(col),
          'Skewness: {:05.2f}'.format(Train_data[col].skew()),
          ' ',
          'Kurtosis: {:06.2f}'.format(Train_data[col].kurt()))

3) Visualize the distribution of each numeric feature

f = pd.melt(Train_data, value_vars=numeric_features)

g = sns.FacetGrid(f, col="variable", col_wrap=2, sharex=False, sharey=False)

g = g.map(sns.distplot, "value")
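pd.melt is what lets FacetGrid draw one panel per feature. It stacks the chosen columns into a long table with a 'variable' column (the feature name) and a 'value' column, and col="variable" then splits the panels on that column. id_vars keeps columns such as price next to every stacked row, which is how the categorical box plots in section 8 pair each category with its price. Here is a tiny sketch with made-up numbers:

```python
import pandas as pd

wide = pd.DataFrame({"power": [60, 163], "kilometer": [12.5, 15.0], "price": [1850, 3600]})

# Stack two feature columns into long format; 'price' is repeated for each stacked row
long = pd.melt(wide, id_vars=["price"], value_vars=["power", "kilometer"])
print(long)
```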

4) Visualize pairwise relationships between numeric features

sns.set()

columns = ['price', 'v_12', 'v_8' , 'v_0', 'power', 'v_5', 'v_2', 'v_6', 'v_1', 'v_14']

# height (named size in older seaborn releases) is the size of each facet in inches
sns.pairplot(Train_data[columns], height=2, kind='scatter', diag_kind='kde')

plt.show()

5) Visualize the regression relationship between price and each of several features

fig, ((ax1, ax2), (ax3, ax4), (ax5, ax6), (ax7, ax8), (ax9, ax10)) = plt.subplots(nrows=5, ncols=2, figsize=(24, 20))

# ['v_12', 'v_8', 'v_0', 'power', 'v_5', 'v_2', 'v_6', 'v_1', 'v_14', 'v_13']

v_12_scatter_plot = pd.concat([Y_train,Train_data['v_12']],axis = 1)

sns.regplot(x='v_12',y = 'price', data = v_12_scatter_plot,scatter= True, fit_reg=True, ax=ax1)

v_8_scatter_plot = pd.concat([Y_train,Train_data['v_8']],axis = 1)

sns.regplot(x='v_8',y = 'price',data = v_8_scatter_plot,scatter= True, fit_reg=True, ax=ax2)

v_0_scatter_plot = pd.concat([Y_train,Train_data['v_0']],axis = 1)

sns.regplot(x='v_0',y = 'price',data = v_0_scatter_plot,scatter= True, fit_reg=True, ax=ax3)

power_scatter_plot = pd.concat([Y_train,Train_data['power']],axis = 1)

sns.regplot(x='power',y = 'price',data = power_scatter_plot,scatter= True, fit_reg=True, ax=ax4)

v_5_scatter_plot = pd.concat([Y_train,Train_data['v_5']],axis = 1)

sns.regplot(x='v_5',y = 'price',data = v_5_scatter_plot,scatter= True, fit_reg=True, ax=ax5)

v_2_scatter_plot = pd.concat([Y_train,Train_data['v_2']],axis = 1)

sns.regplot(x='v_2',y = 'price',data = v_2_scatter_plot,scatter= True, fit_reg=True, ax=ax6)

v_6_scatter_plot = pd.concat([Y_train,Train_data['v_6']],axis = 1)

sns.regplot(x='v_6',y = 'price',data = v_6_scatter_plot,scatter= True, fit_reg=True, ax=ax7)

v_1_scatter_plot = pd.concat([Y_train,Train_data['v_1']],axis = 1)

sns.regplot(x='v_1',y = 'price',data = v_1_scatter_plot,scatter= True, fit_reg=True, ax=ax8)

v_14_scatter_plot = pd.concat([Y_train,Train_data['v_14']],axis = 1)

sns.regplot(x='v_14',y = 'price',data = v_14_scatter_plot,scatter= True, fit_reg=True, ax=ax9)

v_13_scatter_plot = pd.concat([Y_train,Train_data['v_13']],axis = 1)

sns.regplot(x='v_13',y = 'price',data = v_13_scatter_plot,scatter= True, fit_reg=True, ax=ax10)

8. Categorical feature analysis

1) Unique-value distribution

for fea in categorical_features:
    print(Train_data[fea].nunique())

categorical_features

2) Box plots of categorical features

# name and regionCode have too many sparse categories, so only the less sparse features are plotted here

categorical_features = ['model',

'brand',

'bodyType',

'fuelType',

'gearbox',

'notRepairedDamage']

for c in categorical_features:
    Train_data[c] = Train_data[c].astype('category')
    if Train_data[c].isnull().any():
        # Register 'MISSING' as a category first: fillna cannot insert a value that is not already a category
        Train_data[c] = Train_data[c].cat.add_categories(['MISSING'])
        Train_data[c] = Train_data[c].fillna('MISSING')
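The reason add_categories comes before fillna is that a pandas Categorical only accepts values that are among its categories. Filling with a new label therefore fails until that label is registered. Here is a minimal sketch with made-up gearbox values:

```python
import numpy as np
import pandas as pd

gearbox = pd.Series([0.0, 1.0, np.nan, 0.0]).astype("category")

try:
    gearbox.fillna("MISSING")  # 'MISSING' is not yet one of the categories
    fill_failed = False
except (TypeError, ValueError):  # the exception type differs between pandas versions
    fill_failed = True

filled = gearbox.cat.add_categories(["MISSING"]).fillna("MISSING")
print(filled.value_counts())
```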

def boxplot(x, y, **kwargs):
    sns.boxplot(x=x, y=y)
    plt.xticks(rotation=90)

f = pd.melt(Train_data, id_vars=['price'], value_vars=categorical_features)
# height (named size in older seaborn releases) is the height of each facet in inches
g = sns.FacetGrid(f, col="variable", col_wrap=2, sharex=False, sharey=False, height=5)
g = g.map(boxplot, "value", "price")

3) Violin plots of categorical features

catg_list = categorical_features

target = 'price'

for catg in catg_list:
    sns.violinplot(x=catg, y=target, data=Train_data)
    plt.show()

categorical_features = ['model',

'brand',

'bodyType',

'fuelType',

'gearbox',

'notRepairedDamage']

4) Bar plots of categorical features

def bar_plot(x, y, **kwargs):
    sns.barplot(x=x, y=y)
    plt.xticks(rotation=90)

f = pd.melt(Train_data, id_vars=['price'], value_vars=categorical_features)
# height (named size in older seaborn releases) is the height of each facet in inches
g = sns.FacetGrid(f, col="variable", col_wrap=2, sharex=False, sharey=False, height=5)
g = g.map(bar_plot, "value", "price")

5) Frequency of each category in the categorical features (count plot)

def count_plot(x, **kwargs):
    sns.countplot(x=x)
    plt.xticks(rotation=90)

f = pd.melt(Train_data, value_vars=categorical_features)
# height (named size in older seaborn releases) is the height of each facet in inches
g = sns.FacetGrid(f, col="variable", col_wrap=2, sharex=False, sharey=False, height=5)
g = g.map(count_plot, "value")
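The box, bar and count plots each summarize price per category. The box plot shows quartiles, seaborn's barplot shows the mean (with a confidence interval by default), and the count plot shows category sizes. When a feature has too many categories to read comfortably in a plot, the same numbers can be tabulated with groupby. This is a minimal sketch on made-up data:

```python
import pandas as pd

toy = pd.DataFrame({
    "gearbox": ["0", "0", "0", "1", "1", "MISSING"],
    "price": [1500, 2500, 3500, 8000, 12000, 4000],
})

# observed=True limits the output to categories that actually occur
summary = (
    toy.astype({"gearbox": "category"})
    .groupby("gearbox", observed=True)["price"]
    .agg(count="count", mean="mean", median="median")
)
print(summary)
```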

9. Generate a data report with pandas_profiling

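# pandas_profiling has been renamed ydata-profiling (pip install ydata-profiling);
# with an older install, use `import pandas_profiling` and pandas_profiling.ProfileReport instead
from ydata_profiling import ProfileReport

pfr = ProfileReport(Train_data)
pfr.to_file("./example.html")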