DL知识拾贝(Pytorch)(五):如何调整学习率

文章目录

- 1. Pytorch中的学习率调整API

- 1.1 等间隔调整学习率

- 1.2 按指定区间调整学习率

- 1.3 指数衰减调整学习率

- 1.4 余弦退火调整学习率

- 1.5 自适应调整学习率

- 1.6 自定义规则学习率

- 2. cyclical learning rate

- 2.1 原理及超参数解释

- 2.2 如何确定超参数的值

- 2.3 cyclical learning rate的三种调整方式

- 2.4 Pytorch实战案例

- 2.4.1 定义cyclical_learning_rate函数

- 2.4.2 CIFAR10数据集划分和预处理

- 2.4.3 定义深度卷积分类网络

- 2.4.4 超参数范围的确定

- 2.4.5 模型训练和测试结果

- 3. warm up

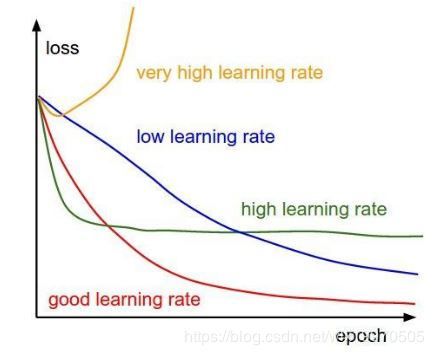

学习率对于深度学习是一个重要的超参数,它控制着基于损失梯度调整神经网络权值的速度,大多数优化算法(SGD、RMSprop、Adam)对其都有所涉及。学习率过下,收敛的太慢,网络学习的也太慢;学习率过大,最优化的“步伐”太大,往往会跨过最优值,从而达不到好的训练效果。

1. Pytorch中的学习率调整API

1.1 等间隔调整学习率

class torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

等间隔调整学习率,调整倍数为 gamma 倍,调整间隔为 step_size。当last_epoch = -1时,将初始lr设置为lr。

参数:

optimizer (Optimizer) – 包装的优化器。

step_size (int) – 学习率衰减间隔,例如若为 30,则会在 30、 60、 90…个 epoch 时,将学习率调整为 lr * gamma。

gamma (float) – 学习率衰减的乘积因子。

last_epoch (int) – 最后一个epoch的指数。这个变量用来指示学习率是否需要调整。当last_epoch 符合设定的间隔时,就会对学习率进行调整。当为-1 时,学习率设置为初始值。

'''

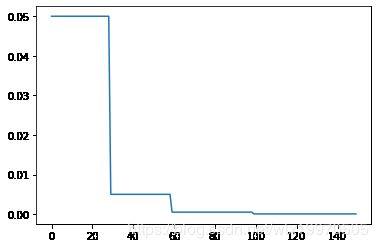

例:lr = 0.05, step_size=30, gamma=0.1

lr = 0.05 if epoch < 30

lr = 0.005 if 30 <= epoch < 60

lr = 0.0005 if 60 <= epoch < 90

'''

import torch

import torch.optim as optim

from torch.optim import lr_scheduler

from torchvision.models import AlexNet

import matplotlib.pyplot as plt

model = AlexNet(num_classes=2)

optimizer = optim.SGD(params=model.parameters(), lr=0.05)

scheduler1 = lr_scheduler.StepLR(optimizer, step_size=30, gamma=0.1)

plt.figure()

x1 = list(range(100))

y1 = []

for epoch in range(100):

scheduler1.step()

y1.append(scheduler1.get_lr()[0])

plt.plot(x1, y1)

1.2 按指定区间调整学习率

class torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1)

参数:

optimizer (Optimizer) – 包装的优化器。

milestones (list) – 分隔区间,例如[a,b](a

last_epoch (int) – 最后一个epoch的指数。这个变量用来指示学习率是否需要调整。当last_epoch 符合设定的间隔时,就会对学习率进行调整。当为-1 时,学习率设置为初始值。

'''

例:lr = 0.05, milestones=[30,60,100], gamma=0.1

lr = 0.05 if epoch < 30

lr = 0.005 if 30 <= epoch < 60

lr = 0.0005 if 60 <= epoch < 100

lr = 0.00005 if epoch >= 100

'''

scheduler2 = lr_scheduler.MultiStepLR(optimizer, [30, 60,100], 0.1)

plt.figure()

x2 = list(range(150))

y2 = []

for epoch in range(150):

scheduler2.step()

y2.append(scheduler2.get_lr()[0])

plt.plot(x2, y2)

1.3 指数衰减调整学习率

class torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1)

根据epoch数和gamma调整学习率,每个epoch都在改变,调整公式:lr∗gamma^epoch。

参数:

optimizer (Optimizer) – 包装的优化器。

gamma (float) – 学习率衰减的乘积因子。

last_epoch (int) – 最后一个epoch的指数。这个变量用来指示学习率是否需要调整。当last_epoch 符合设定的间隔时,就会对学习率进行调整。当为-1 时,学习率设置为初始值。

scheduler3 = lr_scheduler.ExponentialLR(optimizer, gamma=0.9)

plt.figure()

x3 = list(range(100))

y3 = []

for epoch in range(100):

scheduler3.step()

y3.append(scheduler3.get_lr()[0])

plt.plot(x3, y3)

1.4 余弦退火调整学习率

以余弦函数为周期,并在每个周期最大值时重新设置学习率。以初始学习率为最大学习率,以 2∗Tmax 为周期,在一个周期内先下降,后上升。

torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1)

参数:

T_max(int) – 一次学习率周期的迭代次数,即 T_max 个 epoch 之后重新设置学习率。

eta_min(float) – 最小学习率,即在一个周期中,学习率最小会下降到 eta_min,默认值为 0。

scheduler4 = lr_scheduler.CosineAnnealingLR(optimizer, 10, eta_min=0, last_epoch=-1)

plt.figure()

x4 = list(range(100))

y4 = []

for epoch in range(100):

scheduler4.step()

y4.append(scheduler4.get_lr()[0])

plt.plot(x4, y4)

1.5 自适应调整学习率

当某指标不再变化(下降或升高),调整学习率,这是非常实用的学习率调整策略。

例如,当验证集的 loss 不再下降时,进行学习率调整;或者监测验证集的 accuracy,当accuracy 不再上升时,则调整学习率。

torch.optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10,

verbose=False, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08)

参数:

mode(str) – 模式选择,有 min 和 max 两种模式, min 表示当指标不再降低(如监测loss), max 表示当指标不再升高(如监测 accuracy)。

factor(float) – 学习率调整倍数(等同于其它方法的 gamma),即学习率更新为 lr = lr * factor。

patience(int) –忍受该指标多少个 step 不变化,当忍无可忍时,调整学习率。

verbose(bool) – 是否打印学习率信息, print(‘Epoch {:5d}: reducing learning rate of group {} to {:.4e}.’.format(epoch, i, new_lr))

threshold_mode(str) – 选择判断指标是否达最优的模式,有两种模式, rel 和 abs:

1.当 threshold_mode == rel,并且 mode == max 时, dynamic_threshold = best * ( 1 +threshold );

2.当 threshold_mode == rel,并且 mode == min 时, dynamic_threshold = best * ( 1 -threshold );

2.当 threshold_mode == abs,并且 mode== max 时, dynamic_threshold = best + threshold ;

4.当 threshold_mode == rel,并且 mode == max 时, dynamic_threshold = best - threshold;

threshold(float) – 配合 threshold_mode 使用。

cooldown(int) – “冷却时间“,当调整学习率之后,让学习率调整策略冷静一下,让模型再训练一段时间,再重启监测模式。

min_lr(float or list) – 学习率下限,可为 float,或者 list,当有多个参数组时,可用 list 进行设置。

eps(float) – 学习率衰减的最小值,当学习率变化小于 eps 时,则不调整学习率。

optimizer = torch.optim.SGD(model.parameters(), lr=0.1, momentum=0.9)

scheduler = ReduceLROnPlateau(optimizer, 'min')

for epoch in range(10):

train(...)

val_loss = validate(...)

# Note that step should be called after validate()

scheduler.step(val_loss)

1.6 自定义规则学习率

将每个参数组的学习速率设置为初始的lr乘以一个给定的函数。

class torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)

参数:

optimizer (Optimizer) – 包装的优化器。

lr_lambda (function or list) – 一个函数来计算一个乘法因子给定一个整数参数的epoch,或列表等功能,为每个组optimizer.param_groups。

last_epoch (int) – 最后一个epoch的指数。这个变量用来指示学习率是否需要调整。当last_epoch 符合设定的间隔时,就会对学习率进行调整。当为-1 时,学习率设置为初始值。

lambda1 = lambda epoch: epoch // 30

lambda2 = lambda epoch: 0.95 ** epoch

scheduler = LambdaLR(optimizer, lr_lambda=[lambda1, lambda2]) # lambda的结果作为乘法因子与学习率相乘

for epoch in range(100):

scheduler.step() # 在训练的时候进行迭代

train(...)

validate(...)

2. cyclical learning rate

2.1 原理及超参数解释

该方法来自于IEEE 2017 的一篇论文:论文地址,所谓的cyclical learning rate,就是采用周期变化的策略调整学习率,在上面说到的余弦退火策略实际上也是一种cyclical learning rate。

周期变化的策略能够使模型跳出在训练过程中遇到的局部最低点和鞍点。有理论表明,相比于局部最低点,鞍点更加阻碍收敛。如果鞍点正好发生在一个巧妙的平衡点,小的学习率通常不能产生足够大的梯度变化使其跳过该点(即使跳过,也需要花费很长时间)。这正是周期性高学习率的作用所在,它能够更快地跳过鞍点。同时,假设最佳的LR一定会落在预设的max和min之间,那么周期性的调整相当于不断地迭代寻找出最优解。

来看论文中对cyclical learning rate的定义:

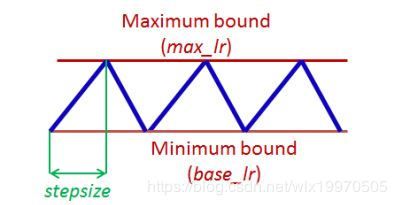

假设训练样本数为N,那么一个epoch的iteration就是(iteration = N / batch_size)。一个cycle定义为学习率从低到高,然后从高到低走一轮所用的iteration数。而stepsize指的是cycle迭代步数的一半。图中的上下两条红线分别代表LR周期变化的最大值(max_lr)和最小值(base_lr)。

2.2 如何确定超参数的值

cyclical learning rate主要的超参数为stepsize, max_lr 和base_lr。原论文中给出三个超参的确定方式。其中实验证明将stepsize设成一个epoch包含的iteration数量的2-10倍能取得理想效果。而对于max_lr 和base_lr则需要做一个pre_Experiments:

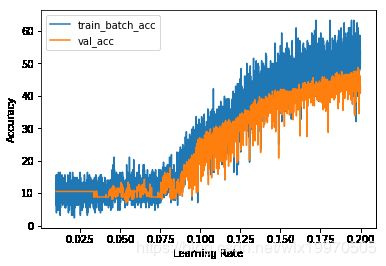

在pre_Experiments开始训练模型的同时,从低到高地增加学习率,将损失和学习率画在一张图中:

在上图中,可以看出,开始的时候,准确率随着学习率的增加而增加,然后进入平缓起期,然后又开始减小,出现震荡。准确率开始迅速增加的临界点和开始下降或者发生波动的临界点的附近,便可以取base_lr和max_lr的值,在上图中可以取:base_lr = 0.001 ; max_lr = 0.006。

2.3 cyclical learning rate的三种调整方式

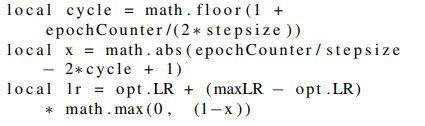

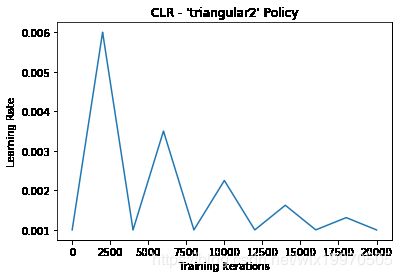

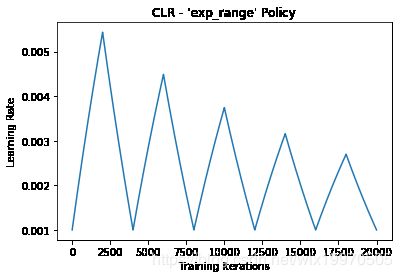

cyclical learning rate的计算方法分为Triangular以及它的两个变种:Triangular2和Exp_range。

- Triangular LR计算方法如下,其中:

- opt.LR是指定的最小学习率,等于上面图中的base_lr

- epochCounter是当前epochs数

- lr是当前用于迭代的学习率

- stepsize是周期长度(cycle length)二分之一

- max_lr是最大学习率,等于图中的max_lr

- Triangular2 LR与Triangular LR策略相同,只是在每个周期结束时将学习率差减半。这意味着学习率的差异在每个周期后都会下降,如下:

- Exp_range LR学习率变化速度在最小和最大边界之间变化,并且每个边界值都会随着伽玛迭代的指数因子而下降:

2.4 Pytorch实战案例

我们用 p y t o r c h [ 2 ] pytorch^{[2]} pytorch[2] 来实现这个方法并应用在CIFAR10的深度学习任务中:

2.4.1 定义cyclical_learning_rate函数

import numpy as np

import matplotlib.pyplot as plt

##定义cyclical_learning_rate,这里为了方便将triangular2和exp_range放在一起来定义

def cyclical_learning_rate(batch_step,

step_size,

base_lr=0.001,

max_lr=0.006,

mode='triangular',

gamma=0.999995):

cycle = np.floor(1 + batch_step / (2. * step_size))

x = np.abs(batch_step / float(step_size) - 2 * cycle + 1)

lr_delta = (max_lr - base_lr) * np.maximum(0, (1 - x)) #triangular LR

if mode == 'triangular':

pass

elif mode == 'triangular2':

lr_delta = lr_delta * 1 / (2. ** (cycle - 1)) #triangular2 LR

elif mode == 'exp_range':

lr_delta = lr_delta * (gamma**(batch_step)) #exp_range LR

else:

raise ValueError('mode must be "triangular", "triangular2", or "exp_range"')

lr = base_lr + lr_delta

return lr

##定义超参数

num_epochs = 50 #定义epochs数

num_train = 50000 #定义训练样本

batch_size = 100 #定义batch_size

iter_per_ep = num_train // batch_size #计算iteration

##triangular可视化

batch_step = -1

collect_lr = []

for e in range(num_epochs):

for i in range(iter_per_ep):

batch_step += 1

cur_lr = cyclical_learning_rate(batch_step=batch_step,

step_size=iter_per_ep*5)

collect_lr.append(cur_lr)

plt.scatter(range(len(collect_lr)), collect_lr)

plt.ylim([0.0, 0.01])

plt.xlim([0, num_epochs*iter_per_ep + 5000])

plt.show()

2.4.2 CIFAR10数据集划分和预处理

下面在CIFAR10上搭建深度学习任务:

import time

import torch

import torch.nn.functional as F

from torchvision import datasets

from torchvision import transforms

from torch.utils.data.sampler import SubsetRandomSampler

from torch.utils.data import DataLoader

if torch.cuda.is_available():

torch.backends.cudnn.deterministic = True

Device

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# 定义超参数

random_seed = 1

batch_size = 128

num_classes = 10 #10为类别数

## 划分训练集和验证集

np.random.seed(random_seed)

idx = np.arange(50000) # the size of CIFAR10-train

np.random.shuffle(idx)

val_idx, train_idx = idx[:1000], idx[1000:]

train_sampler = SubsetRandomSampler(train_idx)

val_sampler = SubsetRandomSampler(val_idx)

train_dataset = datasets.CIFAR10(root='data',

train=True,

transform=transforms.ToTensor(),

download=True)

test_dataset = datasets.CIFAR10(root='data',

train=False,

transform=transforms.ToTensor())

##Pytorch DataLoader容器载入数据

train_loader = DataLoader(dataset=train_dataset,

batch_size=batch_size,

# shuffle=True, # Subsetsampler already shuffles

sampler=train_sampler)

val_loader = DataLoader(dataset=train_dataset,

batch_size=batch_size,

# shuffle=True,

sampler=val_sampler)

test_loader = DataLoader(dataset=test_dataset,

batch_size=batch_size,

shuffle=False)

# Checking the dataset

for images, labels in train_loader:

print('Image batch dimensions:', images.shape)

print('Image label dimensions:', labels.shape)

break

cnt = 0

for images, labels in train_loader:

cnt += images.shape[0]

print('Number of training examples:', cnt)

cnt = 0

for images, labels in val_loader:

cnt += images.shape[0]

print('Number of validation instances:', cnt)

cnt = 0

for images, labels in test_loader:

cnt += images.shape[0]

print('Number of test instances:', cnt)

输出:

Files already downloaded and verified

Image batch dimensions: torch.Size([128, 3, 32, 32])

Image label dimensions: torch.Size([128])

Number of training examples: 49000

Number of validation instances: 1000

Number of test instances: 10000

每一个batch的样本为128个,图像大小为33232,划分的训练集样本为49000,验证集样本为1000,测试集样本为10000。

2.4.3 定义深度卷积分类网络

下面定义网络结构和前向传播过程:

class ConvNet(torch.nn.Module):

def __init__(self, num_classes):

super(ConvNet, self).__init__()

# calculate same padding:

# (w - k + 2*p)/s + 1 = o

# => p = (s(o-1) - w + k)/2

# 32x32x3 => 32x32x6

self.conv_1 = torch.nn.Conv2d(in_channels=3,

out_channels=6,

kernel_size=(3, 3),

stride=(1, 1),

padding=1) # (1(32-1) - 32 + 3) / 2) = 1

# 32x32x4 => 16x16x6

self.pool_1 = torch.nn.MaxPool2d(kernel_size=(2, 2),

stride=(2, 2),

padding=0) # (2(16-1) - 32 + 2) = 0

# 16x16x6 => 16x16x12

self.conv_2 = torch.nn.Conv2d(in_channels=6,

out_channels=12,

kernel_size=(3, 3),

stride=(1, 1),

padding=1) # (1(16-1) - 16 + 3) / 2 = 1

# 16x16x12 => 8x8x12

self.pool_2 = torch.nn.MaxPool2d(kernel_size=(2, 2),

stride=(2, 2),

padding=0) # (2(8-1) - 16 + 2) = 0

# 8x8x12 => 8x8x18

self.conv_3 = torch.nn.Conv2d(in_channels=12,

out_channels=18,

kernel_size=(3, 3),

stride=(1, 1),

padding=1) # (1(8-1) - 8 + 3) / 2 = 1

# 8x8x18 => 4x4x18

self.pool_3 = torch.nn.MaxPool2d(kernel_size=(2, 2),

stride=(2, 2),

padding=0) # (2(4-1) - 8 + 2) = 0

# 4x4x18 => 4x4x24

self.conv_4 = torch.nn.Conv2d(in_channels=18,

out_channels=24,

kernel_size=(3, 3),

stride=(1, 1),

padding=1)

# 4x4x24 => 2x2x24

self.pool_4 = torch.nn.MaxPool2d(kernel_size=(2, 2),

stride=(2, 2),

padding=0)

# 2x2x24 => 2x2x30

self.conv_5 = torch.nn.Conv2d(in_channels=24,

out_channels=30,

kernel_size=(3, 3),

stride=(1, 1),

padding=1)

# 2x2x30 => 1x1x30

self.pool_5 = torch.nn.MaxPool2d(kernel_size=(2, 2),

stride=(2, 2),

padding=0)

self.linear_1 = torch.nn.Linear(1*1*30, num_classes)

def forward(self, x):

out = self.conv_1(x)

out = F.relu(out)

out = self.pool_1(out)

out = self.conv_2(out)

out = F.relu(out)

out = self.pool_2(out)

out = self.conv_3(out)

out = F.relu(out)

out = self.pool_3(out)

out = self.conv_4(out)

out = F.relu(out)

out = self.pool_4(out)

out = self.conv_5(out)

out = F.relu(out)

out = self.pool_5(out)

logits = self.linear_1(out.view(-1, 1*1*30))

probas = F.softmax(logits, dim=1)

return logits, probas

该网络结构比较简单,输入数据经过五层卷积层之后,由全连接层接softmax输出结果,这里并非为CIFAR10数据集分类的最佳网络设计,只是为了演示三种CLR学习率调整方法在相同网络下的不同表现。

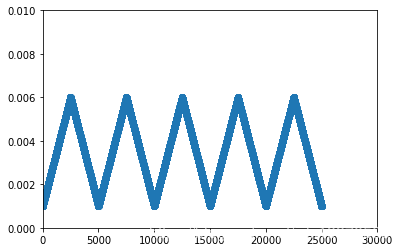

2.4.4 超参数范围的确定

根据2.2节的描述确定超参数,我们将训练进行5-10个时期,并将学习率线性提高到一个上限。 选择(训练或验证)准确性开始提高的临界值作为base_lr。选择精度提高停止,降低或大幅波动的临界值作为max_lr。

def compute_accuracy(model, data_loader): ##定义计算accuracy函数

correct_pred, num_examples = 0, 0

for features, targets in data_loader:

features = features.to(device)

targets = targets.to(device)

logits, probas = model(features)

_, predicted_labels = torch.max(probas, 1)

num_examples += targets.size(0)

correct_pred += (predicted_labels == targets).sum()

return correct_pred.float()/num_examples * 100

################################

### Setting for this run

#################################

num_epochs = 10

iter_per_ep = len(train_loader)

base_lr = 0.01 #粗略的定base_lr

max_lr = 0.2 ##粗略的定max_lr

#################################

### Init Model

#################################

torch.manual_seed(random_seed)

model = ConvNet(num_classes=num_classes)

model = model.to(device)

##########################

### COST AND OPTIMIZER

##########################

cost_fn = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=base_lr)

########################################################################

# Collect the data to be evaluated via the LR Range Test

collect = {'lr': [], 'cost': [], 'train_batch_acc': [], 'val_acc': []}

########################################################################

batch_step = -1

cur_lr = base_lr

start_time = time.time()

for epoch in range(num_epochs):

for batch_idx, (features, targets) in enumerate(train_loader):

batch_step += 1

features = features.to(device)

targets = targets.to(device)

### FORWARD AND BACK PROP

logits, probas = model(features)

cost = cost_fn(logits, targets)

optimizer.zero_grad()

cost.backward()

### UPDATE MODEL PARAMETERS

optimizer.step()

#############################################

# Logging

if not batch_step % 200:

print('Total batch # %5d/%d' % (batch_step,

iter_per_ep*num_epochs),

end='')

print(' Curr. Batch Cost: %.5f' % cost)

#############################################

# Collect stats

model = model.eval()

train_acc = compute_accuracy(model, [[features, targets]])

val_acc = compute_accuracy(model, val_loader)

collect['lr'].append(cur_lr)

collect['train_batch_acc'].append(train_acc)

collect['val_acc'].append(val_acc)

collect['cost'].append(cost)

model = model.train()

#############################################

# update learning rate

cur_lr = cyclical_learning_rate(batch_step=batch_step,

step_size=num_epochs*iter_per_ep,

base_lr=base_lr,

max_lr=max_lr)

for g in optimizer.param_groups:

g['lr'] = cur_lr

############################################

print('Time elapsed: %.2f min' % ((time.time() - start_time)/60))

print('Total Training Time: %.2f min' % ((time.time() - start_time)/60))

##可视化:Learning Rate---Accuracy

plt.plot(collect['lr'], collect['train_batch_acc'], label='train_batch_acc')

plt.plot(collect['lr'], collect['val_acc'], label='val_acc')

plt.xlabel('Learning Rate')

plt.ylabel('Accuracy')

plt.legend()

plt.show()

通过可视化的结果可以看出base_lr大概在0.08-0.09之间,max_lr大概在0.175或是在0.2之间。

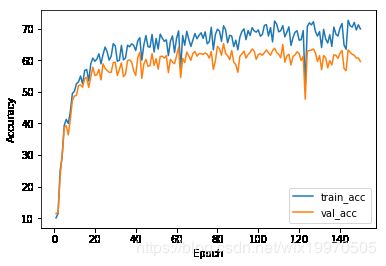

2.4.5 模型训练和测试结果

下面将参数确定结果应用到模型中,其中,base_lr = 0.09; max_lr = 0.175:

################################

### Setting for this run

#################################

num_epochs = 150

iter_per_ep = len(train_loader.sampler.indices) // train_loader.batch_size

base_lr = 0.09

max_lr = 0.175

#################################

### Init Model

#################################

torch.manual_seed(random_seed)

model = ConvNet(num_classes=num_classes)

model = model.to(device)

##########################

### COST AND OPTIMIZER

##########################

cost_fn = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=base_lr)

########################################################################

collect = {'epoch': [], 'cost': [], 'train_acc': [], 'val_acc': []}

########################################################################

start_time = time.time()

for epoch in range(num_epochs):

epoch_avg_cost = 0.

model = model.train()

for batch_idx, (features, targets) in enumerate(train_loader):

features = features.to(device)

targets = targets.to(device)

### FORWARD AND BACK PROP

logits, probas = model(features)

cost = cost_fn(logits, targets)

optimizer.zero_grad()

cost.backward()

### UPDATE MODEL PARAMETERS

optimizer.step()

epoch_avg_cost += cost

#############################################

# Logging

if not batch_step % 600:

print('Batch %5d/%d' % (batch_step, iter_per_ep*num_epochs),

end='')

print(' Cost: %.5f' % cost)

#############################################

# Collect stats

model = model.eval()

train_acc = compute_accuracy(model, train_loader)

val_acc = compute_accuracy(model, val_loader)

epoch_avg_cost /= batch_idx+1

collect['epoch'].append(epoch+1)

collect['val_acc'].append(val_acc)

collect['train_acc'].append(train_acc)

collect['cost'].append(epoch_avg_cost / iter_per_ep)

################################################

# Logging

print('Epoch %3d' % (epoch+1), end='')

print(' | Train/Valid Acc: %.2f/%.2f' % (train_acc, val_acc))

#############################################

# cyclical_learning_rate

base_lr = cyclical_learning_rate(batch_step=batch_step,

step_size=num_epochs*iter_per_ep,

base_lr=base_lr,

max_lr=max_lr)

for g in optimizer.param_groups:

g['lr'] = base_lr

############################################

print('Time elapsed: %.2f min' % ((time.time() - start_time)/60))

print('Total Training Time: %.2f min' % ((time.time() - start_time)/60))

##训练结果可视化

plt.plot(collect['epoch'], collect['train_acc'], label='train_acc')

plt.plot(collect['epoch'], collect['val_acc'], label='val_acc')

plt.xlabel('Epoch')

plt.ylabel('Accuracy')

plt.legend()

plt.show()

plt.plot(collect['epoch'], collect['cost'])

plt.xlabel('Epoch')

plt.ylabel('Avg. Cost Per Epoch')

plt.show()

print('Test accuracy: %.2f%%' % (compute_accuracy(model, test_loader)))

'''

Test accuracy: 61.69%

'''

3. warm up

warm up 已经被很多任务当作是训练时候的tricks使用,例如这篇CVPR 2019 的文章:论文链接

为什么会用warm up 呢?由于刚开始训练时,模型的权重(weights)是随机初始化的,此时若选择一个较大的学习率,可能带来模型的不稳定(振荡),选择Warmup预热学习率的方式,可以使得开始训练的几个epoches或者一些steps内学习率较小,在预热的小学习率下,模型可以慢慢趋于稳定,等模型相对稳定后再选择预先设置的学习率进行训练,使得模型收敛速度变得更快,模型效果更佳。

按作者所说:Warmup学习率并不是一个新颖的东西, 在很多task上面都被证明是有效的,在之前的工作中也有过验证。j假设初始学习率为3.5e-4,总共训,120个epoch,在第40和70个epoch进行学习率下降。用一个很大的学习率初始化网路可能使得网络震荡到一个次优空间,因为网络初期的梯度是很大的。Warmup的策略就是初期用一个逐渐递增的学习率去初始化网络,渐渐初始化到一个更优的搜索空间。下图是论文中用最简单的线性策略,即前10个epoch学习从0逐渐增加到初始学习率。

![]()

参考:

[1] https://www.jianshu.com/p/e014539d2962

[2] https://github.com/rasbt/deeplearning-models/blob/master/pytorch_ipynb/

[3] https://zhuanlan.zhihu.com/p/61831669