Andrew Ng's Deep Learning Course: Programming Assignment Walkthrough (Python)

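I (Xiaobo) greatly admire this giant of the AI field. When he released his machine learning course years ago, it sparked my deep interest in machine learning. Now he has launched a deep learning course that has become hugely popular, so of course I am following it, and with Professor Ng's guidance I am gradually opening the door to deep learning.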

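Andrew Ng is an associate professor in the Computer Science and Electrical Engineering departments at Stanford University and director of the Stanford Artificial Intelligence Lab. His main achievements are in machine learning and artificial intelligence, and he is one of the most authoritative scholars in these fields.

In 2010, Ng, then a Stanford professor, joined Google's development team XLab, the team that had built Google's two well-known projects, the self-driving car and Google Glass.

Ng and top Google engineers began building the world's largest "neural network", one that learns about the real world in the same way the human brain learns new things. Google named the project "Google Brain".

Ng is best known for an artificial neural network that, after watching YouTube videos for a week, taught itself to recognize which videos were about cats. This result opened a new chapter in AI. Ng said the technology would later be used in Google's self-driving cars to recognize animals or children in front of the car so that it could avoid them in time.

On May 16, 2014, Baidu announced that Ng had joined the company as chief scientist, leading Baidu Research and, in particular, the Baidu Brain project.

On May 19, 2014, Baidu announced the appointment of Dr. Andrew Ng as its chief scientist, with full responsibility for Baidu Research. He was the most prominent hire a Chinese internet company had made to date, and the news immediately became a talking point in the international tech community. The authoritative American magazine MIT Technology Review even looked ahead enthusiastically: "Baidu will lead an innovative era of software technology and understand the world better."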

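Compared with quietly working on commercial projects as Baidu's chief scientist, being a pure academic and AI evangelist may well be a happier role for Ng.

As early as June 24 this year, Ng announced his new project Deeplearning.ai on Twitter, but what the project actually was remained a mystery.

In the early hours of August 9 (Beijing time), the veil was finally lifted: Ng announced that deeplearning.ai would offer the public a series of new online deep learning courses through Coursera. The courses are co-taught by Ng and Stanford graduate students Kian Katanforoosh and Younes Bensouda Mourri, include video lectures, exercises, and further reading, and start on August 15.

Ng said: "The new courses focus on guiding the direction of applied machine learning. They will give people a 'simulator' for deep learning. Just as with pilot training, a trainee first practices many times in a simulator, building up the equivalent of years of experience, before taking off in a real situation."

Enough talk; on to the assignments and answers.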

Python Basics with Numpy (optional assignment)

Welcome to your first assignment. This exercise gives you a brief introduction to Python. Even if you’ve used Python before, this will help familiarize you with functions we’ll need.

Instructions:

You will be using Python 3.

Avoid using for-loops and while-loops, unless you are explicitly told to do so.

Do not modify the (# GRADED FUNCTION [function name]) comment in some cells. Your work would not be graded if you change this. Each cell containing that comment should only contain one function.

After coding your function, run the cell right below it to check if your result is correct.

After this assignment you will:

Be able to use iPython Notebooks

Be able to use numpy functions and numpy matrix/vector operations

Understand the concept of "broadcasting"

Be able to vectorize code

Let’s get started!

About iPython Notebooks

iPython Notebooks are interactive coding environments embedded in a webpage. You will be using iPython notebooks in this class. You only need to write code between the ### START CODE HERE ### and ### END CODE HERE ### comments. After writing your code, you can run the cell by either pressing “SHIFT”+”ENTER” or by clicking on “Run Cell” (denoted by a play symbol) in the upper bar of the notebook.

We will often specify “(≈ X lines of code)” in the comments to tell you about how much code you need to write. It is just a rough estimate, so don’t feel bad if your code is longer or shorter.

Exercise: Set test to “Hello World” in the cell below to print “Hello World” and run the two cells below.

### START CODE HERE ### (≈ 1 line of code)
test = "Hello World"
### END CODE HERE ###

print ("test: " + test)

Expected output: test: Hello World

What you need to remember:

Run your cells using SHIFT+ENTER (or "Run cell")

Write code in the designated areas using Python 3 only

Do not modify the code outside of the designated areas

1 - Building basic functions with numpy

Numpy is the main package for scientific computing in Python. It is maintained by a large community (www.numpy.org). In this exercise you will learn several key numpy functions such as np.exp, np.log, and np.reshape. You will need to know how to use these functions for future assignments.

1.1 - sigmoid function, np.exp()

Before using np.exp(), you will use math.exp() to implement the sigmoid function. You will then see why np.exp() is preferable to math.exp().

Exercise: Build a function that returns the sigmoid of a real number x. Use math.exp(x) for the exponential function.

Reminder: $sigmoid(x) = \frac{1}{1+e^{-x}}$ is sometimes also known as the logistic function. It is a non-linear function used not only in Machine Learning (Logistic Regression), but also in Deep Learning.

To refer to a function belonging to a specific package you could call it using package_name.function(). Run the code below to see an example with math.exp().

# GRADED FUNCTION: basic_sigmoid

import math

def basic_sigmoid(x):
    """
    Compute sigmoid of x.

    Arguments:
    x -- A scalar

    Return:
    s -- sigmoid(x)
    """
    ### START CODE HERE ### (≈ 1 line of code)
    s = 1 / (1 + math.exp(-x))
    ### END CODE HERE ###

    return s

basic_sigmoid(3)

Expected Output:

basic_sigmoid(3): 0.9525741268224334

Actually, we rarely use the "math" library in deep learning because its functions only accept real numbers (scalars), whereas in deep learning we mostly use matrices and vectors. This is why numpy is more useful.

### One reason why we use "numpy" instead of "math" in Deep Learning ###
x = [1, 2, 3]
basic_sigmoid(x)  # you will see this give an error when you run it, because x is a vector.

---------------------------------------------------------------------------
TypeError                                 Traceback (most recent call last)
<ipython-input-7-2e11097d6860> in <module>()
      1 ### One reason why we use "numpy" instead of "math" in Deep Learning ###
      2 x = [1, 2, 3]
----> 3 basic_sigmoid(x) # you will see this give an error when you run it, because x is a vector.

<ipython-input-5-0e907b8c130c> in basic_sigmoid(x)
     15
     16     ### START CODE HERE ### (≈ 1 line of code)
---> 17     s =1/ (1+math.exp(-x))
     18     ### END CODE HERE ###
     19

TypeError: bad operand type for unary -: 'list'

In fact, if $x = (x_1, x_2, \dots, x_n)$ is a row vector, then np.exp(x) will apply the exponential function to every element of x. The output will thus be: $np.exp(x) = (e^{x_1}, e^{x_2}, \dots, e^{x_n})$

import numpy as np

# example of np.exp
x = np.array([1, 2, 3])
print(np.exp(x))  # result is (exp(1), exp(2), exp(3))

Furthermore, if x is a vector, then a Python operation such as $s = x + 3$ or $s = \frac{1}{x}$ will output s as a vector of the same size as x.

# example of vector operation
x = np.array([1, 2, 3])
print (x + 3)

Any time you need more info on a numpy function, we encourage you to look at the official documentation.

You can also create a new cell in the notebook and write np.exp? (for example) to get quick access to the documentation.
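The claim above, that arithmetic on a numpy array is applied element by element, is easy to check directly. The sketch below (variable names are illustrative, not from the assignment) contrasts a numpy array with a plain Python list, which is also why `basic_sigmoid([1, 2, 3])` failed earlier.

```python
import numpy as np

x_list = [1, 2, 3]
x = np.array(x_list)

# On a numpy array, arithmetic with a scalar is applied element by element.
plus_three = x + 3          # array([4, 5, 6])
reciprocal = 1 / x          # array([1.0, 0.5, 0.333...])
exponential = np.exp(x)     # array([e^1, e^2, e^3])
print(plus_three, reciprocal, exponential)

# A plain Python list does not behave this way: "+" concatenates lists,
# and adding a number to a list raises a TypeError.
print(x_list + x_list)      # [1, 2, 3, 1, 2, 3]
try:
    x_list + 3
    list_plus_int_failed = False
except TypeError:
    list_plus_int_failed = True
print(list_plus_int_failed)  # True
```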

Exercise: Implement the sigmoid function using numpy.

Instructions: x could now be either a real number, a vector, or a matrix. The data structures we use in numpy to represent these shapes (vectors, matrices…) are called numpy arrays. You don’t need to know more for now.

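For $x \in \mathbb{R}^n$,

$$sigmoid(x) = sigmoid\begin{pmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{pmatrix} = \begin{pmatrix} \frac{1}{1+e^{-x_1}} \\ \frac{1}{1+e^{-x_2}} \\ \vdots \\ \frac{1}{1+e^{-x_n}} \end{pmatrix}$$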

# GRADED FUNCTION: sigmoid

import numpy as np # this means you can access numpy functions by writing np.function() instead of numpy.function()

def sigmoid(x):
    """
    Compute the sigmoid of x

    Arguments:
    x -- A scalar or numpy array of any size

    Return:
    s -- sigmoid(x)
    """
    ### START CODE HERE ### (≈ 1 line of code)
    s = 1 / (1 + np.exp(-x))
    ### END CODE HERE ###

    return s

x = np.array([1, 2, 3])
sigmoid(x)

Expected Output:

sigmoid([1,2,3]): array([ 0.73105858, 0.88079708, 0.95257413])

1.2 - Sigmoid gradient

As you’ve seen in lecture, you will need to compute gradients to optimize loss functions using backpropagation. Let’s code your first gradient function.

Exercise: Implement the function sigmoid_derivative() to compute the gradient of the sigmoid function with respect to its input x. The formula is:

$$\text{sigmoid\_derivative}(x) = \sigma'(x) = \sigma(x)(1 - \sigma(x)) \qquad (2)$$

You often code this function in two steps:

Set s to be the sigmoid of x. You might find your sigmoid(x) function useful.

Compute $\sigma'(x) = s(1-s)$
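Before trusting a hand-derived gradient, it is common to compare it with a finite-difference estimate. The sketch below does that for equation (2); `numerical_derivative` and the step size `eps=1e-5` are illustrative assumptions, not part of the assignment, and the two sigmoid functions are redefined so the block runs on its own.

```python
import numpy as np

def sigmoid(x):
    # Element-wise logistic function 1 / (1 + e^(-x)).
    return 1 / (1 + np.exp(-x))

def sigmoid_derivative(x):
    # Analytic gradient from the identity sigma'(x) = s * (1 - s), with s = sigmoid(x).
    s = sigmoid(x)
    return s * (1 - s)

def numerical_derivative(f, x, eps=1e-5):
    # Hypothetical helper: central finite difference (f(x + eps) - f(x - eps)) / (2 * eps).
    return (f(x + eps) - f(x - eps)) / (2 * eps)

x = np.array([-2.0, 0.0, 1.0, 2.0, 3.0])
analytic = sigmoid_derivative(x)
numeric = numerical_derivative(sigmoid, x)

print(analytic)
print(np.max(np.abs(analytic - numeric)))  # should be very close to zero
```

If the two arrays agree to many decimal places, the identity $\sigma'(x) = s(1-s)$ is behaving as expected; the same pattern works for checking any gradient you derive by hand.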

# GRADED FUNCTION: sigmoid_derivative

import numpy as np

def sigmoid_derivative(x):
    """
    Compute the gradient (also called the slope or derivative) of the sigmoid function with respect to its input x.
    You can store the output of the sigmoid function into variables and then use it to calculate the gradient.

    Arguments:
    x -- A scalar or numpy array

    Return:
    ds -- Your computed gradient.
    """
    ### START CODE HERE ### (≈ 2 lines of code)
    s = 1 / (1 + np.exp(-x))
    ds = s * (1 - s)
    ### END CODE HERE ###

    return ds

x = np.array([1, 2, 3])
print ("sigmoid_derivative(x) = " + str(sigmoid_derivative(x)))

Expected Output:

sigmoid_derivative([1,2,3]): [ 0.19661193 0.10499359 0.04517666]

1.3 - Reshaping arrays

Two common numpy functions used in deep learning are np.shape and np.reshape().

X.shape is used to get the shape (dimension) of a matrix/vector X.

X.reshape(...) is used to reshape X into some other dimension.

For example, in computer science, an image is represented by a 3D array of shape $(length, height, depth = 3)$. However, when you read an image as the input of an algorithm you convert it to a vector of shape $(length*height*3, 1)$. In other words, you "unroll", or reshape, the 3D array into a 1D vector.

Exercise: Implement image2vector() that takes an input of shape (length, height, 3) and returns a vector of shape (length*height*3, 1). For example, if you would like to reshape an array v of shape (a, b, c) into a vector of shape (a*b,c) you would do:

v = v.reshape((v.shape[0]*v.shape[1], v.shape[2])) # v.shape[0] = a ; v.shape[1] = b ; v.shape[2] = c

Please don’t hardcode the dimensions of image as a constant. Instead look up the quantities you need with image.shape[0], etc.
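One way to avoid hardcoding dimensions is to let numpy infer one of them by passing `-1` to `reshape`. The sketch below, using a small made-up 3 x 3 x 2 array filled with 0..17, shows that this gives the same column vector as spelling out `image.shape[0]*image.shape[1]*image.shape[2]`, and that reshaping only changes the view of the data, not its order.

```python
import numpy as np

# A hypothetical 3 x 3 x 2 "image" filled with 0..17 so the element order is easy to follow.
image = np.arange(18).reshape(3, 3, 2)

# Explicit dimensions, as in the exercise.
v_explicit = image.reshape(image.shape[0] * image.shape[1] * image.shape[2], 1)

# Letting numpy infer the first dimension with -1 gives the same column vector.
v_inferred = image.reshape(-1, 1)

print(v_explicit.shape)                        # (18, 1)
print(np.array_equal(v_explicit, v_inferred))  # True

# reshape does not reorder data: reshaping back recovers the original array.
print(np.array_equal(v_inferred.reshape(image.shape), image))  # True
```

Either form satisfies the "don't hardcode" rule; the explicit version simply makes the expected size visible in the code.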

# GRADED FUNCTION: image2vector

def image2vector(image):
    """
    Argument:
    image -- a numpy array of shape (length, height, depth)

    Returns:
    v -- a vector of shape (length*height*depth, 1)
    """
    ### START CODE HERE ### (≈ 1 line of code)
    v = image.reshape(image.shape[0] * image.shape[1] * image.shape[2], 1)
    ### END CODE HERE ###

    return v

# This is a 3 by 3 by 2 array, typically images will be (num_px_x, num_px_y,3) where 3 represents the RGB values
image = np.array([[[ 0.67826139, 0.29380381],
                   [ 0.90714982, 0.52835647],
                   [ 0.4215251 , 0.45017551]],

                  [[ 0.92814219, 0.96677647],
                   [ 0.85304703, 0.52351845],
                   [ 0.19981397, 0.27417313]],

                  [[ 0.60659855, 0.00533165],
                   [ 0.10820313, 0.49978937],
                   [ 0.34144279, 0.94630077]]])

print ("image2vector(image) = " + str(image2vector(image)))

Expected Output:

image2vector(image): [[ 0.67826139] [ 0.29380381] [ 0.90714982] [ 0.52835647] [ 0.4215251 ] [ 0.45017551] [ 0.92814219] [ 0.96677647] [ 0.85304703] [ 0.52351845] [ 0.19981397] [ 0.27417313] [ 0.60659855] [ 0.00533165] [ 0.10820313] [ 0.49978937] [ 0.34144279] [ 0.94630077]]

1.4 - Normalizing rows

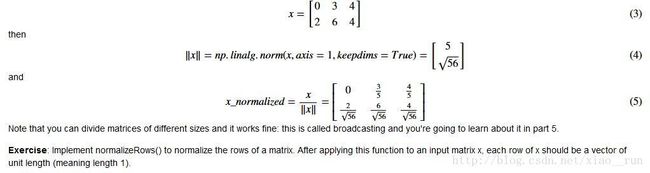

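Another common technique we use in Machine Learning and Deep Learning is to normalize our data. It often leads to better performance because gradient descent converges faster after normalization. Here, by normalization we mean changing x to $\frac{x}{\|x\|}$ (dividing each row vector of x by its norm).

For example, if $x = \begin{bmatrix} 0 & 3 & 4 \\ 1 & 6 & 4 \end{bmatrix}$, then the row norms, computed with np.linalg.norm(x, axis=1, keepdims=True), are $\|x\| = \begin{bmatrix} 5 \\ \sqrt{53} \end{bmatrix}$, and $\frac{x}{\|x\|} = \begin{bmatrix} 0 & \frac{3}{5} & \frac{4}{5} \\ \frac{1}{\sqrt{53}} & \frac{6}{\sqrt{53}} & \frac{4}{\sqrt{53}} \end{bmatrix}$.

Note that you can divide matrices of different sizes and it works fine: this is called broadcasting and you're going to learn about it in section 1.5.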

Exercise: Implement normalizeRows() to normalize the rows of a matrix. After applying this function to an input matrix x, each row of x should be a vector of unit length (meaning length 1).

# GRADED FUNCTION: normalizeRows

def normalizeRows(x):
    """
    Implement a function that normalizes each row of the matrix x (to have unit length).

    Argument:
    x -- A numpy matrix of shape (n, m)

    Returns:
    x -- The normalized (by row) numpy matrix. You are allowed to modify x.
    """
    ### START CODE HERE ### (≈ 2 lines of code)
    # Compute x_norm as the norm 2 of x. Use np.linalg.norm(..., ord = 2, axis = ..., keepdims = True)
    x_norm = np.linalg.norm(x, axis=1, keepdims=True)

    # np.linalg.norm is called as norm(x, ord=None, axis=None, keepdims=False), where x is the input
    # and ord selects the kind of norm; with ord=None, a vector norm is the 2-norm.
    # x : array_like
    # axis : {int, 2-tuple of ints, None}, optional
    #   If axis is an integer, it specifies the axis of x along which to compute the vector norms
    #   (axis=1 computes one norm per row).
    # keepdims : bool, optional
    #   If this is set to True, the axes which are normed over are left in the result as dimensions
    #   with size one. With this option the result will broadcast correctly against the original x.

    # Divide x by its norm.
    x = x / x_norm
    ### END CODE HERE ###

    return x

x = np.array([
    [0, 3, 4],
    [1, 6, 4]])
print("normalizeRows(x) = " + str(normalizeRows(x)))

Expected Output:

normalizeRows(x): [[ 0. 0.6 0.8 ] [ 0.13736056 0.82416338 0.54944226]]

Note: In normalizeRows(), you can try to print the shapes of x_norm and x, and then rerun the assessment. You'll find out that they have different shapes. This is normal given that x_norm takes the norm of each row of x. So x_norm has the same number of rows but only 1 column. So how did it work when you divided x by x_norm? This is called broadcasting and we'll talk about it now!
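The shape difference in the note above is exactly why `keepdims=True` matters. The sketch below, using the same example matrix, prints the shapes with and without `keepdims` and shows that dividing by the flat `(2,)` array of norms does not work for this input.

```python
import numpy as np

x = np.array([[0, 3, 4],
              [1, 6, 4]])

norm_keep = np.linalg.norm(x, axis=1, keepdims=True)  # shape (2, 1): one norm per row
norm_flat = np.linalg.norm(x, axis=1)                 # shape (2,): the same norms, no column axis
print(x.shape, norm_keep.shape, norm_flat.shape)      # (2, 3) (2, 1) (2,)

# (2, 3) / (2, 1): the single column of norms is stretched across all 3 columns.
x_normalized = x / norm_keep
print(np.linalg.norm(x_normalized, axis=1))           # each row now has norm 1 (up to rounding)

# (2, 3) / (2,): numpy aligns the trailing dimensions (3 vs 2), which are incompatible.
try:
    x / norm_flat
    flat_division_failed = False
except ValueError:
    flat_division_failed = True
print(flat_division_failed)                           # True
```

Note that an error here is the lucky case: if x were square, say (3, 3), dividing by a (3,) array would not fail but would silently divide each column instead of each row, so keeping the size-1 axis is the safer habit.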

1.5 - Broadcasting and the softmax function

A very important concept to understand in numpy is “broadcasting”. It is very useful for performing mathematical operations between arrays of different shapes. For the full details on broadcasting, you can read the official broadcasting documentation.
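As a small illustration of the rule described in the official documentation: numpy compares shapes starting from the rightmost dimension, and a dimension of size 1 (or a missing one) is stretched to match. In the sketch below the arrays are made up for clarity; a (3, 1) column plus a (4,) row produces a (3, 4) grid.

```python
import numpy as np

col = np.array([[0], [10], [20]])  # shape (3, 1)
row = np.array([1, 2, 3, 4])       # shape (4,), treated as (1, 4)

# Shapes are compared from the rightmost dimension; a dimension of size 1
# is stretched to match the other array, so (3, 1) + (4,) -> (3, 4).
grid = col + row
print(grid.shape)  # (3, 4)
print(grid)
# [[ 1  2  3  4]
#  [11 12 13 14]
#  [21 22 23 24]]

# A scalar broadcasts against an array of any shape.
doubled = grid * 2
print(doubled)
```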

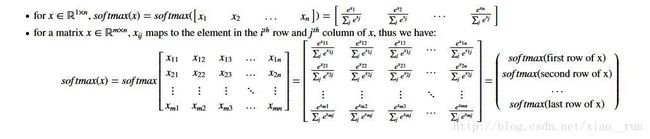

Exercise: Implement a softmax function using numpy. You can think of softmax as a normalizing function used when your algorithm needs to classify two or more classes. You will learn more about softmax in the second course of this specialization.
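For reference, the quantity the graded cell computes (exponentiate every entry, then divide by the sum of its row) can be written for a matrix $x$ of shape $(n, m)$ as:

$$\mathrm{softmax}(x)_{ij} = \frac{e^{x_{ij}}}{\sum_{k=1}^{m} e^{x_{ik}}}, \qquad i = 1, \dots, n, \quad j = 1, \dots, m$$

so every entry of the output is positive and every row sums to 1. For a single row vector $x = (x_1, \dots, x_m)$ this reduces to $\mathrm{softmax}(x) = \left(\frac{e^{x_1}}{\sum_{k} e^{x_k}}, \dots, \frac{e^{x_m}}{\sum_{k} e^{x_k}}\right)$.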

# GRADED FUNCTION: softmax

def softmax(x):
    """Calculates the softmax for each row of the input x.

    Your code should work for a row vector and also for matrices of shape (n, m).

    Argument:
    x -- A numpy matrix of shape (n,m)

    Returns:
    s -- A numpy matrix equal to the softmax of x, of shape (n,m)
    """
    # If one z_j is much larger than the other z values, its output component approaches 1 and the others approach 0.
    # The main application is multi-class classification: sigmoid can only separate two classes, while softmax
    # handles many classes; softmax is a generalization of sigmoid.

    ### START CODE HERE ### (≈ 3 lines of code)
    # Apply exp() element-wise to x. Use np.exp(...).
    x_exp = np.exp(x)

    # Create a vector x_sum that sums each row of x_exp. Use np.sum(..., axis = 1, keepdims = True).
    x_sum = np.sum(x_exp, axis=1, keepdims=True)

    # Compute softmax(x) by dividing x_exp by x_sum. It should automatically use numpy broadcasting.
    s = x_exp / x_sum
    ### END CODE HERE ###

    return s

x = np.array([
    [9, 2, 5, 0, 0],
    [7, 5, 0, 0, 0]])
print("softmax(x) = " + str(softmax(x)))

Expected Output:

softmax(x): [[ 9.80897665e-01 8.94462891e-04 1.79657674e-02 1.21052389e-04 1.21052389e-04] [ 8.78679856e-01 1.18916387e-01 8.01252314e-04 8.01252314e-04 8.01252314e-04]]

Note:

If you print the shapes of x_exp, x_sum and s above and rerun the assessment cell, you will see that x_sum is of shape (2,1) while x_exp and s are of shape (2,5). x_exp/x_sum works due to numpy broadcasting.
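One practical caveat with the graded version: `np.exp` overflows to `inf` for inputs around 710 and above, and `inf/inf` gives `nan`. A widely used variant subtracts each row's maximum before exponentiating; because the factor $e^{-\max}$ cancels between numerator and denominator, the result is mathematically unchanged. The sketch below (`stable_softmax` is a hypothetical name, not part of the assignment) uses the same broadcasting trick and reproduces the expected output above.

```python
import numpy as np

def stable_softmax(x):
    # Row-wise softmax. Subtracting each row's maximum leaves the result unchanged
    # (e^{-max} cancels between numerator and denominator) but keeps np.exp from
    # overflowing for large inputs.
    x = np.asarray(x, dtype=float)
    shifted = x - np.max(x, axis=1, keepdims=True)  # (n, m) - (n, 1) via broadcasting
    x_exp = np.exp(shifted)
    return x_exp / np.sum(x_exp, axis=1, keepdims=True)

x = np.array([[9, 2, 5, 0, 0],
              [7, 5, 0, 0, 0]])
print(stable_softmax(x))
print(np.sum(stable_softmax(x), axis=1))  # each row sums to 1

# Without the shift, np.exp(1000) would overflow to inf and the ratio would be nan.
big = np.array([[1000.0, 1000.0]])
print(stable_softmax(big))  # [[0.5 0.5]]
```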

Congratulations! You now have a pretty good understanding of python numpy and have implemented a few useful functions that you will be using in deep learning.

What you need to remember:

np.exp(x) works for any np.array x and applies the exponential function to every coordinate

the sigmoid function and its gradient

image2vector is commonly used in deep learning

np.reshape is widely used. In the future, you'll see that keeping your matrix/vector dimensions straight will go toward eliminating a lot of bugs.

numpy has efficient built-in functions

broadcasting is extremely useful

2) Vectorization

In deep learning, you deal with very large datasets. Hence, a non-computationally-optimal function can become a huge bottleneck in your algorithm and can result in a model that takes ages to run. To make sure that your code is computationally efficient, you will use vectorization. For example, try to tell the difference between the following implementations of the dot/outer/elementwise product.

import time
import numpy as np

x1 = [9, 2, 5, 0, 0, 7, 5, 0, 0, 0, 9, 2, 5, 0, 0]
x2 = [9, 2, 2, 9, 0, 9, 2, 5, 0, 0, 9, 2, 5, 0, 0]

### CLASSIC DOT PRODUCT OF VECTORS IMPLEMENTATION ###
tic = time.process_time()
dot = 0
for i in range(len(x1)):
    dot += x1[i] * x2[i]
toc = time.process_time()
print ("dot = " + str(dot) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### CLASSIC OUTER PRODUCT IMPLEMENTATION ###
tic = time.process_time()
outer = np.zeros((len(x1), len(x2)))  # we create a len(x1)*len(x2) matrix with only zeros
for i in range(len(x1)):
    for j in range(len(x2)):
        outer[i, j] = x1[i] * x2[j]
toc = time.process_time()
print ("outer = " + str(outer) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### CLASSIC ELEMENTWISE IMPLEMENTATION ###
tic = time.process_time()
mul = np.zeros(len(x1))
for i in range(len(x1)):
    mul[i] = x1[i] * x2[i]
toc = time.process_time()
print ("elementwise multiplication = " + str(mul) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### CLASSIC GENERAL DOT PRODUCT IMPLEMENTATION ###
W = np.random.rand(3, len(x1))  # Random 3*len(x1) numpy array
tic = time.process_time()
gdot = np.zeros(W.shape[0])
for i in range(W.shape[0]):
    for j in range(len(x1)):
        gdot[i] += W[i, j] * x1[j]
toc = time.process_time()
print ("gdot = " + str(gdot) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

x1 = [9, 2, 5, 0, 0, 7, 5, 0, 0, 0, 9, 2, 5, 0, 0]
x2 = [9, 2, 2, 9, 0, 9, 2, 5, 0, 0, 9, 2, 5, 0, 0]

### VECTORIZED DOT PRODUCT OF VECTORS ###
tic = time.process_time()
dot = np.dot(x1, x2)
toc = time.process_time()
print ("dot = " + str(dot) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### VECTORIZED OUTER PRODUCT ###
tic = time.process_time()
outer = np.outer(x1, x2)
toc = time.process_time()
print ("outer = " + str(outer) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### VECTORIZED ELEMENTWISE MULTIPLICATION ###
tic = time.process_time()
mul = np.multiply(x1, x2)
toc = time.process_time()
print ("elementwise multiplication = " + str(mul) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

### VECTORIZED GENERAL DOT PRODUCT ###
tic = time.process_time()
dot = np.dot(W, x1)
toc = time.process_time()
print ("gdot = " + str(dot) + "\n ----- Computation time = " + str(1000*(toc - tic)) + "ms")

As you may have noticed, the vectorized implementation is much cleaner and more efficient. For bigger vectors/matrices, the differences in running time become even bigger.
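With only 15 elements, the timings above are tiny and dominated by noise, and `time.process_time()` (CPU time of the process) can have coarse resolution on some platforms. The sketch below repeats the dot-product comparison on larger random vectors using `time.perf_counter()`, a high-resolution wall-clock timer. The vector length of 200,000 and the seed are arbitrary assumptions, and actual timings will vary by machine.

```python
import time
import numpy as np

rng = np.random.default_rng(0)
a = rng.random(200_000)
b = rng.random(200_000)

# Explicit Python loop over the elements.
tic = time.perf_counter()
loop_dot = 0.0
for i in range(len(a)):
    loop_dot += a[i] * b[i]
loop_ms = 1000 * (time.perf_counter() - tic)

# Vectorized dot product.
tic = time.perf_counter()
vec_dot = np.dot(a, b)
vec_ms = 1000 * (time.perf_counter() - tic)

print(f"loop: {loop_ms:.2f} ms, np.dot: {vec_ms:.3f} ms")
# Same value up to floating-point rounding (the summation order differs).
print(np.isclose(loop_dot, vec_dot))
```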

Note that np.dot() performs a matrix-matrix or matrix-vector multiplication. This is different from np.multiply() and the * operator (which is equivalent to .* in Matlab/Octave), which performs an element-wise multiplication.
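The distinction is easiest to see on two small 2 x 2 matrices (values chosen only for illustration). `np.dot` and the `@` operator give the matrix product, while `np.multiply` and `*` multiply matching entries.

```python
import numpy as np

A = np.array([[1, 2],
              [3, 4]])
B = np.array([[5, 6],
              [7, 8]])

matrix_product = np.dot(A, B)        # [[19 22] [43 50]]: row-by-column sums
elementwise = np.multiply(A, B)      # [[ 5 12] [21 32]]: entry-by-entry products
star = A * B                         # same as np.multiply for numpy arrays
at = A @ B                           # the @ operator: matrix product, like np.dot for 2-D arrays

print(matrix_product)
print(elementwise)
```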

2.1 Implement the L1 and L2 loss functions

Exercise: Implement the numpy vectorized version of the L1 loss. You may find the function abs(x) (absolute value of x) useful.

Reminder:

The loss is used to evaluate the performance of your model. The bigger your loss is, the more different your predictions ($\hat{y}$) are from the true values ($y$). In deep learning, you use optimization algorithms like Gradient Descent to train your model and to minimize the cost.

L1 loss is defined as:

$$L_1(\hat{y}, y) = \sum_{i=0}^{m-1} |y^{(i)} - \hat{y}^{(i)}|$$

# GRADED FUNCTION: L1

def L1(yhat, y):
    """
    Arguments:
    yhat -- vector of size m (predicted labels)
    y -- vector of size m (true labels)

    Returns:
    loss -- the value of the L1 loss function defined above
    """
    ### START CODE HERE ### (≈ 1 line of code)
    loss = np.sum(np.abs(y - yhat))
    ### END CODE HERE ###

    return loss

yhat = np.array([.9, 0.2, 0.1, .4, .9])
y = np.array([1, 0, 0, 1, 1])
print("L1 = " + str(L1(yhat,y)))

Expected Output:

L1: 1.1

Exercise: Implement the numpy vectorized version of the L2 loss, defined as:

$$L_2(\hat{y}, y) = \sum_{i=0}^{m-1} (y^{(i)} - \hat{y}^{(i)})^2$$

# GRADED FUNCTION: L2

def L2(yhat, y):
    """
    Arguments:
    yhat -- vector of size m (predicted labels)
    y -- vector of size m (true labels)

    Returns:
    loss -- the value of the L2 loss function defined above
    """
    ### START CODE HERE ### (≈ 1 line of code)
    loss = np.dot((yhat - y), (yhat - y))
    ### END CODE HERE ###

    return loss

yhat = np.array([.9, 0.2, 0.1, .4, .9])
y = np.array([1, 0, 0, 1, 1])
print("L2 = " + str(L2(yhat,y)))

Expected Output:

L2: 0.43

Congratulations on completing this assignment. We hope that this little warm-up exercise helps you in the future assignments, which will be more exciting and interesting!

What to remember:

Vectorization is very important in deep learning. It provides computational efficiency and clarity.

You have reviewed the L1 and L2 loss.

You are familiar with many numpy functions such as np.sum, np.dot, np.multiply, np.maximum, etc...
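As a recap of the two losses, the sketch below computes them on the assignment's example data with a few equivalent numpy formulations. One design point worth knowing: `np.dot(diff, diff)` equals the sum of squares only when `diff` is 1-D; for an (m, 1) column vector it would raise a shape error, whereas `np.sum(np.square(diff))` works for any shape.

```python
import numpy as np

yhat = np.array([.9, 0.2, 0.1, .4, .9])
y = np.array([1, 0, 0, 1, 1])

diff = y - yhat

l1 = np.sum(np.abs(diff))          # L1: sum of absolute errors
l2_dot = np.dot(diff, diff)        # L2: sum of squared errors via a dot product (1-D only)
l2_sum = np.sum(np.square(diff))   # L2: the same quantity written element-wise

print(l1, l2_dot, l2_sum)  # approximately 1.1, 0.43, 0.43
```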

For an explanation of the softmax function, see https://www.zhihu.com/question/23765351