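Scraping Tmall Reviews (with Word Cloud Analysis)

Without further ado, let's get straight to the useful part.

The Taobao product chosen here is one sold by the OLAY official flagship store: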

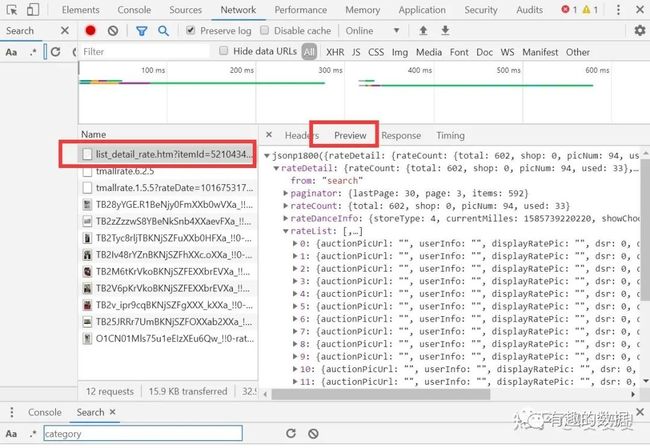

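After clicking through to the reviews page, open the page source and look for the link that serves the review data.

Next comes the crawl itself. The link is: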

rate.tmall.com/list_det

To see how the URL differs from one page to the next, open a few more pages:

rate.tmall.com/list_det
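Rather than comparing the long URLs by eye, the query strings can be diffed programmatically. The following is a minimal sketch using only `urllib.parse`; the helper name `changed_params` is hypothetical, and the two sample URLs are made-up, shortened stand-ins (only the `itemId` and the first `_ksTS`/`callback` pair come from this article).

```python
from urllib.parse import urlsplit, parse_qs


def changed_params(url_a, url_b):
    """Return the query parameters whose values differ between two URLs (sorted by name)."""
    qa = parse_qs(urlsplit(url_a).query, keep_blank_values=True)
    qb = parse_qs(urlsplit(url_b).query, keep_blank_values=True)
    keys = sorted(set(qa) | set(qb))
    return [k for k in keys if qa.get(k) != qb.get(k)]


# Hypothetical page-1 and page-2 URLs, cut down to a few parameters for illustration
page1 = ("https://rate.tmall.com/list_detail_rate.htm?itemId=597319717047"
         "&order=3&currentPage=1&_ksTS=1526545121518_1881&callback=jsonp1882")
page2 = ("https://rate.tmall.com/list_detail_rate.htm?itemId=597319717047"
         "&order=3&currentPage=2&_ksTS=1526545135902_2107&callback=jsonp2108")

print(changed_params(page1, page2))  # ['_ksTS', 'callback', 'currentPage']
```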

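You will then see that the parameters that change are currentPage, _ksTS and callback. Only currentPage actually matters; the other two simply change with the time of the request and can be ignored.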
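If you do want fresh values for all three parameters, a URL for any page can be built by rewriting the query string. This is a sketch under an assumption: it follows the pattern visible in the sample values quoted in the crawler's comment (`_ksTS=1526545121518_1881`, `callback=jsonp1882`), i.e. a millisecond timestamp plus a counter, and `jsonp` plus that counter + 1. The function `page_url` and its `counter` default are hypothetical, not something Tmall documents.

```python
import time
from urllib.parse import urlsplit, urlunsplit, parse_qs, parse_qsl, urlencode


def page_url(base_url, page, counter=1881):
    """Return base_url with currentPage set to `page` and new _ksTS/callback values.

    Assumed format (inferred from sample values, not documented):
    _ksTS=<milliseconds>_<counter>, callback=jsonp<counter + 1>.
    """
    parts = urlsplit(base_url)
    # Keep every existing parameter (including empty ones) in its original order
    params = dict(parse_qsl(parts.query, keep_blank_values=True))
    params["currentPage"] = str(page)
    params["_ksTS"] = f"{int(time.time() * 1000)}_{counter}"
    params["callback"] = f"jsonp{counter + 1}"
    return urlunsplit(parts._replace(query=urlencode(params)))


url = page_url("https://rate.tmall.com/list_detail_rate.htm"
               "?itemId=597319717047&order=3&currentPage=1", 2)
print(parse_qs(urlsplit(url).query)["currentPage"])  # ['2']
```

Going through `parse_qsl`/`urlencode` instead of string concatenation means the page number can never be glued onto the wrong parameter, which is exactly the failure the crawler below had with its garbled `¤tPage=`.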

Now for the code. The complete code is as follows:

#导入需要的库

import requests

from bs4 import BeautifulSoup as bs

import json

import csv

import re

import time

#宏变量存储目标js的URL列表

URL_LIST = []

cookies=['放置自己的cookies']

'''

URL链接中的_ksTS,callback参数的解析

_ksTS=1526545121518_1881

callback=jsonp1882

'''

t=str(time.time()).split('.')

print(t[0],t[1])

#生成链接列表

def get_url(num):

# urlFront = 'https://rate.tmall.com/list_detail_rate.htm?itemId=10905215461&spuId=273210686&sellerId=525910381&order=3¤tPage='

url='https://rate.tmall.com/list_detail_rate.htm?itemId=597319717047&spuId=1216294042&sellerId=2201435095942&order=3¤tPage='

urlRear = '&append=0&content=1&tagId=&posi=&picture=&groupId=&ua=098%23E1hvHQvRvpQvUpCkvvvvvjiPRLqp0jlbn2q96jD2PmPWsjn2RL5wQjnhn2cysjnhR86CvC8h98KKXvvveSQDj60x0foAKqytvpvhvvCvp86Cvvyv9PPQt9vvHI4rvpvEvUmkIb%2BvvvRCiQhvCvvvpZptvpvhvvCvpUyCvvOCvhE20WAivpvUvvCC8n5y6J0tvpvIvvCvpvvvvvvvvhZLvvvvtQvvBBWvvUhvvvCHhQvvv7QvvhZLvvvCfvyCvhAC03yXjNpfVE%2BffCuYiLUpVE6Fp%2B0xhCeOjLEc6aZtn1mAVAdZaXTAdXQaWg03%2B2e3rABCCahZ%2Bu0OJooy%2Bb8reEyaUExreEKKD5HavphvC9vhphvvvvGCvvpvvPMM3QhvCvmvphmCvpvZzPQvcrfNznswOiaftlSwvnQ%2B7e9%3D&needFold=0&_ksTS=1552466697082_2019&callback=jsonp2020'

for i in range(0,num):

URL_LIST.append(url+str(1+i)+urlRear)

#获取评论数据

def get_content(num):

#循环获取每一页评论

for i in range(num):

#头文件,没有头文件会返回错误的js

headers = {

'cookie':cookies[0],

'user-agent':'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.132 Safari/537.36',

'referer': 'https://detail.tmall.com/item.htm?spm=a1z10.5-b-s.w4011-17205939323.51.30156440Aer569&id=41212119204&rn=06f66c024f3726f8520bb678398053d8&abbucket=19&on_comment=1&sku_properties=134942334:3226348',

'accept': '*/*',

'accept-encoding':'gzip, deflate, br',

'accept-language': 'zh-CN,zh;q=0.9'

}

#解析JS文件内容

print('第{}页'.format(i+1))

# print(URL_LIST[i])

content = requests.get(URL_LIST[i],headers=headers).text

data=re.findall(r'{.*}',content)[0]

data=json.loads(data)

# print(data)

items=data['rateDetail']['rateList']

D=[]

for item in items:

product=item['auctionSku']

name=item['displayUserNick']

content=item['rateContent']

times=item['rateDate']

data=[product,name,content,times]

D.append(data)

save_csv(D)

def save_csv(data):

with open('./text.csv', 'a', encoding='utf-8',newline='')as file:

writer = csv.writer(file)

writer.writerows(data)

#主函数

if __name__ == "__main__":

header = ['产品','评论人','评论内容','评论时间']

with open('text.csv', 'a',encoding='utf-8',newline='')as f:

write=csv.writer(f)

write.writerow(header)

page=100

get_url(page)

# 获取评论页数

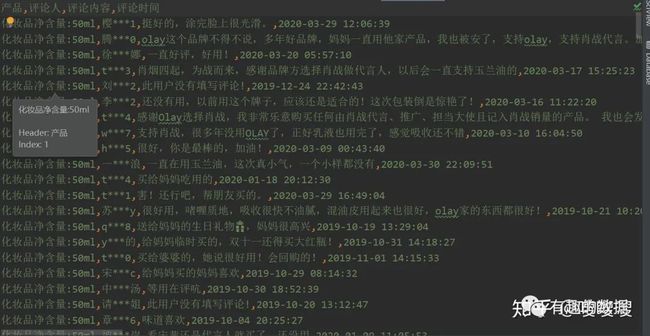

get_content(100)在爬取的时候必须加上cookies才能获取数据,可以选择自己的cookies来测试一下,爬取的结果如下所示:

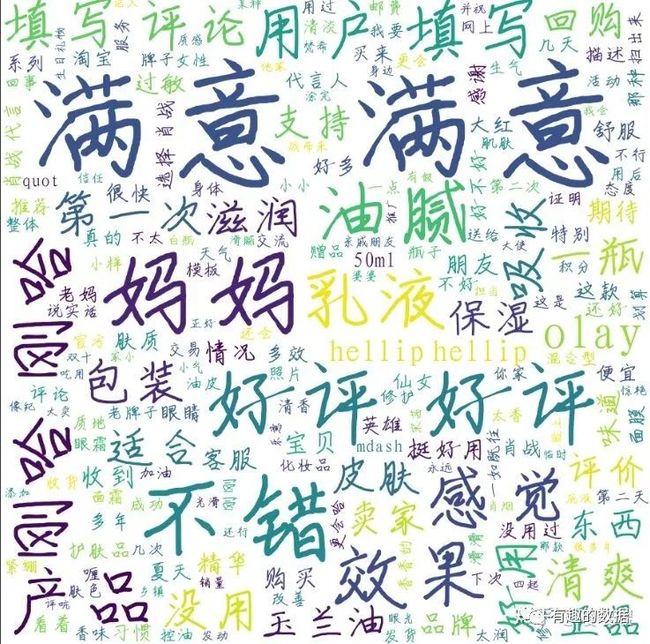
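Because the endpoint needs a valid cookie, it helps to check the parsing step offline first. Below is a minimal sketch of the same logic `get_content` uses (strip the JSONP wrapper, then read `rateDetail.rateList`), split into two hypothetical helpers, `parse_jsonp` and `extract_rows`. The sample response is hand-written in the shape the crawler expects; it is not real Tmall data.

```python
import json
import re


def parse_jsonp(text):
    """Strip a JSONP wrapper such as jsonp2020({...}) and return the parsed object."""
    # Greedy match from the first '{' to the last '}'; re.S lets '.' span newlines
    match = re.search(r"\{.*\}", text, re.S)
    if match is None:
        raise ValueError("no JSON object found in response")
    return json.loads(match.group(0))


def extract_rows(payload):
    """Turn rateDetail.rateList into [product, nickname, review text, date] rows."""
    rows = []
    for item in payload["rateDetail"]["rateList"]:
        rows.append([item["auctionSku"], item["displayUserNick"],
                     item["rateContent"], item["rateDate"]])
    return rows


# Hypothetical, hand-written response in the shape the crawler expects
sample = ('jsonp2020({"rateDetail": {"rateList": [{"auctionSku": "50ml", '
          '"displayUserNick": "t***1", "rateContent": "很好用", '
          '"rateDate": "2019-03-13 10:00:00"}]}})')

print(extract_rows(parse_jsonp(sample)))
```

If the cookie is missing or expired, the real response usually will not contain `rateDetail`, so a `KeyError` at this step is a hint to refresh the cookie rather than a parsing bug.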

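Hold on, don't leave yet! As a data analyst, you can't just scrape the data and call it a day; you should at least do some simple analysis, such as a word cloud.

The word cloud above is only for reference, since only 160 days' worth of reviews were crawled. For a detailed analysis, crawl the complete set of reviews.

Of course, the shape and the font can be changed, and word frequencies can be counted as well; I won't go into detail here.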
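For the word-frequency count, a minimal sketch: the word-cloud script's `main()` returns the segmented reviews as one space-separated string, and `collections.Counter` can count that directly. The helper `top_words`, the sample string and the tiny stopword set are hypothetical; in practice you would pass `main()`'s output and the list from `stopwordslist()`.

```python
import re
from collections import Counter


def top_words(segmented, stopwords, n=10):
    """Count word frequencies in a space-separated string of already-segmented words.

    Drops stopwords and tokens made up only of digits, ASCII letters or dots.
    """
    junk = re.compile(r"^[0-9a-zA-Z.]+$")
    words = [w for w in segmented.split()
             if w not in stopwords and not junk.match(w)]
    return Counter(words).most_common(n)


# Hypothetical pre-segmented text (what jieba output joined by spaces looks like)
text = "保湿 效果 很 好 保湿 50ml 好 的 保湿"
print(top_words(text, stopwords={"很", "的"}, n=3))
# [('保湿', 3), ('好', 2), ('效果', 1)]
```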

Reference code:

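```python
import re

import jieba
import numpy as np
import pandas as pd
from PIL import Image
from wordcloud import WordCloud

# Review CSV produced by the crawler (the crawler above writes text.csv; here the file is named olay.csv)
df = pd.read_csv('./olay.csv', encoding='utf-8')
print(df['评论内容'])
items = df['评论内容'].astype(str).tolist()


# Build the stopword list
def stopwordslist():
    with open('./stop_word.txt', 'r', encoding='utf-8') as f:
        return [line.strip() for line in f]


# Remove digits, English letters and similar symbols
def remove_sub(input_str):
    # Digits (including 0) and the decimal point
    shuzi = u'0-9.'
    # English letters
    zimu = u'a-zA-Z'
    output_str = re.sub(r'[{}{}]+'.format(shuzi, zimu), '', input_str)
    return output_str


def main():
    # Load the stopwords once instead of once per review
    stopwords = set(stopwordslist())
    outstr = ''
    for item in items:
        # Precise-mode segmentation with jieba
        for j in jieba.cut(item, cut_all=False):
            if j in stopwords:
                continue
            # Skip tokens that are only digits/letters/dots or whitespace (tabs, spaces, newlines)
            if not remove_sub(j).strip():
                continue
            outstr += j + " "
    return outstr


# Image whose shape the word cloud fills (pure-white areas are left empty)
alice_mask = np.array(Image.open('./0.png'))

cloud = WordCloud(
    # Font path; without a Chinese font the words render as garbled boxes
    font_path="./ziti.ttf",
    # font_path=path.join(d, 'simsun.ttc'),
    # Background colour
    background_color='white',
    # Shape of the word cloud
    mask=alice_mask,
    # Maximum number of words
    max_words=200,
    # Largest font size
    max_font_size=200,
    random_state=1,
    # Ignored when a mask is given; the mask's size is used instead
    width=400,
    height=800,
)

cloud.generate(main())
cloud.to_file('./pic1.png')
```

If you found this useful, feel free to follow my WeChat official account.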

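With the arrival of the big data era, data is becoming ever more important. It can help us see the essence of an industry and understand an industry more quickly. Follow the WeChat official account DT学说 and step into the age of data.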
