TensorBoard Visualization

Namespaces and Nodes in the TensorBoard Graph

To keep the computation nodes in the visualized graph organized, TensorBoard uses TensorFlow namespaces to manage the nodes shown in the graph.

import tensorflow as tf

with tf.variable_scope("foo"):
    a = tf.get_variable("bar", [1])
    print(a.name)

with tf.variable_scope("bar"):
    b = tf.get_variable("bar", [1])
    print(b.name)

"""
foo/bar:0
bar/bar:0
"""

Besides tf.variable_scope, tf.name_scope also provides namespace management. The two functions are equivalent in most cases; the only difference is that tf.name_scope has no effect on variables created with tf.get_variable.

with tf.name_scope("a"):
    a = tf.Variable([1])
    print(a.name)

    a = tf.get_variable("b", [1])
    print(a.name)

"""
a/Variable:0
b:0
"""

TensorBoard organizes the nodes in the visualized graph according to their namespaces.

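As a rough picture of that grouping, node names can be split at the first "/" and collected under their top-level scope. The sketch below is plain Python and does not reproduce how TensorBoard is actually implemented; the function `group_by_top_scope` and the sample node names are illustrative assumptions.

```python
from collections import defaultdict


def group_by_top_scope(node_names):
    """Group node names by the part before the first '/'.

    Nodes without any scope are collected under the empty string.
    """
    groups = defaultdict(list)
    for name in node_names:
        scope, separator, rest = name.partition("/")
        if separator:
            groups[scope].append(rest)
        else:
            groups[""].append(name)
    return dict(groups)


# Illustrative node names, not read from a real graph.
names = ["input1/input1", "input2/random_uniform", "input2/input2", "add"]
for scope, members in group_by_top_scope(names).items():
    print(repr(scope), members)
```

Each top-level scope then corresponds to one collapsible box in the graph view, with unscoped nodes such as `add` drawn on their own.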
with tf.name_scope("input1"):
    input1 = tf.constant([1.0, 2.0, 3.0], name="input1")

with tf.name_scope("input2"):
    input2 = tf.Variable(tf.random_uniform([3]), name="input2")

output = tf.add_n([input1, input2], name="add")

writer = tf.summary.FileWriter("log/simple_example.log", tf.get_default_graph())
writer.close()

After that, start tensorboard and open localhost:6006 in a browser to view the computation graph.

tensorboard --logdir=log/simple_example.log

Next, use TensorBoard to visualize mnist.py.
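One detail of the script that follows is worth a quick sketch beforehand: it passes `staircase=True` to `tf.train.exponential_decay`, so the learning rate drops in discrete steps rather than continuously. The plain-Python function below reproduces the documented formula `base_rate * decay_rate ** (global_step / decay_steps)`, with integer division when `staircase` is set. The figure of 55000 training examples is an assumption about the MNIST split loaded by `input_data`; the other numbers are the constants from the script.

```python
def exponential_decay(base_rate, global_step, decay_steps, decay_rate, staircase=False):
    """Plain-Python version of the exponential learning-rate decay formula."""
    if staircase:
        # Integer division: the rate only changes once every decay_steps steps.
        exponent = global_step // decay_steps
    else:
        exponent = global_step / decay_steps
    return base_rate * decay_rate ** exponent


LEARNING_RATE_BASE = 0.8
LEARNING_RATE_DECAY = 0.99
BATCH_SIZE = 100
NUM_TRAIN_EXAMPLES = 55000  # assumption: size of mnist.train in this loader
DECAY_STEPS = NUM_TRAIN_EXAMPLES // BATCH_SIZE  # one pass over the training data

for step in (0, 549, 550, 1100):
    rate = exponential_decay(
        LEARNING_RATE_BASE, step, DECAY_STEPS, LEARNING_RATE_DECAY, staircase=True)
    print(step, rate)
```

With these assumed values the rate stays at its base value for the first 550 steps and is multiplied by 0.99 after each further block of 550 steps.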

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

import mnist_inference

BATCH_SIZE = 100
LEARNING_RATE_BASE = 0.8
LEARNING_RATE_DECAY = 0.99
REGULARIZATION_RATE = 0.0001
TRAINING_STEPS = 3000
MOVING_AVERAGE_DECAY = 0.99

def train(mnist):
    # Namespace for the input data.
    with tf.name_scope('input'):
        x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')
        y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-input')

    regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)
    y = mnist_inference.inference(x, regularizer)
    global_step = tf.Variable(0, trainable=False)

    # Namespace for the moving-average operations.
    with tf.name_scope("moving_average"):
        variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
        variables_averages_op = variable_averages.apply(tf.trainable_variables())

    # Namespace for computing the loss function.
    with tf.name_scope("loss_function"):
        cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))
        cross_entropy_mean = tf.reduce_mean(cross_entropy)
        loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

    # Namespace for the learning rate, the optimizer, and the per-step training op.
    with tf.name_scope("train_step"):
        learning_rate = tf.train.exponential_decay(
            LEARNING_RATE_BASE,
            global_step,
            mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,
            staircase=True)

        train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

        with tf.control_dependencies([train_step, variables_averages_op]):
            train_op = tf.no_op(name='train')

    writer = tf.summary.FileWriter("log", tf.get_default_graph())

    # Train the model.
    with tf.Session() as sess:
        tf.global_variables_initializer().run()
        for i in range(TRAINING_STEPS):
            xs, ys = mnist.train.next_batch(BATCH_SIZE)

            if i % 1000 == 0:
                # Configure the information to record at run time.
                run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
                # Proto that holds the recorded run information.
                run_metadata = tf.RunMetadata()
                _, loss_value, step = sess.run(
                    [train_op, loss, global_step], feed_dict={x: xs, y_: ys},
                    options=run_options, run_metadata=run_metadata)
                writer.add_run_metadata(run_metadata=run_metadata, tag=("tag%d" % i), global_step=i)
                print("After %d training step(s), loss on training batch is %g." % (step, loss_value))
            else:
                _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})

    writer.close()

def main(argv=None):
    mnist = input_data.read_data_sets("../../datasets/MNIST_data", one_hot=True)
    train(mnist)

if __name__ == '__main__':
    main()

"""
After 1 training step(s), loss on training batch is 2.87635.
After 1001 training step(s), loss on training batch is 0.364974.
After 2001 training step(s), loss on training batch is 0.174141.
"""

Visualizing Monitoring Metrics

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

SUMMARY_DIR = "log"
BATCH_SIZE = 100
TRAIN_STEPS = 3000

def variable_summaries(var, name):
    with tf.name_scope('summaries'):
        tf.summary.histogram(name, var)
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean/' + name, mean)
        stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev/' + name, stddev)

def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
    with tf.name_scope(layer_name):
        with tf.name_scope('weights'):
            weights = tf.Variable(tf.truncated_normal([input_dim, output_dim], stddev=0.1))
            variable_summaries(weights, layer_name + '/weights')

        with tf.name_scope('biases'):
            biases = tf.Variable(tf.constant(0.0, shape=[output_dim]))
            variable_summaries(biases, layer_name + '/biases')

        with tf.name_scope('Wx_plus_b'):
            preactivate = tf.matmul(input_tensor, weights) + biases
            tf.summary.histogram(layer_name + '/pre_activations', preactivate)

        activations = act(preactivate, name='activation')
        # Record the distribution of the layer outputs after the activation function.
        tf.summary.histogram(layer_name + '/activations', activations)
        return activations

def main():
    mnist = input_data.read_data_sets("../../datasets/MNIST_data", one_hot=True)

    with tf.name_scope('input'):
        x = tf.placeholder(tf.float32, [None, 784], name='x-input')
        y_ = tf.placeholder(tf.float32, [None, 10], name='y-input')

    with tf.name_scope('input_reshape'):
        image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
        tf.summary.image('input', image_shaped_input, 10)

    hidden1 = nn_layer(x, 784, 500, 'layer1')
    y = nn_layer(hidden1, 500, 10, 'layer2', act=tf.identity)

    with tf.name_scope('cross_entropy'):
        cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=y, labels=y_))
        tf.summary.scalar('cross_entropy', cross_entropy)

    with tf.name_scope('train'):
        train_step = tf.train.AdamOptimizer(0.001).minimize(cross_entropy)

    with tf.name_scope('accuracy'):
        with tf.name_scope('correct_prediction'):
            correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
        with tf.name_scope('accuracy'):
            accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        tf.summary.scalar('accuracy', accuracy)

    merged = tf.summary.merge_all()

    with tf.Session() as sess:
        summary_writer = tf.summary.FileWriter(SUMMARY_DIR, sess.graph)
        tf.global_variables_initializer().run()

        for i in range(TRAIN_STEPS):
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            # Run the training step together with all summary ops to get this step's log.
            summary, _ = sess.run([merged, train_step], feed_dict={x: xs, y_: ys})
            # Write the collected summaries to the log file so that TensorBoard
            # can pick up the information for this run.
            summary_writer.add_summary(summary, i)

    summary_writer.close()

if __name__ == '__main__':
    main()
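The scalar statistics that `variable_summaries` logs are easy to check by hand. As a minimal sketch, the plain-Python function below computes the same two quantities for a flat list of numbers: the mean, and the standard deviation written as `sqrt(mean((v - mean)^2))`, which is the population form (dividing by N, not N - 1). The name `summary_stats` and the sample values are illustrative assumptions.

```python
import math


def summary_stats(values):
    """Return (mean, stddev) using the formulas from variable_summaries."""
    mean = sum(values) / len(values)
    # Population standard deviation: average squared deviation, then square root.
    stddev = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    return mean, stddev


sample_weights = [1.0, 2.0, 3.0, 4.0]  # illustrative values
mean, stddev = summary_stats(sample_weights)
print("mean:", mean, "stddev:", stddev)
```

Comparing such hand-computed values against the curves under the `mean/...` and `stddev/...` tags is a quick way to confirm that a summary is attached to the intended tensor.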

TensorBoard Example from the Official TensorFlow Site

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the 'License');
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an 'AS IS' BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""A simple MNIST classifier which displays summaries in TensorBoard.

This is an unimpressive MNIST model, but it is a good example of using
tf.name_scope to make a graph legible in the TensorBoard graph explorer, and of
naming summary tags so that they are grouped meaningfully in TensorBoard.

It demonstrates the functionality of every TensorBoard dashboard.
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
import os
import sys

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

FLAGS = None

def train():
    # Import data
    mnist = input_data.read_data_sets(FLAGS.data_dir,
                                      fake_data=FLAGS.fake_data)

    sess = tf.InteractiveSession()

    # Create a multilayer model.

    # Input placeholders
    with tf.name_scope('input'):
        x = tf.placeholder(tf.float32, [None, 784], name='x-input')
        y_ = tf.placeholder(tf.int64, [None], name='y-input')

    with tf.name_scope('input_reshape'):
        image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
        tf.summary.image('input', image_shaped_input, 10)

    # We can't initialize these variables to 0 - the network will get stuck.
    def weight_variable(shape):
        """Create a weight variable with appropriate initialization."""
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)

    def bias_variable(shape):
        """Create a bias variable with appropriate initialization."""
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)

    def variable_summaries(var):
        """Attach a lot of summaries to a Tensor (for TensorBoard visualization)."""
        with tf.name_scope('summaries'):
            mean = tf.reduce_mean(var)
            tf.summary.scalar('mean', mean)
            with tf.name_scope('stddev'):
                stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
            tf.summary.scalar('stddev', stddev)
            tf.summary.scalar('max', tf.reduce_max(var))
            tf.summary.scalar('min', tf.reduce_min(var))
            tf.summary.histogram('histogram', var)

    def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
        """Reusable code for making a simple neural net layer.

        It does a matrix multiply, bias add, and then uses ReLU to nonlinearize.
        It also sets up name scoping so that the resultant graph is easy to read,
        and adds a number of summary ops.
        """
        # Adding a name scope ensures logical grouping of the layers in the graph.
        with tf.name_scope(layer_name):
            # This Variable will hold the state of the weights for the layer
            with tf.name_scope('weights'):
                weights = weight_variable([input_dim, output_dim])
                variable_summaries(weights)
            with tf.name_scope('biases'):
                biases = bias_variable([output_dim])
                variable_summaries(biases)
            with tf.name_scope('Wx_plus_b'):
                preactivate = tf.matmul(input_tensor, weights) + biases
                tf.summary.histogram('pre_activations', preactivate)
            activations = act(preactivate, name='activation')
            tf.summary.histogram('activations', activations)
            return activations

    hidden1 = nn_layer(x, 784, 500, 'layer1')

    with tf.name_scope('dropout'):
        keep_prob = tf.placeholder(tf.float32)
        tf.summary.scalar('dropout_keep_probability', keep_prob)
        dropped = tf.nn.dropout(hidden1, keep_prob)

    # Do not apply softmax activation yet, see below.
    y = nn_layer(dropped, 500, 10, 'layer2', act=tf.identity)

    with tf.name_scope('cross_entropy'):
        # The raw formulation of cross-entropy,
        #
        # tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(tf.softmax(y)),
        #                               reduction_indices=[1]))
        #
        # can be numerically unstable.
        #
        # So here we use tf.losses.sparse_softmax_cross_entropy on the
        # raw logit outputs of the nn_layer above, and then average across
        # the batch.
        with tf.name_scope('total'):
            cross_entropy = tf.losses.sparse_softmax_cross_entropy(
                labels=y_, logits=y)
    tf.summary.scalar('cross_entropy', cross_entropy)

    with tf.name_scope('train'):
        train_step = tf.train.AdamOptimizer(FLAGS.learning_rate).minimize(
            cross_entropy)

    with tf.name_scope('accuracy'):
        with tf.name_scope('correct_prediction'):
            correct_prediction = tf.equal(tf.argmax(y, 1), y_)
        with tf.name_scope('accuracy'):
            accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    tf.summary.scalar('accuracy', accuracy)

    # Merge all the summaries and write them out to
    # /tmp/tensorflow/mnist/logs/mnist_with_summaries (by default)
    merged = tf.summary.merge_all()
    train_writer = tf.summary.FileWriter(FLAGS.log_dir + '/train', sess.graph)
    test_writer = tf.summary.FileWriter(FLAGS.log_dir + '/test')
    tf.global_variables_initializer().run()

    # Train the model, and also write summaries.
    # Every 10th step, measure test-set accuracy, and write test summaries
    # All other steps, run train_step on training data, & add training summaries

    def feed_dict(train):
        """Make a TensorFlow feed_dict: maps data onto Tensor placeholders."""
        if train or FLAGS.fake_data:
            xs, ys = mnist.train.next_batch(100, fake_data=FLAGS.fake_data)
            k = FLAGS.dropout
        else:
            xs, ys = mnist.test.images, mnist.test.labels
            k = 1.0
        return {x: xs, y_: ys, keep_prob: k}

    for i in range(FLAGS.max_steps):
        if i % 10 == 0:  # Record summaries and test-set accuracy
            summary, acc = sess.run([merged, accuracy], feed_dict=feed_dict(False))
            test_writer.add_summary(summary, i)
            print('Accuracy at step %s: %s' % (i, acc))
        else:  # Record train set summaries, and train
            if i % 100 == 99:  # Record execution stats
                run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
                run_metadata = tf.RunMetadata()
                summary, _ = sess.run([merged, train_step],
                                      feed_dict=feed_dict(True),
                                      options=run_options,
                                      run_metadata=run_metadata)
                train_writer.add_run_metadata(run_metadata, 'step%03d' % i)
                train_writer.add_summary(summary, i)
                print('Adding run metadata for', i)
            else:  # Record a summary
                summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True))
                train_writer.add_summary(summary, i)
    train_writer.close()
    test_writer.close()

def main(_):
    if tf.gfile.Exists(FLAGS.log_dir):
        tf.gfile.DeleteRecursively(FLAGS.log_dir)
    tf.gfile.MakeDirs(FLAGS.log_dir)
    train()

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--fake_data', nargs='?', const=True, type=bool,
                        default=False,
                        help='If true, uses fake data for unit testing.')
    parser.add_argument('--max_steps', type=int, default=1000,
                        help='Number of steps to run trainer.')
    parser.add_argument('--learning_rate', type=float, default=0.001,
                        help='Initial learning rate')
    parser.add_argument('--dropout', type=float, default=0.9,
                        help='Keep probability for training dropout.')
    parser.add_argument(
        '--data_dir',
        type=str,
        default=os.path.join(os.getenv('TEST_TMPDIR', '/tmp'),
                             'tensorflow/mnist/input_data'),
        help='Directory for storing input data')
    parser.add_argument(
        '--log_dir',
        type=str,
        default=os.path.join(os.getenv('TEST_TMPDIR', '/tmp'),
                             'tensorflow/mnist/logs/mnist_with_summaries'),
        help='Summaries log directory')
    FLAGS, unparsed = parser.parse_known_args()
    tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
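The flag handling at the end of the script relies on two `argparse` behaviors that are easy to miss. The sketch below uses only the standard library and a reduced copy of two of the script's arguments; `--other_flag` is a made-up flag used to show what ends up in `unparsed`. It illustrates that `parse_known_args` returns unrecognized arguments instead of failing, and that `type=bool` converts any non-empty string, including the text `False`, to `True`.

```python
import argparse

parser = argparse.ArgumentParser()
parser.add_argument('--fake_data', nargs='?', const=True, type=bool,
                    default=False)
parser.add_argument('--max_steps', type=int, default=1000)

# Known flags are parsed; unknown ones are handed back untouched.
flags, unparsed = parser.parse_known_args(
    ['--max_steps', '200', '--other_flag=1'])  # --other_flag is hypothetical
print(flags.max_steps, flags.fake_data, unparsed)

# A bare --fake_data takes the const value.
flags_bare, _ = parser.parse_known_args(['--fake_data'])
print(flags_bare.fake_data)

# bool('False') is True, so this does not switch the flag off.
flags_explicit, _ = parser.parse_known_args(['--fake_data', 'False'])
print(flags_explicit.fake_data)
```

In practice this means `--fake_data` is best either omitted or passed without a value.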

Running the script requires specifying the data directory and the log directory.

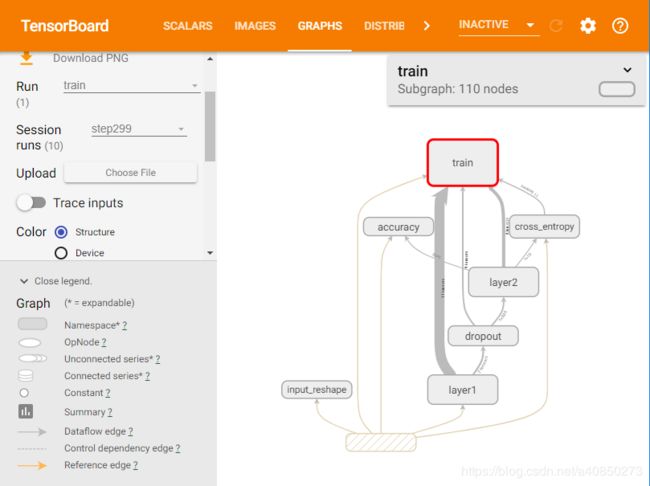

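python mnist.py --data_dir=datapath --log_dir=logpath

After that, the resulting computation graph can be viewed in TensorBoard.

tensorboard --logdir=logpath # same log path as specified above

If running tensorboard during debugging fails with the error "GetNext() takes 1 positional argument but 2 were given", the following commands can resolve it.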

pip install tb-nightly

pip install -U protobuf
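To see which versions are installed before and after running these commands, the standard library can report them. This is only a convenience sketch, assuming Python 3.8 or later for `importlib.metadata`; the helper name `installed_version` is hypothetical, and a package that is not installed is reported as `None`.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Distribution names as they would be passed to pip.
for package in ("tensorboard", "tb-nightly", "protobuf"):
    print(package, installed_version(package))
```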