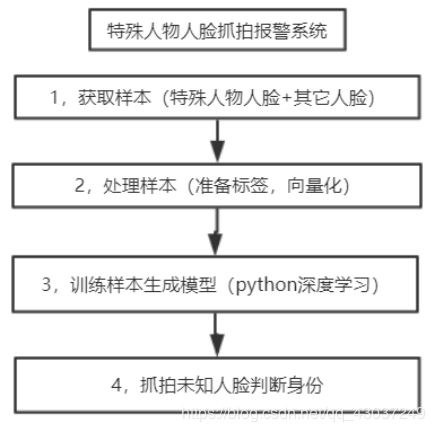

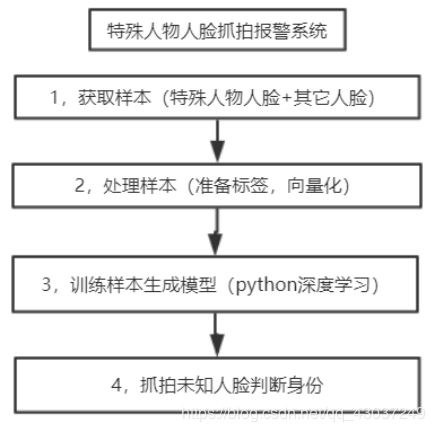

Conceptual framework

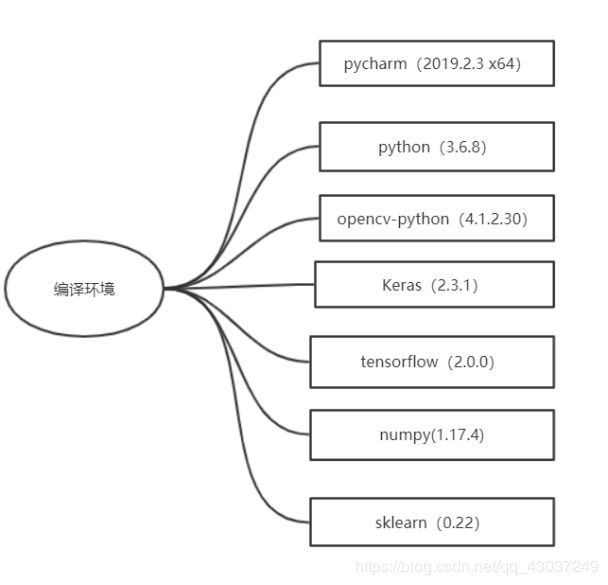

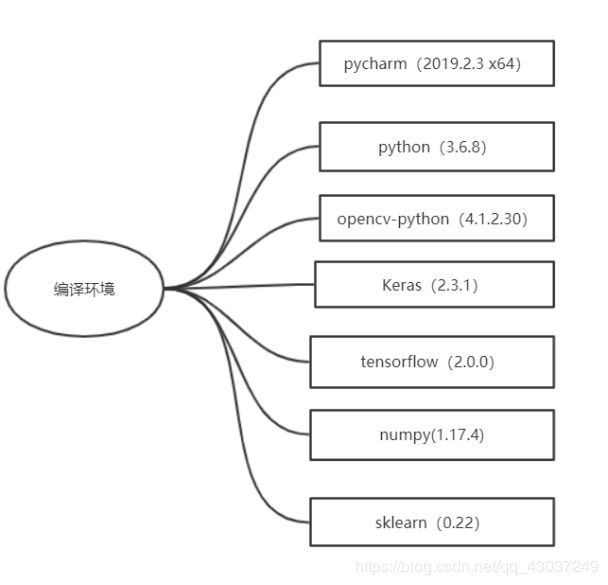

Environment setup

data_preparation.py (purpose: capture faces with the webcam and save them)

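```python
import os

import cv2

# Haar cascade file shipped with OpenCV; adjust this path to your own installation.
# (With the pip package opencv-python, the folder is available as cv2.data.haarcascades.)
CASCADE_PATH = 'E:/anaconda/Anaconda3/pkgs/libopencv-3.4.2-h20b85fd_0/Library/etc/haarcascades/haarcascade_frontalface_alt2.xml'


def CatchPICFromVideo(catch_num, path_name):
    """Read frames from the default webcam, crop every detected face and save it as <num>.jpg."""
    os.makedirs(path_name, exist_ok=True)  # cv2.imwrite just returns False if the folder is missing
    face_cascade = cv2.CascadeClassifier(CASCADE_PATH)
    camera = cv2.VideoCapture(0)
    num = 0
    while True:
        ret, frame = camera.read()
        if not ret:  # camera unavailable or stream ended
            break
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
        faces = face_cascade.detectMultiScale(gray, 1.1, 5)  # scaleFactor=1.1, minNeighbors=5
        for (x, y, w, h) in faces:
            img_name = f'{path_name}/{num}.jpg'
            image = frame[y:y + h, x:x + w]  # crop before drawing, so the box is not saved
            print(img_name)
            cv2.imwrite(img_name, image)
            num += 1
            if num > catch_num:
                break
            # Draw the detection and the running count on the preview window
            cv2.rectangle(frame, (x, y), (x + w, y + h), (255, 0, 0), 2)
            font = cv2.FONT_HERSHEY_SIMPLEX
            cv2.putText(frame, f'num:{num}', (x + 30, y + 30), font, 1, (255, 0, 255), 4)
        if num > catch_num:
            break
        cv2.imshow('camera', frame)
        if cv2.waitKey(1) & 0xFF == ord('q'):  # press q to stop early
            break
    camera.release()
    cv2.destroyAllWindows()


if __name__ == '__main__':
    CatchPICFromVideo(100, './data/criminal')
```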
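The stopping condition `num > catch_num` is checked only after a crop has been written. As a result, a call with `catch_num=100` saves `0.jpg` through `100.jpg`, which is 101 crops. The camera-free sketch below replays that counting logic on made-up detection counts. `capture_names` and `faces_per_frame` are hypothetical names used only for illustration.

```python
def capture_names(faces_per_frame, catch_num, path_name):
    """Hypothetical replay of CatchPICFromVideo's naming/stopping logic, without a camera.

    faces_per_frame: number of faces detected in each successive frame (made-up input).
    """
    names, num = [], 0
    for n_faces in faces_per_frame:
        for _ in range(n_faces):
            names.append(f'{path_name}/{num}.jpg')  # the crop is written first...
            num += 1
            if num > catch_num:                     # ...and the limit is checked afterwards
                return names
    return names


print(capture_names([2, 0, 3], catch_num=3, path_name='./data/criminal'))
```

Changing the comparison to `num >= catch_num` in both places would make the loop save exactly `catch_num` crops.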

photo_face.py (purpose: crop valid faces from existing photos and save them)

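```python
import os
import shutil
import time

import cv2

# Adjust this path to your own OpenCV installation (see data_preparation.py)
CASCADE_PATH = 'E:/anaconda/Anaconda3/pkgs/libopencv-3.4.2-h20b85fd_0/Library/etc/haarcascades/haarcascade_frontalface_alt.xml'


def getAllPath(dirpath, *suffix):
    """Recursively collect every file under dirpath whose extension is in suffix (e.g. '.jpg')."""
    PathArray = []
    for r, ds, fs in os.walk(dirpath):
        for fn in fs:
            if os.path.splitext(fn)[1] in suffix:
                PathArray.append(os.path.join(r, fn))
    return PathArray


def readPicSaveFace_1(sourcePath, targetPath, invalidPath, *suffix):
    """Crop faces from every image in sourcePath into targetPath; move images without a face to invalidPath."""
    try:
        # shutil.move would rename the file to invalidPath if that folder did not exist
        os.makedirs(targetPath, exist_ok=True)
        os.makedirs(invalidPath, exist_ok=True)
        ImagePaths = getAllPath(sourcePath, *suffix)
        count = 1
        face_cascade = cv2.CascadeClassifier(CASCADE_PATH)
        for imagePath in ImagePaths:
            try:
                img = cv2.imread(imagePath)
                if img is None:  # cv2.imread returns None for unreadable files
                    continue
                gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
                faces = face_cascade.detectMultiScale(gray, 1.1, 5)
                if len(faces):
                    for (x, y, w, h) in faces:
                        if w >= 16 and h >= 16:  # skip tiny, unreliable detections
                            fileName = f'{int(time.time())}{count}'  # timestamp + counter
                            # Clamp the box to the image borders, then crop
                            X, Y = int(x), int(y)
                            W = min(int(x + w), img.shape[1])
                            H = min(int(y + h), img.shape[0])
                            f = img[Y:H, X:W]
                            cv2.imwrite(os.path.join(targetPath, f'{fileName}.jpg'), f)
                            count += 1
                            print(imagePath + ' has a face')
                else:
                    shutil.move(imagePath, invalidPath)  # no face found: set the image aside
            except Exception as e:  # one bad file should not stop the batch
                print(f'Skipping {imagePath}: {e}')
                continue
    except IOError:
        print("Error")
    else:
        print('Found ' + str(count - 1) + ' faces; saved to ' + targetPath)


if __name__ == '__main__':
    invalidPath = r'C:\Users\ASUS\Desktop\data\invalid'
    sourcePath = r'C:\Users\ASUS\Desktop\data\web'
    targetPath1 = r'C:\Users\ASUS\Desktop\data\new'
    # Suffixes need the leading dot: os.path.splitext returns '.png', not 'png'
    readPicSaveFace_1(sourcePath, targetPath1, invalidPath, '.jpg', '.JPG', '.png', '.PNG')
```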
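`getAllPath` compares `os.path.splitext(fn)[1]` with the given suffixes exactly. Each suffix must therefore include its leading dot, and every upper/lower-case spelling has to be listed separately. A hypothetical variant that normalizes both sides avoids that bookkeeping. `get_all_paths_ci` is an illustrative name and is not part of the original project.

```python
import os


def get_all_paths_ci(dirpath, *suffixes):
    """Hypothetical case-insensitive variant of getAllPath.

    Accepts suffixes with or without the leading dot ('png', '.PNG', ...).
    """
    wanted = {('' if s.startswith('.') else '.') + s.lower() for s in suffixes}
    paths = []
    for root, _dirs, files in os.walk(dirpath):
        for fn in files:
            if os.path.splitext(fn)[1].lower() in wanted:
                paths.append(os.path.join(root, fn))
    return sorted(paths)  # os.walk order is not guaranteed; sort for reproducibility
```

For example, `get_all_paths_ci(r'C:\Users\ASUS\Desktop\data\web', 'jpg', 'png')` would pick up `.jpg`, `.JPG`, `.png` and `.PNG` files alike.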

face_dataset.py (purpose: preprocess the samples)

```python
import os

import cv2
import numpy as np

IMAGE_SIZE = 64


def resize_image(image, height=IMAGE_SIZE, width=IMAGE_SIZE):
    """Pad the image with black borders to a square, then scale it to height x width."""
    top, bottom, left, right = 0, 0, 0, 0
    h, w, _ = image.shape
    longest_edge = max(h, w)
    if h < longest_edge:
        d = longest_edge - h
        top = d // 2
        bottom = d - top  # also correct when d is odd
    elif w < longest_edge:
        d = longest_edge - w
        left = d // 2
        right = d - left
    BLACK = [0, 0, 0]
    constant = cv2.copyMakeBorder(image, top, bottom, left, right, cv2.BORDER_CONSTANT, value=BLACK)
    # cv2.resize expects dsize as (width, height)
    return cv2.resize(constant, (width, height))


# Module-level lists: calling load_dataset twice in one process appends the images again
images, labels = list(), list()


def read_path(path_name):
    """Recursively load every .jpg under path_name; each image is labelled with its folder path."""
    for dir_item in os.listdir(path_name):
        full_path = os.path.abspath(os.path.join(path_name, dir_item))
        if os.path.isdir(full_path):
            read_path(full_path)
        elif dir_item.endswith('.jpg'):
            image = cv2.imread(full_path)
            if image is None:  # skip unreadable files
                continue
            image = resize_image(image, IMAGE_SIZE, IMAGE_SIZE)
            images.append(image)
            labels.append(path_name)
    return images, labels


def load_dataset(path_name):
    """Return images as an (N, 64, 64, 3) array and labels as 1 (folder 'criminal') or 0 (any other folder)."""
    images, labels = read_path(path_name)
    images = np.array(images)
    print(images.shape)
    labels = np.array([1 if label.endswith('criminal') else 0 for label in labels])
    print(labels)
    return images, labels


if __name__ == '__main__':
    images, labels = load_dataset(os.path.join(os.getcwd(), 'data'))
    print('load over')
```
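`resize_image` pads the shorter side with black borders before scaling. This keeps the aspect ratio of a face crop instead of stretching it to 64×64. The padding step can be sketched with NumPy alone. `pad_to_square` is a hypothetical helper. When the size difference is odd, the extra row or column goes to the bottom or right.

```python
import numpy as np


def pad_to_square(image):
    """Hypothetical NumPy-only version of the padding step in resize_image."""
    h, w = image.shape[:2]
    side = max(h, w)
    top = (side - h) // 2
    bottom = side - h - top      # takes the extra row when the difference is odd
    left = (side - w) // 2
    right = side - w - left
    # Pad only the two spatial axes; leave the colour channels alone
    pad_width = ((top, bottom), (left, right)) + ((0, 0),) * (image.ndim - 2)
    return np.pad(image, pad_width, mode='constant', constant_values=0)


face = np.full((4, 7, 3), 255, dtype=np.uint8)  # a made-up 4x7 white crop
print(pad_to_square(face).shape)  # (7, 7, 3)
```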

face_train.py (build a convolutional neural network with Keras)

```python
import random
import warnings

import numpy as np
from sklearn.model_selection import train_test_split
# Assumes TensorFlow 2.x with the Keras 2 API (tf.keras, up to TF 2.15). The original used
# Keras 1-style arguments (border_mode, nb_epoch, samples_per_epoch) that tf.keras does not accept.
from tensorflow.keras.layers import Activation, Conv2D, Dense, Dropout, Flatten, MaxPool2D
from tensorflow.keras.models import Sequential, load_model
from tensorflow.keras.optimizers import SGD
from tensorflow.keras.optimizers.schedules import InverseTimeDecay
from tensorflow.keras.preprocessing.image import ImageDataGenerator
from tensorflow.keras.utils import to_categorical

from face_dataset import IMAGE_SIZE, load_dataset

warnings.filterwarnings('ignore')


class Dataset:
    def __init__(self, path_name):
        self.train_images = None
        self.train_labels = None
        self.valid_images = None
        self.valid_labels = None
        self.path_name = path_name
        self.input_shape = None

    def load(self, img_rows=IMAGE_SIZE, img_cols=IMAGE_SIZE, img_channels=3, nb_classes=2):
        images, labels = load_dataset(self.path_name)
        # Hold out 30% of the samples for validation
        train_images, valid_images, train_labels, valid_labels = train_test_split(
            images, labels, test_size=0.3, random_state=random.randint(0, 10))
        # Channels-last layout: (samples, rows, cols, channels)
        train_images = train_images.reshape(train_images.shape[0], img_rows, img_cols, img_channels)
        valid_images = valid_images.reshape(valid_images.shape[0], img_rows, img_cols, img_channels)
        self.input_shape = (img_rows, img_cols, img_channels)
        print(train_images.shape[0], 'train samples')
        print(valid_images.shape[0], 'valid samples')
        # One-hot labels to match the softmax output and the categorical_crossentropy loss
        self.train_labels = to_categorical(train_labels, nb_classes)
        self.valid_labels = to_categorical(valid_labels, nb_classes)
        # Scale pixel values to [0, 1]
        self.train_images = train_images.astype('float32') / 255.0
        self.valid_images = valid_images.astype('float32') / 255.0


class Model:
    MODEL_PATH = './face.model.h5'

    def __init__(self):
        self.model = None

    def build_model(self, dataset, nb_classes=2):
        # Two conv-conv-pool blocks followed by a dense classifier; 3x3 kernels, stride 1
        self.model = Sequential([
            Conv2D(32, (3, 3), padding='same', input_shape=dataset.input_shape),
            Activation('relu'),
            Conv2D(32, (3, 3)),
            Activation('relu'),
            MaxPool2D(pool_size=(2, 2)),
            Dropout(0.25),
            Conv2D(64, (3, 3), padding='same'),
            Activation('relu'),
            Conv2D(64, (3, 3)),
            Activation('relu'),
            MaxPool2D(pool_size=(2, 2)),
            Dropout(0.25),
            Flatten(),
            Dense(512),
            Activation('relu'),
            Dropout(0.5),
            Dense(nb_classes),
            Activation('softmax'),
        ])
        self.model.summary()

    def train(self, dataset, batch_size=20, nb_epoch=20, data_augmentation=True):
        # lr / (1 + 1e-6 * step): the schedule the old SGD(decay=1e-6) argument applied
        lr = InverseTimeDecay(initial_learning_rate=0.01, decay_steps=1, decay_rate=1e-6)
        sgd = SGD(learning_rate=lr, momentum=0.9, nesterov=True)
        self.model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])
        if not data_augmentation:
            self.model.fit(dataset.train_images, dataset.train_labels,
                           batch_size=batch_size, epochs=nb_epoch,
                           validation_data=(dataset.valid_images, dataset.valid_labels),
                           shuffle=True)
        else:
            # Random rotations, shifts and horizontal flips, generated on the fly
            datagen = ImageDataGenerator(
                featurewise_center=False,
                samplewise_center=False,
                featurewise_std_normalization=False,
                samplewise_std_normalization=False,
                zca_whitening=False,
                rotation_range=20,
                width_shift_range=0.2,
                height_shift_range=0.2,
                horizontal_flip=True,
                vertical_flip=False)
            datagen.fit(dataset.train_images)  # only needed for featurewise statistics
            # fit() accepts the generator directly (fit_generator is deprecated);
            # each epoch is one pass over the training set
            self.model.fit(datagen.flow(dataset.train_images, dataset.train_labels, batch_size=batch_size),
                           epochs=nb_epoch,
                           validation_data=(dataset.valid_images, dataset.valid_labels))

    def save_model(self, file_path=MODEL_PATH):
        self.model.save(file_path)

    def load_model(self, file_path=MODEL_PATH):
        self.model = load_model(file_path)

    def evaluate(self, dataset):
        score = self.model.evaluate(dataset.valid_images, dataset.valid_labels, verbose=1)
        print(self.model.metrics_names[1], ':', score[1] * 100)

    def face_predict(self, image):
        """Return the predicted class index (1 = criminal, 0 = others) for one 64x64x3 face image."""
        image = image.reshape((1, IMAGE_SIZE, IMAGE_SIZE, 3)).astype('float32') / 255.0
        result = self.model.predict(image)  # softmax probabilities (predict_proba was removed)
        print('result:', result)
        return int(np.argmax(result, axis=1)[0])  # replaces the removed predict_classes


if __name__ == '__main__':
    dataset = Dataset('./data/')
    dataset.load()
    model = Model()
    model.build_model(dataset)
    model.train(dataset)
    model.save_model(file_path='./data/me.face.model.h5')
    model.load_model(file_path='./data/me.face.model.h5')
    model.evaluate(dataset)
```
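The network built in `build_model` can be checked by hand. The arithmetic assumes 64×64×3 inputs and 3×3 kernels with stride 1, with padding `'same'` on the first convolution of each pair and `'valid'` on the second, and 2×2 max pooling. Under those assumptions the spatial size goes 64 → 64 → 62 → 31 → 31 → 29 → 14. The plain-Python sketch below reproduces that arithmetic and the trainable-parameter count for this configuration. The helper names are hypothetical.

```python
def conv_out(size, kernel=3, padding='valid'):
    """Output side length of a stride-1 convolution."""
    return size if padding == 'same' else size - kernel + 1


def conv_params(in_ch, out_ch, kernel=3):
    """Weights (kernel * kernel * in_ch * out_ch) plus one bias per filter."""
    return kernel * kernel * in_ch * out_ch + out_ch


def summarize(input_size=64, channels=3, nb_classes=2, dense_units=512):
    size, ch, total = input_size, channels, 0
    for filters in (32, 64):                        # the two conv-conv-pool blocks
        size = conv_out(size, padding='same')
        total += conv_params(ch, filters)
        ch = filters
        size = conv_out(size, padding='valid')
        total += conv_params(ch, filters)
        size //= 2                                  # 2x2 max pooling, remainder dropped
    flat = size * size * ch                         # Flatten()
    total += flat * dense_units + dense_units       # Dense(512)
    total += dense_units * nb_classes + nb_classes  # Dense(nb_classes)
    return size, flat, total


print(summarize())  # (14, 12544, 6489634)
```

About 99% of the parameters (6,423,040) belong to the `Dense(512)` layer that follows `Flatten()`, so that layer dominates the model size.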

face_test.py (capture faces and identify them)

```python
import cv2

import face_dataset
from face_train import Model

# Adjust this path to your own OpenCV installation (see data_preparation.py)
CASCADE_PATH = "E:/anaconda/Anaconda3/pkgs/libopencv-3.4.2-h20b85fd_0/Library/etc/haarcascades/haarcascade_frontalface_alt2.xml"

if __name__ == '__main__':
    model = Model()
    model.load_model(file_path='./data/me.face.model.h5')
    color = (0, 255, 0)  # BGR green for the bounding box
    camera = cv2.VideoCapture(0)
    cascade = cv2.CascadeClassifier(CASCADE_PATH)  # load once instead of on every frame
    while True:
        ret, frame = camera.read()
        if not ret:  # camera unavailable or stream ended
            break
        gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
        faces = cascade.detectMultiScale(gray, 1.1, 5)
        for (x, y, w, h) in faces:
            # Same preprocessing as in training: crop, pad to a square, resize to 64x64
            image = face_dataset.resize_image(frame[y:y + h, x:x + w])
            faceID = model.face_predict(image)
            label = 'criminal' if faceID == 1 else 'others'
            cv2.rectangle(frame, (x, y), (x + w, y + h), color, thickness=2)
            cv2.putText(frame, label, (x + 30, y + 30), cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 0, 255), 2)
        cv2.imshow("camera", frame)
        if cv2.waitKey(1) & 0xFF == ord('q'):  # press q to quit
            break
    camera.release()
    cv2.destroyAllWindows()
```
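`face_predict` turns the softmax output into a class index by taking the largest probability. The capture loop then shows index 1 as `criminal` and anything else as `others`. With two softmax outputs that sum to 1, this is equivalent to requiring P(criminal) > 0.5. A stricter cut-off would trade missed matches for fewer false `criminal` labels. The sketch below shows that decision rule on a probability vector. `label_from_probs` and its `threshold` parameter are hypothetical additions, not part of the original scripts.

```python
import numpy as np

LABELS = ('others', 'criminal')  # class index 0 and 1, as used in face_test.py


def label_from_probs(probs, threshold=0.5):
    """Hypothetical decision rule: 'criminal' only if its probability exceeds threshold."""
    probs = np.asarray(probs, dtype=float).reshape(-1)  # accepts shape (2,) or (1, 2)
    return LABELS[1] if probs[1] > threshold else LABELS[0]


print(label_from_probs([[0.2, 0.8]]))                 # criminal
print(label_from_probs([[0.2, 0.8]], threshold=0.9))  # others
```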