Decision Trees

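- 1. Constructing a Decision Tree

- 1.1 Information Gain

- 1.2 Splitting the Dataset

- 1.3 Building the Decision Tree Recursively

- 2. Plotting Trees with Matplotlib Annotations

- 3. Testing and Storing the Classifier

- 3.1 Testing the Algorithm: Classifying with a Decision Tree

- 3.2 Using the Algorithm: Storing the Decision Tree

- 4. Example: Predicting Contact Lens Type with a Decision Tree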

1. Constructing a Decision Tree

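- Pros: low computational cost, results that are easy to interpret, insensitive to missing intermediate values, and able to handle irrelevant features.

- Cons: prone to overfitting.

- Suitable data types: numeric (after discretization) and nominal values.

- This article uses the ID3 algorithm, which splits the dataset on the feature with the highest information gain.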

1.1 Information Gain

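- Information: if the items being classified can fall into several classes, the information of symbol $x_i$ is defined as $l(x_i) = -\log_2 p(x_i)$, where $p(x_i)$ is the probability of choosing that class.

- Entropy: the expected value of the information, $H = -\sum_{i=1}^{n} p(x_i)\log_2 p(x_i)$, where $n$ is the number of classes.

- Calculate the Shannon entropy of a given dataset. The more mixed the classes, the higher the entropy: in the example below, relabeling one 'yes' sample as a third class 'maybe' raises the entropy from about 0.971 to about 1.371.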

'''Calculate the Shannon entropy of a given dataset'''
from math import log

def calcShannonEnt(dataSet):
    numEntries = len(dataSet)
    labelCounts = {}
    # Count how often each class label (last column) occurs
    for featVec in dataSet:
        currentLabel = featVec[-1]
        if currentLabel not in labelCounts:
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    # H = -sum(p * log2(p)) over all classes
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries
        shannonEnt -= prob * log(prob, 2)
    return shannonEnt

[IN]: test = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

[IN]: calcShannonEnt(test)

[OUT]: 0.9709505944546686

[IN]: test2 = [[1, 1, 'maybe'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

[IN]: calcShannonEnt(test2)

[OUT]: 1.3709505944546687
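To make the trend concrete: for k equally likely classes the entropy is exactly log2(k) bits, so it keeps growing as classes are added. A minimal standalone sketch (label_entropy is a hypothetical helper, not part of the original code) that takes a plain list of class labels instead of full rows:

```python
from collections import Counter
from math import log2

def label_entropy(labels):
    """Shannon entropy, in bits, of a list of class labels (sketch)."""
    n = len(labels)
    # -sum(p * log2(p)) over the relative frequency p of each distinct label
    return sum(-(c / n) * log2(c / n) for c in Counter(labels).values())

# k equally likely classes -> log2(k) bits
for k in (1, 2, 4, 8):
    print(k, label_entropy(list(range(k))))
# 1 0.0
# 2 1.0
# 4 2.0
# 8 3.0

# Same class counts as `test` (2 x 'yes', 3 x 'no'): about 0.971 bits
print(label_entropy(['yes', 'yes', 'no', 'no', 'no']))
```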

1.2 Splitting the Dataset

- Split the dataset on a given feature: return the samples whose value in column `axis` equals `value`, with that feature column removed:

'''Split the dataset on a given feature'''

def splitDataSet(dataSet, axis, value):
    retDataSet = []
    for featVec in dataSet:
        if featVec[axis] == value:
            # Copy the row without column `axis` (slicing leaves dataSet untouched)
            reducedFeatVec = featVec[:axis]
            reducedFeatVec.extend(featVec[axis+1:])
            retDataSet.append(reducedFeatVec)
    return retDataSet

[IN]: splitDataSet(test, 0, 1)

[OUT]: [[1, 'yes'], [1, 'yes'], [0, 'no']]

[IN]: splitDataSet(test, 0, 0)

[OUT]: [[1, 'no'], [1, 'no']]
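chooseBestFeatureToSplit calls splitDataSet once for every distinct value of a feature. The same grouping can also be done in a single pass over the data. A minimal sketch, where partition_by_feature is a hypothetical name not used elsewhere in this article:

```python
def partition_by_feature(dataSet, axis):
    """Group rows by their value in column `axis`, dropping that column.

    Gives the same subsets as calling splitDataSet(dataSet, axis, v)
    for every distinct value v, but scans the data only once.
    """
    groups = {}
    for featVec in dataSet:
        # Slicing creates new lists, so the input rows are left untouched
        reduced = featVec[:axis] + featVec[axis + 1:]
        groups.setdefault(featVec[axis], []).append(reduced)
    return groups

test = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(partition_by_feature(test, 0))
# {1: [[1, 'yes'], [1, 'yes'], [0, 'no']], 0: [[1, 'no'], [1, 'no']]}
```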

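- Choose the best way to split the dataset: for every feature, compute the information gain (the reduction in entropy achieved by splitting on that feature), then pick the feature with the largest gain: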

'''Choose the best feature to split the dataset on'''

def chooseBestFeatureToSplit(dataSet):
    numFeatures = len(dataSet[0]) - 1   # the last column is the class label
    baseEntropy = calcShannonEnt(dataSet)
    bestInfoGain = 0.0
    bestFeature = -1                    # stays -1 if no feature has positive gain
    for i in range(numFeatures):
        featList = [example[i] for example in dataSet]
        uniqueVals = set(featList)
        newEntropy = 0.0
        # Weighted average entropy of the subsets produced by splitting on feature i
        for value in uniqueVals:
            subDataSet = splitDataSet(dataSet, i, value)
            prob = len(subDataSet) / float(len(dataSet))
            newEntropy += prob * calcShannonEnt(subDataSet)
        infoGain = baseEntropy - newEntropy  # reduction in entropy
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeature = i
    return bestFeature

[IN]: chooseBestFeatureToSplit(test)

[OUT]: 0
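For reference, the quantity that chooseBestFeatureToSplit maximizes can be written out and checked by hand for `test`. The notation here is introduced only for illustration: $D$ is the dataset, $A$ a feature, and $D_v$ the subset of $D$ where $A = v$; $H$ is the entropy defined in 1.1.

$$
\mathrm{Gain}(D, A) = H(D) - \sum_{v \in \mathrm{values}(A)} \frac{|D_v|}{|D|}\, H(D_v)
$$

$$
\begin{aligned}
H(D) &= -\tfrac{2}{5}\log_2\tfrac{2}{5} - \tfrac{3}{5}\log_2\tfrac{3}{5} \approx 0.971 \\
\mathrm{Gain}(D, \text{feature } 0) &\approx 0.971 - \left(\tfrac{3}{5}\cdot 0.918 + \tfrac{2}{5}\cdot 0\right) \approx 0.420 \\
\mathrm{Gain}(D, \text{feature } 1) &\approx 0.971 - \left(\tfrac{4}{5}\cdot 1 + \tfrac{1}{5}\cdot 0\right) \approx 0.171
\end{aligned}
$$

Feature 0 has the larger gain, which agrees with the output 0 above.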

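1.3 Building the Decision Tree Recursively

- How the tree is built: split the original dataset on the best feature. After the first split, each subset is passed down a branch to the next node, where it is split again. The recursion stops when either every feature available for splitting has been used, or all instances in a branch share the same class. At that point every branch ends in a leaf, and any sample that reaches a leaf is assigned that leaf's class.

- If all features have been used but the class labels in a subset are still not unique, we must decide what that leaf should predict; the usual choice is a majority vote.

- Majority vote function: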

'''Majority vote'''
from collections import Counter

def majorityCnt(classList):
    # most_common(1) returns [(label, count)] for the most frequent label
    class_count = Counter(classList).most_common(1)
    return class_count[0][0]
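When two classes are tied, `Counter.most_common` lists elements with equal counts in the order they were first encountered, so majorityCnt effectively breaks ties in favor of the class that appears first in classList. A quick check using a standalone copy of the function under the hypothetical name majority_vote:

```python
from collections import Counter

def majority_vote(classList):
    """Most common class label; on a tie, the label seen first wins."""
    return Counter(classList).most_common(1)[0][0]

print(majority_vote(['no', 'yes', 'yes']))  # yes
print(majority_vote(['no', 'yes']))         # no  (tie: 'no' appears first)
print(majority_vote(['yes', 'no']))         # yes (tie: 'yes' appears first)
```

If a different tie-breaking rule matters for your data (for example, preferring a "safe" default class), it has to be added explicitly.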

- Build the tree:

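'''Build the tree'''

def createTree(dataSet, labels):
    labels = labels[:]  # work on a copy so the caller's label list is not modified
    classList = [example[-1] for example in dataSet]
    # Stop 1: every sample has the same class -> leaf
    if classList.count(classList[0]) == len(classList):
        return classList[0]
    # Stop 2: no features left to split on -> majority vote
    if len(dataSet[0]) == 1:
        return majorityCnt(classList)
    bestFeat = chooseBestFeatureToSplit(dataSet)
    if bestFeat == -1:
        # No feature gives a positive information gain -> majority vote
        return majorityCnt(classList)
    bestFeatLabel = labels[bestFeat]
    myTree = {bestFeatLabel: {}}
    del labels[bestFeat]
    featValues = [example[bestFeat] for example in dataSet]
    uniqueVals = set(featValues)
    # One branch per value of the chosen feature, built recursively
    for value in uniqueVals:
        myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value), labels)
    return myTree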

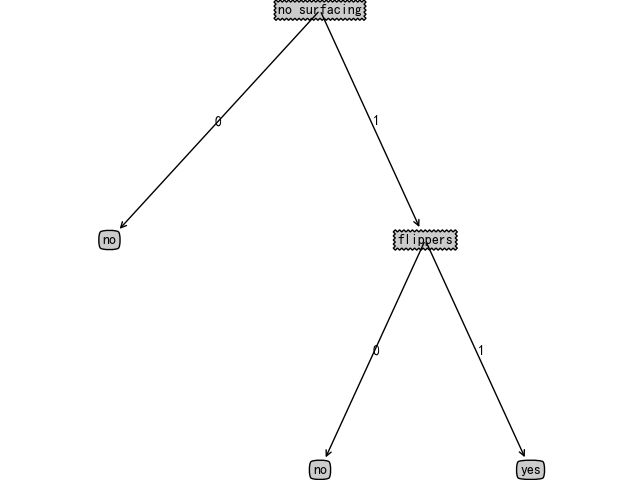

[IN]: labels = ['no surfacing', 'flippers']

[IN]: createTree(test, labels)

[OUT]: {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
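The whole recursive procedure fits in a short self-contained sketch. It is a re-implementation for illustration only (entropy, partition and build_tree are hypothetical names, not from the original): it follows the same two stopping rules and the same information-gain criterion, never modifies the `labels` list it receives, and returns the same nested-dict shape, although the branch keys may print in a different order.

```python
from collections import Counter
from math import log2

def entropy(rows):
    """Shannon entropy of the class labels stored in the last column."""
    n = len(rows)
    return sum(-(c / n) * log2(c / n) for c in Counter(r[-1] for r in rows).values())

def partition(rows, i):
    """Map each value of feature i to the rows having it, with column i removed."""
    groups = {}
    for r in rows:
        groups.setdefault(r[i], []).append(r[:i] + r[i + 1:])
    return groups

def build_tree(rows, labels):
    """ID3 sketch returning a nested dict {feature: {value: subtree_or_label}}."""
    classes = [r[-1] for r in rows]
    if len(set(classes)) == 1:          # stop: all samples share one class
        return classes[0]
    if len(rows[0]) == 1:               # stop: no features left -> majority vote
        return Counter(classes).most_common(1)[0][0]
    base = entropy(rows)
    best, best_gain = 0, -1.0
    for i in range(len(rows[0]) - 1):
        groups = partition(rows, i)
        remaining = sum(len(g) / len(rows) * entropy(g) for g in groups.values())
        if base - remaining > best_gain:  # information gain of feature i
            best, best_gain = i, base - remaining
    rest = labels[:best] + labels[best + 1:]  # new list; `labels` is not modified
    return {labels[best]: {v: build_tree(sub, rest)
                           for v, sub in partition(rows, best).items()}}

test = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
labels = ['no surfacing', 'flippers']
print(build_tree(test, labels))
# {'no surfacing': {1: {'flippers': {1: 'yes', 0: 'no'}}, 0: 'no'}}
print(labels)  # ['no surfacing', 'flippers']
```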

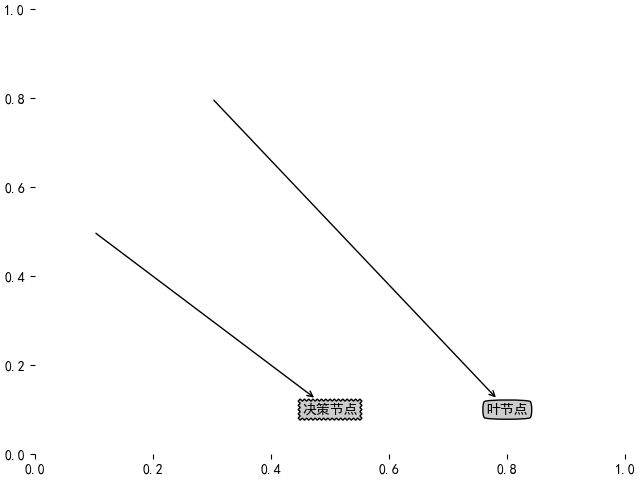

2. Plotting Trees with Matplotlib Annotations

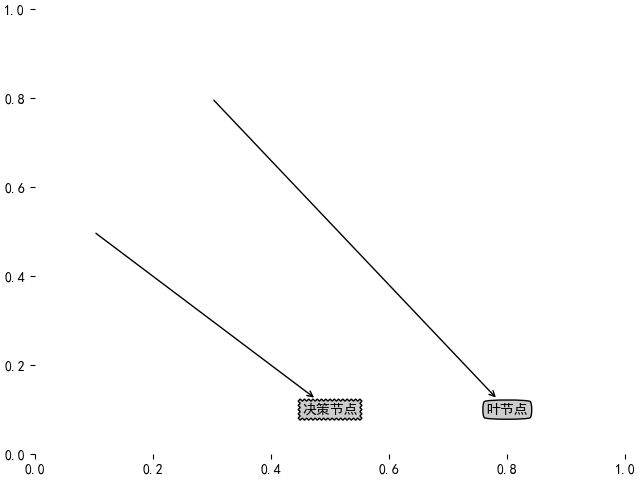

- Plot tree nodes with text annotations:

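'''Plot tree nodes with text annotations'''
import matplotlib.pyplot as plt

plt.rcParams['figure.constrained_layout.use'] = True
plt.rcParams['font.sans-serif'] = ['SimHei']  # CJK font; only needed if node text contains Chinese characters

decisionNode = dict(boxstyle='sawtooth', fc='0.8')  # box style for decision (internal) nodes
leafNode = dict(boxstyle='round4', fc='0.8')        # box style for leaf nodes
arrow_args = dict(arrowstyle='<-')                  # arrow style for parent-to-child edges

def plotNode(nodeTxt, centerPt, parentPt, nodeType):
    # Draw nodeTxt in a box at centerPt, with an arrow from parentPt (axes-fraction coordinates)
    createPlot.ax1.annotate(nodeTxt, xy=parentPt, xycoords='axes fraction',
                            xytext=centerPt, textcoords='axes fraction',
                            va='center', ha='center', bbox=nodeType, arrowprops=arrow_args)

def createPlot():
    # Demo version: draws one decision node and one leaf node
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    createPlot.ax1 = plt.subplot(111, frameon=False)  # stored as a function attribute so plotNode can reach it
    plotNode('decision node', (0.5, 0.1), (0.1, 0.5), decisionNode)
    plotNode('leaf node', (0.8, 0.1), (0.3, 0.8), leafNode)
    plt.show()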

- Get the number of leaf nodes and the depth of the tree:

'''Get the number of leaf nodes and the depth of the tree'''

def getNumLeafs(myTree):
    numLeafs = 0
    firstStr = list(myTree.keys())[0]  # feature tested at this node
    secondDict = myTree[firstStr]      # branches: feature value -> subtree or class label
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            numLeafs += getNumLeafs(secondDict[key])  # subtree: count its leaves
        else:
            numLeafs += 1                             # class label: one leaf
    return numLeafs

def getTreeDepth(myTree):
    maxDepth = 0
    firstStr = list(myTree.keys())[0]
    secondDict = myTree[firstStr]
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            thisDepth = 1 + getTreeDepth(secondDict[key])
        else:
            thisDepth = 1
        if thisDepth > maxDepth:
            maxDepth = thisDepth
    return maxDepth
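The same two counts can be written more compactly by treating a class label as a tree with one leaf and depth 0. A sketch under that convention (num_leafs and tree_depth are hypothetical names); for any tree that is a dict, they return the same values as getNumLeafs and getTreeDepth. The two sample trees are the ones used in section 3.1.

```python
def num_leafs(tree):
    """Number of leaves in a nested-dict tree {feature: {value: subtree_or_label}}."""
    if not isinstance(tree, dict):
        return 1                   # a class label is a single leaf
    (branches,) = tree.values()    # each decision node holds exactly one feature key
    return sum(num_leafs(sub) for sub in branches.values())

def tree_depth(tree):
    """Number of decision nodes on the longest root-to-leaf path."""
    if not isinstance(tree, dict):
        return 0                   # a class label adds no decision level
    (branches,) = tree.values()
    return 1 + max(tree_depth(sub) for sub in branches.values())

tree0 = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
tree1 = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
print(num_leafs(tree0), tree_depth(tree0))  # 3 2
print(num_leafs(tree1), tree_depth(tree1))  # 4 3
```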

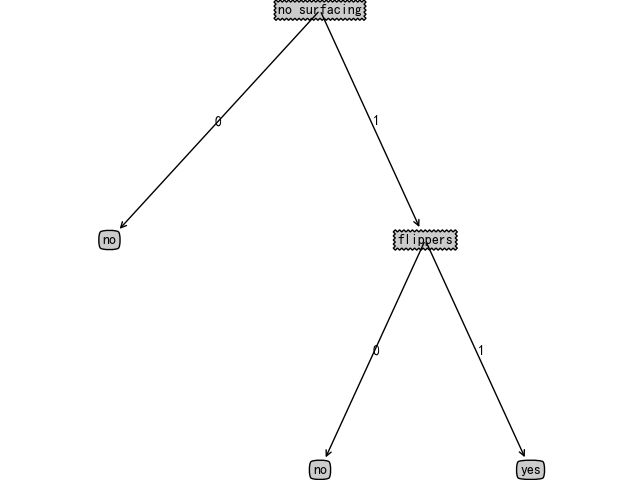

- Plot the decision tree:

'''plotTree function'''

def plotMidText(cntrPt, parentPt, txtString):
    # Write the branch value halfway between the parent and child nodes
    xMid = (parentPt[0] - cntrPt[0]) / 2.0 + cntrPt[0]
    yMid = (parentPt[1] - cntrPt[1]) / 2.0 + cntrPt[1]
    createPlot.ax1.text(xMid, yMid, txtString)

def plotTree(myTree, parentPt, nodeTxt):
    numLeafs = getNumLeafs(myTree)
    firstStr = list(myTree.keys())[0]
    # Center this decision node horizontally over the leaves it owns
    cntrPt = (plotTree.xOff + (1.0 + float(numLeafs)) / 2.0 / plotTree.totalW, plotTree.yOff)
    plotMidText(cntrPt, parentPt, nodeTxt)
    plotNode(firstStr, cntrPt, parentPt, decisionNode)
    secondDict = myTree[firstStr]
    plotTree.yOff = plotTree.yOff - 1.0 / plotTree.totalD  # move down one level
    for key in secondDict.keys():
        if isinstance(secondDict[key], dict):
            plotTree(secondDict[key], cntrPt, str(key))  # subtree: recurse
        else:
            plotTree.xOff = plotTree.xOff + 1.0 / plotTree.totalW  # next free leaf slot
            plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)
            plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))
    plotTree.yOff = plotTree.yOff + 1.0 / plotTree.totalD  # move back up after this subtree

def createPlot(inTree):
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    axprops = dict(xticks=[], yticks=[])
    createPlot.ax1 = plt.subplot(111, frameon=False, **axprops)
    plotTree.totalW = float(getNumLeafs(inTree))   # tree width, in leaves
    plotTree.totalD = float(getTreeDepth(inTree))  # tree depth, in levels
    plotTree.xOff = -0.5 / plotTree.totalW         # x of the last placed leaf (starts half a slot left of 0)
    plotTree.yOff = 1.0
    plotTree(inTree, (0.5, 1.0), '')
    plt.show()
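plotTree keeps its drawing cursor in function attributes (plotTree.xOff, plotTree.yOff): leaves are placed left to right in equal slots of width 1/totalW, each decision node is centered between the first and last leaf beneath it, and y drops by 1/totalD per level. The sketch below reproduces only that coordinate arithmetic, without Matplotlib, so the positions can be inspected directly. layout is a hypothetical helper, and it uses a local dict instead of function attributes.

```python
def layout(tree):
    """Return [(text, x, y)] node positions using plotTree's coordinate rules."""
    def leafs(t):
        if not isinstance(t, dict):
            return 1
        (b,) = t.values()
        return sum(leafs(s) for s in b.values())

    def depth(t):
        if not isinstance(t, dict):
            return 0
        (b,) = t.values()
        return 1 + max(depth(s) for s in b.values())

    W, D = float(leafs(tree)), float(depth(tree))
    cursor = {'x': -0.5 / W, 'y': 1.0}  # plays the role of plotTree.xOff / plotTree.yOff
    out = []

    def place(t):
        feat = next(iter(t))
        # Decision node: centered over the leaves this subtree will occupy
        out.append((feat, cursor['x'] + (1.0 + leafs(t)) / 2.0 / W, cursor['y']))
        cursor['y'] -= 1.0 / D          # children sit one level lower
        for sub in t[feat].values():
            if isinstance(sub, dict):
                place(sub)
            else:
                cursor['x'] += 1.0 / W  # advance to the next leaf slot
                out.append((sub, cursor['x'], cursor['y']))
        cursor['y'] += 1.0 / D          # back up once the subtree is done

    place(tree)
    return out

for text, x, y in layout({'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}):
    print(f'{text:>12}  x={x:.3f}  y={y:.3f}')
# Leaves land at x of about 1/6, 1/2, 5/6; 'flippers' at about 2/3; the root at about 1/2
```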

3. Testing and Storing the Classifier

3.1 Testing the Algorithm: Classifying with a Decision Tree

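'''Classification function using a decision tree'''

def classify(inputTree, featLabels, testVec):
    firstStr = list(inputTree.keys())[0]    # feature tested at this node
    secondDict = inputTree[firstStr]
    featIndex = featLabels.index(firstStr)  # map the feature name to its column index
    key = testVec[featIndex]
    if key not in secondDict:
        # The tree has no branch for this feature value
        raise KeyError(f'value {key!r} of feature {firstStr!r} has no branch in the tree')
    valueOfFeat = secondDict[key]
    if isinstance(valueOfFeat, dict):
        return classify(valueOfFeat, featLabels, testVec)  # internal node: keep descending
    return valueOfFeat                                     # leaf: the class label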

[IN]: listOfTrees = [{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}},

{'no surfacing': {0: 'no', 1: {'flippers': {0: {'head':{0: 'no', 1: 'yes'}}, 1: 'no'}}}}]

[IN]: labels = ['no surfacing', 'flippers']

[IN]: classify(listOfTrees[0], labels, [1,0])

[OUT]: 'no'

[IN]: classify(listOfTrees[0], labels, [1,1])

[OUT]: 'yes'
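Because each step only follows one branch, the recursion in classify can also be written as a loop. A sketch (classify_iter is a hypothetical name) that descends until it reaches a non-dict value, the class label; a feature value with no matching branch surfaces as a KeyError from the dictionary lookup. The three-feature label list used with the second tree is an assumption, since the article does not define one for the 'head' feature.

```python
def classify_iter(tree, featLabels, testVec):
    """Follow the branches of a nested-dict decision tree down to a class label."""
    node = tree
    while isinstance(node, dict):
        feat = next(iter(node))                  # feature tested at this node
        value = testVec[featLabels.index(feat)]  # the sample's value for that feature
        node = node[feat][value]                 # KeyError if there is no branch for value
    return node                                  # leaf reached: the class label

tree0 = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
print(classify_iter(tree0, ['no surfacing', 'flippers'], [1, 0]))  # no
print(classify_iter(tree0, ['no surfacing', 'flippers'], [1, 1]))  # yes

# Hypothetical label list for the second tree, which also tests 'head'
tree1 = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
print(classify_iter(tree1, ['no surfacing', 'flippers', 'head'], [1, 0, 1]))  # yes
```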

3.2 Using the Algorithm: Storing the Decision Tree

'''Store/load the decision tree with the pickle module'''
import pickle

def storeTree(inputTree, filename):
    with open(filename, 'wb') as f:
        pickle.dump(inputTree, f)

def grabTree(filename):
    # Only load files you trust: unpickling can execute arbitrary code
    with open(filename, 'rb') as f:
        return pickle.load(f)

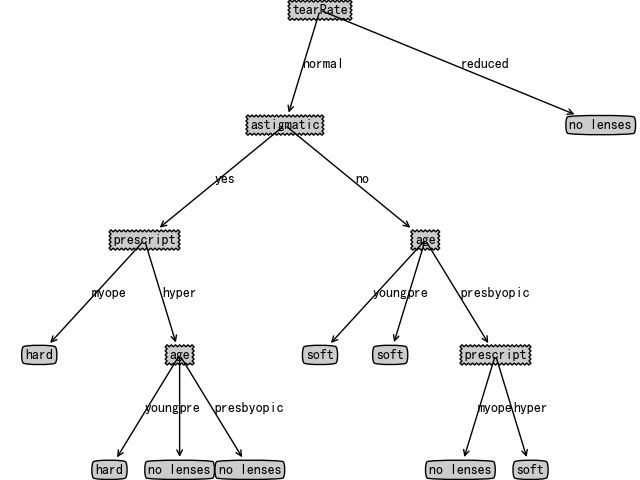

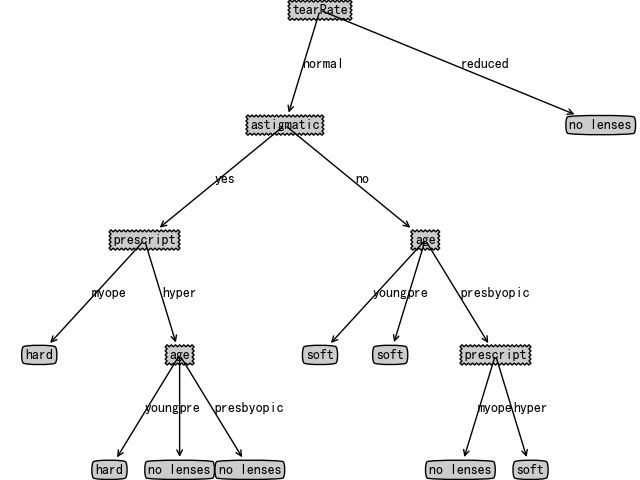
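pickle restores the Python objects exactly, including integer dictionary keys. A text format such as JSON is a tempting alternative, but JSON object keys are always strings, so branch values like 0 and 1 would come back as '0' and '1' and would no longer match the integer feature values that classify compares against. A small sketch of both round trips; it writes to a temporary directory, and the file name tree.pkl is arbitrary:

```python
import json
import os
import pickle
import tempfile

tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, 'tree.pkl')
    with open(path, 'wb') as f:
        pickle.dump(tree, f)
    with open(path, 'rb') as f:
        restored = pickle.load(f)

print(restored == tree)                # True: pickle keeps the int keys
via_json = json.loads(json.dumps(tree))
print(via_json == tree)                # False: JSON turns the keys into strings
print(list(via_json['no surfacing']))  # ['0', '1']
```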

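4. Example: Predicting Contact Lens Type with a Decision Tree

import pandas as pd

# lenses.txt is tab-separated with no header row
data = pd.read_csv('Ch03/lenses.txt', sep='\t', header=None)
featMat = data.values.tolist()  # list of rows, class label in the last column
lenselabels = ['age', 'prescript', 'astigmatic', 'tearRate', 'class']
lenseTree = createTree(featMat, lenselabels)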

[IN]: lenseTree

[OUT]: {'tearRate': {'normal': {'astigmatic': {'yes': {'prescript': {'myope': 'hard',
                                                                     'hyper': {'age': {'young': 'hard',
                                                                                       'pre': 'no lenses',
                                                                                       'presbyopic': 'no lenses'}}}},
                                               'no': {'age': {'young': 'soft',
                                                              'pre': 'soft',
                                                              'presbyopic': {'prescript': {'myope': 'no lenses', 'hyper': 'soft'}}}}}},
                     'reduced': 'no lenses'}}

createPlot(lenseTree)
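With the tree built, a new patient can be classified by walking it from the root. A minimal sketch that copies the tree from the [OUT] above (so it runs without lenses.txt) and uses a hypothetical helper classify_sample, a loop form of classify. The feature list omits the trailing 'class' entry of lenselabels, which names the label column rather than a feature, and the sample patients are made up for illustration.

```python
def classify_sample(tree, featLabels, sample):
    """Walk a nested-dict decision tree until a class label is reached."""
    node = tree
    while isinstance(node, dict):
        feat = next(iter(node))
        node = node[feat][sample[featLabels.index(feat)]]
    return node

# Copied from the printed lenseTree, not recomputed
lenseTree = {
    'tearRate': {
        'reduced': 'no lenses',
        'normal': {
            'astigmatic': {
                'yes': {'prescript': {
                    'myope': 'hard',
                    'hyper': {'age': {'young': 'hard', 'pre': 'no lenses', 'presbyopic': 'no lenses'}},
                }},
                'no': {'age': {
                    'young': 'soft',
                    'pre': 'soft',
                    'presbyopic': {'prescript': {'myope': 'no lenses', 'hyper': 'soft'}},
                }},
            },
        },
    },
}
lenseFeatures = ['age', 'prescript', 'astigmatic', 'tearRate']

print(classify_sample(lenseTree, lenseFeatures, ['young', 'myope', 'no', 'normal']))        # soft
print(classify_sample(lenseTree, lenseFeatures, ['presbyopic', 'myope', 'yes', 'normal']))  # hard
print(classify_sample(lenseTree, lenseFeatures, ['pre', 'hyper', 'yes', 'normal']))         # no lenses
print(classify_sample(lenseTree, lenseFeatures, ['young', 'hyper', 'yes', 'reduced']))      # no lenses
```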