PyTorch官方教程 - Getting Started - 60分钟快速入门 - 神经网络

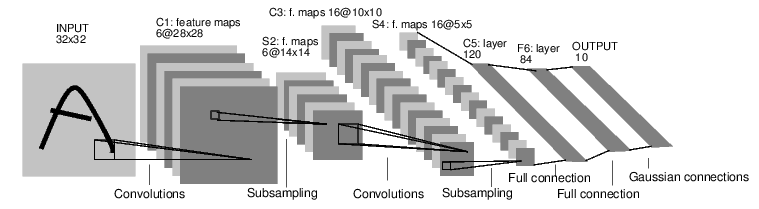

NEURAL NETWORKS

每个nn.Module需包含网络结构和forward(input)方法。

forward(input)方法返回输出

训练神经网络一般步骤:

- 定义神经网络、可学习参数(权值)

- 遍历输入数据集

- 前向传播

- 计算损失(输出与真实值距离)

- 反向传播梯度

- 更新网络权值, w e i g h t = w e i g h t − l e a r n i n g _ r a t e ∗ g r a d i e n t weight = weight - learning\_rate * gradient weight=weight−learning_rate∗gradient

import torch

import torch.nn as nn

import torch.nn.functional as F

定义网络

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

# 1 input image channel, 6 output channels, 3x3 square convolution kernel

self.conv1 = nn.Conv2d(in_channels=1, out_channels=6, kernel_size=3)

self.conv2 = nn.Conv2d(in_channels=6, out_channels=16, kernel_size=3)

# an affine operation: y = Wx + b

self.fc1 = nn.Linear(in_features=16 * 6 * 6, out_features=120) # 6*6 from image dimension

self.fc2 = nn.Linear(in_features=120, out_features=84)

self.fc3 = nn.Linear(in_features=84, out_features=10)

def forward(self, x):

# Max pooling over a (2, 2) window

x = F.max_pool2d(input=F.relu(input=self.conv1(x)), kernel_size=(2, 2))

# If the size is a square you can only specify a single number

x = F.max_pool2d(input=F.relu(input=self.conv2(x)), kernel_size=2)

x = x.view(-1, self.num_flat_features(x))

x = F.relu(input=self.fc1(x))

x = F.relu(input=self.fc2(x))

x = self.fc3(x)

return x

def num_flat_features(self, x):

size = x.size()[1 :] # all dimensions except the batch dimension

num_features = 1

for s in size:

num_features *= s

return num_features

net = Net()

print(net)

Net(

(conv1): Conv2d(1, 6, kernel_size=(3, 3), stride=(1, 1))

(conv2): Conv2d(6, 16, kernel_size=(3, 3), stride=(1, 1))

(fc1): Linear(in_features=576, out_features=120, bias=True)

(fc2): Linear(in_features=120, out_features=84, bias=True)

(fc3): Linear(in_features=84, out_features=10, bias=True)

)

模型的可学习参数由net.parameters()返回

params = list(net.parameters())

print(len(params))

print(params[0].size()) # conv1's .weight

10

torch.Size([6, 1, 3, 3])

随机输入

input = torch.randn(1, 1, 32, 32)

out = net(input)

print(out)

tensor([[-0.0848, -0.0075, 0.0200, 0.0464, 0.0374, 0.0861, 0.0148, 0.0441,

0.0547, -0.0514]], grad_fn=)

所有参数梯度缓存清零,随机梯度反向传播

net.zero_grad()

out.backward(torch.randn(1, 10))

torch.nn仅支持mini-batches,对于单个样本,用input.unsqueeze(0)增加batch维度

- torch.Tensor:一个多维数组、支持autograd运算,如backward(),并保存对张量的梯度。

- nn.Module:神经网络模块,封装参数、协助GPU运算、导出、加载等。

- nn.Parameter:参数张量,作为属性分配给模块时,自动注册为参数。

- autograd.Function:自动求导运算的正、反向定义。

损失函数

损失函数输入:(output, target),计算output与target间的距离。

output = net(input)

target = torch.randn(10) # a dummy target, for example

target - target.view(1, -1) # make it the same shape as output

criterion = nn.MSELoss()

loss = criterion(output, target)

print(loss)

tensor(0.6538, grad_fn=)

print(loss.grad_fn) # MSELoss

print(loss.grad_fn.next_functions[0][0]) # Linear

print(loss.grad_fn.next_functions[0][0].next_functions[0][0]) # ReLU

反向传播(Backprop)

loss.backward()

需要先将梯度清零,否则梯度将会累加。

net.zero_grad() # zeroes the gradient buffers of all parameters

print('conv1.bias.grad before backward')

print(net.conv1.bias.grad)

loss.backward()

print('conv1.bias.grad after backward')

print(net.conv1.bias.grad)

conv1.bias.grad before backward

tensor([0., 0., 0., 0., 0., 0.])

conv1.bias.grad after backward

tensor([-0.0038, -0.0011, -0.0077, -0.0036, -0.0100, -0.0093])

更新权值

随机梯度下降(Stochastic Gradient Descent,SGD)

weight = weight - learning_rate * gradient

learning_rate = 0.01

for f in net.parameters():

f.data.sub_(f.grad.data * learning_rate)

torch.optim

import torch.optim as optim

# create your optimizer

optimizer = optim.SGD(net.parameters(), lr=0.01)

# in your training loop

optimizer.zero_grad() # zero the gradient buffers

output = net(input)

loss = criterion(output, target)

loss.backward()

optimizer.step() # Does the update

D:\ProgramData\Anaconda3\envs\pytorch\lib\site-packages\torch\nn\modules\loss.py:443: UserWarning: Using a target size (torch.Size([10])) that is different to the input size (torch.Size([1, 10])). This will likely lead to incorrect results due to broadcasting. Please ensure they have the same size.

return F.mse_loss(input, target, reduction=self.reduction)