Spark on Kubernetes: A Demo on a Mac

Contents

- 1 Overview

- 2 Start

- 2.1 Deploying a local K8S cluster

- 2.2 Running Spark

- 2.3 Application logs

- 3 Summary

1 Overview

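Honestly: Spark has supported K8S natively since 2.3, but when I first followed the official Spark 2.4 documentation I could not get it to run, and the K8S Dashboard had a pile of problems of its own. Maybe I'm just not very good at this; it was a headache.

Then I read the official guide again, more carefully, and found…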

2 Start

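2.1 Deploying a local K8S cluster

To enjoy running Spark on K8S, you first need a K8S cluster. If you don't have one, no problem: we can install one locally.

I'm on a Mac, with the following configuration.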

ProductName: Mac OS X

ProductVersion: 10.12.6

BuildVersion: 16G1114

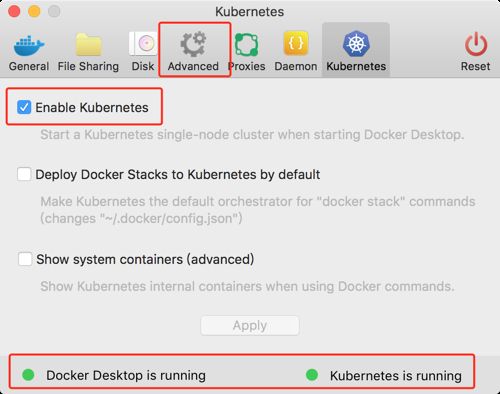

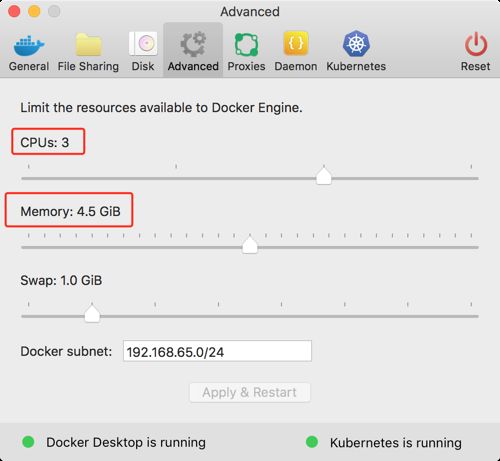

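A closer look at the prerequisites in the official documentation turns up a few conditions that are easy to miss. For example, Minikube's default resources are not enough to run a Spark app. Locally I use the K8S cluster that ships with Docker Edge; if you want to try it, download it and enable Kubernetes in the settings. Note that you need to raise the resource limits a bit, otherwise Spark will just sit waiting for resources after it starts.

The other thing to watch is the example jar. The official site has this sentence: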
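To see what the local node actually offers before submitting, a sketch like the one below may help. For Minikube, the Spark documentation recommends 3 CPUs and 4g of memory to start a simple application with a single executor. The helper names `node_allocatable` and `ki_to_mib` are made up for this example, and the conversion assumes the node reports memory in Ki, which may not hold on every cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration; not part of Spark or kubectl.

# Print "name cpu memory" for every node, using the allocatable
# resources reported by the API server.
node_allocatable() {
  kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.status.allocatable.cpu}{" "}{.status.allocatable.memory}{"\n"}{end}'
}

# Convert a memory quantity in Ki (e.g. "4028172Ki") to whole MiB.
# Assumption: the quantity ends in "Ki"; other suffixes are not handled.
ki_to_mib() {
  local quantity="$1"
  echo $(( ${quantity%Ki} / 1024 ))
}

# Usage (needs a running cluster):
#   node_allocatable
#   ki_to_mib 4028172Ki
```

If the numbers come out well below the recommendation, raising the CPU and memory sliders in the Docker settings is probably the first thing to try.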

This URI is the location of the example jar that is already in the Docker image.

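Note: the jar path in this configuration points to a jar file that is already packaged inside the Docker image, not a file on your local machine!

2.2 Running Spark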
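One way to convince yourself of this is to look inside the image. The sketch below assumes an image named spark:2.3.0 exists in the local Docker daemon (the tag used in section 2.2); `jar_path_in_image` and `jar_exists_in_image` are hypothetical helper names. The entrypoint is overridden with `ls` on the assumption that the image's default entrypoint expects a Spark command rather than an arbitrary one.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for illustration.

# Strip the local:// scheme to get the path as seen inside the container.
jar_path_in_image() {
  local uri="$1"
  case "$uri" in
    local://*) printf '%s\n' "${uri#local://}" ;;
    *) echo "not a local:// URI: $uri" >&2; return 1 ;;
  esac
}

# List the jar inside the image; fails if the path does not exist there.
# Requires Docker and the image to be present locally.
jar_exists_in_image() {
  local image="$1" uri="$2" path
  path="$(jar_path_in_image "$uri")" || return 1
  docker run --rm --entrypoint ls "$image" "$path"
}

# Usage:
#   jar_exists_in_image spark:2.3.0 \
#     local:///opt/spark/examples/jars/spark-examples_2.12-2.4.2.jar
```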

➜ spark-2.4.2-bin-hadoop2.7 bin/spark-submit \

--master k8s://http://localhost:8001 \

--deploy-mode cluster \

--name spark-pi \

--class org.apache.spark.examples.SparkPi \

--conf spark.executor.instances=1 \

--conf spark.kubernetes.container.image=spark:2.3.0 \

local:///opt/spark/examples/jars/spark-examples_2.12-2.4.2.jar
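The master URL above points at localhost:8001, which is the default address of `kubectl proxy`. The post does not say how that endpoint was exposed, so the sketch below assumes a local proxy; `start_proxy` is a hypothetical wrapper name.

```shell
#!/usr/bin/env bash
# Assumption: the API server is reached through `kubectl proxy`
# on its default port.
PROXY_PORT=8001
MASTER_URL="k8s://http://localhost:${PROXY_PORT}"

# Run this in a separate terminal and leave it running while submitting.
start_proxy() {
  kubectl proxy --port="${PROXY_PORT}"
}

# Print the value to pass to spark-submit via --master.
echo "${MASTER_URL}"
```

Going through the proxy over plain HTTP would also be consistent with the "uses HTTP instead of HTTPS" warning that shows up in the submit log.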

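2.3 Application logs

First, the log shown in the terminal. This part is printed by LoggingPodStatusWatcherImpl, a class whose job is to watch the status of the Spark app's Pod on K8S.

Search for the keyword phase and you can follow the Pod's state transitions: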

Pending -> Running -> Succeeded

19/04/29 14:40:14 WARN Utils: Kubernetes master URL uses HTTP instead of HTTPS.

log4j:WARN No appenders could be found for logger (io.fabric8.kubernetes.client.Config).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

19/04/29 14:40:21 INFO LoggingPodStatusWatcherImpl: State changed, new state:

pod name: spark-pi-1556520019644-driver

namespace: default

labels: spark-app-selector -> spark-c55df736c1134dd1ac14b67ad6f300b3, spark-role -> driver

pod uid: a9395174-6a49-11e9-8af3-025000000001

creation time: 2019-04-29T06:40:21Z

service account name: default

volumes: spark-local-dir-1, spark-conf-volume, default-token-97296

node name: N/A

start time: N/A

container images: N/A

phase: Pending

status: []

19/04/29 14:40:21 INFO LoggingPodStatusWatcherImpl: State changed, new state:

pod name: spark-pi-1556520019644-driver

namespace: default

labels: spark-app-selector -> spark-c55df736c1134dd1ac14b67ad6f300b3, spark-role -> driver

pod uid: a9395174-6a49-11e9-8af3-025000000001

creation time: 2019-04-29T06:40:21Z

service account name: default

volumes: spark-local-dir-1, spark-conf-volume, default-token-97296

node name: docker-desktop

start time: N/A

container images: N/A

phase: Pending

status: []

19/04/29 14:40:21 INFO LoggingPodStatusWatcherImpl: State changed, new state:

pod name: spark-pi-1556520019644-driver

namespace: default

labels: spark-app-selector -> spark-c55df736c1134dd1ac14b67ad6f300b3, spark-role -> driver

pod uid: a9395174-6a49-11e9-8af3-025000000001

creation time: 2019-04-29T06:40:21Z

service account name: default

volumes: spark-local-dir-1, spark-conf-volume, default-token-97296

node name: docker-desktop

start time: 2019-04-29T06:40:21Z

container images: spark:2.3.0

phase: Pending

status: [ContainerStatus(containerID=null, image=spark:2.3.0, imageID=, lastState=ContainerState(running=null, terminated=null, waiting=null, additionalProperties={}), name=spark-kubernetes-driver, ready=false, restartCount=0, state=ContainerState(running=null, terminated=null, waiting=ContainerStateWaiting(message=null, reason=ContainerCreating, additionalProperties={}), additionalProperties={}), additionalProperties={})]

19/04/29 14:40:22 INFO Client: Waiting for application spark-pi to finish...

19/04/29 14:40:24 INFO LoggingPodStatusWatcherImpl: State changed, new state:

pod name: spark-pi-1556520019644-driver

namespace: default

labels: spark-app-selector -> spark-c55df736c1134dd1ac14b67ad6f300b3, spark-role -> driver

pod uid: a9395174-6a49-11e9-8af3-025000000001

creation time: 2019-04-29T06:40:21Z

service account name: default

volumes: spark-local-dir-1, spark-conf-volume, default-token-97296

node name: docker-desktop

start time: 2019-04-29T06:40:21Z

container images: spark:2.3.0

phase: Running

status: [ContainerStatus(containerID=docker://93c8f1b06820a2f95c4aa13b498edfc35bd63bc0da83ce4ef6f63dfe6c13eef3, image=spark:2.3.0, imageID=docker://sha256:1352ff0f5275feb3b49248ed4b167659d8d752a143fe40f271c1430829336cbd, lastState=ContainerState(running=null, terminated=null, waiting=null, additionalProperties={}), name=spark-kubernetes-driver, ready=true, restartCount=0, state=ContainerState(running=ContainerStateRunning(startedAt=2019-04-29T06:40:24Z, additionalProperties={}), terminated=null, waiting=null, additionalProperties={}), additionalProperties={})]

19/04/29 14:40:46 INFO LoggingPodStatusWatcherImpl: State changed, new state:

pod name: spark-pi-1556520019644-driver

namespace: default

labels: spark-app-selector -> spark-c55df736c1134dd1ac14b67ad6f300b3, spark-role -> driver

pod uid: a9395174-6a49-11e9-8af3-025000000001

creation time: 2019-04-29T06:40:21Z

service account name: default

volumes: spark-local-dir-1, spark-conf-volume, default-token-97296

node name: docker-desktop

start time: 2019-04-29T06:40:21Z

container images: spark:2.3.0

phase: Succeeded

status: [ContainerStatus(containerID=docker://93c8f1b06820a2f95c4aa13b498edfc35bd63bc0da83ce4ef6f63dfe6c13eef3, image=spark:2.3.0, imageID=docker://sha256:1352ff0f5275feb3b49248ed4b167659d8d752a143fe40f271c1430829336cbd, lastState=ContainerState(running=null, terminated=null, waiting=null, additionalProperties={}), name=spark-kubernetes-driver, ready=false, restartCount=0, state=ContainerState(running=null, terminated=ContainerStateTerminated(containerID=docker://93c8f1b06820a2f95c4aa13b498edfc35bd63bc0da83ce4ef6f63dfe6c13eef3, exitCode=0, finishedAt=2019-04-29T06:40:45Z, message=null, reason=Completed, signal=null, startedAt=2019-04-29T06:40:24Z, additionalProperties={}), waiting=null, additionalProperties={}), additionalProperties={})]

19/04/29 14:40:46 INFO LoggingPodStatusWatcherImpl: Container final statuses:

Container name: spark-kubernetes-driver

Container image: spark:2.3.0

Container state: Terminated

Exit code: 0

19/04/29 14:40:46 INFO Client: Application spark-pi finished.

19/04/29 14:40:46 INFO ShutdownHookManager: Shutdown hook called

19/04/29 14:40:46 INFO ShutdownHookManager: Deleting directory /private/var/folders/n8/xsvrzm1964xgwh1mn8hqdglr0000gn/T/spark-0bacf5b1-88d9-41bf-bdcb-23d3e6d4a738

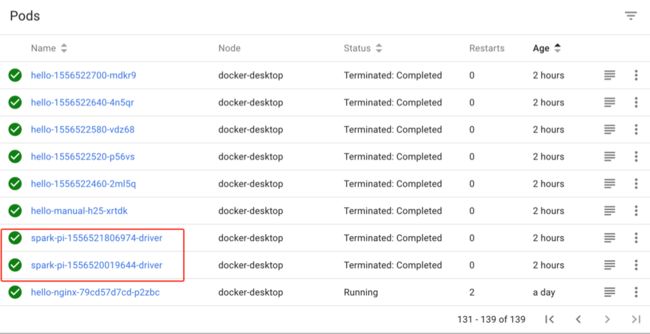
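The phase transitions in the log above can be extracted mechanically. This is a minimal sketch that assumes the spark-submit output was saved to a file (submit.log is a made-up name); `pod_phases` is a hypothetical helper that collapses repeated phases into one line.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read spark-submit output on stdin and print the
# distinct consecutive Pod phases, joined by " -> ".
pod_phases() {
  awk '$1 == "phase:" && $2 != prev {
         out = out (out == "" ? "" : " -> ") $2
         prev = $2
       }
       END { print out }'
}

# Usage:
#   bin/spark-submit ... 2>&1 | tee submit.log
#   pod_phases < submit.log
```

For the log shown above this should print the same Pending, Running, Succeeded sequence, since the three consecutive Pending updates are collapsed into one.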

Second, you can find the Driver and Executor Pod logs in the K8S Dashboard, or fetch them directly with kubectl logs. I won't go into these logs here; they are standard Spark output.

➜ spark-2.4.2-bin-hadoop2.7 kubectl logs spark-pi-1556521806974-driver

++ id -u

+ myuid=0

++ id -g

+ mygid=0

+ set +e

++ getent passwd 0

+ uidentry=root:x:0:0:root:/root:/bin/ash

+ set -e

+ '[' -z root:x:0:0:root:/root:/bin/ash ']'

+ SPARK_K8S_CMD=driver

+ case "$SPARK_K8S_CMD" in

+ shift 1

+ SPARK_CLASSPATH=':/opt/spark/jars/*'

+ env

+ sort -t_ -k4 -n

+ grep SPARK_JAVA_OPT_

+ sed 's/[^=]*=\(.*\)/\1/g'

+ readarray -t SPARK_EXECUTOR_JAVA_OPTS

+ '[' -n '' ']'

+ '[' -n '' ']'

+ PYSPARK_ARGS=

+ '[' -n '' ']'

+ R_ARGS=

+ '[' -n '' ']'

+ '[' '' == 2 ']'

+ '[' '' == 3 ']'

+ case "$SPARK_K8S_CMD" in

+ CMD=("$SPARK_HOME/bin/spark-submit" --conf "spark.driver.bindAddress=$SPARK_DRIVER_BIND_ADDRESS" --deploy-mode client "$@")

+ exec /sbin/tini -s -- /opt/spark/bin/spark-submit --conf spark.driver.bindAddress=10.1.0.23 --deploy-mode client --properties-file /opt/spark/conf/spark.properties --class org.apache.spark.examples.SparkPi spark-internal

19/04/29 07:10:17 WARN Utils: Kubernetes master URL uses HTTP instead of HTTPS.

19/04/29 07:10:18 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

19/04/29 07:10:19 INFO SparkContext: Running Spark version 2.4.2

19/04/29 07:10:19 INFO SparkContext: Submitted application: Spark Pi

19/04/29 07:10:19 INFO SecurityManager: Changing view acls to: root

19/04/29 07:10:19 INFO SecurityManager: Changing modify acls to: root

19/04/29 07:10:19 INFO SecurityManager: Changing view acls groups to:

19/04/29 07:10:19 INFO SecurityManager: Changing modify acls groups to:

19/04/29 07:10:19 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(root); groups with view permissions: Set(); users with modify permissions: Set(root); groups with modify permissions: Set()

19/04/29 07:10:20 INFO Utils: Successfully started service 'sparkDriver' on port 7078.

...

...

...
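The Pod name passed to kubectl logs does not have to be copied by hand: it appears in the submit output as "pod name", and the driver Pod carries the label spark-role=driver, as the log in this section shows. The helpers below are a sketch with made-up names (`driver_pod_name`, `driver_pods`), again assuming the submit output was saved to a file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first "pod name" found in spark-submit
# output read from stdin.
driver_pod_name() {
  awk '$1 == "pod" && $2 == "name:" { print $3; exit }'
}

# Alternative: ask the cluster for driver Pods by label
# (needs a running cluster).
driver_pods() {
  kubectl get pods -l spark-role=driver -o name
}

# Usage:
#   kubectl logs "$(driver_pod_name < submit.log)"
```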

3 Summary

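Spark has supported K8S as a cluster manager since 2.3. The implementation lives under resource-managers/kubernetes in the Spark source tree, and it mainly uses a Java client for K8S to call the K8S API. I'll dig into the detailed design when I have time.

As for why you would suddenly move to K8S when things run fine on Yarn, I'm drawing on the following article, which is worth a read.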

https://medium.com/@rachit1arora/why-run-spark-on-kubernetes-51c0ccb39c9b

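- Data processing pipelines are increasingly containerized. If Spark is containerized too, running it on K8S makes sense, since K8S is very mature at scheduling Docker containers.

- Running on K8S removes the notion of physical machines; everything moves to the cloud, which helps both resource utilization and cost accounting.

- K8S Namespaces and Quotas make it possible to share a cluster among multiple tenants.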