Scrapy: A First Try at the Crawler Framework

Hoping we can all improve together.

If you repost this article, please credit the original address:

https://blog.csdn.net/weixin_39701039/article/details/105230628

The project is on GitHub; clone it if you are interested:

https://github.com/steptolisa/scrapy_douban1

git clone https://github.com/steptolisa/scrapy_douban1

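This post uses Douban's movie ratings (the Top 250 list) as the example. The data is scraped and stored in a MongoDB database.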

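1. Prepare the environment: install Scrapy

Online installation: pip install scrapy

Offline installation: first download the .whl or .tar.gz file, then:

.whl: pip install xxx.whl (use an absolute path, or a relative path when running from the directory that contains the .whl file)

.tar.gz: extract the archive, change into the directory that contains setup.py, and run python setup.py install

Or download it as shown below: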

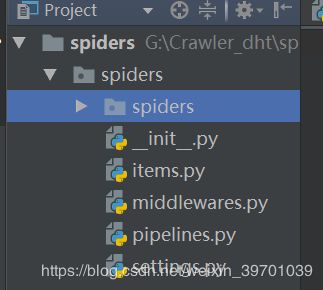

2. Create the crawler project

scrapy startproject spiders (spiders is the project name)

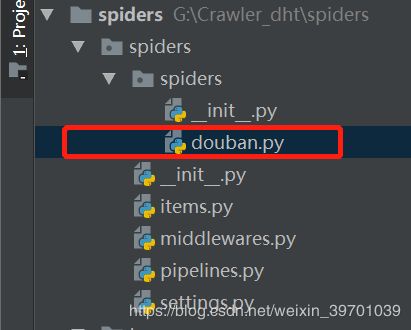

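3. Generate the spider script

Use the genspider command to create a spider script inside the project.

Change into the project's spiders directory, i.e. cd spiders/spiders

scrapy genspider douban movie.douban.com

The second argument is the domain to crawl. genspider copies it into allowed_domains, so pass movie.douban.com rather than www.movie.douban.com.

This creates a new file, douban.py, in spiders/spiders/spiders. It is the entry point both for requesting pages and for parsing the downloaded pages.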

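Scrapy project file structure

- items.py defines the data model, similar to an entity class.

- middlewares.py holds your custom middlewares.

- pipelines.py processes the data returned by the spider (item pipelines).

- settings.py configures the whole crawler, e.g. constants and other options (project settings).

- The spiders directory holds the spider classes that inherit from Scrapy's spider classes (this is where the page-parsing code goes).

- scrapy.cfg holds the basic Scrapy configuration.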

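4. Douban code example

https://movie.douban.com/top250?start=25&filter=

Paging to the next page reveals the URL pattern. Only the start parameter changes, in steps of 25: start=0 for page 1, start=25 for page 2, and so on.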
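The list has 250 movies at 25 per page, so start runs from 0 to 225 and there are ten pages. Below is a minimal stdlib sketch of that pattern, assuming those counts. page_urls is a hypothetical helper; it is not part of Scrapy or of the project.

```python
# Build the Top 250 page URLs from the observed pattern
# (assumption: 25 movies per page, 250 in total, so start = 0, 25, ..., 225).
BASE_URL = 'https://movie.douban.com/top250?start='
SUFFIX = '&filter='


def page_urls(total=250, per_page=25):
    """Return one URL per page for the given total and page size."""
    return [BASE_URL + str(start) + SUFFIX for start in range(0, total, per_page)]


if __name__ == '__main__':
    for url in page_urls():
        print(url)
```

A list built this way could also be assigned to start_urls directly. Scrapy would then schedule all ten pages, and the spider would not need a page counter.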

items.py

```python
# -*- coding: utf-8 -*-
import scrapy


class SpidersItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()

    # Movie title
    title = scrapy.Field()
    # Movie rating
    score = scrapy.Field()
    # Movie details (director, cast, year, country, genre)
    content = scrapy.Field()
    # Short introduction (the one-line quote on the list page)
    info = scrapy.Field()
```

spiders/douban.py

```python
# -*- coding: utf-8 -*-
import scrapy

from spiders.items import SpidersItem


class DoubanSpider(scrapy.Spider):
    name = 'douban'
    allowed_domains = ['movie.douban.com']
    start = 0
    url = 'https://movie.douban.com/top250?start='
    end = '&filter='
    start_urls = [url + str(start) + end]

    def parse(self, response):
        movies = response.xpath("//div[@class='info']")
        for each in movies:
            # Create a fresh item for every movie instead of reusing one object
            item = SpidersItem()
            title = each.xpath('div[@class="hd"]/a/span[@class="title"]/text()').get()
            content = each.xpath('div[@class="bd"]/p/text()').getall()
            score = each.xpath('div[@class="bd"]/div[@class="star"]/span[@class="rating_num"]/text()').get()
            info = each.xpath('div[@class="bd"]/p[@class="quote"]/span/text()').get()

            item['title'] = title
            # Strip whitespace and drop empty text nodes before joining
            item['content'] = ';'.join(c.strip() for c in content if c.strip())
            item['score'] = score
            item['info'] = info
            yield item

        # The last page is start=225 (250 movies, 25 per page), so stop after it
        if self.start < 225:
            self.start += 25
            yield scrapy.Request(self.url + str(self.start) + self.end, callback=self.parse)
```
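The XPath expressions in parse assume a particular nesting of div.info, div.hd, div.bd and the elements inside them. Here is a minimal stdlib sketch that shows how those paths map onto markup without touching the site. It makes three assumptions:

- The input is a hand-written, simplified snippet for one movie, not Douban's real HTML.
- It uses xml.etree.ElementTree, which supports only a subset of XPath. ElementTree has no text(), so the helper direct_text rebuilds it from .text and .tail.
- direct_text and parse_movies are hypothetical names.

In the real spider, response.xpath works on the actual HTML.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup for a single movie. It only mimics the nesting
# the spider's XPath expressions expect; it is not copied from Douban.
SAMPLE = """
<ol>
  <li>
    <div class="info">
      <div class="hd">
        <a href="#"><span class="title">Example Movie</span></a>
      </div>
      <div class="bd">
        <p>
          Director: Someone<br/>
          2000 / Country / Drama
        </p>
        <div class="star"><span class="rating_num">9.0</span></div>
        <p class="quote"><span>An example one-line quote.</span></p>
      </div>
    </div>
  </li>
</ol>
"""


def direct_text(elem):
    """Mimic XPath text(): the element's own text nodes, not its descendants'."""
    parts = [elem.text] if elem.text else []
    parts += [child.tail for child in elem if child.tail]
    return parts


def parse_movies(markup):
    """Apply the spider's paths using ElementTree's limited XPath subset."""
    root = ET.fromstring(markup.strip())
    movies = []
    for each in root.iterfind(".//div[@class='info']"):
        title = each.find("div[@class='hd']/a/span[@class='title']")
        score = each.find("div[@class='bd']/div[@class='star']/span[@class='rating_num']")
        quote = each.find("div[@class='bd']/p[@class='quote']/span")
        # Like div[@class="bd"]/p/text(): own text nodes of every <p> under div.bd
        content = []
        for p in each.findall("div[@class='bd']/p"):
            content += [t.strip() for t in direct_text(p) if t.strip()]
        movies.append({
            'title': title.text if title is not None else None,
            'score': score.text if score is not None else None,
            'content': ';'.join(content),
            'info': quote.text if quote is not None else None,
        })
    return movies


if __name__ == '__main__':
    for movie in parse_movies(SAMPLE):
        print(movie)
```

For this sample, the content field comes out as 'Director: Someone;2000 / Country / Drama'. That is the text nodes of every p under div.bd, with the whitespace-only ones dropped, joined with ';'.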

pipelines.py

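```python
# -*- coding: utf-8 -*-
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

import pymongo


class SpidersPipeline:

    def __init__(self, host, port, dbname, docname):
        self.host = host
        self.port = port
        self.dbname = dbname
        self.docname = docname

    @classmethod
    def from_crawler(cls, crawler):
        # Read the MongoDB host, port, database name and collection name from settings.py
        settings = crawler.settings
        return cls(
            host=settings.get('MONGODB_HOST', '127.0.0.1'),
            port=settings.getint('MONGODB_PORT', 27017),
            dbname=settings.get('MONGODB_DBNAME'),
            docname=settings.get('MONGODB_DOCNAME'),
        )

    def open_spider(self, spider):
        # pymongo.MongoClient(host, port) creates the MongoDB connection
        self.client = pymongo.MongoClient(host=self.host, port=self.port)
        # Database, then the collection ("table") that stores the movies
        self.post = self.client[self.dbname][self.docname]

    def close_spider(self, spider):
        self.client.close()

    def process_item(self, item, spider):
        # insert() was removed in pymongo 4; insert_one() works in pymongo 3 and 4
        self.post.insert_one(dict(item))
        return item
```

settings.py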

```python
# -*- coding: utf-8 -*-
BOT_NAME = 'spiders'

SPIDER_MODULES = ['spiders.spiders']
NEWSPIDER_MODULE = 'spiders.spiders'

# Send a browser-like User-Agent instead of Scrapy's default one
USER_AGENT = 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/48.0.2564.116 Safari/537.36'

# MongoDB host: the loopback address 127.0.0.1
MONGODB_HOST = '127.0.0.1'
# Port; MongoDB's default is 27017
MONGODB_PORT = 27017
# Database name
MONGODB_DBNAME = 'douban'
# Name of the collection ("table") that stores this crawl's data
MONGODB_DOCNAME = 'DouBanMovies'

ITEM_PIPELINES = {
    'spiders.pipelines.SpidersPipeline': 300,
}
```
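The number 300 in ITEM_PIPELINES is an order value. When several pipelines are enabled, items pass through them from the lowest value to the highest, and the values are customarily kept in the 0 to 1000 range. Scrapy also calls a pipeline's optional open_spider hook once when the spider opens and close_spider once when it closes, with process_item in between for every item.

The stdlib-only sketch below imitates that flow so it can run without Scrapy or MongoDB. It is not Scrapy's engine code:

- run_pipelines, CleanScorePipeline and RecordingPipeline are hypothetical names.
- Classes are used as dict keys only for brevity. The setting above uses dotted-path strings.
- The real engine also handles things like DropItem, which the sketch ignores.

```python
# Stdlib-only imitation of how Scrapy drives item pipelines (not Scrapy's code).


class CleanScorePipeline:
    """Hypothetical pipeline: turn the score string into a float."""

    def process_item(self, item, spider):
        if item.get('score'):
            item['score'] = float(item['score'])
        return item


class RecordingPipeline:
    """Hypothetical stand-in for SpidersPipeline: keeps items in a list
    instead of a MongoDB collection and records which hooks ran."""

    def __init__(self):
        self.calls = []
        self.stored = []

    def open_spider(self, spider):
        self.calls.append('open_spider')

    def process_item(self, item, spider):
        self.calls.append('process_item')
        self.stored.append(dict(item))
        return item

    def close_spider(self, spider):
        self.calls.append('close_spider')


def run_pipelines(pipeline_order, items, spider=None):
    """Instantiate pipelines in ascending order value, then call the hooks:
    open_spider once, process_item per item, close_spider once."""
    ordered = sorted(pipeline_order.items(), key=lambda pair: pair[1])
    pipelines = [cls() for cls, _ in ordered]
    for pipeline in pipelines:
        if hasattr(pipeline, 'open_spider'):
            pipeline.open_spider(spider)
    for item in items:
        # Each pipeline receives whatever the previous one returned
        for pipeline in pipelines:
            item = pipeline.process_item(item, spider)
    for pipeline in pipelines:
        if hasattr(pipeline, 'close_spider'):
            pipeline.close_spider(spider)
    return pipelines
```

Suppose you call run_pipelines({RecordingPipeline: 300, CleanScorePipeline: 100}, items). The score is converted to a float before the recording pipeline stores it, because 100 runs before 300.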

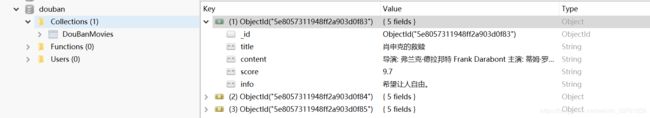

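5. Prepare the MongoDB database

Instructions for downloading and installing MongoDB are easy to find online. I will skip them for now and cover them in a separate post later. Before crawling, make sure the MongoDB server is running on the host and port configured in settings.py (127.0.0.1:27017 here).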

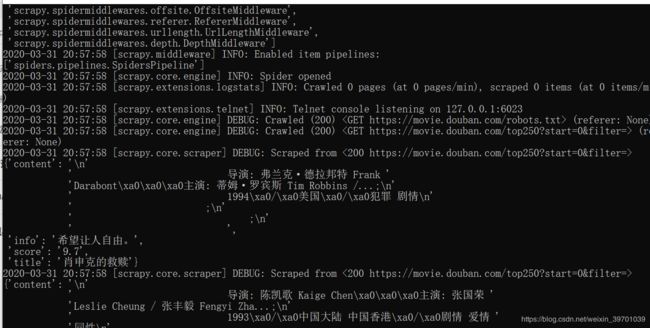

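6. Run the spider

Change into the spiders project directory (the one containing scrapy.cfg) and run:

scrapy crawl douban (douban refers to the name attribute in douban.py; see name = 'douban' in the code above)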

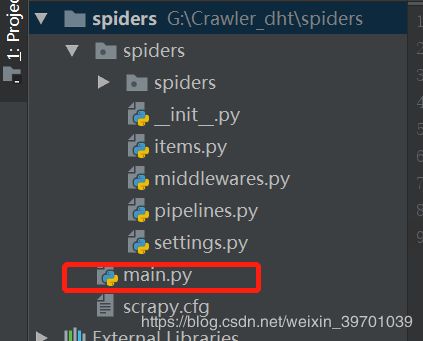

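7. Create main.py for easier debugging

```python
# -*- coding: utf-8 -*-
__author__ = 'dht'
__creattm = ''
__usefor__ = ''

from scrapy import cmdline

# Equivalent to typing "scrapy crawl douban" on the command line
cmdline.execute(['scrapy', 'crawl', 'douban'])
```

Only the last two lines matter. The list passed to cmdline.execute mirrors the command-line arguments ['scrapy', 'crawl', spider name], where the first element plays the role of the program name. Run main.py with the project directory (where scrapy.cfg lives) as the working directory so that Scrapy can find the project settings. You can then set breakpoints in the spider and debug it from your IDE.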

I hope this helps!