Phoenix是一个在hbase上面实现的基于hadoop的OLTP技术,具有低延迟、事务性、可使用sql、提供jdbc接口的特点。 而且phoenix还提供了hbase二级索引的解决方案,丰富了hbase查询的多样性,继承了hbase海量数据快速随机查询的特点。但是在生产环境中,不可以用在OLTP中。在线事务处理的环境中,需要低延迟,而Phoenix在查询HBase时,虽然做了一些优化,但延迟还是不小。所以依然是用在OLAT中,再将结果返回存储下来。

Phoenix完全使用Java编写,作为HBase内嵌的JDBC驱动。Phoenix查询引擎会将SQL查询转换为一个或多个HBase扫描,并编排执行以生成标准的JDBC结果集。直接使用HBase API、协同处理器与自定义过滤器,对于简单查询来说,其性能量级是毫秒,对于百万级别的行数来说,其性能量级是秒。

由于资源问题,这里我们部署单节点测试环境。

Hbase整合Phoenix

下载Phoenix,这里使用5.0.0-HBase-2.0版本。download

下载Hbase,经测试2.2.0不兼容新版的Phoenix,这里我们使用2.1.5版本。download

1、 解压hbase、phoenix的tar包

[admin@mvxl2429 yst]$ tar -xf hbase-2.1.5-bin.tar.gz

[admin@mvxl2429 yst]$ tar -xf apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz

[admin@mvxl2429 yst]$ mv apache-phoenix-5.0.0-HBase-2.0-bin phoenix-5.0.0

[admin@mvxl2429 yst]$ ls

apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz hbase-2.1.5 hbase-2.1.5-bin.tar.gz phoenix-5.0.0

2、hbse整合phoenix

[admin@mvxl2429 yst]$ cp phoenix-5.0.0/phoenix-5.0.0-HBase-2.0-server.jar hbase-2.1.5/lib

[admin@mvxl2429 yst]$ ll hbase-2.1.5/lib | grep phoenix

-rw-r--r-- 1 admin admin 41800313 Jul 29 10:08 phoenix-5.0.0-HBase-2.0-server.jar

3、新建hbase数据目录和zk数据目录

[admin@mvxl2429 yst]$ mkdir -p /tmp/hbase/{zk,data}

[admin@mvxl2429 yst]$ ls /tmp/hbase

data zk

4、修改hbase相关配置

[admin@mvxl2429 yst]$ sed -i '/# export JAVA_HOME=/cexport JAVA_HOME=/usr/lib/jvm/jre-1.8.0' hbase-2.1.5/conf/hbase-env.sh

[admin@mvxl2429 yst]$ grep JAVA_HOME hbase-2.1.5/conf/hbase-env.sh

export JAVA_HOME=/usr/lib/jvm/jre-1.8.0

[admin@mvxl2429 yst]$ cat > hbase-2.1.5/conf/hbase-site.xml <

>

>

>

> hbase.rootdir

> /tmp/hbase/data

>

>

> hbase.zookeeper.property.dataDir

> /tmp/hbase/zk

>

>

> hbase.zookeeper.property.clientPort

> 2182

>

>

> EOF

-bash: [: too many arguments

[admin@mvxl2429 yst]$ cat hbase-2.1.5/conf/hbase-site.xml

hbase.rootdir

/tmp/hbase/data

hbase.zookeeper.property.dataDir

/tmp/hbase/zk

hbase.zookeeper.property.clientPort

2182

5、修改hosts

[admin@mvxl2429 bin]$ cat /etc/hosts

10.16.72.63 mvxl2429

127.0.0.1 localhost.localdomain localhost4 localhost4.localdomain4 localhost

127.0.0.1 localhost

6、启动hbase

[admin@mvxl2429 yst]$ sh hbase-2.1.5/bin/start-hbase.sh

running master, logging to /mnt/yst/hbase-2.1.5/bin/../logs/hbase-admin-master-mvxl2429.out

[admin@mvxl2429 yst]$ ps aux | grep hbase

admin 31823 0.0 0.0 110868 1700 pts/0 S 11:06 0:00 bash /mnt/yst/hbase-2.1.5/bin/hbase-daemon.sh --config /mnt/yst/hbase-2.1.5/bin/../conf foreground_start master

admin 31837 50.3 2.3 6489552 384212 pts/0 Sl 11:06 0:16 /usr/lib/jvm/jre-1.8.0/bin/java -Dproc_master -XX:OnOutOfMemoryError=kill -9 %p -XX:+UseConcMarkSweepGC -Dhbase.log.dir=/mnt/yst/hbase-2.1.5/bin/../logs -Dhbase.log.file=hbase-admin-master-mvxl2429.log -Dhbase.home.dir=/mnt/yst/hbase-2.1.5/bin/.. -Dhbase.id.str=admin -Dhbase.root.logger=INFO,RFA -Dhbase.security.logger=INFO,RFAS org.apache.hadoop.hbase.master.HMaster start

admin 32237 0.0 0.0 107884 1068 pts/0 S+ 11:07 0:00 grep hbase

7、验证一下整合效果

可以看到已经自动生成数据仓库用到的一些表。

[admin@mvxl2429 yst]$ ./phoenix-5.0.0/bin/sqlline.py localhost:2182

Setting property: [incremental, false]

Setting property: [isolation, TRANSACTION_READ_COMMITTED]

issuing: !connect jdbc:phoenix:localhost:2182 none none org.apache.phoenix.jdbc.PhoenixDriver

Connecting to jdbc:phoenix:localhost:2182

19/07/29 11:24:56 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Connected to: Phoenix (version 5.0)

Driver: PhoenixEmbeddedDriver (version 5.0)

Autocommit status: true

Transaction isolation: TRANSACTION_READ_COMMITTED

Building list of tables and columns for tab-completion (set fastconnect to true to skip)...

133/133 (100%) Done

Done

sqlline version 1.2.0

0: jdbc:phoenix:localhost:2182> !table

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+--------+

| TABLE_CAT | TABLE_SCHEM | TABLE_NAME | TABLE_TYPE | REMARKS | TYPE_NAME | SELF_REFERENCING_COL_NAME | REF_GENERATION | INDEX_STATE | IMMUTA |

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+--------+

| | SYSTEM | CATALOG | SYSTEM TABLE | | | | | | false |

| | SYSTEM | FUNCTION | SYSTEM TABLE | | | | | | false |

| | SYSTEM | LOG | SYSTEM TABLE | | | | | | true |

| | SYSTEM | SEQUENCE | SYSTEM TABLE | | | | | | false |

| | SYSTEM | STATS | SYSTEM TABLE | | | | | | false |

+------------+--------------+-------------+---------------+----------+------------+----------------------------+-----------------+--------------+--------+

0: jdbc:phoenix:localhost:2182> !quit

Closing: org.apache.phoenix.jdbc.PhoenixConnection

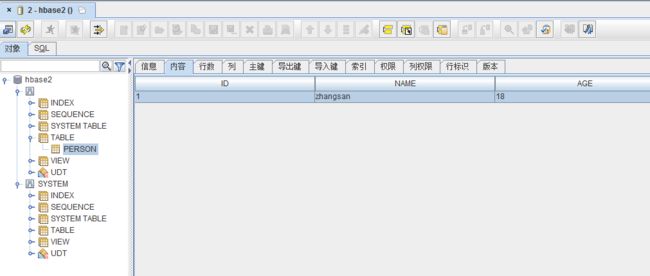

安装SQuirrel客户端

SQuirrel是一个图形化界面工具。由于Phoenix是一个JDBC驱动程序,因此与此类工具的集成是无缝的。通过SQuirrel,您可以在SQL选项卡中发出SQL语句(创建表,插入数据,运行查询),并在“对象”选项卡中检查表元数据(即列表,列,主键和类型)。

1、下载客户端 download

2、安装SQuirrel

java -jar squirrel-sql-3.9.1-standard.jar

3、配置Phoenix客户端jar包

将apache-phoenix-5.0.0-HBase-2.0-bin.tar.gz中的phoenix-5.0.0-HBase-2.0-client.jarjar包拷贝到squirrel-sql-3.9.1\lib文件夹下。

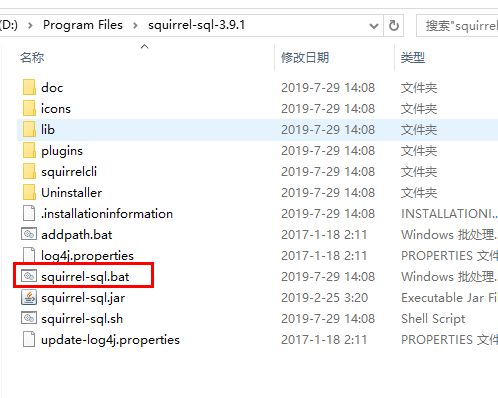

4、启动SQuirrel

在安装目录下,双击打开 squirrel-sql.bat

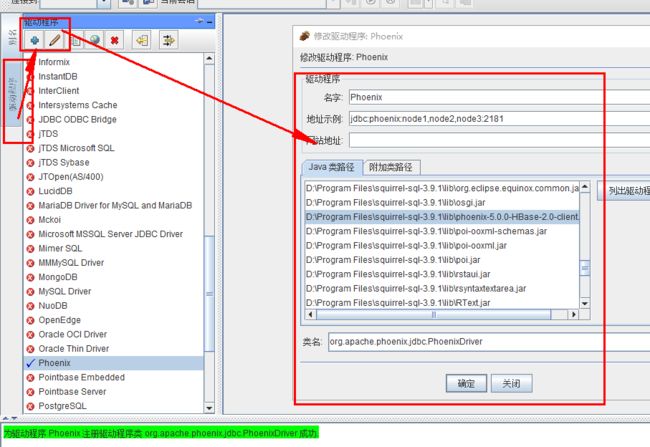

5、添加一个Phoenix驱动

设置zk地址和Phoenix驱动包信息

6、添加本地hosts

打开文件 c:\windows\system32\drivers\etc\hosts

添加以下内容:

10.16.72.63 mvxl2429

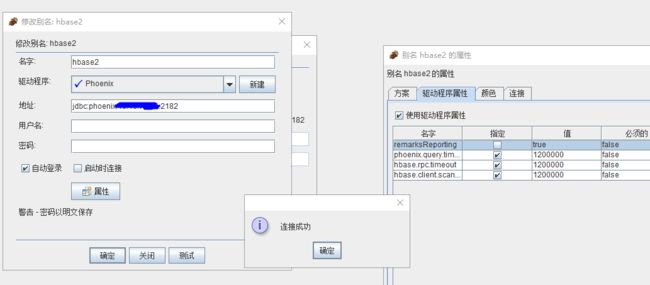

7、测试Phoenix连接

8、连接Phoenix

实现DataX数据同步

DataX是阿里开源的一个数据同步中间件。在阿里巴巴集团内被广泛使用的离线数据同步工具/平台,实现包括 MySQL、Oracle、SqlServer、Postgre、HDFS、Hive、ADS、HBase、TableStore(OTS)、MaxCompute(ODPS)、DRDS 等各种异构数据源之间高效的数据同步功能。Phoenix数据同步文档点击这里

1、开启Phoenix的瘦客户端

使用DataX同步需要用到Phoenix的瘦客户端连接。这里似乎有个BUG,如果该表了zk的端口,则无法连接。

[root@local14 bin]# ./queryserver.py start

starting Query Server, logging to /tmp/phoenix/phoenix-root-queryserver.log

2、添加DataX插件

下载打包好的DataX并没有phoenix5的同步插件,需要下载源码重新编译。DataX下载地址

[root@gz-tencent ~]# git clone https://github.com/alibaba/DataX.git

Cloning into 'DataX'...

remote: Enumerating objects: 7, done.

remote: Counting objects: 100% (7/7), done.

remote: Compressing objects: 100% (7/7), done.

remote: Total 1657 (delta 1), reused 4 (delta 0), pack-reused 1650

Receiving objects: 100% (1657/1657), 11.55 MiB | 113.00 KiB/s, done.

Resolving deltas: 100% (303/303), done.

[root@gz-tencent ~]# cd DataX ; mvn clean package -DskipTests assembly:assembly

[root@gz-tencent writer]# ll /root/DataX/hbase20xsqlwriter/target/datax/plugin/writer/hbase20xsqlwriter

total 32

-rw-r--r-- 1 root root 20401 Jul 29 23:30 hbase20xsqlwriter-0.0.1-SNAPSHOT.jar

drwxr-xr-x 2 root root 4096 Jul 29 23:30 libs

-rw-r--r-- 1 root root 262 Jul 29 23:30 plugin_job_template.json

-rwxr-xr-x 1 root root 263 Jul 29 23:30 plugin.json

3、添加DataX同步配置

[admin@mvxl2429 ~]$ cat /mnt/yst/datax/job/mysql2phoenix.json

{

"job": {

"setting": {

"speed": {

"channel": 16,

"byte": 1048576,

"record": 10000

}

},

"content": [{

"reader": {

"name": "mysqlreader",

"parameter": {

"username": "root",

"password": "root632",

"column": ["*"],

"splitPk": "id",

"connection": [{

"table": ["f_order_report_701"],

"jdbcUrl": ["jdbc:mysql://你的地址:3306/你的库"]

}]

}

},

"writer": {

"name": "hbase20xsqlwriter",

"parameter": {

"batchSize": "100",

"column": ["ID","OUTER_ORDER_ID","SHOP_NAME"],

"queryServerAddress": "http://127.0.0.1:8765",

"nullMode": "skip",

"table": "F_ORDER_REPORT_701"

}

}

}]

}

}

3、跑一个验证下

[root@local14 bin]# ./datax.py /root/data/datax/job/mysql2phoenix.json

DataX (DATAX-OPENSOURCE-3.0), From Alibaba !

Copyright (C) 2010-2017, Alibaba Group. All Rights Reserved.

2019-07-26 22:56:05.927 [main] INFO VMInfo - VMInfo# operatingSystem class => sun.management.OperatingSystemImpl

2019-07-26 22:56:05.936 [main] INFO Engine - the machine info =>

osInfo: Oracle Corporation 1.8 25.181-b13

jvmInfo: Linux amd64 3.10.0-862.11.6.el7.x86_64

cpu num: 1

totalPhysicalMemory: -0.00G

freePhysicalMemory: -0.00G

maxFileDescriptorCount: -1

currentOpenFileDescriptorCount: -1

GC Names [Copy, MarkSweepCompact]

MEMORY_NAME | allocation_size | init_size

Eden Space | 273.06MB | 273.06MB

Code Cache | 240.00MB | 2.44MB

Survivor Space | 34.13MB | 34.13MB

Compressed Class Space | 1,024.00MB | 0.00MB

Metaspace | -0.00MB | 0.00MB

Tenured Gen | 682.69MB | 682.69MB

2019-07-26 22:56:05.978 [main] INFO Engine -

{

"content":[

{

"reader":{

"name":"mysqlreader",

"parameter":{

"column":[

"id",

"outer_order_id",

"shop_name"

],

"connection":[

{

"jdbcUrl":[

""jdbc:mysql://你的地址:3306/你的库""

],

"table":[

"f_order_report_701"

]

}

],

"password":"*******",

"splitPk":"id",

"username":"root"

}

},

"writer":{

"name":"hbase20xsqlwriter",

"parameter":{

"batchSize":"100",

"column":[

"ID",

"OUTER_ORDER_ID",

"SHOP_NAME"

],

"nullMode":"skip",

"queryServerAddress":"http://127.0.0.1:8765",

"table":"F_ORDER_REPORT_701"

}

}

}

],

"entry":{

"jvm":"-Xms2048m -Xmx2048m"

},

"setting":{

"speed":{

"byte":1048576,

"channel":16,

"record":10000

}

}

}

2019-07-26 22:56:06.008 [main] WARN Engine - prioriy set to 0, because NumberFormatException, the value is: null

2019-07-26 22:56:06.011 [main] INFO PerfTrace - PerfTrace traceId=job_-1, isEnable=false, priority=0

2019-07-26 22:56:06.011 [main] INFO JobContainer - DataX jobContainer starts job.

2019-07-26 22:56:06.023 [main] INFO JobContainer - Set jobId = 0

2019-07-26 22:56:06.850 [job-0] INFO OriginalConfPretreatmentUtil - Available jdbcUrl:jdbc:mysql://172.16.14.155:3306/ins_632_prd?yearIsDateType=false&zeroDateTimeBehavior=convertToNull&tinyInt1isBit=false&rewriteBatchedStatements=true.

2019-07-26 22:56:06.925 [job-0] INFO OriginalConfPretreatmentUtil - table:[f_order_report_701] has columns:[id,outer_create_time,order_apply_id,outer_order_id,order_refund_type,shop_name,item_id,item_name,item_cnt,item_amount,adjust_amount,discount_amount,retail_price,settlement_amount,packing_return_id,allocated_time,pay_order_no,invoice_code,store_name,store_type,logistics_com_code,current_status,paragraph_time,receiver_province_name,invoice_type,shiped_return_time,invoice_time,is_return_invoice,category_name,source,volume,weight,create_time,trade_from,operate_time,tag,cate_id,outer_shop_id,platform_id,shop_id,order_item_id,store_id,franchiser,seller_memo,parent_order_id,write_off_amount,sales_order_id,replace_order_id,f_order_type,status,order_type,business_type,purchase_type,order_attribute,order_identifying,customer_code,customer_name,supplier_code,franchiser_name,item_type,received_time,ec_reimbursement_code,apply_type,sales_channel,gift_card_amount,customer_bill,purchase_price,purchase_amount,payment_amount,update_time,order_create_time,business_name,sale_type,order_payment_amount,order_purchase_amount,document_id,item_price,order_id,parent_outer_order_id,order_fx_relation_id,real_order_id,cims_entity_id,cims_entity_name,sales_center_code,outer_store_code,is_invoice,ou_id,outer_store_name,sales_center_name,compare_id,account_time,is_adjust,unique_id,is_group,operator_mip,commodity_type,receiver_city_name,tax_code,cashback_amount,wait_settle_store,brand].

2019-07-26 22:56:07.937 [job-0] INFO JobContainer - jobContainer starts to do prepare ...

2019-07-26 22:56:07.937 [job-0] INFO JobContainer - DataX Reader.Job [mysqlreader] do prepare work .

2019-07-26 22:56:07.938 [job-0] INFO JobContainer - DataX Writer.Job [hbase20xsqlwriter] do prepare work .

2019-07-26 22:56:07.938 [job-0] INFO JobContainer - jobContainer starts to do split ...

2019-07-26 22:56:07.939 [job-0] INFO JobContainer - Job set Max-Byte-Speed to 1048576 bytes.

2019-07-26 22:56:07.939 [job-0] INFO JobContainer - Job set Max-Record-Speed to 10000 records.

2019-07-26 22:56:07.944 [job-0] INFO JobContainer - DataX Reader.Job [mysqlreader] splits to [1] tasks.

2019-07-26 22:56:07.944 [job-0] INFO JobContainer - DataX Writer.Job [hbase20xsqlwriter] splits to [1] tasks.

2019-07-26 22:56:07.980 [job-0] INFO JobContainer - jobContainer starts to do schedule ...

2019-07-26 22:56:07.986 [job-0] INFO JobContainer - Scheduler starts [1] taskGroups.

2019-07-26 22:56:07.987 [job-0] INFO JobContainer - Running by standalone Mode.

2019-07-26 22:56:08.029 [taskGroup-0] INFO TaskGroupContainer - taskGroupId=[0] start [1] channels for [1] tasks.

2019-07-26 22:56:08.033 [taskGroup-0] INFO Channel - Channel set byte_speed_limit to -1, No bps activated.

2019-07-26 22:56:08.034 [taskGroup-0] INFO Channel - Channel set record_speed_limit to -1, No tps activated.

2019-07-26 22:56:08.099 [taskGroup-0] INFO TaskGroupContainer - taskGroup[0] taskId[0] attemptCount[1] is started

2019-07-26 22:56:08.116 [0-0-0-reader] INFO CommonRdbmsReader$Task - Begin to read record by Sql: [select id,outer_order_id,shop_name from f_order_report_701

] jdbcUrl:[jdbc:mysql://172.16.14.155:3306/ins_632_prd?yearIsDateType=false&zeroDateTimeBehavior=convertToNull&tinyInt1isBit=false&rewriteBatchedStatements=true].

2019-07-26 22:56:08.253 [0-0-0-reader] INFO CommonRdbmsReader$Task - Finished read record by Sql: [select id,outer_order_id,shop_name from f_order_report_701

] jdbcUrl:[jdbc:mysql://172.16.14.155:3306/ins_632_prd?yearIsDateType=false&zeroDateTimeBehavior=convertToNull&tinyInt1isBit=false&rewriteBatchedStatements=true].

2019-07-26 22:56:08.511 [taskGroup-0] INFO TaskGroupContainer - taskGroup[0] taskId[0] is successed, used[461]ms

2019-07-26 22:56:08.512 [taskGroup-0] INFO TaskGroupContainer - taskGroup[0] completed it's tasks.

2019-07-26 22:56:18.027 [job-0] INFO StandAloneJobContainerCommunicator - Total 30 records, 829 bytes | Speed 82B/s, 3 records/s | Error 0 records, 0 bytes | All Task WaitWriterTime 0.000s | All Task WaitReaderTime 0.000s | Percentage 100.00%

2019-07-26 22:56:18.027 [job-0] INFO AbstractScheduler - Scheduler accomplished all tasks.

2019-07-26 22:56:18.027 [job-0] INFO JobContainer - DataX Writer.Job [hbase20xsqlwriter] do post work.

2019-07-26 22:56:18.028 [job-0] INFO JobContainer - DataX Reader.Job [mysqlreader] do post work.

2019-07-26 22:56:18.028 [job-0] INFO JobContainer - DataX jobId [0] completed successfully.

2019-07-26 22:56:18.029 [job-0] INFO HookInvoker - No hook invoked, because base dir not exists or is a file: /root/data/datax/hook

2019-07-26 22:56:18.030 [job-0] INFO JobContainer -

[total cpu info] =>

averageCpu | maxDeltaCpu | minDeltaCpu

-1.00% | -1.00% | -1.00%

[total gc info] =>

NAME | totalGCCount | maxDeltaGCCount | minDeltaGCCount | totalGCTime | maxDeltaGCTime | minDeltaGCTime

Copy | 0 | 0 | 0 | 0.000s | 0.000s | 0.000s

MarkSweepCompact | 1 | 1 | 1 | 0.075s | 0.075s | 0.075s

2019-07-26 22:56:18.031 [job-0] INFO JobContainer - PerfTrace not enable!

2019-07-26 22:56:18.031 [job-0] INFO StandAloneJobContainerCommunicator - Total 30 records, 829 bytes | Speed 82B/s, 3 records/s | Error 0 records, 0 bytes | All Task WaitWriterTime 0.000s | All Task WaitReaderTime 0.000s | Percentage 100.00%

2019-07-26 22:56:18.032 [job-0] INFO JobContainer -

任务启动时刻 : 2019-07-26 22:56:06

任务结束时刻 : 2019-07-26 22:56:18

任务总计耗时 : 12s

任务平均流量 : 82B/s

记录写入速度 : 3rec/s

读出记录总数 : 30

读写失败总数 : 0

4、查看Phoenix中的数据记录

0: jdbc:phoenix:thin:url=http://localhost:876> select count(1) from F_ORDER_REPORT_701;

+-----------+

| COUNT(1) |

+-----------+

| 30 |

+-----------+

1 row selected (0.069 seconds)