Pose Estimation 1-09: HR-Net (Human Pose Estimation) - Thorough Source Code Walkthrough (5) - HighResolutionModule

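The link below collects all of my notes on HR-Net (human pose estimation). If you spot any mistakes, please point them out and I will correct them right away. If you are interested, add me on WeChat (a944284742) to discuss the technical details. If this helped you, please remember to give it a like; it is the best encouragement I can get.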

Pose Estimation 1-00: HR-Net (Human Pose Estimation) - Table of Contents - The Latest Thorough Walkthrough

Preface

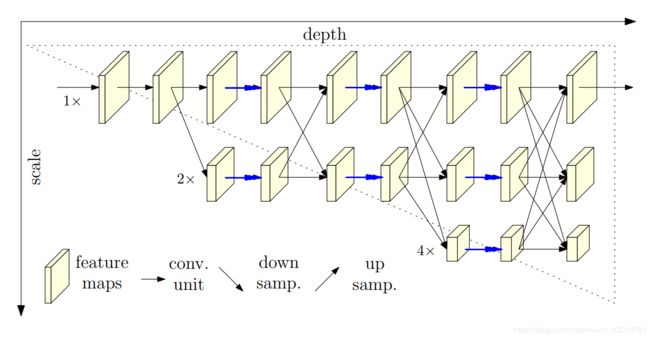

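After the previous post you should have a solid grasp of the overall HR-Net architecture. What we have not yet covered is the class HighResolutionModule in lib/models/pose_hrnet.py, which implements the information exchange between parallel sub-networks described in the paper. It is one of the paper's core modules, so let's look at how it is implemented.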

Annotated code

A guided walkthrough follows later, so a quick skim of the annotated code below is enough for now.

import logging

import torch.nn as nn

# Both names are defined at module level in lib/models/pose_hrnet.py
BN_MOMENTUM = 0.1
logger = logging.getLogger(__name__)


class HighResolutionModule(nn.Module):
    def __init__(self, num_branches, blocks, num_blocks, num_inchannels,
                 num_channels, fuse_method, multi_scale_output=True):
        """
        :param num_branches: number of parallel sub-networks (branches) in the current stage
        :param blocks: the block class, BasicBlock or Bottleneck
        :param num_blocks: number of blocks in each branch
        :param num_inchannels: input channels of each branch
            stage 2: num_inchannels = [32, 64]
            stage 3: num_inchannels = [32, 64, 128]
            stage 4: num_inchannels = [32, 64, 128, 256]
        :param num_channels: output channels of each branch
            stage 2: num_channels = [32, 64]
            stage 3: num_channels = [32, 64, 128]
            stage 4: num_channels = [32, 64, 128, 256]
        :param fuse_method: SUM by default
        :param multi_scale_output:
            stage 2: multi_scale_output=True
            stage 3: multi_scale_output=True
            stage 4: multi_scale_output=False
        """
        super(HighResolutionModule, self).__init__()
        # Validate the constructor arguments
        self._check_branches(
            num_branches, blocks, num_blocks, num_inchannels, num_channels)

        # See the docstring above for the meaning of these attributes
        self.num_inchannels = num_inchannels
        self.fuse_method = fuse_method
        self.num_branches = num_branches
        self.multi_scale_output = multi_scale_output

        # Build one sub-network per branch.
        # For stage = 2, 3, 4, num_branches is 2, 3, 4: the number of parallel networks in that stage
        # For stage = 2, 3, 4, num_blocks is [4, 4], [4, 4, 4], [4, 4, 4, 4]
        self.branches = self._make_branches(
            num_branches, blocks, num_blocks, num_channels)

        # Build the multi-scale fusion layers. When multi_scale_output is True,
        # len(self.fuse_layers) is 2, 3, 4 for stage = 2, 3, 4, matching num_branches
        self.fuse_layers = self._make_fuse_layers()
        self.relu = nn.ReLU(True)

    def _check_branches(self, num_branches, blocks, num_blocks,
                        num_inchannels, num_channels):
        # Every per-branch list must have exactly num_branches entries
        if num_branches != len(num_blocks):
            error_msg = 'NUM_BRANCHES({}) <> NUM_BLOCKS({})'.format(
                num_branches, len(num_blocks))
            logger.error(error_msg)
            raise ValueError(error_msg)

        if num_branches != len(num_channels):
            error_msg = 'NUM_BRANCHES({}) <> NUM_CHANNELS({})'.format(
                num_branches, len(num_channels))
            logger.error(error_msg)
            raise ValueError(error_msg)

        if num_branches != len(num_inchannels):
            error_msg = 'NUM_BRANCHES({}) <> NUM_INCHANNELS({})'.format(
                num_branches, len(num_inchannels))
            logger.error(error_msg)
            raise ValueError(error_msg)

    def _make_one_branch(self, branch_index, block, num_blocks, num_channels,
                         stride=1):
        downsample = None
        # If stride is not 1, or the input channel count differs from the
        # output channel count, use a 1x1 convolution on the shortcut path
        # so that its shape matches the block output
        if stride != 1 or \
           self.num_inchannels[branch_index] != num_channels[branch_index] * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(
                    self.num_inchannels[branch_index],
                    num_channels[branch_index] * block.expansion,
                    kernel_size=1, stride=stride, bias=False
                ),
                nn.BatchNorm2d(
                    num_channels[branch_index] * block.expansion,
                    momentum=BN_MOMENTUM
                ),
            )

        layers = []
        # First block of branch branch_index; it is the only block that
        # receives stride and the optional downsample projection
        layers.append(
            block(
                self.num_inchannels[branch_index],
                num_channels[branch_index],
                stride,
                downsample
            )
        )
        # Store the output channel count as the new input channel count, so the
        # remaining blocks (and the fuse layers built later) see the right width
        self.num_inchannels[branch_index] = \
            num_channels[branch_index] * block.expansion
        # Create the remaining num_blocks[branch_index] - 1 blocks of this branch
        for i in range(1, num_blocks[branch_index]):
            layers.append(
                block(
                    self.num_inchannels[branch_index],
                    num_channels[branch_index]
                )
            )

        return nn.Sequential(*layers)

    def _make_branches(self, num_branches, block, num_blocks, num_channels):
        branches = []

        # Build the sub-network of each branch in turn.
        # For stage = 2, 3, 4, num_branches is 2, 3, 4: the number of parallel networks in that stage
        # For stage = 2, 3, 4, num_blocks is [4, 4], [4, 4, 4], [4, 4, 4, 4]
        for i in range(num_branches):
            branches.append(
                self._make_one_branch(i, block, num_blocks, num_channels)
            )

        return nn.ModuleList(branches)

    def _make_fuse_layers(self):
        if self.num_branches == 1:
            return None

        # Number of parallel sub-networks (branches)
        num_branches = self.num_branches
        # Channels of each branch (already updated by _make_one_branch)
        num_inchannels = self.num_inchannels
        fuse_layers = []
        # Build one fusion layer per output branch i. When multi_scale_output
        # is False, only the highest-resolution output (i = 0) is needed
        for i in range(num_branches if self.multi_scale_output else 1):
            fuse_layer = []
            for j in range(num_branches):
                # Three cases, depending on where input branch j sits relative to output branch i
                # 1. j is a lower-resolution branch (further down the scale axis in the paper's figure):
                #    1x1 conv to match channels, then upsample by a factor of 2**(j-i)
                if j > i:
                    fuse_layer.append(
                        nn.Sequential(
                            nn.Conv2d(
                                num_inchannels[j],
                                num_inchannels[i],
                                1, 1, 0, bias=False
                            ),
                            nn.BatchNorm2d(num_inchannels[i]),
                            nn.Upsample(scale_factor=2**(j-i), mode='nearest')
                        )
                    )
                # 2. j is the same branch: same resolution, passed through unchanged
                elif j == i:
                    fuse_layer.append(None)
                # 3. j is a higher-resolution branch: downsample with (i - j)
                #    stride-2 3x3 convs, each of which halves the resolution
                else:
                    conv3x3s = []
                    for k in range(i-j):
                        if k == i - j - 1:
                            # Last conv: switch to the channel count of branch i, no ReLU
                            num_outchannels_conv3x3 = num_inchannels[i]
                            conv3x3s.append(
                                nn.Sequential(
                                    nn.Conv2d(
                                        num_inchannels[j],
                                        num_outchannels_conv3x3,
                                        3, 2, 1, bias=False
                                    ),
                                    nn.BatchNorm2d(num_outchannels_conv3x3)
                                )
                            )
                        else:
                            # Intermediate conv: keep the channel count of branch j
                            num_outchannels_conv3x3 = num_inchannels[j]
                            conv3x3s.append(
                                nn.Sequential(
                                    nn.Conv2d(
                                        num_inchannels[j],
                                        num_outchannels_conv3x3,
                                        3, 2, 1, bias=False
                                    ),
                                    nn.BatchNorm2d(num_outchannels_conv3x3),
                                    nn.ReLU(True)
                                )
                            )
                    fuse_layer.append(nn.Sequential(*conv3x3s))
            fuse_layers.append(nn.ModuleList(fuse_layer))

        return nn.ModuleList(fuse_layers)

    def get_num_inchannels(self):
        return self.num_inchannels

    def forward(self, x):
        # For stage = 2, 3, 4, num_branches is 2, 3, 4: the number of parallel networks in that stage
        if self.num_branches == 1:
            return [self.branches[0](x[0])]

        # x holds one input tensor per branch; the branches keep every shape unchanged
        # stage 2: x = [b,32,64,48], [b,64,32,24]
        #      --> x = [b,32,64,48], [b,64,32,24]
        # stage 3: x = [b,32,64,48], [b,64,32,24], [b,128,16,12]
        #      --> x = [b,32,64,48], [b,64,32,24], [b,128,16,12]
        # stage 4: x = [b,32,64,48], [b,64,32,24], [b,128,16,12], [b,256,8,6]
        #      --> x = [b,32,64,48], [b,64,32,24], [b,128,16,12], [b,256,8,6]
        # In short, each branch is passed through its stack of BasicBlock or Bottleneck blocks
        for i in range(self.num_branches):
            x[i] = self.branches[i](x[i])

        x_fuse = []
        # Fuse the branches (information exchange)
        for i in range(len(self.fuse_layers)):
            # Sum the outputs of all branches, each resampled to the resolution
            # of branch i; the result is the input to the next round of fusion
            y = x[0] if i == 0 else self.fuse_layers[i][0](x[0])
            for j in range(1, self.num_branches):
                if i == j:
                    y = y + x[j]
                else:
                    y = y + self.fuse_layers[i][j](x[j])
            x_fuse.append(self.relu(y))

        return x_fuse
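The three cases in `_make_fuse_layers` are easier to picture once they are laid out as a table. Below is a minimal sketch in plain Python (no PyTorch required) that mirrors the same branching but records tuples instead of building `nn.Module` objects. The helper name `describe_fuse_layers` and the tuple layout are my own assumptions for illustration; they are not part of the HR-Net code.

```python
def describe_fuse_layers(num_inchannels, multi_scale_output=True):
    """Describe what is applied to input branch j to produce output branch i.

    Hypothetical helper: it follows the same if/elif/else structure as
    _make_fuse_layers, but returns plain tuples instead of layers.
    """
    num_branches = len(num_inchannels)
    if num_branches == 1:
        return None
    layout = []
    for i in range(num_branches if multi_scale_output else 1):
        row = []
        for j in range(num_branches):
            if j > i:
                # Lower-resolution input: 1x1 conv (in_ch -> out_ch), then upsample
                row.append(("up", num_inchannels[j], num_inchannels[i], 2 ** (j - i)))
            elif j == i:
                # Same branch: nothing to do
                row.append(("identity",))
            else:
                # Higher-resolution input: (i - j) stride-2 3x3 convs;
                # only the last one changes the channel count
                convs = []
                for k in range(i - j):
                    is_last = (k == i - j - 1)
                    out_ch = num_inchannels[i] if is_last else num_inchannels[j]
                    convs.append((num_inchannels[j], out_ch))
                row.append(("down", convs))
        layout.append(row)
    return layout


# Stage 3 of the configuration discussed above
layout = describe_fuse_layers([32, 64, 128])
for i, row in enumerate(layout):
    print("output branch", i, row)
```

Each row describes one output branch. Entries right of the diagonal are upsampling paths, entries left of it are chains of stride-2 convolutions, and the diagonal is the identity.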

Guided walkthrough

First, look at the following part of the forward pass:

for i in range(self.num_branches):
    x[i] = self.branches[i](x[i])

x_fuse = []
# Fuse the branches (information exchange)
for i in range(len(self.fuse_layers)):
    # Sum the outputs of all branches, each resampled to the resolution
    # of branch i; the result is the input to the next round of fusion
    y = x[0] if i == 0 else self.fuse_layers[i][0](x[0])
    for j in range(1, self.num_branches):
        if i == j:
            y = y + x[j]
        else:
            y = y + self.fuse_layers[i][j](x[j])
    x_fuse.append(self.relu(y))
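The sum `y = y + ...` only works if every term has the same shape as branch `i`. The sketch below checks this with plain arithmetic rather than tensors, under the assumption that shapes are given as `(channels, height, width)` tuples. The function names `conv3x3_s2` and `trace_fusion` are hypothetical and exist only for this illustration.

```python
def conv3x3_s2(size):
    # Output size of a 3x3 convolution with stride 2 and padding 1
    return (size + 2 * 1 - 3) // 2 + 1


def trace_fusion(shapes, multi_scale_output=True):
    """shapes: one (channels, height, width) tuple per branch, after self.branches."""
    num_branches = len(shapes)
    outputs = []
    for i in range(num_branches if multi_scale_output else 1):
        target = shapes[i]
        for j in range(num_branches):
            c, h, w = shapes[j]
            if j > i:
                # 1x1 conv changes the channels, nearest upsampling scales H and W
                scale = 2 ** (j - i)
                c, h, w = shapes[i][0], h * scale, w * scale
            elif j < i:
                # (i - j) stride-2 convs; the last one sets the channels of branch i
                for _ in range(i - j):
                    h, w = conv3x3_s2(h), conv3x3_s2(w)
                c = shapes[i][0]
            # Every term must match branch i before it can be added to y
            assert (c, h, w) == target, (i, j, (c, h, w), target)
        outputs.append(target)
    return outputs


stage3 = [(32, 64, 48), (64, 32, 24), (128, 16, 12)]
stage4 = [(32, 64, 48), (64, 32, 24), (128, 16, 12), (256, 8, 6)]
print(trace_fusion(stage3))
print(trace_fusion(stage4, multi_scale_output=False))
```

With `multi_scale_output=False` only the highest-resolution output is produced, which is the case the docstring lists for stage 4.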

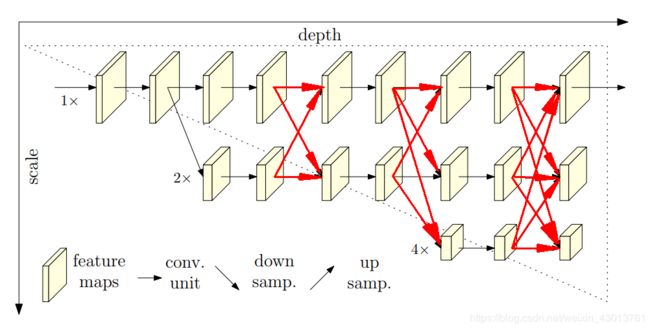

It corresponds to the part of the paper's figure shown below (red arrows):