Adding a Graphical User Interface to the Keras Version of YOLO v3

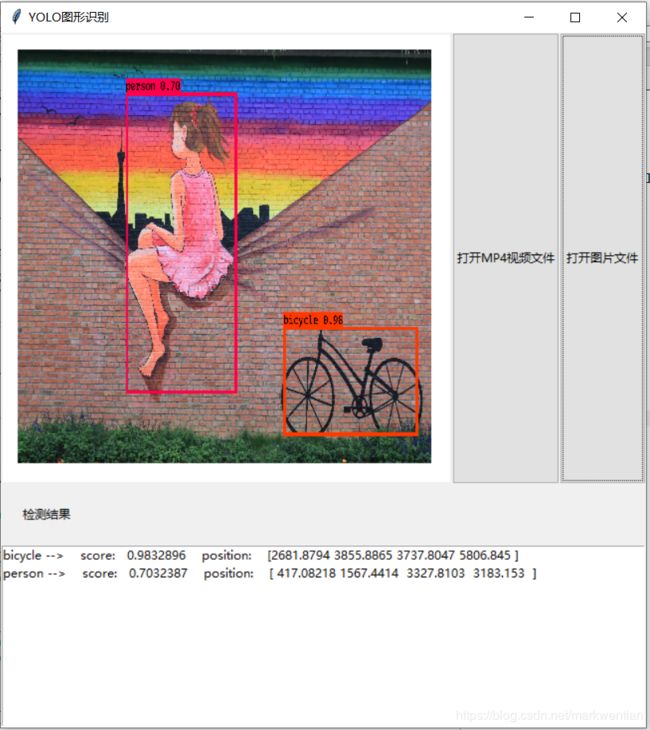

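A while ago I was reading the code of the Keras version of YOLO v3. After going through it I retrained a model on my own dataset, but testing it always felt inconvenient, so I tried adding a visual interface on top of the code that supports testing on both images and videos. I am new to Python, so the interface aims to be functional rather than pretty. The final interface is shown in the screenshot above.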

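The upper-left part of the window shows the detection result image, and the upper-right part holds the buttons for choosing an image or a video. When you click the video button and select an MP4 file, a separate window pops up that shows the detection running in real time.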

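For image detection, each row at the bottom shows one detection result. Clicking a row redraws the image with only the bounding box for that result.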
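
The per-row selection described above can be sketched without any GUI code. This is a minimal illustration, not the code from the repository: `Detection`, `clamp_box`, and `select_detection` are hypothetical names, and the box is assumed to arrive in the `(top, left, bottom, right)` order that the listing below unpacks.

```python
import math
from typing import NamedTuple, Optional, Sequence, Tuple


class Detection(NamedTuple):
    """One stored detection, one per listbox row (hypothetical container)."""
    label: str
    score: float
    box: Tuple[float, float, float, float]  # assumed order: top, left, bottom, right


def clamp_box(box, image_width, image_height):
    """Round a (top, left, bottom, right) box and clip it to the image bounds.

    Returns (left, top, right, bottom), the order PIL's rectangle() expects.
    """
    top, left, bottom, right = box
    top = max(0, math.floor(top + 0.5))
    left = max(0, math.floor(left + 0.5))
    bottom = min(image_height, math.floor(bottom + 0.5))
    right = min(image_width, math.floor(right + 0.5))
    return left, top, right, bottom


def select_detection(detections: Sequence[Detection],
                     selection: Sequence[int]) -> Optional[Detection]:
    """Map a curselection()-style tuple to the stored detection, or None if empty."""
    if not selection:
        return None
    return detections[selection[0]]


detections = [
    Detection("dog", 0.91, (-3.2, 10.4, 120.6, 500.0)),
    Detection("cat", 0.50, (1.0, 2.0, 3.0, 4.0)),
]
chosen = select_detection(detections, (0,))
rect = clamp_box(chosen.box, image_width=416, image_height=416)
```

Keeping the raw results in a list and redrawing from a clean copy of the image is what makes "show only this box" cheap: nothing has to be erased, only one stored entry is drawn again.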

The code is basically functional at this point, so I am sharing it. The changes are mainly in the yolo_video.py and yolo.py modules.

If you are interested, you can download the source code (the .h5 model file is not included) from the link below:

https://github.com/markwentian/AI.git

The yolo_video.py module mainly adds the interface features. The modified code is:

    import argparse

    # The star import must come before the PIL import: tkinter also exports a
    # name called Image, and the later import is the one that wins.
    from tkinter import *
    from tkinter import ttk
    from tkinter import filedialog

    import numpy as np
    from PIL import Image, ImageDraw, ImageTk

    from yolo import YOLO, detect_video

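    def detect_img(yolo):
        """Console mode: prompt for image filenames and show each detection result."""
        while True:
            img = input('Input image filename:')
            try:
                image = Image.open('./' + img)
            except OSError:
                print('Open Error!')
                break  # stop prompting; the session is closed below
            else:
                # detect_image() returns raw results in this version, so use
                # detect_image2(), which returns the annotated image.
                r_image = yolo.detect_image2(image)
                r_image.show()
        yolo.close_session()


    FLAGS = None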

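    class App:
        """Tkinter front end: result canvas, file buttons, and a list of detections."""

        def __init__(self, master, yolo):
            self.master = master
            self.yolo = yolo
            self.initWidgets()

        def initWidgets(self):
            # Top row: result canvas on the left, file buttons on the right.
            topF = Frame(self.master)
            topF.pack(side=TOP, fill=BOTH)
            self.cv = Canvas(topF, background='white', width=450, height=450)
            self.cv.pack(side=LEFT, fill=BOTH, expand=YES)
            ttk.Button(topF, text='Open image file', command=self.open_file).pack(
                side=RIGHT, fill=Y, expand=YES, anchor=CENTER)
            ttk.Button(topF, text='Open MP4 video file', command=self.open_video).pack(
                side=RIGHT, fill=Y, expand=YES, anchor=CENTER)

            # Bottom row: one listbox entry per detection.
            self.yolo_result = StringVar()
            topF3 = Frame(self.master)
            topF3.pack(side=BOTTOM, fill=BOTH)
            ttk.Label(topF3, text='Detection results', padding=20).pack(side=TOP, fill=X, expand=YES)
            self.lb = Listbox(topF3, listvariable=self.yolo_result, selectmode='single')
            self.lb.pack(side=BOTTOM, fill=X, expand=YES)
            # The event name is missing from the original post (it shows an empty
            # string); '<<ListboxSelect>>' is an assumption that matches the
            # click-to-select behavior described above.
            self.lb.bind('<<ListboxSelect>>', self.click)

            self.yolo_result_store = []
            self.class_names = []
            self.colors = []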

        def click(self, event):
            """Redraw the image with only the detection selected in the listbox."""
            selection = self.lb.curselection()
            if not selection:
                return  # nothing is selected, e.g. right after the list was cleared
            predicted_class, score, box, thickness, c = self.yolo_result_store[selection[0]]

            image_click = self.image.copy()  # draw on a copy so the original stays clean
            label = '{} {:.2f}'.format(predicted_class, score)
            draw = ImageDraw.Draw(image_click)
            # Note: ImageDraw.textsize() was removed in Pillow 10; use textbbox() there.
            label_size = draw.textsize(label, self.font)

            # Round the box and clip it to the image bounds.
            top, left, bottom, right = box
            top = max(0, np.floor(top + 0.5).astype('int32'))
            left = max(0, np.floor(left + 0.5).astype('int32'))
            bottom = min(self.image.size[1], np.floor(bottom + 0.5).astype('int32'))
            right = min(self.image.size[0], np.floor(right + 0.5).astype('int32'))

            # Put the label above the box when there is room, otherwise just inside it.
            if top - label_size[1] >= 0:
                text_origin = np.array([left, top - label_size[1]])
            else:
                text_origin = np.array([left, top + 1])

            # My kingdom for a good redistributable image drawing library.
            for i in range(thickness):
                draw.rectangle(
                    [left + i, top + i, right - i, bottom - i],
                    outline=self.colors[c])
            draw.rectangle(
                [tuple(text_origin), tuple(text_origin + label_size)],
                fill=self.colors[c])
            draw.text(tuple(text_origin), label, fill=(0, 0, 0), font=self.font)
            del draw

            # Stretch to the fixed display size and show the result on the canvas.
            image_click = image_click.resize((416, 416))
            size0 = image_click.size[0]
            size1 = image_click.size[1]
            self.cv.bm = ImageTk.PhotoImage(image_click)  # keep a reference for Tk
            self.cv.create_image(size0 / 2 + 17, size1 / 2 + 17, image=self.cv.bm)

        def open_file(self):
            """Ask for an image, run detection, draw every box, and list each result."""
            self.yolo_result.set('')  # clears the listbox rows
            self.yolo_result_store.clear()
            img = filedialog.askopenfilename(
                title='Open a single file',
                filetypes=[('JPEG image', '*.jpg'), ('JPEG image', '*.jpeg'), ('PNG image', '*.png')],
                initialdir='F:/')  # the author's local drive; adjust as needed
            if not img:
                return  # the dialog was cancelled
            try:
                # Convert to RGB so the RGB box colors can be drawn on any input.
                self.image = Image.open(img).convert('RGB')
            except OSError:
                print('Open Error! Try again!')
                return

            (r_image, out_boxes, out_scores, out_classes, self.font, thickness,
             calculate_time, self.class_names, self.colors) = self.yolo.detect_image(self.image)
            image_main = self.image.copy()  # keep self.image clean for click()

            for i, c in reversed(list(enumerate(out_classes))):
                predicted_class = self.class_names[c]
                box = out_boxes[i]
                score = out_scores[i]
                label = '{} {:.2f}'.format(predicted_class, score)
                draw = ImageDraw.Draw(image_main)
                # Note: ImageDraw.textsize() was removed in Pillow 10; use textbbox() there.
                label_size = draw.textsize(label, self.font)

                top, left, bottom, right = box
                top = max(0, np.floor(top + 0.5).astype('int32'))
                left = max(0, np.floor(left + 0.5).astype('int32'))
                bottom = min(self.image.size[1], np.floor(bottom + 0.5).astype('int32'))
                right = min(self.image.size[0], np.floor(right + 0.5).astype('int32'))
                print(label, (left, top), (right, bottom))

                if top - label_size[1] >= 0:
                    text_origin = np.array([left, top - label_size[1]])
                else:
                    text_origin = np.array([left, top + 1])

                # My kingdom for a good redistributable image drawing library.
                for t in range(thickness):  # separate name so the outer index i is not shadowed
                    draw.rectangle(
                        [left + t, top + t, right - t, bottom - t],
                        outline=self.colors[c])
                draw.rectangle(
                    [tuple(text_origin), tuple(text_origin + label_size)],
                    fill=self.colors[c])
                draw.text(tuple(text_origin), label, fill=(0, 0, 0), font=self.font)
                del draw

                # Store the raw result so click() can redraw this box on its own.
                self.yolo_result_store.append((predicted_class, score, box, thickness, c))
                self.lb.insert(END, str(predicted_class) + ' -->' + ' score: ' + str(score)
                               + ' position: ' + str(box))

            image_main = image_main.resize((416, 416))
            size0 = image_main.size[0]
            size1 = image_main.size[1]
            self.cv.bm = ImageTk.PhotoImage(image_main)  # keep a reference for Tk
            self.cv.create_image(size0 / 2 + 17, size1 / 2 + 17, image=self.cv.bm)

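        def open_video(self):
            """Ask for an MP4 file and run detection on it in a separate OpenCV window."""
            video_path = filedialog.askopenfilename(
                title='Open a single file',
                filetypes=[('Video file', '*.mp4')],
                initialdir='F:/')
            if not video_path:
                return  # the dialog was cancelled
            detect_video(self.yolo, video_path)

        def detect_img(self):
            """Console fallback (not used by the GUI): prompt for filenames and show results."""
            while True:
                img = input('Input image filename:')
                try:
                    image = Image.open(img)
                except OSError:
                    print('Open Error! Try again!')
                    continue
                else:
                    # detect_image2() returns the annotated image; detect_image()
                    # returns a tuple of raw results.
                    r_image = self.yolo.detect_image2(image)
                    r_image.show()
            # self.yolo.close_session()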

    if __name__ == '__main__':
        # class YOLO defines the default value, so suppress any default here
        parser = argparse.ArgumentParser(argument_default=argparse.SUPPRESS)
        '''
        Command line options
        '''
        # dest= maps each flag onto the YOLO attribute it overrides. Without it,
        # --model would be stored as `model` and never reach `model_path`.
        parser.add_argument(
            '--model', type=str, dest='model_path',
            help='path to model weight file, default ' + YOLO.get_defaults("model_path")
        )
        parser.add_argument(
            '--anchors', type=str, dest='anchors_path',
            help='path to anchor definitions, default ' + YOLO.get_defaults("anchors_path")
        )
        parser.add_argument(
            '--classes', type=str, dest='classes_path',
            help='path to class definitions, default ' + YOLO.get_defaults("classes_path")
        )
        parser.add_argument(
            '--gpu_num', type=int,
            help='Number of GPU to use, default ' + str(YOLO.get_defaults("gpu_num"))
        )
        parser.add_argument(
            '--image', default=False, action="store_true",
            help='Image detection mode, will ignore all positional arguments'
        )
        '''
        Command line positional arguments -- for video detection mode
        '''
        parser.add_argument(
            "--input", nargs='?', type=str, required=False, default='./path2your_video',
            help="Video input path"
        )
        parser.add_argument(
            "--output", nargs='?', required=False, type=str, default="dayu.avi",
            help="[Optional] Video output path"
        )
        FLAGS = parser.parse_args()

        # if FLAGS.image:
        root = Tk()
        root.title('YOLO Object Detection')
        App_inst = App(root, YOLO(**vars(FLAGS)))
        root.resizable(width=True, height=True)
        root.mainloop()
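
The comment "class YOLO defines the default value, so suppress any default here" relies on how `argparse.SUPPRESS` interacts with the two `__dict__.update` calls in the `YOLO` constructor. The following is a small standalone sketch of that mechanism. `DEFAULTS`, `Config`, and `build_parser` are hypothetical stand-ins, not names from the repository.

```python
import argparse

# Hypothetical defaults, playing the role of YOLO._defaults.
DEFAULTS = {"model_path": "model_data/yolo.h5", "score": 0.3}


class Config:
    """Stand-in for a class that layers user overrides on top of its defaults."""

    def __init__(self, **kwargs):
        self.__dict__.update(DEFAULTS)  # start from the defaults
        self.__dict__.update(kwargs)    # then apply whatever the caller passed


def build_parser():
    # With argument_default=SUPPRESS, a flag that is not given on the command
    # line produces no attribute at all, instead of an attribute set to None.
    parser = argparse.ArgumentParser(argument_default=argparse.SUPPRESS)
    parser.add_argument("--model_path", type=str)
    parser.add_argument("--score", type=float)
    return parser


no_flags = vars(build_parser().parse_args([]))
with_flag = vars(build_parser().parse_args(["--score", "0.5"]))

cfg_default = Config(**no_flags)   # nothing overridden
cfg_custom = Config(**with_flag)   # only score overridden
```

Because absent flags never appear in the namespace, they cannot overwrite a default with `None`. The override only takes effect when the destination name of the flag is the same as the key in the defaults dictionary.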

The code for yolo.py is:

    # -*- coding: utf-8 -*-
    """
    Class definition of YOLO_v3 style detection model on image and video
    """

    import colorsys
    import os
    from timeit import default_timer as timer

    import numpy as np
    from keras import backend as K
    from keras.models import load_model
    from keras.layers import Input
    from keras.utils import multi_gpu_model
    from PIL import Image, ImageFont, ImageDraw

    from yolo3.model import yolo_eval, yolo_body, tiny_yolo_body
    from yolo3.utils import letterbox_image

    class YOLO(object):
        _defaults = {
            "model_path": 'model_data/yolo.h5',
            "anchors_path": 'model_data/yolo_anchors.txt',
            "classes_path": 'model_data/coco_classes.txt',
            "score": 0.3,
            "iou": 0.45,
            "model_image_size": (416, 416),
            "gpu_num": 1,
        }

        @classmethod
        def get_defaults(cls, n):
            if n in cls._defaults:
                return cls._defaults[n]
            else:
                return "Unrecognized attribute name '" + n + "'"

        def __init__(self, **kwargs):
            self.__dict__.update(self._defaults)  # set up default values
            self.__dict__.update(kwargs)  # and update with user overrides
            self.class_names = self._get_class()
            self.anchors = self._get_anchors()
            self.sess = K.get_session()
            self.boxes, self.scores, self.classes = self.generate()
            # Note: the assignments below run after the classes, anchors, and model
            # have already been loaded, so they do not change what was loaded.
            self.model_path = 'model_data/yolo.h5'
            self.anchors_path = 'model_data/yolo_anchors.txt'
            self.classes_path = 'model_data/voc_class.txt'
            self.gpu_num = 1
            self.model_image_size = (416, 416)

        def _get_class(self):
            classes_path = os.path.expanduser(self.classes_path)
            with open(classes_path) as f:
                class_names = f.readlines()
            class_names = [c.strip() for c in class_names]
            return class_names

        def _get_anchors(self):
            anchors_path = os.path.expanduser(self.anchors_path)
            with open(anchors_path) as f:
                anchors = f.readline()
            anchors = [float(x) for x in anchors.split(',')]
            return np.array(anchors).reshape(-1, 2)

        def generate(self):
            model_path = os.path.expanduser(self.model_path)
            assert model_path.endswith('.h5'), 'Keras model or weights must be a .h5 file.'

            # Load model, or construct model and load weights.
            num_anchors = len(self.anchors)
            num_classes = len(self.class_names)
            is_tiny_version = num_anchors == 6  # default setting
            try:
                self.yolo_model = load_model(model_path, compile=False)
            except Exception:
                self.yolo_model = tiny_yolo_body(Input(shape=(None, None, 3)), num_anchors // 2, num_classes) \
                    if is_tiny_version else yolo_body(Input(shape=(None, None, 3)), num_anchors // 3, num_classes)
                self.yolo_model.load_weights(self.model_path)  # make sure model, anchors and classes match
            else:
                assert self.yolo_model.layers[-1].output_shape[-1] == \
                    num_anchors / len(self.yolo_model.output) * (num_classes + 5), \
                    'Mismatch between model and given anchor and class sizes'

            print('{} model, anchors, and classes loaded.'.format(model_path))

            # Generate colors for drawing bounding boxes.
            hsv_tuples = [(x / len(self.class_names), 1., 1.)
                          for x in range(len(self.class_names))]
            self.colors = list(map(lambda x: colorsys.hsv_to_rgb(*x), hsv_tuples))
            self.colors = list(
                map(lambda x: (int(x[0] * 255), int(x[1] * 255), int(x[2] * 255)),
                    self.colors))
            np.random.seed(10101)  # Fixed seed for consistent colors across runs.
            np.random.shuffle(self.colors)  # Shuffle colors to decorrelate adjacent classes.
            np.random.seed(None)  # Reset seed to default.

            # Generate output tensor targets for filtered bounding boxes.
            self.input_image_shape = K.placeholder(shape=(2, ))
            if self.gpu_num >= 2:
                self.yolo_model = multi_gpu_model(self.yolo_model, gpus=self.gpu_num)
            boxes, scores, classes = yolo_eval(self.yolo_model.output, self.anchors,
                                               len(self.class_names), self.input_image_shape,
                                               score_threshold=self.score, iou_threshold=self.iou)
            return boxes, scores, classes

        def detect_image(self, image):
            """Run detection and return the raw results; the GUI does the drawing itself."""
            start = timer()

            if self.model_image_size != (None, None):
                assert self.model_image_size[0] % 32 == 0, 'Multiples of 32 required'
                assert self.model_image_size[1] % 32 == 0, 'Multiples of 32 required'
                boxed_image = letterbox_image(image, tuple(reversed(self.model_image_size)))
            else:
                new_image_size = (image.width - (image.width % 32),
                                  image.height - (image.height % 32))
                boxed_image = letterbox_image(image, new_image_size)
            image_data = np.array(boxed_image, dtype='float32')

            print(image_data.shape)
            image_data /= 255.
            image_data = np.expand_dims(image_data, 0)  # Add batch dimension.

            out_boxes, out_scores, out_classes = self.sess.run(
                [self.boxes, self.scores, self.classes],
                feed_dict={
                    self.yolo_model.input: image_data,
                    self.input_image_shape: [image.size[1], image.size[0]],
                    K.learning_phase(): 0
                })

            print('Found {} boxes for {}'.format(len(out_boxes), 'img'))

            # Font size and box thickness scale with the image size.
            font = ImageFont.truetype(font='font/FiraMono-Medium.otf',
                                      size=np.floor(3e-2 * image.size[1] + 0.5).astype('int32'))
            thickness = (image.size[0] + image.size[1]) // 300

            end = timer()
            calculate_time = end - start
            print(calculate_time)
            # The input image is returned unmodified as the first element.
            return (image, out_boxes, out_scores, out_classes, font, thickness,
                    calculate_time, self.class_names, self.colors)

        def close_session(self):
            self.sess.close()

        def detect_image2(self, image):
            """Run detection, draw all boxes on the image, and return it (used for video)."""
            start = timer()

            if self.model_image_size != (None, None):
                assert self.model_image_size[0] % 32 == 0, 'Multiples of 32 required'
                assert self.model_image_size[1] % 32 == 0, 'Multiples of 32 required'
                boxed_image = letterbox_image(image, tuple(reversed(self.model_image_size)))
            else:
                new_image_size = (image.width - (image.width % 32),
                                  image.height - (image.height % 32))
                boxed_image = letterbox_image(image, new_image_size)
            image_data = np.array(boxed_image, dtype='float32')

            print(image_data.shape)
            image_data /= 255.
            image_data = np.expand_dims(image_data, 0)  # Add batch dimension.

            out_boxes, out_scores, out_classes = self.sess.run(
                [self.boxes, self.scores, self.classes],
                feed_dict={
                    self.yolo_model.input: image_data,
                    self.input_image_shape: [image.size[1], image.size[0]],
                    K.learning_phase(): 0
                })

            print('Found {} boxes for {}'.format(len(out_boxes), 'img'))

            font = ImageFont.truetype(font='font/FiraMono-Medium.otf',
                                      size=np.floor(3e-2 * image.size[1] + 0.5).astype('int32'))
            thickness = (image.size[0] + image.size[1]) // 300

            for i, c in reversed(list(enumerate(out_classes))):
                predicted_class = self.class_names[c]
                box = out_boxes[i]
                score = out_scores[i]

                label = '{} {:.2f}'.format(predicted_class, score)
                draw = ImageDraw.Draw(image)
                # Note: ImageDraw.textsize() was removed in Pillow 10; use textbbox() there.
                label_size = draw.textsize(label, font)

                top, left, bottom, right = box
                top = max(0, np.floor(top + 0.5).astype('int32'))
                left = max(0, np.floor(left + 0.5).astype('int32'))
                bottom = min(image.size[1], np.floor(bottom + 0.5).astype('int32'))
                right = min(image.size[0], np.floor(right + 0.5).astype('int32'))
                print(label, (left, top), (right, bottom))

                if top - label_size[1] >= 0:
                    text_origin = np.array([left, top - label_size[1]])
                else:
                    text_origin = np.array([left, top + 1])

                # My kingdom for a good redistributable image drawing library.
                for t in range(thickness):  # separate name so the outer index i is not shadowed
                    draw.rectangle(
                        [left + t, top + t, right - t, bottom - t],
                        outline=self.colors[c])
                draw.rectangle(
                    [tuple(text_origin), tuple(text_origin + label_size)],
                    fill=self.colors[c])
                draw.text(tuple(text_origin), label, fill=(0, 0, 0), font=font)
                del draw

            end = timer()
            print(end - start)
            return image

    def detect_video(yolo, video_path, output_path='./test.mp4'):
        """Run detection frame by frame, show it in an OpenCV window, and save the result."""
        import cv2

        vid = cv2.VideoCapture(video_path)
        if not vid.isOpened():
            raise IOError("Couldn't open webcam or video")
        video_FourCC = int(vid.get(cv2.CAP_PROP_FOURCC))
        video_fps = vid.get(cv2.CAP_PROP_FPS)
        video_size = (int(vid.get(cv2.CAP_PROP_FRAME_WIDTH)),
                      int(vid.get(cv2.CAP_PROP_FRAME_HEIGHT)))
        isOutput = output_path != ""
        out = None
        if isOutput:
            print("!!! TYPE:", type(output_path), type(video_FourCC), type(video_fps), type(video_size))
            out = cv2.VideoWriter(output_path, video_FourCC, video_fps, video_size)

        accum_time = 0
        curr_fps = 0
        fps = "FPS: ??"
        prev_time = timer()
        while True:
            return_value, frame = vid.read()
            if not return_value:
                break  # end of the video, or a read error
            image = Image.fromarray(frame)
            image = yolo.detect_image2(image)
            result = np.array(image)  # writable copy, so cv2.putText can draw on it

            # Count frames and refresh the FPS label about once per second.
            curr_time = timer()
            exec_time = curr_time - prev_time
            prev_time = curr_time
            accum_time = accum_time + exec_time
            curr_fps = curr_fps + 1
            if accum_time > 1:
                accum_time = accum_time - 1
                fps = "FPS: " + str(curr_fps)
                curr_fps = 0

            cv2.putText(result, text=fps, org=(3, 15), fontFace=cv2.FONT_HERSHEY_SIMPLEX,
                        fontScale=0.50, color=(255, 0, 0), thickness=2)
            cv2.namedWindow("result", cv2.WINDOW_NORMAL)
            cv2.imshow("result", result)
            if isOutput:
                out.write(result)
            if cv2.waitKey(1) & 0xFF == ord('q'):
                break

        # Release the capture and writer and close the preview window.
        vid.release()
        if out is not None:
            out.release()
        cv2.destroyAllWindows()
        # yolo.close_session()
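
The FPS label in `detect_video` is updated by accumulating per-frame time and flushing the frame count once more than a second has built up. The same bookkeeping can be isolated into a small class, which makes it easy to check with fixed time steps. This is a sketch only: `FpsCounter` and `tick` are hypothetical names, and the elapsed time is passed in rather than measured.

```python
class FpsCounter:
    """Accumulate frame times and refresh a label roughly once per second."""

    def __init__(self):
        self.accum_time = 0.0    # time gathered since the last label refresh
        self.frames = 0          # frames counted since the last label refresh
        self.label = "FPS: ??"   # shown until the first full second has passed

    def tick(self, elapsed):
        """Record one frame that took `elapsed` seconds and return the current label."""
        self.accum_time += elapsed
        self.frames += 1
        if self.accum_time > 1:
            # Keep the remainder so timing drift does not build up.
            self.accum_time -= 1
            self.label = "FPS: " + str(self.frames)
            self.frames = 0
        return self.label


counter = FpsCounter()
labels = [counter.tick(0.25) for _ in range(5)]
```

In the video loop, `elapsed` would be the difference between two consecutive `timer()` readings, exactly as `exec_time` is computed in the listing.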