Elasticsearch 7.3: using X-Pack to require a login for Kibana

Reposted from:

https://www.jianshu.com/p/9355bf7a72e6

Introduction

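Elasticsearch: a distributed, RESTful search and analytics engine that can handle a growing number of use cases. As the heart of the Elastic Stack, it stores your data centrally so you can discover the expected and uncover the unexpected.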

Kibana: presents your data as charts and provides an extensible user interface for configuring and managing every part of the Elastic Stack.

X-Pack: bundles many powerful features into a single package, taking the stack to a new level.

Elasticsearch configuration file (elasticsearch.yml)

```yaml
transport.host: localhost
transport.tcp.port: 9300
http.port: 9200
network.host: 0.0.0.0
bootstrap.memory_lock: false
bootstrap.system_call_filter: false
```

Run `./elasticsearch-6.2.4/bin/elasticsearch -d` (the `-d` flag starts Elasticsearch in the background).

Access it at http://localhost:9200/?pretty. You can also use the elasticsearch-head Chrome extension.

Enabling X-Pack security in Elasticsearch 7.3

Step 1: change to the Elasticsearch directory and generate a certificate with the following command:

```bash
bin/elasticsearch-certutil cert -out config/elastic-certificates.p12 -pass ""
```

Step 2: open config/elasticsearch.yml and append the following lines at the end:

```yaml
xpack.security.enabled: true
xpack.security.transport.ssl.enabled: true
xpack.security.transport.ssl.verification_mode: certificate
xpack.security.transport.ssl.keystore.path: elastic-certificates.p12
xpack.security.transport.ssl.truststore.path: elastic-certificates.p12
```

Then start Elasticsearch.

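Step 3: open a new terminal, `cd` into the Elasticsearch directory, and run `bin/elasticsearch-setup-passwords auto` to generate passwords for the built-in users automatically. To choose the passwords yourself, run `bin/elasticsearch-setup-passwords interactive` instead. Several built-in accounts get passwords; the one called `elastic` is the superuser.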

```text
[root@cmdb-server bin]# ./elasticsearch-setup-passwords auto
Initiating the setup of passwords for reserved users elastic,apm_system,kibana,logstash_system,beats_system,remote_monitoring_user.
The passwords will be randomly generated and printed to the console.
Please confirm that you would like to continue [y/N]y

Changed password for user apm_system
PASSWORD apm_system = vwMHscFAEtfqTh6hca8f

Changed password for user kibana
PASSWORD kibana = EFvC6s0tJ5DLQf0khfom

Changed password for user logstash_system
PASSWORD logstash_system = JOhNQEImNzS7JRDRdZOc

Changed password for user beats_system
PASSWORD beats_system = Rh3YIxCaaLyoCcA82K6m

Changed password for user remote_monitoring_user
PASSWORD remote_monitoring_user = FTkrJp5VPSoWiIqVVxKF

Changed password for user elastic
PASSWORD elastic = yLPivO0kca63PaAjxtQv
```
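If you run `auto` from a provisioning script, you need to capture the printed passwords. A minimal Python sketch, assuming the output format shown above (one `PASSWORD <user> = <password>` line per user); `parse_setup_passwords` is a hypothetical helper, not part of Elasticsearch:

```python
import re

# Matches lines such as "PASSWORD elastic = yLPivO0kca63PaAjxtQv"
_PASSWORD_LINE = re.compile(r"^PASSWORD\s+(\S+)\s*=\s*(\S+)\s*$")


def parse_setup_passwords(output):
    """Collect user -> password pairs from `elasticsearch-setup-passwords auto` output."""
    passwords = {}
    for line in output.splitlines():
        match = _PASSWORD_LINE.match(line.strip())
        if match:
            user, password = match.groups()
            passwords[user] = password
    return passwords


if __name__ == "__main__":
    sample = """Changed password for user kibana
PASSWORD kibana = EFvC6s0tJ5DLQf0khfom
Changed password for user elastic
PASSWORD elastic = yLPivO0kca63PaAjxtQv"""
    print(parse_setup_passwords(sample))
```

Treat the resulting dictionary as a secret: its `elastic` entry is the superuser password.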

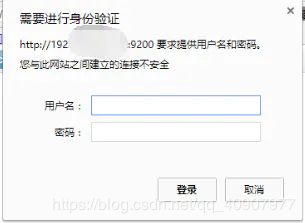

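Step 4: verify. Open your Elasticsearch URL in a browser, for example http://localhost:9200/ on the local machine. A dialog asks for a username and password; after you log in, you see the cluster information.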
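The browser dialog is HTTP Basic authentication, so the same check can be scripted. A sketch using only the Python standard library, assuming Elasticsearch still answers on plain HTTP at localhost:9200 (before HTTPS is enabled); both helper names are hypothetical:

```python
import base64
import urllib.request


def build_authenticated_request(url, username, password):
    """Build a GET request that carries an HTTP Basic Authorization header."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url, method="GET")
    request.add_header("Authorization", f"Basic {token}")
    return request


def fetch_cluster_info(url, username, password, timeout=10):
    """Send the request; bad credentials raise urllib.error.HTTPError with code 401."""
    request = build_authenticated_request(url, username, password)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    req = build_authenticated_request("http://localhost:9200/", "elastic", "your passwd")
    print(req.get_header("Authorization"))
```

With the node running, `fetch_cluster_info("http://localhost:9200/", "elastic", "<password>")` should return the same JSON the browser shows; a wrong password produces a 401 error instead.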

5. How do you configure X-Pack security for a multi-node cluster?

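The simplest approach, assuming the cluster is being deployed for the first time:

Step 1: clear the data directory;

Step 2: copy the configured files, including the certificate, to the other machines;

Step 3: adjust each node's IP, roles, and other cluster settings.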

6. Summary

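The core steps of X-Pack security configuration:

- First: set `xpack.security.enabled: true`.

- Second: generate the TLS certificate.

- Third: configure encrypted communication.

- Fourth: set the passwords.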

Configuring Kibana:

Run `vim kibana.yml` and set:

```yaml
elasticsearch.username: "elastic"
elasticsearch.password: "your passwd"
```

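Note:

Either `elastic` or `kibana` works as the username here. These are only the credentials the Kibana server uses to connect to Elasticsearch: `kibana` is the built-in user intended for exactly that, and `elastic` is a superuser, so both have sufficient privileges.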

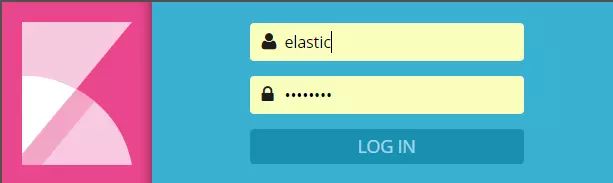

Log in:

http://IP:5601

Username: elastic (it has to be this account)

Password: the elastic password generated in step 3

Installing X-Pack on elasticsearch-6.2.4

Installation commands:

```bash
./elasticsearch-6.2.4/bin/elasticsearch-plugin install x-pack
./kibana-6.2.4-linux-x86_64/bin/kibana-plugin install x-pack
```

Answer y whenever the installer prompts you.

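If you now open http://localhost:5601 again, you are asked for a username and password. Set the passwords first by running either of these commands:

```bash
# choose each password yourself
./elasticsearch-6.2.4/bin/x-pack/setup-passwords interactive
# generate random passwords and print them to the console
./elasticsearch-6.2.4/bin/x-pack/setup-passwords auto
```

Then log in with the username elastic and the password you set (or the one printed by `auto`). The fixed default password changeme only applies to X-Pack 5.x.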

Fixing startup warnings

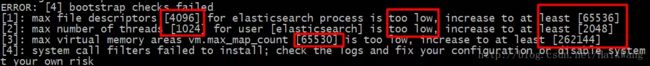

Starting Elasticsearch usually prints warnings like the following:

Elasticsearch startup warnings:

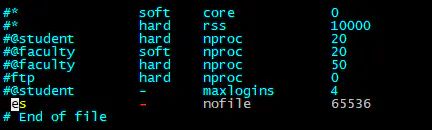

**Solution:** switch back to root, run `vim /etc/security/limits.conf`, and append the parameters at the end of the file (`es` in the screenshot is the user that runs Elasticsearch).

Then run `vim /etc/sysctl.conf` and append the parameters at the end of that file.
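The exact parameters were only shown in the screenshots. As an assumption based on Elasticsearch's documented bootstrap checks (vm.max_map_count of at least 262144 and at least 65535 open file descriptors), a small Python sketch can confirm the new limits took effect. Run it as the user that starts Elasticsearch; it is Linux-only because it reads /proc:

```python
import resource

# Minimums from Elasticsearch's bootstrap checks (assumed to match the screenshots).
MIN_MAX_MAP_COUNT = 262144
MIN_OPEN_FILES = 65535


def read_max_map_count(path="/proc/sys/vm/max_map_count"):
    """Read the kernel's vm.max_map_count (configured in /etc/sysctl.conf)."""
    with open(path, encoding="ascii") as handle:
        return int(handle.read().strip())


def check_limits(max_map_count, open_files_soft_limit):
    """Return a list of problems; an empty list means both checks pass."""
    problems = []
    if max_map_count < MIN_MAX_MAP_COUNT:
        problems.append(f"vm.max_map_count is {max_map_count}, need >= {MIN_MAX_MAP_COUNT}")
    if open_files_soft_limit < MIN_OPEN_FILES:
        problems.append(f"open files (nofile) is {open_files_soft_limit}, need >= {MIN_OPEN_FILES}")
    return problems


if __name__ == "__main__":
    soft_nofile, _hard_nofile = resource.getrlimit(resource.RLIMIT_NOFILE)
    if soft_nofile == resource.RLIM_INFINITY:
        soft_nofile = MIN_OPEN_FILES  # "unlimited" satisfies the check
    try:
        map_count = read_max_map_count()
    except OSError:
        map_count = 0  # /proc unavailable: report it as failing
    for problem in check_limits(map_count, soft_nofile):
        print("WARNING:", problem)
```

Keep in mind that limits.conf changes only apply to new login sessions, and sysctl.conf changes need `sysctl -p` (or a reboot) before the script sees them.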

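Summary: this is only a basic installation and configuration. Details vary between versions and operating systems, so if you run into problems, look for a solution in the official documentation.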

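Alternatively:

Download the X-Pack package first (for example with the Xunlei download manager), then install it directly:

```bash
[root@localhost bin]# ./kibana-plugin install x-pack-5.3.0.zip
```

Then start Kibana.

Open http://192.168.75.142:5601 in a browser.

Username: elastic

Password: changeme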

X-Pack access control for an ELK cluster

For installing X-Pack (and cracking its license), see my earlier installation guide, "Building an ELK + Filebeat + X-Pack platform".

1. Useful commands

List all users:

```bash
curl -XGET -u elastic 'localhost:9200/_xpack/security/user?pretty'
```

Once HTTP SSL is configured, you have to present the certificates:

```bash
curl -XGET -u elastic 'https://localhost3:9200/_xpack/security/user?pretty' --key client.key --cert client.cer --cacert client-ca.cer -k -v
```

List all roles:

```bash
curl -XGET -u elastic 'localhost:9200/_xpack/security/role?pretty'
```

Create the user chengen:

```bash
curl -X POST -u elastic "localhost:9200/_xpack/security/user/chengen" -H 'Content-Type: application/json' -d'
{
  "password" : "your passwd",
  "roles" : [ "admin" ],
  "full_name" : "chengen",
  "email" : "your email",
  "metadata" : {
    "intelligence" : 7
  }
}
'
```

Aside: `-u elastic` is needed because only the elastic user has permission to manage users. You can also append `?pretty` to any request to get nicely formatted output.
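The same create-user call can be made from code. A minimal standard-library Python sketch, assuming the 6.x endpoint path used above (7.x also accepts `/_security/user/<name>`); the helper name and the example field values are hypothetical. It only builds the request; `urllib.request.urlopen(request)` would actually send it:

```python
import base64
import json
import urllib.request


def build_create_user_request(base_url, admin_user, admin_password, username, body):
    """Build (without sending) a create-user request equivalent to the curl call above."""
    url = f"{base_url.rstrip('/')}/_xpack/security/user/{username}"
    request = urllib.request.Request(url, data=json.dumps(body).encode("utf-8"), method="POST")
    request.add_header("Content-Type", "application/json")
    credentials = f"{admin_user}:{admin_password}".encode("utf-8")
    request.add_header("Authorization", "Basic " + base64.b64encode(credentials).decode("ascii"))
    return request


if __name__ == "__main__":
    user_body = {
        "password": "your passwd",
        "roles": ["admin"],
        "full_name": "chengen",
        "metadata": {"intelligence": 7},
    }
    req = build_create_user_request("http://localhost:9200", "elastic", "your passwd", "chengen", user_body)
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

Building the body with `json.dumps` avoids the quoting problems of pasting JSON into a shell string.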

Change a password:

```bash
curl -X POST -u elastic "localhost:9200/_xpack/security/user/test/_password" -H 'Content-Type: application/json' -d'
{
  "password" : "your passwd"
}
'
```

This changes the password of the user whose username is test. Note that test is the username, not the full_name.

Disable, enable, or delete a user:

```bash
curl -X PUT -u elastic "localhost:9200/_xpack/security/user/test/_disable"
curl -X PUT -u elastic "localhost:9200/_xpack/security/user/test/_enable"
curl -X DELETE -u elastic "localhost:9200/_xpack/security/user/test"
```

Create the role beats_admin1, which grants `all` on the filebeat* indices and `manage`, `read`, and `index` on the .kibana* indices:

```bash
curl -X POST -u elastic 'localhost:9200/_xpack/security/role/beats_admin1' -H 'Content-Type: application/json' -d '{
  "indices" : [
    {
      "names" : [ "filebeat*" ],
      "privileges" : [ "all" ]
    },
    {
      "names" : [ ".kibana*" ],
      "privileges" : [ "manage", "read", "index" ]
    }
  ]
}'
```

Security APIs: https://www.elastic.co/guide/en/elasticsearch/reference/current/security-api.html

2. ElasticSearchHead

When you open the ElasticSearchHead browser plugin again, it asks for a password:

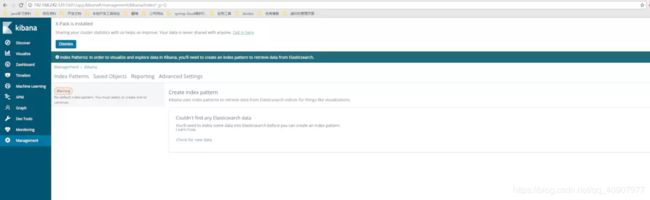

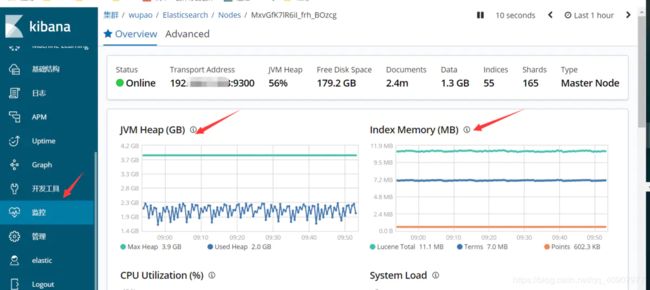

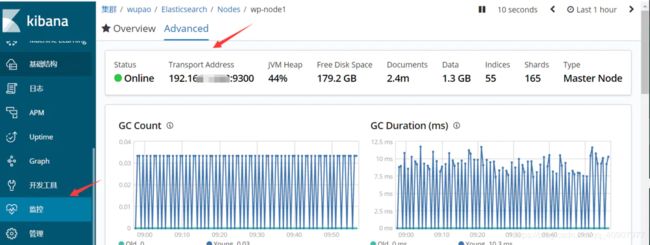

3. Kibana: when you open Kibana again, it asks for a password

The menu gains new entries such as Monitoring, where you can view cluster and node status, for example:

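Once X-Pack security is enabled, if you load a Kibana dashboard that reads data from indices you are not authorized to view, you get an error saying the index does not exist. At the time of writing, X-Pack security provides no way to control which users can load which dashboards.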

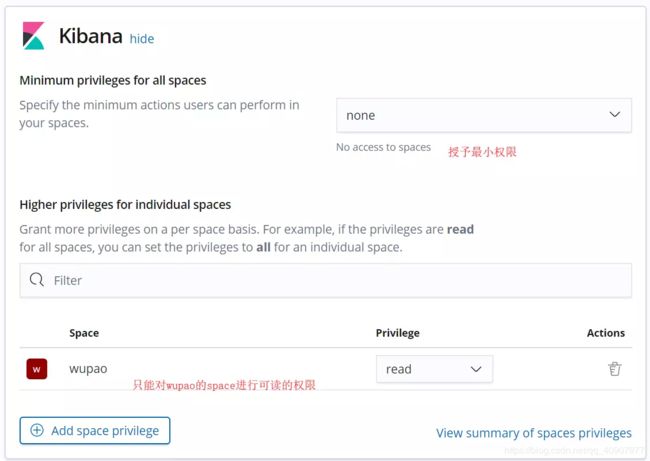

4. Role-based access control

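This feature lives under Management -> Users/Roles. Users lets you manage users and assign them roles, and roles carry the permissions. Roles lets you manage roles and grant them privileges. A role is a set of permissions, and a permission is a set of privileges. The privileges are: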

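- Cluster privileges;

- Run As privileges: let members of the new role submit requests on behalf of the selected users, with those users' permissions;

- Index privileges:

  - Indices: the indices the privileges apply to;

  - Privileges: the privileges to grant;

  - Granted Documents Query (optional): a query that limits which documents are accessible;

  - Granted Fields (optional): the fields that are accessible.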

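To open up a single index to certain users only:

Step 1: create a role;

Step 2: grant the role Elasticsearch privileges;

Step 3: grant the role Kibana privileges.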

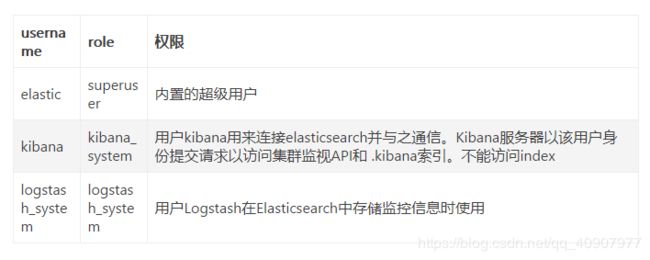
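In the role API, "Granted Fields" and "Granted Documents Query" correspond to the `field_security` and `query` keys of an index entry. A hedged Python sketch that builds such a role body for a single index; the index name `testlog` comes from section 7.3, while the field list, the `host.name` query, and the helper name are hypothetical. Field- and document-level security are licensed features and are not available on the free Basic license:

```python
import json


def single_index_role(index, privileges=("read",), fields=None, query=None):
    """Build a role body limited to one index, optionally with field/document restrictions."""
    index_entry = {"names": [index], "privileges": list(privileges)}
    if fields is not None:
        # "Granted Fields": only these fields are visible to members of the role.
        index_entry["field_security"] = {"grant": list(fields)}
    if query is not None:
        # "Granted Documents Query": only documents matching this query are visible.
        index_entry["query"] = query
    return {"indices": [index_entry]}


if __name__ == "__main__":
    role = single_index_role(
        "testlog",
        fields=["@timestamp", "message"],
        query={"term": {"host.name": "web-01"}},  # hypothetical field and value
    )
    print(json.dumps(role, indent=2))
```

The printed JSON would be sent with `POST /_xpack/security/role/<role name>`, in the same way as the beats_admin1 example; the role is then assigned to the users who should see that index.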

5. X-Pack built-in roles

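| Role | Description |
|---|---|
| ingest_admin | Grants access to manage all index templates and all ingest pipeline configurations. This role does not provide the ability to create indices; those privileges must be defined in a separate role. |
| kibana_dashboard_only_user | Grants access to Kibana dashboards and read-only access to the .kibana index. This role cannot use Kibana's editing tools. |
| kibana_system | Grants the access the Kibana system user needs to read from and write to the Kibana indices, manage index templates, and check the availability of the Elasticsearch cluster. This role grants read access to the .monitoring-* indices and read and write access to the .reporting-* indices. |
| kibana_user | Grants the minimum privileges required for a Kibana user. This role grants access to the cluster's Kibana indices and grants monitoring privileges. |
| logstash_admin | Grants access to the .logstash* indices used for managing configurations. |
| logstash_system | Grants the access the Logstash system user needs to send system-level data (such as monitoring) to Elasticsearch. Do not assign this role to users, because the granted privileges may change between versions. This role does not grant access to the logstash indices and is not suitable for use in a Logstash pipeline. |
| machine_learning_admin | Grants the manage_ml cluster privilege and read access to the .ml-* indices. |
| machine_learning_user | Grants the minimum privileges required to view X-Pack machine learning configuration, status, and results. This role grants the monitor_ml cluster privilege and read access to the .ml-notifications and .ml-anomalies* indices, which store machine learning results. |
| monitoring_user | Grants the minimum privileges required for any user of X-Pack monitoring, other than those needed to use Kibana. This role grants access to the monitoring indices. Monitoring users should also be assigned the kibana_user role. |
| remote_monitoring_agent | Grants the minimum privileges required for a remote monitoring agent to write data into this cluster. |
| reporting_user | Grants the specific privileges required for users of X-Pack reporting, other than those needed to use Kibana. This role grants access to the reporting indices. Reporting users should also be assigned the kibana_user role and a role that grants access to the data used to generate the reports. |
| superuser | Grants full access to the cluster, including all indices and data. A user with the superuser role can also manage users and roles and impersonate any other user in the system. Because this role is so permissive, take extra care when assigning it to a user. |
| watcher_admin | Grants write access to the .watches index, read access to the watch history and the triggered watches index, and allows all watcher actions to be executed. |
| watcher_user | Grants read access to the .watches index, the get watch action, and the watcher stats. |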

For the cluster and index privileges, see:

https://www.elastic.co/guide/en/elastic-stack-overview/6.7/security-privileges.html#privileges-list-cluster

6. X-Pack feature switches

| Setting | Description |
|---|---|
| xpack.security.enabled | Set to false to disable X-Pack security. Must be set in both elasticsearch.yml and kibana.yml. |
| xpack.monitoring.enabled | Set to false to disable X-Pack monitoring. Must be set in both elasticsearch.yml and kibana.yml. |
| xpack.graph.enabled | Set to false to disable X-Pack graph. Must be set in both elasticsearch.yml and kibana.yml. |
| xpack.watcher.enabled | Set to false to disable Watcher. Only needs to be set in elasticsearch.yml. |
| xpack.reporting.enabled | Set to false to disable reporting. Only needs to be set in kibana.yml. |

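After changing the configuration, restart the services (run each command from that product's own installation directory):

```bash
nohup ./bin/logstash -f config/logstash.conf >/dev/null 2>&1 &
./bin/elasticsearch -d
./bin/kibana --verbose > kibana.log 2>&1 &
./filebeat -c filebeat_secure.yml -e -v
```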

7. Configuring SSL/TLS and HTTPS for the ES cluster, Kibana, Logstash, and Filebeat

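Notes:

1. When creating the ES certificates, always pass --dns and --ip; otherwise communication fails later (see the problems recorded in 7.5).

2. For a cluster, copy the elastic-stack-ca.p12 generated on es1 to es2 and es3, and on each of them generate a new elastic-certificates.p12 that carries that node's DNS name and IP.

3. When X-Pack security is installed on a cluster, certificate-based encryption must be configured; otherwise the cluster cannot start normally.

4. You can follow my steps below to set up the certificates, or refer to https://www.elastic.co/cn/blog/configuring-ssl-tls-and-https-to-secure-elasticsearch-kibana-beats-and-logstash#run-filebeat. I only found that article after finishing my configuration and have not tried it yet; its way of creating certificates looks simpler, so feel free to try it.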

7.1 ES cluster security configuration

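Create the certificates with elasticsearch-certutil:

```bash
bin/elasticsearch-certutil ca    # creates elastic-stack-ca.p12
bin/elasticsearch-certutil cert --ca config/certs/elastic-stack-ca.p12 --dns 192.168.100.203 --ip 192.168.100.203    # creates elastic-certificates.p12
```

Create a certs folder under config and put elastic-stack-ca.p12 and elastic-certificates.p12 in it. The CA file must already be in config/certs when the second command runs, because that command reads it from config/certs/elastic-stack-ca.p12.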

Transport-layer configuration (step 1: authentication between cluster nodes):

```yaml
xpack.security.transport.ssl.enabled: true
xpack.security.transport.ssl.verification_mode: certificate
xpack.security.transport.ssl.keystore.path: certs/elastic-certificates.p12
xpack.security.transport.ssl.truststore.path: certs/elastic-certificates.p12
```

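Set passwords for the built-in users (step 2; do this before configuring HTTP SSL, because the password-setup command talks to the cluster over plain HTTP):

```bash
bin/elasticsearch-setup-passwords interactive
```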

HTTP SSL encryption (step 3: enable HTTPS for the ES cluster)

For HTTP traffic, Elasticsearch nodes act only as servers. Specify the following in elasticsearch.yml:

```yaml
xpack.security.http.ssl.enabled: true
xpack.security.http.ssl.keystore.path: certs/elastic-certificates.p12
xpack.security.http.ssl.truststore.path: certs/elastic-certificates.p12
xpack.security.http.ssl.client_authentication: optional
```

In summary, elasticsearch.yml contains the following:

```yaml
xpack.security.enabled: true
xpack.security.transport.ssl.enabled: true
xpack.security.transport.ssl.verification_mode: certificate
xpack.security.transport.ssl.keystore.path: certs/elastic-certificates.p12
xpack.security.transport.ssl.truststore.path: certs/elastic-certificates.p12
xpack.security.http.ssl.enabled: true
xpack.security.http.ssl.keystore.path: certs/elastic-certificates.p12
xpack.security.http.ssl.truststore.path: certs/elastic-certificates.p12
xpack.security.http.ssl.client_authentication: optional
```
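A single typo in these keys can keep a node from starting or silently leave HTTPS off, so it can help to check each node's file against the summary. A Python sketch that only understands the flat `key: value` form used above (not nested YAML); the function names are hypothetical:

```python
# Settings from the summary above whose values must match exactly.
REQUIRED_SETTINGS = {
    "xpack.security.enabled": "true",
    "xpack.security.transport.ssl.enabled": "true",
    "xpack.security.transport.ssl.verification_mode": "certificate",
    "xpack.security.http.ssl.enabled": "true",
}
# Settings from the summary that only need a non-empty value.
PATH_SETTINGS = (
    "xpack.security.transport.ssl.keystore.path",
    "xpack.security.transport.ssl.truststore.path",
    "xpack.security.http.ssl.keystore.path",
    "xpack.security.http.ssl.truststore.path",
)


def parse_flat_settings(text):
    """Parse flat 'key: value' lines; comments and blank lines are skipped."""
    settings = {}
    for raw_line in text.splitlines():
        line = raw_line.split("#", 1)[0].strip()
        if not line or ":" not in line:
            continue
        key, value = line.split(":", 1)
        settings[key.strip()] = value.strip().strip('"')
    return settings


def find_problems(settings):
    """Return human-readable problems; an empty list means the summary settings are present."""
    problems = []
    for key, expected in REQUIRED_SETTINGS.items():
        if settings.get(key) != expected:
            problems.append(f"{key} should be {expected}, found {settings.get(key)!r}")
    for key in PATH_SETTINGS:
        if not settings.get(key):
            problems.append(f"{key} is missing")
    return problems
```

For example, `find_problems(parse_flat_settings(open("config/elasticsearch.yml").read()))` returns an empty list for a node configured exactly as above.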

After these changes, restart the ES cluster. From now on the cluster must be accessed over HTTPS; in elasticsearch-head, for example, connect to https://192.168.100.203:9200/.

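Error:

If elasticsearch.yml configures `xpack.security.authc.realms.pki1.type: pki`, then after Kibana starts, only built-in accounts such as elastic and kibana can log in to Kibana; custom accounts created later, such as test, cannot.

Fix: remove `xpack.security.authc.realms.pki1.type: pki` and logins work normally again. (Once any realm is configured explicitly, only the configured realms are enabled, so the native realm that stores API-created users is switched off; the built-in users keep working because they belong to the always-enabled reserved realm.)

Reference: A step-by-step guide to enabling security, TLS/SSL, and PKI authentication in Elasticsearch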

7.2 kibana.yml security configuration

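Configure certificate-based communication from Kibana to ES (this generates elastic-stack-ca.pem):

```bash
cd /elk/elasticsearch-6.7.0
openssl pkcs12 -in config/certs/elastic-stack-ca.p12 -clcerts -nokeys -chain -out elastic-stack-ca.pem
```

Create a certs directory under Kibana's config directory and put elastic-stack-ca.pem in it.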

Configure HTTPS access to Kibana

Create Kibana's SSL certificate. This generates kibana.key and kibana.crt; create an ssl folder under certs and put them there:

```bash
openssl req -subj '/CN=192.168.100.203/' -x509 -days $((100 * 365)) -batch -nodes -newkey rsa:2048 -keyout kibana.key -out kibana.crt
```

In summary, kibana.yml contains:

```yaml
# note: the ES address uses https
elasticsearch.hosts: ["https://192.168.100.203:9200"]
xpack.security.enabled: true
elasticsearch.username: "kibana"
elasticsearch.password: "your passwd"

# serve Kibana over HTTPS
server.ssl.enabled: true
server.ssl.certificate: /elk/kibana-6.7.0/config/certs/ssl/kibana.crt
server.ssl.key: /elk/kibana-6.7.0/config/certs/ssl/kibana.key

# trust communication between Kibana and ES
elasticsearch.ssl.certificateAuthorities: config/certs/old/elastic-stack-ca.pem
elasticsearch.ssl.verificationMode: certificate
```

References:

Security hardening for ELK

Kibana and Logstash with X-Pack

https://www.elastic.co/guide/en/kibana/6.3/configuring-tls.html

7.3 Logstash security configuration

Configure communication between Logstash and the ES cluster

Put the elastic-stack-ca.pem generated in 7.2 into /elk/logstash-6.7.0/config/certs.

In logstash.yml, configure:

```yaml
xpack.monitoring.enabled: true
xpack.monitoring.elasticsearch.username: elastic
xpack.monitoring.elasticsearch.password: your passwd
xpack.monitoring.elasticsearch.hosts: ["https://192.168.100.203:9200","https://192.168.100.202:9200","https://192.168.100.201:9200"]
xpack.monitoring.elasticsearch.ssl.certificate_authority: "/elk/logstash-6.7.0/config/certs/elastic-stack-ca.pem"
xpack.monitoring.elasticsearch.ssl.verification_mode: certificate
xpack.monitoring.elasticsearch.sniffing: false
xpack.monitoring.collection.interval: 60s
xpack.monitoring.collection.pipeline.details.enabled: true
```

Configure communication between Logstash and Filebeat

Generate a zip file containing instance.crt and instance.key:

```bash
bin/elasticsearch-certutil cert --pem --ca config/certs/elastic-stack-ca.p12 --dns 192.168.100.203 --ip 192.168.100.203 ## generates a zip file containing instance.crt and instance.key
```

Convert the key to PKCS#8, the format the Logstash beats input expects, producing instance.pkcs8.key:

```bash
openssl pkcs8 -in instance.key -topk8 -nocrypt -out instance.pkcs8.key
```

logstash-sample.conf configuration:

```
input {
  beats {
    port => 5044
    ssl => true
    ssl_key => "/elk/logstash-6.7.0/config/certs/instance/instance.pkcs8.key"
    ssl_certificate => "/elk/logstash-6.7.0/config/certs/instance/instance.crt"
  }
}

output {
  elasticsearch {
    user => "elastic"
    password => "your passwd"
    ssl => true
    ssl_certificate_verification => true
    cacert => "/elk/logstash-6.7.0/config/certs/elastic-stack-ca.pem"
    hosts => ["https://192.168.100.203:9200/","https://192.168.100.202:9200/","https://192.168.100.201:9200/"]
    index => "testlog"
  }
}
```

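The output section sends data to the ES cluster over TLS, and the input section encrypts the data arriving from Filebeat.

If Logstash starts normally, the configuration is correct.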

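7.4 Filebeat security configuration

Copy the CA certificate used by Logstash, elastic-stack-ca.pem, to the machine running Filebeat, for example to /opt/filebeat/certs/.

Edit the output section of filebeat.yml:

```yaml
output.logstash:
  # The Logstash hosts
  hosts: ["192.168.100.203:5044"]
  ssl.certificate_authorities: ["/opt/filebeat/certs/elastic-stack-ca.pem"]
```

Restart Filebeat. If the data shows up in Kibana, the configuration works.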

7.5 Troubleshooting

1 "Host name '192.168.100.203' does not match the certificate subject provided by the peer"

Error message:

```text
[2019-06-24T13:57:08,032][ERROR][logstash.licensechecker.licensereader] Unable to retrieve license information from license server {:message=>"Host name '192.168.100.203' does not match the certificate subject provided by the peer (CN=instance)"}
```

Fix: if the certificates are created without --dns and --ip, ES and Logstash run into this error when communicating over TLS.

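PS: certificate problems drove me crazy; setting up encryption for the ES cluster plus Logstash, Kibana, and Filebeat took me two days. The working command:

```bash
bin/elasticsearch-certutil cert --ca config/certs/elastic-stack-ca.p12 --dns 192.168.100.203 --ip 192.168.100.203
```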

2 Another certificate problem, similar to 1

Because the ELK stack runs on an internal network and logs from an external machine (IP: 1.2.3.4) must be sent to it, frp was used for NAT traversal. When the external machine connected through the external IP and port, it failed with:

```text
2019-07-01T16:29:17.253+0800 ERROR pipeline/output.go:100 Failed to connect to backoff(async(tcp://1.2.3.4:5044)): x509: certificate is valid for 192.168.100.203, not 118.25.198.208
2019-07-01T16:29:17.253+0800 INFO pipeline/output.go:93 Attempting to reconnect to backoff(async(tcp://1.2.3.4:5044)) with 1 reconnect attempt(s)
```

When generating the zip file in 7.3 (configuring communication between Logstash and Filebeat), add the external IP to both --dns and --ip:

```bash
bin/elasticsearch-certutil cert --pem --ca config/certs/elastic-stack-ca.p12 --dns 192.168.100.203,1.2.3.4 --ip 192.168.100.203,1.2.3.4 ## generates a zip file containing instance.crt and instance.key
```
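Why the external IP has to go into `--ip` in particular: Filebeat is written in Go, and Go's certificate verification compares an IP address only against the certificate's IP SANs and a host name only against its DNS SANs. The following Python function is a simplified model of that rule (single-label `*.` wildcards only), useful for reasoning about which `--dns`/`--ip` values a certificate needs; it is not Filebeat's actual code, and the function name is hypothetical:

```python
import ipaddress


def host_matches_certificate(host, dns_names, ip_addresses):
    """Simplified model of TLS hostname verification against a certificate's SANs.

    IP literals are compared only with IP SANs (the --ip values); host names
    only with DNS SANs (the --dns values), allowing one leading "*." label.
    """
    try:
        target_ip = ipaddress.ip_address(host)
    except ValueError:
        target_ip = None
    if target_ip is not None:
        return any(target_ip == ipaddress.ip_address(ip) for ip in ip_addresses)
    host = host.lower().rstrip(".")
    for name in dns_names:
        name = name.lower().rstrip(".")
        if name == host:
            return True
        if name.startswith("*.") and "." in host and host.split(".", 1)[1] == name[2:]:
            return True
    return False
```

Under this model, a certificate created with only `--dns 1.2.3.4` would still be rejected for connections to 1.2.3.4; the `--ip 1.2.3.4` entry is what makes the external address valid.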

The remaining steps are the same. Only Logstash's instance.pkcs8.key and instance.crt need to be replaced, as configured below; Filebeat's CA certificate does not need to change, and the data then arrives normally.

```
input {
  beats {
    port => 5044
    ssl => true
    ssl_key => "/elk/logstash-6.7.0/config/certs/instance/instance.pkcs8.key"
    ssl_certificate => "/elk/logstash-6.7.0/config/certs/instance/instance.crt"
  }
}
```

References: https://www.elastic.co/guide/en/elasticsearch/reference/6.7/configuring-tls.html#node-certificates

https://discuss.elastic.co/t/certificates-and-keys-for-kibana-and-logstash-with-x-pack/150390

Pay attention to how the certificates are created.


3 “Could not index event to Elasticsearch”

Error message:

```text
][WARN ][logstash.outputs.elasticsearch] Could not index event to Elasticsearch. {:status=>404, :action=>["index", {:_id=>nil, :_index=>"logstash-2017.07.05", :_type=>"syslog", :_routing=>nil}, 2017-07-05T03:50:10.577Z 10.91.142.103 <179>Jul 5 09:20:10 10.91.126.1 TMNX: 45006214 Base PORT-MINOR-etherAlarmSet-2017 [Port 5/2/4]: Alarm Remote Fault Set], :response=>{"index"=>{"_index"=>"logstash-2017.07.05", "_type"=>"syslog", "_id"=>nil, "status"=>404, "error"=>{"type"=>"index_not_found_exception", "reason"=>"no such index and [action.auto_create_index] ([.security,.monitoring*,.watches,.triggered_watches,.watcher-history*,.ml*]) doesn't match", "index_uuid"=>"na", "index"=>"logstash-2017.07.05"}}}}
```

Fix:

Remove this restriction from elasticsearch.yml:

```yaml
action.auto_create_index: ".security*,.monitoring*,.watches,.triggered_watches,.watcher-history*,.ml*"
```
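The error message lists the active `action.auto_create_index` patterns, and `logstash-2017.07.05` matches none of them, so Elasticsearch refused to create the index. A Python sketch approximating the documented matching rule (comma-separated patterns with `*` wildcards, optional `+`/`-` prefixes, first match wins, no match means refused); it is a model for reasoning, not Elasticsearch's own code, and the function names are hypothetical:

```python
import re


def _wildcard_regex(pattern):
    """Translate a simple Elasticsearch pattern (only '*' is special) into a regex."""
    return re.compile("^" + ".*".join(re.escape(part) for part in pattern.split("*")) + "$")


def auto_create_allowed(index, setting):
    """Approximate whether action.auto_create_index lets `index` be created automatically."""
    value = setting.strip()
    if value.lower() in ("true", "false"):
        return value.lower() == "true"
    for raw_pattern in value.split(","):
        pattern = raw_pattern.strip()
        if not pattern:
            continue
        allowed = not pattern.startswith("-")
        if pattern[0] in "+-":
            pattern = pattern[1:]
        if _wildcard_regex(pattern).match(index):
            return allowed  # the first matching pattern decides
    return False  # no pattern matched: refused, as in the 404 above
```

Instead of deleting the line, you could also append a pattern such as `logstash-*` to it, which keeps the other restrictions in place.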

Reference: https://discuss.elastic.co/t/index-404-error-in-logstash/91830

4. When building the ES cluster, every node must be able to join it properly; otherwise errors like the following appear:

```text
[wp-node1] not enough master nodes discovered during pinging (found [[Candidate{node={wp-node1}{MxvGfK7lR6iIwww_frh_BOzcg}{yRKFsh2PT3aEzjI6IwwwG7n1Q}{192.168.100.203}{192.168.100.203:9300}{ml.machine_memory=16603000832, xpack.installed=true, ml.max_open_jobs=20,

][INFO ][o.e.d.z.ZenDiscovery ] [wp-node3] failed to send join request to master [{wp-node1}{MxvGfK7lR6iI_frh_BOzcg}{7Bc5VHF9QzCs-G2ERoAYaQ}{192.168.100.203}{192.168.100.203:9300}{ml.machine_memory=16603000832,

[wp-node2] failed to send join request to master [{wp-node1}{MxvGfK7lR6iI_frh_BOzcg}{M_VIMWHFSPmZT9n2D2_fZQ}{192.168.100.203}{192.168.100.203:9300}{ml.machine_memory=16603000832, ml.max_open_jobs=20, xpack.installed=true, ml.enabled=true}], reason [RemoteTransportException[[wp-node1][192.168.100.203:9300][internal:discovery/zen/join]]; nested: IllegalArgumentException[can't add node {wp-node2}{MxvGfK7lR6iI_frh_BOzcg}{gO2EuagySwq9d5AfDTK6qg}{192.168.1.202}{192.168.1.202:9300}{ml.machine_memory=12411887616, ml.max_open_jobs=20, xpack.installed=true, ml.enabled=true}, found existing node } with the same id but is a different node instance]; ]
```

In the last message, wp-node2 has the same node ID (MxvGfK7lR6iI_frh_BOzcg) as wp-node1. Elasticsearch stores the node ID in the data directory, so this usually means the data directory was copied from another node; clearing it, as in step 1 of section 5, resolves the conflict.

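5. Once HTTPS is enabled, curl has to present the certificates to access the ES cluster, for example:

```bash
curl 'https://192.168.100.203:9200/_xpack/security/_authenticate?pretty' \
  --key client.key --cert client.cer --cacert client-ca.cer -k -v
```

Accessing Logstash:

```bash
curl -v --cacert ca.crt https://192.168.100.203:5044
```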
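Note that `-k` makes curl skip server verification entirely, so the `--cacert` check only really happens without it. A Python sketch of a verified handshake with the standard `ssl` module, assuming the CA and client files named above are PEM files; the helper names are hypothetical:

```python
import socket
import ssl


def make_client_context(cafile=None, certfile=None, keyfile=None):
    """TLS client context that verifies the server certificate and host name.

    certfile/keyfile are only needed when the server asks for a client
    certificate, as with the --cert/--key options in the curl example.
    """
    context = ssl.create_default_context(cafile=cafile)
    if certfile:
        context.load_cert_chain(certfile, keyfile)
    return context


def describe_server_certificate(host, port, context, timeout=5.0):
    """Complete a verified handshake and return the peer certificate's subject and SANs."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            cert = tls.getpeercert()
    return {"subject": cert.get("subject"), "subjectAltName": cert.get("subjectAltName")}
```

For example, `describe_server_certificate("192.168.100.203", 9200, make_client_context("client-ca.cer", "client.cer", "client.key"))` should list the SANs created with `--dns`/`--ip`; a host mismatch raises `ssl.SSLCertVerificationError` instead of being silently ignored.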

6. Logstash logs errors like the following:

```text
[2019-07-03T18:18:29,035][ERROR][logstash.outputs.elasticsearch] Attempted to send a bulk request to elasticsearch' but Elasticsearch appears to be unreachable or down! {:error_message=>"Elasticsearch Unreachable: [https://elastic:[email protected]:9200/][Manticore::ConnectTimeout] Read timed out", :class=>"LogStash::Outputs::ElasticSearch::HttpClient::Pool::HostUnreachableError", :will_retry_in_seconds=>2}

[2019-07-03T18:18:29,035][ERROR][logstash.outputs.elasticsearch] Attempted to send a bulk request to elasticsearch' but Elasticsearch appears to be unreachable or down! {:error_message=>"Elasticsearch Unreachable: [https://elastic:[email protected]:9200/][Manticore::ConnectTimeout] Read timed out", :class=>"LogStash::Outputs::ElasticSearch::HttpClient::Pool::HostUnreachableError", :will_retry_in_seconds=>2}

[2019-07-03T18:18:29,515][WARN ][logstash.outputs.elasticsearch] Restored connection to ES instance {:url=>"https://elastic:[email protected]:9200/"}
```

Cause: the configuration itself is correct, since some of the data does reach Elasticsearch; the timeouts appear when a large volume of data is sent.

See also:

http://www.51niux.com/?id=210

https://www.elastic.co/guide/en/beats/filebeat/current/configuring-ssl-logstash.html

Reference links:

https://www.jianshu.com/p/9355bf7a72e6

Installing Elasticsearch + Kibana + X-Pack: https://www.jianshu.com/p/54aa726f64d1

X-Pack access control for an ELK cluster: https://www.jianshu.com/p/23dbe4cc638e