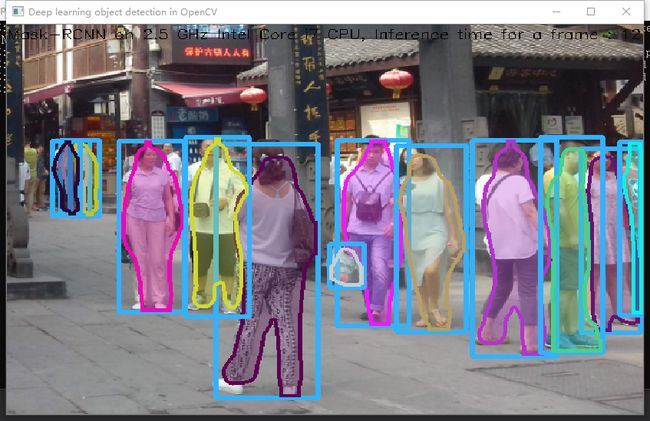

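Yesterday someone asked me about running a Mask R-CNN model, and I realized I hadn't used OpenCV to run a trained Mask R-CNN model in about three months. So tonight I rebuilt OpenCV 4 and ran an example to try it out.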

```cpp
#include <fstream>
#include <sstream>
#include <iostream>
#include <string.h>

#include <opencv2/dnn.hpp>
#include <opencv2/imgproc.hpp>
#include <opencv2/highgui.hpp>

using namespace cv;
using namespace dnn;
using namespace std;

RNG rng1;

// Initialize the parameters
float confThreshold = 0.5; // Confidence threshold
float maskThreshold = 0.3; // Mask threshold

//vector<string> classes;
//vector<Scalar> colors;

// Draw the predicted bounding box
void drawBox(Mat& frame, int classId, float conf, Rect box, Mat& objectMask);

// Postprocess the neural network's output for each frame
void postprocess(Mat& frame, const vector<Mat>& outs);

int main()
{
    // Give the configuration and weight files for the model
    //String textGraph = "./mask_rcnn_inception_v2_coco_2018_01_28/mask_rcnn_inception_v2_coco_2018_01_28.pbtxt";
    //String modelWeights = "./mask_rcnn_inception_v2_coco_2018_01_28/frozen_inference_graph.pb";
    String modelWeights = "E:\\Opencv\\model_1\\mask_rcnn_inception_v2_coco_2018_01_28\\frozen_inference_graph.pb";
    String textGraph = "E:\\Opencv\\model_1\\mask_rcnn_inception_v2_coco_2018_01_28\\mask_rcnn_inception_v2_coco_2018_01_28.pbtxt";

    // Load the network
    Net net = readNetFromTensorflow(modelWeights, textGraph);
    net.setPreferableBackend(DNN_BACKEND_OPENCV);
    net.setPreferableTarget(DNN_TARGET_CPU);

    // Open a video file or an image file or a camera stream.
    VideoCapture cap(0); // change the index to match your camera's device id
    //VideoWriter video;
    Mat frame, blob;

    // Create a window
    static const string kWinName = "Deep learning object detection in OpenCV";
    namedWindow(kWinName, WINDOW_NORMAL);

    // Process a single image (grabbing frames from the camera is commented out)
    {
        // get frame from the video
        //cap >> frame;
        frame = cv::imread("D:\\image\\5.png");

        // Stop the program if the image could not be read
        if (frame.empty())
        {
            cout << "Could not read the input image" << endl;
            return -1;
        }

        // Create a 4D blob from a frame.
        blobFromImage(frame, blob, 1.0, Size(frame.cols, frame.rows), Scalar(), true, false);
        //blobFromImage(frame, blob);

        //Sets the input to the network
        net.setInput(blob);

        // Runs the forward pass to get output from the output layers
        std::vector<String> outNames(2);
        outNames[0] = "detection_out_final";
        outNames[1] = "detection_masks";
        vector<Mat> outs;
        net.forward(outs, outNames);

        // Extract the bounding box and mask for each of the detected objects
        postprocess(frame, outs);

        // Put efficiency information. The function getPerfProfile returns the overall time for inference(t)
        // and the timings for each of the layers(in layersTimes)
        vector<double> layersTimes;
        double freq = getTickFrequency() / 1000;
        double t = net.getPerfProfile(layersTimes) / freq;
        string label = format("Mask-RCNN on 2.5 GHz Intel Core i7 CPU, Inference time for a frame : %0.0f ms", t);
        putText(frame, label, Point(0, 15), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 0));

        // Write the frame with the detection boxes
        Mat detectedFrame;
        frame.convertTo(detectedFrame, CV_8U);
        imshow(kWinName, frame);
    }
    //cap.release();
    waitKey(0);
    return 0;
}

// For each frame, extract the bounding box and mask for each detected object
void postprocess(Mat& frame, const vector<Mat>& outs)
{
    Mat outDetections = outs[0];
    Mat outMasks = outs[1];

    // Output size of masks is NxCxHxW where
    // N - number of detected boxes
    // C - number of classes (excluding background)
    // HxW - segmentation shape
    const int numDetections = outDetections.size[2];
    const int numClasses = outMasks.size[1];

    // Each detection row holds 7 values: [batchId, classId, score, left, top, right, bottom]
    outDetections = outDetections.reshape(1, outDetections.total() / 7);
    for (int i = 0; i < numDetections; ++i)
    {
        float score = outDetections.at<float>(i, 2);
        if (score > confThreshold)
        {
            // Extract the bounding box (coordinates are normalized to [0, 1])
            int classId = static_cast<int>(outDetections.at<float>(i, 1));
            int left = static_cast<int>(frame.cols * outDetections.at<float>(i, 3));
            int top = static_cast<int>(frame.rows * outDetections.at<float>(i, 4));
            int right = static_cast<int>(frame.cols * outDetections.at<float>(i, 5));
            int bottom = static_cast<int>(frame.rows * outDetections.at<float>(i, 6));

            left = max(0, min(left, frame.cols - 1));
            top = max(0, min(top, frame.rows - 1));
            right = max(0, min(right, frame.cols - 1));
            bottom = max(0, min(bottom, frame.rows - 1));
            Rect box = Rect(left, top, right - left + 1, bottom - top + 1);

            // Extract the mask for the object
            Mat objectMask(outMasks.size[2], outMasks.size[3], CV_32F, outMasks.ptr<float>(i, classId));

            // Draw bounding box, colorize and show the mask on the image
            drawBox(frame, classId, score, box, objectMask);
        }
    }
}

// Draw the predicted bounding box, colorize and show the mask on the image
void drawBox(Mat& frame, int classId, float conf, Rect box, Mat& objectMask)
{
    //Draw a rectangle displaying the bounding box
    rectangle(frame, Point(box.x, box.y), Point(box.x + box.width, box.y + box.height), Scalar(255, 178, 50), 3);

    //Get the label for the class name and its confidence
    /*string label = format("%.2f", conf);
    if (!classes.empty())
    {
        CV_Assert(classId < (int)classes.size());
        label = classes[classId] + ":" + label;
    }*/

    //Display the label at the top of the bounding box
    /*int baseLine;
    Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);
    box.y = max(box.y, labelSize.height);
    rectangle(frame, Point(box.x, box.y - round(1.5*labelSize.height)), Point(box.x + round(1.5*labelSize.width), box.y + baseLine), Scalar(255, 255, 255), FILLED);
    putText(frame, label, Point(box.x, box.y), FONT_HERSHEY_SIMPLEX, 0.75, Scalar(0, 0, 0), 1);*/

    //Scalar color = colors[classId%colors.size()];
    Scalar color = Scalar(rng1.uniform(0, 255), rng1.uniform(0, 255), rng1.uniform(0, 255));

    // Resize the mask, threshold, color and apply it on the image
    resize(objectMask, objectMask, Size(box.width, box.height));
    Mat mask = (objectMask > maskThreshold);
    Mat coloredRoi = (0.3 * color + 0.7 * frame(box));
    coloredRoi.convertTo(coloredRoi, CV_8UC3);

    // Draw the contours on the image
    vector<vector<Point>> contours;
    Mat hierarchy;
    mask.convertTo(mask, CV_8U);
    findContours(mask, contours, hierarchy, RETR_CCOMP, CHAIN_APPROX_SIMPLE);
    drawContours(coloredRoi, contours, -1, color, 5, LINE_8, hierarchy, 100);
    coloredRoi.copyTo(frame(box), mask);
}
```
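The listing leaves the class-name label commented out because `classes` is never filled, so only boxes and masks are drawn. Below is a minimal sketch of how the list could be loaded and the label built. It assumes a plain-text labels file with one COCO class name per line, in the same order as the model's class ids. `loadClassNames` and `makeLabel` are hypothetical helpers, not OpenCV API, and the code uses only the standard library.

```cpp
#include <cassert>
#include <iomanip>
#include <istream>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical helper: read one class name per line from any input stream.
// Every line is kept (even blank ones) so that line index == class id.
std::vector<std::string> loadClassNames(std::istream& in)
{
    std::vector<std::string> names;
    std::string line;
    while (std::getline(in, line)) {
        // Strip the '\r' left behind by files saved with Windows line endings
        if (!line.empty() && line.back() == '\r') line.pop_back();
        names.push_back(line);
    }
    return names;
}

// Hypothetical helper: build the "name:score" label drawn above a box.
// Falls back to the bare score (two decimals, like format("%.2f", conf))
// when no class list is loaded or the id is out of range.
std::string makeLabel(const std::vector<std::string>& classes, int classId, float conf)
{
    std::ostringstream os;
    os << std::fixed << std::setprecision(2) << conf;
    if (classId >= 0 && classId < static_cast<int>(classes.size()))
        return classes[classId] + ":" + os.str();
    return os.str();
}
```

To use it in the program above, uncomment `vector<string> classes;`, open the labels file (its name is your choice, for example a hypothetical `mscoco_labels.names`) with `std::ifstream file(...)` in `main`, assign `classes = loadClassNames(file);`, and replace the first block of commented label code in `drawBox` with `string label = makeLabel(classes, classId, conf);`. Unlike the original `CV_Assert`, the fallback keeps drawing when the id and the list don't line up. Whether that is preferable is a judgment call.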

Detection is somewhat slower than in Python.
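Python's `cv2.dnn` is a binding over the same C++ DNN module. With the same backend and target, the two should therefore run at roughly the same speed, and it is worth checking how the time is measured.

The program above times a single `forward()` call, and in OpenCV DNN the first `forward()` also sets up the network, so a one-shot figure can look inflated. Comparing a Debug build of the C++ program against a Release build used by Python would also widen the gap.

The sketch below runs a few untimed warm-up calls and then averages the rest. `averageMs` is a hypothetical helper that uses only `<chrono>`.

```cpp
#include <cassert>
#include <chrono>

// Hypothetical helper: call `step` `warmup` times without timing it, then
// return the mean wall-clock time in milliseconds over `runs` further calls.
// Returns 0.0 when runs <= 0 (after still performing the warm-up calls).
template <typename Step>
double averageMs(Step&& step, int warmup, int runs)
{
    for (int i = 0; i < warmup; ++i) step();
    if (runs <= 0) return 0.0;

    const auto start = std::chrono::steady_clock::now();
    for (int i = 0; i < runs; ++i) step();
    const auto end = std::chrono::steady_clock::now();

    const std::chrono::duration<double, std::milli> elapsed = end - start;
    return elapsed.count() / runs;
}
```

In the program above, the timed step would wrap the inference calls. For example, `double ms = averageMs([&] { net.setInput(blob); net.forward(outs, outNames); }, 1, 10);` reports the average of 10 runs after one warm-up. Timing the equivalent Python loop the same way gives a like-for-like comparison.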

Run log:

```
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\videoio_registry.cpp (187) cv::`anonymous-namespace'::VideoBackendRegistry::VideoBackendRegistry VIDEOIO: Enabled backends(7, sorted by priority): FFMPEG(1000); GSTREAMER(990); INTEL_MFX(980); MSMF(970); DSHOW(960); CV_IMAGES(950); CV_MJPEG(940)
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (340) cv::impl::getPluginCandidates Found 2 plugin(s) for GSTREAMER
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (172) cv::impl::DynamicLib::libraryLoad load E:\Opencv\opencv_4_1_1_install\bin\opencv_videoio_gstreamer411_64.dll => FAILED
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\videoio\src\backend_plugin.cpp (172) cv::impl::DynamicLib::libraryLoad load opencv_videoio_gstreamer411_64.dll => FAILED
[ INFO:0] global E:\Opencv\opencv-4.1.1\modules\core\src\ocl.cpp (888) cv::ocl::haveOpenCL Initialize OpenCL runtime...
```