Python Data Analysis Case 2-1: Python Practice - Getting Started with the Scrapy Crawler Framework

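This article is a record of my own step-by-step walkthrough after watching Teacher Dazhuang's video course "Python最火爬虫框架Scrapy入门与实践" (Getting Started with Scrapy, Python's Most Popular Crawler Framework). Beginners like me are advised to follow each step hands-on.

Main topics:

1. An overview of the Scrapy framework and how data flows through it

2. Installing Scrapy and MongoDB

3. How to create a Scrapy project

4. How to parse data in a Scrapy project

5. How to get past anti-scraping measures in a Scrapy project

6. How to store scraped data in different formats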


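Scraping target:

This article scrapes and cleans the Douban movie ranking page to introduce the use of Python

![]()

Douban movie rankings

About Teacher Dazhuang:

He currently works at the AI center of a large internet company as a Python developer, mainly responsible for scraping automotive profile data, scraping data from and building interfaces for commercial promotion platforms, and scraping competitor information. Development languages: Python and AutoIt. In his projects he mainly uses requests with multithreading to scrape web systems, AutoIt to scrape desktop software, and Appium to scrape apps, and he uses Scrapy for large-volume data scraping.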

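Prerequisites:

1. Some Python basics

2. Some Linux administration basics: compiling and installing software, the yum package manager, and so on

3. Some database basics: create, read, update, delete

4. Familiarity with XPath syntax and how to use an XPath browser plugin

Code download: Python爬虫框架Scrapy入门与实践

Note:

The following values in middlewares.py must be replaced with valid ones:

request.meta['proxy'] = 'http-cla.abuyun.com:9030'

proxy_name_pass = b'H622272STYB666BW:F78990HJSS7'

If you have not purchased a proxy plan, disable this middleware before testing.

In settings.py, comment out douban.middlewares.my_proxy:

DOWNLOADER_MIDDLEWARES = {
    # 'douban.middlewares.my_proxy': 543,
}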

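Step 1: Create a project named scrapy_douban in PyCharm CE

The environment and software below must be installed first.

Environment setup and installation

A: Install Anaconda (it bundles a Python environment, Conda, numpy, pandas, and many other dependencies):

Download link 1: Anaconda download 1

Download link 2 (recommended in China): Tsinghua University open source mirror, Anaconda download

Choose the package: there are builds for Mac, Windows, and Linux, so pick the one for your machine.

For example, mine is Windows 10 64-bit: Anaconda3-5.3.1-Windows-x86_64.exe

Anaconda5.3

Download the development tool: PyCharm

Its logo:

![]()

PyCharm

Create the project: the interpreter option chosen below creates a new directory to manage third-party packages, so you may need to install the required packages manually later.

Once created, the project is generated automatically and the initial environment is set up; you can then start writing code.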

Step 2: Initialize the project in PyCharm's terminal

(The steps below were tested on Windows 10; other systems may differ slightly.)

Type scrapy in PyCharm's terminal; if it prints its usage information, Scrapy is working.

I ran into a problem here that took several hours to sort out, so I am recording it:

'scrapy' is not recognized as a command or batch file: https://blog.csdn.net/childbor/article/details/107852133

Eventually it worked.

Initialize a project named douban directly in PyCharm's terminal:

scrapy startproject douban

Terminal output:

New Scrapy project 'douban', using template directory 'C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\templates\project', created in:

D:\SW_dvp\python\practice\scrapyprac\douban

You can start your first spider with:

cd douban

scrapy genspider example example.com

操作 3 : 修改settings.py设置文件:

# Obey robots.txt rules不遵守此协议

ROBOTSTXT_OBEY = False

#下载延时

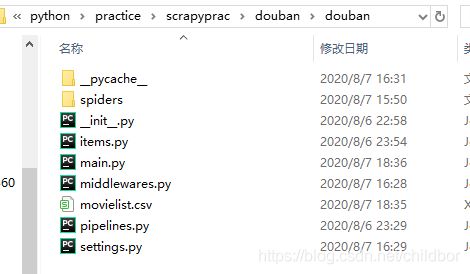

DOWNLOAD_DELAY = 0.5操作 4 : 生成初始化文件:

D:\SW_dvp\python\practice\scrapyprac\douban>cd douban

D:\SW_dvp\python\practice\scrapyprac\douban\douban>dir

驱动器 D 中的卷是 新加卷

卷的序列号是 BE92-2BF3

D:\SW_dvp\python\practice\scrapyprac\douban\douban 的目录

2020/08/06 23:45 .

2020/08/06 23:45 ..

2020/08/06 23:29 262 items.py

2020/08/06 23:29 3,648 middlewares.py

2020/08/06 23:29 360 pipelines.py

2020/08/06 23:45 3,091 settings.py

2020/08/06 22:59 spiders

2020/08/06 22:58 0 __init__.py

5 个文件 7,361 字节

3 个目录 440,007,593,984 可用字节

D:\SW_dvp\python\practice\scrapyprac\douban\douban>cd spiders

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders>scrapy genspider douban_spider movie.douban.com

Created spider 'douban_spider' using template 'basic' in module:

douban.spiders.douban_spider

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders>dir

驱动器 D 中的卷是 新加卷

卷的序列号是 BE92-2BF3

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders 的目录

2020/08/06 23:49 .

2020/08/06 23:49 ..

2020/08/06 23:49 218 douban_spider.py

2020/08/06 22:58 161 __init__.py

2020/08/06 23:49 __pycache__

2 个文件 379 字节

3 个目录 440,007,593,984 可用字节

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders>

Target URL: https://movie.douban.com/top250

![]()

Step 5: Edit the data model file items.py and define the fields to scrape (serial number, name, introduction, rating, and so on).

Before:

# Define here the models for your scraped items
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/items.html

import scrapy


class DoubanItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    pass

After:

# Define here the models for your scraped items
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/items.html

import scrapy


class DoubanItem(scrapy.Item):
    # define the fields for your item here like:
    # name = scrapy.Field()
    # Serial number
    serial_number = scrapy.Field()
    # Movie name
    movie_name = scrapy.Field()
    # Introduction
    introduce = scrapy.Field()
    # Star rating
    star = scrapy.Field()
    # Number of ratings
    evaluate = scrapy.Field()
    # Description (one-line quote)
    describle = scrapy.Field()

Step 6: Edit the spider file douban_spider.py:

import scrapy


class DoubanSpiderSpider(scrapy.Spider):
    name = 'douban_spider'
    allowed_domains = ['movie.douban.com']
    start_urls = ['http://movie.douban.com/']

    def parse(self, response):
        pass

After:

import scrapy

from douban.items import DoubanItem


class DoubanSpiderSpider(scrapy.Spider):
    # Spider name
    name = 'douban_spider'
    # Domains the spider is allowed to crawl
    allowed_domains = ['movie.douban.com']
    # Start URLs, handed to the scheduler
    start_urls = ['http://movie.douban.com/top250']

    def parse(self, response):
        movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
        for i_item in movie_list:
            douban_item = DoubanItem()
            douban_item['serial_number'] = i_item.xpath(".//div[@class='item']//em/text()").extract_first()
            douban_item['movie_name'] = i_item.xpath(
                ".//div[@class='info']/div[@class='hd']/a/span[1]/text()").extract_first()
            descs = i_item.xpath(".//div[@class='info']//div[@class='hd']/p[1]/text()").extract()
            for i_desc in descs:
                i_desc_str = "".join(i_desc.split())
                douban_item['introduce'] = i_desc_str
            douban_item['star'] = i_item.xpath(".//span[@class='rating_num']/text()").extract_first()
            douban_item['evaluate'] = i_item.xpath(".//div[@class='star']//span[4]/text()").extract_first()
            douban_item['describle'] = i_item.xpath(".//p[@class='quote']/span/text()").extract_first()
            yield douban_item
        # Parse the next page
        next_link = response.xpath("//span[@class='next']/link/@href").extract()
        if next_link:
            next_link = next_link[0]
            yield scrapy.Request("https://movie.douban.com/top250" + next_link, callback=self.parse)
        # Print the raw response
        print(response.text)

Step 7: Run the Scrapy project:

Open a terminal and, from the spiders directory, run: scrapy crawl douban_spider

Output:

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders>scrapy crawl douban_spider

2020-08-06 23:57:05 [scrapy.utils.log] INFO: Scrapy 2.3.0 started (bot: douban)

2020-08-06 23:57:05 [scrapy.utils.log] INFO: Versions: lxml 4.5.2.0, libxml2 2.9.5, cssselect 1.1.0, parsel 1.6.0, w3lib 1.22.0, Twisted 20.3.0, Python 3.8.5 (tags/v3.8.5:580fbb0, Jul 20 2020, 15:57:54) [MSC v.1924 64 bit (AMD64)], pyOpenSSL 19.1.0 (OpenSSL 1.1.1g 21 Apr 2020), cryptography 3.0, Platform Windows-10-10.0.18362-SP0

2020-08-06 23:57:05 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.selectreactor.SelectReactor

2020-08-06 23:57:05 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'douban',

'DOWNLOAD_DELAY': 0.5,

'NEWSPIDER_MODULE': 'douban.spiders',

'SPIDER_MODULES': ['douban.spiders']}

2020-08-06 23:57:05 [scrapy.extensions.telnet] INFO: Telnet Password: f51853d7a3614f1d

2020-08-06 23:57:05 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2020-08-06 23:57:09 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-08-06 23:57:09 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2020-08-06 23:57:09 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2020-08-06 23:57:09 [scrapy.core.engine] INFO: Spider opened

2020-08-06 23:57:09 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-08-06 23:57:09 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-08-06 23:57:09 [scrapy.core.engine] DEBUG: Crawled (403)

2020-08-06 23:57:09 [scrapy.core.engine] DEBUG: Crawled (403)

The output above shows an error: the requests came back with HTTP 403. We need to go back to the project's settings.py and set USER_AGENT, otherwise the requests are rejected.

What should it be set to?

Step 8: Set the USER_AGENT request header

Open the page in a browser, press F12 to open the developer tools, switch to the Network tab, refresh the page, then find "top250" and click it.

In the request headers you will find the User-Agent value (copy it):

User-Agent: Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36

In PyCharm CE, open settings.py and set USER_AGENT:

# Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36'

Open a terminal and, from the spiders directory, run the command again: scrapy crawl douban_spider

D:\SW_dvp\python\practice\scrapyprac\douban\douban\spiders>scrapy crawl douban_spider

If the log contains a large block of HTML, the run succeeded:

2020-08-07 14:19:41 [scrapy.utils.log] INFO: Scrapy 2.3.0 started (bot: douban)

2020-08-07 14:19:41 [scrapy.utils.log] INFO: Versions: lxml 4.5.2.0, libxml2 2.9.5, cssselect 1.1.0, parsel 1.6.0, w3lib 1.22.0, Twisted 20.3.0, Python 3.8.5 (tags/v3.8.5:580fbb0, Jul 20 2020, 15:57:54) [MSC v.1924 64 bit (AMD64)], pyOpenSSL 19.1.0 (OpenSSL 1.1.1g 21 Apr 2020), cryptography 3.0, Platform Windows-10-10.0.18362-SP0

2020-08-07 14:19:41 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.selectreactor.SelectReactor

2020-08-07 14:19:41 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'douban',

'DOWNLOAD_DELAY': 0.5,

'NEWSPIDER_MODULE': 'douban.spiders',

'SPIDER_MODULES': ['douban.spiders'],

'USER_AGENT': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 '

'(KHTML, like Gecko) Chrome/69.0.3497.100 Safari/537.36'}

2020-08-07 14:19:41 [scrapy.extensions.telnet] INFO: Telnet Password: 6026458f6c59e054

2020-08-07 14:19:41 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2020-08-07 14:19:46 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2020-08-07 14:19:46 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2020-08-07 14:19:46 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2020-08-07 14:19:46 [scrapy.core.engine] INFO: Spider opened

2020-08-07 14:19:46 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at

0 items/min)

2020-08-07 14:19:46 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-08-07 14:19:46 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to from

2020-08-07 14:19:47 [scrapy.core.engine] DEBUG: Crawled (200) (referer:

None)

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '希望让人自由。',

'evaluate': '2101715人评价',

'movie_name': '肖申克的救赎',

'serial_number': '1',

'star': '9.7'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '风华绝代。',

'evaluate': '1558620人评价',

'movie_name': '霸王别姬',

'serial_number': '2',

'star': '9.6'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '一部美国近现代史。',

'evaluate': '1588604人评价',

'movie_name': '阿甘正传',

'serial_number': '3',

'star': '9.5'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '怪蜀黍和小萝莉不得不说的故事。',

'evaluate': '1776366人评价',

'movie_name': '这个杀手不太冷',

'serial_number': '4',

'star': '9.4'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '失去的才是永恒的。 ',

'evaluate': '1541259人评价',

'movie_name': '泰坦尼克号',

'serial_number': '5',

'star': '9.4'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '最美的谎言。',

'evaluate': '992241人评价',

'movie_name': '美丽人生',

'serial_number': '6',

'star': '9.5'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '最好的宫崎骏,最好的久石让。 ',

'evaluate': '1651569人评价',

'movie_name': '千与千寻',

'serial_number': '7',

'star': '9.4'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '拯救一个人,就是拯救整个世界。',

'evaluate': '808069人评价',

'movie_name': '辛德勒的名单',

'serial_number': '8',

'star': '9.5'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '诺兰给了我们一场无法盗取的梦。',

'evaluate': '1514857人评价',

'movie_name': '盗梦空间',

'serial_number': '9',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '永远都不能忘记你所爱的人。',

'evaluate': '1054526人评价',

'movie_name': '忠犬八公的故事',

'serial_number': '10',

'star': '9.4'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '每个人都要走一条自己坚定了的路,就算是粉身碎骨。 ',

'evaluate': '1263353人评价',

'movie_name': '海上钢琴师',

'serial_number': '11',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '如果再也不能见到你,祝你早安,午安,晚安。',

'evaluate': '1137211人评价',

'movie_name': '楚门的世界',

'serial_number': '12',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '英俊版憨豆,高情商版谢耳朵。',

'evaluate': '1406144人评价',

'movie_name': '三傻大闹宝莱坞',

'serial_number': '13',

'star': '9.2'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '小瓦力,大人生。',

'evaluate': '995851人评价',

'movie_name': '机器人总动员',

'serial_number': '14',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '天籁一般的童声,是最接近上帝的存在。 ',

'evaluate': '978364人评价',

'movie_name': '放牛班的春天',

'serial_number': '15',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '爱是一种力量,让我们超越时空感知它的存在。',

'evaluate': '1166486人评价',

'movie_name': '星际穿越',

'serial_number': '16',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '一生所爱。',

'evaluate': '1117131人评价',

'movie_name': '大话西游之大圣娶亲',

'serial_number': '17',

'star': '9.2'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '我们一路奋战不是为了改变世界,而是为了不让世界改变我们。',

'evaluate': '686509人评价',

'movie_name': '熔炉',

'serial_number': '18',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '迪士尼给我们营造的乌托邦就是这样,永远善良勇敢,永远出乎意料。',

'evaluate': '1340440人评价',

'movie_name': '疯狂动物城',

'serial_number': '19',

'star': '9.2'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '香港电影史上永不过时的杰作。',

'evaluate': '909144人评价',

'movie_name': '无间道',

'serial_number': '20',

'star': '9.2'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '人人心中都有个龙猫,童年就永远不会消失。',

'evaluate': '939796人评价',

'movie_name': '龙猫',

'serial_number': '21',

'star': '9.2'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '千万不要记恨你的对手,这样会让你失去理智。',

'evaluate': '686248人评价',

'movie_name': '教父',

'serial_number': '22',

'star': '9.3'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '平民励志片。 ',

'evaluate': '1132039人评价',

'movie_name': '当幸福来敲门',

'serial_number': '23',

'star': '9.1'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '真正的幸福是来自内心深处。',

'evaluate': '1312622人评价',

'movie_name': '怦然心动',

'serial_number': '24',

'star': '9.1'}

2020-08-07 14:19:47 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250>

{'describle': '满满温情的高雅喜剧。',

'evaluate': '732668人评价',

'movie_name': '触不可及',

'serial_number': '25',

'star': '9.2'}

豆瓣电影 Top 250

....

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\utils\defer.py", line 55, in mustbe_deferred

result = f(*args, **kw)

File "C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\core\spidermw.py", line 60, in process_spider_input

return scrape_func(response, request, spider)

File "C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\core\scraper.py", line 152, in call_spider

warn_on_generator_with_return_value(spider, callback)

File "C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\utils\misc.py", line 218, in warn_on_generator_with_return_value

if is_generator_with_return_value(callable):

File "C:\Users\Rechard\AppData\Roaming\Python\Python38\site-packages\scrapy\utils\misc.py", line 203, in is_generator_with_return_value

tree = ast.parse(dedent(inspect.getsource(callable)))

File "c:\program files\python\python38\lib\ast.py", line 47, in parse

return compile(source, filename, mode, flags,

File "", line 1

def parse(self, response):

^

IndentationError: unexpected indent

2020-08-07 14:19:48 [scrapy.core.engine] INFO: Closing spider (finished)

2020-08-07 14:19:48 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 978,

'downloader/request_count': 3,

'downloader/request_method_count/GET': 3,

'downloader/response_bytes': 25468,

'downloader/response_count': 3,

'downloader/response_status_count/200': 2,

'downloader/response_status_count/301': 1,

'elapsed_time_seconds': 2.099,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2020, 8, 7, 6, 19, 48, 395590),

'item_scraped_count': 25,

'log_count/DEBUG': 28,

'log_count/ERROR': 1,

'log_count/INFO': 10,

'request_depth_max': 1,

'response_received_count': 2,

'scheduler/dequeued': 3,

'scheduler/dequeued/memory': 3,

'scheduler/enqueued': 3,

'scheduler/enqueued/memory': 3,

'spider_exceptions/IndentationError': 1,

'start_time': datetime.datetime(2020, 8, 7, 6, 19, 46, 296590)}

2020-08-07 14:19:48 [scrapy.core.engine] INFO: Spider closed (finished)

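Something went wrong here: 25 items were scraped, but the run also logged an IndentationError, and I did not know the cause at the time, so I set it aside. Judging from the traceback, the error is raised inside Scrapy's warn_on_generator_with_return_value check, which re-parses the source of parse(); a likely cause is inconsistent indentation in douban_spider.py (for example mixed tabs and spaces, or a line inside the method that starts at column 0).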
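To make that failure mode concrete, here is a minimal sketch using only the standard library. It repeats the `ast.parse(dedent(...))` call visible in the traceback on two hand-written method sources. The sources and the helper name `parses_after_dedent` are made up for illustration, and this shows one plausible trigger, not a confirmed diagnosis of the run above.

```python
import ast
import textwrap


def parses_after_dedent(source: str) -> bool:
    """Return True if the dedented source parses, mimicking the call in the traceback."""
    try:
        ast.parse(textwrap.dedent(source))
        return True
    except IndentationError:
        return False


# A method as it appears inside a class body: every line shares a 4-space margin.
clean_method = (
    "    def parse(self, response):\n"
    "        yield {}\n"
)

# The same method with a comment starting at column 0 (hypothetical example).
# dedent() now finds no common margin, so "def" keeps its leading spaces.
broken_method = (
    "    def parse(self, response):\n"
    "# comment at column 0\n"
    "        yield {}\n"
)

print(parses_after_dedent(clean_method))
print(parses_after_dedent(broken_method))
```

If this is indeed the cause, re-indenting the whole spider file consistently with spaces should make the error disappear.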

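Also, my Python is managed through Anaconda, which installs most commonly used modules. If your Python was compiled from source and is missing modules, the run may fail.

If it fails, for example because sqlite3 is missing, as happened to the teacher in the tutorial:

![]()

then sqlite needs to be installed.

Run as administrator: sudo yum -y install sqlite*

then enter your password and press Enter.

![]()

After it installs, Python has to be recompiled so that sqlite support is enabled.

Go to your Python source directory and configure the build:

./configure --prefix='your install path' --with-ssl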

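Step 9: So far everything was run from the terminal. For convenience, set it up to run from PyCharm CE.

First create a launcher file, for example main.py, with the following content:

from scrapy import cmdline

# Run the spider; prints the unfiltered page output
cmdline.execute('scrapy crawl douban_spider'.split())

Right-click and run it; the output is the same as in the terminal.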
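The `.split()` in main.py simply turns the command string into the argument list that `cmdline.execute` expects. The sketch below shows that list, and also shows `shlex.split`, which may be a safer choice if an argument ever contains spaces. The quoted file name is a made-up example.

```python
import shlex

command = "scrapy crawl douban_spider"
argv = command.split()
print(argv)

# str.split would break a quoted argument apart; shlex.split keeps it together.
quoted = 'scrapy crawl douban_spider -o "movie list.csv"'  # hypothetical file name
quoted_argv = shlex.split(quoted)
print(quoted_argv)
```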

Step 10: Now go into the spider file douban_spider.py and extend it:

# -*- coding: utf-8 -*-
import scrapy


class DoubanSpiderSpider(scrapy.Spider):
    # Spider name
    name = 'douban_spider'
    # Domains the spider is allowed to crawl
    allowed_domains = ['movie.douban.com']
    # Start URLs, handed to the scheduler
    start_urls = ['http://movie.douban.com/top250']

    def parse(self, response):
        movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
        for i_item in movie_list:
            print(i_item)

Here response.xpath("//div[@class='article']//ol[@class='grid_view']/li") uses XPath, a query language for XML and HTML documents; the string in parentheses is the XPath expression.

(It is derived from the structure of the target page and means: select every li element under the ol with class grid_view, under the div with class article.)

![]()

Example:
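The selection logic can be tried without Scrapy. The sketch below is only an approximation: it uses the standard library's `xml.etree.ElementTree`, which supports a subset of XPath, on a hand-written snippet that imitates the list structure (it is not the real Douban HTML). ElementTree has no `text()` step, so the text is read from the matched element instead.

```python
import xml.etree.ElementTree as ET

# Hand-written, simplified stand-in for the page structure (not the real Douban HTML).
SAMPLE = """
<html><body>
  <div class="article">
    <ol class="grid_view">
      <li><div class="item"><em>1</em></div></li>
      <li><div class="item"><em>2</em></div></li>
    </ol>
  </div>
  <div class="aside">
    <ol class="grid_view">
      <li><div class="item"><em>99</em></div></li>
    </ol>
  </div>
</body></html>
""".strip()

root = ET.fromstring(SAMPLE)

# Same path as in parse(), with a leading "." because ElementTree searches relative to an element.
movie_list = root.findall(".//div[@class='article']//ol[@class='grid_view']/li")

# Relative query inside each li, then read the text of the matched em element.
serial_numbers = [li.find(".//div[@class='item']//em").text for li in movie_list]
print(serial_numbers)
```

Only the li elements under div.article are selected; the list under div.aside is ignored, which is the effect of the two class predicates.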

Back to the task: after editing douban_spider.py, run main.py:

2020-08-07 15:18:05 [scrapy.core.engine] INFO: Spider opened

2020-08-07 15:18:05 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2020-08-07 15:18:05 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2020-08-07 15:18:05 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to from

2020-08-07 15:18:06 [scrapy.core.engine] DEBUG: Crawled (200) (referer: None)

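Step 11: The run returns the Selector objects we selected

Next, drill down further to extract the details.

Continue editing:

1: Import the model: from douban.items import DoubanItem

This imports the DoubanItem model from items.py in the douban package.

2: Change the loop:

def parse(self, response):
    movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
    for i_item in movie_list:
        douban_item = DoubanItem()
        douban_item['serial_number'] = i_item.xpath(".//div[@class='item']//em/text()").extract_first()
        print(douban_item)

Explanation:

1 DoubanItem() creates an instance of the model

2 douban_item['serial_number'] sets the value of the model field serial_number

3 i_item.xpath(".//div[@class='item']//em/text()") narrows the previous result further; the leading "." makes the path relative to the current li node, and the trailing text() selects the element's text

4 extract_first() returns the first value of the result

The modified douban_spider.py: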

# -*- coding: utf-8 -*-
import scrapy

from douban.items import DoubanItem


class DoubanSpiderSpider(scrapy.Spider):
    # Spider name
    name = 'douban_spider'
    # Domains the spider is allowed to crawl
    allowed_domains = ['movie.douban.com']
    # Start URLs, handed to the scheduler
    start_urls = ['http://movie.douban.com/top250']

    def parse(self, response):
        movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
        for i_item in movie_list:
            douban_item = DoubanItem()
            douban_item['serial_number'] = i_item.xpath(".//div[@class='item']//em/text()").extract_first()
            print(douban_item)

Run main.py (the serial numbers are extracted successfully, as shown below):

2020-08-07 15:28:08 [scrapy.downloadermiddlewares.redirect] DEBUG: Redirecting (301) to from

2020-08-07 15:28:08 [scrapy.core.engine] DEBUG: Crawled (200) (referer: None)

{'serial_number': '1'}

{'serial_number': '2'}

{'serial_number': '3'}

{'serial_number': '4'}

{'serial_number': '5'}

{'serial_number': '6'}

{'serial_number': '7'}

{'serial_number': '8'}

{'serial_number': '9'}

{'serial_number': '10'}

{'serial_number': '11'}

{'serial_number': '12'}

{'serial_number': '13'}

{'serial_number': '14'}

{'serial_number': '15'}

Step 12: Complete douban_spider.py (parse the detailed attributes):

# -*- coding: utf-8 -*-
import scrapy

from douban.items import DoubanItem


class DoubanSpiderSpider(scrapy.Spider):
    # Spider name
    name = 'douban_spider'
    # Domains the spider is allowed to crawl
    allowed_domains = ['movie.douban.com']
    # Start URLs, handed to the scheduler
    start_urls = ['http://movie.douban.com/top250']

    def parse(self, response):
        movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
        for i_item in movie_list:
            douban_item = DoubanItem()
            douban_item['serial_number'] = i_item.xpath(".//div[@class='item']//em/text()").extract_first()
            douban_item['movie_name'] = i_item.xpath(
                ".//div[@class='info']/div[@class='hd']/a/span[1]/text()").extract_first()
            # Note: the logged items below contain no 'introduce' field, which suggests this
            # XPath matches nothing; on the page the <p> appears to sit under div[@class='bd'].
            descs = i_item.xpath(".//div[@class='info']//div[@class='hd']/p[1]/text()").extract()
            for i_desc in descs:
                i_desc_str = "".join(i_desc.split())
                douban_item['introduce'] = i_desc_str
            douban_item['star'] = i_item.xpath(".//span[@class='rating_num']/text()").extract_first()
            douban_item['evaluate'] = i_item.xpath(".//div[@class='star']//span[4]/text()").extract_first()
            douban_item['describle'] = i_item.xpath(".//p[@class='quote']/span/text()").extract_first()
            print(douban_item)

Run main.py again; the output is:

2020-08-07 15:33:48 [scrapy.core.engine] DEBUG: Crawled (200) (referer: None)

{'describle': '希望让人自由。',

'evaluate': '2102115人评价',

'movie_name': '肖申克的救赎',

'serial_number': '1',

'star': '9.7'}

{'describle': '风华绝代。',

'evaluate': '1558620人评价',

'movie_name': '霸王别姬',

'serial_number': '2',

'star': '9.6'}

{'describle': '一部美国近现代史。',

'evaluate': '1588604人评价',

'movie_name': '阿甘正传',

'serial_number': '3',

'star': '9.5'}

{'describle': '怪蜀黍和小萝莉不得不说的故事。',

'evaluate': '1776366人评价',

'movie_name': '这个杀手不太冷',

'serial_number': '4',

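'star': '9.4'}

Step 13: The yield statement and the Scrapy framework

Next, replace the last line of the code above,

print(douban_item)

with

yield douban_item

This hands each result to the Item Pipeline for processing (the figure below illustrates how Scrapy works):

![]()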
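The effect of switching from print to yield can be sketched without Scrapy. In the sketch below, `parse` is a plain generator and `strip_whitespace` plays the role of a pipeline stage; both are hypothetical stand-ins, and in a real project the Scrapy engine is what pulls items from the generator and passes them on.

```python
def parse(rows):
    """Yield one item per row instead of printing it (stand-in for the spider's parse)."""
    for number, name in rows:
        yield {"serial_number": number, "movie_name": name}


def strip_whitespace(item):
    """A hypothetical pipeline stage: clean each field and pass the item on."""
    return {key: value.strip() for key, value in item.items()}


rows = [("1", " 肖申克的救赎 "), ("2", "霸王别姬")]

# The consumer pulls items one at a time; nothing is parsed until it asks for the next item.
processed = [strip_whitespace(item) for item in parse(rows)]
print(processed)
```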

Step 14: Continue editing the douban_spider.py spider file

Step 15: Follow the "next page" link to fetch all the data

Edit douban_spider.py once more:

# -*- coding: utf-8 -*-
import scrapy

from douban.items import DoubanItem


class DoubanSpiderSpider(scrapy.Spider):
    # Spider name
    name = 'douban_spider'
    # Domains the spider is allowed to crawl
    allowed_domains = ['movie.douban.com']
    # Start URLs, handed to the scheduler
    start_urls = ['http://movie.douban.com/top250']

    def parse(self, response):
        movie_list = response.xpath("//div[@class='article']//ol[@class='grid_view']/li")
        for i_item in movie_list:
            douban_item = DoubanItem()
            douban_item['serial_number'] = i_item.xpath(".//div[@class='item']//em/text()").extract_first()
            douban_item['movie_name'] = i_item.xpath(
                ".//div[@class='info']/div[@class='hd']/a/span[1]/text()").extract_first()
            descs = i_item.xpath(".//div[@class='info']//div[@class='hd']/p[1]/text()").extract()
            for i_desc in descs:
                i_desc_str = "".join(i_desc.split())
                douban_item['introduce'] = i_desc_str
            douban_item['star'] = i_item.xpath(".//span[@class='rating_num']/text()").extract_first()
            douban_item['evaluate'] = i_item.xpath(".//div[@class='star']//span[4]/text()").extract_first()
            douban_item['describle'] = i_item.xpath(".//p[@class='quote']/span/text()").extract_first()
            yield douban_item
        next_link = response.xpath("//span[@class='next']/link/@href").extract()
        if next_link:
            next_link = next_link[0]
            yield scrapy.Request("https://movie.douban.com/top250" + next_link, callback=self.parse)

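Explanation:

1 After the for loop finishes, get the link to the next page: next_link

2 The last page has no next page, so check for that first

3 Building the next-page URL: clicking page two gives https://movie.douban.com/top250?start=25&filter=, which is exactly https://movie.douban.com/top250 joined with the href extracted by the XPath above

4 callback=self.parse: the callback that handles the response to the new request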
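The URL join in point 3 can be checked with the standard library. The sketch below compares the string concatenation used in the spider with `urllib.parse.urljoin`; the second href (`?start=50&filter=`) is an assumed value for the following page, chosen only to show how a relative query is resolved against a URL that already has one.

```python
from urllib.parse import urljoin

base = "https://movie.douban.com/top250"
next_href = "?start=25&filter="

by_concatenation = base + next_href
by_urljoin = urljoin(base, next_href)
print(by_concatenation == by_urljoin)

# Resolved against the current page URL, urljoin replaces the old query
# instead of appending a second one.
page_two = by_urljoin
page_three = urljoin(page_two, "?start=50&filter=")
print(page_three)
```

Scrapy responses also offer `response.urljoin(href)`, which resolves the link against the URL of the current response in the same way.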

Result of running main.py (the last record, serial number 250, has been loaded):

![]()

Step 16: Save the data to a JSON or CSV file

From the douban directory, run: scrapy crawl douban_spider -o movielist.json

or

from the douban directory, run: scrapy crawl douban_spider -o movielist.csv

D:\SW_dvp\python\practice\scrapyprac\douban>scrapy crawl douban_spider -o movielist.csv

Saved successfully:

2020-08-07 15:50:39 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250?start=225&filter=>

{'describle': '一个精彩的世界观正在缓缓建立。',

'evaluate': '265540人评价',

'movie_name': '黑客帝国2:重装上阵',

'serial_number': '249',

'star': '8.6'}

2020-08-07 15:50:39 [scrapy.core.scraper] DEBUG: Scraped from <200 https://movie.douban.com/top250?start=225&filter=>

{'describle': '我完全康复了。',

'evaluate': '285937人评价',

'movie_name': '发条橙',

'serial_number': '250',

'star': '8.6'}

2020-08-07 15:50:39 [scrapy.core.engine] INFO: Closing spider (finished)

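Check the directory: it now contains movielist.csv

Alternatively, put the command in main.py and run it; this saves the file as well:

from scrapy import cmdline

# Print the unfiltered page output
# cmdline.execute('scrapy crawl douban_spider'.split())
cmdline.execute('scrapy crawl douban_spider -o movielist.csv'.split())

Check the saved result: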
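As a rough illustration of what the two export formats contain, the sketch below writes two of the scraped items to JSON and CSV with the standard library. It imitates the shape of the output only; it is not Scrapy's own exporter, and the column order here is an arbitrary choice.

```python
import csv
import io
import json

items = [
    {"serial_number": "1", "movie_name": "肖申克的救赎", "star": "9.7"},
    {"serial_number": "2", "movie_name": "霸王别姬", "star": "9.6"},
]

# JSON: ensure_ascii=False keeps Chinese text readable instead of \uXXXX escapes.
json_text = json.dumps(items, ensure_ascii=False)

# CSV: one header row, then one row per item.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["serial_number", "movie_name", "star"])
writer.writeheader()
writer.writerows(items)
csv_text = buffer.getvalue()

print(json_text)
print(csv_text)
```

If the JSON file written by Scrapy shows \uXXXX escapes instead of Chinese characters, setting FEED_EXPORT_ENCODING = 'utf-8' in settings.py is the usual remedy.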

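Step 17: Store the data in MongoDB (pymongo). I could not get MongoDB installed, so I did not run this step.

First check whether pymongo is installed. Open a terminal, type

python

and press Enter, then type

import pymongo

and press Enter. If it is not installed, you get an error:

...

No module named 'pymongo'

To install pymongo, run:

pip install pymongo

Once it is installed, write the storage code.

In the project, edit settings.py:

(1) Uncomment the following block in settings.py:

ITEM_PIPELINES = {
    'douban.pipelines.DoubanPipeline': 300,
}

(2) Append the database settings at the end of settings.py (start the database service first):

host: your IP address

port: MongoDB's default port

db_name: database name

db_collection: collection name

# MongoDB settings
mongo_host = '172.16.0.0'
mongo_port = 27017
mongo_db_name = 'douban'
mongo_db_collection = 'douban_movie'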

Edit pipelines.py as follows:

# -*- coding: utf-8 -*-
import pymongo

from douban.settings import mongo_host, mongo_port, mongo_db_name, mongo_db_collection

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html


class DoubanPipeline(object):
    def __init__(self):
        host = mongo_host
        port = mongo_port
        dbname = mongo_db_name
        sheetname = mongo_db_collection
        client = pymongo.MongoClient(host=host, port=port)
        mydb = client[dbname]
        self.post = mydb[sheetname]

    def process_item(self, item, spider):
        data = dict(item)
        # insert_one replaces Collection.insert, which is deprecated in pymongo 3 and removed in pymongo 4
        self.post.insert_one(data)
        return item

Run main.py to store the data in the database.
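Since this step was not actually run against a database, the pipeline contract can at least be exercised in memory. In the sketch below, `FakeCollection` and `StoragePipeline` are hypothetical names: the first is a stand-in that offers only an `insert_one` method like a pymongo collection, the second has the same shape as DoubanPipeline but receives its collection as an argument.

```python
class FakeCollection:
    """Hypothetical in-memory stand-in for a pymongo collection."""

    def __init__(self):
        self.documents = []

    def insert_one(self, document):
        self.documents.append(document)


class StoragePipeline:
    """Same shape as DoubanPipeline, but with the collection passed in."""

    def __init__(self, collection):
        self.post = collection

    def process_item(self, item, spider):
        self.post.insert_one(dict(item))
        return item  # returning the item lets later pipelines see it too


collection = FakeCollection()
pipeline = StoragePipeline(collection)
result = pipeline.process_item({"serial_number": "1", "star": "9.7"}, spider=None)
print(collection.documents)
```

Passing the collection in, rather than creating the client inside `__init__`, is a design choice made here only so the class can be tried without a running MongoDB server.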

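Step 17: Write an IP proxy middleware (masking the crawler's IP address). I did not run this step.

Edit the middleware file middlewares.py:

(1) Import base64 at the top of the file:

import base64

(2) Append the following class at the end of the file:

class my_proxy(object):
    def process_request(self, request, spider):
        request.meta['proxy'] = 'http-cla.abuyun.com:9030'
        proxy_name_pass = b'H622272STYB666BW:F78990HJSS7'
        enconde_pass_name = base64.b64encode(proxy_name_pass)
        request.headers['Proxy-Authorization'] = 'Basic ' + enconde_pass_name.decode()

Explanation: the values come from the HTTP tunnel list shown in an Abuyun account after registering and purchasing.

request.meta['proxy']: 'server address:port'

proxy_name_pass: b'license:secret key'; the b prefix makes it a bytes literal, which base64.b64encode requires

base64.b64encode(): Base64-encodes the value

'Basic ': there must be a space after Basic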
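The header construction can be tried on its own. The sketch below builds the same kind of Proxy-Authorization value from placeholder credentials; `user` and `pass` are dummy values and `proxy_auth_header` is a helper name chosen for this example.

```python
import base64


def proxy_auth_header(user: str, password: str) -> str:
    """Build a Basic Proxy-Authorization value from placeholder credentials."""
    raw = f"{user}:{password}".encode("utf-8")       # bytes, as b64encode requires
    encoded = base64.b64encode(raw).decode("ascii")  # back to str for the header
    return "Basic " + encoded


header_value = proxy_auth_header("user", "pass")
print(header_value)
```

Base64 is an encoding, not encryption, so anyone who sees the header can recover the credentials; real keys are better kept out of source files that get shared.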

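The Abuyun HTTP tunnel page from Teacher Dazhuang's purchase:

![]()

Edit settings.py:

(3) Uncomment the block and change it to:

DOWNLOADER_MIDDLEWARES = {
    'douban.middlewares.my_proxy': 543,
}

(4) Run main.py:

The screenshot below shows that the IP address was hidden successfully

![]()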

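Step 18: Disguise the User-Agent header

The User-Agent was already set once in Step 8 above, but that value is hard-coded.

Next, a simple disguise is implemented by picking a User-Agent at random for each request.

Again, edit the middleware file middlewares.py:

(1) Import the random module at the top of the file:

import random

(2) Append a new class at the end of the file:

class my_useragent(object):
    def process_request(self, request, spider):
        UserAgentList = [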

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",

"Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",

"Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",

"Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",

"Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",

"Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",

"Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",

"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",

"Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/2.0 Safari/536.11",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.71 Safari/537.1 LBBROWSER",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.84 Safari/535.11 LBBROWSER",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; QQBrowser/7.0.3698.400)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SV1; QQDownload 732; .NET4.0C; .NET4.0E; 360SE)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (iPad; U; CPU OS 4_2_1 like Mac OS X; zh-cn) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8C148 Safari/6533.18.5",

"Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:2.0b13pre) Gecko/20110307 Firefox/4.0b13pre",

"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.11 (KHTML, like Gecko) Chrome/23.0.1271.64 Safari/537.11",

"Mozilla/5.0 (X11; U; Linux x86_64; zh-CN; rv:1.9.2.10) Gecko/20100922 Ubuntu/10.10 (maverick) Firefox/3.6.10",

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",

        ]
        agent = random.choice(UserAgentList)
        # The header name uses a hyphen: 'User-Agent', not 'User_Agent'
        request.headers['User-Agent'] = agent
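The selection step can be sketched in isolation. The two strings below are taken from the list above; `pick_headers` is a hypothetical helper, and the seeded `random.Random(0)` is used only to make the demo repeatable, whereas the middleware itself calls the unseeded `random.choice`.

```python
import random

USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
    "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",
]


def pick_headers(rng=random):
    """Return request headers with a randomly chosen User-Agent."""
    return {"User-Agent": rng.choice(USER_AGENTS)}


headers = pick_headers(random.Random(0))  # seeded only to make the demo repeatable
print(headers)
```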

(3) Edit settings.py and add one entry, 'douban.middlewares.my_useragent': 544:

DOWNLOADER_MIDDLEWARES = {
    'douban.middlewares.DoubanDownloaderMiddleware': 543,
    'douban.middlewares.my_useragent': 544,
}

(4) Run main.py:

The user agent middleware is enabled, as the log shows:

![]()

2020-08-07 16:31:07 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'douban.middlewares.DoubanDownloaderMiddleware',

'douban.middlewares.my_useragent',

Step 19: Final note

Use crawlers only for personal study and data research, never for anything illegal.