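Implementing MNIST in PyTorch (with a training comparison of the SGD, Adam, and AdaBound optimizers)

The fastest way to learn a tool is to learn it while using it, that is, while solving real problems at work. The complete code is included at the end of the article.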

1. Data preparation

PyTorch (through torchvision) ships the MNIST dataset, so we can simply use the copy it provides.

import torch
from torchvision import datasets, transforms

# batch_size is the number of samples fed to the network per training step
batch_size = 64

# MNIST Dataset
# MNIST is built into torchvision.datasets and can be loaded directly
train_dataset = datasets.MNIST(root='./data/',
                               train=True,
                               transform=transforms.ToTensor(),
                               download=True)

test_dataset = datasets.MNIST(root='./data/',
                              train=False,
                              transform=transforms.ToTensor())

# Data Loader (Input Pipeline)
train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size,
                                           shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size,
                                          shuffle=False)

2. Building the network

import torch.nn as nn
import torch.nn.functional as F

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # 1 input channel, 10 output channels, 5*5 kernel
        self.conv1 = nn.Conv2d(in_channels=1, out_channels=10, kernel_size=5)
        # 10 input channels, 20 output channels, 5*5 kernel
        self.conv2 = nn.Conv2d(10, 20, 5)
        # 20 input channels, 40 output channels, 3*3 kernel
        self.conv3 = nn.Conv2d(20, 40, 3)
        # 2*2 max-pooling layer
        self.mp = nn.MaxPool2d(2)
        # fully connected layer (in_features, out_features)
        self.fc = nn.Linear(40, 10)

    def forward(self, x):
        # x is a 4-D tensor of shape (batch_size, channels, height, width);
        # x.size(0) is the batch size (64 here), which we keep as in_size
        in_size = x.size(0)
        # x: 64*1*28*28
        x = F.relu(self.mp(self.conv1(x)))
        # x: 64*10*12*12. (n+2p-f)/s + 1 = 28 - 5 + 1 = 24, so 24*24 before pooling and 12*12 after the 2*2 pool
        x = F.relu(self.mp(self.conv2(x)))
        # x: 64*20*4*4. Likewise 12 - 5 + 1 = 8 before pooling, 4*4 after
        x = F.relu(self.mp(self.conv3(x)))
        # x: 64*40*1*1. 4 - 3 + 1 = 2 before pooling, 1*1 after
        x = x.view(in_size, -1)  # flatten the tensor (a reshape)
        # print(x.size())
        # x: 64*40
        x = self.fc(x)
        # x: 64*10
        # print(x.size())
        return F.log_softmax(x, dim=1)  # 64*10, log-probabilities over the 10 classes
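The layer sizes can be checked by hand with the formula (n+2p-f)/s + 1. Below is a minimal sketch in plain Python, assuming no padding and stride 1 for the convolutions and a 2*2 pool with stride 2 that floors odd sizes (the PyTorch defaults that Net relies on). The helper names conv_out, pool_out and trace_shapes are made up for this illustration.

```python
def conv_out(n, f, p=0, s=1):
    """Output side length of a convolution: (n + 2p - f) // s + 1."""
    return (n + 2 * p - f) // s + 1


def pool_out(n, k=2):
    """Output side length of a k*k max pool with stride k (floor division)."""
    return n // k


def trace_shapes(side=28):
    """Follow one spatial side through conv1/conv2/conv3, each followed by a 2*2 pool."""
    layers = [(10, 5), (20, 5), (40, 3)]  # (out_channels, kernel_size) as in Net
    shapes = []
    for channels, kernel in layers:
        side = pool_out(conv_out(side, kernel))
        shapes.append((channels, side, side))
    return shapes


shapes = trace_shapes()
channels, height, width = shapes[-1]
flat_features = channels * height * width

print(shapes)         # [(10, 12, 12), (20, 4, 4), (40, 1, 1)]
print(flat_features)  # 40
```

The last feature map is 40*1*1, so the flattened vector has 40 features per sample, which is why the fully connected layer is nn.Linear(40, 10).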

3. Training

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim

model = Net()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):
    # enumerate() pairs each item of an iterable with its index,
    # so we get the batch index and the batch itself at the same time
    for batch_idx, (data, target) in enumerate(train_loader):  # batch_idx starts at 0
        # data.size(): [64, 1, 28, 28]
        # target.size(): [64]
        output = model(data)
        # output: 64*10
        loss = F.nll_loss(output, target)
        # print the progress once every 200 batches
        if batch_idx % 200 == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch,
                batch_idx * len(data),
                len(train_loader.dataset),
                100. * batch_idx / len(train_loader),
                loss.item()))
        optimizer.zero_grad()  # reset the gradients of all parameters
        loss.backward()        # backpropagate to compute the gradients
        optimizer.step()       # let the optimizer update the parameters

# entry point of the experiment
for epoch in range(1, 10):  # epochs 1 to 9
    train(epoch)

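Some of the names used during training are explained below:

batch_idx: the index of the current batch within an epoch, starting from 0 (it is an index, not the number of batches). batch_size: the number of samples fed to the network at a time.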
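To make the relation between batch_idx and batch_size concrete, here is a small sketch in plain Python. It assumes the 60000-image MNIST training set and a loader that keeps the final partial batch (the DataLoader default, drop_last=False); the variable names are only for illustration.

```python
import math

num_samples = 60000  # size of the MNIST training set
batch_size = 64

# number of batches per epoch, i.e. what len(train_loader) returns
num_batches = math.ceil(num_samples / batch_size)
# the last batch only holds whatever is left over
last_batch_size = num_samples - (num_batches - 1) * batch_size
# batch indices at which the training loop prints (batch_idx % 200 == 0)
logged = [i for i in range(num_batches) if i % 200 == 0]

print(num_batches)      # 938
print(last_batch_size)  # 32
print(logged)           # [0, 200, 400, 600, 800]
```

So batch_idx runs from 0 to 937 in every epoch, and the progress line is printed five times per epoch.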

4. Testing the model

def test():
    # evaluate on whichever device the model lives on (CPU or GPU)
    device = next(model.parameters()).device
    test_loss = 0
    correct = 0
    # torch.no_grad() replaces the deprecated Variable(data, volatile=True)
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            # accumulate the summed loss of the batch
            # (reduction='sum' replaces the deprecated size_average=False)
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            # get the index of the max log-probability in each row,
            # i.e. the predicted class of each sample
            pred = output.max(1, keepdim=True)[1]
            # print(pred)
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)
    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))

# entry point of the experiment
for epoch in range(1, 10):  # epochs 1 to 9
    print("test num" + str(epoch))
    train(epoch)
    test()
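The two numbers reported by test() are the summed negative log-likelihood of the true classes and the count of samples whose highest log-probability matches the label. The sketch below reproduces that bookkeeping in plain Python for a tiny batch; the scores and targets are made-up values, and log_softmax and evaluate are illustrative helpers rather than PyTorch functions.

```python
import math


def log_softmax(row):
    """Numerically stable log-softmax of one row of scores."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(v - m) for v in row))
    return [v - log_sum for v in row]


def evaluate(scores, targets):
    """Return (summed NLL loss, number of correct predictions) for a batch."""
    total_loss, correct = 0.0, 0
    for row, target in zip(scores, targets):
        log_probs = log_softmax(row)
        total_loss += -log_probs[target]  # NLL of the true class
        pred = max(range(len(row)), key=lambda i: log_probs[i])  # argmax
        correct += int(pred == target)
    return total_loss, correct


# hypothetical raw scores for 3 samples and 3 classes
scores = [[2.0, 0.5, 0.1], [0.2, 0.1, 3.0], [1.0, 1.5, 0.3]]
targets = [0, 2, 0]

loss_sum, correct = evaluate(scores, targets)
print(correct, len(targets))     # 2 3
print(loss_sum / len(targets))   # average loss per sample
```

Dividing the summed loss by the dataset size, as test() does, gives a per-sample average that does not depend on the batch size.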

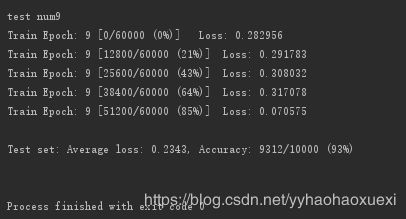

Only the result of the last round is shown here:

As we can see, with SGD as the optimizer the test accuracy eventually reaches 92%.

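5. Improving test-set accuracy

5.1 More training epochs (SGD)

As the title says, we raise the number of training epochs to 30 and keep SGD as the optimizer, so the only change is the range of epoch in the entry loop. To save space, only the test-set loss and accuracy are printed.

In the log below, the test accuracy first reaches 96% at num19 and then levels off at about 96% to 97%, while the loss fluctuates around 0.1.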

Test set: Average loss: 2.2955, Accuracy: 1018/10000 (10%)

test num1

Test set: Average loss: 2.2812, Accuracy: 2697/10000 (26%)

test num2

Test set: Average loss: 2.2206, Accuracy: 3862/10000 (38%)

test num3

Test set: Average loss: 1.8014, Accuracy: 6100/10000 (61%)

test num4

Test set: Average loss: 0.7187, Accuracy: 8049/10000 (80%)

test num5

Test set: Average loss: 0.4679, Accuracy: 8593/10000 (85%)

test num6

Test set: Average loss: 0.3685, Accuracy: 8898/10000 (88%)

test num7

Test set: Average loss: 0.3006, Accuracy: 9108/10000 (91%)

test num8

Test set: Average loss: 0.2713, Accuracy: 9177/10000 (91%)

test num9

Test set: Average loss: 0.2343, Accuracy: 9270/10000 (92%)

test num10

Test set: Average loss: 0.2071, Accuracy: 9370/10000 (93%)

test num11

Test set: Average loss: 0.1910, Accuracy: 9413/10000 (94%)

test num12

Test set: Average loss: 0.1783, Accuracy: 9453/10000 (94%)

test num13

Test set: Average loss: 0.1612, Accuracy: 9482/10000 (94%)

test num14

Test set: Average loss: 0.1603, Accuracy: 9497/10000 (94%)

test num15

Test set: Average loss: 0.1522, Accuracy: 9526/10000 (95%)

test num16

Test set: Average loss: 0.1410, Accuracy: 9555/10000 (95%)

test num17

Test set: Average loss: 0.1338, Accuracy: 9573/10000 (95%)

test num18

Test set: Average loss: 0.1307, Accuracy: 9588/10000 (95%)

test num19

Test set: Average loss: 0.1212, Accuracy: 9610/10000 (96%)

test num20

Test set: Average loss: 0.1232, Accuracy: 9622/10000 (96%)

test num21

Test set: Average loss: 0.1149, Accuracy: 9646/10000 (96%)

test num22

Test set: Average loss: 0.1104, Accuracy: 9652/10000 (96%)

test num23

Test set: Average loss: 0.1072, Accuracy: 9668/10000 (96%)

test num24

Test set: Average loss: 0.1113, Accuracy: 9646/10000 (96%)

test num25

Test set: Average loss: 0.1037, Accuracy: 9659/10000 (96%)

test num26

Test set: Average loss: 0.0970, Accuracy: 9700/10000 (97%)

test num27

Test set: Average loss: 0.1013, Accuracy: 9692/10000 (96%)

test num28

Test set: Average loss: 0.1015, Accuracy: 9675/10000 (96%)

test num29

Test set: Average loss: 0.0952, Accuracy: 9711/10000 (97%)

test num30

Test set: Average loss: 0.0885, Accuracy: 9727/10000 (97%)

Process finished with exit code 0
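When reading a log like this one, it helps to recompute the accuracy from the raw counts, because the percentage in parentheses appears to be truncated rather than rounded (9588/10000 is printed as 95%). Below is a minimal sketch in plain Python that parses a few lines copied from the log above. It assumes each "test num" label is followed by its own result line, which is the order in which the entry loop prints them; first_epoch_reaching is a helper made up for this illustration.

```python
import re

log = """\
test num17
Test set: Average loss: 0.1338, Accuracy: 9573/10000 (95%)
test num18
Test set: Average loss: 0.1307, Accuracy: 9588/10000 (95%)
test num19
Test set: Average loss: 0.1212, Accuracy: 9610/10000 (96%)
test num20
Test set: Average loss: 0.1232, Accuracy: 9622/10000 (96%)
"""

# one match per (label, result) pair; \s+ also tolerates blank lines in between
pattern = re.compile(
    r"test num(\d+)\s+Test set: Average loss: ([\d.]+), Accuracy: (\d+)/(\d+)"
)

results = [
    (int(epoch), float(loss), int(correct) / int(total))
    for epoch, loss, correct, total in pattern.findall(log)
]


def first_epoch_reaching(results, threshold):
    """Return the first epoch whose accuracy is at least threshold, or None."""
    for epoch, _loss, accuracy in results:
        if accuracy >= threshold:
            return epoch
    return None


print(first_epoch_reaching(results, 0.96))  # 19
```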

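5.2 Adam (30 epochs)

Adam lives up to its reputation: after the very first epoch it already roughly matches the loss and accuracy that SGD reached at convergence. At num26 the model trained with Adam reaches 99% accuracy on the test set with a loss of 0.0397, but the accuracy does not appear to settle at 99%.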

test num1

Test set: Average loss: 0.1108, Accuracy: 9660/10000 (96%)

test num2

Test set: Average loss: 0.0932, Accuracy: 9709/10000 (97%)

test num3

Test set: Average loss: 0.0628, Accuracy: 9800/10000 (98%)

test num4

Test set: Average loss: 0.0562, Accuracy: 9813/10000 (98%)

test num5

Test set: Average loss: 0.0478, Accuracy: 9832/10000 (98%)

test num6

Test set: Average loss: 0.0442, Accuracy: 9850/10000 (98%)

test num7

Test set: Average loss: 0.0386, Accuracy: 9863/10000 (98%)

test num8

Test set: Average loss: 0.0768, Accuracy: 9753/10000 (97%)

test num9

Test set: Average loss: 0.0343, Accuracy: 9879/10000 (98%)

test num10

Test set: Average loss: 0.0347, Accuracy: 9877/10000 (98%)

test num11

Test set: Average loss: 0.0494, Accuracy: 9825/10000 (98%)

test num12

Test set: Average loss: 0.0571, Accuracy: 9811/10000 (98%)

test num13

Test set: Average loss: 0.0342, Accuracy: 9887/10000 (98%)

test num14

Test set: Average loss: 0.0400, Accuracy: 9870/10000 (98%)

test num15

Test set: Average loss: 0.0339, Accuracy: 9889/10000 (98%)

test num16

Test set: Average loss: 0.0371, Accuracy: 9889/10000 (98%)

test num17

Test set: Average loss: 0.0402, Accuracy: 9872/10000 (98%)

test num18

Test set: Average loss: 0.0434, Accuracy: 9887/10000 (98%)

test num19

Test set: Average loss: 0.0377, Accuracy: 9877/10000 (98%)

test num20

Test set: Average loss: 0.0402, Accuracy: 9883/10000 (98%)

test num21

Test set: Average loss: 0.0407, Accuracy: 9886/10000 (98%)

test num22

Test set: Average loss: 0.0482, Accuracy: 9871/10000 (98%)

test num23

Test set: Average loss: 0.0414, Accuracy: 9891/10000 (98%)

test num24

Test set: Average loss: 0.0407, Accuracy: 9890/10000 (98%)

test num25

Test set: Average loss: 0.0403, Accuracy: 9898/10000 (98%)

test num26

Test set: Average loss: 0.0397, Accuracy: 9902/10000 (99%)

test num27

Test set: Average loss: 0.0491, Accuracy: 9873/10000 (98%)

test num28

Test set: Average loss: 0.0416, Accuracy: 9896/10000 (98%)

test num29

Test set: Average loss: 0.0450, Accuracy: 9897/10000 (98%)

test num30

Test set: Average loss: 0.0500, Accuracy: 9875/10000 (98%)

Process finished with exit code 0
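One property of Adam that may contribute to this fast start is that its update is normalized by a running estimate of the gradient magnitude, so the first step has a size of about lr no matter how large or small the gradient is. The sketch below shows this for a single scalar parameter in plain Python. It assumes lr=0.001 as in this article and the usual defaults beta1=0.9, beta2=0.999, eps=1e-8; adam_step and sgd_step are illustrative helpers, and the SGD step is shown without momentum for simplicity.

```python
import math


def adam_step(param, grad, state, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter; state holds m, v and the step count t."""
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad * grad
    m_hat = state["m"] / (1 - beta1 ** state["t"])  # bias-corrected first moment
    v_hat = state["v"] / (1 - beta2 ** state["t"])  # bias-corrected second moment
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


def sgd_step(param, grad, lr=0.01):
    """One plain SGD update (no momentum) for a scalar parameter."""
    return param - lr * grad


rows = []
for grad in (0.001, 1.0, 100.0):
    state = {"t": 0, "m": 0.0, "v": 0.0}
    adam_delta = 0.0 - adam_step(0.0, grad, state)
    sgd_delta = 0.0 - sgd_step(0.0, grad)
    rows.append((grad, adam_delta, sgd_delta))
    print(grad, adam_delta, sgd_delta)
```

The Adam step stays close to 0.001 for all three gradients, while the SGD step scales linearly with the gradient.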

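5.3 AdaBound (30 epochs)

AdaBound is an optimization algorithm recently proposed by undergraduates from Peking University and Zhejiang University; it aims to train as fast as Adam while performing as well as SGD.

As the log shows, the accuracy already reaches 98% at num4 and num5, with a loss comparable to what Adam settles at (about 0.04 to 0.05). At num8 the accuracy breaks 99% with a loss of 0.0303. In the following epochs the accuracy and loss fluctuate slightly, but as training continues they settle near their best values and the fluctuations stay small.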

test num1

Test set: Average loss: 0.1239, Accuracy: 9614/10000 (96%)

test num2

Test set: Average loss: 0.0965, Accuracy: 9704/10000 (97%)

test num3

Test set: Average loss: 0.0637, Accuracy: 9794/10000 (97%)

test num4

Test set: Average loss: 0.0485, Accuracy: 9852/10000 (98%)

test num5

Test set: Average loss: 0.0403, Accuracy: 9870/10000 (98%)

test num6

Test set: Average loss: 0.0513, Accuracy: 9836/10000 (98%)

test num7

Test set: Average loss: 0.0446, Accuracy: 9856/10000 (98%)

test num8

Test set: Average loss: 0.0303, Accuracy: 9910/10000 (99%)

test num9

Test set: Average loss: 0.0411, Accuracy: 9873/10000 (98%)

test num10

Test set: Average loss: 0.0422, Accuracy: 9870/10000 (98%)

test num11

Test set: Average loss: 0.0319, Accuracy: 9894/10000 (98%)

test num12

Test set: Average loss: 0.0303, Accuracy: 9905/10000 (99%)

test num13

Test set: Average loss: 0.0338, Accuracy: 9897/10000 (98%)

test num14

Test set: Average loss: 0.0313, Accuracy: 9904/10000 (99%)

test num15

Test set: Average loss: 0.0285, Accuracy: 9920/10000 (99%)

test num16

Test set: Average loss: 0.0319, Accuracy: 9917/10000 (99%)

test num17

Test set: Average loss: 0.0427, Accuracy: 9884/10000 (98%)

test num18

Test set: Average loss: 0.0351, Accuracy: 9894/10000 (98%)

test num19

Test set: Average loss: 0.0337, Accuracy: 9897/10000 (98%)

test num20

Test set: Average loss: 0.0321, Accuracy: 9910/10000 (99%)

test num21

Test set: Average loss: 0.0354, Accuracy: 9908/10000 (99%)

test num22

Test set: Average loss: 0.0332, Accuracy: 9905/10000 (99%)

test num23

Test set: Average loss: 0.0347, Accuracy: 9904/10000 (99%)

test num24

Test set: Average loss: 0.0362, Accuracy: 9906/10000 (99%)

test num25

Test set: Average loss: 0.0402, Accuracy: 9900/10000 (99%)

test num26

Test set: Average loss: 0.0380, Accuracy: 9900/10000 (99%)

test num27

Test set: Average loss: 0.0378, Accuracy: 9914/10000 (99%)

test num28

Test set: Average loss: 0.0356, Accuracy: 9913/10000 (99%)

test num29

Test set: Average loss: 0.0360, Accuracy: 9912/10000 (99%)

Process finished with exit code 0
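The idea behind AdaBound, as we understand it, is to clip Adam's per-parameter step size between a lower and an upper bound that both converge to final_lr, so that training starts out like Adam and ends up like SGD. Below is a minimal sketch of that bound schedule in plain Python, assuming final_lr=0.1 as used in this article and gamma=1e-3 (the default we believe the adabound package uses). The real optimizer also tracks Adam's moment estimates, which are left out here; adabound_bounds and clip are illustrative helpers.

```python
def adabound_bounds(step, final_lr=0.1, gamma=1e-3):
    """Lower and upper bounds on the per-parameter step size at a given step (step >= 1)."""
    lower = final_lr * (1 - 1 / (gamma * step + 1))
    upper = final_lr * (1 + 1 / (gamma * step))
    return lower, upper


def clip(value, lower, upper):
    """Clamp value into the interval [lower, upper]."""
    return max(lower, min(value, upper))


bounds = {step: adabound_bounds(step) for step in (1, 1000, 100000, 1000000)}
for step, (lower, upper) in bounds.items():
    # a hypothetical Adam-style step size of 0.5, before and after clipping
    print(step, lower, upper, clip(0.5, lower, upper))
```

At step 1 the interval is so wide that the step size is effectively unconstrained; after a million steps it has narrowed to roughly 0.1, which behaves like SGD with a fixed learning rate of final_lr.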

6. Complete code

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms
import adabound  # third-party package, needed only for the AdaBound optimizer below

# Training settings
batch_size = 64
# use the GPU when one is available, otherwise fall back to the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# Dataset and DataLoader
# MNIST Dataset
# MNIST is built into torchvision.datasets and can be loaded directly
train_dataset = datasets.MNIST(root='./data/',
                               train=True,
                               transform=transforms.ToTensor(),
                               download=True)

test_dataset = datasets.MNIST(root='./data/',
                              train=False,
                              transform=transforms.ToTensor())

# Data Loader (Input Pipeline)
train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                           batch_size=batch_size,
                                           shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                          batch_size=batch_size,
                                          shuffle=False)

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # 1 input channel, 10 output channels, 5*5 kernel
        self.conv1 = nn.Conv2d(in_channels=1, out_channels=10, kernel_size=5)
        self.conv2 = nn.Conv2d(10, 20, 5)
        self.conv3 = nn.Conv2d(20, 40, 3)
        self.mp = nn.MaxPool2d(2)
        # fully connected layer
        self.fc = nn.Linear(40, 10)  # (in_features, out_features)

    def forward(self, x):
        # x is a 4-D tensor of shape (batch_size, channels, height, width);
        # x.size(0) is the batch size (64 here), which we keep as in_size
        in_size = x.size(0)
        # x: 64*1*28*28
        x = F.relu(self.mp(self.conv1(x)))
        # x: 64*10*12*12. (n+2p-f)/s + 1 = 28 - 5 + 1 = 24, so 24*24 before pooling and 12*12 after the 2*2 pool
        x = F.relu(self.mp(self.conv2(x)))
        # x: 64*20*4*4. Likewise 12 - 5 + 1 = 8 before pooling, 4*4 after
        x = F.relu(self.mp(self.conv3(x)))
        # x: 64*40*1*1. 4 - 3 + 1 = 2 before pooling, 1*1 after
        x = x.view(in_size, -1)  # flatten the tensor (a reshape)
        # print(x.size())
        # x: 64*40
        x = self.fc(x)
        # x: 64*10
        # print(x.size())
        return F.log_softmax(x, dim=1)  # 64*10

model = Net().to(device)

# Pick one optimizer and comment out the other two
# optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.5)
# optimizer = adabound.AdaBound(model.parameters(), lr=1e-3, final_lr=0.1)
optimizer = optim.Adam(model.parameters(), lr=0.001)

def train(epoch):
    # enumerate() pairs each item of an iterable with its index,
    # so we get the batch index and the batch itself at the same time
    for batch_idx, (data, target) in enumerate(train_loader):  # batch_idx starts at 0
        # data.size(): [64, 1, 28, 28]
        # target.size(): [64]
        data, target = data.to(device), target.to(device)
        output = model(data)
        # print(batch_idx)
        # output: 64*10
        loss = F.nll_loss(output, target)
        # print the progress once every 200 batches
        # if batch_idx % 200 == 0:
        #     print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
        #         epoch,
        #         batch_idx * len(data),
        #         len(train_loader.dataset),
        #         100. * batch_idx / len(train_loader),
        #         loss.item()))
        optimizer.zero_grad()  # reset the gradients of all parameters
        loss.backward()        # backpropagate to compute the gradients
        optimizer.step()       # let the optimizer update the parameters

def test():
    test_loss = 0
    correct = 0
    # torch.no_grad() replaces the deprecated Variable(data, volatile=True)
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            # accumulate the summed loss of the batch
            # (reduction='sum' replaces the deprecated size_average=False)
            test_loss += F.nll_loss(output, target, reduction='sum').item()
            # get the index of the max log-probability in each row,
            # i.e. the predicted class of each sample
            pred = output.max(1, keepdim=True)[1]
            # print(pred)
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)
    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))

for epoch in range(1, 31):  # 30 epochs: num1 to num30
    print("test num" + str(epoch))
    train(epoch)
    test()

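7. References and acknowledgments

- AdaBound详解【首发】 ("AdaBound Explained in Detail [first published]")

- He Shulin (何树林) gave me a great deal of support in completing this experiment.

- The code for this experiment was adapted from code found online. I accidentally closed the page and can no longer find the source. If you recognize it, please let me know so that I can credit it here.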