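Multiclass Classification with Decision Trees in Python Spark MLlib

Data Preparation

This experiment uses the Covertype dataset from the UCI repository (http://archive.ics.uci.edu/ml/datasets/Covertype); see that page for full details on the dataset.

1. Download and inspect the dataset

Run the following commands in a terminal:

    cd ~/pythonwork/PythonProject/data
    wget http://archive.ics.uci.edu/ml/machine-learning-databases/covtype/covtype.data.gz
    gzip -d covtype.data.gz
    cat covtype.data | more

Each row has 55 comma-separated columns: the first 54 are features and the last is the label, which takes one of 7 classes.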

- Columns 1-10: numerical features, including Elevation, Aspect, and Slope.
- Columns 11-14: categorical feature Wilderness Area (4 areas), one-hot encoded.
- Columns 15-54: categorical feature Soil Type (40 types), one-hot encoded.
- Column 55: the label; 7 classes representing 7 forest cover types.
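As a quick check of this layout, the sketch below slices one row into its four column groups in plain Python. The sample row is made up for illustration (it is not taken from covtype.data), and the helper name split_covtype_row is hypothetical.

```python
# Column layout of one Covertype row (0-based slices for the 1-based ranges above).
NUMERIC = slice(0, 10)      # columns 1-10: numerical features
WILDERNESS = slice(10, 14)  # columns 11-14: one-hot wilderness area
SOIL = slice(14, 54)        # columns 15-54: one-hot soil type
LABEL = 54                  # column 55: cover type label (1-7)


def split_covtype_row(text):
    """Split one comma-separated row into its four column groups."""
    fields = text.strip().split(",")
    if len(fields) != 55:
        raise ValueError("expected 55 columns, got %d" % len(fields))
    values = [float(f) for f in fields]
    return {
        "numeric": values[NUMERIC],
        "wilderness": values[WILDERNESS],
        "soil": values[SOIL],
        "label": int(values[LABEL]),
    }


# Made-up row for illustration: 10 numeric values, wilderness area 1,
# soil type 29, cover type 5.
numeric = ["2500", "45", "5", "100", "10", "300", "220", "230", "140", "1000"]
wilderness = ["1", "0", "0", "0"]
soil = ["1" if i == 28 else "0" for i in range(40)]
sample_row = ",".join(numeric + wilderness + soil + ["5"])

row = split_covtype_row(sample_row)
print(len(row["numeric"]), len(row["wilderness"]), len(row["soil"]), row["label"])
# Each one-hot group should contain exactly one 1.
print(sum(row["wilderness"]), sum(row["soil"]))
```

Because the categorical columns are already 0/1 indicators, every one of the 54 feature columns can be treated as a plain number in the steps that follow.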

2. Start IPython/Jupyter Notebook and load the data

Start IPython/Jupyter Notebook from a terminal:

    cd ~/pythonwork/ipynotebook
    PYSPARK_DRIVER_PYTHON=ipython PYSPARK_DRIVER_PYTHON_OPTS="notebook" MASTER=local[*] pyspark

In the notebook, run the following to set the data path and read the data:

    # Set the data path: local file system when running locally, HDFS otherwise
    global Path
    if sc.master[:5] == "local":
        Path = "file:/home/yyf/pythonwork/PythonProject/"
    else:
        Path = "hdfs://master:9000/user/yyf/"

    # Read covtype.data

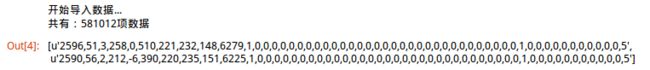

    print("Loading data...")
    rData = sc.textFile(Path + "data/covtype.data")
    # Split each line on commas
    lines = rData.map(lambda x: x.split(","))
    print("Total records: " + str(lines.count()))
    # Show the first 2 records
    rData.take(2)

Data Preprocessing

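1. Processing the features

    # Process the features
    def convert_float(v):
        """Convert a string field to float."""
        return float(v)

    def process_features(line, featureEnd):
        """Build the feature list. line is a list of fields; featureEnd is the index where the features end (54 here)."""
        # All 54 feature columns are numeric (the categorical ones are one-hot encoded as 0/1)
        Features = [convert_float(value) for value in line[:featureEnd]]
        # Return the full feature list
        return Features

2. Processing the label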

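    # Process the label
    def process_label(line):
        # The last field is the class label (1-7); shift it to start at 0,
        # because MLlib expects labels in 0..numClasses-1
        return float(line[-1]) - 1

    process_label(lines.first())

3. Building the LabeledPoint data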

Spark MLlib classification takes its input as LabeledPoint records; each LabeledPoint consists of a label and a feature vector (features).

    # Build the LabeledPoint RDD
    from pyspark.mllib.regression import LabeledPoint
    labelpointRDD = lines.map(lambda r: LabeledPoint(process_label(r),
                                                     process_features(r, len(r) - 1)))
    # Inspect the first LabeledPoint
    labelpointRDD.take(1)

4. Splitting into training, validation, and test sets
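A note on the weights used in this step: randomSplit normalizes them, so [7, 1, 2] means roughly 70% / 10% / 20%, and the set sizes vary slightly from run to run unless a seed is passed (the PySpark RDD method accepts an optional seed argument). The plain-Python sketch below imitates this behavior on a list; the function weighted_split is a hypothetical stand-in, not Spark's implementation.

```python
import random


def weighted_split(items, weights, seed=None):
    """Assign each item to one split with probability proportional to its weight."""
    total = float(sum(weights))
    # Cumulative upper bounds, e.g. about [0.7, 0.8, 1.0] for weights [7, 1, 2].
    bounds = []
    running = 0.0
    for w in weights:
        running += w / total
        bounds.append(running)
    rng = random.Random(seed)
    splits = [[] for _ in weights]
    for item in items:
        r = rng.random()
        for i, upper in enumerate(bounds):
            # The last split catches any value left over by rounding error.
            if r < upper or i == len(bounds) - 1:
                splits[i].append(item)
                break
    return splits


train, validation, test = weighted_split(range(10000), [7, 1, 2], seed=42)
print(len(train), len(validation), len(test))
```

Fixing the seed makes the three sets reproducible, which helps when comparing parameter settings across notebook sessions.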

    # Split into training, validation, and test sets (weights 7:1:2)
    (trainData, validationData, testData) = labelpointRDD.randomSplit([7, 1, 2])
    print("Training samples: " + str(trainData.count()) +
          ", validation samples: " + str(validationData.count()) +
          ", test samples: " + str(testData.count()))
    # Cache the data in memory to speed up later computations
    trainData.persist()
    validationData.persist()
    testData.persist()

Training the Model

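The model is trained with the trainClassifier method of the DecisionTree module in Spark MLlib:

- DecisionTree.trainClassifier(input, numClasses, categoricalFeaturesInfo, impurity, maxDepth, maxBins)

The parameters are:

- (1) input: the training data, as an RDD of LabeledPoint
- (2) numClasses: the number of classes
- (3) categoricalFeaturesInfo: a map describing the categorical features; because the categorical features here are one-hot encoded, it is set to an empty dict
- (4) impurity: the impurity measure used to choose splits, either gini (Gini impurity) or entropy
- (5) maxDepth: the maximum depth of the tree
- (6) maxBins: the maximum number of bins used to discretize continuous features when searching for splits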
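To make the impurity option concrete, the sketch below computes both measures for a node from its class counts, using the textbook definitions (Gini impurity is 1 minus the sum of squared class proportions; entropy is the negative sum of p * log2(p)). The class counts are made up for illustration.

```python
import math


def gini(counts):
    """Gini impurity: 1 - sum(p_i^2) over the class proportions p_i."""
    total = float(sum(counts))
    return 1.0 - sum((c / total) ** 2 for c in counts)


def entropy(counts):
    """Entropy in bits: -sum(p_i * log2(p_i)), skipping empty classes."""
    total = float(sum(counts))
    return -sum((c / total) * math.log(c / total, 2) for c in counts if c > 0)


# Made-up class counts for a node in a 7-class problem.
pure = [100, 0, 0, 0, 0, 0, 0]          # only one class present
uniform = [10, 10, 10, 10, 10, 10, 10]  # all classes equally represented

for name, counts in [("pure", pure), ("uniform", uniform)]:
    print(name, gini(counts), entropy(counts))
```

Both measures are zero for a pure node and largest when the classes are evenly mixed; a split is preferred when it lowers the weighted impurity of the child nodes.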

    # Train a decision tree model (the data has 7 classes, so numClasses=7)
    from pyspark.mllib.tree import DecisionTree
    model = DecisionTree.trainClassifier(trainData, numClasses=7, categoricalFeaturesInfo={},
                                         impurity="entropy", maxDepth=5, maxBins=5)

Model Evaluation

For simplicity, prediction accuracy is used as the evaluation metric, computed with a custom function. (To be honest, the classes in pyspark MLlib's evaluation module kept raising errors when I tried them, for reasons I could not work out.)

    # Define the model evaluation function
    def ModelAccuracy(model, validationData):
        """Return the accuracy of model on validationData."""
        predict = model.predict(validationData.map(lambda p: p.features))
        predict = predict.map(lambda p: float(p))
        # Pair each prediction with the true label
        predict_real = predict.zip(validationData.map(lambda p: p.label))
        matched = predict_real.filter(lambda p: p[0] == p[1])
        accuracy = float(matched.count()) / float(predict_real.count())
        return accuracy

    # Compute the model's accuracy on the validation set
    acc = ModelAccuracy(model, validationData)
    print("accuracy=" + str(acc))

Output: accuracy=0.697843332532
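Overall accuracy can hide weak classes in a 7-class problem. If (prediction, label) pairs like those built inside ModelAccuracy are collected to the driver, per-class recall can be computed in plain Python. The pairs below are made up for illustration, and the helper names are hypothetical.

```python
from collections import Counter


def confusion_counts(pairs):
    """Count how often each (prediction, label) pair occurs."""
    return Counter((int(pred), int(label)) for pred, label in pairs)


def per_class_recall(pairs, num_classes):
    """Recall per class: correct predictions for a class / samples of that class."""
    counts = confusion_counts(pairs)
    recalls = {}
    for label in range(num_classes):
        total = sum(n for (_, actual), n in counts.items() if actual == label)
        if total > 0:
            recalls[label] = counts[(label, label)] / float(total)
    return recalls


def accuracy(pairs):
    """Fraction of pairs where the prediction equals the label."""
    pairs = list(pairs)
    return sum(1 for pred, label in pairs if pred == label) / float(len(pairs))


# Made-up (prediction, label) pairs for a 3-class toy case.
pairs = [(0.0, 0.0), (0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
print("accuracy =", accuracy(pairs))
print("recall per class =", per_class_recall(pairs, num_classes=3))
```

In this toy case the overall accuracy is 4 out of 6, yet class 2 is recognized only half the time, which a single accuracy figure would not reveal.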

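Choosing Model Parameters

The DecisionTree parameters impurity, maxDepth, and maxBins affect both the accuracy and the training time. The experiments below evaluate different values for each.

First, define a trainEvaluateModel function that trains a model, evaluates it, and measures how long training plus evaluation takes.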
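A note on the timing part: wall-clock time is the quantity of interest here, since much of the work is done by Spark rather than by the Python driver process. The sketch below shows the pattern with a hypothetical helper timed and a stand-in workload in place of model training. Note that time.clock() was removed in Python 3.8, so time.time() or time.perf_counter() is the portable choice.

```python
import time


def timed(func, *args, **kwargs):
    """Call func and return (result, elapsed wall-clock seconds)."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    elapsed = time.perf_counter() - start
    return result, elapsed


def slow_sum(n):
    """Stand-in workload; in this article it would be training plus evaluation."""
    return sum(i * i for i in range(n))


result, seconds = timed(slow_sum, 100000)
print("result=%d, elapsed=%.4f s" % (result, seconds))
```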

    # trainEvaluateModel: train, evaluate, and time one parameter combination
    from time import time

    def trainEvaluateModel(trainData, validationData, impurityParm, maxDepthParm, maxBinsParm):
        startTime = time()
        # Build and train the model
        model = DecisionTree.trainClassifier(trainData, numClasses=7, categoricalFeaturesInfo={},
                                             impurity=impurityParm, maxDepth=maxDepthParm, maxBins=maxBinsParm)
        # Compute the accuracy on the validation set
        accuracy = ModelAccuracy(model, validationData)
        duration = time() - startTime  # elapsed wall-clock time in seconds
        print("Train/evaluate: impurity=" + str(impurityParm) +
              ", maxDepth=" + str(maxDepthParm) + ", maxBins=" + str(maxBinsParm) + "\n" +
              "===>time=" + str(duration) + ", accuracy=" + str(accuracy))
        return accuracy, duration, impurityParm, maxDepthParm, maxBinsParm, model

1. Evaluating the impurity parameter

    # Evaluate the impurity parameter
    impurityList = ["gini", "entropy"]
    maxDepthList = [10]
    maxBinsList = [10]
    # Collect the results in metrics
    metrics = [trainEvaluateModel(trainData, validationData, impurity, maxDepth, maxBins)
               for impurity in impurityList
               for maxDepth in maxDepthList
               for maxBins in maxBinsList]

Output:

    Train/evaluate: impurity=gini, maxDepth=10, maxBins=10
    ===>time=6.00259780884, accuracy=0.774288265394
    Train/evaluate: impurity=entropy, maxDepth=10, maxBins=10
    ===>time=15.1357119083, accuracy=0.764844259762

In this example, training with entropy as the impurity measure takes about 2.5 times as long as with gini (15.1 s versus 6.0 s), while the two accuracies are close (0.765 versus 0.774).

2. Evaluating the maxDepth parameter

    # Evaluate the maxDepth parameter
    impurityList = ["gini"]
    maxDepthList = [3, 5, 10, 15, 20, 25, 30]
    maxBinsList = [10]
    # Collect the results in metrics
    metrics = [trainEvaluateModel(trainData, validationData, impurity, maxDepth, maxBins)
               for impurity in impurityList
               for maxDepth in maxDepthList
               for maxBins in maxBinsList]

Output:

    Train/evaluate: impurity=gini, maxDepth=3, maxBins=10
    ===>time=8.66924381256, accuracy=0.673065695937
    Train/evaluate: impurity=gini, maxDepth=5, maxBins=10
    ===>time=10.3711090088, accuracy=0.69789484529
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=10
    ===>time=6.82818198204, accuracy=0.774288265394
    Train/evaluate: impurity=gini, maxDepth=15, maxBins=10
    ===>time=8.52619194984, accuracy=0.84728184347
    Train/evaluate: impurity=gini, maxDepth=20, maxBins=10
    ===>time=15.7296249866, accuracy=0.88936776675
    Train/evaluate: impurity=gini, maxDepth=25, maxBins=10
    ===>time=36.0758471489, accuracy=0.906281122291
    Train/evaluate: impurity=gini, maxDepth=30, maxBins=10
    ===>time=56.580780983, accuracy=0.910539510285

In this example maxDepth is the key factor for accuracy: accuracy rises steadily with maxDepth, from about 67% at depth 3 to about 91% at depth 30. The time spent also grows, most sharply beyond depth 15 (from about 8.5 s at depth 15 to about 56.6 s at depth 30). A very large maxDepth also carries a risk of overfitting. (Note: the largest maxDepth that Spark MLlib's DecisionTree supports is 30.)
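One way to see why depth drives both accuracy and cost: MLlib decision trees are binary, so a tree of depth d can have at most 2^(d+1) - 1 nodes (depth 0 being a single leaf). The sketch below tabulates this upper bound for the depths tested above; it is only a bound, and a trained tree can be much smaller because branches stop early.

```python
def max_nodes(depth):
    """Upper bound on the node count of a binary tree with the given depth."""
    return 2 ** (depth + 1) - 1


for depth in [3, 5, 10, 15, 20, 25, 30]:
    print("maxDepth=%2d -> at most %d nodes" % (depth, max_nodes(depth)))
```

The bound doubles with every extra level, so each increase in maxDepth gives the tree room to fit the training data far more closely.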

3. Evaluating the maxBins parameter

    # Evaluate the maxBins parameter
    impurityList = ["gini"]
    maxDepthList = [10]
    maxBinsList = [5, 10, 15, 100, 200, 500]
    # Collect the results in metrics
    metrics = [trainEvaluateModel(trainData, validationData, impurity, maxDepth, maxBins)
               for impurity in impurityList
               for maxDepth in maxDepthList
               for maxBins in maxBinsList]

Output:

    Train/evaluate: impurity=gini, maxDepth=10, maxBins=5
    ===>time=6.18475580215, accuracy=0.764552354133
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=10
    ===>time=5.54818701744, accuracy=0.774288265394
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=15
    ===>time=5.20354104042, accuracy=0.776571997665
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=100
    ===>time=5.72198414803, accuracy=0.78464232975
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=200
    ===>time=7.25856208801, accuracy=0.777550740067
    Train/evaluate: impurity=gini, maxDepth=10, maxBins=500
    ===>time=12.7459459305, accuracy=0.778254747759

Large maxBins values take longer (12.7 s at 500 versus about 5 to 6 s at 100 or below). Accuracy is slightly higher than with maxBins=5 but varies little overall (0.765 to 0.785). maxBins is therefore probably not a critical parameter in this example, and for efficiency it should not be set too large.
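To illustrate what maxBins controls, the sketch below picks candidate split thresholds for one continuous feature by cutting its sorted values into roughly equal-sized bins. This is a simplified illustration of equal-frequency binning, not Spark's actual implementation; the function name and the sample values are made up.

```python
def candidate_thresholds(values, max_bins):
    """Return at most max_bins - 1 thresholds at roughly equal-frequency cut points."""
    ordered = sorted(values)
    n = len(ordered)
    thresholds = []
    for k in range(1, max_bins):
        # Index of the k-th cut point when splitting n values into max_bins bins.
        idx = (k * n) // max_bins
        if 0 < idx < n:
            cut = (ordered[idx - 1] + ordered[idx]) / 2.0
            # Skip repeated cut points, which occur when bins outnumber the values.
            if not thresholds or cut > thresholds[-1]:
                thresholds.append(cut)
    return thresholds


# Made-up elevation-like values.
values = [1900, 2100, 2300, 2500, 2600, 2700, 2800, 2900, 3100, 3300, 3500, 3800]
for bins in [2, 4, 100]:
    found = candidate_thresholds(values, bins)
    print("maxBins=%d -> %d candidate thresholds" % (bins, len(found)))
```

More bins mean more candidate splits to evaluate per continuous feature, which is consistent with the longer times at maxBins=200 and 500 above; once the bins are fine enough, extra candidates add little.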

4. Grid search for the best parameter combination

    # gridSearch: try every parameter combination and return the most accurate one
    def gridSearch(trainData, validationData, impurityList, maxDepthList, maxBinsList):
        metrics = [trainEvaluateModel(trainData, validationData, impurity, maxDepth, maxBins)
                   for impurity in impurityList
                   for maxDepth in maxDepthList
                   for maxBins in maxBinsList]
        # Sort by accuracy in descending order and take the top combination
        sorted_metrics = sorted(metrics, key=lambda k: k[0], reverse=True)
        best_parameters = sorted_metrics[0]
        print("Best parameters: impurity=" + str(best_parameters[2]) +
              ", maxDepth=" + str(best_parameters[3]) + ", maxBins=" + str(best_parameters[4]) + "\n" +
              ", accuracy=" + str(best_parameters[0]))
        return best_parameters

    # Parameter grid
    impurityList = ["gini", "entropy"]
    maxDepthList = [3, 5, 10, 20, 25, 30]
    maxBinsList = [5, 10, 50, 100, 200]
    # Run the search and get the best combination
    best_parameters = gridSearch(trainData, validationData, impurityList, maxDepthList, maxBinsList)

Output:

    Best parameters: impurity=entropy, maxDepth=30, maxBins=200
    , accuracy=0.938201861328

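This combination gives the highest accuracy, but it is also slow: time=339.578785181, more than 5 minutes. Compare another combination from the same search:

    Train/evaluate: impurity=gini, maxDepth=30, maxBins=200
    ===>time=56.0052630901, accuracy=0.932947560012

Switching impurity from entropy to gini cuts the time by a factor of about 6 (339.6 s to 56.0 s), while accuracy is nearly the same (0.933 versus 0.938). On balance, the chosen combination is impurity=gini, maxDepth=30, maxBins=200.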
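This trade-off can be written down as a rule: among all combinations whose accuracy is within some tolerance of the best, take the fastest. The sketch below applies that rule to the two results quoted above; the tolerance of 0.01 and the function name are assumptions chosen for illustration.

```python
def fastest_within_tolerance(results, tolerance):
    """Pick the fastest result whose accuracy is within tolerance of the best.

    Each result is a tuple (accuracy, duration, impurity, maxDepth, maxBins),
    the same field order that trainEvaluateModel returns, without the model.
    """
    best_accuracy = max(r[0] for r in results)
    candidates = [r for r in results if best_accuracy - r[0] <= tolerance]
    return min(candidates, key=lambda r: r[1])


# The two results quoted in the text.
results = [
    (0.938201861328, 339.578785181, "entropy", 30, 200),
    (0.932947560012, 56.0052630901, "gini", 30, 200),
]

chosen = fastest_within_tolerance(results, tolerance=0.01)
print("chosen: impurity=%s, maxDepth=%d, maxBins=%d" % chosen[2:5])
print("speedup: %.2fx" % (results[0][1] / chosen[1]))
```

With a stricter tolerance such as 0.001 the rule would keep the entropy model, so the tolerance encodes how much accuracy one is willing to trade for speed.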

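Checking for Overfitting

The previous step selected the combination impurity=gini, maxDepth=30, maxBins=200, along with its accuracy on the validation set. To check for overfitting, train a model with these parameters and compare its accuracy on the training data and on the test data:

    # Train with the chosen parameters impurity=gini, maxDepth=30, maxBins=200
    best_model = DecisionTree.trainClassifier(trainData, numClasses=7, categoricalFeaturesInfo={},
                                              impurity="gini", maxDepth=30, maxBins=200)
    acc1 = ModelAccuracy(best_model, trainData)
    acc2 = ModelAccuracy(best_model, testData)
    print("training: accuracy=" + str(acc1))
    print("testing: accuracy=" + str(acc2))

Output:

    training: accuracy=0.996238068777
    testing: accuracy=0.929162215253

The training accuracy is close to 100% while the test accuracy is about 93%, a gap of roughly 6.7 percentage points, so the model is (mildly) overfitting.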
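The size of the gap can be made explicit. A small sketch using the two accuracies reported above; the 0.05 threshold for flagging overfitting is an arbitrary assumption for illustration, not a standard value.

```python
def generalization_gap(train_accuracy, test_accuracy):
    """Difference between training accuracy and test accuracy."""
    return train_accuracy - test_accuracy


# Accuracies reported in the output above.
train_acc = 0.996238068777
test_acc = 0.929162215253

gap = generalization_gap(train_acc, test_acc)
print("gap = %.4f" % gap)

# Assumed, arbitrary threshold for flagging a model as overfitting.
GAP_THRESHOLD = 0.05
print("overfitting flagged:", gap > GAP_THRESHOLD)
```

Computing the same gap for a shallower tree would show whether a smaller maxDepth narrows it, at the cost of some validation accuracy.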