- Data download link: http://yann.lecun.com/exdb/mnist/

- Download these four files: t10k-images-idx3-ubyte.gz, t10k-labels-idx1-ubyte.gz, train-images-idx3-ubyte.gz, train-labels-idx1-ubyte.gz

- Recognizing handwritten digits with a simple neural network

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

FLAGS = tf.app.flags.FLAGS

# is_train == 1 trains and saves a checkpoint; any other value restores it and predicts
tf.app.flags.DEFINE_integer("is_train", 1, "Whether the program trains (1) or predicts (0)")


def full_connected():
    '''
    Recognize handwritten digit images with a simple neural network
    :return: None
    '''
    # Load MNIST with one-hot encoded labels
    mnist = input_data.read_data_sets("./data/mnist/", one_hot=True)

    # 1. Placeholders: flattened 28*28 images and one-hot targets
    with tf.variable_scope("data"):
        x = tf.placeholder(tf.float32, [None, 784])
        y_true = tf.placeholder(tf.int32, [None, 10])

    # 2. One fully connected layer: [None, 784] x [784, 10] + [10] = [None, 10]
    with tf.variable_scope("fc_model"):
        weight = tf.Variable(tf.random_normal([784, 10], mean=0.0, stddev=1.0), name="w")
        bias = tf.Variable(tf.constant(0.0, shape=[10]))
        y_predict = tf.matmul(x, weight) + bias

    # 3. Mean softmax cross-entropy loss over the batch
    with tf.variable_scope("soft_cross"):
        loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_true, logits=y_predict))

    # 4. Gradient descent on the loss
    with tf.variable_scope("optimizer"):
        train_op = tf.train.GradientDescentOptimizer(0.1).minimize(loss)

    # 5. Accuracy: fraction of samples whose predicted class matches the target
    with tf.variable_scope("acc"):
        equal_list = tf.equal(tf.argmax(y_true, 1), tf.argmax(y_predict, 1))
        accuracy = tf.reduce_mean(tf.cast(equal_list, tf.float32))

    # Collect scalars and histograms for TensorBoard
    tf.summary.scalar("losses", loss)
    tf.summary.scalar("acc", accuracy)
    tf.summary.histogram("weights", weight)
    tf.summary.histogram("biases", bias)
    merged = tf.summary.merge_all()

    saver = tf.train.Saver()
    init_op = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init_op)
        filewriter = tf.summary.FileWriter("./summary/", graph=sess.graph)

        if FLAGS.is_train == 1:
            for i in range(2000):
                mnist_x, mnist_y = mnist.train.next_batch(100)
                sess.run(train_op, feed_dict={x: mnist_x, y_true: mnist_y})
                summary = sess.run(merged, feed_dict={x: mnist_x, y_true: mnist_y})
                filewriter.add_summary(summary, i)
                print("Training step %d, accuracy: %f" % (i, sess.run(accuracy, feed_dict={x: mnist_x, y_true: mnist_y})))
            # Save the trained parameters once training has finished
            saver.save(sess, "./data/ckpt/fc_model")
        else:
            saver.restore(sess, "./data/ckpt/fc_model")
            for i in range(100):
                # Predict one test image at a time
                x_test, y_test = mnist.test.next_batch(1)
                # eval() returns a length-1 array, so take element [0] before formatting with %d
                print("Image %d: target digit is %d, predicted digit is %d" % (
                    i,
                    tf.argmax(y_test, 1).eval()[0],
                    tf.argmax(sess.run(y_predict, feed_dict={x: x_test, y_true: y_test}), 1).eval()[0]
                ))
    return None


if __name__ == '__main__':
    full_connected()
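To make the loss and accuracy ops above more concrete, here is a minimal pure-Python sketch of what the mean softmax cross-entropy and the argmax-based accuracy compute for a small batch. The helper names (`log_softmax`, `cross_entropy`, `mean_loss`, `batch_accuracy`) and the sample numbers are made up for illustration; this is not how TensorFlow implements these ops internally.

```python
import math


def log_softmax(logits):
    """Log-probabilities for one row of logits (max is subtracted for stability)."""
    m = max(logits)
    log_total = math.log(sum(math.exp(v - m) for v in logits))
    return [(v - m) - log_total for v in logits]


def cross_entropy(one_hot, logits):
    """Cross-entropy between a one-hot target and softmax(logits)."""
    return -sum(t * lp for t, lp in zip(one_hot, log_softmax(logits)))


def mean_loss(labels, logits_batch):
    """Average the per-sample losses, as tf.reduce_mean does for the batch."""
    losses = [cross_entropy(t, z) for t, z in zip(labels, logits_batch)]
    return sum(losses) / len(losses)


def argmax(row):
    """Index of the largest value in a row."""
    return max(range(len(row)), key=lambda i: row[i])


def batch_accuracy(labels, logits_batch):
    """Fraction of samples whose predicted class equals the target class."""
    hits = [argmax(t) == argmax(z) for t, z in zip(labels, logits_batch)]
    return sum(hits) / len(hits)


# Hypothetical 3-class batch: the first sample is classified correctly, the second is not
labels = [[1, 0, 0], [0, 1, 0]]
logits = [[2.0, 1.0, 0.1], [0.5, 0.2, 3.0]]
print("loss:", mean_loss(labels, logits))
print("accuracy:", batch_accuracy(labels, logits))
```

Note that accuracy only looks at which logit is largest, while the loss also depends on how confident the prediction is, so the two can move differently during training.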

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data


def weight_variables(shape):
    '''
    Initialize weights
    :param shape: shape of the weight tensor
    :return: w, the initialized weights
    '''
    w = tf.Variable(tf.random_normal(shape=shape, mean=0.0, stddev=1.0))
    return w


def bias_variables(shape):
    '''
    Initialize biases
    :param shape: shape of the bias tensor
    :return: b, the initialized biases
    '''
    b = tf.Variable(tf.constant(0.1, shape=shape))
    return b


def model():
    '''
    Custom convolutional model
    Conv layer 1: 32 filters, 5*5, strides=1, padding="SAME"; pooling: 2*2, strides=2, padding="SAME"
    Conv layer 2: 64 filters, 5*5, strides=1, padding="SAME"; pooling: 2*2, strides=2, padding="SAME"
    :return: x, y_true, y_predict
    '''
    # 1. Placeholders: flattened 28*28 images and one-hot targets
    with tf.variable_scope("data"):
        x = tf.placeholder(tf.float32, [None, 784])
        y_true = tf.placeholder(tf.int32, [None, 10])

    # 2. Conv layer 1: [None, 28, 28, 1] -> [None, 28, 28, 32] -> pool -> [None, 14, 14, 32]
    with tf.variable_scope("conv1"):
        w_conv1 = weight_variables([5, 5, 1, 32])
        b_conv1 = bias_variables([32])
        # conv2d expects a 4-D input: [batch, height, width, channels]
        x_reshape = tf.reshape(x, [-1, 28, 28, 1])
        x_relu1 = tf.nn.relu(tf.nn.conv2d(x_reshape, w_conv1, strides=[1, 1, 1, 1], padding="SAME") + b_conv1)
        x_pool1 = tf.nn.max_pool(x_relu1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

    # 3. Conv layer 2: [None, 14, 14, 32] -> [None, 14, 14, 64] -> pool -> [None, 7, 7, 64]
    with tf.variable_scope("conv2"):
        w_conv2 = weight_variables([5, 5, 32, 64])
        b_conv2 = bias_variables([64])
        x_relu2 = tf.nn.relu(tf.nn.conv2d(x_pool1, w_conv2, strides=[1, 1, 1, 1], padding="SAME") + b_conv2)
        x_pool2 = tf.nn.max_pool(x_relu2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

    # 4. Fully connected layer: [None, 7*7*64] x [7*7*64, 10] + [10] = [None, 10]
    with tf.variable_scope("fc"):
        w_fc = weight_variables([7 * 7 * 64, 10])
        b_fc = bias_variables([10])
        x_fc_reshape = tf.reshape(x_pool2, [-1, 7 * 7 * 64])
        y_predict = tf.matmul(x_fc_reshape, w_fc) + b_fc

    return x, y_true, y_predict


def conv_fc():
    # Load MNIST with one-hot encoded labels
    mnist = input_data.read_data_sets("./data/mnist/", one_hot=True)

    x, y_true, y_predict = model()

    # Mean softmax cross-entropy loss over the batch
    with tf.variable_scope("soft_cross"):
        loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_true, logits=y_predict))

    # Gradient descent on the loss
    with tf.variable_scope("optimizer"):
        train_op = tf.train.GradientDescentOptimizer(0.0001).minimize(loss)

    # Accuracy: fraction of samples whose predicted class matches the target
    with tf.variable_scope("acc"):
        equal_list = tf.equal(tf.argmax(y_true, 1), tf.argmax(y_predict, 1))
        accuracy = tf.reduce_mean(tf.cast(equal_list, tf.float32))

    init_op = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init_op)
        for i in range(3000):
            mnist_x, mnist_y = mnist.train.next_batch(50)
            sess.run(train_op, feed_dict={x: mnist_x, y_true: mnist_y})
            print("Training step %d, accuracy: %f" % (i, sess.run(accuracy, feed_dict={x: mnist_x, y_true: mnist_y})))


if __name__ == '__main__':
    conv_fc()
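The fully connected layer above expects 7*7*64 input features. As a quick check of where that number comes from, the sketch below traces the spatial size through the two conv/pool stages, using the rule that with padding="SAME" the output size is ceil(input / stride). The function names `same_out` and `trace_shapes` are hypothetical helpers written for this note, not TensorFlow APIs.

```python
import math


def same_out(size, stride):
    """Output size along one dimension for padding="SAME"."""
    return math.ceil(size / stride)


def trace_shapes(height=28, width=28):
    """Follow (height, width, channels) through conv1/pool1/conv2/pool2."""
    shapes = [("input", (height, width, 1))]
    h, w = height, width
    for name, channels in (("conv1", 32), ("conv2", 64)):
        # Convolution with stride 1 and SAME padding keeps height and width
        h, w = same_out(h, 1), same_out(w, 1)
        shapes.append((name, (h, w, channels)))
        # 2*2 max pooling with stride 2 halves height and width (rounded up)
        h, w = same_out(h, 2), same_out(w, 2)
        shapes.append((name + "_pool", (h, w, channels)))
    return shapes


for layer, shape in trace_shapes():
    print(layer, shape)

final_h, final_w, final_c = trace_shapes()[-1][1]
print("flattened features:", final_h * final_w * final_c)
```

The final shape is (7, 7, 64), which matches the `[7*7*64, 10]` weight shape used in the "fc" scope.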

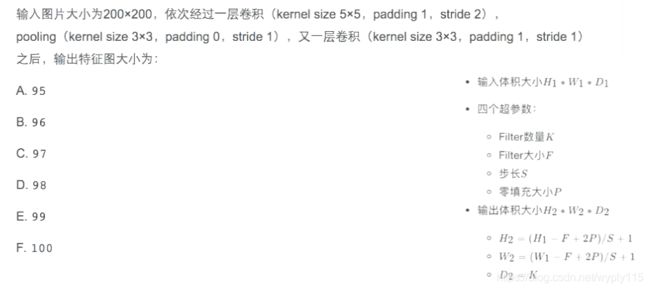

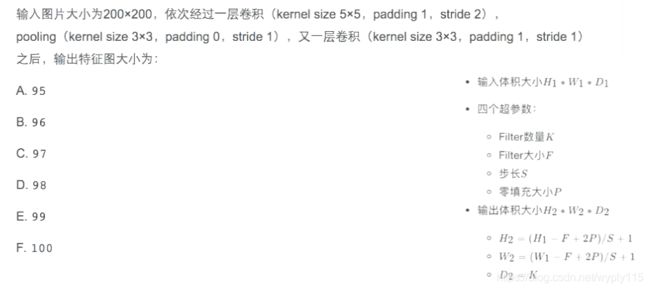

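- A written-exam question

Calculation (the number of channels does not affect the output size):

- After the first convolution: height H2 = (200 - 5 + 2*1)/2 + 1 = 99.5. The result is not an integer, so look at how the kernel actually slides: with a stride of 2, the leftover half step corresponds to one remaining column that the kernel never reaches. Because padding=1, that column is zero padding and does not need to be covered, so the result is rounded down to 99. Height and width are equal, so the width is not computed separately.

- After pooling: H2 = (99 - 3 + 2*0)/1 + 1 = 97

- After the second convolution: H2 = (97 - 3 + 2*1)/1 + 1 = 97, so the final output size is 97*97

So the answer is: C. 97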
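The three steps above can be checked with a few lines of Python. This is a small sketch assuming the usual formula floor((size + 2*padding - kernel) / stride) + 1; the function name `conv_output_size` is a hypothetical helper, and the layer parameters are the ones used in the calculation above.

```python
def conv_output_size(size, kernel, padding, stride):
    """Output size along one dimension; integer division rounds down."""
    return (size + 2 * padding - kernel) // stride + 1


h = 200
h = conv_output_size(h, kernel=5, padding=1, stride=2)  # first convolution
print("after conv 1:", h)
h = conv_output_size(h, kernel=3, padding=0, stride=1)  # pooling
print("after pooling:", h)
h = conv_output_size(h, kernel=3, padding=1, stride=1)  # second convolution
print("after conv 2:", h)
```

The integer division is what turns 99.5 into 99 in the first step.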