【Python实例第10讲】可视化股票市场结构

机器学习训练营——机器学习爱好者的自由交流空间(入群联系qq:2279055353)

本例采用几个无监督学习技术,从股票的历史报价变异里提取股票市场结构。这里,我们使用的数量是每日的报价变异。

学习一个图结构

我们使用稀疏的可逆协方差估计寻找哪些报价是条件相关的,即,给定其它报价下,它们是相关的。特别地,稀疏的可逆协方差估计给出了一个图,这个图实际上是一个报价的连接表。对于每一个标记(即报价),与之连接的标记对解释它的波动情况是有用的。

聚类

我们使用聚类的方法将相似的报价分到一起。具体地,我们使用AP聚类法(Affinity propagation Clustering). AP不要求各类大小相等,而且能根据数据自动确定类数。

聚类与图的区别在于,图反映了变量间的条件关系,而聚类反映了边际属性,即,被聚在一起的变量对完全的股票市场有相似的影响。

可视化

我们在一个2D图里同时输出3个模型,图中的节点代表股票,边代表:

-

类标签被用来定义节点的颜色

-

稀疏的协方差模型被用来表示节点力

-

2D嵌入被用来表示节点的位置

这个例子涉及大量的可视化代码,因为可视化对于图形表示是重要的。挑战之一是如何定位标签的位置,使重叠最少,这样图形更清楚可见。为此,我们沿着每个轴的最近邻方向使用一个启发式的方法。

实例详解

首先,加载必需的模块和函数库。

from __future__ import print_function

# Author: Gael Varoquaux [email protected]

# License: BSD 3 clause

import sys

from datetime import datetime

import numpy as np

import matplotlib.pyplot as plt

from matplotlib.collections import LineCollection

import pandas as pd

from sklearn import cluster, covariance, manifold

print(__doc__)

从因特网获得数据

本例使用的数据来自2003年——2008年的股票市场历史资料。这种历史数据能够从quandl.com,

alphavantage.co这样的API获得。我们将获得的数据定义成一个数组对象,定义股票变异为收盘价与开盘价的差。

# The data is from 2003 - 2008. This is reasonably calm: (not too long ago so

# that we get high-tech firms, and before the 2008 crash). This kind of

# historical data can be obtained for from APIs like the quandl.com and

# alphavantage.co ones.

start_date = datetime(2003, 1, 1).date()

end_date = datetime(2008, 1, 1).date()

symbol_dict = {

'TOT': 'Total',

'XOM': 'Exxon',

'CVX': 'Chevron',

'COP': 'ConocoPhillips',

'VLO': 'Valero Energy',

'MSFT': 'Microsoft',

'IBM': 'IBM',

'TWX': 'Time Warner',

'CMCSA': 'Comcast',

'CVC': 'Cablevision',

'YHOO': 'Yahoo',

'DELL': 'Dell',

'HPQ': 'HP',

'AMZN': 'Amazon',

'TM': 'Toyota',

'CAJ': 'Canon',

'SNE': 'Sony',

'F': 'Ford',

'HMC': 'Honda',

'NAV': 'Navistar',

'NOC': 'Northrop Grumman',

'BA': 'Boeing',

'KO': 'Coca Cola',

'MMM': '3M',

'MCD': 'McDonald\'s',

'PEP': 'Pepsi',

'K': 'Kellogg',

'UN': 'Unilever',

'MAR': 'Marriott',

'PG': 'Procter Gamble',

'CL': 'Colgate-Palmolive',

'GE': 'General Electrics',

'WFC': 'Wells Fargo',

'JPM': 'JPMorgan Chase',

'AIG': 'AIG',

'AXP': 'American express',

'BAC': 'Bank of America',

'GS': 'Goldman Sachs',

'AAPL': 'Apple',

'SAP': 'SAP',

'CSCO': 'Cisco',

'TXN': 'Texas Instruments',

'XRX': 'Xerox',

'WMT': 'Wal-Mart',

'HD': 'Home Depot',

'GSK': 'GlaxoSmithKline',

'PFE': 'Pfizer',

'SNY': 'Sanofi-Aventis',

'NVS': 'Novartis',

'KMB': 'Kimberly-Clark',

'R': 'Ryder',

'GD': 'General Dynamics',

'RTN': 'Raytheon',

'CVS': 'CVS',

'CAT': 'Caterpillar',

'DD': 'DuPont de Nemours'}

symbols, names = np.array(sorted(symbol_dict.items())).T

quotes = []

for symbol in symbols:

print('Fetching quote history for %r' % symbol, file=sys.stderr)

url = ('https://raw.githubusercontent.com/scikit-learn/examples-data/'

'master/financial-data/{}.csv')

quotes.append(pd.read_csv(url.format(symbol)))

close_prices = np.vstack([q['close'] for q in quotes])

open_prices = np.vstack([q['open'] for q in quotes])

# The daily variations of the quotes are what carry most information

variation = close_prices - open_prices

根据相关性学习图结构

edge_model = covariance.GraphicalLassoCV(cv=5)

# standardize the time series: using correlations rather than covariance

# is more efficient for structure recovery

X = variation.copy().T

X /= X.std(axis=0)

edge_model.fit(X)

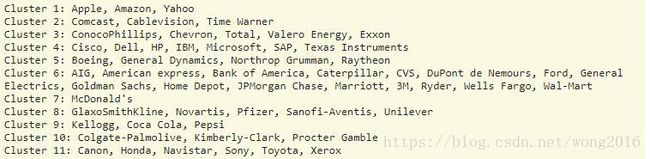

使用AP算法聚类

_, labels = cluster.affinity_propagation(edge_model.covariance_)

n_labels = labels.max()

for i in range(n_labels + 1):

print('Cluster %i: %s' % ((i + 1), ', '.join(names[labels == i])))

定位节点的最佳位置

# We use a dense eigen_solver to achieve reproducibility (arpack is

# initiated with random vectors that we don't control). In addition, we

# use a large number of neighbors to capture the large-scale structure.

node_position_model = manifold.LocallyLinearEmbedding(

n_components=2, eigen_solver='dense', n_neighbors=6)

embedding = node_position_model.fit_transform(X.T).T

可视化股票结构图

plt.figure(1, facecolor='w', figsize=(10, 8))

plt.clf()

ax = plt.axes([0., 0., 1., 1.])

plt.axis('off')

# Display a graph of the partial correlations

partial_correlations = edge_model.precision_.copy()

d = 1 / np.sqrt(np.diag(partial_correlations))

partial_correlations *= d

partial_correlations *= d[:, np.newaxis]

non_zero = (np.abs(np.triu(partial_correlations, k=1)) > 0.02)

# Plot the nodes using the coordinates of our embedding

plt.scatter(embedding[0], embedding[1], s=100 * d ** 2, c=labels,

cmap=plt.cm.nipy_spectral)

# Plot the edges

start_idx, end_idx = np.where(non_zero)

# a sequence of (*line0*, *line1*, *line2*), where::

# linen = (x0, y0), (x1, y1), ... (xm, ym)

segments = [[embedding[:, start], embedding[:, stop]]

for start, stop in zip(start_idx, end_idx)]

values = np.abs(partial_correlations[non_zero])

lc = LineCollection(segments,

zorder=0, cmap=plt.cm.hot_r,

norm=plt.Normalize(0, .7 * values.max()))

lc.set_array(values)

lc.set_linewidths(15 * values)

ax.add_collection(lc)

# Add a label to each node. The challenge here is that we want to

# position the labels to avoid overlap with other labels

for index, (name, label, (x, y)) in enumerate(

zip(names, labels, embedding.T)):

dx = x - embedding[0]

dx[index] = 1

dy = y - embedding[1]

dy[index] = 1

this_dx = dx[np.argmin(np.abs(dy))]

this_dy = dy[np.argmin(np.abs(dx))]

if this_dx > 0:

horizontalalignment = 'left'

x = x + .002

else:

horizontalalignment = 'right'

x = x - .002

if this_dy > 0:

verticalalignment = 'bottom'

y = y + .002

else:

verticalalignment = 'top'

y = y - .002

plt.text(x, y, name, size=10,

horizontalalignment=horizontalalignment,

verticalalignment=verticalalignment,

bbox=dict(facecolor='w',

edgecolor=plt.cm.nipy_spectral(label / float(n_labels)),

alpha=.6))

plt.xlim(embedding[0].min() - .15 * embedding[0].ptp(),

embedding[0].max() + .10 * embedding[0].ptp(),)

plt.ylim(embedding[1].min() - .03 * embedding[1].ptp(),

embedding[1].max() + .03 * embedding[1].ptp())

plt.show()

阅读更多精彩内容,请关注微信公众号:统计学习与大数据