PyTorch Learning Series (9): Parameter Initialization

This post is reposted from the following sources:

https://www.cnblogs.com/lindaxin/p/8037561.html

https://blog.csdn.net/qq_19598705/article/details/80396047

I previously studied weight initialization methods for neural networks. So how are they implemented in PyTorch?

PyTorch provides a number of parameter initialization functions:

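torch.nn.init.constant_(tensor, val)

torch.nn.init.normal_(tensor, mean=0, std=1)

torch.nn.init.xavier_uniform_(tensor, gain=1)

and so on. (Before PyTorch 0.4 these functions were named without the trailing underscore, e.g. torch.nn.init.constant; the underscore marks them as in-place operations.) For details, see: http://pytorch.org/docs/nn.html#torch-nn-init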

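Note the tensor argument of these functions: although it is called tensor, it may also be a Variable. A network's parameters have type Parameter, which is a subclass of Variable, so the initialization functions can be applied to network parameters directly. (Since PyTorch 0.4, Variable has been merged into Tensor and Parameter is a subclass of Tensor, so this still holds.)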

Example:

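import torch.nn as nn
from torch.nn import init

class Net(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)
        init.xavier_uniform_(self.conv1.weight)  # Xavier uniform for the weights
        init.constant_(self.conv1.bias, 0.1)     # constant 0.1 for the bias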
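A minimal check of the point above, assuming PyTorch 0.4 or later (where Variable is merged into Tensor): the `init.*_` functions accept a layer's `Parameter` directly and modify it in place. The bound in the last check is the Xavier-uniform limit sqrt(6 / (fan_in + fan_out)) with gain = 1; the small tolerance only absorbs float32 rounding.

```python
import math

import torch
import torch.nn as nn
from torch.nn import init

conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)

# Parameter is a subclass of Tensor, so it can be passed straight to init functions
print(isinstance(conv1.weight, torch.Tensor))  # True

init.xavier_uniform_(conv1.weight)  # modifies the parameter in place, no .data needed
init.constant_(conv1.bias, 0.1)

# For a conv weight of shape (out, in, kH, kW): fan_in = in*kH*kW, fan_out = out*kH*kW
fan_in, fan_out = 3 * 7 * 7, 64 * 7 * 7
bound = math.sqrt(6.0 / (fan_in + fan_out))
print(conv1.weight.abs().max().item() <= bound + 1e-6)  # True
print(conv1.weight.requires_grad)  # True: it is still a trainable parameter
```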

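The statements above initialize the parameters of a single layer. How can we customize the initialization of all the parameters in a network?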

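import torch.nn as nn
from torch.nn import init

def weights_init(m):
    classname = m.__class__.__name__
    if classname.find('Conv') != -1:
        init.xavier_uniform_(m.weight.data)  # Xavier requires a tensor with at least 2 dims
        if m.bias is not None:
            init.constant_(m.bias.data, 0)   # a 1-D bias cannot be Xavier-initialized

net = Net()
# apply() recursively visits every module in the network and calls the given function on each one.
net.apply(weights_init)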
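To make "recursively" concrete, here is a small sketch on a throwaway `nn.Sequential` (the layers are arbitrary). Children are visited before their parent, and the root network itself is also passed to the function, which is why `weights_init` has to check the module type before touching `m.weight`.

```python
import torch.nn as nn

visited = []

def record(m):
    # apply() calls this once for every module, including the root
    visited.append(type(m).__name__)

net = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3),
    nn.ReLU(),
    nn.Sequential(nn.Linear(8, 4)),  # a nested container
)
net.apply(record)
print(visited)  # ['Conv2d', 'ReLU', 'Linear', 'Sequential', 'Sequential']
```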

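Accessing members prefixed with underscores is not recommended: they are internal, and users are not notified if they change. A better approach is to check whether a module is of a particular type: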

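import torch.nn as nn
from torch.nn import init

def weights_init(m):
    if isinstance(m, nn.Conv2d):
        init.xavier_uniform_(m.weight.data)
        if m.bias is not None:  # layers built with bias=False have no bias
            init.constant_(m.bias.data, 0)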
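One concrete reason to prefer the type check, sketched with a hypothetical container class `ConvBlock` (not from the original posts): its class name contains "Conv" but it has no `weight` of its own, so the name-based test breaks while `isinstance` simply skips it.

```python
import torch.nn as nn
from torch.nn import init

class ConvBlock(nn.Module):
    # Hypothetical container: the class name contains 'Conv', but it has no .weight
    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 8, kernel_size=3, padding=1)
        self.bn = nn.BatchNorm2d(8)

def weights_init(m):
    # Type check: only real Conv2d / Linear layers are initialized
    if isinstance(m, (nn.Conv2d, nn.Linear)):
        init.xavier_uniform_(m.weight)
        if m.bias is not None:
            init.constant_(m.bias, 0)

def weights_init_by_name(m):
    # Name check as in the earlier snippet: it also matches 'ConvBlock'
    if m.__class__.__name__.find('Conv') != -1:
        init.xavier_uniform_(m.weight)

net = nn.Sequential(ConvBlock(), nn.Linear(8, 10))
net.apply(weights_init)  # succeeds

name_check_failed = False
try:
    net.apply(weights_init_by_name)
except AttributeError:  # 'ConvBlock' object has no attribute 'weight'
    name_check_failed = True
print(name_check_failed)  # True
```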

The following is reposted from this URL:

https://blog.csdn.net/qq_19598705/article/details/80396047

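The author of that post found that, in their experiments with PyTorch, the weights had to be initialized explicitly; otherwise the loss failed to converge.

The typical usage is as follows: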

import torch.nn as nn
from torch.nn import init

def weights_init(m):
    classname = m.__class__.__name__
    # print(classname)
    if classname.find('Conv3d') != -1:
        init.xavier_normal_(m.weight.data)
        init.constant_(m.bias.data, 0.0)
    elif classname.find('Linear') != -1:
        init.xavier_normal_(m.weight.data)
        init.constant_(m.bias.data, 0.0)

model = C3D()  # C3D is the user's 3-D CNN model (not shown here)
model.apply(weights_init)

torch.nn.init provides the following functions (as of version 0.4, the latest at the time of writing):

torch.nn.init.calculate_gain(nonlinearity, param=None)

Returns the recommended gain value for the given nonlinearity, for example:

gain = nn.init.calculate_gain('leaky_relu')
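A quick look at the gain values for a few common nonlinearities. For `'leaky_relu'`, the optional `param` is the negative slope (default 0.01), and the gain is sqrt(2 / (1 + slope²)); the slope 0.2 below is only an example.

```python
import math

from torch.nn import init

print(init.calculate_gain('linear'))  # 1
print(init.calculate_gain('tanh'))    # 5/3, about 1.667
print(init.calculate_gain('relu'))    # sqrt(2), about 1.414
# leaky_relu with negative slope 0.2, next to the closed-form value
print(init.calculate_gain('leaky_relu', 0.2), math.sqrt(2.0 / (1 + 0.2 ** 2)))
```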

torch.nn.init.uniform_(tensor, a=0, b=1)

Fills the tensor with values drawn from the uniform distribution U(a, b).

w = torch.empty(3, 5)
nn.init.uniform_(w)

torch.nn.init.normal_(tensor, mean=0, std=1)

Fills the tensor with values drawn from the normal distribution N(mean, std²).

w = torch.empty(3, 5)
nn.init.normal_(w)

torch.nn.init.constant_(tensor, val)

Fills the tensor with the value val.

w = torch.empty(3, 5)
nn.init.constant_(w, 0.3)

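torch.nn.init.eye_(tensor)

Fills a 2-D tensor with the identity matrix, which preserves the identity of the inputs in Linear layers.

w = torch.empty(3, 5)
nn.init.eye_(w)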

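torch.nn.init.dirac_(tensor)

Fills a 3-, 4- or 5-D tensor with the Dirac delta function, which preserves the identity of the inputs in convolutional layers.

w = torch.empty(3, 16, 5, 5)
nn.init.dirac_(w)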
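A small sketch of what "preserves the identity" means for `dirac_`, reusing the (3, 16, 5, 5) weight from the example above with a random input (sizes chosen only for illustration): with padding 2, output channel i of the convolution is an exact copy of input channel i.

```python
import torch
import torch.nn.functional as F
from torch.nn import init

# conv2d weight layout: (out_channels, in_channels, kH, kW)
w = torch.empty(3, 16, 5, 5)
init.dirac_(w)

x = torch.randn(2, 16, 8, 8)
y = F.conv2d(x, w, padding=2)  # padding=2 keeps the 8x8 size for a 5x5 kernel

# Output channel i copies input channel i, for i < out_channels
print(torch.allclose(y, x[:, :3], atol=1e-6))  # True
```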

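torch.nn.init.xavier_uniform_(tensor, gain=1)

Xavier (Glorot) uniform initialization: samples from U(-a, a) with a = gain × sqrt(6 / (fan_in + fan_out)).

w = torch.empty(3, 5)
nn.init.xavier_uniform_(w, gain=nn.init.calculate_gain('relu'))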
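Checking the Xavier-uniform bound for the 3x5 example above. For a 2-D weight, PyTorch takes fan_out from the first dimension (rows) and fan_in from the second (columns); the 1e-6 tolerance only covers float32 rounding.

```python
import math

import torch
from torch.nn import init

w = torch.empty(3, 5)  # fan_out = 3 (rows), fan_in = 5 (columns)
gain = init.calculate_gain('relu')
init.xavier_uniform_(w, gain=gain)

# xavier_uniform_ samples from U(-a, a) with a = gain * sqrt(6 / (fan_in + fan_out))
a = gain * math.sqrt(6.0 / (5 + 3))
print(w.abs().max().item() <= a + 1e-6)  # True
```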

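torch.nn.init.xavier_normal_(tensor, gain=1)

Xavier (Glorot) normal initialization: samples from N(0, std²) with std = gain × sqrt(2 / (fan_in + fan_out)).

w = torch.empty(3, 5)
nn.init.xavier_normal_(w)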

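torch.nn.init.kaiming_uniform_(tensor, a=0, mode='fan_in', nonlinearity='leaky_relu')

Kaiming (He) uniform initialization: samples from U(-bound, bound) with bound = gain × sqrt(3 / fan_mode), where fan_mode is fan_in or fan_out depending on mode.

w = torch.empty(3, 5)
nn.init.kaiming_uniform_(w, mode='fan_in', nonlinearity='relu')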
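A numerical sketch of the Kaiming-uniform bound, using a larger 256x128 weight (an arbitrary size) so the sample variance is meaningful. Because U(-b, b) has variance b²/3, the result has variance 2 / fan_in for ReLU, the same as kaiming_normal_.

```python
import math

import torch
from torch.nn import init

w = torch.empty(256, 128)  # fan_in = 128, fan_out = 256
init.kaiming_uniform_(w, mode='fan_in', nonlinearity='relu')

# bound = gain * sqrt(3 / fan_in), with gain = sqrt(2) for ReLU
bound = math.sqrt(2.0) * math.sqrt(3.0 / 128)
print(w.abs().max().item() <= bound + 1e-6)  # True
# Variance of U(-b, b) is b^2 / 3 = 2 / fan_in
print(w.var().item(), 2.0 / 128)
```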

torch.nn.init.kaiming_normal_(tensor, a=0, mode='fan_in', nonlinearity='leaky_relu')

Kaiming (He) normal initialization: samples from N(0, std²) with std = gain / sqrt(fan_mode).

w = torch.empty(3, 5)
nn.init.kaiming_normal_(w, mode='fan_out', nonlinearity='relu')

torch.nn.init.orthogonal_(tensor, gain=1)

Fills the tensor with a (semi-)orthogonal matrix.

w = torch.empty(3, 5)
nn.init.orthogonal_(w)
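For the non-square 3x5 example, "semi-orthogonal" means the three rows are orthonormal, which can be checked directly:

```python
import torch
from torch.nn import init

w = torch.empty(3, 5)
init.orthogonal_(w)

# More columns than rows: the rows are orthonormal, so w @ w.T is the 3x3 identity
print(torch.allclose(w @ w.T, torch.eye(3), atol=1e-5))  # True
```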

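torch.nn.init.sparse_(tensor, sparsity, std=0.01)

Fills a 2-D tensor as a sparse matrix: in each column, a fraction sparsity of the elements is set to zero, and the remaining elements are drawn from N(0, std²).

w = torch.empty(3, 5)
nn.init.sparse_(w, sparsity=0.1)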
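A sketch of the per-column sparsity, with a 10x4 tensor and sparsity=0.5 chosen so the count is easy to verify: sparse_ zeroes ceil(sparsity × rows) entries in every column, here 5.

```python
import torch
from torch.nn import init

w = torch.empty(10, 4)  # sparse_ only supports 2-D tensors
init.sparse_(w, sparsity=0.5, std=0.01)

# Each column has ceil(0.5 * 10) = 5 zeros; the rest come from N(0, 0.01^2)
print((w == 0).sum(dim=0))  # tensor([5, 5, 5, 5])
```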

The following is from

https://blog.csdn.net/hyk_1996/article/details/82118797

Weight Initialization Methods for Convolutional Neural Networks

August 28, 2018 14:07:56, hyk_1996

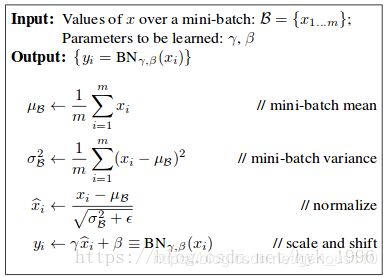

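This post uses the three main building blocks of a CNN (convolutional layers, BN layers, and fully connected layers) as starting points and introduces the initialization methods for each.

Convolutional layers

Gaussian initialization

The initial weights are sampled from a Gaussian distribution with mean 0 and variance 1. The relevant PyTorch function is:

torch.nn.init.normal_(tensor, mean=0, std=1)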

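Kaiming Gaussian initialization

This initialization method for convolutional layers was proposed by the well-known researcher Kaiming He (later at FAIR). Its goal is to keep the variance of each convolutional layer's output stable from layer to layer instead of letting it grow or shrink with depth; see paper [1] for the mathematical derivation. The weights are initialized as follows: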

$$W_l \sim \mathcal{N}\left(0,\ \sqrt{\frac{2}{(1+a^2)\, n_l}}\right)$$

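Here the second argument of $\mathcal{N}$ is the standard deviation, $a$ is the slope of the negative half-axis of ReLU or Leaky ReLU ($a = 0$ for ReLU), and $n_l$ is the input dimensionality, i.e. $n_l = k^2 \times c$, where $k$ is the kernel side length and $c$ is the number of input channels.

In PyTorch, the relevant function is:

torch.nn.init.kaiming_normal_(tensor, a=0, mode='fan_in', nonlinearity='leaky_relu')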

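In the arguments above, tensor is a torch.Tensor; a is the negative slope of the rectifier (only used with 'leaky_relu'); mode selects which pass keeps its variance unchanged, where 'fan_in' preserves the variance in the forward pass and 'fan_out' preserves it in the backward pass; nonlinearity can be 'relu' or 'leaky_relu'.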
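To connect the formula with the PyTorch call, a numerical check under illustrative assumptions: a conv weight with 256 output channels, 16 input channels and a 3x3 kernel (so n_l = 3² × 16 = 144), and a Leaky ReLU slope a = 0.1. The empirical standard deviation of kaiming_normal_ should be close to sqrt(2 / ((1 + a²) n_l)).

```python
import math

import torch
from torch.nn import init

# Conv weight: 256 output channels, 16 input channels, 3x3 kernel
w = torch.empty(256, 16, 3, 3)
n_l = 3 * 3 * 16  # kernel side^2 * input channels = 144
a = 0.1           # negative slope of Leaky ReLU (illustrative value)

init.kaiming_normal_(w, a=a, mode='fan_in', nonlinearity='leaky_relu')

expected_std = math.sqrt(2.0 / ((1 + a ** 2) * n_l))
print(w.std().item(), expected_std)  # the two values should be close
```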

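Xavier Gaussian initialization

Glorot normal initialization, also called Xavier normal initialization, draws the parameters from a normal distribution with mean 0 and standard deviation sqrt(2 / (fan_in + fan_out)), where fan_in and fan_out are the numbers of input and output units of the weight tensor. Like Kaiming initialization, it aims to keep the variance of the inputs and outputs unchanged, but the original paper [2] derived it for linear activations. It works well with tanh, but it is not suited to ReLU.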

$$\text{std} = \text{gain} \times \sqrt{\frac{2}{\text{fan\_in} + \text{fan\_out}}}$$

In PyTorch, the relevant function is:

torch.nn.init.xavier_normal_(tensor, gain=1)
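A similar check for Xavier normal initialization, assuming a 256x128 weight as in nn.Linear(128, 256) (size chosen only for illustration), so fan_in = 128 and fan_out = 256:

```python
import math

import torch
from torch.nn import init

w = torch.empty(256, 128)  # Linear(128, 256): fan_in = 128, fan_out = 256
init.xavier_normal_(w, gain=1.0)

expected_std = 1.0 * math.sqrt(2.0 / (128 + 256))
print(w.std().item(), expected_std)  # the two values should be close
```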

BN layers

The scale factor γ is initialized to 1 and the shift factor β to 0.
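A sketch of this rule with `apply`. Recent PyTorch versions already use γ = 1 and β = 0 as BatchNorm defaults; setting them explicitly does not depend on the installed version's defaults. The `m.affine` check skips BatchNorm layers created with affine=False, which have no γ or β. The small network is hypothetical.

```python
import torch.nn as nn
from torch.nn import init

def bn_init(m):
    if isinstance(m, (nn.BatchNorm1d, nn.BatchNorm2d, nn.BatchNorm3d)) and m.affine:
        init.constant_(m.weight, 1)  # scale factor gamma
        init.constant_(m.bias, 0)    # shift factor beta

net = nn.Sequential(nn.Conv2d(3, 8, 3, bias=False), nn.BatchNorm2d(8), nn.ReLU())
net.apply(bn_init)
```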

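Fully connected layers

For fully connected layers, besides the Gaussian-based methods used for convolutional layers, the weights can also be initialized from a uniform distribution, or simply set to a constant.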
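A minimal sketch of the uniform and constant options for a fully connected layer; the layer size and the range ±0.05 are arbitrary illustrative choices, not recommendations from the post.

```python
import torch.nn as nn
from torch.nn import init

fc = nn.Linear(512, 10)
init.uniform_(fc.weight, a=-0.05, b=0.05)  # uniform distribution U(-0.05, 0.05)
init.constant_(fc.bias, 0.0)               # constant
```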

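There are also other initialization methods not covered in detail here, including:

Orthogonal: initialize with a random orthogonal matrix.

Sparse: initialize with a sparse matrix.

TruncatedNormal: a truncated Gaussian distribution. It is similar to the Gaussian, but values more than two standard deviations away from the mean are discarded and redrawn, which yields a truncated distribution. PyTorch did not seem to have an implementation at the time of writing; newer versions provide torch.nn.init.trunc_normal_.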
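The "discard and redraw" rule is easy to implement by hand. Below is a minimal sketch; the function name `truncated_normal_` is hypothetical and is not a PyTorch API. (The built-in `trunc_normal_` in newer versions takes absolute cutoffs a and b rather than a number of standard deviations.)

```python
import torch

def truncated_normal_(tensor, mean=0.0, std=1.0):
    """Hypothetical helper: fill `tensor` in place with N(mean, std^2) samples,
    redrawing every sample that lies more than 2 std from the mean."""
    with torch.no_grad():
        tensor.normal_(mean, std)
        while True:
            outside = (tensor - mean).abs() > 2 * std
            n = int(outside.sum())
            if n == 0:
                break
            # Redraw only the rejected entries
            tensor[outside] = torch.empty(n, dtype=tensor.dtype, device=tensor.device).normal_(mean, std)
    return tensor

w = torch.empty(1000, 100)
truncated_normal_(w, mean=0.0, std=0.02)
print(w.abs().max().item())  # at most 0.04
```

Each pass redraws only the rejected values (about 4.6% of them on the first pass), so the loop finishes after a few iterations.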

References

[1] He, K. et al. (2015). Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification.

[2] Glorot, X. & Bengio, Y. (2010). Understanding the difficulty of training deep feedforward neural networks.

An initialization example, taken from:

https://github.com/prlz77/ResNeXt.pytorch/blob/master/models/model.py

# -*- coding: utf-8 -*-
from __future__ import division

"""
Creates a ResNeXt Model as defined in:

Xie, S., Girshick, R., Dollár, P., Tu, Z., & He, K. (2016).
Aggregated residual transformations for deep neural networks.
arXiv preprint arXiv:1611.05431.
"""

__author__ = "Pau Rodríguez López, ISELAB, CVC-UAB"
__email__ = "[email protected]"

import torch.nn as nn
import torch.nn.functional as F
from torch.nn import init

class ResNeXtBottleneck(nn.Module):
    """
    ResNeXt bottleneck type C (https://github.com/facebookresearch/ResNeXt/blob/master/models/resnext.lua)
    """

    def __init__(self, in_channels, out_channels, stride, cardinality, base_width, widen_factor):
        """ Constructor

        Args:
            in_channels: input channel dimensionality
            out_channels: output channel dimensionality
            stride: conv stride. Replaces pooling layer.
            cardinality: num of convolution groups.
            base_width: base number of channels in each group.
            widen_factor: factor to reduce the input dimensionality before convolution.
        """
        super(ResNeXtBottleneck, self).__init__()
        width_ratio = out_channels / (widen_factor * 64.)
        D = cardinality * int(base_width * width_ratio)
        self.conv_reduce = nn.Conv2d(in_channels, D, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn_reduce = nn.BatchNorm2d(D)
        self.conv_conv = nn.Conv2d(D, D, kernel_size=3, stride=stride, padding=1, groups=cardinality, bias=False)
        self.bn = nn.BatchNorm2d(D)
        self.conv_expand = nn.Conv2d(D, out_channels, kernel_size=1, stride=1, padding=0, bias=False)
        self.bn_expand = nn.BatchNorm2d(out_channels)

        self.shortcut = nn.Sequential()
        if in_channels != out_channels:
            self.shortcut.add_module('shortcut_conv',
                                     nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, padding=0,
                                               bias=False))
            self.shortcut.add_module('shortcut_bn', nn.BatchNorm2d(out_channels))

    def forward(self, x):
        bottleneck = self.conv_reduce.forward(x)
        bottleneck = F.relu(self.bn_reduce.forward(bottleneck), inplace=True)
        bottleneck = self.conv_conv.forward(bottleneck)
        bottleneck = F.relu(self.bn.forward(bottleneck), inplace=True)
        bottleneck = self.conv_expand.forward(bottleneck)
        bottleneck = self.bn_expand.forward(bottleneck)
        residual = self.shortcut.forward(x)
        return F.relu(residual + bottleneck, inplace=True)

class CifarResNeXt(nn.Module):
    """
    ResNext optimized for the Cifar dataset, as specified in
    https://arxiv.org/pdf/1611.05431.pdf
    """

    def __init__(self, cardinality, depth, nlabels, base_width, widen_factor=4):
        """ Constructor

        Args:
            cardinality: number of convolution groups.
            depth: number of layers.
            nlabels: number of classes
            base_width: base number of channels in each group.
            widen_factor: factor to adjust the channel dimensionality
        """
        super(CifarResNeXt, self).__init__()
        self.cardinality = cardinality
        self.depth = depth
        self.block_depth = (self.depth - 2) // 9
        self.base_width = base_width
        self.widen_factor = widen_factor
        self.nlabels = nlabels
        self.output_size = 64
        self.stages = [64, 64 * self.widen_factor, 128 * self.widen_factor, 256 * self.widen_factor]

        self.conv_1_3x3 = nn.Conv2d(3, 64, 3, 1, 1, bias=False)
        self.bn_1 = nn.BatchNorm2d(64)
        self.stage_1 = self.block('stage_1', self.stages[0], self.stages[1], 1)
        self.stage_2 = self.block('stage_2', self.stages[1], self.stages[2], 2)
        self.stage_3 = self.block('stage_3', self.stages[2], self.stages[3], 2)
        self.classifier = nn.Linear(self.stages[3], nlabels)
        # kaiming_normal_ is the in-place name since PyTorch 0.4 (kaiming_normal is deprecated)
        init.kaiming_normal_(self.classifier.weight)

        # Initialize by parameter name: conv weights -> Kaiming normal (fan_out),
        # BN weights (gamma) -> 1, every bias -> 0
        for key in self.state_dict():
            if key.split('.')[-1] == 'weight':
                if 'conv' in key:
                    init.kaiming_normal_(self.state_dict()[key], mode='fan_out')
                if 'bn' in key:
                    self.state_dict()[key][...] = 1
            elif key.split('.')[-1] == 'bias':
                self.state_dict()[key][...] = 0

    def block(self, name, in_channels, out_channels, pool_stride=2):
        """ Stack n bottleneck modules where n is inferred from the depth of the network.

        Args:
            name: string name of the current block.
            in_channels: number of input channels
            out_channels: number of output channels
            pool_stride: factor to reduce the spatial dimensionality in the first bottleneck of the block.

        Returns: a Module consisting of n sequential bottlenecks.
        """
        block = nn.Sequential()
        for bottleneck in range(self.block_depth):
            name_ = '%s_bottleneck_%d' % (name, bottleneck)
            if bottleneck == 0:
                block.add_module(name_, ResNeXtBottleneck(in_channels, out_channels, pool_stride, self.cardinality,
                                                          self.base_width, self.widen_factor))
            else:
                block.add_module(name_,
                                 ResNeXtBottleneck(out_channels, out_channels, 1, self.cardinality, self.base_width,
                                                   self.widen_factor))
        return block

    def forward(self, x):
        x = self.conv_1_3x3.forward(x)
        x = F.relu(self.bn_1.forward(x), inplace=True)
        x = self.stage_1.forward(x)
        x = self.stage_2.forward(x)
        x = self.stage_3.forward(x)
        x = F.avg_pool2d(x, 8, 1)
        x = x.view(-1, self.stages[3])
        return self.classifier(x)
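The state_dict loop in CifarResNeXt relies on attribute names containing "conv" or "bn". A minimal sketch of the same rules selected by layer type instead, so that renaming an attribute cannot silently skip a layer. The function name `resnext_style_init` and the tiny model are hypothetical and only for illustration.

```python
import torch.nn as nn
from torch.nn import init

def resnext_style_init(model):
    # Same rules as the key-name loop, but chosen by module type
    for m in model.modules():
        if isinstance(m, nn.Conv2d):
            init.kaiming_normal_(m.weight, mode='fan_out')
            if m.bias is not None:
                init.constant_(m.bias, 0)
        elif isinstance(m, nn.BatchNorm2d):
            init.constant_(m.weight, 1)
            init.constant_(m.bias, 0)
        elif isinstance(m, nn.Linear):
            init.kaiming_normal_(m.weight)  # default mode='fan_in', as for the classifier
            init.constant_(m.bias, 0)

# Hypothetical tiny model for illustration
model = nn.Sequential(
    nn.Conv2d(3, 16, 3, padding=1, bias=False),
    nn.BatchNorm2d(16),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(16, 10),
)
resnext_style_init(model)
```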