Case 1: Boston Housing Price Prediction

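| Difference | Regression task | Classification task |
| --- | --- | --- |
| Type of predicted output | Continuous real values | Discrete labels |
| Common loss function (example) | Mean squared error, Loss = (y - z)^2 | Cross-entropy loss |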
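The two loss functions named in the table can be sketched in a few lines of NumPy. This is only an illustration: the helper names `mse_loss` and `cross_entropy_loss` and the toy arrays are assumptions made for this example, not part of the original material.

```python
import numpy as np

def mse_loss(z, y):
    # Mean squared error: average of (z - y)^2 over all samples
    return np.mean((z - y) ** 2)

def cross_entropy_loss(probs, labels):
    # probs: [N, C] predicted class probabilities (each row sums to 1)
    # labels: [N] integer class indices
    picked = probs[np.arange(len(labels)), labels]
    return -np.mean(np.log(picked))

# Regression: predictions and targets are continuous values
z = np.array([2.0, 3.0])
y = np.array([1.0, 5.0])
mse_value = mse_loss(z, y)  # ((2-1)^2 + (3-5)^2) / 2

# Classification: targets are discrete labels
probs = np.array([[0.5, 0.5], [0.25, 0.75]])
labels = np.array([0, 1])
ce_value = cross_entropy_loss(probs, labels)  # -(ln 0.5 + ln 0.75) / 2
```

The regression loss compares real numbers directly, while the classification loss only looks at the probability assigned to the correct label.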

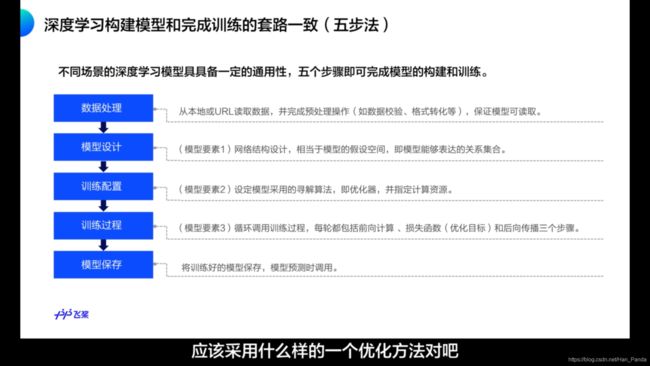

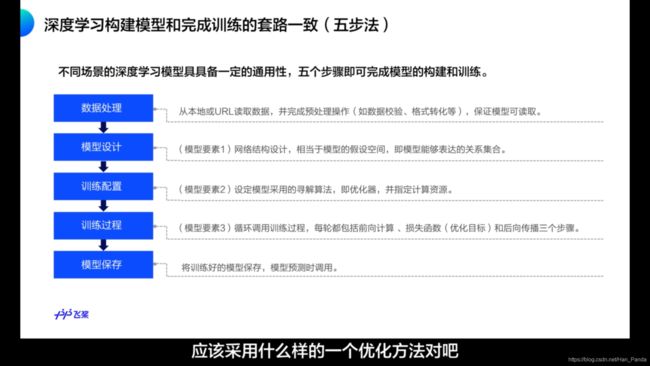

- Basic steps of deep learning

fromfile(file, dtype=float, count=-1, sep='', offset=0)

Construct an array from data in a text or binary file.
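As a rough illustration of how `np.fromfile` behaves on a whitespace-separated text file, the sketch below writes a tiny temporary file and reads it back. The file contents and the 3-column layout are made up for this example; the real dataset has 14 values per record.

```python
import os
import tempfile

import numpy as np

# Hypothetical toy file: 2 records with 3 values each
text = "1.0 2.0 3.0\n4.0 5.0 6.0\n"
with tempfile.NamedTemporaryFile('w', suffix='.data', delete=False) as f:
    f.write(text)
    path = f.name

# With sep=' ' the file is parsed as text; any whitespace
# (spaces or newlines) separates the numbers
flat = np.fromfile(path, sep=' ')

# The result is a flat 1-D array, so it has to be reshaped into rows
table = flat.reshape([-1, 3])

os.remove(path)
```

Note that the row structure of the file is lost on reading, which is why the loading code has to reshape the array using the known number of columns.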

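Detailed code walkthrough

Step 1 - Data processing: reading the data and preprocessing it

    import numpy as np

    def load_data():
        # Read the raw data: whitespace-separated numbers in a text file
        datafile = './work/housing.data'
        data = np.fromfile(datafile, sep=' ')

        # Each record holds 14 values: 13 features followed by the target MEDV
        feature_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE',
                         'DIS', 'RAD', 'TAX', 'PTRATIO', 'B', 'LSTAT', 'MEDV']
        feature_num = len(feature_names)

        # Reshape the flat array to [N, 14]
        data = data.reshape([data.shape[0] // feature_num, feature_num])

        # Use the first 80% of the rows for training and the rest for testing
        ratio = 0.8
        offset = int(data.shape[0] * ratio)
        training_data = data[:offset]

        # Compute max, min and mean on the training set only
        maximums = training_data.max(axis=0)
        minimums = training_data.min(axis=0)
        avgs = training_data.sum(axis=0) / training_data.shape[0]

        # Normalize every column (features and target) with the training statistics
        for i in range(feature_num):
            data[:, i] = (data[:, i] - avgs[i]) / (maximums[i] - minimums[i])

        training_data = data[:offset]
        test_data = data[offset:]
        return training_data, test_data

    training_data, test_data = load_data()
    x = training_data[:, :-1]   # features, shape [N, 13]
    y = training_data[:, -1:]   # target MEDV, shape [N, 1]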
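The normalization step above can be checked on a small made-up array. The sketch below follows the same recipe (statistics from the first 80% of rows, applied to all rows); the function name `normalize_with_train_stats` and the toy values are assumptions for illustration only.

```python
import numpy as np

def normalize_with_train_stats(data, ratio=0.8):
    # Work on a float copy so the caller's array is left untouched
    data = data.astype(float).copy()
    offset = int(data.shape[0] * ratio)
    train = data[:offset]

    # Statistics come from the training rows only
    maximums = train.max(axis=0)
    minimums = train.min(axis=0)
    avgs = train.mean(axis=0)

    # Broadcasting applies the per-column statistics to every row
    normalized = (data - avgs) / (maximums - minimums)
    return normalized[:offset], normalized[offset:]

# Toy data: 5 rows, 2 columns; the last row becomes the test set
toy = np.array([[1., 10.],
                [2., 20.],
                [3., 30.],
                [4., 40.],
                [5., 100.]])
train, test = normalize_with_train_stats(toy)
```

After normalization each training column has mean 0 and a max-min range of 1. Test values are not bounded in the same way, because they were not used to compute the statistics.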

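Step 2 - Model design: the network structure (the hypothesis)

    class Network(object):
        def __init__(self, num_of_weights):
            # Fix the random seed so that every run starts from the same weights
            np.random.seed(0)
            self.w = np.random.randn(num_of_weights, 1)
            self.b = 0.

        def forward(self, x):
            # Linear model: z = x . w + b, computed for all samples at once
            z = np.dot(x, self.w) + self.b
            return z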
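To make the shapes in `forward` concrete, the following sketch compares the vectorized `np.dot` expression with an explicit per-sample loop. The random inputs and the bias value are placeholders chosen for this example.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.normal(size=(4, 13))   # 4 samples, 13 features
w = rng.normal(size=(13, 1))   # one weight per feature
b = 0.5                        # scalar bias, broadcast to every sample

# Vectorized forward pass: [4, 13] dot [13, 1] -> [4, 1]
z_vectorized = np.dot(x, w) + b

# Equivalent explicit computation, one sample at a time
z_loop = np.array([
    [sum(x[i, j] * w[j, 0] for j in range(13)) + b]
    for i in range(4)
])
```

Both versions give one prediction per sample; the vectorized form is simply shorter and faster.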

Step 3 - Training configuration: the optimizer (the algorithm that searches for a solution) and the compute resource configuration

    class Network(object):
        def __init__(self, num_of_weights):
            np.random.seed(0)
            self.w = np.random.randn(num_of_weights, 1)
            self.b = 0.

        def forward(self, x):
            z = np.dot(x, self.w) + self.b
            return z

        def loss(self, z, y):
            # Mean squared error over all samples
            error = z - y
            cost = error * error
            cost = np.mean(cost)
            return cost

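Step 4 - Training process: repeatedly run the training loop, which consists of forward computation, the loss function (the optimization objective), and backpropagation

    class Network(object):
        def __init__(self, num_of_weights):
            np.random.seed(0)
            self.w = np.random.randn(num_of_weights, 1)
            self.b = 0.

        def forward(self, x):
            z = np.dot(x, self.w) + self.b
            return z

        def loss(self, z, y):
            error = z - y
            num_samples = error.shape[0]
            cost = error * error
            cost = np.sum(cost) / num_samples
            return cost

        def gradient(self, x, y):
            z = self.forward(x)
            # Per-sample gradient with respect to w, averaged over the samples.
            # This is the gradient of loss / 2: the factor 2 from differentiating
            # the square is dropped, which only rescales the learning rate.
            gradient_w = (z - y) * x
            gradient_w = np.mean(gradient_w, axis=0)
            gradient_w = gradient_w[:, np.newaxis]   # shape [num_of_weights, 1], same as self.w
            gradient_b = (z - y)
            gradient_b = np.mean(gradient_b)
            return gradient_w, gradient_b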
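One way to sanity-check a hand-written gradient is to compare it with a finite-difference estimate. The sketch below does this for the weight gradient on random toy data. The helper names and data are assumptions for illustration. It also shows that the `gradient` formula used here equals half of the true gradient of the mean squared error, since the factor 2 is omitted.

```python
import numpy as np

def mse(w, b, x, y):
    z = np.dot(x, w) + b
    return np.mean((z - y) ** 2)

def analytic_gradient(w, b, x, y):
    # Same formula as Network.gradient: mean of (z - y) * x, without the factor 2
    z = np.dot(x, w) + b
    gradient_w = np.mean((z - y) * x, axis=0)[:, np.newaxis]
    gradient_b = np.mean(z - y)
    return gradient_w, gradient_b

def numeric_gradient_w(w, b, x, y, eps=1e-6):
    # Central finite differences of the MSE, one weight at a time
    grad = np.zeros_like(w)
    for j in range(w.shape[0]):
        step = np.zeros_like(w)
        step[j, 0] = eps
        grad[j, 0] = (mse(w + step, b, x, y) - mse(w - step, b, x, y)) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
x = rng.normal(size=(20, 3))
y = rng.normal(size=(20, 1))
w = rng.normal(size=(3, 1))
b = 0.1

gw, gb = analytic_gradient(w, b, x, y)
gw_numeric = numeric_gradient_w(w, b, x, y)
# gw_numeric is expected to be close to 2 * gw
```

Because the two differ only by a constant factor, gradient descent moves in the same direction either way; the omitted 2 is effectively absorbed into `eta`.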

    class Network(object):
        def __init__(self, num_of_weights):
            np.random.seed(0)
            self.w = np.random.randn(num_of_weights, 1)
            self.b = 0.

        def forward(self, x):
            z = np.dot(x, self.w) + self.b
            return z

        def loss(self, z, y):
            error = z - y
            num_samples = error.shape[0]
            cost = error * error
            cost = np.sum(cost) / num_samples
            return cost

        def gradient(self, x, y):
            z = self.forward(x)
            gradient_w = (z - y) * x
            gradient_w = np.mean(gradient_w, axis=0)
            gradient_w = gradient_w[:, np.newaxis]
            gradient_b = (z - y)
            gradient_b = np.mean(gradient_b)
            return gradient_w, gradient_b

        def update(self, gradient_w, gradient_b, eta=0.01):
            # Gradient descent: move against the gradient, scaled by the learning rate eta
            self.w = self.w - eta * gradient_w
            self.b = self.b - eta * gradient_b

        def train(self, x, y, iterations=100, eta=0.01):
            losses = []
            for i in range(iterations):
                z = self.forward(x)
                L = self.loss(z, y)
                gradient_w, gradient_b = self.gradient(x, y)
                self.update(gradient_w, gradient_b, eta)
                losses.append(L)
                # Report the loss once every 10 iterations
                if (i + 1) % 10 == 0:
                    print('iter {}, loss {}'.format(i, L))
            return losses

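    import matplotlib.pyplot as plt

    # Load the data
    train_data, test_data = load_data()
    x = train_data[:, :-1]
    y = train_data[:, -1:]

    # Create the network with 13 weights, one per feature
    net = Network(13)
    num_iterations = 1000

    # Train on the full training set in every iteration
    losses = net.train(x, y, iterations=num_iterations, eta=0.01)

    # Plot how the loss changes over the iterations
    plot_x = np.arange(num_iterations)
    plot_y = np.array(losses)
    plt.plot(plot_x, plot_y)
    plt.show()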

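    # Load the data
    train_data, test_data = load_data()

    # Shuffle the sample order (rows are shuffled in place)
    np.random.shuffle(train_data)

    # Split train_data into mini-batches of batch_size rows each
    batch_size = 10
    n = len(train_data)
    mini_batches = [train_data[k:k+batch_size] for k in range(0, n, batch_size)]

    # Create the network
    net = Network(13)

    # Run one gradient step on each mini-batch in turn
    for mini_batch in mini_batches:
        x = mini_batch[:, :-1]
        y = mini_batch[:, -1:]
        loss = net.train(x, y, iterations=1)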
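The slicing expression used to build `mini_batches` handles a sample count that is not a multiple of `batch_size`: the last batch is simply smaller. The sketch below shows this on a made-up array of 23 rows, and also checks that `np.random.shuffle` reorders whole rows, so features and targets stay paired.

```python
import numpy as np

# Toy data: 23 rows, row i is [2*i, 2*i + 1]
data = np.arange(23 * 2).reshape(23, 2)

# Shuffle a copy in place; only the row order changes
shuffled = data.copy()
np.random.seed(0)
np.random.shuffle(shuffled)

# Same slicing as in the training code
batch_size = 10
n = len(shuffled)
mini_batches = [shuffled[k:k + batch_size] for k in range(0, n, batch_size)]
batch_sizes = [len(batch) for batch in mini_batches]   # [10, 10, 3]
```

Every row appears in exactly one mini-batch, so one pass over `mini_batches` visits the whole training set once.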

    import numpy as np
    import matplotlib.pyplot as plt

    class Network(object):
        def __init__(self, num_of_weights):
            # No fixed seed here: the initial weights differ between runs
            self.w = np.random.randn(num_of_weights, 1)
            self.b = 0.

        def forward(self, x):
            z = np.dot(x, self.w) + self.b
            return z

        def loss(self, z, y):
            error = z - y
            num_samples = error.shape[0]
            cost = error * error
            cost = np.sum(cost) / num_samples
            return cost

        def gradient(self, x, y):
            z = self.forward(x)
            N = x.shape[0]
            # Gradient of loss / 2, averaged over the N samples of the batch
            gradient_w = 1. / N * np.sum((z - y) * x, axis=0)
            gradient_w = gradient_w[:, np.newaxis]
            gradient_b = 1. / N * np.sum(z - y)
            return gradient_w, gradient_b

        def update(self, gradient_w, gradient_b, eta=0.01):
            self.w = self.w - eta * gradient_w
            self.b = self.b - eta * gradient_b

        def train(self, training_data, num_epoches, batch_size=10, eta=0.01):
            n = len(training_data)
            losses = []
            for epoch_id in range(num_epoches):
                # Shuffle the rows in place at the start of every epoch,
                # then split them into mini-batches of batch_size rows
                np.random.shuffle(training_data)
                mini_batches = [training_data[k:k+batch_size] for k in range(0, n, batch_size)]
                for iter_id, mini_batch in enumerate(mini_batches):
                    x = mini_batch[:, :-1]
                    y = mini_batch[:, -1:]
                    a = self.forward(x)
                    loss = self.loss(a, y)
                    gradient_w, gradient_b = self.gradient(x, y)
                    self.update(gradient_w, gradient_b, eta)
                    losses.append(loss)
                    print('Epoch {:3d} / iter {:3d}, loss = {:.4f}'.format(epoch_id, iter_id, loss))
            return losses

    # Load the data
    train_data, test_data = load_data()

    # Create the network
    net = Network(13)

    # Start training
    losses = net.train(train_data, num_epoches=50, batch_size=100, eta=0.1)

    # Plot how the loss changes over the iterations
    plot_x = np.arange(len(losses))
    plot_y = np.array(losses)
    plt.plot(plot_x, plot_y)
    plt.show()

Step 5 - Saving the model: save the trained model
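The notes do not say how the model is saved. As one possible approach, and purely as an assumption, the parameters `w` and `b` of the NumPy network could be written to a `.npz` file with `np.savez` and restored with `np.load`. The helper names `save_model` and `load_model` and the file name are hypothetical.

```python
import os
import tempfile

import numpy as np

def save_model(path, w, b):
    # Store both parameters in a single .npz archive (hypothetical helper)
    np.savez(path, w=w, b=np.array(b))

def load_model(path):
    # Read the parameters back; b was stored as a 0-d array
    with np.load(path) as params:
        return params['w'], float(params['b'])

# Stand-in parameters; in practice these would be net.w and net.b after training
w = np.random.randn(13, 1)
b = 0.25

path = os.path.join(tempfile.mkdtemp(), 'linear_model.npz')
save_model(path, w, b)
w_loaded, b_loaded = load_model(path)
```

The restored values can be assigned back to a fresh `Network` instance (`net.w`, `net.b`) to reuse the trained model without retraining.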