#cs231n#Assignment2:Dropout.ipynb

根据自己的理解和参考资料实现了一下

Dropout.ipynb

Dropout

Dropout [1] is a technique for regularizing neural networks by randomly setting some features to zero during the forward pass. In this exercise you will implement a dropout layer and modify your fully-connected network to optionally use dropout.

[1] Geoffrey E. Hinton et al, “Improving neural networks by preventing co-adaptation of feature detectors”, arXiv 2012

# As usual, a bit of setup

import time

import numpy as np

import matplotlib.pyplot as plt

from cs231n.classifiers.fc_net import *

from cs231n.data_utils import get_CIFAR10_data

from cs231n.gradient_check import eval_numerical_gradient, eval_numerical_gradient_array

from cs231n.solver import Solver

%matplotlib inline

plt.rcParams['figure.figsize'] = (10.0, 8.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

# for auto-reloading external modules

# see http://stackoverflow.com/questions/1907993/autoreload-of-modules-in-ipython

%load_ext autoreload

%autoreload 2

def rel_error(x, y):

""" returns relative error """

return np.max(np.abs(x - y) / (np.maximum(1e-8, np.abs(x) + np.abs(y))))# Load the (preprocessed) CIFAR10 data.

data = get_CIFAR10_data()

for k, v in data.iteritems():

print '%s: ' % k, v.shapeX_val: (1000, 3, 32, 32)

X_train: (49000, 3, 32, 32)

X_test: (1000, 3, 32, 32)

y_val: (1000,)

y_train: (49000,)

y_test: (1000,)

Dropout forward pass

In the file cs231n/layers.py, implement the forward pass for dropout. Since dropout behaves differently during training and testing, make sure to implement the operation for both modes.

Once you have done so, run the cell below to test your implementation.

x = np.random.randn(500, 500) + 10

for p in [0.3, 0.6, 0.75]:

out, _ = dropout_forward(x, {'mode': 'train', 'p': p})

out_test, _ = dropout_forward(x, {'mode': 'test', 'p': p})

print 'Running tests with p = ', p

print 'Mean of input: ', x.mean()

print 'Mean of train-time output: ', out.mean()

print 'Mean of test-time output: ', out_test.mean()

print 'Fraction of train-time output set to zero: ', (out == 0).mean()

print 'Fraction of test-time output set to zero: ', (out_test == 0).mean()

printRunning tests with p = 0.3

Mean of input: 9.99908067149

Mean of train-time output: 9.97006733854

Mean of test-time output: 9.99908067149

Fraction of train-time output set to zero: 0.700872

Fraction of test-time output set to zero: 0.0

Running tests with p = 0.6

Mean of input: 9.99908067149

Mean of train-time output: 9.98833459263

Mean of test-time output: 9.99908067149

Fraction of train-time output set to zero: 0.400528

Fraction of test-time output set to zero: 0.0

Running tests with p = 0.75

Mean of input: 9.99908067149

Mean of train-time output: 9.98971354845

Mean of test-time output: 9.99908067149

Fraction of train-time output set to zero: 0.250744

Fraction of test-time output set to zero: 0.0

Dropout backward pass

In the file cs231n/layers.py, implement the backward pass for dropout. After doing so, run the following cell to numerically gradient-check your implementation.

x = np.random.randn(10, 10) + 10

dout = np.random.randn(*x.shape)

dropout_param = {'mode': 'train', 'p': 0.8, 'seed': 123}

out, cache = dropout_forward(x, dropout_param)

dx = dropout_backward(dout, cache)

dx_num = eval_numerical_gradient_array(lambda xx: dropout_forward(xx, dropout_param)[0], x, dout)

print 'dx relative error: ', rel_error(dx, dx_num)dx relative error: 5.44560839735e-11

Fully-connected nets with Dropout

In the file cs231n/classifiers/fc_net.py, modify your implementation to use dropout. Specificially, if the constructor the the net receives a nonzero value for the dropout parameter, then the net should add dropout immediately after every ReLU nonlinearity. After doing so, run the following to numerically gradient-check your implementation.

N, D, H1, H2, C = 2, 15, 20, 30, 10

X = np.random.randn(N, D)

y = np.random.randint(C, size=(N,))

for dropout in [0, 0.25, 0.5]:

print 'Running check with dropout = ', dropout

model = FullyConnectedNet([H1, H2], input_dim=D, num_classes=C,

weight_scale=5e-2, dtype=np.float64,

dropout=dropout, seed=123)

loss, grads = model.loss(X, y)

print 'Initial loss: ', loss

for name in sorted(grads):

f = lambda _: model.loss(X, y)[0]

grad_num = eval_numerical_gradient(f, model.params[name], verbose=False, h=1e-5)

print '%s relative error: %.2e' % (name, rel_error(grad_num, grads[name]))

printRunning check with dropout = 0

Initial loss: 2.30304316117

W1 relative error: 4.80e-07

W2 relative error: 1.97e-07

W3 relative error: 1.56e-07

b1 relative error: 2.03e-08

b2 relative error: 1.69e-09

b3 relative error: 1.11e-10

Running check with dropout = 0.25

Initial loss: 2.30235424783

W1 relative error: 1.00e-07

W2 relative error: 2.26e-09

W3 relative error: 2.56e-05

b1 relative error: 9.37e-10

b2 relative error: 2.13e-01

b3 relative error: 1.25e-10

Running check with dropout = 0.5

Initial loss: 2.30424261716

W1 relative error: 1.21e-07

W2 relative error: 2.45e-08

W3 relative error: 8.06e-07

b1 relative error: 2.28e-08

b2 relative error: 6.84e-10

b3 relative error: 1.28e-10

Regularization experiment

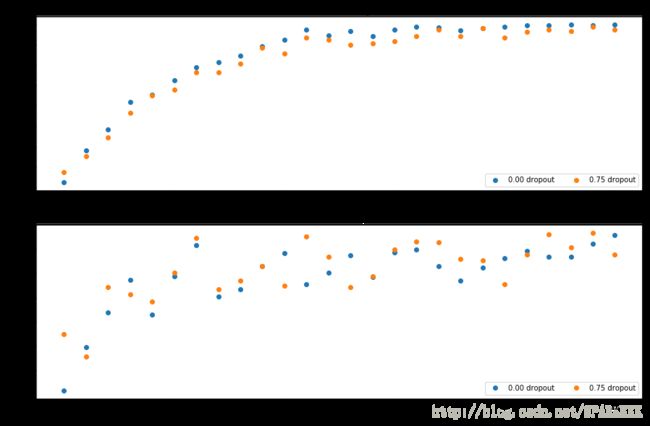

As an experiment, we will train a pair of two-layer networks on 500 training examples: one will use no dropout, and one will use a dropout probability of 0.75. We will then visualize the training and validation accuracies of the two networks over time.

# Train two identical nets, one with dropout and one without

num_train = 500

small_data = {

'X_train': data['X_train'][:num_train],

'y_train': data['y_train'][:num_train],

'X_val': data['X_val'],

'y_val': data['y_val'],

}

solvers = {}

dropout_choices = [0, 0.75]

for dropout in dropout_choices:

model = FullyConnectedNet([500], dropout=dropout)

print dropout

solver = Solver(model, small_data,

num_epochs=25, batch_size=100,

update_rule='adam',

optim_config={

'learning_rate': 5e-4,

},

verbose=True, print_every=100)

solver.train()

solvers[dropout] = solver0

(Iteration 1 / 125) loss: 8.596245

(Epoch 0 / 25) train acc: 0.224000; val_acc: 0.183000

(Epoch 1 / 25) train acc: 0.382000; val_acc: 0.219000

(Epoch 2 / 25) train acc: 0.484000; val_acc: 0.248000

(Epoch 3 / 25) train acc: 0.620000; val_acc: 0.275000

(Epoch 4 / 25) train acc: 0.654000; val_acc: 0.246000

(Epoch 5 / 25) train acc: 0.726000; val_acc: 0.278000

(Epoch 6 / 25) train acc: 0.788000; val_acc: 0.304000

(Epoch 7 / 25) train acc: 0.814000; val_acc: 0.261000

(Epoch 8 / 25) train acc: 0.846000; val_acc: 0.267000

(Epoch 9 / 25) train acc: 0.892000; val_acc: 0.286000

(Epoch 10 / 25) train acc: 0.922000; val_acc: 0.297000

(Epoch 11 / 25) train acc: 0.972000; val_acc: 0.271000

(Epoch 12 / 25) train acc: 0.946000; val_acc: 0.281000

(Epoch 13 / 25) train acc: 0.968000; val_acc: 0.295000

(Epoch 14 / 25) train acc: 0.940000; val_acc: 0.277000

(Epoch 15 / 25) train acc: 0.974000; val_acc: 0.298000

(Epoch 16 / 25) train acc: 0.988000; val_acc: 0.300000

(Epoch 17 / 25) train acc: 0.984000; val_acc: 0.286000

(Epoch 18 / 25) train acc: 0.970000; val_acc: 0.274000

(Epoch 19 / 25) train acc: 0.980000; val_acc: 0.285000

(Epoch 20 / 25) train acc: 0.986000; val_acc: 0.293000

(Iteration 101 / 125) loss: 0.154793

(Epoch 21 / 25) train acc: 0.994000; val_acc: 0.299000

(Epoch 22 / 25) train acc: 0.994000; val_acc: 0.294000

(Epoch 23 / 25) train acc: 0.998000; val_acc: 0.294000

(Epoch 24 / 25) train acc: 0.994000; val_acc: 0.305000

(Epoch 25 / 25) train acc: 0.998000; val_acc: 0.312000

0.75

(Iteration 1 / 125) loss: 10.053350

(Epoch 0 / 25) train acc: 0.274000; val_acc: 0.230000

(Epoch 1 / 25) train acc: 0.352000; val_acc: 0.211000

(Epoch 2 / 25) train acc: 0.444000; val_acc: 0.269000

(Epoch 3 / 25) train acc: 0.566000; val_acc: 0.263000

(Epoch 4 / 25) train acc: 0.650000; val_acc: 0.257000

(Epoch 5 / 25) train acc: 0.678000; val_acc: 0.281000

(Epoch 6 / 25) train acc: 0.764000; val_acc: 0.310000

(Epoch 7 / 25) train acc: 0.764000; val_acc: 0.267000

(Epoch 8 / 25) train acc: 0.808000; val_acc: 0.274000

(Epoch 9 / 25) train acc: 0.884000; val_acc: 0.286000

(Epoch 10 / 25) train acc: 0.858000; val_acc: 0.270000

(Epoch 11 / 25) train acc: 0.934000; val_acc: 0.311000

(Epoch 12 / 25) train acc: 0.922000; val_acc: 0.294000

(Epoch 13 / 25) train acc: 0.898000; val_acc: 0.269000

(Epoch 14 / 25) train acc: 0.906000; val_acc: 0.278000

(Epoch 15 / 25) train acc: 0.916000; val_acc: 0.300000

(Epoch 16 / 25) train acc: 0.942000; val_acc: 0.307000

(Epoch 17 / 25) train acc: 0.972000; val_acc: 0.306000

(Epoch 18 / 25) train acc: 0.940000; val_acc: 0.292000

(Epoch 19 / 25) train acc: 0.980000; val_acc: 0.291000

(Epoch 20 / 25) train acc: 0.934000; val_acc: 0.271000

(Iteration 101 / 125) loss: 0.579881

(Epoch 21 / 25) train acc: 0.964000; val_acc: 0.296000

(Epoch 22 / 25) train acc: 0.974000; val_acc: 0.313000

(Epoch 23 / 25) train acc: 0.968000; val_acc: 0.302000

(Epoch 24 / 25) train acc: 0.988000; val_acc: 0.314000

(Epoch 25 / 25) train acc: 0.974000; val_acc: 0.296000

# Plot train and validation accuracies of the two models

train_accs = []

val_accs = []

for dropout in dropout_choices:

solver = solvers[dropout]

train_accs.append(solver.train_acc_history[-1])

val_accs.append(solver.val_acc_history[-1])

plt.subplot(3, 1, 1)

for dropout in dropout_choices:

plt.plot(solvers[dropout].train_acc_history, 'o', label='%.2f dropout' % dropout)

plt.title('Train accuracy')

plt.xlabel('Epoch')

plt.ylabel('Accuracy')

plt.legend(ncol=2, loc='lower right')

plt.subplot(3, 1, 2)

for dropout in dropout_choices:

plt.plot(solvers[dropout].val_acc_history, 'o', label='%.2f dropout' % dropout)

plt.title('Val accuracy')

plt.xlabel('Epoch')

plt.ylabel('Accuracy')

plt.legend(ncol=2, loc='lower right')

plt.gcf().set_size_inches(15, 15)

plt.show()Question

Explain what you see in this experiment. What does it suggest about dropout?

Answer

用不用dropout最后都能对训练集实现接近100%,但对于验证集用dropout的网络表现得好了一点点,这表明了dropout是一种特殊的正则化方式,可以防止过拟合

参考资料

http://www.jianshu.com/p/9c4396653324

http://blog.csdn.net/xieyi4650/article/category/6498212