Using the lxml module in Python

lxml is a parsing library for Python. It parses both HTML and XML, supports XPath queries, and is very fast.

1. Installing lxml

```shell
pip install lxml
```

2. Importing lxml's etree module

```python
from lxml import etree
```
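The rest of this article uses etree.HTML, but as mentioned at the start, lxml also parses XML. A minimal sketch with etree.fromstring on a small made-up XML document (the `books` markup is an assumption for illustration):

```python
from lxml import etree

# A small, made-up XML document
xml = b"""<?xml version="1.0" encoding="UTF-8"?>
<books>
  <book id="1"><title>first</title></book>
  <book id="2"><title>second</title></book>
</books>"""

# etree.fromstring parses XML; the input is bytes because it carries an encoding declaration
root = etree.fromstring(xml)

# The same xpath method works on XML trees
ids = root.xpath("//book/@id")
titles = root.xpath("//book/title/text()")
print(ids, titles)
```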

3. Use etree.HTML to turn a string into an Element object. An Element object has an xpath method, which returns its results as a list. etree.HTML accepts both bytes and str data.

```python
from lxml import etree

# response is assumed to be a requests.Response obtained earlier
html = etree.HTML(response.text)

ret_list = html.xpath("your XPath expression")
```

It can also be used like this, passing in the decoded response body:

```python
from lxml import etree

htmlDiv = etree.HTML(response.content.decode())

hrefs = htmlDiv.xpath("//h4//a/@href")
```
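To check the two claims above (str and bytes are both accepted, and xpath always returns a list) without making a network request, here is a small self-contained sketch; the HTML snippet is made up for illustration:

```python
from lxml import etree

html_str = "<div><h4><a href='/a.html'>A</a></h4><h4><a href='/b.html'>B</a></h4></div>"
html_bytes = html_str.encode("utf-8")

# etree.HTML accepts both str and bytes and returns the root <html> Element
root_from_str = etree.HTML(html_str)
root_from_bytes = etree.HTML(html_bytes)

# xpath returns a list: several matches, or an empty list when nothing matches
hrefs_str = root_from_str.xpath("//h4//a/@href")
hrefs_bytes = root_from_bytes.xpath("//h4//a/@href")
missing = root_from_str.xpath("//h5/text()")
print(hrefs_str, hrefs_bytes, missing)
```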

4. Convert an Element object back into a string with etree.tostring(element); the return value is of type bytes.
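A quick sketch of the return types: by default etree.tostring gives bytes, which can be decoded, while passing `encoding="unicode"` returns a str directly.

```python
from lxml import etree

root = etree.HTML("<p>hello</p>")

raw = etree.tostring(root)                          # bytes by default
decoded = raw.decode()                              # decode the bytes yourself
as_text = etree.tostring(root, encoding="unicode")  # or ask for a str directly
print(type(raw), type(as_text))
print(as_text)
```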

Suppose we have the following HTML string and try to work with it. Note that the last li is missing its closing </li> tag:

```html
<div> <ul>
<li class="item-1"><a href="link1.html">first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</ul> </div>
```

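Code example:

```python
from lxml import etree

# The HTML string shown above (note the missing </li> on the last item)
text = '''<div> <ul>
<li class="item-1"><a href="link1.html">first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</ul> </div> '''

html = etree.HTML(text)
print(type(html))

# tostring returns bytes, so decode it to get a str
handled_html_str = etree.tostring(html).decode()
print(handled_html_str)
```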

Output:

```
<class 'lxml.etree._Element'>
<html><body><div> <ul>
<li class="item-1"><a href="link1.html">first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</li></ul> </div> </body></html>
```

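As you can see, lxml does fill in the missing tag. Keep in mind, though, that lxml is written by people: web pages are often not well-formed, and lxml itself can have bugs.

So even when you write your XPath against the response returned for a URL, you may still fail to get the data. When that happens, use etree.tostring to see what etree actually turned the HTML into, and extract the data based on that converted HTML string.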
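A minimal debugging sketch of that workflow, using deliberately sloppy, made-up markup: print what lxml built (`pretty_print=True` makes nested output easier to read), then write the XPath against that structure.

```python
from lxml import etree

# Deliberately sloppy markup: neither <li> is closed
broken = "<ul><li>a<li>b</ul>"
root = etree.HTML(broken)

# Look at what lxml actually built before writing XPath against it
fixed = etree.tostring(root, encoding="unicode")
print(fixed)
print(etree.tostring(root, pretty_print=True, encoding="unicode"))

# Write XPath against the repaired structure
items = root.xpath("//ul/li/text()")
print(items)
```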

5. More practice with lxml

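```python
from lxml import etree

# The same HTML string as above
text = '''<div> <ul>
<li class="item-1"><a href="link1.html">first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</ul> </div> '''

html = etree.HTML(text)

# Get the list of hrefs and the list of titles
href_list = html.xpath("//li[@class='item-1']/a/@href")
title_list = html.xpath("//li[@class='item-1']/a/text()")

# Pair them up into dictionaries; zip matches the two lists by position
for href, title in zip(href_list, title_list):
    item = {"href": href, "title": title}
    print(item)
```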

Output:

```
{'href': 'link1.html', 'title': 'first item'}
{'href': 'link2.html', 'title': 'second item'}
{'href': 'link4.html', 'title': 'fourth item'}
```
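Pairing two separately extracted lists by position only works while every li has both an href and a text. A sketch with hypothetical markup, where the first link has no href, shows how the lists drift out of step:

```python
from lxml import etree

# Hypothetical markup: the first link has no href attribute
text = '''<ul>
<li class="item-1"><a>first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
</ul>'''
html = etree.HTML(text)

href_list = html.xpath("//li[@class='item-1']/a/@href")
title_list = html.xpath("//li[@class='item-1']/a/text()")

# The lists now have different lengths, so pairing by position
# silently attaches 'link2.html' to 'first item'
pairs = list(zip(href_list, title_list))
print(pairs)
```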

6. Advanced use of lxml

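The xpath method can also return Element objects, and those objects support xpath in turn. So when extracting data later on, we can first group the page by some tag and then extract the data within each group. For example:

```python
from lxml import etree

# The same HTML string as above
text = '''<div> <ul>
<li class="item-1"><a href="link1.html">first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</ul> </div> '''

html = etree.HTML(text)
li_list = html.xpath("//li[@class='item-1']")
print(li_list)
```

The result is:

```
[<Element li at 0x11106cb48>, <Element li at 0x11106cb88>, <Element li at 0x11106cbc8>]
```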

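As you can see, the result is a list of Element objects, and each of these objects can call the xpath method again.

So first group the page by li tag, then extract the data within each group: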

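```python
from lxml import etree

# Assumed: the same HTML as above, except that the first <a> has no href
# attribute (inferred from the 'href': None in the output below)
text = '''<div> <ul>
<li class="item-1"><a>first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
<li class="item-inactive"><a href="link3.html">third item</a></li>
<li class="item-1"><a href="link4.html">fourth item</a></li>
<li class="item-0"><a href="link5.html">fifth item</a>
</ul> </div> '''

# Group by li tag
html = etree.HTML(text)
li_list = html.xpath("//li[@class='item-1']")

# Keep extracting within each group; fall back to None when nothing matches
for li in li_list:
    item = {}
    href = li.xpath("./a/@href")
    title = li.xpath("./a/text()")
    item["href"] = href[0] if len(href) > 0 else None
    item["title"] = title[0] if len(title) > 0 else None
    print(item)
```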

The result is:

```
{'href': None, 'title': 'first item'}
{'href': 'link2.html', 'title': 'second item'}
{'href': 'link4.html', 'title': 'fourth item'}
```
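The `[0] if ... else None` pattern repeats for every field. A small helper can keep it in one place; `first_or_none` below is a hypothetical name, not an lxml function, and the markup is made up:

```python
from lxml import etree


def first_or_none(element, path):
    """Return the first XPath result under element, or None if nothing matches.

    Hypothetical helper, not part of lxml.
    """
    results = element.xpath(path)
    return results[0] if results else None


text = '''<ul>
<li class="item-1"><a>first item</a></li>
<li class="item-1"><a href="link2.html">second item</a></li>
</ul>'''
html = etree.HTML(text)

# Group by li, then extract each field relative to its group
items = [
    {"href": first_or_none(li, "./a/@href"), "title": first_or_none(li, "./a/text()")}
    for li in html.xpath("//li[@class='item-1']")
]
print(items)
```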

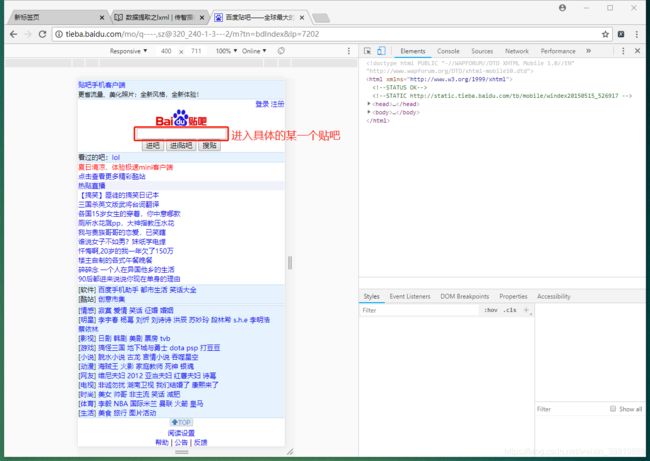

7. Case study: the lightweight mobile version of Baidu Tieba

```python
import requests
from urllib.parse import unquote
from lxml import etree


class TieBaSpider:
    def __init__(self, tieba_name):
        # 1. start_url
        self.start_url = "http://tieba.baidu.com/mo/q---C9E0BC1BC80AA0A7CE472600CDE9E9E3%3AFG%3D1-sz%40320_240%2C-1-3-0--2--wapp_1525330549279_782/m?kw={}&lp=6024".format(tieba_name)
        self.headers = {"User-Agent": "Mozilla/5.0 (Linux; Android 8.0; Pixel 2 Build/OPD3.170816.012) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.139 Mobile Safari/537.36"}
        # Prefix that the relative links found on the pages are appended to
        self.part_url = "http://tieba.baidu.com/mo/q---C9E0BC1BC80AA0A7CE472600CDE9E9E3%3AFG%3D1-sz%40320_240%2C-1-3-0--2--wapp_1525330549279_782"

    def parse_url(self, url):
        """Send a request and return the raw response body (bytes)."""
        response = requests.get(url, headers=self.headers)
        return response.content

    def get_content_list(self, html_str):
        """3. Extract the posts on a list page and the URL of the next list page."""
        html = etree.HTML(html_str)
        div_list = html.xpath("//body/div/div[contains(@class,'i')]")
        content_list = []
        for div in div_list:
            item = {}
            item["href"] = self.part_url + div.xpath("./a/@href")[0]
            item["title"] = div.xpath("./a/text()")[0]
            item["img_list"] = self.get_img_list(item["href"])
            content_list.append(item)
        # URL of the next list page ("下一页" = "next page"), or None on the last page
        next_url = html.xpath("//a[text()='下一页']/@href")
        next_url = self.part_url + next_url[0] if len(next_url) > 0 else None
        return content_list, next_url

    def get_img_list(self, detail_url):
        """Collect the image URLs from every page of a post's detail view."""
        img_list = []
        # Follow the detail page's own "下一页" links in a loop instead of recursing
        while detail_url is not None:
            # 1. Send the request, get the response
            detail_html = etree.HTML(self.parse_url(detail_url))
            # 2. Extract the data
            img_list += detail_html.xpath("//img[@class='BDE_Image']/@src")
            next_url = detail_html.xpath("//a[text()='下一页']/@href")
            detail_url = self.part_url + next_url[0] if len(next_url) > 0 else None
        # The src values are escaped proxy URLs; keep the real image URL after "src="
        return [unquote(i).split("src=")[-1] for i in img_list]

    def save_content_list(self, content_list):
        """Save the data (here it is just printed)."""
        for content in content_list:
            print(content)

    def run(self):
        """Main logic."""
        next_url = self.start_url  # 1. start_url
        while next_url is not None:
            # 2. Send the request, get the response
            html_str = self.parse_url(next_url)
            # 3. Extract the data
            content_list, next_url = self.get_content_list(html_str)
            # 4. Save
            self.save_content_list(content_list)
            # 5. The new next_url drives the loop: repeat steps 2-5 until there is no next page


if __name__ == '__main__':
    tieba = TieBaSpider("每日中国")
    tieba.run()
```
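The Tieba URLs above contain a session-like path segment and may no longer be served, so it can help to check the parsing logic offline. The sketch below runs the same XPath expressions and the same image-URL cleanup against a hypothetical page fragment; the markup, `PART_URL`, the proxy image URL, and the helper names `parse_list_page` and `clean_img_url` are all assumptions for illustration, not Tieba's real HTML.

```python
from urllib.parse import unquote

from lxml import etree

PART_URL = "http://example.com/mo"  # placeholder standing in for TieBaSpider.part_url

# Hypothetical list-page markup shaped to match the XPath used in get_content_list
LIST_PAGE = """<html><body><div>
<div class="i"><a href="/p/1">post one</a></div>
<div class="i"><a href="/p/2">post two</a></div>
</div><a href="/m?pn=10">下一页</a></body></html>"""


def parse_list_page(html_str, part_url):
    """Same XPath logic as get_content_list, minus the detail-page requests."""
    html = etree.HTML(html_str)
    items = []
    for div in html.xpath("//body/div/div[contains(@class,'i')]"):
        items.append({
            "href": part_url + div.xpath("./a/@href")[0],
            "title": div.xpath("./a/text()")[0],
        })
    next_url = html.xpath("//a[text()='下一页']/@href")
    return items, (part_url + next_url[0] if next_url else None)


def clean_img_url(src):
    """Same cleanup as get_img_list: unescape, then keep what follows the last 'src='."""
    return unquote(src).split("src=")[-1]


items, next_url = parse_list_page(LIST_PAGE, PART_URL)
print(items, next_url)
img = clean_img_url("http://proxy.example.com/img?w=100&src=http%3A%2F%2Fimg.example.com%2Fa.jpg")
print(img)
```

Feeding a str (rather than undeclared UTF-8 bytes) to etree.HTML here keeps the Chinese link text intact, so the `text()='下一页'` comparison can match.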