Replacing PReLU with ReLU for inference in PyTorch

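Inference frameworks such as TensorRT (at least in older versions) often support ReLU but not PReLU. Looking for a workaround, I found the project https://github.com/PKUZHOU/MTCNN_FaceDetection_TensorRT, which says: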

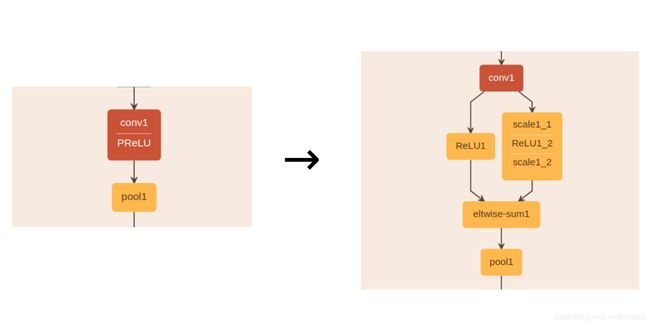

> Considering TensorRT doesn't support the PReLU layer, which is widely used in MTCNN, one solution is to add a Plugin Layer (custom layer), but experiments show that this method breaks the CBR process in TensorRT and is very slow. I use a ReLU layer, a Scale layer and an ElementWise addition layer to replace PReLU (as illustrated in the figure), which only adds a bit of computation and won't affect the CBR process; the weights of the Scale layers derive from the original PReLU layers.
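
The replacement rests on a simple identity. Writing $a_c$ for the learned slope of channel $c$ and using $\min(0, x) = -\max(0, -x)$:

$$
\begin{aligned}
\mathrm{PReLU}(x) &= \max(0, x) + a_c \min(0, x) \\
&= \max(0, x) - a_c \max(0, -x) \\
&= \mathrm{ReLU}(x) - a_c\,\mathrm{ReLU}(-x)
\end{aligned}
$$

So one branch is a plain ReLU, the other scales the input by $-1$, applies ReLU and scales by $-a_c$, and an element-wise sum merges the two branches. This is the computation the PyTorch code below reproduces.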

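Likewise, when working with PyTorch models, we can train with PReLU as usual and then, at inference time or when exporting the model from PyTorch to ONNX, follow the same idea and rewrite PReLU in terms of ReLU. Here is an experiment: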

```python
import torch
from torch.nn import Conv2d, BatchNorm2d, PReLU, ReLU, Module

# Load the trained weights (the model was trained with PReLU) onto the CPU
checkpoint = torch.load('model.pth',
                        map_location=lambda storage, loc: storage)
# Strip the "module." prefix that DataParallel adds to parameter names
state_dict = {k.replace("module.", ""): v for k, v in checkpoint.items()}

# Collect the PReLU slopes by layer name, e.g. 'relu1_1.weight' -> 'relu1_1'
prelu_dict = {}
for k in state_dict:
    if 'relu' in k:
        newname = k.replace('.weight', '')
        prelu_dict[newname] = state_dict[k]

# Precompute -a, expanded to conv1_1's output shape (1, 64, 56, 56),
# which assumes e.g. a 112x112 input
prelu_dict['relu1_1'] = -1 * prelu_dict['relu1_1'].view(1, 64, 1, 1).repeat(1, 1, 56, 56).to('cuda')

# Constant tensor of -1, used to compute -x
neg56 = torch.ones(1, 64, 56, 56) * -1
neg56 = neg56.to('cuda')


def l2_norm(input):
    norm = torch.norm(input, 2, 1, True)  # L2 norm over dim 1, keepdim=True
    output = torch.div(input, norm)       # divide input by its norm
    return output


class Resnet20(Module):
    def __init__(self, embedding_size=0, class_num=0):
        super(Resnet20, self).__init__()
        self.conv1_1 = Conv2d(3, out_channels=64, kernel_size=(3, 3), groups=1, stride=(2, 2), padding=(1, 1), bias=True)
        self._bn1_1 = BatchNorm2d(64, eps=2e-5, momentum=0.9)
        self.relu1_1 = PReLU(64)  # kept so the checkpoint keys still match; not called in forward
        self.relu = ReLU()

    def forward(self, x):
        out = self.conv1_1(x)
        out = self._bn1_1(out)
        # out = self.relu1_1(out)  # original PReLU, replaced by the lines below
        temp1 = self.relu(out)                  # ReLU(x)
        temp2 = out * neg56                     # -x
        temp2 = self.relu(temp2)                # ReLU(-x)
        temp2 = temp2 * prelu_dict['relu1_1']   # -a * ReLU(-x)
        out = temp1 + temp2                     # ReLU(x) - a * ReLU(-x) == PReLU(x)
        return l2_norm(out)
```
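
The forward above hard-codes the 56×56 feature-map size, the CUDA device and module-level globals. A more general way to apply the same trick is sketched below, assuming the PReLU weights have already been loaded into the original model. `DecomposedPReLU` and `replace_prelu` are hypothetical names, not PyTorch APIs: the module keeps −a as a buffer of shape (C,) and relies on broadcasting instead of `repeat`, and the helper swaps every `nn.PReLU` in a model. The last lines compare the result against the unmodified layer on random stand-in weights.

```python
import torch
import torch.nn as nn


class DecomposedPReLU(nn.Module):
    """Hypothetical drop-in for nn.PReLU using only ReLU, negation, multiply and add:
    PReLU(x) = ReLU(x) + (-a) * ReLU(-x)."""

    def __init__(self, prelu: nn.PReLU):
        super().__init__()
        # Negated slopes -a, shape (C,) or (1,), stored as a constant buffer
        self.register_buffer("neg_slope", -prelu.weight.detach())
        self.relu = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # View -a as (1, C, 1, ..., 1) so it broadcasts over batch and spatial dims,
        # instead of repeating it to a fixed feature-map size.
        # Assumes x.dim() >= 2 with channels at dim 1, as nn.PReLU does.
        neg_slope = self.neg_slope.view(1, -1, *([1] * (x.dim() - 2)))
        return self.relu(x) + neg_slope * self.relu(-x)


def replace_prelu(module: nn.Module) -> nn.Module:
    """Recursively replace every nn.PReLU submodule with DecomposedPReLU, in place."""
    for name, child in module.named_children():
        if isinstance(child, nn.PReLU):
            setattr(module, name, DecomposedPReLU(child))
        else:
            replace_prelu(child)
    return module


# Quick check on a toy conv + BN + PReLU block (random stand-in weights, CPU only)
torch.manual_seed(0)
toy = nn.Sequential(nn.Conv2d(3, 64, 3, stride=2, padding=1), nn.BatchNorm2d(64), nn.PReLU(64))
with torch.no_grad():
    toy[2].weight.uniform_(0.0, 0.5)  # non-default slopes so the check is meaningful
toy.eval()                            # BatchNorm must use running stats at inference
x = torch.randn(2, 3, 112, 112)
with torch.no_grad():
    ref = toy(x)
    replace_prelu(toy)
    out = toy(x)
print(type(toy[2]).__name__, (out - ref).abs().max().item())
```

Call `replace_prelu` only after `load_state_dict`, because the swap renames the parameter (for example `relu1_1.weight` becomes `relu1_1.neg_slope`). After the swap, exporting with `torch.onnx.export` should emit `Relu`, `Neg`, `Mul` and `Add` nodes in place of `PRelu`; inspecting the exported graph is a cheap way to confirm this.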