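- Dynamic routing + breadcrumbs with Element

软件技术NINI

vue examples, vue.js, frontend

el-breadcrumb is the breadcrumb navigation component of the ElementUI component library. It shows the path to the current page and helps users quickly understand and navigate the different parts of an application. In a Vue.js project with ElementUI installed, el-breadcrumb is easy to use. A basic usage example follows. Install ElementUI (if you have not already); you can install it with npm or yarn (bash): npm i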

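- Showing UI windows from a Qt shared library on Linux (SDL2)

13jjyao

Qt, qt, programming languages, sdl2, linux

Requirement: the OS is Linux and the framework is Qt. The deliverable is a Qt shared library with a GUI, to be called by non-Qt programs such as Java. Difficulty: because the caller is a non-Qt program, it never calls QApplication::exec(). Without it, QTimer and other events do not fire, and windows created by Qt do not appear (OpenCV windows do not appear either). This is fundamentally different from a Qt program calling a Qt library. Approach: 1. Since the caller lacks QApplication::exec(), in the interface we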

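- Day 4 travel route preview: from the transfer center to Kanas Lake

陟彼高冈yu

Travel planning and preview with Google Earth Studio, travel

Day 4: from Jiadengyu to the entrance of the Kanas scenic area, staying overnight in Jiadengyu. The transfer center has 4 bus lines; take Kanas bus No. 1 to Kanas Lake, a ride of about 5 minutes. The itinerary above is shown as an animation. For the detailed steps, see "Making dynamic track displays with Google Earth Studio", "Google Earth Studio getting-started tutorial" and "Google Earth Studio advanced tutorial". The resulting itinerary is shown below: Day4-2-480p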

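- Introduction to Google Earth Studio

陟彼高冈yu

travel

Google Earth Studio is a web-based animation tool designed for creating animations and videos from Google Earth data. It draws on Google Earth's powerful 3D map and satellite imagery database, so users can easily create realistic Earth animations, aerial videos and dynamic map visualizations. The URL is https://www.google.com/earth/studio/.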

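- Daily algorithms & interview questions, 28-day big-tech boot camp: Day 20 (trees)

肥学

⚡Algorithm problems⚡, interview questions, daily practice, java, algorithms, data structures

Contents: Introduction; 28-day algorithm boot camp; Interview questions; Click to get the materials. Introduction: Friends, to help newcomers get used to algorithms and interview questions, we have recently started a focused, step-by-step drill. In the previous series we completed 21 days of dynamic programming. Now we move on to a 28-day summary of various algorithms. What are you waiting for? Come and take the 28-day challenge with 肥学! Special mention: a practice column for beginners, welcome to subscribe. "Beginner programming advancement: fun Python practice projects" includes fun articles such as "Awkward chats with a robot" and "Prank programs".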

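- Python scraping parser tools: xpath in detail

eqa11

python, web scraping, programming languages

Contents: 1. Introduction; 2. Environment setup (1. Plugin installation, 2. Dependency installation); 3. xpath syntax (1. Path expressions, 2. Wildcards, 3. Predicates, 4. Common functions); 4. Using xpath in Python code (1. Building the document tree, 2. Using xpath expressions, 3. Getting element content and attributes); 5. Summary. 1. Introduction: in Python scraper development, data extraction is a crucial step. xpath, as a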
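The article works in Python. To keep one language across this page, here is a sketch of the same XPath features using the JDK's built-in `javax.xml.xpath` API: path expressions, a wildcard, a predicate, an attribute and a function. The sample XML and the helper names `text` and `count` are made up for illustration.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.Document;
import org.w3c.dom.NodeList;

class XPathDemo {
    // Hypothetical sample document, made up for this illustration.
    static final String XML = "<shop>"
        + "<book id=\"b1\" lang=\"en\"><title>Java</title><price>30</price></book>"
        + "<book id=\"b2\" lang=\"zh\"><title>Python</title><price>45</price></book>"
        + "</shop>";

    // Build the document tree once.
    static final Document DOC = parse(XML);

    private static Document parse(String xml) {
        try {
            return DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        } catch (Exception e) {
            throw new IllegalStateException("cannot parse sample XML", e);
        }
    }

    /** Evaluates an expression and returns its string value (first node for node-sets). */
    static String text(String expr) {
        try {
            return XPathFactory.newInstance().newXPath().evaluate(expr, DOC);
        } catch (XPathExpressionException e) {
            throw new IllegalArgumentException("bad XPath: " + expr, e);
        }
    }

    /** Evaluates an expression as a node-set and returns how many nodes matched. */
    static int count(String expr) {
        try {
            XPath xpath = XPathFactory.newInstance().newXPath();
            NodeList nodes = (NodeList) xpath.evaluate(expr, DOC, XPathConstants.NODESET);
            return nodes.getLength();
        } catch (XPathExpressionException e) {
            throw new IllegalArgumentException("bad XPath: " + expr, e);
        }
    }
}
```

Each call below shows one feature:

- `text("/shop/book[1]/title")` returns "Java". This is a path expression; XPath positions are 1-based.
- `text("//book[price > 40]/title")` returns "Python". This uses a predicate.
- `text("//book[@lang='en']/@id")` returns "b1". This reads an attribute.
- `count("/shop/*")` returns 2. This uses a wildcard.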

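- Redis series: the Geo type powers location computation for hundreds of millions of map points

Ly768768

redis, bootstrap, database

1 Preface: in the earlier article "Deeply understanding the essence of high-performance Redis" we introduced Redis's basic data structures, each designed for different business scenarios: dynamic strings (REDIS_STRING), encoded as integers (REDIS_ENCODING_INT) or raw strings (REDIS_ENCODING_RAW); doubly linked lists (REDIS_ENCODING_LINKEDLIST); ziplists (REDIS_ENCODING_ZIPLIST); skip lists (REDIS_ENCODI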

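- Low Power concepts: Voltage Area

飞奔的大虎

With the spread of smartphones and the Internet of Things, chip power consumption has received more and more attention in recent years. To meet the low-power goals of an integrated circuit, low-power techniques must already be adopted at the system design stage. As the design flow progresses, few methods for reducing power remain by the back-end design stage, and the percentage of power that can still be saved keeps shrinking. Chip power consists mainly of static power (static leakage power) and dynamic power (dynamic power). Static power mainly refers to the power consumed while the circuit is in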
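For reference, here is the standard first-order CMOS power model. It is textbook background, not taken from the article.

- α is the switching activity factor.
- C_L is the switched load capacitance.
- V_DD is the supply voltage.
- f is the clock frequency.
- I_leak is the leakage current.

The short-circuit component of dynamic power is neglected here.

```latex
P_{\text{total}} = P_{\text{dynamic}} + P_{\text{static}}, \qquad
P_{\text{dynamic}} \approx \alpha \, C_L \, V_{DD}^{2} \, f, \qquad
P_{\text{static}} \approx V_{DD} \, I_{\text{leak}}
```

Dynamic power grows with the square of V_DD. That is why placing blocks that tolerate it in a voltage area with a lower supply can save a large share of power.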

- NoSQL database technology and applications: key points

皆过客,揽星河

NoSQL, nosql, databases, big data, data analysis, data structures, non-relational databases

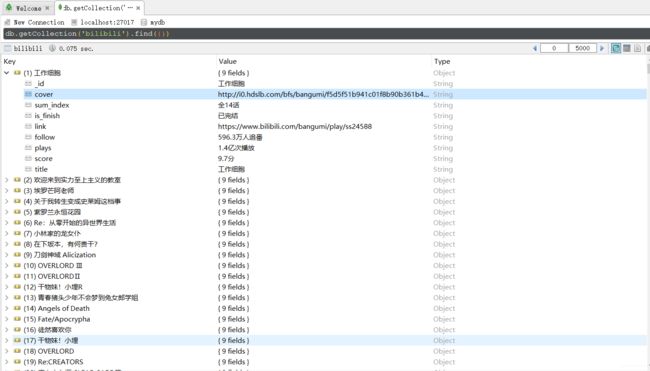

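NoSQL review. The big data processing pipeline:

- Data collection: Flume, scrapers, sensors.
- Data storage: HDFS, MongoDB, HBase, etc. This is the stage this NoSQL course covers.
- Data cleansing and loading into the warehouse: Hive, etc.
- Data processing and analysis: Spark, Flink, etc.
- Data visualization.
- Data mining and machine learning applications: Python, Spark MLlib, etc.

Big data storage faces "three highs":

- High concurrency: many users access the data at the same time.
- High scalability: storage must be able to scale on demand at any time.
- High efficiency: reads and writes must be fast.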

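- Selected Java interview questions: message queues (part 2)

芒果不是芒

Selected Java interview questions, java, kafka

1. Kafka features:

1. Message persistence: messages are stored on disk, so they are not lost.
2. High throughput: a single machine can easily handle concurrency on the order of a million.
3. Scalability: highly scalable, including dynamic scaling.
4. Multi-client support: clients exist for many languages (Java, C, C++, Go, ...).
5. Kafka Streams (built-in stream processing): used for things like Singles' Day or sales dashboards, where Kafka Streams can quickly compute sales totals.
6. Security: when Kafka produces or consumes,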

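- ArrayList source code analysis

程序猿进阶

Java basics, ArrayList, List, java, interviews, performance optimization, architecture design, idea

ArrayList is the dynamic-array implementation in the Java Collections Framework and provides a resizable array. It extends AbstractList and implements the List interface. It is a sequential container: elements are stored in the order they were added, and null elements are allowed. It is backed by an array. Apart from not being synchronized, it is roughly the same as Vector. Every ArrayList has a capacity, which is the actual size of the underlying array; the number of stored elements cannot exceed the current capacity. When elements are added to the container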
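The following is a simplified sketch of the capacity mechanism described above. It is not the JDK source, and the class name `GrowableArray` is hypothetical.

- The backing array's length is the capacity.
- When an add would exceed the capacity, the elements are copied into a larger array.
- The growth rule assumes JDK 8's ArrayList behaviour for a list created with the no-arg constructor: capacity 10 on the first add, then `oldCapacity + (oldCapacity >> 1)`, roughly 1.5x.

```java
import java.util.Arrays;

/** Minimal sketch of an ArrayList-style dynamic array (not the JDK implementation). */
class GrowableArray<E> {
    private static final int DEFAULT_CAPACITY = 10;
    private Object[] elementData = new Object[0]; // backing array; its length is the capacity
    private int size;                             // number of elements actually stored

    void add(E e) {
        if (size == elementData.length) {
            grow(size + 1);                       // no free slot left: enlarge first
        }
        elementData[size++] = e;                  // null is allowed, as in ArrayList
    }

    @SuppressWarnings("unchecked")
    E get(int index) {
        if (index < 0 || index >= size) {
            throw new IndexOutOfBoundsException("Index: " + index + ", Size: " + size);
        }
        return (E) elementData[index];
    }

    int size() { return size; }

    int capacity() { return elementData.length; }

    private void grow(int minCapacity) {
        int oldCapacity = elementData.length;
        int newCapacity = oldCapacity + (oldCapacity >> 1); // about 1.5x the old capacity
        if (newCapacity < minCapacity) {
            newCapacity = Math.max(minCapacity, DEFAULT_CAPACITY); // first growth jumps to 10
        }
        elementData = Arrays.copyOf(elementData, newCapacity);    // copy into the larger array
    }
}
```

Adding 11 elements leaves the capacity at 15, and the 16th add raises it to 22. The copy on each growth is why presizing a list for a known element count avoids repeated reallocation.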

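- Java crawler framework (part 1): architecture design

狼图腾-狼之传说

java, frameworks, java, tasks, html parsers, storage, e-commerce

1. Architecture diagram. The Nalisou (那里搜) crawler framework mainly targets e-commerce websites for crawling, analysis, storage and indexing. Its components:

- Crawler: crawls, parses and processes the page content of e-commerce sites.
- Database: stores product information.
- Index: full-text search index over products.
- Task queue: the list of pages to crawl.
- Visited table: the list of pages already crawled.
- Crawler monitoring platform: a web platform that can start and stop crawlers and manage the crawlers, the task queue and the visited table.

2. Crawler. 1. Flow: 1) The Scheduler starts the crawler, TaskMast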
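This is a minimal single-threaded sketch of how the Task queue and the Visited table work together. It is not the framework's code.

- The in-memory `links` map is a hypothetical stand-in for real HTTP fetching and HTML parsing, which are out of scope.
- URLs are marked as visited when they are queued, so a page is never queued twice.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

class CrawlSketch {
    /** Crawls breadth-first from seed, returning pages in the order they were "crawled". */
    static List<String> crawl(String seed, Map<String, List<String>> links, int maxPages) {
        Deque<String> taskQueue = new ArrayDeque<>(); // Task queue: pages waiting to be crawled
        Set<String> visited = new HashSet<>();        // Visited table: pages crawled or already queued
        List<String> order = new ArrayList<>();
        taskQueue.add(seed);
        visited.add(seed);
        while (!taskQueue.isEmpty() && order.size() < maxPages) {
            String url = taskQueue.poll();
            order.add(url); // a real crawler would fetch, parse and store the page here
            for (String next : links.getOrDefault(url, List.of())) {
                if (visited.add(next)) { // add() returns false for URLs already seen
                    taskQueue.add(next);
                }
            }
        }
        return order;
    }
}
```

In the article's design, the queue and the visited table live outside the crawler process, so that the monitoring platform can inspect and manage them. This sketch keeps both in memory only for illustration.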

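- Java: crawler frameworks

dingcho

Java, java, crawlers

1. Apache Nutch 2 [reference link]. Nutch is an open-source search engine implemented in Java. It provides all the tools needed to run your own search engine, including full-text search and a web crawler. Nutch aims to let anyone easily configure a world-class web search engine at low cost. To reach this ambitious goal, Nutch must be able to:

- fetch several billion pages per month;
- maintain an index for these pages;
- run thousands of searches per second against the index files;
- provide high-quality search results.

In short, Nutch supports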

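- Viewing server logs on Linux

TPBoreas

operations, linux, operations

1. tail. This is the method I use most. Usage:

- `tail -n 10 test.log` shows the last 10 lines of the log.
- `tail -n +10 test.log` shows everything from line 10 onward.
- `tail -fn 10 test.log` prints the last 10 lines and then follows the log in real time. This is the most common form.

It is usually combined with grep to filter the live output, for example `tail -fn 1000 test.log | grep 'keyword'`, which filters new lines as they arrive. tail -fn 1000 test.log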

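- WebMagic: a powerful Java crawler framework, analysis and practice

Aaron_945

Java, java, crawlers, programming languages

Contents: Introduction; Official site link; How WebMagic works; Basic usage (1. Add the dependency, 2. Write a PageProcessor); Advanced usage (1. Custom Pipeline, 2. Distributed crawling); Advantages; Conclusion. Introduction: in the big data era, web crawlers are an indispensable tool for data collection. Java, a widely used programming language, has its own strengths in crawler development. WebMagic is an open-source Java crawler framework that provides a simple, flexible API and supports multithreading, distributed crawling and a rich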

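- vue + Element UI table: dynamically merging cells

我家媳妇儿萌哒哒

elementUI, vue.js, frontend, javascript

1. Requirements:

1. Merge the "work stage" and "main task" columns for rows with the same name (vertical merge). When merging main tasks with identical content, also handle the case where the main task is the same but the work stage differs.
2. In the implementation-status columns, merge the quantitative and qualitative content when their values are equal (horizontal merge).

2. Implementation: `export default { data() { return { tableData: [ { name: 'a', address: '1', age: '1', six: '2' }, { name: 'a', addre`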
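Element UI's table accepts a `span-method` callback that returns the rowspan and colspan for each cell. A common way to drive it is to precompute rowspans per column. Below is a Java sketch of only that precomputation; the class and method names are hypothetical, and the JS wiring and the horizontal merge (requirement 2) are not shown.

- The first row of each run of equal keys gets the run length.
- The other rows in the run get 0, which marks them to be hidden.
- Keying the main-task column on the pair (work stage, main task) keeps identical tasks under different stages from merging, as requirement 1 asks.

```java
import java.util.ArrayList;
import java.util.List;

class RowSpans {
    /**
     * For one column: the first row of each run of equal keys gets the run length as rowspan;
     * the other rows of the run get 0, meaning "hidden, merged into the row above".
     */
    static int[] rowSpans(List<String> keys) {
        int n = keys.size();
        int[] spans = new int[n];
        int start = 0; // first row of the current run
        for (int i = 1; i <= n; i++) {
            if (i == n || !keys.get(i).equals(keys.get(start))) {
                spans[start] = i - start; // run [start, i) collapses into its first row
                start = i;                // remaining rows of the run keep span 0
            }
        }
        return spans;
    }

    /** Child-column keys that include the parent value, so equal tasks under different stages stay separate. */
    static List<String> compositeKeys(List<String> parent, List<String> child) {
        List<String> keys = new ArrayList<>();
        for (int i = 0; i < parent.size(); i++) {
            keys.add(parent.get(i) + "\u0000" + child.get(i)); // separator unlikely to occur in data
        }
        return keys;
    }
}
```

Example: with stages [S1, S1, S2, S2] and tasks [T, T, T, U], the stage column gets spans [2, 0, 2, 0]. Merging the task column on its own would give [3, 0, 0, 1], which wrongly crosses the stage boundary. The composite keys give [2, 0, 1, 1].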

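- 00. The most complete roundup of crawler frameworks (Java + Python)

有一只柴犬

crawler series, crawlers, java, python

Contents: 1. Preface; 2. What is a web crawler; 3. Common crawler frameworks; 3.1 Java frameworks: 3.1.1 WebMagic, 3.1.2 Jsoup, 3.1.3 HttpClient, 3.1.4 Crawler4j, 3.1.5 HtmlUnit, 3.1.6 Selenium; 3.2 Python frameworks: 3.2.1 Scrapy, 3.2.2 BeautifulSoup + Requests, 3.2.3 Selenium, 3.2.4 PyQuery, 3.2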

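- Applying particle swarm optimization (PSO) to a 3D sine-wave function

subject625Ruben

machine learning, artificial intelligence, matlab, algorithms

In this post we show how to use particle swarm optimization (PSO) on a 3D sine-wave function, adding a sinusoidal perturbation to make the optimization more complex and interesting. We describe the objective function, the PSO parameter settings and the detailed execution of the algorithm, and show the dynamics in the search space and the convergence curve. 1. Objective function: the objective is a 3D sine-wave function defined as: objectiveFunc=@(x)sin(sqrt(x(1).^2+x(2).^2))+0.5*sin(5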
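Here is a compact global-best PSO written in Java; the article itself uses MATLAB. Several settings are assumptions, not taken from the article:

- inertia weight w = 0.7;
- c1 = c2 = 1.5;
- the search box [-10, 10]²;
- a fixed random seed.

The perturbation term of the objective is cut off in the excerpt, so only its first term sin(√(x² + y²)) is used here.

```java
import java.util.Random;
import java.util.function.ToDoubleFunction;

class Pso {
    /** Best position found and its objective value. */
    static final class Result {
        final double[] position;
        final double value;
        Result(double[] position, double value) { this.position = position; this.value = value; }
    }

    /** Minimizes f over the box [lo, hi]^dim with a basic global-best PSO. */
    static Result minimize(ToDoubleFunction<double[]> f, int dim, double lo, double hi,
                           int particles, int iterations, long seed) {
        final double w = 0.7, c1 = 1.5, c2 = 1.5; // inertia, cognitive, social weights (assumed)
        Random rnd = new Random(seed);
        double[][] x = new double[particles][dim];
        double[][] v = new double[particles][dim];
        double[][] pBest = new double[particles][];
        double[] pBestVal = new double[particles];
        double[] gBest = null;
        double gBestVal = Double.POSITIVE_INFINITY;
        for (int i = 0; i < particles; i++) {     // random initial positions and velocities
            for (int d = 0; d < dim; d++) {
                x[i][d] = lo + rnd.nextDouble() * (hi - lo);
                v[i][d] = (2 * rnd.nextDouble() - 1) * 0.1 * (hi - lo);
            }
            pBest[i] = x[i].clone();
            pBestVal[i] = f.applyAsDouble(x[i]);
            if (pBestVal[i] < gBestVal) { gBestVal = pBestVal[i]; gBest = x[i].clone(); }
        }
        for (int it = 0; it < iterations; it++) {
            for (int i = 0; i < particles; i++) {
                for (int d = 0; d < dim; d++) {
                    double r1 = rnd.nextDouble(), r2 = rnd.nextDouble();
                    v[i][d] = w * v[i][d]
                            + c1 * r1 * (pBest[i][d] - x[i][d])   // pull toward the particle's own best
                            + c2 * r2 * (gBest[d] - x[i][d]);     // pull toward the swarm's best
                    x[i][d] = Math.max(lo, Math.min(hi, x[i][d] + v[i][d])); // stay inside the box
                }
                double val = f.applyAsDouble(x[i]);
                if (val < pBestVal[i]) { pBestVal[i] = val; pBest[i] = x[i].clone(); }
                if (val < gBestVal) { gBestVal = val; gBest = x[i].clone(); }
            }
        }
        return new Result(gBest, gBestVal);
    }

    /** First term of the article's objective only; its perturbation term is truncated in the source. */
    static double sineWave(double[] p) {
        return Math.sin(Math.sqrt(p[0] * p[0] + p[1] * p[1]));
    }
}
```

With `Pso.minimize(Pso::sineWave, 2, -10, 10, 30, 200, 42L)`, the best value should end very close to -1. That is the minimum of sin(r), reached on the ring r = 3π/2. Recording `gBestVal` after each iteration gives the convergence curve the article plots.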

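- "The Panoramic Guide to Mastering C++, Part 4": how the C++ list container works, and building your own doubly linked list

Lenyiin

technical guides, The Panoramic Guide to Mastering C++, c++, list, linked lists, stl

In this post we explain in detail how to implement a full-featured, powerful C++ list container from scratch, covering every detail. It includes the code for each step, explanations and a discussion of extensions, and aims to be easy to follow even for beginners. 1. Introduction. 1.1 Background: in C++, std::list is a container based on a doubly linked list. It allows efficient insertion and deletion and suits scenarios with frequent insertions and deletions. Unlike a dynamic array, list supports constant-time insertion and deletion at a position given by an iterator, and supports bidirectional traversal. This article will detail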
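The article builds its list in C++. To keep this page in one language, here is a Java sketch of the same idea. It is not the article's code, and the class and method names are illustrative.

- The list is circular and doubly linked, with a sentinel head node, so there are no null checks at the ends.
- Inserting before a known node is O(1), like `std::list::insert` with an iterator.
- Erasing a known node is also O(1).

```java
import java.util.ArrayList;
import java.util.List;

class DList<T> {
    /** One node: a value plus links to both neighbours. */
    static final class Node<T> {
        T value;
        Node<T> prev, next;
        Node(T value) { this.value = value; }
    }

    private final Node<T> head = new Node<>(null); // sentinel: head.next is first, head.prev is last
    private int size;

    DList() {
        head.prev = head; // empty list: the sentinel points to itself
        head.next = head;
    }

    /** Inserts value before pos in O(1) and returns the new node. */
    Node<T> insertBefore(Node<T> pos, T value) {
        Node<T> n = new Node<>(value);
        n.prev = pos.prev;
        n.next = pos;
        pos.prev.next = n;
        pos.prev = n;
        size++;
        return n;
    }

    Node<T> pushBack(T value) { return insertBefore(head, value); }

    Node<T> pushFront(T value) { return insertBefore(head.next, value); }

    /** Unlinks node in O(1); node must belong to this list and must not be the sentinel. */
    void erase(Node<T> node) {
        node.prev.next = node.next;
        node.next.prev = node.prev;
        node.prev = null;
        node.next = null;
        size--;
    }

    int size() { return size; }

    /** Forward traversal from first to last. */
    List<T> toList() {
        List<T> out = new ArrayList<>();
        for (Node<T> n = head.next; n != head; n = n.next) out.add(n.value);
        return out;
    }

    /** Backward traversal from last to first. */
    List<T> toReversedList() {
        List<T> out = new ArrayList<>();
        for (Node<T> n = head.prev; n != head; n = n.prev) out.add(n.value);
        return out;
    }
}
```

The returned `Node` plays the role of a C++ iterator. Holding it is what makes later insertion or erasure at that position constant-time.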

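- People who travel like this deserve a rich, full experience

究竟

01 "One train ticket made my dream of coming to Lhasa come true. I used to think it was far away; now I feel the journey was worth it. It is, after all, just mountains, rivers and old friends." Opening WeChat Moments, I saw Qiangzi's new post with two photos. One showed Lhasa railway station; the other showed some twenty train tickets laid out neatly, all bound for Lhasa. It reminded me that a few days earlier a girl had shown off beautiful photos from Qinghai Lake. In them she wore a bright red long dress and sat on a yak's back in the sunlight, smiling like a flower. Orange flags fluttered in the wind, and however beautiful the blue-green lake and the sky were, they became mere backdrop. Then I looked at my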

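- 代码随想录 Day 41 | Dynamic programming, stock trading problems: LeetCode 121. Best Time to Buy and Sell Stock, 122. Best Time to Buy and Sell Stock II, 123. Best Time to Buy and Sell Stock III

LluckyYH

dynamic programming, leetcode, algorithms, data structures

Note: DDU, for my own review; discussion welcome! Contents: Best Time to Buy and Sell Stock problems; Problem 1: 121. Best Time to Buy and Sell Stock (approach); Problem 2: 122. Best Time to Buy and Sell Stock II (approach); Problem 3: 123. Best Time to Buy and Sell Stock III (approach); Summary. Best Time to Buy and Sell Stock problems. Problem 1: 121. Best Time to Buy and Sell Stock [[121. Best Time to Buy and Sell Stock](https://leetcode.cn/problems/combination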
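Here is a Java sketch of the state-machine DP commonly used for these three problems. It rolls the dp table into a few variables, and the variable names are illustrative, not necessarily the author's.

- 121 (one transaction): track the lowest price seen so far.
- 122 (unlimited transactions): keep two states, holding a share or not.
- 123 (at most two transactions): keep one state per stage, buy1, sell1, buy2, sell2.

```java
class StockDp {
    /** 121: one transaction. Track the lowest price so far and the best profit. */
    static int maxProfitOne(int[] prices) {
        int minPrice = Integer.MAX_VALUE, best = 0;
        for (int p : prices) {
            minPrice = Math.min(minPrice, p);     // cheapest day to have bought on
            best = Math.max(best, p - minPrice);  // sell today
        }
        return best;
    }

    /** 122: unlimited transactions. hold/free = best cash while holding / not holding a share. */
    static int maxProfitUnlimited(int[] prices) {
        if (prices.length == 0) return 0;
        int hold = -prices[0], free = 0;
        for (int i = 1; i < prices.length; i++) {
            int newHold = Math.max(hold, free - prices[i]); // keep holding, or buy today
            int newFree = Math.max(free, hold + prices[i]); // stay out, or sell today
            hold = newHold;
            free = newFree;
        }
        return free;
    }

    /** 123: at most two transactions, one state per stage of the two buy/sell pairs. */
    static int maxProfitTwo(int[] prices) {
        if (prices.length == 0) return 0;
        int buy1 = -prices[0], sell1 = 0, buy2 = -prices[0], sell2 = 0;
        for (int i = 1; i < prices.length; i++) {
            buy1 = Math.max(buy1, -prices[i]);          // first buy
            sell1 = Math.max(sell1, buy1 + prices[i]);  // first sell
            buy2 = Math.max(buy2, sell1 - prices[i]);   // second buy, funded by the first profit
            sell2 = Math.max(sell2, buy2 + prices[i]);  // second sell
        }
        return sell2;
    }
}
```

In 123, updating the four variables in place on the same day is safe. Buying and selling on the same day contributes zero profit, so it never improves a state incorrectly.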

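- Scraping WeChat mini-program data with Python

2301_81900439

frontend

Hello everyone, here I answer how to scrape WeChat mini-program data with Python. Python scraper series, a hands-on WeChat mini-program example: scraping WeChat mini-program data with the Scrapy framework. First, install a packet-capture tool; Charles is recommended here (I will post an example of how to use it when I have time). One of the most important steps is analyzing the APIs. Understand what each endpoint does, then chain the endpoints into a sequence of calls, and then, through the Spider's callback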

- 2021-11-26

雅雅_201d

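Grateful for the beauty, happiness and joy of being alive. Thank you, thank you, thank you. Gratitude check-in 26.

1. Grateful to my dear self for practicing dynamic meditation firmly every day, which fills me with strength and wonder. Thank you, thank you, thank you.
2. Grateful to everyone I have met who lives wholeheartedly; their high-frequency energy deeply attracts and guides me. Thank you, thank you, thank you.
3. Grateful to Mr. Fan for his good state of life and for passing on high-energy wisdom, which makes me happy and at ease. Thank you, thank you, thank you.
4. Grateful to my precious daughter, positive and full of strength; every reply she gives makes me more at ease and stronger. Thank you, thank you, thank you.
5. Grateful to my dear dad and mom, very joyful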

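- Implementing a dynamic marking effect in uniapp, step by step [frontend development]

2401_85123349

uni-app

The second point is to make content the user has already marked show as marked when it is fetched again. What does that mean? For example, the user has added some of the people in the GIF above to their followed list. The next time the user opens the page, those people should be shown with a "red heart". This is not very difficult: after each fetch from the back end, update the state of the marked items. II. Approach and steps for the dynamic marking effect: first, the overall idea is to use dynamic class names to style the different elements.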

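- 8051 microcontroller: using the I2C EEPROM 24C02

老侯(Old monkey)

8051 MCU, embedded hardware, microcontrollers

Goal: the MCU first writes 256 bytes of data to the 24C02. It then reads the data back 2 bytes at a time and shows it on the seven-segment display using dynamic scanning, until all 256 bytes have been read. 1. I2C bus overview: the I2C bus has two bidirectional signal lines, the data line SDA and the clock line SCL. Both lines are connected to the positive supply through pull-up resistors, so the bus is high when idle. 2. I2C protocol: the start and stop signals are issued by the master. During data transfer, while the clock line is high, the data line's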

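- Python Chinese edition software download

编程大乐趣

The Python Chinese edition is an object-oriented, interpreted programming language. Python supports object-oriented programming and has efficient high-level data structures and a simple but effective approach; its elegant syntax, dynamic typing and interpreted nature make it an ideal language. The software is powerful and easy to learn, helps users write code quickly, runs code very fast, supports almost every operating system and is highly practical. About the Python Chinese edition: the interpreter and the source code and binaries of its extensive standard library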

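- Free pixel art software | Pixelorama v1.0.3

dntktop

software, operations, windows

Pixelorama is an open-source multi-tool for pixel art. It aims to give users a powerful, easy-to-use platform for creating all kinds of pixel art, including sprites, tiles and animations. It meets pixel artists' needs with a rich toolbox, animation support, a pixel-perfect mode, clipping masks, and preset and importable palettes. Users also get advanced features such as dynamic tool mapping, onion skinning, frame tags and drawing while the animation plays. Layer effects are non-destructive and fully customizable, such as outline, gradient map, drop shadow and palettize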

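- Back-end interview practice | Translating digits into strings (dynamic programming)

jingling555

written-test problems, dynamic programming, java, algorithms, data structures, back end

Description: letters are encoded as numbers, 'a'->1, 'b'->2, ..., 'z'->26. Given a string of digits, return the number of possible decodings. Data range: the string length satisfies 0 … `=10 && num <= 26) { if (i == 1) { dp[i] += 1; } else { dp[i] += dp[i - 2]; } } } return dp[nums.length() - 1]; } }`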
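The author's code is cut off above, so here is a self-contained Java sketch of the same DP. It is not the author's exact code, and it makes these assumptions:

- `dp[i]` counts the decodings of the first i digits, so indices are shifted by one compared with the fragment.
- An empty input is treated as having no decoding.
- A `long` holds the count so that long inputs do not overflow.

```java
class DecodeWays {
    /** Number of ways to decode a digit string with 'a'->1 ... 'z'->26 (0 if impossible). */
    static long count(String nums) {
        int n = nums.length();
        if (n == 0) return 0;        // assumption: empty input has no decoding
        long[] dp = new long[n + 1]; // dp[i] = number of decodings of the first i digits
        dp[0] = 1;                   // base case: the empty prefix decodes one (empty) way
        for (int i = 1; i <= n; i++) {
            char c = nums.charAt(i - 1);
            if (c != '0') {
                dp[i] += dp[i - 1];  // last digit decoded on its own (1..9)
            }
            if (i >= 2) {
                int two = (nums.charAt(i - 2) - '0') * 10 + (c - '0');
                if (two >= 10 && two <= 26) {
                    dp[i] += dp[i - 2]; // last two digits decoded together (10..26)
                }
            }
        }
        return dp[n];
    }
}
```

For example, "12" decodes as "ab" or "l", giving 2. "10" has only one decoding, because a lone '0' maps to no letter.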

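- Dynamically loading DLL assemblies in C# and creating instances via reflection: brief notes

不全

C#, Asp.net WebForm, Asp.net MVC, c#, Assembly, reflection, assemblies

Loading assemblies dynamically with Assembly covers two cases. 1. If the assembly is already referenced by the program, just look it up in the current application domain: `Assembly assembly = AppDomain.CurrentDomain.GetAssemblies().FirstOrDefault(x => x.GetName().Name.Contains("theAssemblyName"));` 2. If the assembly has not been loaded, use the assembly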

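- Sliding window + dynamic programming

wniuniu_

algorithms, dynamic programming, algorithms

Preface: when analyzing this problem, I knew the two segments had to be handled separately. To guarantee the optimal answer, the first segment must be the best one to the left of the second segment being chosen. In addition, the right endpoints of both chosen segments should be points of the array (a greedy idea). `class Solution { public: int maximizeWin(vector<int>& prizePositions, int k) { int n = prizePositions.size(); vector<int> mx(n`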
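Here is a Java sketch of the approach just described. It assumes the problem is LeetCode 2555 "Maximize Win From Two Segments", as the method name `maximizeWin` suggests, with `prizePositions` sorted in ascending order.

- `mx[i]` holds the most prizes one segment can cover among the first i prizes.
- A two-pointer window finds the segment that ends exactly at each prize.

```java
class TwoSegments {
    /** Max prizes covered by two segments of length k; prizePositions must be sorted ascending. */
    static int maximizeWin(int[] prizePositions, int k) {
        int n = prizePositions.length;
        int[] mx = new int[n + 1]; // mx[i] = best single-segment cover among prizes [0, i)
        int best = 0;
        int left = 0;
        for (int right = 0; right < n; right++) {
            // shrink the window until prizes [left, right] fit in one segment of length k
            while (prizePositions[right] - prizePositions[left] > k) {
                left++;
            }
            int cover = right - left + 1;            // second segment ends exactly at prize `right`
            best = Math.max(best, cover + mx[left]); // first segment: best among prizes before `left`
            mx[right + 1] = Math.max(mx[right], cover);
        }
        return best;
    }
}
```

Because the first segment is taken only from prizes strictly before `left`, the two segments never count the same prize twice. Overlapping segments would only cover fewer prizes anyway.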

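- Enum

120153216

enum

Original: http://www.cnblogs.com/Kavlez/p/4268601.html Enumeration

The enum type was added in Java 1.5. An enum type is a type made up of a fixed set of constants, such as the four seasons or the suits of playing cards. Before enum types existed, enumerated types were usually represented by a group of int constants, like this:

public static final int APPLE_FUJI = 0;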
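Here is a short sketch contrasting the two styles. The Apple and Orange names are illustrative.

- With int constants, values from unrelated groups are plain ints, so the compiler cannot tell them apart.
- An enum type gives each group its own type, plus `values()`, `valueOf()` and safe use in `switch`.

```java
class Fruits {
    // int-constant pattern: unrelated "enums" share the same int space.
    static final int APPLE_FUJI = 0;
    static final int ORANGE_NAVEL = 0; // hypothetical constant from another group, same value

    // enum types: each is a fixed set of typed constants.
    enum Apple { FUJI, PIPPIN, GRANNY_SMITH }
    enum Orange { NAVEL, TEMPLE, BLOOD }

    /** Only an Apple can be passed here; passing an Orange is a compile-time error. */
    static String describe(Apple apple) {
        switch (apple) {
            case FUJI: return "sweet";
            case GRANNY_SMITH: return "tart";
            default: return "mild";
        }
    }
}
```

Note that `Fruits.APPLE_FUJI == Fruits.ORANGE_NAVEL` compiles and evaluates to true, which is exactly the kind of mix-up enum types rule out.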

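- A concise Java 8 tutorial

bijian1013

java, jdk1.8

Java 8 was officially released on March 18, 2014. The new version brings many improvements, including lambda expressions, Streams and a new date-time API. This article walks you through Java 8's new features.

1. Default method implementations in interfaces

Java 8 lets us use the default keyword to add to an interface declaration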
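Here is a minimal default-method example; the `Formula` interface is illustrative.

- An implementing class only has to provide the abstract method.
- The default method body is inherited unless it is overridden.

```java
interface Formula {
    double calculate(int a);

    // default method: implementations inherit this body unless they override it
    default double sqrt(int a) {
        return Math.sqrt(a);
    }
}

class FormulaDemo {
    static Formula make() {
        // the anonymous class implements only the abstract method and calls the inherited default
        return new Formula() {
            @Override
            public double calculate(int a) {
                return sqrt(a * 100);
            }
        };
    }
}
```

Here `FormulaDemo.make().calculate(100)` returns 100.0 and `FormulaDemo.make().sqrt(16)` returns 4.0. An anonymous class is used rather than a lambda, because a lambda body cannot call the interface's default method `sqrt`.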

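- Oracle table maintenance: quickly backing up and deleting data

cuisuqiang

oracle, indexes, quick backup, delete

I know about Oracle table partitioning, but that belongs to the database design stage; right now it would come too late to fix the immediate problem.

The current table must keep one month of data. It receives a large volume of inserts and updates, and the application never deletes rows.

To relieve the bottleneck of frequent queries and updates, I created indexes in Oracle as needed. But as the data grew, a month and a half of data exceeded ten million rows. Even with indexes, high-concurrency queries and updates were still slowed down.

To solve this, I usually run database maintenance once a month. The main work is backing up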

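- Java polymorphism: memory analysis

麦田的设计者

java, memory analysis, polymorphism internals, interfaces and abstract classes

“If the hands of the clock could turn back to that familiar face, I would relive this playground of our games. The peeling graffiti on the wall has faded; only now do I understand that the value of existence belongs to memory. Is the little shop on the street corner still there? Will this great era miss it? Past and present, how could the flowers wait.

But some accidents do harm whether they hurt or not. Time drifts far away, and the laughter lingers in my mind. But in this second, what was funny is no longer lovely; that day, the heart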

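- Uploading files from Windows to a Linux host with Xshell

被触发

windows

This need comes up often. How do you upload a software package downloaded on Windows to a remote Linux host, and how do you download a package from a Linux host to Windows? Looking back, my previous approach was clumsy and tedious, but it got the job done; a clumsy person has clumsy methods.

Here is how I did it:

1. Start a local Linux virtual machine, use mount to mount the Windows shared folder on Linux, then copy the data into the Linux VM. (Often even this first step failed: I could not mount the Windo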

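- Class loading: ClassLoader

肆无忌惮_

ClassLoader

A class loader (ClassLoader) loads Java classes into the virtual machine. It reads the class's bytecode file into memory and converts it into a Class object. Calling newInstance() on that Class object creates an instance of the class (Class.newInstance() is deprecated since Java 9 in favor of getDeclaredConstructor().newInstance()).

The key method is findClass(String name).

How do you write your own class loader?

First, write a class Student that is convenient for testing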

- A rose drawn with HTML5

知了ing

html5

<html>

<head>

<title>I Love You!</title>

<meta charset="utf-8" />

</head>

<body>

<canvas id="c"></canvas>

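- Source code analysis of Google's ConcurrentLinkedHashMap

矮蛋蛋

LRU

Original:

http://janeky.iteye.com/blog/1534352

Overview

ConcurrentLinkedHashMap is a container provided by a Google team. What is it for? It is essentially a wrapper around ConcurrentHashMap and can be used to implement a cache with an LRU policy. For details, see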

http://code.google.com/p/concurrentlinke
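ConcurrentLinkedHashMap itself is a concurrent structure. For comparison, the single-threaded LRU policy it implements can be sketched with the JDK's `LinkedHashMap`.

- Construct the map in access order (the third constructor argument is `true`).
- Override `removeEldestEntry` to evict once the map is over capacity.

This is not ConcurrentLinkedHashMap's API, and it is not thread-safe. Shared use would need external synchronization, for example `Collections.synchronizedMap`, or a concurrent cache library.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class LruCache<K, V> extends LinkedHashMap<K, V> {
    private final int capacity;

    LruCache(int capacity) {
        super(16, 0.75f, true); // accessOrder = true: get()/put() move an entry to the most-recent end
        this.capacity = capacity;
    }

    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        return size() > capacity; // after an insert, evict the least recently used entry if over capacity
    }
}
```

With capacity 2, the sequence `put("a")`, `put("b")`, `get("a")`, `put("c")` evicts "b", because reading "a" made it more recent than "b".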

- Getting the client IP address in a web service

alleni123

webservice

1. First, inject javax.xml.ws.WebServiceContext:

@Resource

private WebServiceContext context;

2. In the method, get the request exchange object.

javax.xml.ws.handler.MessageContext mc=context.getMessageContext();

com.sun.net.http

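- A beginner's path to stronger Java basics: is it worth having?

百合不是茶

1. C++ and Java are object-oriented languages that treat everything as an object; when Java does something, it focuses on the objects involved. Java evolved from C++. It has no permission to modify memory addresses directly, but it works with objects through references, and it avoids C++'s cumbersome operations. With its excellent portability, platform security and efficiency, Java has won wide recognition, and more and more people worldwide are learning it. I am one of them.

Components of Java: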

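- Starting a given application automatically by modifying Linux services

bijian1013

linux

On Linux, the command for modifying system services is chkconfig (check config). It is explained in detail below. chkconfig

Function: checks and sets the system's services.

Syntax: chkconfig [--add][--del][--list][system service] or chkconfig [--level <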

- A simple Spring interceptor example

bijian1013

java, spring, interceptors, Interceptor

The Purview interface

package aop;

public interface Purview {

void checkLogin();

}

PurviewImpl.java, the implementation class of the Purview interface

package aop;

public class PurviewImpl implements Purview {

public void check
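The Spring configuration that follows in the post is not shown here. To illustrate the underlying mechanism, recall that Spring AOP can proxy interface-based beans with JDK dynamic proxies. The following plain-JDK sketch uses no Spring API.

- It wraps a `Purview` implementation in `java.lang.reflect.Proxy`.
- An `InvocationHandler` runs logic before and after `checkLogin()`.
- The `log` list and the class names are hypothetical, added only to make the call order visible.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.ArrayList;
import java.util.List;

interface Purview {
    void checkLogin();
}

class InterceptorDemo {
    static final List<String> log = new ArrayList<>(); // records call order for illustration

    /** Hypothetical target implementation. */
    static class PurviewImpl implements Purview {
        public void checkLogin() {
            log.add("checkLogin");
        }
    }

    /** Wraps any Purview so that every call passes through the handler first. */
    static Purview withInterceptor(Purview target) {
        InvocationHandler handler = (proxy, method, args) -> {
            log.add("before " + method.getName());       // e.g. a permission check
            Object result = method.invoke(target, args); // proceed to the real method
            log.add("after " + method.getName());        // e.g. logging or cleanup
            return result;
        };
        return (Purview) Proxy.newProxyInstance(
            Purview.class.getClassLoader(), new Class<?>[] {Purview.class}, handler);
    }
}
```

Calling `checkLogin()` on the proxy records "before checkLogin", "checkLogin" and "after checkLogin" in that order. The caller only sees the `Purview` interface and never the interception.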

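- [Velocity 2] Custom Velocity directives

bit1129

velocity

What is a Velocity directive

In Velocity, constructs that start with #, such as #set, #if, #foreach, #elseif and #parse, are called directives. These built-in directives provide the logic control every scripting language needs: assignment, conditionals, loop control and so on. Velocity's directives are extensible, so users can define their own directives as needed.

General steps for defining a custom directive (Directive)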


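- [Hive 10] Programming Hive study notes

bit1129

programming

Chapter 2 Getting Started

1. What are Hive's biggest limitations, in the Hive versions covered by the book? First, it does not support row-level insert, delete and update. Second, query performance is poor (it runs on Hadoop MapReduce), so it is unsuitable for low-latency interactive work. Third, it does not support transactions. 2. What is the Hive MetaStore for? Hive persists table schemas and other system metadata.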

- Selectively applying limits in nginx

ronin47

nginx, static/dynamic, limits

http {

limit_conn_zone $binary_remote_addr zone=addr:10m;

limit_req_zone $binary_remote_addr zone=one:10m rate=5r/s;...

server {...

location ~.*\.(gif|png|css|js|icon)$ {

- java-4. Find all paths in a binary tree whose sum equals a given value

bylijinnan

java

/*

* 0. Use a TwoWayLinkedList to store the path. When a node can't be on the path, you should/can delete it.

* 1. curSum==exceptedSum: if the lastNode is TreeNode, printPath(); delete the node otherwise
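Here is a recursive Java sketch of the idea in the comments above. It assumes the classic statement of the problem, in which a path runs from the root down to a leaf.

- A plain `ArrayList` stands in for the post's TwoWayLinkedList.
- The current node is appended on the way down and removed on the way back (backtracking).
- A path is recorded when a leaf is reached with `curSum == expectedSum`.

```java
import java.util.ArrayList;
import java.util.List;

class PathSum {
    static final class TreeNode {
        final int val;
        final TreeNode left, right;
        TreeNode(int val, TreeNode left, TreeNode right) { this.val = val; this.left = left; this.right = right; }
        TreeNode(int val) { this(val, null, null); }
    }

    /** Returns every root-to-leaf path whose node values add up to expectedSum. */
    static List<List<Integer>> findPaths(TreeNode root, int expectedSum) {
        List<List<Integer>> result = new ArrayList<>();
        walk(root, expectedSum, 0, new ArrayList<>(), result);
        return result;
    }

    private static void walk(TreeNode node, int expectedSum, int curSum,
                             List<Integer> path, List<List<Integer>> result) {
        if (node == null) return;
        path.add(node.val);                 // extend the current path
        curSum += node.val;
        boolean isLeaf = node.left == null && node.right == null;
        if (isLeaf && curSum == expectedSum) {
            result.add(new ArrayList<>(path)); // copy: path keeps changing afterwards
        }
        walk(node.left, expectedSum, curSum, path, result);
        walk(node.right, expectedSum, curSum, path, result);
        path.remove(path.size() - 1);       // backtrack: drop this node before returning
    }
}
```

Take the tree with root 10, children 5 and 12, and 5's children 4 and 7. The paths summing to 22 are [10, 5, 7] and [10, 12].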

- Netty study notes

bylijinnan

java, netty

These are notes I took while reading the following two articles:

http://seeallhearall.blogspot.com/2012/05/netty-tutorial-part-1-introduction-to.html

http://seeallhearall.blogspot.com/2012/06/netty-tutorial-part-15-on-channel.html

===

- Getting the project path in JS

cngolon

js

//Get the project root path in JS, e.g. http://localhost:8083/uimcardprj

function getRootPath(){

//Get the current URL, e.g. http://localhost:8083/uimcardprj/share/meun.jsp

var curWwwPath=window.document.locati
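The JS function is cut off in the excerpt. Suppose it takes the usual approach: keep the scheme, host and port, and add the first path segment as the web application's context path. Under that assumption, the same string logic looks like this in Java with `java.net.URI`. The method name is hypothetical, and it assumes the application is not deployed at the root context.

```java
import java.net.URI;

class RootPath {
    /** e.g. http://localhost:8083/uimcardprj/share/meun.jsp -> http://localhost:8083/uimcardprj */
    static String rootPath(String currentUrl) {
        URI uri = URI.create(currentUrl);
        String path = uri.getPath();                 // e.g. "/uimcardprj/share/meun.jsp"
        int secondSlash = path.indexOf('/', 1);      // end of the first path segment
        String contextPath = secondSlash > 0 ? path.substring(0, secondSlash) : path;
        String hostPart = uri.getScheme() + "://" + uri.getHost()
            + (uri.getPort() == -1 ? "" : ":" + uri.getPort()); // omit the port if none is given
        return hostPart + contextPath;
    }
}
```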

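- Oracle performance tuning

cuishikuan

oracle, SQL Server

I searched online for articles on Oracle performance tuning. I am publishing this post to consolidate what I learned, writing it down as I go, and to have it on hand for reference.

1. ORACLE parses the WHERE clause from the bottom up. Following this principle, joins between tables must be written before the other WHERE conditions, and the conditions that filter out the most records must be written at the end of the WHERE clause. (I have verified this with an example myself, and it really is so!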

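- Shell variables and arrays in detail

daizj

linux, shell, variables, arrays

Shell variables

When defining a variable, write the name without a dollar sign ($), unlike PHP, where variables need one. For example:

your_name="w3cschool.cc"

Note that there must be no space between the variable name and the equals sign (or after it), which may differ from the programming languages you are used to. Variable names must also follow these rules:

The first character must be a letter (a-z, A-Z); bash also accepts an underscore.

No spaces inside the name; underscores (_) may be used.

No punctuation.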

Bash keywords cannot be used.

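- Some programming concepts: KISS, DRY, MVC, OOP, REST

dcj3sjt126com

REST

KISS, DRY, MVC, OOP, REST. (1) KISS means Keep It Simple, Stupid (from Wikipedia): keep designs simple and avoid unnecessary complexity. (2) DRY means Don't Repeat Yourself (from Wikipedia): in programming and computing, avoid duplicated code, because duplication reduces flexibility and simplicity and can lead to inconsistencies between copies. (3) OOP, i.e. Object-Orie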

- [Android] Two ways to make an Activity full screen

dcj3sjt126com

Activity

1. Method 1: in AndroidManifest.xml, set the Activity's android:theme to "@android:style/Theme.NoTitleBar.Fullscreen". Example: <application

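- Comparing SolrCloud deployment options

eksliang

solrCloud

There are two ways to deploy SolrCloud; how should we choose between them in practice? First option: each Solr server starts an embedded ZooKeeper server when it starts, and these embedded ZooKeeper servers form an ensemble. Second option: set up a separate, standalone ZooKeeper ensemble and let ZooKeeper manage Solr.

About the first option: every Solr server started also starts an embedded Zoo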

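- The Java synchronized keyword in detail

gqdy365

synchronized

Reposted from: http://www.cnblogs.com/mengdd/archive/2013/02/16/2913806.html

Multithreaded synchronization locks a resource so that only one thread can operate on it at a time. It solves the problems that can arise when multiple threads access the resource concurrently.

Synchronization can be implemented with the synchronized keyword.

When the synchronized keyword modifies a method, the method is called a synchronized method.

When s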
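Here is a minimal sketch of a synchronized method guarding shared state; the `Counter` class is illustrative.

- Several threads call `increment()` on one shared object.
- Because the method is synchronized, each call holds the object's lock, so the read-modify-write in `count++` is never interleaved.

```java
class Counter {
    private int count;

    // synchronized instance method: the calling thread must hold this object's lock,
    // so two threads can never be inside count++ at the same time.
    synchronized void increment() {
        count++;
    }

    synchronized int get() {
        return count;
    }

    /** Starts `threads` threads that each call increment() `perThread` times; returns the final count. */
    static int run(int threads, int perThread) {
        Counter counter = new Counter();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    counter.increment();
                }
            });
            workers[t].start();
        }
        try {
            for (Thread w : workers) {
                w.join(); // wait until every worker has finished
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return counter.get();
    }
}
```

`Counter.run(4, 10000)` returns 40000. If `synchronized` is removed from `increment()`, the total would typically come out lower, because concurrent `count++` operations can overwrite each other.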

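- Remembering the username at login with JS

hw1287789687

remember me, remember password, cookie, remember username, remember account

How do you read a cookie value in a page?

In a JSP, you can read cookies through the servlet request object; for details see: http://hw1287789687.iteye.com/blog/2050040

What if the login page has to be plain HTML? How do you read cookies in an HTML page?

Straight to the code:

Page: loginInput.html

Code: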

<!DOCTYPE html PUB

- Must-have Chrome extensions for developers

justjavac

chrome

Firebug: needs no introduction. https://chrome.google.com/webstore/detail/bmagokdooijbeehmkpknfglimnifench

ChromeSnifferPlus: a Chrome sniffer that detects the open-source software or JS libraries a page uses. https://chrome.google.com/webstore/detail/chrome-sniffer-pl

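- An algorithm coding-test question

李亚飞

java, algorithms, coding-test questions

In an interview coding test I ran into an algorithm problem that I could not solve at the time; in the end a classmate helped me solve it.

The problem is roughly this: input a number, for example 4, and the program outputs: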


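- Configuring the Linux ulimit value correctly

字符串

ulimit

When deploying applications on Linux, you sometimes hit the Socket/File: Can’t open so many files problem. This value also affects the server's maximum concurrency. Linux limits the number of file handles, and the default is low, usually 1024, which a production server can easily reach. Below is how to change this system default through correct configuration. Since I ran into this problem while configuring Nginx + php5, I filed this post under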

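- Calling a stored procedure that returns a cursor from Hibernate

Supanccy2013

java, DAO, oracle, Hibernate, jdbc

Note: original work; please credit the source when reposting.

The previous post covered calling a stored procedure that returns a single value from Hibernate. This post covers calling a stored procedure that returns a cursor.

The stored procedure in this post does the equivalent of a JDBC select.

1. Create a package in Oracle, and create a cursor type in that package.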

---Create the Oracle package

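- New in Spring 4.2: simpler Application Events

wiselyman

application

1.1 Application Event

For the Spring 4.1 approach, see 10点睛Spring4.1-Application Event

Compare with 10点睛Spring4.1-Application Event

A single @EventListener annotation replaces implementing the ApplicationListener interface, which reduces coupling.

1.2 Example

Package dependencies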

<p