Topic Classification of Email Content with an LDA Model

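```python
import re
import sys
import io

import numpy as np
import pandas as pd
import gensim
from gensim import corpora, models, similarities

# Force gb18030 output encoding so Chinese text prints correctly on a GBK console
sys.stdout = io.TextIOWrapper(sys.stdout.buffer, encoding='gb18030')

# Load the emails; the CSV file is GBK-encoded
df = pd.read_csv("./LDA_Data.csv", encoding="gbk")
# Keep only the id and body-text columns, and drop rows with missing values
df = df[["Id", "ExtractedBodyText"]].dropna()


def clean_email_text(text):
    text = text.replace("\n", " ")                      # newlines -> spaces
    text = re.sub(r"-", " ", text)                      # hyphens -> spaces
    text = re.sub(r"\d+/\d+/\d+", "", text)             # dates such as 2/14/2012
    text = re.sub(r"[0-2]?[0-9]:[0-6][0-9]", "", text)  # times such as 10:30
    text = re.sub(r"[\w]+@[\.\w]+", "", text)           # email addresses
    # URLs (also strips other dotted tokens such as "e.g."); Python's re has no
    # /.../i delimiter syntax, so the pattern is written without them
    text = re.sub(r"[a-zA-Z]*[:/]*[A-Za-z0-9\-]+\.+[A-Za-z0-9\.\/%&=\?\-_]+", "", text)
    # Keep only letters and spaces
    pure_text = "".join(letter for letter in text if letter.isalpha() or letter == " ")
    # Drop single-character words
    text = " ".join(word for word in pure_text.split() if len(word) > 1)
    return text


# Apply the cleaning function to every email body
df["new"] = df["ExtractedBodyText"].apply(lambda s: clean_email_text(s))
doclist = df["new"].values
print("After cleaning:", df)
```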

```python
# English stop words to filter out
stoplist = ['very', 'ourselves', 'am', 'doesn', 'through', 'me', 'against', 'up', 'just', 'her', 'ours',
            'couldn', 'because', 'is', 'isn', 'it', 'only', 'in', 'such', 'too', 'mustn', 'under', 'their',
            'if', 'to', 'my', 'himself', 'after', 'why', 'while', 'can', 'each', 'itself', 'his', 'all', 'once',
            'herself', 'more', 'our', 'they', 'hasn', 'on', 'ma', 'them', 'its', 'where', 'did', 'll', 'you',
            'didn', 'nor', 'as', 'now', 'before', 'those', 'yours', 'from', 'who', 'was', 'm', 'been', 'will',
            'into', 'same', 'how', 'some', 'of', 'out', 'with', 's', 'being', 't', 'mightn', 'she', 'again', 'be',
            'by', 'shan', 'have', 'yourselves', 'needn', 'and', 'are', 'o', 'these', 'further', 'most', 'yourself',
            'having', 'aren', 'here', 'he', 'were', 'but', 'this', 'myself', 'own', 'we', 'so', 'i', 'does', 'both',
            'when', 'between', 'd', 'had', 'the', 'y', 'has', 'down', 'off', 'than', 'haven', 'whom', 'wouldn',
            'should', 've', 'over', 'themselves', 'few', 'then', 'hadn', 'what', 'until', 'won', 'no', 'about',
            'any', 'that', 'for', 'shouldn', 'don', 'do', 'there', 'doing', 'an', 'or', 'ain', 'hers', 'wasn',
            'weren', 'above', 'a', 'at', 'your', 'theirs', 'below', 'other', 'not', 're', 'him', 'during', 'which']

# Lower-case each cleaned email, split it into words, and drop stop words
texts = [[word for word in doc.lower().split() if word not in stoplist] for doc in doclist]

# Map every distinct word to an integer id
dictionary = corpora.Dictionary(texts)
# Convert each email into a bag of words: a list of (word_id, count) pairs
corpus = [dictionary.doc2bow(text) for text in texts]
print(corpus[4])  # bag of words of the fifth email
```
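What `corpora.Dictionary` and `doc2bow` produce can be pictured with a stdlib-only sketch. The helpers `build_vocab` and `to_bow` are hypothetical illustrations, not gensim code: each word gets an integer id, and a document becomes sorted `(word_id, count)` pairs, with words absent from the vocabulary silently dropped. That last point is why a new sentence can come back with fewer entries than it has words. The exact ids gensim assigns may differ from this simplified scheme, so compare the structure rather than the numbers.

```python
from collections import Counter


def build_vocab(texts):
    """Hypothetical helper: map each token to an integer id."""
    token2id = {}
    for doc in texts:
        # New tokens of each document get the next free ids, in sorted order
        for token in sorted(set(doc) - set(token2id)):
            token2id[token] = len(token2id)
    return token2id


def to_bow(token2id, doc):
    """Hypothetical helper: sorted (token_id, count) pairs; unknown tokens are dropped."""
    counts = Counter(token for token in doc if token in token2id)
    return sorted((token2id[token], n) for token, n in counts.items())


texts = [["meeting", "budget", "meeting"], ["budget", "report"]]
vocab = build_vocab(texts)
print(vocab)                                   # {'budget': 0, 'meeting': 1, 'report': 2}
print(to_bow(vocab, texts[0]))                 # [(0, 1), (1, 2)]
print(to_bow(vocab, ["happiness", "report"]))  # [(2, 1)]
```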

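```python
# Train an LDA model with 3 topics on the bag-of-words corpus
lda = gensim.models.ldamodel.LdaModel(corpus=corpus, id2word=dictionary, num_topics=3)

# Top 5 words of topic 2
print(lda.print_topic(2, topn=5))
# Top 5 words of each of the 3 topics
print(lda.print_topics(num_topics=3, num_words=5))
```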
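For reference, the standard LDA generative model is written out below in textbook notation (not taken from this post): $K$ topics (`num_topics=3` here), $D$ emails, $N_d$ words in email $d$, each topic $k$ a word distribution $\beta_k$, and each email $d$ a topic mixture $\theta_d$. `print_topic`/`print_topics` list the highest-weight words of each $\beta_k$, `get_document_topics` estimates $\theta_d$ for a bag of words, and the Dirichlet priors correspond to `LdaModel`'s `alpha` and `eta` parameters.

$$
\begin{aligned}
\beta_k &\sim \mathrm{Dirichlet}(\eta), && k = 1, \dots, K \\
\theta_d &\sim \mathrm{Dirichlet}(\alpha), && d = 1, \dots, D \\
z_{d,n} \mid \theta_d &\sim \mathrm{Categorical}(\theta_d), && n = 1, \dots, N_d \\
w_{d,n} \mid z_{d,n} &\sim \mathrm{Categorical}(\beta_{z_{d,n}})
\end{aligned}
$$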

sentence = "As long as you are willing to work hard, you can always find your own happiness."

sentence = clean_email_text(sentence)

sentence = [word for word in sentence.lower().split() if word not in stoplist]

print("句子为",sentence)

sentence_bows = dictionary.doc2bow(sentence)

print(lda.get_document_topics(sentence_bows))

print("词典",sentence_bows)

print(lda.get_term_topics(0))
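The script prints topic probabilities but never assigns each email a single topic. One common way to turn the output into a classification is to take the highest-probability topic. A minimal sketch, assuming the list of `(topic_id, probability)` pairs that `get_document_topics` returns; `dominant_topic` is a hypothetical helper and the probabilities in the example are made up:

```python
def dominant_topic(doc_topics):
    """Return the (topic_id, probability) pair with the highest probability, or None if empty."""
    if not doc_topics:
        return None
    return max(doc_topics, key=lambda pair: pair[1])


# Made-up distribution in the same shape as lda.get_document_topics(...) output
example = [(0, 0.12), (1, 0.71), (2, 0.17)]
print(dominant_topic(example))  # (1, 0.71)

# With the objects from the script above, each email could be labelled like this:
# df["topic"] = [dominant_topic(lda.get_document_topics(bow))[0] for bow in corpus]
```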

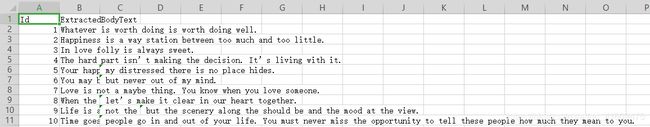

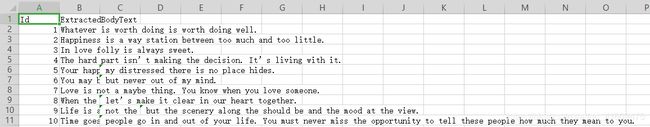

The experiment was run on data I prepared myself; the data format is as follows:

After running `df["new"]=df["ExtractedBodyText"].apply(lambda s:clean_email_text(s))`, the processed table looks like this: