Using dropout in TensorFlow

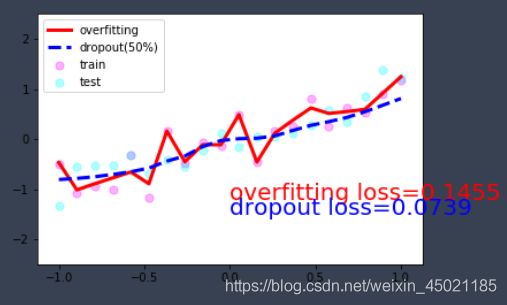

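Dropout test: dropout is one of the techniques TensorFlow provides to reduce overfitting during training. In TensorFlow 1.x, tf.nn.dropout takes a keep_prob argument, while tf.layers.dropout (used in this script) takes rate, the fraction of units to drop, so rate = 1 - keep_prob. During training, each iteration keeps every unit with probability keep_prob, so on average about keep_prob*cell_size units take part in that step; the remaining units output zero and receive no update in that iteration. The kept activations are scaled by 1/keep_prob, and at test time all units are used. The script is written against the TensorFlow 1.x API (tf.placeholder, tf.layers, tf.Session).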
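The relationship between rate and keep_prob described above can be sketched without TensorFlow. The following is a minimal illustration in plain Python, not TensorFlow's actual implementation; the function name `dropout`, its arguments, and the all-ones `hidden` layer are assumptions made up for this example.

```python
import random


def dropout(activations, rate, training, rng):
    """Inverted dropout on a list of floats (illustrative sketch only).

    rate is the fraction of units to drop, so keep_prob = 1 - rate.
    """
    if not training or rate == 0.0:
        # At test time every unit is used and nothing is rescaled.
        return list(activations)
    keep_prob = 1.0 - rate
    # Keep each unit with probability keep_prob and scale it by 1/keep_prob,
    # so the expected value of each activation stays the same.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


rng = random.Random(1)      # fixed seed so the run is repeatable
hidden = [1.0] * 300        # hypothetical layer of 300 units, all outputting 1.0

train_out = dropout(hidden, rate=0.5, training=True, rng=rng)
test_out = dropout(hidden, rate=0.5, training=False, rng=rng)

kept = sum(1 for v in train_out if v != 0.0)
print("units kept during training:", kept, "of", len(hidden))
print("mean activation (train):", sum(train_out) / len(train_out))
print("mean activation (test): ", sum(test_out) / len(test_out))
```

With rate=0.5, roughly half of the 300 units survive each call, and the 1/keep_prob scaling keeps the mean activation close to the test-time value, which is why no extra rescaling is needed when training=False.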

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

tf.set_random_seed(1)

np.random.seed(1)

# Hyperparameters

N_SAMPLES = 20

N_HIDDEN = 300

LR = 0.01

# Training data: y = x + noise

x = np.linspace(-1, 1, N_SAMPLES)[:, np.newaxis]

y = x + 0.3*np.random.randn(N_SAMPLES)[:, np.newaxis]

# Test data

test_x = x.copy()

test_y = test_x + 0.3*np.random.randn(N_SAMPLES)[:, np.newaxis]

# Visualize the data

plt.scatter(x, y, c='magenta', s=50, alpha=0.5, label='train')

plt.scatter(test_x, test_y, c='cyan', s=50, alpha=0.5, label='test')

plt.legend(loc='upper left')

plt.ylim((-2.5, 2.5))

plt.show()

# Create the input placeholders

tf_x = tf.placeholder(tf.float32, [None, 1])

tf_y = tf.placeholder(tf.float32, [None, 1])

tf_is_training = tf.placeholder(tf.bool, None) # to control dropout when training and testing

# Build a plain network with two hidden layers, plus its loss and optimizer

# Note: this uses the newer tf.layers API directly; tf.layers.dense <===> activation_func(w*x+b), which keeps the code short

o1 = tf.layers.dense(tf_x, N_HIDDEN, tf.nn.relu)

o2 = tf.layers.dense(o1, N_HIDDEN, tf.nn.relu)

o_out = tf.layers.dense(o2, 1)

o_loss = tf.losses.mean_squared_error(tf_y, o_out)

o_train = tf.train.AdamOptimizer(LR).minimize(o_loss)

# Build the same two-hidden-layer network with dropout, plus its loss and optimizer

# Note: tf.layers.dropout(x, rate) <===> tf.nn.dropout(wx_plus_b, keep_prob), with rate = 1 - keep_prob

d1 = tf.layers.dense(tf_x, N_HIDDEN, tf.nn.relu)

d1 = tf.layers.dropout(d1, rate=0.5, training=tf_is_training) # drop out 50% of inputs

d2 = tf.layers.dense(d1, N_HIDDEN, tf.nn.relu)

d2 = tf.layers.dropout(d2, rate=0.5, training=tf_is_training) # drop out 50% of inputs

d_out = tf.layers.dense(d2, 1)

d_loss = tf.losses.mean_squared_error(tf_y, d_out)

d_train = tf.train.AdamOptimizer(LR).minimize(d_loss)

sess = tf.Session()

sess.run(tf.global_variables_initializer())

plt.ion() # interactive mode, so the figure can be redrawn inside the loop

for t in range(500):

    sess.run([o_train, d_train], {tf_x: x, tf_y: y, tf_is_training: True}) # train, set is_training=True

    if t % 10 == 0:

        # plotting

        plt.cla()

        o_loss_, d_loss_, o_out_, d_out_ = sess.run(

            [o_loss, d_loss, o_out, d_out], {tf_x: test_x, tf_y: test_y, tf_is_training: False} # test, set is_training=False

        )

        plt.scatter(x, y, c='magenta', s=50, alpha=0.3, label='train'); plt.scatter(test_x, test_y, c='cyan', s=50, alpha=0.3, label='test')

        plt.plot(test_x, o_out_, 'r-', lw=3, label='overfitting'); plt.plot(test_x, d_out_, 'b--', lw=3, label='dropout(50%)')

        plt.text(0, -1.2, 'overfitting loss=%.4f' % o_loss_, fontdict={'size': 20, 'color': 'red'}); plt.text(0, -1.5, 'dropout loss=%.4f' % d_loss_, fontdict={'size': 20, 'color': 'blue'})

        plt.legend(loc='upper left'); plt.ylim((-2.5, 2.5)); plt.pause(0.1)

plt.ioff()

plt.show()

Output: