Implementing DeepFM in TensorFlow 2 (with DataFrame-format training data)

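There are many DeepFM implementations online. The best known is chenchenglong's version, which is built on Libsvm-format training data. The implementation below is built on DataFrame-format training data instead.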

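The model structure is closely tied to the format of the training data. To fit DataFrame-format training data, I split the data into a numeric part and a categorical part and feed them to the model as two separate inputs (Keras supports multiple inputs and multiple outputs). There is a single output.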
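As a rough illustration of that split, the sketch below turns a toy DataFrame into the two arrays the model expects. The preprocessing is not shown in this post, so the encoding step is an assumption: it gives every (column, value) pair its own integer id in one shared id space, which is what a single embedding table over all categorical columns would need. The toy values and the use of `pd.factorize` are illustrative only.

```python
import pandas as pd

# Toy frame: column names mimic the ones used below, the values are made up.
df = pd.DataFrame({
    "I1": [0.5, 1.2, 0.0],
    "I3": [3.0, 0.1, 2.2],
    "C1": ["a", "b", "a"],
    "C2": ["x", "x", "y"],
})
numeric_cols = ["I1", "I3"]
categorical_cols = ["C1", "C2"]

# Assumed encoding: each column gets a contiguous block of ids, shifted by
# the number of ids already handed out to earlier columns.
encoded = pd.DataFrame(index=df.index)
offset = 0
for col in categorical_cols:
    codes, uniques = pd.factorize(df[col])
    encoded[col] = codes + offset
    offset += len(uniques)

x_numeric = df[numeric_cols].to_numpy(dtype="float32")
x_categorical = encoded[categorical_cols].to_numpy(dtype="int64")
feature_dict_dim = offset  # plays the role of categorical_feature_number

print(x_numeric.shape, x_categorical.shape, feature_dict_dim)
```

With this layout, `x_numeric` and `x_categorical` can be passed as the two named inputs, and `feature_dict_dim` is the number of rows the categorical embedding table needs.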

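FM requires each feature's embedding to be multiplied by the feature's own value. For categorical features this is easy: after one-hot encoding only one dimension is active and its value is 1, so the looked-up embedding needs no further multiplication (a vector multiplied by 1 is itself). For a numeric feature, we first obtain an embedding vector for the column, then broadcast the feature's value and multiply it by that embedding vector, which gives the final embedding of the numeric feature.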
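The numeric case can be checked in a few lines of NumPy. This is only a sketch of the arithmetic with made-up shapes, not the Keras layer itself: it compares a repeat-then-transpose formulation with plain broadcasting and confirms they give the same tensor.

```python
import numpy as np

rng = np.random.default_rng(0)
batch, n_numeric, k = 2, 3, 4

x = rng.normal(size=(batch, n_numeric))  # raw numeric feature values
w = rng.normal(size=(n_numeric, k))      # one k-dim embedding per numeric column

# Repeat each sample k times, (batch, k, n), then swap the last two axes
# to (batch, n, k) so the values line up with the embedding matrix.
repeated = np.repeat(x[:, None, :], k, axis=1)
via_repeat = np.transpose(repeated, (0, 2, 1)) * w

# Same result with plain broadcasting: (batch, n, 1) * (n, k).
via_broadcast = x[:, :, None] * w

assert np.allclose(via_repeat, via_broadcast)
print(via_broadcast.shape)
```

Each slice `via_broadcast[b, i]` is the embedding of column `i` scaled by that sample's value `x[b, i]`.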

To reduce the memory footprint of FM's first-order part, that part is also implemented with embeddings, just with an embedding dimension of 1.
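To see why a dimension-1 embedding can stand in for the first-order term, the NumPy sketch below compares the dense formulation (a multi-hot vector dotted with a weight vector) against a direct lookup of the active ids. The vocabulary size and ids are made up for illustration.

```python
import numpy as np

vocab_size = 6
rng = np.random.default_rng(1)
w = rng.normal(size=vocab_size)  # one scalar weight per categorical feature id
ids = np.array([1, 4])           # active ids of a single sample

# Dense formulation: materialise the multi-hot vector, then take a dot product.
multi_hot = np.zeros(vocab_size)
multi_hot[ids] = 1.0
dense_score = multi_hot @ w

# Embedding formulation: look up only the active weights and sum them.
lookup_score = w[ids].sum()

assert np.isclose(dense_score, lookup_score)
```

The lookup never builds the `vocab_size`-long input vector, which is where the saving comes from when the vocabulary has thousands of entries.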

import tensorflow as tf
import pandas as pd
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

# Training-data info
# Number of distinct categorical feature ids (rows of the embedding tables)
categorical_feature_number = 13945
df_numeric_columns = ['I1', 'I3', 'I4', 'I5', 'I6', 'I7', 'I8', 'I9', 'I10', 'I11', 'I12', 'I13']
df_categorical_columns = ['C1', 'C2', 'C4', 'C5', 'C6', 'C7', 'C8', 'C10', 'C11',
                          'C13', 'C14', 'C15', 'C17', 'C18', 'C19', 'C20',
                          'C22', 'C23', 'C24', 'C25', 'C26', 'I2']

# Numeric embedding: one embedding vector per numeric column, scaled by the feature value
class NumericEmbeddingLayer(layers.Layer):
    def __init__(self, input_dim, output_dim, name=""):
        super(NumericEmbeddingLayer, self).__init__()
        self.input_dim = input_dim      # number of numeric columns
        self.output_dim = output_dim    # embedding dimension
        self.embedding_w = self.add_weight(shape=(self.input_dim, self.output_dim)
                                           , initializer="random_normal"
                                           , trainable=True
                                           , name=name)

    def call(self, inputs):
        assert(inputs.shape[1] == self.input_dim)
        # (batch, input_dim) -> (batch, output_dim, input_dim)
        inputs = layers.RepeatVector(self.output_dim)(inputs)
        # -> (batch, input_dim, output_dim)
        inputs = tf.transpose(inputs, perm=[0, 2, 1])
        # Scale each column's embedding by that column's value
        return inputs * self.embedding_w

# Categorical embedding: a plain lookup into a shared embedding table
class CategoricalEmbeddingLayer(layers.Layer):
    def __init__(self, input_dim, output_dim, name=""):
        super(CategoricalEmbeddingLayer, self).__init__()
        self.input_dim = input_dim      # number of distinct feature ids
        self.output_dim = output_dim    # embedding dimension
        self.embedding_layers = layers.Embedding(self.input_dim, self.output_dim, name=name)

    def call(self, inputs):
        return self.embedding_layers(inputs)

embedding_dim = 16
numeric_column_number = len(df_numeric_columns)
categorical_column_number = len(df_categorical_columns)
feature_dict_dim = categorical_feature_number

# define model
numeric_input = keras.Input(shape=(numeric_column_number,), name="numeric_input")
categorical_input = keras.Input(shape=(categorical_column_number,), name="categorical_input")

""" linear part: implemented with embeddings of dimension 1 """
fm_linear_numeric_feature_embedding_layer = NumericEmbeddingLayer(numeric_column_number, 1)
fm_linear_numeric_feature_embedding_input = fm_linear_numeric_feature_embedding_layer(numeric_input)
fm_linear_categorical_feature_embedding_layer = CategoricalEmbeddingLayer(feature_dict_dim, 1)
fm_linear_categorical_feature_embedding_input = fm_linear_categorical_feature_embedding_layer(categorical_input)
fm_linear_output = layers.concatenate([fm_linear_numeric_feature_embedding_input, fm_linear_categorical_feature_embedding_input]
                                      , axis=1
                                      , name="linear_input_concat")
# (batch, 34, 1) -> (batch, 34)
fm_linear_output = tf.squeeze(fm_linear_output, axis=2, name="linear_squeeze")
fm_linear_output = layers.Dense(1, activation=tf.nn.relu, name="fm_linear_ouput")(fm_linear_output)
print(fm_linear_output)
""" linear embedding end """

""" cross """

# FM cross part: by default FM crosses every pair of features. A mask can be
# applied in the code below to drop features that should not take part in the crosses.

# numeric
numeric_feature_embedding_layer = NumericEmbeddingLayer(numeric_column_number, embedding_dim)
numeric_feature_embedding_input = numeric_feature_embedding_layer(numeric_input)

# categorical
categorical_feature_embedding_layer = CategoricalEmbeddingLayer(feature_dict_dim, embedding_dim)
categorical_feature_embedding_input = categorical_feature_embedding_layer(categorical_input)

# fm cross output
fm_embedding_input = layers.concatenate([numeric_feature_embedding_input, categorical_feature_embedding_input]
                                        , axis=1
                                        , name="embedding_concat")
#! mask here
# Reduce over the feature axis, keeping one value per embedding dimension
sum_then_square = tf.square(tf.reduce_sum(fm_embedding_input, axis=1))
square_then_sum = tf.reduce_sum(tf.square(fm_embedding_input), axis=1)
fm_cross_output = tf.subtract(sum_then_square, square_then_sum)
""" cross end """

# fm output: first-order part and cross part side by side
fm_out = layers.concatenate([fm_linear_output, fm_cross_output], axis=1, name="fm_out")

Tensor("fm_linear_ouput/Identity:0", shape=(None, 1), dtype=float32)
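The cross part above relies on the identity that, per embedding dimension, the square of the sum minus the sum of the squares equals twice the sum of all pairwise products. The dependency-free sketch below checks this on three made-up vectors. Note that the code above does not apply the usual factor of 1/2. Presumably the dense layer that follows can absorb that constant scale, though that is my reading rather than something stated here.

```python
from itertools import combinations

# Three already-scaled feature embeddings of dimension 2 (made-up values).
vectors = [[1.0, 2.0], [0.5, -1.0], [3.0, 0.0]]
dim = len(vectors[0])

def fm_cross(vs):
    """Square-of-sum minus sum-of-squares, per embedding dimension."""
    out = []
    for d in range(dim):
        col = [v[d] for v in vs]
        out.append(sum(col) ** 2 - sum(c * c for c in col))
    return out

def pairwise(vs):
    """Explicit sum over all pairs i < j of the element-wise products."""
    out = [0.0] * dim
    for a, b in combinations(vs, 2):
        for d in range(dim):
            out[d] += a[d] * b[d]
    return out

fast = fm_cross(vectors)
slow = pairwise(vectors)
assert all(abs(f - 2 * s) < 1e-9 for f, s in zip(fast, slow))
```

The fast form touches each feature once, whereas the explicit form grows quadratically with the number of features.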

# deep model
deep_struct = {"L1": 256, "L2": 128, "L3": 64}

# Flatten all embeddings into one vector per sample: (batch, 1, 34 * 16)
deep_input = layers.Reshape((1, (numeric_column_number+categorical_column_number)*embedding_dim), name="deep_reshape_layer")(fm_embedding_input)
x1 = layers.Dense(deep_struct["L1"], activation=tf.nn.relu, name="deep_x1")(deep_input)
x1 = layers.Dropout(0.25)(x1)
x2 = layers.Dense(deep_struct["L2"], activation=tf.nn.relu, name="deep_x2")(x1)
deep_out = layers.Dense(deep_struct["L3"], activation=tf.nn.relu, name="deep_out")(x2)

# deepfm model
deep_out_squeeze = tf.squeeze(deep_out, axis=1, name="deep_squeeze")
deepfm_output = layers.concatenate([fm_out, deep_out_squeeze], axis=1)
# deepfm_output = fm_out
deepfm_output = layers.Dense(1, activation='sigmoid', name="deepfm_out")(deepfm_output)

# Define Model
model = keras.Model(inputs=[numeric_input, categorical_input], outputs=[deepfm_output])
optimizer = tf.keras.optimizers.Adam()
model.compile(optimizer=optimizer,
              loss='binary_crossentropy',
              metrics=['accuracy', 'AUC'])
model.summary()

Model: "model"

__________________________________________________________________________________________________
Layer (type)                    Output Shape         Param #     Connected to
==================================================================================================
numeric_input (InputLayer)      [(None, 12)]         0
__________________________________________________________________________________________________
categorical_input (InputLayer)  [(None, 22)]         0
__________________________________________________________________________________________________
numeric_embedding_layer_1 (Nume (None, 12, 16)       192         numeric_input[0][0]
__________________________________________________________________________________________________
categorical_embedding_layer_1 ( (None, 22, 16)       223120      categorical_input[0][0]
__________________________________________________________________________________________________
embedding_concat (Concatenate)  (None, 34, 16)       0           numeric_embedding_layer_1[0][0]
                                                                 categorical_embedding_layer_1[0][
__________________________________________________________________________________________________
deep_reshape_layer (Reshape)    (None, 1, 544)       0           embedding_concat[0][0]
__________________________________________________________________________________________________
numeric_embedding_layer (Numeri (None, 12, 1)        12          numeric_input[0][0]
__________________________________________________________________________________________________
categorical_embedding_layer (Ca (None, 22, 1)        13945       categorical_input[0][0]
__________________________________________________________________________________________________
deep_x1 (Dense)                 (None, 1, 256)       139520      deep_reshape_layer[0][0]
__________________________________________________________________________________________________
linear_input_concat (Concatenat (None, 34, 1)        0           numeric_embedding_layer[0][0]
                                                                 categorical_embedding_layer[0][0]
__________________________________________________________________________________________________
tf_op_layer_Sum (TensorFlowOpLa [(None, 16)]         0           embedding_concat[0][0]
__________________________________________________________________________________________________
tf_op_layer_Square_1 (TensorFlo [(None, 34, 16)]     0           embedding_concat[0][0]
__________________________________________________________________________________________________
dropout (Dropout)               (None, 1, 256)       0           deep_x1[0][0]
__________________________________________________________________________________________________
tf_op_layer_linear_squeeze (Ten [(None, 34)]         0           linear_input_concat[0][0]
__________________________________________________________________________________________________
tf_op_layer_Square (TensorFlowO [(None, 16)]         0           tf_op_layer_Sum[0][0]
__________________________________________________________________________________________________
tf_op_layer_Sum_1 (TensorFlowOp [(None, 16)]         0           tf_op_layer_Square_1[0][0]
__________________________________________________________________________________________________
deep_x2 (Dense)                 (None, 1, 128)       32896       dropout[0][0]
__________________________________________________________________________________________________
fm_linear_ouput (Dense)         (None, 1)            35          tf_op_layer_linear_squeeze[0][0]
__________________________________________________________________________________________________
tf_op_layer_Sub (TensorFlowOpLa [(None, 16)]         0           tf_op_layer_Square[0][0]
                                                                 tf_op_layer_Sum_1[0][0]
__________________________________________________________________________________________________
deep_out (Dense)                (None, 1, 64)        8256        deep_x2[0][0]
__________________________________________________________________________________________________
fm_out (Concatenate)            (None, 17)           0           fm_linear_ouput[0][0]
                                                                 tf_op_layer_Sub[0][0]
__________________________________________________________________________________________________
tf_op_layer_deep_squeeze (Tenso [(None, 64)]         0           deep_out[0][0]
__________________________________________________________________________________________________
concatenate (Concatenate)       (None, 81)           0           fm_out[0][0]
                                                                 tf_op_layer_deep_squeeze[0][0]
__________________________________________________________________________________________________
deepfm_out (Dense)              (None, 1)            82          concatenate[0][0]
==================================================================================================
Total params: 418,058
Trainable params: 418,058
Non-trainable params: 0
__________________________________________________________________________________________________

from matplotlib import pyplot as plt

tf.keras.utils.plot_model(model, to_file='model.png', show_shapes=True)

# read data
features = pd.read_csv("features.csv")
labels = pd.read_csv("labels.csv")#.reset_index(drop=True)

# First 90% of the rows for training, the remaining 10% for testing
number = features.shape[0]
split = 0.9
train_features = features[0: int(split*number)]
train_labels = labels[0: int(split*number)]

""" test dataset """
test_features = features[int(split*number):]
test_labels = labels[int(split*number):]
x_test_numeric = test_features[df_numeric_columns].to_numpy()
x_test_categorical = test_features[df_categorical_columns].to_numpy()
y_test = test_labels.to_numpy()
# Keys must match the names of the two keras.Input layers
validation_data = ({"numeric_input": x_test_numeric, "categorical_input": x_test_categorical}, y_test)
""" test data end """

batch_size = 1024

def data_generator(features, labels, batch_size=batch_size):
    """Yield batches forever, going back to the first row once the next
    slice would reach the end of the data (the tail rows are skipped)."""
    sample_number = features.shape[0] - 1
    idx = 0
    while True:
        if idx > sample_number or idx + batch_size > sample_number:
            idx = 0
        x_numeric = features[idx:idx+batch_size][df_numeric_columns].to_numpy()
        x_categorical = features[idx:idx+batch_size][df_categorical_columns].to_numpy()
        y = labels[idx:idx+batch_size].to_numpy()
        idx += batch_size
        yield ({"numeric_input": x_numeric, "categorical_input": x_categorical}, y)

history = model.fit(data_generator(train_features, train_labels)
                    , batch_size=batch_size
                    , steps_per_epoch=600000/batch_size
                    , epochs=5
                    , verbose=2
                    , validation_data=validation_data)

Epoch 1/5

WARNING:tensorflow:From C:\Users\admin\anaconda3\envs\tf2.2\lib\site-packages\tensorflow\python\ops\resource_variable_ops.py:1817: calling BaseResourceVariable.__init__ (from tensorflow.python.ops.resource_variable_ops) with constraint is deprecated and will be removed in a future version.

Instructions for updating:

If using Keras pass *_constraint arguments to layers.

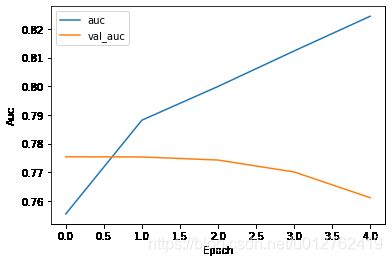

586/585 - 14s - loss: 0.4857 - accuracy: 0.7720 - auc: 0.7554 - val_loss: 0.4695 - val_accuracy: 0.7806 - val_auc: 0.7754

Epoch 2/5

586/585 - 16s - loss: 0.4610 - accuracy: 0.7840 - auc: 0.7881 - val_loss: 0.4696 - val_accuracy: 0.7810 - val_auc: 0.7753

Epoch 3/5

586/585 - 16s - loss: 0.4504 - accuracy: 0.7898 - auc: 0.7999 - val_loss: 0.4726 - val_accuracy: 0.7812 - val_auc: 0.7742

Epoch 4/5

586/585 - 15s - loss: 0.4390 - accuracy: 0.7960 - auc: 0.8123 - val_loss: 0.4822 - val_accuracy: 0.7796 - val_auc: 0.7701

Epoch 5/5

586/585 - 15s - loss: 0.4272 - accuracy: 0.8021 - auc: 0.8244 - val_loss: 0.4896 - val_accuracy: 0.7747 - val_auc: 0.7611
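The `586/585` step counter in the log is consistent with `steps_per_epoch` receiving a non-integer value, since 600000/1024 is not a whole number. The sketch below just works through that arithmetic and shows two integer alternatives one might pass instead. Which one to prefer is a judgment call, and neither was used in the run logged here.

```python
import math

batch_size = 1024
n_samples = 600000  # the constant used in the fit call

raw_steps = n_samples / batch_size     # fractional number of batches
full_batches = n_samples // batch_size # only complete batches
covering = math.ceil(raw_steps)        # enough batches to cover every sample

print(raw_steps, full_batches, covering)
```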

# plot loss
plt.plot(history.history['loss'], label='loss')
plt.plot(history.history['val_loss'], label='val_loss')
plt.xlabel('Epoch')
plt.ylabel('Loss')
plt.legend()
plt.show()

# plot AUC
plt.plot(history.history['auc'], label='auc')
plt.plot(history.history['val_auc'], label='val_auc')
plt.xlabel('Epoch')
plt.ylabel('AUC')
plt.legend()
plt.show()

# predict
x_numeric_predict = features[df_numeric_columns].to_numpy()
x_categorical_predict = features[df_categorical_columns].to_numpy()

# Note: Dropout was used during training, so:
# 1. When predicting through model.predict, no special handling is needed;
#    the model applies the inference-time dropout behaviour by itself.
# 2. When predicting through model.call(), pass training=False explicitly.
y_predict = model.predict([x_numeric_predict, x_categorical_predict])
print(y_predict)

[[0.08364728]

[0.1166788 ]

[0.05836251]

...

[0.03018185]

[0.01302776]

[0.0837431 ]]