TensorFlow Beginner Tutorial (29): License Plate Recognition, Text Recognition with a DenseNet+CTC Model (Part 5)

#

#Author: 韦访

#Blog: https://blog.csdn.net/rookie_wei

#WeChat: 1007895847

#If you add me on WeChat, please mention that you came from CSDN

#Everyone is welcome to learn together

#

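1. Overview

In the previous installments we implemented license plate detection. Now we recognize the text on the detected plates.

Environment:

Operating system: Ubuntu, 64-bit

GPU: GTX 1080 Ti

Python: Python 3.7

TensorFlow: 2.3.0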

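2. Building the license plate dataset

Since this is only a tutorial, we keep using the CCPD2019 dataset, but we preprocess it to speed up training. As the earlier installments showed, our EAST model outputs the minimum-area bounding rectangle of each plate. So we first write a dataset-building script that crops the minimum-area bounding rectangle of every plate in CCPD2019 and saves it as an image file, so that this preprocessing does not have to be repeated during training. First, load the CCPD image paths into a list: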

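import os

'''
Read image paths from a txt file
'''
def get_iamges_from_txt(data_path, filename):
    files = []
    with open(filename, "r") as fd:
        for line in fd:
            line = line.strip()
            # Skip empty lines
            if len(line) > 0:
                files.append(os.path.join(data_path, line))
    return files

Next, iterate over this list and, for each file, extract the plate number and the four vertex coordinates from the filename. Note that we do not always use the four vertices exactly as given. Normally they frame the plate precisely, but EAST detections are always slightly off, so a detected box may include a little extra background or cut off part of the plate. We therefore imitate this deviation by randomly enlarging or shrinking the crop, within limits that do not hurt recognition: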

import cv2
import numpy as np

DEBUG = False  # set to True to visualize intermediate results

# Parse the four plate vertices from the filename parts
def get_plate_poly(parts):
    text_polys = []
    # parts[3] holds the four vertices as "x&y" pairs joined by "_"
    polys_part = parts[3].split("_")
    poly = []
    for p in polys_part:
        poly.append(p.split("&"))
    text_polys.append(np.asarray(poly).astype(np.int32))
    return text_polys

# Helper added for readability: random integer offset in [-size*neg_ratio, size*pos_ratio)
def random_offset(size, neg_ratio, pos_ratio):
    low = -int(size * neg_ratio)
    # np.random.randint requires low < high, so guard against very small plates
    high = max(int(size * pos_ratio), low + 1)
    return np.random.randint(low, high)

# Randomly scale the plate coordinates
def get_plate_poly_random_scale(parts, image):
    h, w, _ = image.shape
    text_polys = get_plate_poly(parts)
    text_poly = text_polys[0]
    rect_w = np.abs(text_poly[1][0] - text_poly[0][0])
    rect_h = np.abs(text_poly[3][1] - text_poly[0][1])
    min_r = 0.05
    max_r = 0.08
    # Each vertex is shifted by a small random amount and clipped to the image
    p0 = [np.clip(text_poly[0][0] + random_offset(rect_w, min_r, max_r), 0, w),
          np.clip(text_poly[0][1] + random_offset(rect_h, min_r, max_r), 0, h)]
    p1 = [np.clip(text_poly[1][0] + random_offset(rect_w, max_r, min_r), 0, w),
          np.clip(text_poly[1][1] + random_offset(rect_h, min_r, max_r), 0, h)]
    p2 = [np.clip(text_poly[2][0] + random_offset(rect_w, max_r, min_r), 0, w),
          np.clip(text_poly[2][1] + random_offset(rect_h, max_r, min_r), 0, h)]
    p3 = [np.clip(text_poly[3][0] + random_offset(rect_w, min_r, max_r), 0, w),
          np.clip(text_poly[3][1] + random_offset(rect_h, max_r, min_r), 0, h)]
    if DEBUG:
        cv2.circle(image, (int(p1[0]), int(p1[1])), 10, (0, 255, 0), 4)
    random_polys = np.asarray([[p0, p1, p2, p3]]).astype(np.int32)
    return random_polys

# Get the four plate vertices from the filename. EAST detections are not always perfect,
# so for the recognition task we imitate that imperfection by randomly scaling the plate
def get_plate_attribute(filename, image):
    parts = filename.split("-")
    # Perturb the vertices for about half of the samples
    if np.random.random() < 0.5:
        text_polys = get_plate_poly_random_scale(parts, image)
    else:
        text_polys = get_plate_poly(parts)
    # get_plate_number (not shown in this post) extracts the plate number from the filename parts
    number = get_plate_number(parts)
    # print("number_part:", number)
    return np.array(text_polys, dtype=np.float32), number
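To make the filename parsing above concrete, here is a minimal sketch in plain Python. It assumes the CCPD naming scheme, in which the fields are separated by `-` and the fourth field holds the four vertices as `x&y` pairs joined by `_`. The sample filename and the helper name `parse_vertices` are only illustrative and are not part of the tutorial's code.

```python
def parse_vertices(filename):
    """Return the four plate vertices encoded in a CCPD-style filename."""
    parts = filename.split("-")
    # parts[3] looks like "386&473_177&454_154&383_363&402"
    vertices = []
    for pair in parts[3].split("_"):
        x, y = pair.split("&")
        vertices.append((int(x), int(y)))
    return vertices


# Illustrative filename in the CCPD format (not taken from this post).
sample = "025-95_113-154&383_386&473-386&473_177&454_154&383_363&402-0_0_22_27_27_33_16-37-15.jpg"
print(parse_vertices(sample))
```

Because the split is on `-`, this sketch should be given the base filename only; a directory name containing `-` would shift the field positions.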

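Once we have the four vertex coordinates, we first compute their minimum-area bounding rectangle:

'''
Compute the minimum-area bounding rectangle of a quadrilateral
'''
def get_min_area_rect(poly):
    rect = cv2.minAreaRect(poly)
    # Four corner points of the rotated rectangle
    box = cv2.boxPoints(rect)
    return box, rect[2]

Then we sort the vertices so that p0 is the top-left corner, p1 the top-right, p2 the bottom-right and p3 the bottom-left, and compute the angle between the p2-p3 edge and the x-axis: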

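'''
Sort the rectangle's vertices so that p0 is top-left, p1 top-right, p2 bottom-right and p3 bottom-left.
The rectangle's angle to the horizontal axis is the angle from the p2-p3 edge to the x-axis,
counter-clockwise positive, clockwise negative
'''
def sort_poly_and_get_angle(poly, image=None):
    # Find the lowest vertex of the rectangle (largest y in image coordinates)
    p_lowest = np.argmax(poly[:, 1])
    if np.count_nonzero(poly[:, 1] == poly[p_lowest, 1]) == 2:
        # The bottom edge is exactly parallel to the x-axis.
        # In this case the vertex with the smallest x + y is the top-left corner, p0
        p0_index = np.argmin(np.sum(poly, axis=1))
        p1_index = (p0_index + 1) % 4
        p2_index = (p0_index + 2) % 4
        p3_index = (p0_index + 3) % 4
        return poly[[p0_index, p1_index, p2_index, p3_index]], 0.
    else:
        # The bottom edge is at an angle to the x-axis.
        # Find the first vertex to the right of the lowest vertex
        p_lowest_right = (p_lowest - 1) % 4
        # Angle between the x-axis and the edge joining the lowest vertex and that vertex
        angle = np.arctan(-(poly[p_lowest][1] - poly[p_lowest_right][1])/(poly[p_lowest][0] - poly[p_lowest_right][0] + 1e-5))
        # The code below is easy to follow if you draw a picture
        if angle > np.pi/4:
            # If the angle is greater than 45 degrees, the lowest vertex is p2
            p2_index = p_lowest
            p1_index = (p2_index - 1) % 4
            p0_index = (p2_index - 2) % 4
            p3_index = (p2_index + 1) % 4
            # In this case the angle between the p2-p3 edge and the x-axis is -(np.pi/2 - angle)
            return poly[[p0_index, p1_index, p2_index, p3_index]], -(np.pi/2 - angle)
        else:
            # If the angle is at most 45 degrees, the lowest vertex is p3
            p3_index = p_lowest
            p0_index = (p3_index + 1) % 4
            p1_index = (p3_index + 2) % 4
            p2_index = (p3_index + 3) % 4
            return poly[[p0_index, p1_index, p2_index, p3_index]], angle

Next comes cropping the plate. Because the plate may be tilted, we first rotate the image by the angle computed above. After the rotation the plate's minimum-area bounding rectangle is horizontal, so we can crop it directly: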

import math

# Crop the plate. Because the plate may be tilted, first rotate the image until
# the plate's rectangle is horizontal, then crop
def crop_plate(image, angle, p0, p1, p2, p3):
    # DEBUG = True
    # Radians to degrees, with the sign flipped so the rotation cancels the plate's tilt
    angle = -angle * (180 / math.pi)
    # print("angle:", angle)
    h, w, _ = image.shape
    # Rotate the image by angle degrees about its centre
    rotate_mat = cv2.getRotationMatrix2D((w / 2, h / 2), angle, 1)
    # Size of the canvas that holds the whole rotated image
    h_new = int(w * np.fabs(np.sin(np.radians(angle))) + h * np.fabs(np.cos(np.radians(angle))))
    w_new = int(h * np.fabs(np.sin(np.radians(angle))) + w * np.fabs(np.cos(np.radians(angle))))
    # Shift so the rotated image is centred on the new canvas
    rotate_mat[0, 2] += (w_new - w) / 2
    rotate_mat[1, 2] += (h_new - h) / 2
    rotated_image = cv2.warpAffine(image, rotate_mat, (w_new, h_new), borderValue=(255, 255, 255))
    # Coordinates of the four vertices in the rotated image (updated in place)
    for p in (p0, p1, p2, p3):
        p[0], p[1] = np.dot(rotate_mat, np.array([p[0], p[1], 1.0]))
    if DEBUG:
        cv2.circle(rotated_image, (int(p0[0]), int(p0[1])), 10, (0, 255, 0), 4)
        cv2.circle(rotated_image, (int(p1[0]), int(p1[1])), 10, (0, 0, 255), 4)
        cv2.imshow('image', image)
        cv2.imshow('rotateImg2', rotated_image)
        cv2.waitKey(0)
    # Rows from p0 (top-left) to p3 (bottom-left), columns from p0 to p1 (top-right)
    crop_image = rotated_image[int(p0[1]):int(p3[1]), int(p0[0]):int(p1[0])]
    return crop_image

Once the plate image has been cropped, simply save it to disk.
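As an aside on the rotation step in crop_plate, the enlarged canvas size and the extra translation can be checked without OpenCV. The sketch below rebuilds the 2x3 matrix from the formula documented for cv2.getRotationMatrix2D (with scale 1) and applies the same re-centring shift. The function names are hypothetical and the code is only meant to illustrate the arithmetic.

```python
import math


def rotated_canvas_size(w, h, angle_deg):
    """Width and height of the canvas that holds the whole rotated image."""
    s = abs(math.sin(math.radians(angle_deg)))
    c = abs(math.cos(math.radians(angle_deg)))
    return int(h * s + w * c), int(w * s + h * c)


def rotation_matrix(w, h, angle_deg):
    """2x3 affine matrix: rotate about the image centre, then re-centre on the new canvas."""
    cx, cy = w / 2, h / 2
    a = math.cos(math.radians(angle_deg))
    b = math.sin(math.radians(angle_deg))
    w_new, h_new = rotated_canvas_size(w, h, angle_deg)
    return [
        [a, b, (1 - a) * cx - b * cy + (w_new - w) / 2],
        [-b, a, b * cx + (1 - a) * cy + (h_new - h) / 2],
    ]


def transform_point(mat, x, y):
    """Apply the affine matrix to one point, as crop_plate does for p0..p3."""
    return (mat[0][0] * x + mat[0][1] * y + mat[0][2],
            mat[1][0] * x + mat[1][1] * y + mat[1][2])


# The centre of a 200x100 image should land on the centre of the new canvas.
mat = rotation_matrix(200, 100, 30)
print(rotated_canvas_size(200, 100, 30), transform_point(mat, 100, 50))
```

The check that the old centre maps to the new centre is a quick way to confirm that the two `+=` lines in crop_plate keep the rotated image fully inside the enlarged canvas.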

这样,我们就得到了两个文件夹和一个TXT文本文件,如下图所示,

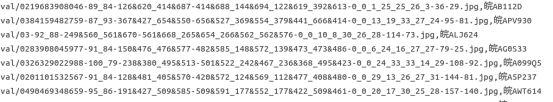

其中,train和val文件夹下保存的是车牌图片,train.txt和val.txt文件则是这些车牌图片的索引和其对应的车牌号,用逗号隔开,如下图所示,

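3. CRNN

With the dataset ready, let's look at the CRNN paper, since our model is based on its ideas.

Paper: https://arxiv.org/abs/1507.05717

Let's go straight to the network structure.

As shown in the figure above, the model has three main parts: convolutional layers, recurrent layers and a transcription layer.

The convolutional layers extract features. The recurrent layers consist of a bidirectional recurrent neural network that predicts the label distribution for each frame. The final transcription layer is handled by CTC. Knowing this overall structure is enough, because we do not follow it exactly. DenseNet+CTC alone is sufficient for text recognition, and DenseNet+BiRNN+CTC works as well. We implement both models.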
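To illustrate what the transcription step does, here is a minimal sketch of standard greedy (best-path) CTC decoding in plain Python: take the most likely label per frame, merge consecutive repeats, then drop the blank. The function name and the sample sequence are hypothetical, and this is the textbook rule rather than the exact decode function used later in this post.

```python
def ctc_greedy_decode(frame_labels, blank):
    """Collapse per-frame labels: merge consecutive repeats, then remove blanks."""
    decoded = []
    previous = None
    for label in frame_labels:
        # Emit a label only when it differs from the previous frame and is not blank
        if label != previous and label != blank:
            decoded.append(label)
        previous = label
    return decoded


# Frames "0 0 _ 1 1 _ 1 _" with blank = 5 decode to [0, 1, 1]:
# the blank between the two runs of 1 is what allows a doubled character.
print(ctc_greedy_decode([0, 0, 5, 1, 1, 5, 1, 5], blank=5))
```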

4. Data augmentation

For data augmentation we apply random scaling, random brightness changes and random small-angle rotation to the images:

'''
Randomly scale the image
'''
def random_scale_image(image):
    random_scale = np.array([0.5, 0.75, 1., 1.25, 1.5])
    rd_scale = np.random.choice(random_scale)
    # Vary the x and y scales independently by up to about 10%
    x_scale_variation = np.random.randint(-10, 10) / 100.
    y_scale_variation = np.random.randint(-10, 10) / 100.
    x_scale = rd_scale + x_scale_variation
    y_scale = rd_scale + y_scale_variation
    image = cv2.resize(image, dsize=None, fx=x_scale, fy=y_scale)
    return image

'''
Randomly change the brightness
'''
def random_brightness(image):
    # Gain in [0.6, 1.4) and bias in [10, 30)
    random_delta = np.random.randint(60, 140) / 100.
    random_bias = np.random.randint(10, 30)
    image = np.uint8(np.clip((image*random_delta+random_bias), 0, 255))
    return image

'''
Random rotation
'''
def random_rotate(images):
    h, w, _ = images.shape
    random_angle = np.random.randint(-15, 15)
    # Scale of 0.8 or 0.9 so the rotated plate stays inside the frame
    random_scale = np.random.randint(8, 10) / 10.0
    mat_rotate = cv2.getRotationMatrix2D(center=(w*0.5, h*0.5), angle=random_angle, scale=random_scale)
    images = cv2.warpAffine(images, mat_rotate, (w, h))
    return images

To train in batches, the input images must have a fixed size. Rather than stretching each image directly to that size (which would badly distort the plate), we scale it proportionally. The CRNN model requires a fixed input height, while the width is free. So we first scale the plate image proportionally to the fixed height and then pad the width:

def resize(image, FLAGS):
    h, w, _ = image.shape
    # Scale factor that brings the height to FLAGS.input_size_h
    scale = h / float(FLAGS.input_size_h)
    # Proportional width, capped at the fixed input width
    new_w = min(int(np.around(w / scale)), FLAGS.input_size_w)
    black_image = np.zeros((FLAGS.input_size_h, FLAGS.input_size_w, 3), dtype=np.uint8)
    resize_image = cv2.resize(image, (new_w, FLAGS.input_size_h))
    # print("black_image:", black_image.shape, " image:", image.shape, " resize_image:", resize_image.shape)
    new_h, new_w, _ = resize_image.shape
    # Leave a 10-pixel left margin when the resized image still fits
    start_w = 0 if new_w + 10 > FLAGS.input_size_w else 10
    black_image[:new_h, start_w:new_w+start_w, :] = resize_image[:new_h, :new_w, :]
    # cv2.imshow("resize_image", resize_image)
    # cv2.imshow("black_image", black_image)
    # cv2.waitKey(0)
    return black_image

5. Loading the character data

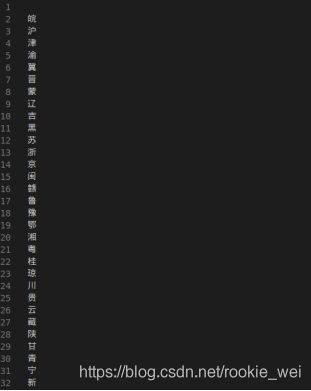

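As with speech recognition, text recognition requires a file that lists every character to be recognized (plus a space character, which is optional), one character per line, as shown in the figure.

We first load this file as a dictionary so that plate numbers can later be converted to vectors: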

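'''
Load the characters that can appear on a plate and return them as a dictionary
'''
def get_char_vector(filename):
    char_list = []
    with open(filename, 'r', encoding='utf-8') as fd:
        for line in fd:
            # Strip only the newline so that a space character entry is preserved
            char_list.append(line.strip("\n"))
    # Map each character to its index
    vector = dict(zip(char_list, range(len(char_list))))
    return vector, char_list

Next, load all image paths in the dataset and their plate numbers into lists. The plate numbers are stored as vectors of character indices: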

import random

def get_images(filename, char_vector):
    root_dir, _ = os.path.split(filename)
    images_list = []
    labels_list = []
    # The index file contains Chinese characters, so read it as UTF-8
    with open(filename, "r", encoding="utf-8") as fd:
        for line in fd:
            line = line.strip().split(",")
            if len(line) == 2:
                images_list.append(os.path.join(root_dir, line[0]))
                # print("line[1]:", line[1])
                # Convert the plate number to a list of character indices
                labels_list.append([char_vector[i] for i in line[1]])
    # print("labels_list:", labels_list)
    # Re-seeding with the same value shuffles both lists in the same order
    randnum = random.randint(0, 100)
    random.seed(randnum)
    random.shuffle(images_list)
    random.seed(randnum)
    random.shuffle(labels_list)
    return np.asarray(images_list), np.asarray(labels_list)

6. Working with the dataset

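Here we handle the dataset with the tf.keras.utils.Sequence class. If you are not familiar with it, see another post of mine (https://blog.csdn.net/rookie_wei/article/details/100013787). I won't go through the data-loading code in detail. The key part is the __getitem__ function: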

def __getitem__(self, index):
    start = index * self.FLAGS.batch_size
    end = (index + 1) * self.FLAGS.batch_size
    if self.subset == "train":
        batch_filename = self.train_filelist[start:end]
        batch_label = self.train_labellist[start:end]
    else:
        batch_filename = self.valid_filelist[start:end]
        batch_label = self.valid_labellist[start:end]
    images = []
    labels = []
    input_lengths = []
    label_lengths = []
    for filename, label in zip(batch_filename, batch_label):
        # preprocess (not shown in this post) loads and prepares one image
        image = preprocess(filename, self.FLAGS)
        images.append(image)
        labels.append(label)
        # CTC input length (number of time steps)
        input_lengths.append([self.FLAGS.ctc_input_lengths])
        # Length of the text
        label_lengths.append([len(label)])
    images = np.asarray(images)
    labels = np.asarray(labels)
    input_lengths = np.asarray(input_lengths)
    label_lengths = np.asarray(label_lengths)
    inputs = {
        'input_image': images,
        'labels': labels,
        'input_length': input_lengths,
        'label_length': label_lengths,
    }
    # Dummy target; sized to the actual batch so that a short final batch also works
    outputs = {'ctc': np.zeros([len(images)])}
    return (inputs, outputs)

The key point is the form of the returned inputs and outputs. The data is returned this way because computing the CTC loss requires all of these values.
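To illustrate the two length inputs: CTC can only align a label with the network output if there are enough time steps, namely at least the label length plus one extra step for every pair of identical neighbouring characters (a blank has to separate them). The sketch below encodes a plate string with a character dictionary and computes that lower bound. The character list is a hypothetical ASCII subset and the function names are illustrative; the tutorial loads the real list from the character file.

```python
def encode_plate(plate, char_vector):
    """Map each character of the plate to its integer index."""
    return [char_vector[ch] for ch in plate]


def min_ctc_steps(label):
    """Smallest number of time steps with which CTC can emit this label."""
    repeats = sum(1 for a, b in zip(label, label[1:]) if a == b)
    return len(label) + repeats


# Hypothetical character list; the tutorial reads the real one from a file.
char_list = ["A", "B", "0", "1", "2"]
char_vector = {ch: i for i, ch in enumerate(char_list)}

label = encode_plate("AB1120", char_vector)
print(label, min_ctc_steps(label))
```

If the value used for input_length is smaller than this bound for some plate, the CTC loss for that sample cannot be finite, so it is worth checking the bound against the number of time steps the network produces.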

7. Network model

The network model is very simple. First, the DenseNet version:

import tensorflow as tf

def densenet169(FLAGS, num_classes):
    # Fixed height, variable width
    input_shape = (FLAGS.input_size_h, None, 3)
    input = tf.keras.layers.Input(shape=input_shape, name='input_image')
    x = tf.keras.applications.densenet.preprocess_input(input)
    x = tf.keras.applications.DenseNet169(include_top=False, weights='imagenet')(x)
    x = tf.keras.layers.Dropout(0.2)(x)
    x = tf.keras.layers.BatchNormalization(axis=-1, epsilon=1.1e-5)(x)
    x = tf.keras.layers.Activation('relu')(x)
    # Swap height and width so that the width becomes the time axis
    x = tf.keras.layers.Permute((2, 1, 3), name='permute')(x)
    # Flatten height and channels into one feature vector per time step
    x = tf.keras.layers.TimeDistributed(tf.keras.layers.Flatten(), name='flatten')(x)
    y_pred = tf.keras.layers.Dense(num_classes, name='out', activation='softmax')(x)
    model = tf.keras.Model(inputs=input, outputs=y_pred)
    model.summary()
    return model, y_pred, input

Here we simply use the DenseNet169 that Keras already provides. If you want to add a bidirectional recurrent network after DenseNet, use the following code:

def densenet169_BiGRU(FLAGS, num_classes):
    input_shape = (FLAGS.input_size_h, None, 3)
    input = tf.keras.layers.Input(shape=input_shape, name='input_image')
    x = tf.keras.applications.densenet.preprocess_input(input)
    x = tf.keras.applications.DenseNet169(include_top=False, weights='imagenet')(x)
    x = tf.keras.layers.Dropout(0.2)(x)
    x = tf.keras.layers.BatchNormalization(axis=-1, epsilon=1.1e-5)(x)
    x = tf.keras.layers.Activation('relu')(x)
    x = tf.keras.layers.Permute((2, 1, 3), name='permute')(x)
    x = tf.keras.layers.TimeDistributed(tf.keras.layers.Flatten(), name='flatten')(x)
    # Bidirectional GRU over the time (width) axis
    x = tf.keras.layers.Bidirectional(tf.keras.layers.GRU(512, return_sequences=True, implementation=2), name='blstm')(x)
    y_pred = tf.keras.layers.Dense(num_classes, name='out', activation='softmax')(x)
    model = tf.keras.Model(inputs=input, outputs=y_pred)
    model.summary()
    return model, y_pred, input

If you don't want the whole DenseNet and only need the features up to a certain layer, use the following code:

def densenet88(FLAGS, num_classes):
    input_shape = (FLAGS.input_size_h, None, 3)
    input = tf.keras.layers.Input(shape=input_shape, name='input_image')
    basemodel = tf.keras.applications.DenseNet121(input_tensor=input, include_top=False, weights='imagenet')
    # Use the features up to the 'pool4_pool' layer only
    x = basemodel.get_layer('pool4_pool').output
    x = tf.keras.layers.Dropout(0)(x)
    x = tf.keras.layers.BatchNormalization(axis=-1, epsilon=1.1e-5)(x)
    x = tf.keras.layers.Activation('relu')(x)
    x = tf.keras.layers.Permute((2, 1, 3), name='permute')(x)
    x = tf.keras.layers.TimeDistributed(tf.keras.layers.Flatten(), name='flatten')(x)
    y_pred = tf.keras.layers.Dense(num_classes, name='out', activation='softmax')(x)
    model = tf.keras.Model(inputs=input, outputs=y_pred)
    model.summary()
    return model, y_pred, input

Here num_classes is the number of distinct characters that can appear in a plate number plus one. The extra one is reserved for the CTC blank. With DenseNet defined, let's look at the CTC definition:

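def ctc_lambda_func(args):
    y_pred, labels, input_length, label_length = args
    return tf.keras.backend.ctc_batch_cost(labels, y_pred, input_length, label_length)

def get_models(FLAGS, num_classes):
    # densenet is the selected model builder (for example densenet169 above);
    # how it is selected is not shown in this post
    densenet_model, y_pred, input = densenet(FLAGS, num_classes)
    labels = tf.keras.layers.Input(name='labels', shape=[None], dtype='float32')
    input_length = tf.keras.layers.Input(name='input_length', shape=[1], dtype='int64')
    label_length = tf.keras.layers.Input(name='label_length', shape=[1], dtype='int64')
    # The CTC loss is computed inside the model by a Lambda layer
    ctc_loss = tf.keras.layers.Lambda(ctc_lambda_func, output_shape=(1,), name='ctc')([y_pred, labels, input_length, label_length])
    ctc_model = tf.keras.Model(inputs=[input, labels, input_length, label_length], outputs=ctc_loss)
    # The Keras loss simply passes the model output through.
    # Note: the 'accuracy' metric is computed on this loss output against the dummy target,
    # so treat it as a rough indicator rather than a plate-level accuracy
    ctc_model.compile(loss={'ctc': lambda y_true, y_pred: y_pred}, optimizer='adam', metrics=['accuracy'])
    ctc_model.summary()
    return densenet_model, ctc_model

As you can see, the input format of the CTC model matches exactly the output of our data pipeline.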
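The value passed as input_length should match the number of time steps the network actually produces. As a rough sketch, assuming the Keras DenseNet layout (a stride-2 7x7 convolution with padding 3, a stride-2 3x3 max pool with padding 1, then three transition blocks that each halve the width with 2x2 average pooling), the number of steps for a given input width can be estimated as follows. The function name is hypothetical, and the result should be checked against the output of model.summary().

```python
def densenet_time_steps(width):
    """Estimate the output width of DenseNet (include_top=False) for an input width."""
    n = (width + 2 * 3 - 7) // 2 + 1   # conv1: 7x7, stride 2, padding 3
    n = (n + 2 * 1 - 3) // 2 + 1       # pool1: 3x3 max pool, stride 2, padding 1
    for _ in range(3):                 # pool2, pool3, pool4: 2x2 average pool, stride 2
        n = n // 2
    return n


for width in (32, 100, 256):
    print(width, densenet_time_steps(width))
```

The overall reduction is a factor of 32, so a wider padded input gives CTC more time steps to work with.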

8. Training

Next comes training, which is also simple: just call fit. Let's try DenseNet169+CTC first. The training code is:

def main(_):
    train_ds = Plate_Dataset(FLAGS, "train")
    valid_ds = Plate_Dataset(FLAGS, "valid")
    # One extra class for the CTC blank
    num_classes = train_ds.get_num_classes() + 1
    checkpoint_path = os.path.join(FLAGS.checkpoint_path, FLAGS.densenet_model_net, "plate-densenet-{epoch:04d}.ckpt")
    checkpoint = tf.keras.callbacks.ModelCheckpoint(filepath=checkpoint_path, save_freq=100,
                                                    monitor='val_loss', save_best_only=False, save_weights_only=True)
    # Multiply the learning rate by 0.4 after every epoch.
    # Computed directly so that training for more than 10 epochs does not index out of range
    lr_schedule = lambda epoch: float(FLAGS.learning_rate * 0.4**epoch)
    changelr = tf.keras.callbacks.LearningRateScheduler(lr_schedule)
    earlystop = tf.keras.callbacks.EarlyStopping(monitor='val_loss', patience=2, verbose=1)
    tensorboard = tf.keras.callbacks.TensorBoard(log_dir=FLAGS.tensorboard_dir, write_graph=True, update_freq=100)
    _, model = get_models(FLAGS, num_classes)
    # Resume from the latest checkpoint if one exists
    checkpoint_dir = os.path.dirname(checkpoint_path)
    latest_ckpt = tf.train.latest_checkpoint(checkpoint_dir)
    latest_epoch = 0
    if latest_ckpt:
        print("---------------load_weights--------------------")
        model.load_weights(latest_ckpt)
        # Recover the epoch number from the checkpoint filename
        epoch_index_start = latest_ckpt.rfind("-")
        epoch_index_end = latest_ckpt.rfind(".ckpt")
        latest_epoch = int(latest_ckpt[epoch_index_start+1:epoch_index_end])
        print("latest_epoch:", latest_epoch)
    steps_per_epoch = train_ds.get_lenght()
    validation_steps = 100
    model.fit(train_ds,
              epochs=FLAGS.epochs,
              initial_epoch=latest_epoch,
              validation_data=valid_ds,
              steps_per_epoch=steps_per_epoch,
              validation_steps=validation_steps,
              callbacks=[checkpoint, earlystop, changelr, tensorboard])
    h5_path = os.path.join("models", "plate-"+FLAGS.densenet_model_net+".h5")
    model.save(h5_path)

Training output:

As you can see, the accuracy reported on the validation set during training reaches 0.9837.
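For reference, the learning-rate schedule in the training script multiplies the base rate by 0.4 after every epoch. A small sketch of the resulting values, assuming a base learning rate of 1e-3 (the actual value of FLAGS.learning_rate is not given in this post):

```python
def lr_schedule(base_lr, epoch):
    """Exponential decay used by the training script: base_lr * 0.4 ** epoch."""
    return base_lr * 0.4 ** epoch


# Assumed base learning rate, for illustration only.
for epoch in range(5):
    print(epoch, lr_schedule(1e-3, epoch))
```

By epoch 5 the rate has dropped to roughly 1% of its starting value, so most of the learning happens in the first few epochs.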

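9. Validating the model

Is the accuracy really as good as reported above? Let's copy some random images from the validation set and test them. The test code is: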

import glob

def pading_plate_width(image):
    h, w, _ = image.shape
    # Pad to at least the fixed input width, with a 10-pixel margin on each side
    new_w = max(FLAGS.input_size_w, w + 20)
    new_image = np.zeros((h, new_w, 3), dtype=np.uint8)
    start_w = 10
    new_image[:h, start_w:w+start_w, :] = image[:h, :w, :]
    return new_image

'''
Random rotation. Without this rotation the recognition results actually get worse,
probably because most of the training images were rotated
'''
def random_rotate(images):
    h, w, _ = images.shape
    random_angle = np.random.randint(-15, 15)
    random_scale = np.random.randint(8, 10) / 10.0
    mat_rotate = cv2.getRotationMatrix2D(center=(w*0.5, h*0.5), angle=random_angle, scale=random_scale)
    images = cv2.warpAffine(images, mat_rotate, (w, h))
    return images

def resize(image):
    image = random_rotate(image)
    h, w, _ = image.shape
    # Scale proportionally to the fixed input height
    new_w = int(np.around(w / (h/FLAGS.input_size_h)))
    image = cv2.resize(image, (new_w, FLAGS.input_size_h))
    image = pading_plate_width(image)
    # cv2.imshow("image", image)
    # Add the batch dimension
    image = image[np.newaxis, :, :, :]
    return image

def get_images(data_path):
    files = []
    for ext in ['jpg', 'png', 'jpeg']:
        files.extend(glob.glob(os.path.join(data_path, '*.{}'.format(ext))))
    return files

def decode(pred, char_list, num_classes):
    plate_char_list = []
    # Most likely class per time step for the first (only) sample
    pred_text = pred.argmax(axis=2)[0]
    # print("pred_text:", pred_text, " char_list len:", len(char_list))
    for i in range(len(pred_text)):
        # Skip the blank (index num_classes - 1). A class that repeats the previous step is
        # skipped too, unless it also equals the class two steps back
        if pred_text[i] != num_classes - 1 and ((not (i > 0 and pred_text[i] == pred_text[i - 1])) or (i > 1 and pred_text[i] == pred_text[i - 2])):
            plate_char_list.append(char_list[pred_text[i]])
    return u''.join(plate_char_list)

def main(_):
    _, char_list = get_char_vector(FLAGS.char_filename)
    num_classes = len(char_list) + 1
    model, _, _ = densenet(FLAGS, num_classes)
    h5_path = os.path.join("models", "plate-"+FLAGS.densenet_model_net+".h5")
    if os.path.exists(h5_path):
        print("---------------load_weights--------------------")
        model.load_weights(h5_path)
    image_filenames = get_images("test_images/input")
    for filename in image_filenames:
        image = cv2.imread(filename)
        _, y_label = get_plate_attribute(filename, image)
        resized_image = resize(image)
        y_pred = model.predict(resized_image)
        pre_plate = decode(y_pred, char_list, num_classes)
        print("y_label:", y_label, " y_pred:", pre_plate)
        # cv2.imshow("image", image)
        # cv2.waitKey(0)

Output:

10. Validating the model's accuracy on the CCPD dataset

Finally, we write our own code to measure the model's accuracy on the training and validation sets of CCPD:

# pading_plate_width, random_rotate, resize and decode are identical to the versions
# in section 9 and are reused here unchanged

def get_plate_number(inputs, char_list):
    plate_char_list = []
    # Label of the first sample in the batch
    chars = inputs["labels"][0]
    for c in chars:
        plate_char_list.append(char_list[int(c)])
    return u''.join(plate_char_list)

def get_accuracy(model, ds, char_list, num_classes):
    error = 0
    total = 0
    for (inputs, _) in ds:
        # Only the first sample of each batch is evaluated
        y_label = get_plate_number(inputs, char_list)
        image = inputs["input_image"][0]
        image = resize(image)
        y_pred = model.predict(image)
        pre_plate = decode(y_pred, char_list, num_classes)
        # print("y_label:", y_label, " y_pred:", pre_plate, " total:", total)
        # cv2.waitKey(0)
        # A plate counts as correct only if the whole string matches
        if y_label != pre_plate:
            error += 1
        total += 1
        # Stop after 10000 samples
        if total == 10000:
            break
    if total == 0:
        return 0.0
    accuracy = 1 - float(error)/total
    return accuracy

def main(_):
    train_ds = Plate_Dataset(FLAGS, "train")
    valid_ds = Plate_Dataset(FLAGS, "valid")
    _, char_list = get_char_vector(FLAGS.char_filename)
    num_classes = len(char_list) + 1
    model, _, _ = densenet(FLAGS, num_classes)
    h5_path = os.path.join("models", "plate-"+FLAGS.densenet_model_net+".h5")
    if os.path.exists(h5_path):
        print("---------------load_weights--------------------")
        model.load_weights(h5_path)
    accuracy = get_accuracy(model, train_ds, char_list, num_classes)
    print("train accuracy:", accuracy)
    accuracy = get_accuracy(model, valid_ds, char_list, num_classes)
    print("valid accuracy:", accuracy)

Output:

11. Complete code

https://mianbaoduo.com/o/bread/YZWcl5xu