Configuring a Hadoop Cluster with Docker and Testing It with WordCount

A Pitfall Guide to Building a Hadoop Cluster with Docker

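- Building the image

- 1. Pull the ubuntu image

- 2. Build an ubuntu image that contains the JDK using a Dockerfile

- 3. Enter the container

- 4. Update the apt-get package index

- 5. Install vim

- 6. Change the apt-get mirror

- 7. Update the apt-get package index again

- 8. Install wget

- 9. Create and enter the Hadoop install directory

- 10. Download the Hadoop package with wget

- 11. Extract Hadoop

- 12. Set environment variables and reload the configuration file

- 13. Create directories and edit the configuration files

- 14. Set the Hadoop environment variable

- 15. Install SSH

- Edit the SSH configuration

- 16. Export the image

- 17. Cluster test

- Edit the slaves file on master

- Start Hadoop

- 18. Test the cluster with WordCount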

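I started working with Hadoop for my graduation project. The goal is a framework with distributed storage and high-performance data processing. My initial technical choice is to build the distributed cluster with Docker and to package an algorithm layer in Docker that can be added and used flexibly. I hit many pitfalls while setting up the environment, so I am recording them here; anyone with the same need is welcome to use this as a reference.

Operating system: Ubuntu 20.04. Docker is assumed to be installed already.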

Building the Image

1. Pull the ubuntu image

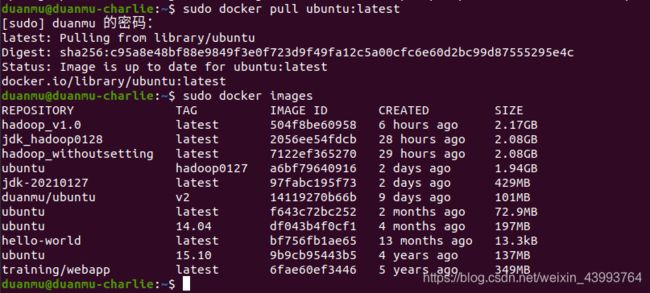

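First pull the latest ubuntu image as the base image with docker pull ubuntu:latest. I had already pulled it; on a first pull the download starts. Afterwards, docker images should list an ubuntu image with the tag latest. Note that latest moves with new Ubuntu releases, while the focal entries in step 6 only match Ubuntu 20.04; if latest no longer points at 20.04, use ubuntu:20.04 here and in the Dockerfile.

2. Build an ubuntu image that contains the JDK using a Dockerfile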

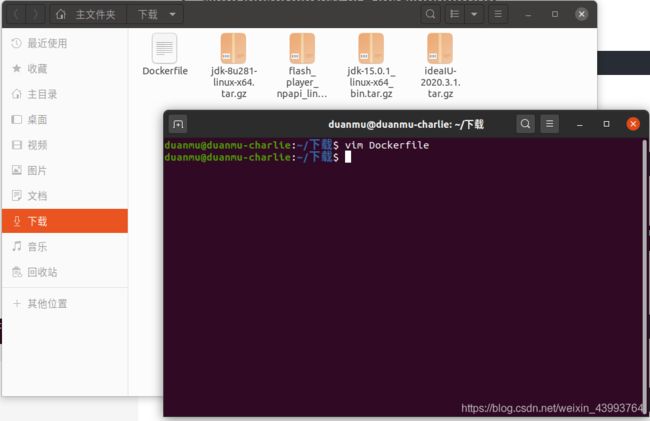

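Download the JDK package from the official JDK site. I downloaded JDK 1.8 (jdk-8u281-linux-x64.tar.gz). In the directory that holds the downloaded tar.gz file, create a Dockerfile and open it for editing: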

vim Dockerfile

Enter the following content, adjusting the JDK version to match the one you downloaded:

FROM ubuntu:latest

MAINTAINER duanmu

ADD jdk-8u281-linux-x64.tar.gz /usr/local/

ENV JAVA_HOME /usr/local/jdk1.8.0_281

ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

ENV PATH $PATH:$JAVA_HOME/bin

Save the file, then build the image:

docker build -t jdk-20210127 .

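This builds an ubuntu image containing the JDK, named jdk-20210127. Note the trailing ".", which sets the build context to the current directory.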
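The JAVA_HOME value in the Dockerfile has to match the directory that the tarball unpacks to. As a minimal sketch, assuming the Oracle JDK 8 naming scheme jdk-8u<update>-linux-x64.tar.gz, the hypothetical helper jdk_dir_from_tarball below derives that directory name and prints the two Dockerfile lines that depend on it. Listing the archive with tar -tzf is the reliable way to confirm the real directory name.

```shell
# Hypothetical helper (not part of Docker or the JDK): derive the unpacked
# directory name from an Oracle JDK 8 tarball name.
# Assumption: the name looks like jdk-8u<update>-linux-x64.tar.gz.
jdk_dir_from_tarball() {
  # e.g. jdk-8u281-linux-x64.tar.gz -> jdk1.8.0_281
  printf '%s\n' "$1" | sed -E 's/^jdk-([0-9]+)u([0-9]+)-.*$/jdk1.\1.0_\2/'
}

tarball="jdk-8u281-linux-x64.tar.gz"

# Print the Dockerfile lines that must agree with each other.
echo "ADD $tarball /usr/local/"
echo "ENV JAVA_HOME /usr/local/$(jdk_dir_from_tarball "$tarball")"

# To confirm against the real archive (run where the tarball lives):
#   tar -tzf "$tarball" | head -n 1
```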

3. Enter the container

Create a container named ubuntu_hadoop from the jdk-20210127 image, set its hostname to charlie, and enter it.

docker run -it --name=ubuntu_hadoop -h charlie jdk-20210127

java -version

The JDK version should now be displayed.

4. Update the apt-get package index

apt-get update

5. Install vim

apt-get install vim

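6. Change the apt-get mirror

The default apt-get source is slow, and switching mirrors speeds up downloads. Open the source list file and press a to enter insert mode: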

vim /etc/apt/sources.list

Replace the entire contents with the following:

deb-src http://archive.ubuntu.com/ubuntu focal main restricted #Added by software-properties

deb http://mirrors.aliyun.com/ubuntu/ focal main restricted

deb-src http://mirrors.aliyun.com/ubuntu/ focal main restricted multiverse universe #Added by software-properties

deb http://mirrors.aliyun.com/ubuntu/ focal-updates main restricted

deb-src http://mirrors.aliyun.com/ubuntu/ focal-updates main restricted multiverse universe #Added by software-properties

deb http://mirrors.aliyun.com/ubuntu/ focal universe

deb http://mirrors.aliyun.com/ubuntu/ focal-updates universe

deb http://mirrors.aliyun.com/ubuntu/ focal multiverse

deb http://mirrors.aliyun.com/ubuntu/ focal-updates multiverse

deb http://mirrors.aliyun.com/ubuntu/ focal-backports main restricted universe multiverse

deb-src http://mirrors.aliyun.com/ubuntu/ focal-backports main restricted universe multiverse #Added by software-properties

deb http://archive.canonical.com/ubuntu focal partner

deb-src http://archive.canonical.com/ubuntu focal partner

deb http://mirrors.aliyun.com/ubuntu/ focal-security main restricted

deb-src http://mirrors.aliyun.com/ubuntu/ focal-security main restricted multiverse universe #Added by software-properties

deb http://mirrors.aliyun.com/ubuntu/ focal-security universe

deb http://mirrors.aliyun.com/ubuntu/ focal-security multiverse

Here focal is the codename of Ubuntu 20.04; for other releases such as xenial, change the codename accordingly.
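Editing the list by hand is easy to get wrong, so a non-interactive rewrite may help. The sketch below is only an illustration: retarget_sources is a hypothetical helper that reads a sources.list from stdin, points archive.ubuntu.com entries at the Aliyun mirror used above, and swaps one codename for another. It assumes the codename always appears as its own word or as the prefix of a pocket name such as focal-updates.

```shell
# Hypothetical helper: rewrite a sources.list read from stdin.
#   $1 = codename currently in the file, $2 = codename to switch to
retarget_sources() {
  old="$1"; new="$2"
  sed -e "s|http://archive.ubuntu.com/ubuntu|http://mirrors.aliyun.com/ubuntu|" \
      -e "s/ ${old}\([ -]\)/ ${new}\1/"
}

# Demo on a single line; prints the rewritten entry.
printf 'deb http://archive.ubuntu.com/ubuntu/ focal-updates main restricted\n' |
  retarget_sources focal xenial

# Possible use inside the container (review the result before replacing the file):
#   retarget_sources focal xenial < /etc/apt/sources.list > /tmp/sources.list.new
```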

7. Update the apt-get package index again

apt-get update

8. Install wget

apt-get install wget

9. Create and enter the Hadoop install directory

mkdir -p /soft/apache/hadoop/

cd /soft/apache/hadoop

10. Download the Hadoop package with wget

wget http://mirrors.ustc.edu.cn/apache/hadoop/common/hadoop-3.3.0/hadoop-3.3.0.tar.gz

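I use the fairly recent Hadoop 3.3.0; other versions can be found by browsing the directory tree of this mirror. (One later configuration step differs slightly for 3.3.0, as noted below.)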
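If you want to try another version, the download URL can be built from the version number. This is a rough sketch: hadoop_tarball_url is a hypothetical helper, and it assumes the mirror keeps the hadoop-<version>/hadoop-<version>.tar.gz layout seen in the URL above. Mirrors tend to drop older releases, so the printed URL should be checked in a browser before relying on it.

```shell
# Hypothetical helper: build the tarball URL for a Hadoop version.
#   $1 = version, $2 = optional mirror base (defaults to the mirror used above)
hadoop_tarball_url() {
  version="$1"
  base="${2:-http://mirrors.ustc.edu.cn/apache/hadoop/common}"
  printf '%s/hadoop-%s/hadoop-%s.tar.gz\n' "$base" "$version" "$version"
}

# Print the URL for the version used in this guide.
hadoop_tarball_url 3.3.0

# Possible use inside the container:
#   wget "$(hadoop_tarball_url 3.3.0)"
```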

11. Extract Hadoop

tar -xvzf hadoop-3.3.0.tar.gz

12. Set environment variables and reload the configuration file

vim ~/.bashrc

Add the following environment variables:

export JAVA_HOME=/usr/local/jdk1.8.0_281

export HADOOP_HOME=/soft/apache/hadoop/hadoop-3.3.0

export HADOOP_CONFIG_HOME=$HADOOP_HOME/etc/hadoop

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

Then reload the configuration file:

source ~/.bashrc
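When this step is repeated (for example after rebuilding the container), the same export lines can pile up in ~/.bashrc. One way around that, sketched here with a hypothetical append_once helper, is to add a line only when the exact line is not already present. The demo writes to a scratch file; pointing rc at ~/.bashrc is left to the reader.

```shell
# Hypothetical helper: append a line to a file only if that exact line
# is not already there, so the step can be re-run safely.
append_once() {
  file="$1"; line="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Demo against a scratch file instead of the real ~/.bashrc.
rc="$(mktemp)"
append_once "$rc" 'export HADOOP_HOME=/soft/apache/hadoop/hadoop-3.3.0'
append_once "$rc" 'export HADOOP_CONFIG_HOME=$HADOOP_HOME/etc/hadoop'
append_once "$rc" 'export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin'
append_once "$rc" 'export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin'  # no-op

# Show the result: three lines, no duplicates.
cat "$rc"
```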

13. Create directories and edit the configuration files

cd $HADOOP_HOME

mkdir tmp

mkdir namenode

mkdir datanode

Edit the configuration files:

cd $HADOOP_CONFIG_HOME

vim core-site.xml

Replace the contents with the following:

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/soft/apache/hadoop/hadoop-3.3.0/tmp</value>

</property>

<property>

<name>fs.default.name</name>

<value>hdfs://master:9000</value>

<final>true</final>

</property>

</configuration>

Edit hdfs-site.xml:

vim hdfs-site.xml

Replace the contents with the following configuration:

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.replication</name>

<value>2</value>

<final>true</final>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/soft/apache/hadoop/hadoop-3.3.0/namenode</value>

<final>true</final>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/soft/apache/hadoop/hadoop-3.3.0/datanode</value>

<final>true</final>

</property>

</configuration>

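Next, edit mapred-site.xml. If your release ships only mapred-site.xml.template, copy it first; if mapred-site.xml already exists, skip the copy:

cp mapred-site.xml.template mapred-site.xml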

vim mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>mapred.job.tracker</name>

<value>master:9001</value>

</property>

</configuration>
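All three site files share the same shape: a configuration element holding name/value properties. For scripted setups, the files could be generated instead of edited in vim. The sketch below uses two hypothetical helpers, hadoop_property and site_xml, and writes a core-site.xml equivalent into a scratch directory. It leaves out the license comment and the final flag, and it does not escape XML special characters, so it only suits simple values like the ones in this guide.

```shell
# Hypothetical helper: print one <property> element.
hadoop_property() {
  printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

# Hypothetical helper: wrap the properties read from stdin in a site file.
site_xml() {
  printf '<?xml version="1.0"?>\n<configuration>\n'
  cat
  printf '</configuration>\n'
}

# Demo: write a core-site.xml equivalent into a scratch directory.
conf_dir="$(mktemp -d)"
{
  hadoop_property hadoop.tmp.dir /soft/apache/hadoop/hadoop-3.3.0/tmp
  hadoop_property fs.default.name hdfs://master:9000
} | site_xml > "$conf_dir/core-site.xml"

cat "$conf_dir/core-site.xml"
```

To use it for real, the output path would be $HADOOP_CONFIG_HOME/core-site.xml, and the same two helpers cover hdfs-site.xml and mapred-site.xml.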

14. Set the Hadoop environment variable

Under the Hadoop install directory (in $HADOOP_CONFIG_HOME), find the hadoop-env.sh file:

vim hadoop-env.sh

Append the following at the end:

export JAVA_HOME=/usr/local/jdk1.8.0_281

Then format the NameNode:

hadoop namenode -format

15. Install SSH

Hadoop requires passwordless SSH login, so install ssh first:

apt-get install net-tools

apt-get install ssh

Create the sshd directory:

mkdir -p /var/run/sshd

Generate the access key:

cd ~/

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

cd .ssh

cat id_rsa.pub >> authorized_keys

If this step prompts for a save path or a passphrase, just press Enter each time to keep the login passwordless.

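Edit the SSH configuration

vim /etc/ssh/ssh_config

Add the following line directly, or find the commented-out line in the file and change ask to no:

StrictHostKeyChecking no

vim /etc/ssh/sshd_config

Append at the end:

# Disable password authentication

PasswordAuthentication no

# Enable key authentication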

RSAAuthentication yes

PubkeyAuthentication yes

AuthorizedKeysFile .ssh/authorized_keys

Finally, test passwordless login with:

ssh localhost

Output like the following figure means passwordless login is configured.

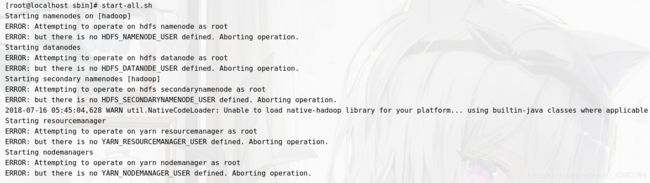
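Two details tend to cause trouble in this step: appending the same public key repeatedly, and permissions on the .ssh directory that are too open (sshd's StrictModes check rejects group- or world-writable files). The sketch below is a minimal illustration with a hypothetical authorize_key helper; the key in the demo is a placeholder, not a real key. Separately, ssh localhost only works while the SSH daemon is running. On Ubuntu that is usually started with service ssh start, and the assumption here is that it has to be started again in every new container.

```shell
# Hypothetical helper: add a public key to authorized_keys once and apply
# the conventional restrictive permissions.
#   $1 = public key file, $2 = .ssh directory
authorize_key() {
  pub="$1"; ssh_dir="$2"
  mkdir -p "$ssh_dir" && chmod 700 "$ssh_dir"
  touch "$ssh_dir/authorized_keys"
  grep -qxF -- "$(cat "$pub")" "$ssh_dir/authorized_keys" ||
    cat "$pub" >> "$ssh_dir/authorized_keys"
  chmod 600 "$ssh_dir/authorized_keys"
}

# Demo in a scratch directory with a placeholder key (not a real key).
demo="$(mktemp -d)"
echo 'ssh-rsa AAAA-placeholder root@charlie' > "$demo/key.pub"
authorize_key "$demo/key.pub" "$demo/.ssh"
authorize_key "$demo/key.pub" "$demo/.ssh"   # second call adds nothing
ls -l "$demo/.ssh/authorized_keys"
```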

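With newer Hadoop versions, the final startup often fails with an error when the daemons are launched as root, typically because the user variables below are not defined.

To avoid this pitfall, set them in advance. Open the profile: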

vim /etc/profile

Add the following and save:

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

Apply the configuration:

source /etc/profile
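The five variables follow one pattern, a daemon name followed by _USER. If the cluster should later run as an account other than root, the lines can be generated rather than retyped. A small sketch, with hadoop_user_exports as a hypothetical helper name:

```shell
# Hypothetical helper: print the *_USER export lines for one account.
#   $1 = account name (defaults to root, as in this guide)
hadoop_user_exports() {
  user="${1:-root}"
  for daemon in HDFS_NAMENODE HDFS_DATANODE HDFS_SECONDARYNAMENODE \
                YARN_RESOURCEMANAGER YARN_NODEMANAGER; do
    printf 'export %s_USER=%s\n' "$daemon" "$user"
  done
}

# Print the same five lines that were added to /etc/profile above.
hadoop_user_exports root
```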

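16. Export the image

The image is now fully configured. Exit the container and save it as an image, where xxxx is the ID of the container you just worked in (use docker ps -a to find it):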

docker commit xxxx ubuntu:hadoop

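ubuntu:hadoop is now the final image with Hadoop configured.

17. Cluster test

Create and start three containers from the image just generated, named master, slave1, and slave2 in turn, and map the web ports on master. (Tip: the containers must stay running; after creating one, press Ctrl+P then Ctrl+Q to detach and leave it running in the background.)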

docker run -it -h master --name=master -p 9870:9870 -p 8088:8088 ubuntu:hadoop

docker run -it -h slave1 --name=slave1 ubuntu:hadoop

docker run -it -h slave2 --name=slave2 ubuntu:hadoop
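The three commands differ only in the node name and the published ports, so they can be produced by a small generator. The sketch below only prints the commands (a dry run) and does not call Docker. It swaps in the -d flag so each container starts detached, as an alternative to detaching by hand with Ctrl+P, Ctrl+Q; node_run_cmd is a hypothetical helper name.

```shell
# Hypothetical helper: print a docker run command for one node.
#   $1 = node name (used as hostname and container name)
#   $2 = image, remaining arguments = ports to publish on the host
node_run_cmd() {
  name="$1"; image="$2"; shift 2
  ports=""
  for p in "$@"; do ports="$ports -p $p:$p"; done
  printf 'docker run -itd -h %s --name=%s%s %s\n' "$name" "$name" "$ports" "$image"
}

# Dry run: print the commands for the three nodes.
node_run_cmd master ubuntu:hadoop 9870 8088
node_run_cmd slave1 ubuntu:hadoop
node_run_cmd slave2 ubuntu:hadoop
```

A detached container can be entered later with docker exec -it master bash.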

Edit each container's hosts file

In the hosts file on master, slave1, and slave2, add the IP addresses of the other two containers:

vim /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.2 master

172.17.0.3 slave1

172.17.0.4 slave2
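The addresses above assume that Docker's default bridge handed out 172.17.0.2 to 172.17.0.4 in start order, which holds only when no other containers are running. As a sketch under that assumption, the hypothetical hosts_entries helper below prints the three lines from a prefix and a starting number; the actual address of each container should be checked with docker inspect before the lines are pasted into /etc/hosts.

```shell
# Hypothetical helper: print hosts entries for consecutive addresses.
#   $1 = first three octets, $2 = last octet of the first node,
#   remaining arguments = node names in start order
hosts_entries() {
  prefix="$1"; start="$2"; shift 2
  i="$start"
  for name in "$@"; do
    printf '%s.%s %s\n' "$prefix" "$i" "$name"
    i=$((i + 1))
  done
}

# Print the entries used in this guide.
hosts_entries 172.17.0 2 master slave1 slave2

# To look up a container's real address on the host:
#   docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' master
```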

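Edit the slaves file on master

Note that Hadoop 3.3.0 uses a workers file instead of the slaves file; this is one of the changes in 3.3.0.

Older versions (check whether your Hadoop version ships a slaves or a workers file):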

cd $HADOOP_CONFIG_HOME/

vim slaves

Version 3.3.0:

cd $HADOOP_CONFIG_HOME/

vim workers

Add the names of the other two nodes to the file:

slave1

slave2

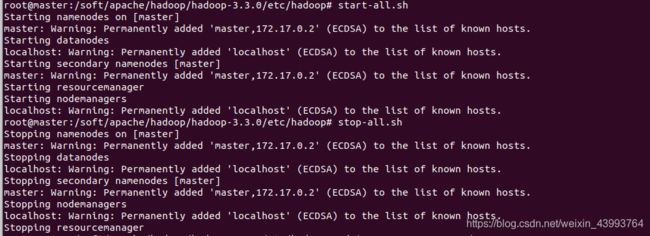
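Choosing between the two file names can be scripted. The sketch below assumes the rename took effect with major version 3, which matches the 3.3.0 behavior described above; for any other release, looking in $HADOOP_CONFIG_HOME remains the reliable check. workers_file_name is a hypothetical helper, and the demo writes to a scratch directory instead of the real configuration directory.

```shell
# Hypothetical helper: pick the node-list file name for a Hadoop version.
# Assumption: versions 3 and later use "workers", earlier ones use "slaves".
workers_file_name() {
  major="${1%%.*}"
  if [ "$major" -ge 3 ]; then echo workers; else echo slaves; fi
}

# Demo: write the node list into a scratch directory.
conf="$(mktemp -d)"
target="$conf/$(workers_file_name 3.3.0)"
printf '%s\n' slave1 slave2 > "$target"

echo "$target"
cat "$target"
```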

Start Hadoop

start-all.sh

Output like the following figure means the configuration succeeded.

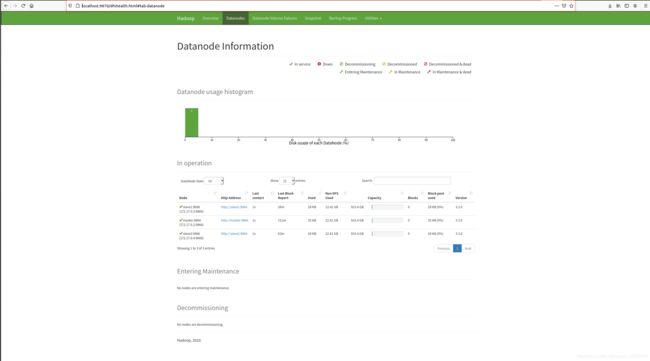

You can now open localhost:9870 and localhost:8088 to monitor the cluster, as shown in the figure below.

18. Test the cluster with WordCount

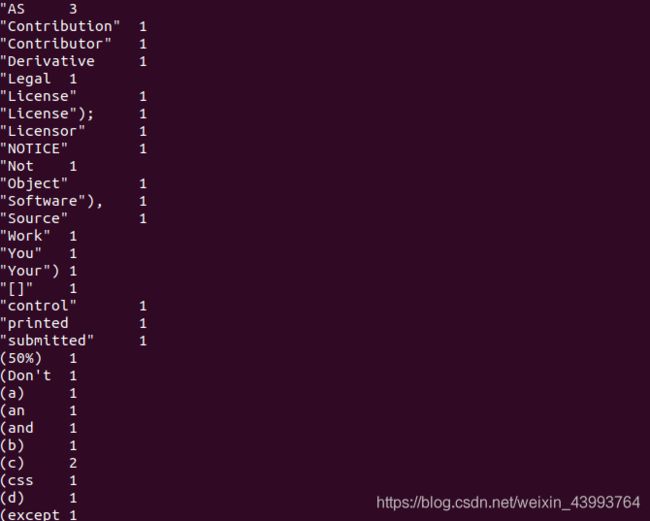

Go to the Hadoop directory and list its files. We use LICENSE.txt there as the input file and count how often each word occurs as a test.

cd $HADOOP_HOME

ls -l

First create an input directory in HDFS:

hadoop fs -mkdir /input

The following command confirms that the directory was created:

hadoop fs -ls /

Put LICENSE.txt into the input directory:

hadoop fs -put LICENSE.txt /input

Check that it has been uploaded:

hadoop fs -ls /input

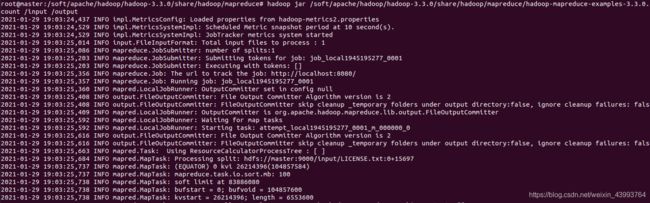

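Run the example program to do the count (the path of the MapReduce examples jar differs between versions, so locate it yourself; the path below is for 3.3.0):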

hadoop jar /soft/apache/hadoop/hadoop-3.3.0/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.3.0.jar wordcount /input /output

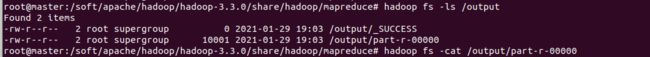

After the run, list the root directory and you will find a new output directory. It contains two files, _SUCCESS and part-r-00000, which indicates the job succeeded.

hadoop fs -ls /

hadoop fs -ls /output

hadoop fs -cat /output/part-r-00000
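To sanity-check the result, the same count can be reproduced locally with standard tools. This is only a rough cross-check, assuming the example job splits words on whitespace and writes one word, a tab, and the count per line in byte order; local_wordcount is a hypothetical helper, not part of Hadoop.

```shell
# Hypothetical helper: count whitespace-separated words from stdin and
# print "word<TAB>count", sorted by word in byte order.
local_wordcount() {
  tr -s '[:space:]' '\n' | grep -v '^$' | LC_ALL=C sort | uniq -c |
    awk '{ print $2 "\t" $1 }'
}

# Demo on a short sentence.
printf 'to be or not to be\n' | local_wordcount

# Possible comparison inside the master container (bash):
#   diff <(hadoop fs -cat /output/part-r-00000) <(local_wordcount < LICENSE.txt)
```

An empty diff would suggest that the cluster result matches the local count; small differences may come from how each side treats unusual whitespace characters.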

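That completes a basic Hadoop distributed cluster on Docker, along with a simple test. I will keep updating this as I learn more; if you found it useful, please give it a like!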

20210129 duan